AlphaFold/run_alphafold.py: Code Reading Notes

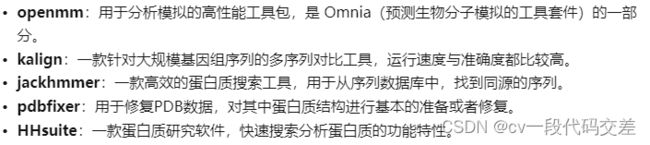

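Preface: the code relies on several bioinformatics tools. For background, see "超算跑模型 | Alphafold 蛋白质结构预测" on Zhihu.

Reading the code:

flags.DEFINE_list(): defines a command-line flag that is passed in directly when the script is run. The flag's value is parsed as a list.

flags.DEFINE_string(): defines a flag whose value is a string.

The help text of fasta_paths says: each FASTA file contains one prediction target, and the targets are folded one after another. If a FASTA file contains multiple sequences, it is folded as a multimer. Paths should be separated by commas. Every FASTA path must have a unique basename, because the basename is used to name the output directory of each prediction.

In other words, the FASTA file paths are passed to the program as one comma-separated list.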
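To make the comma-separated behaviour concrete, here is a minimal standard-library sketch. It uses argparse as an analogy only; run_alphafold.py itself uses absl.flags. The helper `comma_separated` and the example paths are made up for illustration.

```python
import argparse
from typing import List


def comma_separated(value: str) -> List[str]:
    """Split a comma-separated flag value into a list of strings."""
    return [item for item in value.split(",") if item]


# Stand-in parser: an argparse analogy, not the absl.flags API.
parser = argparse.ArgumentParser()
parser.add_argument("--fasta_paths", type=comma_separated, required=True)
parser.add_argument("--output_dir", type=str, required=True)

# Hypothetical command line with two FASTA files.
args = parser.parse_args(
    ["--fasta_paths=/data/T1050.fasta,/data/T1024.fasta", "--output_dir=/tmp/out"]
)
print(args.fasta_paths)
print(args.output_dir)
```

The list-typed flag arrives as a Python list, one entry per path, while the string-typed flag stays a single string.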

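    flags.DEFINE_list(
        'fasta_paths', None, 'Paths to FASTA files, each containing a prediction '
        'target that will be folded one after another. If a FASTA file contains '
        'multiple sequences, then it will be folded as a multimer. Paths should be '
        'separated by commas. All FASTA paths must have a unique basename as the '
        'basename is used to name the output directories for each prediction.')

The start of the script also defines a few constants.

In order: the maximum number of template hits, the maximum number of relaxation iterations (0 means no limit, as far as I can tell), the relaxation energy tolerance, the relaxation stiffness, the residues excluded from relaxation, and the maximum number of outer relaxation iterations.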

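    MAX_TEMPLATE_HITS = 20
    RELAX_MAX_ITERATIONS = 0
    RELAX_ENERGY_TOLERANCE = 2.39
    RELAX_STIFFNESS = 10.0
    RELAX_EXCLUDE_RESIDUES = []
    RELAX_MAX_OUTER_ITERATIONS = 3

The script then marks the flags that must be supplied (FASTA files, output directory, database paths, template file paths, and so on) and runs the main function: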

    if __name__ == '__main__':
      flags.mark_flags_as_required([
          'fasta_paths',
          'output_dir',
          'data_dir',
          'uniref90_database_path',
          'mgnify_database_path',
          'template_mmcif_dir',
          'max_template_date',
          'obsolete_pdbs_path',
          'use_gpu_relax',
      ])

      app.run(main)

The main function

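1. Check that the flags are valid and that all required tools exist.

2. _check_flag(): checks whether the flags that were passed in are valid.

3. num_ensemble: as far as I can tell, this is the number of ensembling passes whose results the model averages.

With the monomer_casp14 preset, num_ensemble = 8; for every other preset it is 1.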

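    if FLAGS.model_preset == 'monomer_casp14':
      num_ensemble = 8
    else:
      num_ensemble = 1

4. Check whether any FASTA file names are duplicated. A set removes duplicates automatically, so a difference in length means two names clash.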
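The set-based check can be tried in isolation. The sketch below uses made-up paths and two helper names (`fasta_stems`, `has_duplicate_stems`) that are not part of AlphaFold; it shows that two files in different directories, even with different extensions, still clash when their stems match.

```python
import pathlib
from typing import List


def fasta_stems(paths: List[str]) -> List[str]:
    """Return each path's file name without its final extension."""
    return [pathlib.Path(p).stem for p in paths]


def has_duplicate_stems(paths: List[str]) -> bool:
    """True when two paths would map to the same output directory name."""
    stems = fasta_stems(paths)
    return len(stems) != len(set(stems))


# Hypothetical paths: different directories and extensions, same stem.
clashing = ["/data/a/target.fasta", "/data/b/target.fa"]
distinct = ["/data/a/T1050.fasta", "/data/b/T1024.fasta"]

print(fasta_stems(clashing))
print(has_duplicate_stems(clashing))
print(has_duplicate_stems(distinct))
```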

    fasta_names = [pathlib.Path(p).stem for p in FLAGS.fasta_paths]
    if len(fasta_names) != len(set(fasta_names)):
      raise ValueError('All FASTA paths must have a unique basename.')

5. run_multimer_system: True for the multimer preset, False otherwise.

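The two modes create their objects differently. In both cases two objects are instantiated: template_searcher and template_featurizer.

hmmsearch.py is a Python wrapper for hmmsearch, which searches a sequence database with a profile.

hmmbuild.py: builds an HMM profile from an MSA.

TemplateHitFeaturizer: converts template hits into template features.

HmmsearchHitFeaturizer: converts a3m hits from hmmsearch into template features.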

    if run_multimer_system:
      template_searcher = hmmsearch.Hmmsearch(
          binary_path=FLAGS.hmmsearch_binary_path,
          hmmbuild_binary_path=FLAGS.hmmbuild_binary_path,
          database_path=FLAGS.pdb_seqres_database_path)
      template_featurizer = templates.HmmsearchHitFeaturizer(
          mmcif_dir=FLAGS.template_mmcif_dir,
          max_template_date=FLAGS.max_template_date,
          max_hits=MAX_TEMPLATE_HITS,
          kalign_binary_path=FLAGS.kalign_binary_path,
          release_dates_path=None,
          obsolete_pdbs_path=FLAGS.obsolete_pdbs_path)
    else:
      template_searcher = hhsearch.HHSearch(
          binary_path=FLAGS.hhsearch_binary_path,
          databases=[FLAGS.pdb70_database_path])
      template_featurizer = templates.HhsearchHitFeaturizer(
          mmcif_dir=FLAGS.template_mmcif_dir,
          max_template_date=FLAGS.max_template_date,
          max_hits=MAX_TEMPLATE_HITS,
          kalign_binary_path=FLAGS.kalign_binary_path,
          release_dates_path=None,
          obsolete_pdbs_path=FLAGS.obsolete_pdbs_path)

monomer_data_pipeline = pipeline.DataPipeline(

jackhmmer_binary_path=FLAGS.jackhmmer_binary_path,

hhblits_binary_path=FLAGS.hhblits_binary_path,

uniref90_database_path=FLAGS.uniref90_database_path,

mgnify_database_path=FLAGS.mgnify_database_path,

bfd_database_path=FLAGS.bfd_database_path,

uniclust30_database_path=FLAGS.uniclust30_database_path,

small_bfd_database_path=FLAGS.small_bfd_database_path,

template_searcher=template_searcher,

template_featurizer=template_featurizer,

use_small_bfd=use_small_bfd,

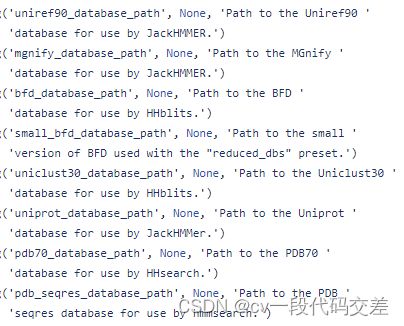

use_precomputed_msas=FLAGS.use_precomputed_msas)6.monomer_data_pipeline 单体数据管道

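pipeline.py: functions for building the input features of the AlphaFold model.

DataPipeline: a class that runs the alignment tools and assembles the input features.

The object monomer_data_pipeline is created and instantiated here: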

    monomer_data_pipeline = pipeline.DataPipeline(
        jackhmmer_binary_path=FLAGS.jackhmmer_binary_path,
        hhblits_binary_path=FLAGS.hhblits_binary_path,
        uniref90_database_path=FLAGS.uniref90_database_path,
        mgnify_database_path=FLAGS.mgnify_database_path,
        bfd_database_path=FLAGS.bfd_database_path,
        uniclust30_database_path=FLAGS.uniclust30_database_path,
        small_bfd_database_path=FLAGS.small_bfd_database_path,
        template_searcher=template_searcher,
        template_featurizer=template_featurizer,
        use_small_bfd=use_small_bfd,
        use_precomputed_msas=FLAGS.use_precomputed_msas)

7. Two cases, multimer and everything else:

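Multimer: the number of predictions per model is read from a flag, and the data pipeline is a multimer data pipeline object that receives the monomer data pipeline.

Otherwise: one prediction per model, and the data pipeline is simply the monomer data pipeline.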
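The wrapping relationship between the two pipelines can be sketched with stand-in classes. Everything here is hypothetical: `MonomerPipelineSketch`, `MultimerPipelineSketch`, `build_pipeline` and the value 5 are placeholders, and the assumption that the multimer pipeline delegates per-chain work to the monomer pipeline is my reading rather than something shown in the excerpt.

```python
from typing import Dict, List


class MonomerPipelineSketch:
    """Hypothetical stand-in for pipeline.DataPipeline."""

    def process(self, sequence: str) -> Dict[str, object]:
        # The real pipeline runs MSA and template searches; this stub
        # only records the sequence and its length.
        return {"sequence": sequence, "length": len(sequence)}


class MultimerPipelineSketch:
    """Hypothetical stand-in for pipeline_multimer.DataPipeline."""

    def __init__(self, monomer_data_pipeline: MonomerPipelineSketch):
        self._monomer = monomer_data_pipeline

    def process(self, sequences: List[str]) -> List[Dict[str, object]]:
        # Assumed behaviour: each chain goes through the wrapped pipeline.
        return [self._monomer.process(seq) for seq in sequences]


def build_pipeline(run_multimer_system: bool, multimer_predictions: int = 5):
    """Return (num_predictions_per_model, data_pipeline) for either mode."""
    monomer = MonomerPipelineSketch()
    if run_multimer_system:
        return multimer_predictions, MultimerPipelineSketch(monomer)
    return 1, monomer


num_predictions, data_pipeline = build_pipeline(run_multimer_system=True)
print(num_predictions, data_pipeline.process(["AC", "DEF"]))
```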

    if run_multimer_system:
      num_predictions_per_model = FLAGS.num_multimer_predictions_per_model
      data_pipeline = pipeline_multimer.DataPipeline(
          monomer_data_pipeline=monomer_data_pipeline,
          jackhmmer_binary_path=FLAGS.jackhmmer_binary_path,
          uniprot_database_path=FLAGS.uniprot_database_path,
          use_precomputed_msas=FLAGS.use_precomputed_msas)
    else:
      num_predictions_per_model = 1
      data_pipeline = monomer_data_pipeline

8.

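model_names: the preset list of model names, which depends on the chosen model preset.

model_config: the model configuration. Where num_ensemble is set in it depends on the model type (multimer versus everything else).

The loop then loads the model parameters and builds a model runner (model_runner). model_runners gets one entry per prediction that each model should produce: more than one only for multimer, exactly one for monomer. All entries of one model point to the same runner object.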
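The shape of the resulting dictionary is easier to see in a small sketch. `RunnerSketch` and `build_runners` are hypothetical stand-ins for model.RunModel and the loop in main, and the model names are illustrative only.

```python
from typing import Dict, List


class RunnerSketch:
    """Hypothetical stand-in for model.RunModel."""

    def __init__(self, model_name: str):
        self.model_name = model_name


def build_runners(
    model_names: List[str], num_predictions_per_model: int
) -> Dict[str, RunnerSketch]:
    """Register one key per (model, prediction index) pair."""
    runners: Dict[str, RunnerSketch] = {}
    for name in model_names:
        runner = RunnerSketch(name)  # built once per model
        for i in range(num_predictions_per_model):
            runners[f"{name}_pred_{i}"] = runner  # same object under each key
    return runners


runners = build_runners(["model_1_multimer", "model_2_multimer"], 2)
print(list(runners))
print(runners["model_1_multimer_pred_0"] is runners["model_1_multimer_pred_1"])
```

Two models with two predictions each give four keys, and the keys of one model share a single runner, so the parameters are loaded only once per model.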

    model_runners = {}
    model_names = config.MODEL_PRESETS[FLAGS.model_preset]
    for model_name in model_names:
      model_config = config.model_config(model_name)
      if run_multimer_system:
        model_config.model.num_ensemble_eval = num_ensemble
      else:
        model_config.data.eval.num_ensemble = num_ensemble
      model_params = data.get_model_haiku_params(
          model_name=model_name, data_dir=FLAGS.data_dir)
      model_runner = model.RunModel(model_config, model_params)
      for i in range(num_predictions_per_model):
        model_runners[f'{model_name}_pred_{i}'] = model_runner

    logging.info('Have %d models: %s', len(model_runners),
                 list(model_runners.keys()))

config.py: model configuration

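model_config(): if the name contains 'multimer', it returns the CONFIG_MULTIMER ConfigDict. Otherwise, if the name is not a key of CONFIG_DIFFS, it raises an error. For any other name, it deep-copies CONFIG and applies that model's entries from CONFIG_DIFFS.

copy.deepcopy(): deep copy. The copy is complete, so changing the new variable does not affect the original; in effect a fresh block of memory is copied.

cfg.update_from_flattened_dict(CONFIG_DIFFS[name]): updates the entries of cfg whose keys appear in CONFIG_DIFFS[name]. Because CONFIG_DIFFS[name] lists only the settings specific to that model, only those settings are changed.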
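The deep-copy-then-override pattern can be reproduced with plain dictionaries. This is a sketch under stated assumptions: `BASE_CONFIG`, `CONFIG_DIFFS_SKETCH`, `update_from_flattened` and `model_config_sketch` are hypothetical names, the values are illustrative rather than AlphaFold's real settings, and the helper only mimics the dotted-key behaviour of ml_collections for this simple case.

```python
import copy
from typing import Any, Dict

# Hypothetical base config and per-model overrides (illustrative values).
BASE_CONFIG: Dict[str, Any] = {
    "data": {"common": {"max_extra_msa": 1024, "use_templates": False}},
    "model": {"num_recycle": 3},
}
CONFIG_DIFFS_SKETCH: Dict[str, Dict[str, Any]] = {
    "model_1": {
        "data.common.max_extra_msa": 5120,
        "data.common.use_templates": True,
    },
}


def update_from_flattened(cfg: Dict[str, Any], flat: Dict[str, Any]) -> None:
    """Apply {'a.b.c': value} style overrides to a nested dict in place."""
    for dotted_key, value in flat.items():
        *parents, leaf = dotted_key.split(".")
        node = cfg
        for part in parents:
            node = node[part]  # KeyError if the path does not exist
        node[leaf] = value


def model_config_sketch(name: str) -> Dict[str, Any]:
    """Copy the base config, then apply the overrides for one model."""
    if name not in CONFIG_DIFFS_SKETCH:
        raise ValueError(f"Invalid model name {name}.")
    cfg = copy.deepcopy(BASE_CONFIG)  # leave the shared base untouched
    update_from_flattened(cfg, CONFIG_DIFFS_SKETCH[name])
    return cfg


cfg = model_config_sketch("model_1")
print(cfg["data"]["common"]["max_extra_msa"])
print(BASE_CONFIG["data"]["common"]["max_extra_msa"])
```

The override reaches only the copy; the base keeps its original value. By contrast, in the model_config listing quoted here the multimer branch returns CONFIG_MULTIMER without copying, so assignments made by the caller act on that shared object.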

    def model_config(name: str) -> ml_collections.ConfigDict:
      """Get the ConfigDict of a CASP14 model."""

      if 'multimer' in name:
        return CONFIG_MULTIMER

      if name not in CONFIG_DIFFS:
        raise ValueError(f'Invalid model name {name}.')
      cfg = copy.deepcopy(CONFIG)
      cfg.update_from_flattened_dict(CONFIG_DIFFS[name])
      return cfg

update_from_flattened_dict(): the example from its source docstring:

    update_from_flattened_dict(self, flattened_dict, strip_prefix='')

    Args:
      flattened_dict: A mapping (key path) -> value.
      strip_prefix: A prefix to be stripped from `path`. If specified, only
        paths matching `strip_prefix` will be processed.

    For example, consider we have the following values returned as flags::

      flags = {
          'flag1': x,
          'flag2': y,
          'config': 'some_file.py',
          'config.a.b': 1,
          'config.a.c': 2
      }

      config = ConfigDict({
          'a': {
              'b': 0,
              'c': 0
          }
      })

      config.update_from_flattened_dict(flags, 'config.')

    Then we will now have::

      config = ConfigDict({
          'a': {
              'b': 1,
              'c': 2
          }
      })

The rest will be filled in later.

9. data.get_model_haiku_params()

10. model.RunModel()

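11. If relaxation is enabled (FLAGS.run_relax), amber_relaxer is a relax.AmberRelaxation object built from the constants above; otherwise it is None.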

    if FLAGS.run_relax:
      amber_relaxer = relax.AmberRelaxation(
          max_iterations=RELAX_MAX_ITERATIONS,
          tolerance=RELAX_ENERGY_TOLERANCE,
          stiffness=RELAX_STIFFNESS,
          exclude_residues=RELAX_EXCLUDE_RESIDUES,
          max_outer_iterations=RELAX_MAX_OUTER_ITERATIONS,
          use_gpu=FLAGS.use_gpu_relax)
    else:
      amber_relaxer = None

12. Set the random seed
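Why the seed is drawn from `sys.maxsize // len(model_runners)` is not stated in these notes. My reading, which should be treated as an assumption, is that predict_structure later derives one seed per model as `model_index + random_seed * num_models`, so the division keeps every derived seed within `sys.maxsize`. The helper `per_model_seeds` below is hypothetical and only illustrates that arithmetic.

```python
import random
import sys
from typing import List


def per_model_seeds(random_seed: int, num_models: int) -> List[int]:
    """Assumed derivation: model_index + random_seed * num_models."""
    return [index + random_seed * num_models for index in range(num_models)]


num_models = 5  # placeholder for len(model_runners)
upper = sys.maxsize // num_models
random_seed = random.randrange(upper)  # same draw as in the listing

seeds = per_model_seeds(random_seed, num_models)
print(len(set(seeds)) == num_models)  # every model gets a distinct seed
print(max(seeds) <= sys.maxsize)      # none exceeds sys.maxsize
```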

    random_seed = FLAGS.random_seed
    if random_seed is None:
      random_seed = random.randrange(sys.maxsize // len(model_runners))
    logging.info('Using random seed %d for the data pipeline', random_seed)

13. Predict the structure

predict_structure()

    for i, fasta_path in enumerate(FLAGS.fasta_paths):
      fasta_name = fasta_names[i]
      predict_structure(
          fasta_path=fasta_path,
          fasta_name=fasta_name,
          output_dir_base=FLAGS.output_dir,
          data_pipeline=data_pipeline,
          model_runners=model_runners,
          amber_relaxer=amber_relaxer,
          benchmark=FLAGS.benchmark,
          random_seed=random_seed)