MapReduce: Reduce Join in Practice (from 尚硅谷)

Personal study notes; all material comes from 尚硅谷 (Atguigu).

The course these notes follow is hosted on Bilibili.

1. Reduce Join

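Main work on the Map side: tag the key/value pairs that come from different tables or files so that records from different sources can be told apart. Then use the join field as the key, use the remaining fields plus the newly added tag as the value, and emit the pair.

Main work on the Reduce side: by the time reduce runs, grouping by the join field (the key) is already done. Within each group, it only remains to separate the records that came from different files (they were tagged in the Map phase) and then merge them.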
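The tagging and grouping steps can be illustrated without Hadoop. The following is a minimal in-memory sketch, not the framework's implementation: the class `TagAndGroupSketch`, its methods, the `|` separator, and the sample rows are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// In-memory illustration of tagging (map side) and grouping (shuffle).
// Not Hadoop code; every name here is hypothetical.
public class TagAndGroupSketch {

    // "Map" step: key = join field, value = source tag + the remaining fields.
    static String[] tag(String source, String line, int joinFieldIndex) {
        String[] fields = line.split("\t");
        StringBuilder value = new StringBuilder(source);
        for (int i = 0; i < fields.length; i++) {
            if (i != joinFieldIndex) {
                value.append('|').append(fields[i]);
            }
        }
        return new String[] {fields[joinFieldIndex], value.toString()};
    }

    // "Shuffle" step: collect all tagged values that share the same join key.
    static Map<String, List<String>> group(List<String[]> tagged) {
        Map<String, List<String>> groups = new TreeMap<>();
        for (String[] kv : tagged) {
            groups.computeIfAbsent(kv[0], k -> new ArrayList<>()).add(kv[1]);
        }
        return groups;
    }

    public static void main(String[] args) {
        List<String[]> tagged = new ArrayList<>();
        tagged.add(tag("order", "1001\t01\t1", 1)); // order: id pid amount
        tagged.add(tag("order", "1004\t01\t4", 1));
        tagged.add(tag("pd", "01\tXiaomi", 0));     // pd: pid pname
        // prints {01=[order|1001|1, order|1004|4, pd|Xiaomi]}
        System.out.println(group(tagged));
    }
}
```

Each group is what a single reduce call would receive: one key and every tagged value that carries it, ready to be separated by tag and merged.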

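2. Reduce Join Hands-on Example

(1) Requirements

Sample data: provided through a shared download link (extraction code: 7zv7).

(2) Requirements analysis

Use the join condition as the Map output key. Records from both tables that satisfy the join condition, each carrying information about the file it came from, are then sent to the same ReduceTask, where they are stitched together.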

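- Records with the same pid must reach the same reduce call so that pid can be replaced by the corresponding pname; the map output key is therefore the shared field pid.

- The bean that wraps each record holds five fields: id, pid, amount, pname, and flag (which table the record came from).

- Records are delivered to the reduce method grouped by pid. Within each call, separate them by source file (a list of order beans and the pd bean), then iterate over the order beans and set the product name taken from the pd table.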
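The per-group logic in the last bullet can be tried out in plain Java. This is only a sketch under stated assumptions: `ReduceGroupSketch`, `Row`, and `joinGroup` are hypothetical names, `Row` is a stripped-down stand-in for the five-field bean, and no Hadoop types are involved.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Plain-Java sketch of what happens inside one reduce call (one pid group).
public class ReduceGroupSketch {

    // Hypothetical stand-in for the five-field bean: id, pid, amount, pname, flag.
    static class Row {
        final String id;
        final String pid;
        final int amount;
        final String pname;
        final String flag;

        Row(String id, String pid, int amount, String pname, String flag) {
            this.id = id;
            this.pid = pid;
            this.amount = amount;
            this.pname = pname;
            this.flag = flag;
        }
    }

    // Joins one group of rows sharing a pid; returns "id<TAB>pname<TAB>amount" lines.
    static List<String> joinGroup(List<Row> group) {
        List<Row> orders = new ArrayList<>();
        String pname = "";
        for (Row row : group) {
            if ("order".equals(row.flag)) {
                orders.add(row);   // keep every order record
            } else {
                pname = row.pname; // the pd record supplies the product name
            }
        }
        List<String> joined = new ArrayList<>();
        for (Row order : orders) {
            joined.add(order.id + "\t" + pname + "\t" + order.amount);
        }
        return joined;
    }

    public static void main(String[] args) {
        List<Row> group = Arrays.asList(
                new Row("1001", "01", 1, "", "order"),
                new Row("", "01", 0, "Xiaomi", "pd"),
                new Row("1004", "01", 4, "", "order"));
        for (String line : joinGroup(group)) {
            System.out.println(line);
        }
    }
}
```

The order records are buffered because the pd record may arrive at any position within the group, so pname is only known once the whole group has been scanned.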

(3) Code implementation

- TableBean

```java
package com.atguigu.mapreduce.reduceJoin;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

public class TableBean implements Writable {

    // order table: id pid amount
    // pd table:    pid pname
    private String id;
    private String pid;
    private int amount;
    private String pname;
    private String flag; // which table the record came from: order/pd

    // No-arg constructor, required so the framework can create the bean by reflection
    public TableBean() {
    }

    public String getId() {
        return id;
    }

    public void setId(String id) {
        this.id = id;
    }

    public String getPid() {
        return pid;
    }

    public void setPid(String pid) {
        this.pid = pid;
    }

    public int getAmount() {
        return amount;
    }

    public void setAmount(int amount) {
        this.amount = amount;
    }

    public String getPname() {
        return pname;
    }

    public void setPname(String pname) {
        this.pname = pname;
    }

    public String getFlag() {
        return flag;
    }

    public void setFlag(String flag) {
        this.flag = flag;
    }

    // Serialization: the field order here must match readFields exactly.
    // writeUTF rejects null, so every String field must be set (use "" when a table lacks it).
    @Override
    public void write(DataOutput dataOutput) throws IOException {
        dataOutput.writeUTF(id);
        dataOutput.writeUTF(pid);
        dataOutput.writeInt(amount);
        dataOutput.writeUTF(pname);
        dataOutput.writeUTF(flag);
    }

    // Deserialization: read the fields in the same order they were written
    @Override
    public void readFields(DataInput dataInput) throws IOException {
        this.id = dataInput.readUTF();
        this.pid = dataInput.readUTF();
        this.amount = dataInput.readInt();
        this.pname = dataInput.readUTF();
        this.flag = dataInput.readUTF();
    }

    // Final output format: id pname amount
    @Override
    public String toString() {
        return id + "\t" + pname + "\t" + amount;
    }
}
```
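The write/readFields pair of TableBean can be exercised outside Hadoop with the JDK's data streams. The sketch below uses a hypothetical `Bean` class that mirrors the same five fields (it does not implement Hadoop's `Writable`, to stay dependency-free) and shows two things: a round trip restores the fields when both methods use the same order, and `writeUTF` throws on a null field.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

public class WritableRoundTripSketch {

    // Hypothetical stand-in mirroring TableBean's serialization methods.
    static class Bean {
        String id = "";
        String pid = "";
        int amount;
        String pname = "";
        String flag = "";

        void write(DataOutput out) throws IOException {
            out.writeUTF(id);
            out.writeUTF(pid);
            out.writeInt(amount);
            out.writeUTF(pname);
            out.writeUTF(flag);
        }

        void readFields(DataInput in) throws IOException {
            id = in.readUTF();
            pid = in.readUTF();
            amount = in.readInt();
            pname = in.readUTF();
            flag = in.readUTF();
        }
    }

    // Serializes the bean to bytes and reads it back into a fresh instance.
    static Bean roundTrip(Bean bean) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            bean.write(new DataOutputStream(bytes));
            Bean copy = new Bean();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Bean order = new Bean();
        order.id = "1001";
        order.pid = "01";
        order.amount = 1;
        order.flag = "order";
        Bean copy = roundTrip(order);
        System.out.println(copy.id + " " + copy.pid + " " + copy.amount + " " + copy.flag);

        Bean broken = new Bean();
        broken.pname = null; // a field that was never filled in
        try {
            roundTrip(broken);
        } catch (NullPointerException e) {
            System.out.println("writeUTF does not accept null");
        }
    }
}
```

This is the reason the mapper sets unused String fields to an empty string rather than leaving them unset.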

- TableMapper

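TableMapper overrides the setup method to obtain fileName. setup runs once per map task, before any call to map, and each map task processes one input split; under the default splitting rule each of the small input files here forms its own split. Reading the file name in setup therefore happens once per file, whereas doing it inside map would repeat the lookup for every line.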
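The once-per-task versus once-per-record distinction can be seen in a small simulation. This is a simplified sketch of the general shape of a map task (setup, then map for each record), not Hadoop's actual `Mapper` class; `MapperLifecycleSketch` and the file name `order.txt` are assumptions for illustration.

```java
import java.util.Arrays;
import java.util.List;

// Simulates the call pattern of a map task over one split.
public class MapperLifecycleSketch {
    int setupCalls;
    int mapCalls;
    String fileName;

    // Called once before any record is processed: a good place for per-split state.
    void setup(String splitFileName) {
        setupCalls++;
        fileName = splitFileName;
    }

    // Called once per input line; it can simply reuse the cached fileName.
    void map(String line) {
        mapCalls++;
    }

    // One task over one split: setup once, then map for every record.
    void run(String splitFileName, List<String> lines) {
        setup(splitFileName);
        for (String line : lines) {
            map(line);
        }
    }

    public static void main(String[] args) {
        MapperLifecycleSketch task = new MapperLifecycleSketch();
        task.run("order.txt", Arrays.asList("1001\t01\t1", "1002\t02\t2", "1003\t03\t3"));
        System.out.println("setup calls: " + task.setupCalls + ", map calls: " + task.mapCalls);
    }
}
```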

```java
package com.atguigu.mapreduce.reduceJoin;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class TableMapper extends Mapper<LongWritable, Text, Text, TableBean> {

    // Used later in map(), so it is kept as a field
    private String fileName;
    private Text outK = new Text();
    private TableBean outV = new TableBean();

    @Override
    protected void setup(Mapper<LongWritable, Text, Text, TableBean>.Context context) throws IOException, InterruptedException {
        // setup() runs once per map task (one split, here one file), before any map() call.
        // Without setup(), the file name would have to be looked up for every line.
        FileSplit split = (FileSplit) context.getInputSplit();
        fileName = split.getPath().getName();
    }

    @Override
    protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, TableBean>.Context context) throws IOException, InterruptedException {
        // 1. Read one line and split it into fields
        String line = value.toString();
        String[] fields = line.split("\t");

        // 2. Decide which file the line belongs to
        if (fileName.contains("order")) { // order table
            // 3. Build the key/value pair
            // order fields: id pid amount; key: pid, value: TableBean
            outK.set(fields[1]);
            outV.setId(fields[0]);
            outV.setPid(fields[1]);
            outV.setAmount(Integer.parseInt(fields[2]));
            outV.setPname("");
            outV.setFlag("order");
        } else { // pd table
            // 3. Build the key/value pair
            // pd fields: pid pname; key: pid, value: TableBean
            outK.set(fields[0]);
            outV.setId("");
            outV.setPid(fields[0]);
            outV.setAmount(0);
            outV.setPname(fields[1]);
            outV.setFlag("pd");
        }

        // 4. Emit
        context.write(outK, outV);
    }
}
```

- TableReducer

```java
package com.atguigu.mapreduce.reduceJoin;

import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;
import java.lang.reflect.InvocationTargetException;
import java.util.ArrayList;

public class TableReducer extends Reducer<Text, TableBean, TableBean, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<TableBean> values, Reducer<Text, TableBean, TableBean, NullWritable>.Context context) throws IOException, InterruptedException {
        // Example group for key 01:
        // 01 1001 1 order
        // 01 1004 4 order
        // 01 小米 pd

        // Holders for the two sources
        ArrayList<TableBean> orderBeans = new ArrayList<>();
        TableBean pdBean = new TableBean();

        for (TableBean value : values) {
            if ("order".equals(value.getFlag())) { // order table
                // orderBeans.add(value) must not be used here: Hadoop reuses a single object
                // while iterating, so the list would hold repeated references to that one object.
                // Correct approach: copy the value into a newly created bean and add the copy.
                TableBean tmpTableBean = new TableBean();
                try {
                    BeanUtils.copyProperties(tmpTableBean, value);
                } catch (IllegalAccessException | InvocationTargetException e) {
                    // Fail the task instead of silently emitting an empty record
                    throw new IOException("Failed to copy order record", e);
                }
                orderBeans.add(tmpTableBean);
            } else { // pd (product) table
                try {
                    BeanUtils.copyProperties(pdBean, value);
                } catch (IllegalAccessException | InvocationTargetException e) {
                    throw new IOException("Failed to copy pd record", e);
                }
            }
        }

        // Set pname on every order bean and write it out
        for (TableBean orderBean : orderBeans) {
            orderBean.setPname(pdBean.getPname());
            context.write(orderBean, NullWritable.get());
        }
    }
}
```
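The object-reuse pitfall noted in the reducer comments can be reproduced in plain Java. The sketch below is a simulation rather than Hadoop's iterator: `reusingValues` is a hypothetical iterable that hands out one shared `Bean` and overwrites its field on every step, which is the behavior the comments describe.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;

public class ValueReuseSketch {

    static class Bean {
        String id;
    }

    // Returns the same Bean instance on every next(), with its field overwritten.
    static Iterable<Bean> reusingValues(List<String> ids) {
        return () -> {
            Bean shared = new Bean();
            Iterator<String> source = ids.iterator();
            return new Iterator<Bean>() {
                @Override
                public boolean hasNext() {
                    return source.hasNext();
                }

                @Override
                public Bean next() {
                    shared.id = source.next();
                    return shared;
                }
            };
        };
    }

    // Stores the iterated object directly: every list entry is the same instance.
    static List<String> idsWithoutCopy(List<String> ids) {
        List<Bean> kept = new ArrayList<>();
        for (Bean value : reusingValues(ids)) {
            kept.add(value);
        }
        return toIds(kept);
    }

    // Copies each value into a new Bean before storing it.
    static List<String> idsWithCopy(List<String> ids) {
        List<Bean> kept = new ArrayList<>();
        for (Bean value : reusingValues(ids)) {
            Bean copy = new Bean();
            copy.id = value.id;
            kept.add(copy);
        }
        return toIds(kept);
    }

    private static List<String> toIds(List<Bean> beans) {
        List<String> ids = new ArrayList<>();
        for (Bean bean : beans) {
            ids.add(bean.id);
        }
        return ids;
    }

    public static void main(String[] args) {
        List<String> ids = Arrays.asList("1001", "1004");
        System.out.println(idsWithoutCopy(ids)); // prints [1004, 1004]
        System.out.println(idsWithCopy(ids));    // prints [1001, 1004]
    }
}
```

Without the copy, every entry reflects the last record seen, which is why the reducer copies each order record into a new bean before adding it to the list.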

- TableDriver

package com.atguigu.mapreduce.reduceJoin;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class TableDriver {

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(TableDriver.class);

job.setMapperClass(TableMapper.class);

job.setReducerClass(TableReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(TableBean.class);

job.setOutputKeyClass(TableBean.class);

job.setOutputValueClass(NullWritable.class);

FileInputFormat.setInputPaths(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\11_input\\inputtable"));

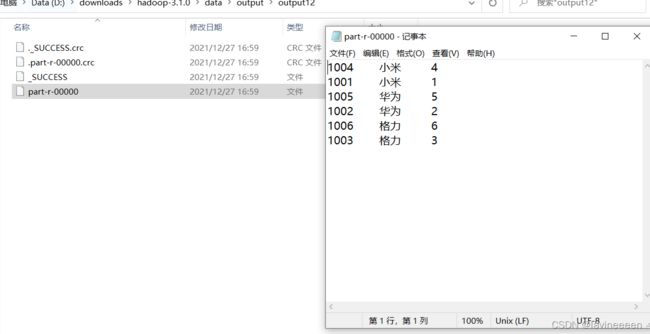

FileOutputFormat.setOutputPath(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\output\\output12"));

boolean result = job.waitForCompletion(true);

System.exit(result?0:1);

}

}

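(5) Summary

Drawback: with this approach the merge is done entirely in the Reduce phase. The reducers carry most of the processing load while the map nodes do very little computation, so resource utilization is poor. The Reduce phase is also highly prone to data skew, because all records that share a join key are gathered on the same reducer.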
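How a popular join key overloads one reducer can be shown with a few lines of arithmetic. The sketch below assigns keys to reducers with the hash-modulo rule used by Hadoop's default `HashPartitioner`; as an assumption for illustration it hashes plain `String` keys (Hadoop's `Text` computes its hash differently), and the key distribution is made up.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class SkewSketch {

    // Hash-modulo partitioning: the same key always maps to the same reducer.
    static int partition(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // Counts how many records each reducer would receive.
    static int[] load(List<String> keys, int numReduceTasks) {
        int[] counts = new int[numReduceTasks];
        for (String key : keys) {
            counts[partition(key, numReduceTasks)]++;
        }
        return counts;
    }

    public static void main(String[] args) {
        // Hypothetical skewed input: pid 01 appears in 8 of 10 records.
        List<String> keys = new ArrayList<>();
        for (int i = 0; i < 8; i++) {
            keys.add("01");
        }
        keys.add("02");
        keys.add("03");
        System.out.println(Arrays.toString(load(keys, 2))); // prints [1, 9]
    }
}
```

Adding reducers does not help with the hot key itself, since all of its records still land on one reducer.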

Solution: perform the data merge on the Map side.
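The idea behind a map-side merge can be sketched in plain Java, assuming the pd table is small enough to hold in memory. This is an illustration of the principle only, not the Hadoop implementation: `MapJoinSketch` and its methods are hypothetical, and in a real job the small table would have to be made available to every map task (for example through Hadoop's distributed cache) and loaded once, typically in setup.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class MapJoinSketch {

    // Loads the small table once into a pid -> pname lookup map.
    static Map<String, String> loadProducts(List<String> pdLines) {
        Map<String, String> pidToPname = new HashMap<>();
        for (String line : pdLines) {
            String[] fields = line.split("\t"); // pid pname
            pidToPname.put(fields[0], fields[1]);
        }
        return pidToPname;
    }

    // Joins each order line directly against the lookup map; no shuffle or reduce is needed.
    static List<String> joinOrders(List<String> orderLines, Map<String, String> pidToPname) {
        List<String> joined = new ArrayList<>();
        for (String line : orderLines) {
            String[] fields = line.split("\t"); // id pid amount
            String pname = pidToPname.getOrDefault(fields[1], "");
            joined.add(fields[0] + "\t" + pname + "\t" + fields[2]);
        }
        return joined;
    }

    public static void main(String[] args) {
        Map<String, String> products = loadProducts(Arrays.asList("01\tXiaomi"));
        List<String> orders = Arrays.asList("1001\t01\t1", "1004\t01\t4");
        for (String line : joinOrders(orders, products)) {
            System.out.println(line);
        }
    }
}
```

Because every order line is resolved where it is read, no records are grouped by key on a reducer, which removes the skew described above at the cost of keeping the small table in memory on each map task.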