HDFS Trash Mechanism

I. Enabling the Trash Mechanism

HDFS is a file system, and its trash is disabled by default: deleted data is removed permanently.

To enable Trash, add and set the following two properties in core-site.xml:

fs.trash.interval

1440

Note: retention time in minutes. A checkpoint is deleted once it is older than this value. The default is 0, which disables Trash.

fs.trash.checkpoint.interval

0

Note: interval between checkpoint creations, in minutes. It should be less than or equal to fs.trash.interval. The default is 0, in which case the value of fs.trash.interval is used.

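What the two parameters do: when the NameNode starts, it launches a daemon thread. Every fs.trash.checkpoint.interval minutes, the thread creates a checkpoint named after the current time under the .Trash directory and moves the recently deleted data into it. Checkpoints older than fs.trash.interval are deleted.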
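The fallback rule for the checkpoint interval can be sketched in plain Java. This is only an illustration: the class and method names are hypothetical and are not Hadoop APIs, and treating a value larger than fs.trash.interval the same way as 0 is an assumption about how an out-of-range setting is handled.

```java
/** Hypothetical helper, not part of Hadoop: resolves the two interval settings. */
final class TrashIntervalSketch {

    /** Trash is active only when fs.trash.interval is greater than 0. */
    static boolean trashEnabled(long trashIntervalMinutes) {
        return trashIntervalMinutes > 0;
    }

    /**
     * Minutes between checkpoints after applying the fallback rule.
     * A value of 0 falls back to fs.trash.interval. Falling back for values
     * larger than fs.trash.interval is an assumption of this sketch.
     */
    static long effectiveCheckpointMinutes(long trashIntervalMinutes,
                                           long checkpointIntervalMinutes) {
        if (checkpointIntervalMinutes <= 0
                || checkpointIntervalMinutes > trashIntervalMinutes) {
            return trashIntervalMinutes;
        }
        return checkpointIntervalMinutes;
    }

    public static void main(String[] args) {
        System.out.println(trashEnabled(0));                       // false
        System.out.println(effectiveCheckpointMinutes(1440, 0));   // 1440
        System.out.println(effectiveCheckpointMinutes(1440, 60));  // 60
    }
}
```

With the values shown earlier (1440 and 0), a checkpoint would be created once a day and kept for one day.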

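Note on Trash checkpoints:

1. A checkpoint is simply a directory in the user's trash that holds every file or directory deleted before the checkpoint was created. It sits at the same level as Current: /user/deploy/.Trash/{timestamp_of_checkpoint_creation}

2. Deleted files are moved into the trash's Current directory. At a configurable interval, HDFS checkpoints the files under Current into /user/${username}/.Trash/<creation time> and deletes old checkpoints once they expire.

Figure: parameter descriptions from the official documentation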

Figure: directories under .Trash
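To make the directory layout concrete, the following sketch builds the Current and checkpoint paths for a user. The class and method names are hypothetical. The yyMMddHHmmss name format is the one described in the source-code section of this article; other Hadoop versions may use a different format.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

/** Hypothetical helper, not part of Hadoop: builds trash paths as plain strings. */
final class TrashLayoutSketch {

    // Checkpoint directories are named after their creation time.
    private static final DateTimeFormatter CHECKPOINT_NAME =
            DateTimeFormatter.ofPattern("yyMMddHHmmss");

    static String trashRoot(String user) {
        return "/user/" + user + "/.Trash";
    }

    /** Directory that receives newly deleted files. */
    static String currentDir(String user) {
        return trashRoot(user) + "/Current";
    }

    /** Sibling of Current that holds everything deleted before createdAt. */
    static String checkpointDir(String user, LocalDateTime createdAt) {
        return trashRoot(user) + "/" + createdAt.format(CHECKPOINT_NAME);
    }

    public static void main(String[] args) {
        System.out.println(currentDir("deploy"));
        // /user/deploy/.Trash/Current
        System.out.println(checkpointDir("deploy", LocalDateTime.of(2018, 11, 27, 8, 0, 0)));
        // /user/deploy/.Trash/181127080000
    }
}
```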

II. Using the Trash

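Once Trash is enabled, files or directories deleted from HDFS are not purged immediately. They are moved into the trash's Current directory, for example /user/deploy/.Trash/Current. A delete command can also skip the trash and remove the data permanently.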

-- 1. Delete HDFS data

hadoop fs -rm -r -f /user/hive/external/dwd/dwd_test

-- 2. Restore a file deleted by mistake

hadoop fs -mv /user/deploy/.Trash/Current/user/hive/external/dwd/dwd_test /user/hive/external/dwd/dwd_test

-- 3. Force a delete that bypasses the trash

hadoop fs -rm -r -f -skipTrash /user/hive/external/dwd/dwd_test

-- 4. Manually delete a file from the trash

hadoop fs -rm -r -f /user/deploy/.Trash/Current/user/hive/external/dwd/dwd_test

-- 5. Empty the HDFS trash

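hadoop fs -expunge

(Note: hdfs dfs -expunge does not empty the trash at once. It deletes only the checkpoints that have already expired and then creates a new checkpoint, so recently deleted data stays in the trash.)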
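The path mapping behind commands 2 and 4 can be sketched as follows: the original absolute path is appended to the user's Current directory. The class and method names are hypothetical, and the sketch only builds strings; it does not talk to HDFS.

```java
/** Hypothetical helper, not part of Hadoop: maps a deleted path to its trash location. */
final class TrashRestoreSketch {

    /** Where a deleted absolute path lands while it is still in Current. */
    static String trashLocation(String user, String deletedPath) {
        if (!deletedPath.startsWith("/")) {
            throw new IllegalArgumentException("expected an absolute HDFS path: " + deletedPath);
        }
        return "/user/" + user + "/.Trash/Current" + deletedPath;
    }

    /** The shell command that moves the data back to its original location. */
    static String restoreCommand(String user, String deletedPath) {
        return "hadoop fs -mv " + trashLocation(user, deletedPath) + " " + deletedPath;
    }

    public static void main(String[] args) {
        System.out.println(restoreCommand("deploy", "/user/hive/external/dwd/dwd_test"));
    }
}
```

Once a checkpoint has been created, the data is no longer under Current but under the timestamped checkpoint directory, so the source path of the restore has to be adjusted accordingly.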

1. Official Hadoop documentation on creating and deleting checkpoints

expunge

Usage: hadoop fs -expunge [-immediate] [-fs <path>]

Permanently delete files in checkpoints older than the retention threshold from trash directory, and create new checkpoint.

When checkpoint is created, recently deleted files in trash are moved under the checkpoint. Files in checkpoints older than fs.trash.interval will be permanently deleted on the next invocation of -expunge command.

If the file system supports the feature, users can configure to create and delete checkpoints periodically by the parameter stored as fs.trash.checkpoint.interval (in core-site.xml). This value should be smaller or equal to fs.trash.interval.

If the -immediate option is passed, all files in the trash for the current user are immediately deleted, ignoring the fs.trash.interval setting.

If the -fs option is passed, the supplied filesystem will be expunged, rather than the default filesystem and checkpoint is created.

For example

hadoop fs -expunge -immediate -fs s3a://landsat-pds/

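2. In practice, running the command first deletes the checkpoints that have reached their expiry time, then creates a new checkpoint and moves the recently deleted data into it.

Figure: a manual run of hadoop fs -expunge deletes checkpoints first, then creates a new one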

III. How Trash Works: Source Code

2.1 Initialization

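When the NameNode starts, it launches an emptier daemon thread in the background. The thread periodically cleans the trash of every user on the HDFS cluster (the timer starts over when the NameNode restarts). The period is fs.trash.checkpoint.interval.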

Source path: org.apache.hadoop.hdfs.server.namenode

private void startTrashEmptier(final Configuration conf) throws IOException {
  long trashInterval =
      conf.getLong(FS_TRASH_INTERVAL_KEY, FS_TRASH_INTERVAL_DEFAULT);
  if (trashInterval == 0) {
    return;
  } else if (trashInterval < 0) {
    throw new IOException("Cannot start trash emptier with negative interval."
        + " Set " + FS_TRASH_INTERVAL_KEY + " to a positive value.");
  }

  // This may be called from the transitionToActive code path, in which
  // case the current user is the administrator, not the NN. The trash
  // emptier needs to run as the NN. See HDFS-3972.
  FileSystem fs = SecurityUtil.doAsLoginUser(
      new PrivilegedExceptionAction<FileSystem>() {
        @Override
        public FileSystem run() throws IOException {
          return FileSystem.get(conf);
        }
      });
  this.emptier = new Thread(new Trash(fs, conf).getEmptier(), "Trash Emptier");
  this.emptier.setDaemon(true);
  this.emptier.start();
}

The Trash class is then called to initialize the configuration and the trash policy.

Source path: org.apache.hadoop.fs.Trash

public Trash(FileSystem fs, Configuration conf) throws IOException {
  super(conf);
  trashPolicy = TrashPolicy.getInstance(conf, fs, fs.getHomeDirectory());
}

HDFS gives each user a dedicated home directory, /user/$USER/, and deleted data is moved under the home directory of the user who ran the delete.

Source path: org.apache.hadoop.fs.FileSystem

/** Return the current user's home directory in this filesystem.
 * The default implementation returns "/user/$USER/".
 */
public Path getHomeDirectory() {
  return this.makeQualified(
      new Path("/user/"+System.getProperty("user.name")));
}

The TrashPolicy object is created through reflection. The trash policy can be replaced by a user-defined implementation, specified with the fs.trash.classname parameter. By default the system uses TrashPolicyDefault.class.

Source path: org.apache.hadoop.fs.TrashPolicy

public static TrashPolicy getInstance(Configuration conf, FileSystem fs, Path home) {
  Class<? extends TrashPolicy> trashClass = conf.getClass(
      "fs.trash.classname", TrashPolicyDefault.class, TrashPolicy.class);
  TrashPolicy trash = ReflectionUtils.newInstance(trashClass, conf);
  trash.initialize(conf, fs, home); // initialize TrashPolicy
  return trash;
}

2.2 Starting the Timer Thread

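The NameNode starts the thread with this.emptier.start(). The emptier thread sleeps, wakes up periodically, and then deletes expired trash with trashPolicy.deleteCheckpoint() and creates a checkpoint with trashPolicy.createCheckpoint().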
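The wake-up time of the emptier is computed by a ceiling(now, emptierInterval) call whose body is not shown in this article. A minimal sketch, assuming it rounds the current time up to the next multiple of the interval counted from the Unix epoch, looks like this. The class name and the sample timestamp are hypothetical.

```java
import java.time.Duration;
import java.time.Instant;

/** Hypothetical sketch of how the emptier's next wake-up time could be derived. */
final class EmptierScheduleSketch {

    /** Largest multiple of intervalMs that is not after timeMs. */
    static long floor(long timeMs, long intervalMs) {
        return (timeMs / intervalMs) * intervalMs;
    }

    /** Next multiple of intervalMs strictly after floor(timeMs, intervalMs). */
    static long ceiling(long timeMs, long intervalMs) {
        return floor(timeMs, intervalMs) + intervalMs;
    }

    public static void main(String[] args) {
        long interval = Duration.ofMinutes(4320).toMillis();   // 3 days
        long now = Instant.parse("2018-11-25T03:15:00Z").toEpochMilli();
        long end = ceiling(now, interval);
        System.out.println(Instant.ofEpochMilli(end));          // 2018-11-27T00:00:00Z
        System.out.println(Duration.ofMillis(end - now));       // how long the thread would sleep
    }
}
```

Under this assumption the wake-ups fall on fixed boundaries rather than at "start time plus interval". With a 3-day interval the boundary 2018-11-27T00:00:00Z corresponds to 08:00:00 in a UTC+8 time zone.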

Source path: org.apache.hadoop.fs.TrashPolicyDefault (inner class Emptier)

@Override
public void run() {
  if (emptierInterval == 0)
    return; // trash disabled
  long now = Time.now();
  long end;
  while (true) {
    end = ceiling(now, emptierInterval);
    try { // sleep for interval
      Thread.sleep(end - now);
    } catch (InterruptedException e) {
      break; // exit on interrupt
    }

    try {
      now = Time.now();
      if (now >= end) {
        FileStatus[] homes = null;
        try {
          homes = fs.listStatus(homesParent); // list all home dirs
        } catch (IOException e) {
          LOG.warn("Trash can't list homes: "+e+" Sleeping.");
          continue;
        }

        for (FileStatus home : homes) { // dump each trash
          if (!home.isDirectory())
            continue;
          try {
            TrashPolicyDefault trash = new TrashPolicyDefault(
                fs, home.getPath(), conf);
            trash.deleteCheckpoint(); // delete expired checkpoints
            trash.createCheckpoint(); // create a new checkpoint
          } catch (IOException e) {
            LOG.warn("Trash caught: "+e+". Skipping "+home.getPath()+".");
          }
        }
      }
    } catch (Exception e) {
      LOG.warn("RuntimeException during Trash.Emptier.run(): ", e);
    }
  }
  try {
    fs.close();
  } catch(IOException e) {
    LOG.warn("Trash cannot close FileSystem: ", e);
  }
}

2.3 Deleting Expired Trash

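The thread examines the first-level subdirectories of /user/${user.name}/.Trash/ for every user. Each directory whose name matches the format yyMMddHHmmss is converted to a time value, time; Current and any directory whose name cannot be parsed are skipped. If the condition (now - deletionInterval > time) holds, the directory is deleted (deletionInterval = ${fs.trash.interval}). The default cleanup is therefore coarse-grained: it looks only at the first-level subdirectories of /user/${user.name}/.Trash/.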

public void deleteCheckpoint() throws IOException {
  FileStatus[] dirs = null;

  try {
    dirs = fs.listStatus(trash); // scan trash sub-directories
  } catch (FileNotFoundException fnfe) {
    return;
  }

  long now = Time.now();
  for (int i = 0; i < dirs.length; i++) {
    Path path = dirs[i].getPath();
    String dir = path.toUri().getPath();
    String name = path.getName();
    if (name.equals(CURRENT.getName())) // skip current
      continue;

    long time;
    try {
      time = getTimeFromCheckpoint(name); // convert the directory name to a time
    } catch (ParseException e) {
      LOG.warn("Unexpected item in trash: "+dir+". Ignoring.");
      continue;
    }

    if ((now - deletionInterval) > time) {
      if (fs.delete(path, true)) { // delete the directory
        LOG.info("Deleted trash checkpoint: "+dir);
      } else {
        LOG.warn("Couldn't delete checkpoint: "+dir+" Ignoring.");
      }
    }
  }
}

2.4 Creating a Checkpoint

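If a Current directory exists under /user/${user.name}/.Trash/, it is renamed to yyMMddHHmmss (the time at which that line of code executes). If there is no Current directory, the step is skipped. After the rename, Current is created again the next time deleted data is written.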

public void createCheckpoint() throws IOException {
  if (!fs.exists(current)) // no trash, no checkpoint
    return;

  Path checkpointBase;
  synchronized (CHECKPOINT) {
    checkpointBase = new Path(trash, CHECKPOINT.format(new Date()));
  }
  Path checkpoint = checkpointBase;

  int attempt = 0;
  while (true) {
    try {
      fs.rename(current, checkpoint, Rename.NONE); // rename the directory
      break;
    } catch (FileAlreadyExistsException e) {
      if (++attempt > 1000) {
        throw new IOException("Failed to checkpoint trash: "+checkpoint);
      }
      checkpoint = checkpointBase.suffix("-" + attempt);
    }
  }

  LOG.info("Created trash checkpoint: "+checkpoint.toUri().getPath());
}

IV. A Special Case

The cluster's trash parameters are configured as follows:

fs.trash.interval = 4320 // 3 days

fs.trash.checkpoint.interval = 0 // not set explicitly

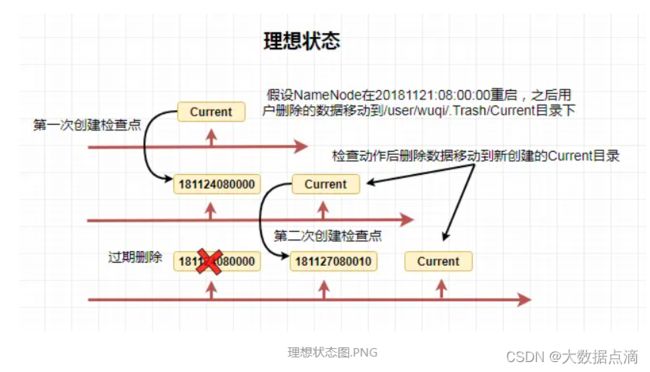

Effective value: fs.trash.checkpoint.interval=${fs.trash.interval}

At 2018-11-27 08:00:00 the emptier thread wakes up and first runs deleteCheckpoint(). Ideally the condition ((now - deletionInterval) > time) is satisfied:

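now: some instant later than 181127080000 and earlier than 181127080010

deletionInterval: 4320 minutes

time: 181124080000 => condition met, so the directory 181124080000 is deleted

In practice, however, the following edge case often occurs:

now: some instant later than 181127080000 and earlier than 181127080010

deletionInterval: 4320 minutes

time: 181124080033 => condition not met, so the checkpoint is skipped and createCheckpoint() runs next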
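The two evaluations above can be reproduced with plain date arithmetic. The sketch below mirrors only the comparison (now - deletionInterval) > time; the class and method names are hypothetical, and 08:00:05 is an arbitrary instant picked from the range given for now.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

/** Hypothetical sketch: evaluates the expiry condition for a checkpoint name. */
final class TrashEdgeCaseSketch {

    private static final DateTimeFormatter CHECKPOINT_NAME =
            DateTimeFormatter.ofPattern("yyMMddHHmmss");

    /** True when (now - deletionInterval) is strictly later than the checkpoint time. */
    static boolean isExpired(LocalDateTime now, Duration deletionInterval, String checkpointName) {
        LocalDateTime time = LocalDateTime.parse(checkpointName, CHECKPOINT_NAME);
        return now.minus(deletionInterval).isAfter(time);
    }

    public static void main(String[] args) {
        Duration deletionInterval = Duration.ofMinutes(4320);            // 3 days
        LocalDateTime now = LocalDateTime.of(2018, 11, 27, 8, 0, 5);      // assumed wake-up instant

        System.out.println(isExpired(now, deletionInterval, "181124080000")); // true
        System.out.println(isExpired(now, deletionInterval, "181124080033")); // false

        // The skipped checkpoint only qualifies at the following wake-up, 3 days later.
        System.out.println(isExpired(now.plusDays(3), deletionInterval, "181124080033")); // true
    }
}
```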

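The skipped checkpoint is not examined again until the next wake-up 3 days later, and the data in it may already have spent up to 3 days in Current before it was checkpointed. So when fs.trash.checkpoint.interval is left at its default, trash data that was meant to be kept for 3 days can in fact be retained for nearly 9 days.

V. The expunge Command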

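Users can also run the hadoop shell command manually to clear expired checkpoints and create a new one. It does the same work as a single run of the emptier thread.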

hdfs dfs -expunge

hadoop fs -expunge

Source path: org.apache.hadoop.fs.shell

protected void processArguments(LinkedList<PathData> args)
    throws IOException {
  Trash trash = new Trash(getConf());
  trash.expunge();
  trash.checkpoint();
}

Source path: org.apache.hadoop.fs.Trash

/** Delete old checkpoint(s). */
public void expunge() throws IOException {
  trashPolicy.deleteCheckpoint();
}