Hadoop Cluster Setup

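Series Contents

Common Ubuntu Issues

Hadoop 3.1.3 Installation (Standalone and Pseudo-Distributed)

Hadoop Cluster Setup

HBase 2.2.2 Installation (Standalone and Pseudo-Distributed)

ZooKeeper Cluster Setup

HBase Cluster Setup

Spark Installation and Programming Practice (Spark 2.4.0)

Spark Cluster Setup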

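Contents

- Series Contents

- Hadoop Cluster Startup Steps

- I. Network Configuration

- II. Passwordless SSH Login to the Nodes

- III. Configuring the PATH Variable

- IV. Configuring the Cluster/Distributed Environment

  - 1. Edit the configuration files

    - (1) workers

    - (2) core-site.xml

    - (3) hdfs-site.xml

    - (4) mapred-site.xml

    - (5) yarn-site.xml

  - 2. Delete the temporary files generated in pseudo-distributed mode

  - 3. Startup

  - 4. Running a distributed example

  - 5. Shutdown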

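Hadoop Cluster Startup Steps

- Step 1: Choose one machine to act as the Master.

- Step 2: On the Master node, create the hadoop user, install the SSH server, and install the Java environment.

- Step 3: On the Master node, install Hadoop and complete its configuration.

- Step 4: On each Slave node, create the hadoop user, install the SSH server, and install the Java environment.

- Step 5: Copy the "/usr/local/hadoop" directory from the Master node to each Slave node.

- Step 6: Start Hadoop on the Master node.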

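I. Network Configuration

1. On the Master node, run the following command to edit the hostname:

sudo vim /etc/hostname

Change the content to Master, then reboot the virtual machine.

2. Clone the virtual machine, start the clone, and run:

sudo vim /etc/hostname

Change the content to Slave1, then reboot the clone as well so that the new hostname takes effect.

3. Install net-tools, which provides ifconfig:

sudo apt install net-tools

4. Check the IP address:

ifconfig

Note down the IP address of each of the two virtual machines.

5. Edit the "/etc/hosts" file (on both nodes, so that each can resolve the other's name):

sudo vim /etc/hosts

Add the following entries. Each IP address must match the host it belongs to, so replace the addresses below with the ones you noted down:

192.168.168.128 Master

192.168.168.129 Slave1

6. Test connectivity:

ping Master -c 3

ping Slave1 -c 3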
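Before relying on ping, it can help to confirm that the hosts file really contains an entry for every node name. The following is a minimal sketch, not part of the original tutorial: the function name check_hosts is hypothetical, and it only checks that a name appears in a non-comment line, not that the IP address is correct.

```shell
# Check that every given hostname has an entry in a hosts file.
# Usage: check_hosts FILE HOST...
# Prints the names that are missing and returns non-zero if any are.
check_hosts() {
    hosts_file=$1
    shift
    missing=0
    for name in "$@"; do
        # Skip comment lines; look for the name in any column after the IP address.
        if ! awk -v n="$name" '
                /^[[:space:]]*#/ { next }
                { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                END { exit (found ? 0 : 1) }' "$hosts_file"; then
            echo "missing: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

On either node it could be called as: check_hosts /etc/hosts Master Slave1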

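II. Passwordless SSH Login to the Nodes

1. The Master node must be able to log in to every Slave node over SSH without a password. First, generate a key pair on the Master node. If a key pair was generated earlier, delete it and generate a new one, because the hostname has been changed since then. The commands are:

cd ~/.ssh # if this directory does not exist, run ssh localhost once first

rm ./id_rsa* # delete the previously generated keys (if any)

ssh-keygen -t rsa # press Enter at every prompt

2. So that the Master node can also log in to itself over SSH without a password, run the following command on the Master node:

cat ./id_rsa.pub >> ./authorized_keys

Afterwards, run "ssh Master" to verify. If a confirmation prompt appears, type yes. Once the test succeeds, run "exit" to return to the original terminal.

3. Next, on the Master node, copy the public key to the Slave1 node:

scp ~/.ssh/id_rsa.pub hadoop@Slave1:/home/hadoop/

When the transfer succeeds, scp reports the file as 100% copied.

4. On the Slave1 node, add the public key to the authorized keys:

mkdir -p ~/.ssh # create the directory if it does not exist yet

cat ~/id_rsa.pub >> ~/.ssh/authorized_keys

rm ~/id_rsa.pub # no longer needed once it has been appended

5. On the Master node, test the login:

ssh Slave1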
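Appending the key with cat a second time leaves a duplicate line in authorized_keys, and sshd with its default StrictModes setting may ignore the file if its permissions are too open. The sketch below shows one way to make the authorization step repeatable. The function name authorize_key and the optional second argument are hypothetical and are not part of the original steps.

```shell
# Append a public key to authorized_keys only if it is not already present,
# then tighten the permissions on the directory and the file.
# Usage: authorize_key PUBKEY_FILE [SSH_DIR]   (SSH_DIR defaults to ~/.ssh)
authorize_key() {
    pubkey_file=$1
    ssh_dir=${2:-$HOME/.ssh}
    auth_file=$ssh_dir/authorized_keys

    mkdir -p "$ssh_dir"
    touch "$auth_file"
    # -q quiet, -x whole-line match, -F fixed strings, -f read patterns from the key file
    if ! grep -qxFf "$pubkey_file" "$auth_file"; then
        cat "$pubkey_file" >> "$auth_file"
    fi
    chmod 700 "$ssh_dir"
    chmod 600 "$auth_file"
}
```

On Slave1 this could be run as: authorize_key ~/id_rsa.pub. OpenSSH also ships ssh-copy-id, which covers the copy and the append in a single command run from the Master node (ssh-copy-id hadoop@Slave1).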

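III. Configuring the PATH Variable

Edit the shell configuration file:

vim ~/.bashrc

Add the following line:

export PATH=$PATH:/usr/local/hadoop/bin:/usr/local/hadoop/sbin

Save the file, then run the following command to apply the change:

source ~/.bashrc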
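Every time ~/.bashrc is sourced again, a plain export line appends the same two directories to PATH once more. As an optional variation, the lines below could be placed in ~/.bashrc instead. This is a sketch, and the helper name add_to_path is hypothetical.

```shell
# Append a directory to PATH only if it is not already listed.
add_to_path() {
    case ":$PATH:" in
        *":$1:"*) ;;               # already present, nothing to do
        *) PATH="$PATH:$1" ;;
    esac
}

add_to_path /usr/local/hadoop/bin
add_to_path /usr/local/hadoop/sbin
export PATH
```

After sourcing the file, "command -v hdfs" and "command -v start-dfs.sh" should each print a path under /usr/local/hadoop if the installation is in place.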

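IV. Configuring the Cluster/Distributed Environment

1. Edit the configuration files

(1) workers

cd /usr/local/hadoop/etc/hadoop/

sudo vim workers

Replace the content with the hostnames of the worker nodes, one per line. This cluster has a single worker:

Slave1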

(2) core-site.xml

cd /usr/local/hadoop/etc/hadoop/

sudo vim core-site.xml

Change the content to:

<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://Master:9000</value>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/usr/local/hadoop/tmp</value>
        <description>A base for other temporary directories.</description>
    </property>
</configuration>

(3) hdfs-site.xml

cd /usr/local/hadoop/etc/hadoop/

sudo vim hdfs-site.xml

Change the content to the following. dfs.replication is set to 1 because the cluster has only one DataNode (Slave1):

<configuration>
    <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>Master:50090</value>
    </property>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
    <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/usr/local/hadoop/tmp/dfs/name</value>
    </property>
    <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/usr/local/hadoop/tmp/dfs/data</value>
    </property>
</configuration>

(4) mapred-site.xml

cd /usr/local/hadoop/etc/hadoop/

sudo vim mapred-site.xml

Change the content to:

<configuration>
    <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>
    <property>
        <name>mapreduce.jobhistory.address</name>
        <value>Master:10020</value>
    </property>
    <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>Master:19888</value>
    </property>
    <property>
        <name>yarn.app.mapreduce.am.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop</value>
    </property>
    <property>
        <name>mapreduce.map.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop</value>
    </property>
    <property>
        <name>mapreduce.reduce.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop</value>
    </property>
</configuration>

(5) yarn-site.xml

cd /usr/local/hadoop/etc/hadoop/

sudo vim yarn-site.xml

Change the content to:

<configuration>
    <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>Master</value>
    </property>
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
</configuration>
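After editing five files by hand, a quick read-back of the key properties can catch a typo before the cluster is started. The sketch below pulls one property value out of a site file with awk. The function name get_property is hypothetical, and the parsing assumes that each <name> and <value> element sits on a single line; it is not a general XML parser.

```shell
# Print the <value> of a named property from a Hadoop *-site.xml file.
# Usage: get_property KEY FILE
get_property() {
    awk -v key="$1" '
        /<name>/ {
            # Remember the most recent property name.
            name = $0
            sub(/.*<name>/, "", name); sub(/<\/name>.*/, "", name)
        }
        /<value>/ && name == key {
            # First value that follows the matching name.
            val = $0
            sub(/.*<value>/, "", val); sub(/<\/value>.*/, "", val)
            print val
            exit
        }' "$2"
}
```

For example, get_property fs.defaultFS /usr/local/hadoop/etc/hadoop/core-site.xml should print hdfs://Master:9000 for the configuration used in this article. Once Hadoop is on the PATH, "hdfs getconf -confKey fs.defaultFS" reports the value that Hadoop itself resolves.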

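2. Delete the temporary files generated in pseudo-distributed mode

On the Master node, remove the old temporary files and logs, then package the Hadoop directory and copy it to Slave1:

cd /usr/local

sudo rm -r ./hadoop/tmp # delete the Hadoop temporary files

sudo rm -r ./hadoop/logs/* # delete the log files

tar -zcf ~/hadoop.master.tar.gz ./hadoop # compress first, then copy

cd ~

scp ./hadoop.master.tar.gz Slave1:/home/hadoop

On the Slave1 node, unpack the archive and hand ownership to the hadoop user:

sudo rm -r /usr/local/hadoop # remove the old installation (if present)

sudo tar -zxf ~/hadoop.master.tar.gz -C /usr/local

sudo chown -R hadoop /usr/local/hadoop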
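With more than one worker, the scp step has to be repeated for every host. One way to drive it from the workers file is sketched below. The function name distribute and the DRY_RUN switch are hypothetical; by default the function only prints the commands it would run.

```shell
# Print (or run) the copy command for every host listed in a workers file.
# Usage: distribute ARCHIVE WORKERS_FILE
# Set DRY_RUN=0 to actually run scp; any other value only prints the commands.
distribute() {
    archive=$1
    workers_file=$2
    # Strip comments, then drop lines that are empty or only whitespace.
    sed -e 's/#.*//' -e '/^[[:space:]]*$/d' "$workers_file" |
    while read -r host; do
        if [ "${DRY_RUN:-1}" = "0" ]; then
            # Redirect stdin so scp cannot consume the remaining host list.
            scp "$archive" "$host:/home/hadoop/" < /dev/null
        else
            echo "scp $archive $host:/home/hadoop/"
        fi
    done
}
```

A dry run on the Master node could look like: distribute ~/hadoop.master.tar.gz /usr/local/hadoop/etc/hadoop/workers. The unpacking commands still have to be run on each worker afterwards.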

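3. Startup

Before the Hadoop cluster is started for the first time, format the NameNode on the Master node. Do this only once; do not format the NameNode again on later starts. The command is:

hdfs namenode -format

If formatting succeeds, the output includes a line stating that the storage directory has been successfully formatted.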
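Because formatting again would wipe the existing NameNode metadata, it can be worth guarding the command. The sketch below skips the format when the metadata directory already contains current/VERSION, a file the NameNode writes when it is formatted. The function name format_namenode_once is hypothetical, and the default path assumes the dfs.namenode.name.dir value used in this article.

```shell
# Format the NameNode only if its metadata directory has not been initialised yet.
# Usage: format_namenode_once [NAME_DIR]
# NAME_DIR must match dfs.namenode.name.dir in hdfs-site.xml (without the file: prefix).
format_namenode_once() {
    name_dir=${1:-/usr/local/hadoop/tmp/dfs/name}
    if [ -f "$name_dir/current/VERSION" ]; then
        echo "already formatted: $name_dir"
    else
        hdfs namenode -format
    fi
}
```

Calling format_namenode_once on the Master node in place of the bare command makes the step safe to repeat in a setup script.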

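Start Hadoop from the Master node with the following commands:

start-dfs.sh

start-yarn.sh

mr-jobhistory-daemon.sh start historyserver

In Hadoop 3.x the last script still works but prints a deprecation warning; the replacement it points to is "mapred --daemon start historyserver".

Run jps on each node to see which processes it has started. After a correct start, the Master node shows the NameNode, ResourceManager, SecondaryNameNode, and JobHistoryServer processes.

The Slave node shows the DataNode and NodeManager processes.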

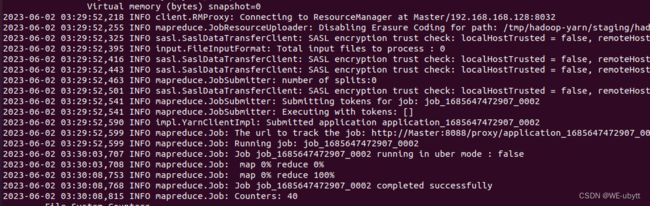
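Comparing the jps listing against the expected daemons by eye is easy to get wrong, since NameNode is also a substring of SecondaryNameNode. The sketch below reads jps output on standard input and reports any expected daemon that is missing. The function name check_daemons is hypothetical.

```shell
# Read `jps` output on stdin and report every expected daemon that is not listed.
# Usage: jps | check_daemons NameNode ResourceManager ...
check_daemons() {
    running=$(awk '{ print $2 }')    # second column of jps output is the class name
    status=0
    for daemon in "$@"; do
        # -x forces a whole-line match, so NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$running" | grep -qxF -- "$daemon"; then
            echo "not running: $daemon"
            status=1
        fi
    done
    return "$status"
}
```

On the Master node: jps | check_daemons NameNode ResourceManager SecondaryNameNode JobHistoryServer. On a Slave node: jps | check_daemons DataNode NodeManager. From the Master node, "hdfs dfsadmin -report" additionally lists the DataNodes that have registered with the NameNode.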

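4. Running a distributed example

- First, create the user directory on HDFS:

hdfs dfs -mkdir -p /user/hadoop

- Then create an input directory in HDFS and copy the configuration files from "/usr/local/hadoop/etc/hadoop" into it as input files:

hdfs dfs -mkdir input

hdfs dfs -put /usr/local/hadoop/etc/hadoop/*.xml input

- Now run the MapReduce job. It searches the input files for strings that match the regular expression 'dfs[a-z.]+' and writes the matches and their counts to the output directory:

hadoop jar /usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar grep input output 'dfs[a-z.]+'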
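MapReduce refuses to start a job whose output directory already exists, so running the example a second time fails unless the directory is removed first. The sketch below wraps the job with that cleanup and prints the result at the end. The function name run_grep_example is hypothetical; the hdfs and hadoop subcommands are the standard ones.

```shell
# Run the grep example, clearing a leftover output directory first,
# then print the job result.
run_grep_example() {
    jar=/usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar
    # "-test -d" returns 0 when the HDFS path exists and is a directory.
    if hdfs dfs -test -d output; then
        hdfs dfs -rm -r output
    fi
    # The glob is quoted so that HDFS, not the local shell, expands it.
    hadoop jar "$jar" grep input output 'dfs[a-z.]+' &&
        hdfs dfs -cat 'output/*'
}
```

Running run_grep_example on the Master node after the input directory has been populated should end with the list of matched strings and their counts.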

5. Shutdown

To shut the cluster down, run the following commands on the Master node:

stop-yarn.sh

stop-dfs.sh

mr-jobhistory-daemon.sh stop historyserver