Deploying a Hive data warehouse service with docker-compose - 筑梦之路

1. Create the Docker network

# Create the network. Note: the name hadoop-network (with a hyphen) cannot be used
docker network create hadoop_network

# List the networks
docker network ls

2. Deploy MySQL

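# Pull the image
docker pull mysql:5.7

# Generate the configuration
mkdir -p conf/ data/db/

cat > conf/my.cnf <<EOF
# NOTE: the body of my.cnf is missing from the source text; put your [mysqld] settings here
EOF

cat docker-compose.yml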

version: '3'
services:
  db:
    image: mysql:5.7 # MySQL version
    container_name: mysql
    hostname: mysql
    volumes:
      - ./data/db:/var/lib/mysql
      - ./conf/my.cnf:/etc/mysql/mysql.conf.d/mysqld.cnf
    restart: always
    ports:
      - "13306:3306"
    networks:
      - hadoop_network
    environment:
      MYSQL_ROOT_PASSWORD: 123456 # root password
      secure_file_priv:
    healthcheck:
      # MySQL does not speak HTTP, so probe it with mysqladmin rather than curl
      test: ["CMD-SHELL", "mysqladmin ping -h 127.0.0.1 --silent || exit 1"]
      interval: 10s
      timeout: 5s
      retries: 3

# Attach to the external network
networks:
  hadoop_network:
    external: true

docker-compose up -d

docker-compose ps
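
Since the Hive metastore stores its schema in this database, it can help to wait until the container's healthcheck reports healthy before continuing. The following is a minimal sketch, assuming the container name mysql from the compose file above; the function name wait_healthy and the 60-second default timeout are illustrative and not part of the original setup.

```shell
#!/usr/bin/env sh
# wait_healthy NAME [TIMEOUT_SECONDS]
# Polls the Docker health status of container NAME once per second.
# Returns 0 as soon as the status is "healthy", or 1 after TIMEOUT_SECONDS
# (default 60) have elapsed.
wait_healthy() {
    name=$1
    timeout=${2:-60}
    elapsed=0
    while [ "$elapsed" -lt "$timeout" ]; do
        # Health.Status is only populated for containers that define a healthcheck
        status=$(docker inspect --format '{{.State.Health.Status}}' "$name" 2>/dev/null)
        if [ "$status" = "healthy" ]; then
            echo "$name is healthy"
            return 0
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    echo "$name did not become healthy within ${timeout}s" >&2
    return 1
}
```

For example, `wait_healthy mysql 120` returns once the container is reported healthy, or fails after two minutes.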

3. Deploy the Hive data warehouse service

1) Download

# Download
wget http://archive.apache.org/dist/hive/hive-3.1.3/apache-hive-3.1.3-bin.tar.gz

# Extract
tar -zxvf apache-hive-3.1.3-bin.tar.gz

2) Configuration file

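cat images/hive-config/hive-site.xml

<configuration>
  <property>
    <name>hive.metastore.warehouse.dir</name>
    <value>/user/hive_remote/warehouse</value>
  </property>
  <property>
    <name>hive.metastore.local</name>
    <value>false</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://mysql:3306/hive_metastore?createDatabaseIfNotExist=true&amp;useSSL=false&amp;serverTimezone=Asia/Shanghai</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionDriverName</name>
    <value>com.mysql.jdbc.Driver</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionUserName</name>
    <value>root</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionPassword</name>
    <value>123456</value>
  </property>
  <property>
    <name>hive.metastore.schema.verification</name>
    <value>false</value>
  </property>
  <property>
    <name>system:user.name</name>
    <value>root</value>
    <description>user name</description>
  </property>
  <property>
    <name>hive.metastore.uris</name>
    <value>thrift://hive-metastore:9083</value>
  </property>
  <property>
    <name>hive.server2.thrift.bind.host</name>
    <value>0.0.0.0</value>
    <description>Bind host on which to run the HiveServer2 Thrift service.</description>
  </property>
  <property>
    <name>hive.server2.thrift.port</name>
    <value>10000</value>
  </property>
  <property>
    <name>hive.server2.active.passive.ha.enable</name>
    <value>true</value>
  </property>
</configuration>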
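
Before building the image it can be useful to read a property back from hive-site.xml, for instance to confirm that the metastore URI uses the same hostname as the compose service. The helper below is only a sketch: the name get_hive_prop is hypothetical, and it assumes each <name> and <value> element sits on a single line, which is the usual layout of Hadoop-style configuration files.

```shell
#!/usr/bin/env sh
# get_hive_prop FILE PROPERTY
# Prints the <value> that follows <name>PROPERTY</name> in FILE.
# Prints nothing if the property is not present.
get_hive_prop() {
    awk -v want="$2" '
        /<name>/ {
            # Strip everything around the element text and compare it
            line = $0
            sub(/.*<name>/, "", line)
            sub(/<\/name>.*/, "", line)
            found = (line == want)
        }
        found && /<value>/ {
            line = $0
            sub(/.*<value>/, "", line)
            sub(/<\/value>.*/, "", line)
            print line
            exit
        }
    ' "$1"
}
```

Calling `get_hive_prop images/hive-config/hive-site.xml hive.metastore.uris` prints the configured metastore URI. A real XML parser would be more robust; this line-based approach is chosen only to avoid extra dependencies.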

3) Startup script bootstrap.sh

#!/usr/bin/env sh

# Block until host $1 accepts TCP connections on port $2
wait_for() {
    echo "Waiting for $1 to listen on $2..."
    while ! nc -z "$1" "$2"; do echo waiting...; sleep 1; done
}

start_hdfs_namenode() {
    # Format the NameNode on first start only; the marker file records that it was done
    if [ ! -f /tmp/namenode-formated ]; then
        "${HADOOP_HOME}/bin/hdfs" namenode -format >/tmp/namenode-formated
    fi
    "${HADOOP_HOME}/bin/hdfs" --loglevel INFO --daemon start namenode
    # Follow the daemon log so the container stays in the foreground
    tail -f "${HADOOP_HOME}"/logs/*namenode*.log
}

start_hdfs_datanode() {
    wait_for "$1" "$2"
    "${HADOOP_HOME}/bin/hdfs" --loglevel INFO --daemon start datanode
    tail -f "${HADOOP_HOME}"/logs/*datanode*.log
}

start_yarn_resourcemanager() {
    "${HADOOP_HOME}/bin/yarn" --loglevel INFO --daemon start resourcemanager
    tail -f "${HADOOP_HOME}"/logs/*resourcemanager*.log
}

start_yarn_nodemanager() {
    wait_for "$1" "$2"
    "${HADOOP_HOME}/bin/yarn" --loglevel INFO --daemon start nodemanager
    tail -f "${HADOOP_HOME}"/logs/*nodemanager*.log
}

start_yarn_proxyserver() {
    wait_for "$1" "$2"
    "${HADOOP_HOME}/bin/yarn" --loglevel INFO --daemon start proxyserver
    tail -f "${HADOOP_HOME}"/logs/*proxyserver*.log
}

start_mr_historyserver() {
    wait_for "$1" "$2"
    "${HADOOP_HOME}/bin/mapred" --loglevel INFO --daemon start historyserver
    tail -f "${HADOOP_HOME}"/logs/*historyserver*.log
}

start_hive_metastore() {
    # The compose file passes a host and port to wait for, so wait before starting
    wait_for "$1" "$2"
    # Initialize the metastore schema on first start only
    if [ ! -f "${HIVE_HOME}/formated" ]; then
        schematool -initSchema -dbType mysql --verbose > "${HIVE_HOME}/formated"
    fi
    "${HIVE_HOME}/bin/hive" --service metastore
}

start_hive_hiveserver2() {
    # Wait for the metastore (host and port are passed by the compose file)
    wait_for "$1" "$2"
    "${HIVE_HOME}/bin/hive" --service hiveserver2
}

# Dispatch on the service name given as the first argument
case "$1" in
    hadoop-hdfs-nn)
        start_hdfs_namenode
        ;;
    hadoop-hdfs-dn)
        start_hdfs_datanode "$2" "$3"
        ;;
    hadoop-yarn-rm)
        start_yarn_resourcemanager
        ;;
    hadoop-yarn-nm)
        start_yarn_nodemanager "$2" "$3"
        ;;
    hadoop-yarn-proxyserver)
        start_yarn_proxyserver "$2" "$3"
        ;;
    hadoop-mr-historyserver)
        start_mr_historyserver "$2" "$3"
        ;;
    hive-metastore)
        start_hive_metastore "$2" "$3"
        ;;
    hive-hiveserver2)
        start_hive_hiveserver2 "$2" "$3"
        ;;
    *)
        echo "Please pass a valid service name to start"
        ;;
esac

4) Build the image: Dockerfile

FROM hadoop:v1

COPY hive-config/* ${HIVE_HOME}/conf/

COPY bootstrap.sh /opt/apache/

COPY mysql-connector-java-5.1.49/mysql-connector-java-5.1.49-bin.jar ${HIVE_HOME}/lib/

RUN sudo mkdir -p /home/hadoop/ && sudo chown -R hadoop:hadoop /home/hadoop/

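#RUN yum -y install which

docker build -t hadoop_hive:v1 . --no-cache

### Parameter notes
# -t: name (and tag) of the image
# . : build context; the Dockerfile in the current directory is used
# -f: path to the Dockerfile
# --no-cache: build without using the layer cache

5) Write docker-compose.yml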

version: '3'

services:

hadoop-hdfs-nn:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-hdfs-nn

hostname: hadoop-hdfs-nn

restart: always

privileged: true

env_file:

- .env

ports:

- "30070:${HADOOP_HDFS_NN_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-hdfs-nn"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_HDFS_NN_PORT} || exit 1"]

interval: 20s

timeout: 20s

retries: 3

hadoop-hdfs-dn-0:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-hdfs-dn-0

hostname: hadoop-hdfs-dn-0

restart: always

depends_on:

- hadoop-hdfs-nn

env_file:

- .env

ports:

- "30864:${HADOOP_HDFS_DN_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-hdfs-dn hadoop-hdfs-nn ${HADOOP_HDFS_NN_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_HDFS_DN_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-hdfs-dn-1:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-hdfs-dn-1

hostname: hadoop-hdfs-dn-1

restart: always

depends_on:

- hadoop-hdfs-nn

env_file:

- .env

ports:

- "30865:${HADOOP_HDFS_DN_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-hdfs-dn hadoop-hdfs-nn ${HADOOP_HDFS_NN_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_HDFS_DN_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-hdfs-dn-2:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-hdfs-dn-2

hostname: hadoop-hdfs-dn-2

restart: always

depends_on:

- hadoop-hdfs-nn

env_file:

- .env

ports:

- "30866:${HADOOP_HDFS_DN_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-hdfs-dn hadoop-hdfs-nn ${HADOOP_HDFS_NN_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_HDFS_DN_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-yarn-rm:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-yarn-rm

hostname: hadoop-yarn-rm

restart: always

env_file:

- .env

ports:

- "30888:${HADOOP_YARN_RM_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-yarn-rm"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "netstat -tnlp|grep :${HADOOP_YARN_RM_PORT} || exit 1"]

interval: 20s

timeout: 20s

retries: 3

hadoop-yarn-nm-0:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-yarn-nm-0

hostname: hadoop-yarn-nm-0

restart: always

depends_on:

- hadoop-yarn-rm

env_file:

- .env

ports:

- "30042:${HADOOP_YARN_NM_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-yarn-nm hadoop-yarn-rm ${HADOOP_YARN_RM_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_YARN_NM_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-yarn-nm-1:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-yarn-nm-1

hostname: hadoop-yarn-nm-1

restart: always

depends_on:

- hadoop-yarn-rm

env_file:

- .env

ports:

- "30043:${HADOOP_YARN_NM_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-yarn-nm hadoop-yarn-rm ${HADOOP_YARN_RM_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_YARN_NM_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-yarn-nm-2:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-yarn-nm-2

hostname: hadoop-yarn-nm-2

restart: always

depends_on:

- hadoop-yarn-rm

env_file:

- .env

ports:

- "30044:${HADOOP_YARN_NM_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-yarn-nm hadoop-yarn-rm ${HADOOP_YARN_RM_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "curl --fail http://localhost:${HADOOP_YARN_NM_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-yarn-proxyserver:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-yarn-proxyserver

hostname: hadoop-yarn-proxyserver

restart: always

depends_on:

- hadoop-yarn-rm

env_file:

- .env

ports:

- "30911:${HADOOP_YARN_PROXYSERVER_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-yarn-proxyserver hadoop-yarn-rm ${HADOOP_YARN_RM_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "netstat -tnlp|grep :${HADOOP_YARN_PROXYSERVER_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hadoop-mr-historyserver:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hadoop-mr-historyserver

hostname: hadoop-mr-historyserver

restart: always

depends_on:

- hadoop-yarn-rm

env_file:

- .env

ports:

- "31988:${HADOOP_MR_HISTORYSERVER_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hadoop-mr-historyserver hadoop-yarn-rm ${HADOOP_YARN_RM_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "netstat -tnlp|grep :${HADOOP_MR_HISTORYSERVER_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 3

hive-metastore:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hive-metastore

hostname: hive-metastore

restart: always

depends_on:

- hadoop-hdfs-dn-2

env_file:

- .env

ports:

- "30983:${HIVE_METASTORE_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hive-metastore hadoop-hdfs-dn-2 ${HADOOP_HDFS_DN_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "netstat -tnlp|grep :${HIVE_METASTORE_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 5

hive-hiveserver2:

image: hadoop_hive:v1

user: "hadoop:hadoop"

container_name: hive-hiveserver2

hostname: hive-hiveserver2

restart: always

depends_on:

- hive-metastore

env_file:

- .env

ports:

- "31000:${HIVE_HIVESERVER2_PORT}"

command: ["sh","-c","/opt/apache/bootstrap.sh hive-hiveserver2 hive-metastore ${HIVE_METASTORE_PORT}"]

networks:

- hadoop_network

healthcheck:

test: ["CMD-SHELL", "netstat -tnlp|grep :${HIVE_HIVESERVER2_PORT} || exit 1"]

interval: 30s

timeout: 30s

retries: 5

# 连接外部网络

networks:

hadoop_network:

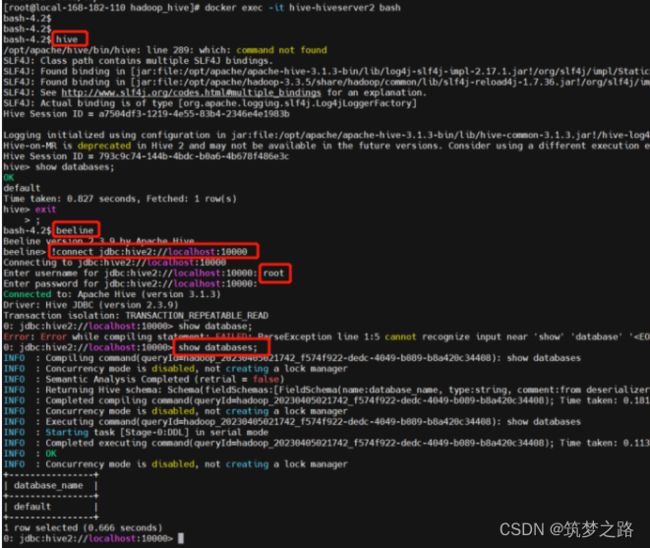

external: true6)启动测试验证
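
The compose file reads every port from a .env file that the article does not show. The sketch below writes a sample one. Only three values are taken from this article (9083 and 10000 from hive-site.xml, 9864 from the datanode infoPort in the error log further down); the rest are assumptions based on common Hadoop 3 defaults or placeholders, and must be checked against the configuration baked into the hadoop:v1 image. The function name write_env_sample is hypothetical.

```shell
#!/usr/bin/env sh
# write_env_sample [PATH]
# Writes a sample .env for the compose file to PATH (default: ./.env).
# Refuses to overwrite an existing file and returns 1 in that case.
write_env_sample() {
    target=${1:-.env}
    if [ -e "$target" ]; then
        echo "$target already exists, leaving it untouched" >&2
        return 1
    fi
    cat > "$target" <<'EOF'
# Taken from hive-site.xml and the datanode log line in this article
HADOOP_HDFS_DN_PORT=9864
HIVE_METASTORE_PORT=9083
HIVE_HIVESERVER2_PORT=10000
# ASSUMED values (Hadoop 3 defaults or placeholders); verify against your image
HADOOP_HDFS_NN_PORT=9870
HADOOP_YARN_RM_PORT=8088
HADOOP_YARN_NM_PORT=8042
HADOOP_YARN_PROXYSERVER_PORT=9111
HADOOP_MR_HISTORYSERVER_PORT=19888
EOF
}
```

With the file in place, the stack would typically be started with `docker-compose -f docker-compose.yaml up -d` and inspected with `docker-compose -f docker-compose.yaml ps`. Assuming beeline is on the PATH inside the image, a connection check could look like `docker exec -it hive-hiveserver2 beeline -u jdbc:hive2://hive-hiveserver2:10000 -n hadoop`.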

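[Problem]

If an error like the one below appears, the cause is that the services were started more than once: the data from the earlier run is still present, but the datanode IPs have changed. (A deployment directly on the host does not have this problem, because the host IP is fixed.) The node list therefore has to be refreshed. Clearing the old data also works, but it is not recommended; refreshing the nodes is the preferred approach. That advice applies when data is mounted outside the containers. In my case nothing is mounted externally, and the error occurred because the old containers still existed. Several solutions follow: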

org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.hdfs.server.protocol.DisallowedDatanodeException): Datanode denied communication with namenode because the host is not in the include-list: DatanodeRegistration(172.30.0.12:9866, datanodeUuid=f8188476-4a88-4cd6-836f-769d510929e4, infoPort=9864, infoSecurePort=0, ipcPort=9867, storageInfo=lv=-57;cid=CID-f998d368-222c-4a9a-88a5-85497a82dcac;nsid=1840040096;c=1680661390829)

[Solutions]

1. Remove the old containers and restart

# Remove the old containers
docker rm `docker ps -a|grep 'Exited'|awk '{print $1}'`
# Restart the services
docker-compose -f docker-compose.yaml up -d
# Check the status
docker-compose -f docker-compose.yaml ps

2. Log in to the namenode and refresh the datanodes

docker exec -it hadoop-hdfs-nn hdfs dfsadmin -refreshNodes

3. Log in to any node and refresh the datanodes

# Using hadoop-hdfs-dn-0 as an example
docker exec -it hadoop-hdfs-dn-0 hdfs dfsadmin -fs hdfs://hadoop-hdfs-nn:9000 -refreshNodes

4. Assign a fixed IP to each container in docker-compose
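
One possible way to do this, sketched under assumptions: Docker only accepts static addresses on networks created with a user-defined subnet, so hadoop_network would have to be recreated with one, and each service would then replace its `- hadoop_network` list entry with a mapping that sets ipv4_address. The subnet 172.30.0.0/16, the starting address, and the helper name print_fixed_ip_stanzas are illustrative only.

```shell
#!/usr/bin/env sh
# Prerequisite (assumed subnet; stop the containers and remove the old network first):
#   docker network create --subnet 172.30.0.0/16 hadoop_network

# print_fixed_ip_stanzas PREFIX FIRST_HOST SERVICE...
# Prints, for each SERVICE, the networks mapping to paste into its definition
# in docker-compose.yml, assigning PREFIX.FIRST_HOST, PREFIX.(FIRST_HOST+1), ...
print_fixed_ip_stanzas() {
    prefix=$1
    host=$2
    shift 2
    for svc in "$@"; do
        echo "  $svc:"
        echo "    networks:"
        echo "      hadoop_network:"
        echo "        ipv4_address: $prefix.$host"
        host=$((host + 1))
    done
}
```

For example, `print_fixed_ip_stanzas 172.30.0 20 hadoop-hdfs-dn-0 hadoop-hdfs-dn-1 hadoop-hdfs-dn-2` prints three stanzas with addresses 172.30.0.20 through 172.30.0.22. Generating the stanzas keeps the addresses consecutive and avoids typing mistakes across the twelve services.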