[Python Case Study] (10) Speeding Up Programs with Multithreading, Multiprocessing, and Coroutines

文章目录

- P1 Python并发编程简介

-

- 一、具体应用:

- 二、几种方式的联系与Python的支持:

-

- 1)对比

- 2)python的支持

- P2 怎样选择多线程、多进程、多协程

-

- 一、CPU密集型计算、IO密集型计算

-

- CPU密集型(CPU-bound):

- I/O密集型(I/O bound):

- 二、多线程、多进程、多协程的对比:

-

- 1、Python并发编程有三种方式:

- 2、 对比

-

- 1)多进程Process(multiprocessing)

- 2)多线程Thread(threading)

- 3)多协程Coroutine(asyncio)

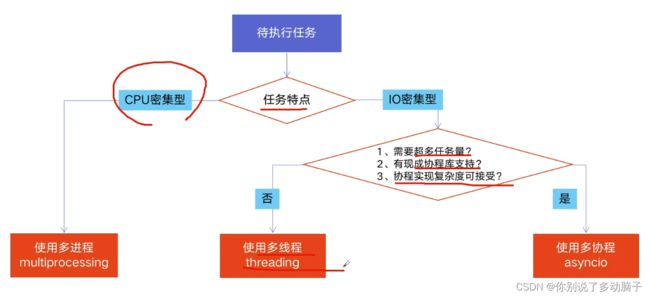

- 三、怎样根据任务选择对应技术?

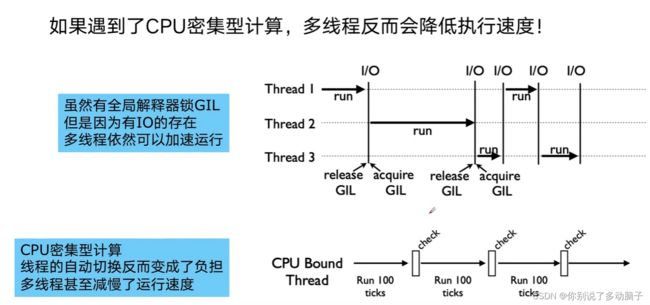

- P3:全局解释器锁GIL

-

- 一、Python速度慢的两大原因

- 二、GIL是什么?

- 三、为什么有GIL这个东西?

- 四、怎么规避GIL带来的限制

- P4:使用多线程加速程序Python代码

-

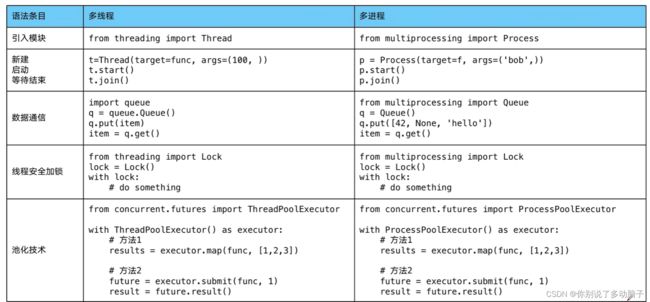

- 一、Python创建多线程的方法

- P5:Python实现生产者消费者爬虫

-

- 一、多组件的Pipeline技术架构

- 二、代码实现

- P6:线程安全问题以及Lock解决方案

-

- 一、线程安全概念介绍

- 二、Lock用于解决线程安全问题

- 三、实力代码演示问题以及解决方案

- P7: 线程池ThreadPoolExecututor

-

- 一、线程池的原理

- 二、使用线程池的好处

- 三、ThreadPoolExecutor的使用语法

- 四、使用线程池改造爬虫程序

- P8:使用线程池在Web服务中实现加速

-

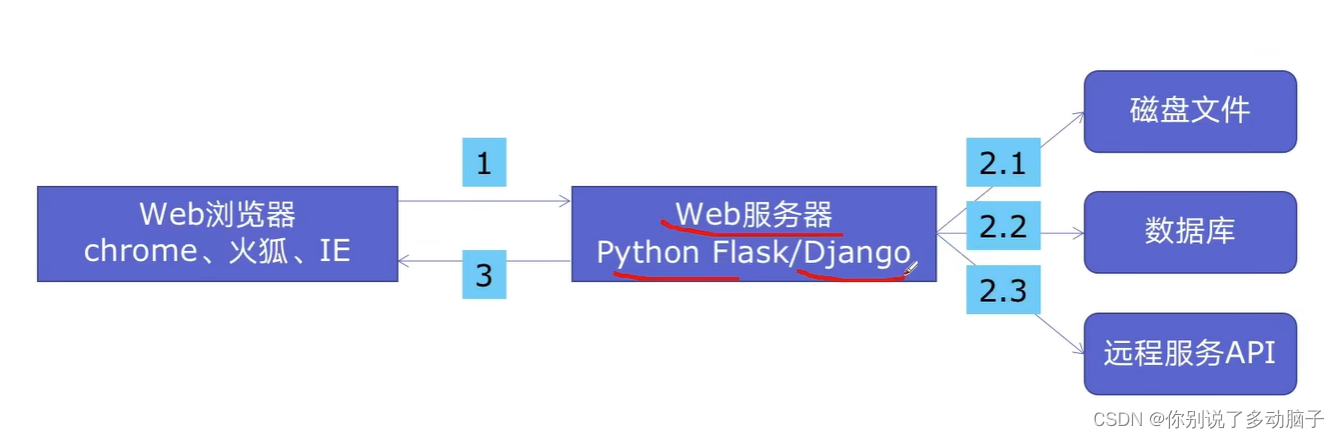

- 一、Web服务的架构以及特点

- 二、使用线程池ThreadPoolExecutor加速

- 三、代码用Flask实现Web服务并实现加速

- P9:多进程multiprocessing模块加速程序的运行

-

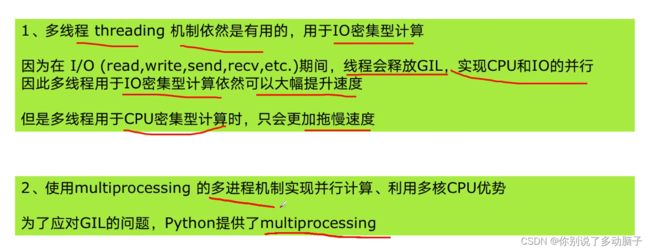

- 一、有了多线程threading,为什么还要用多进程multiprocessing

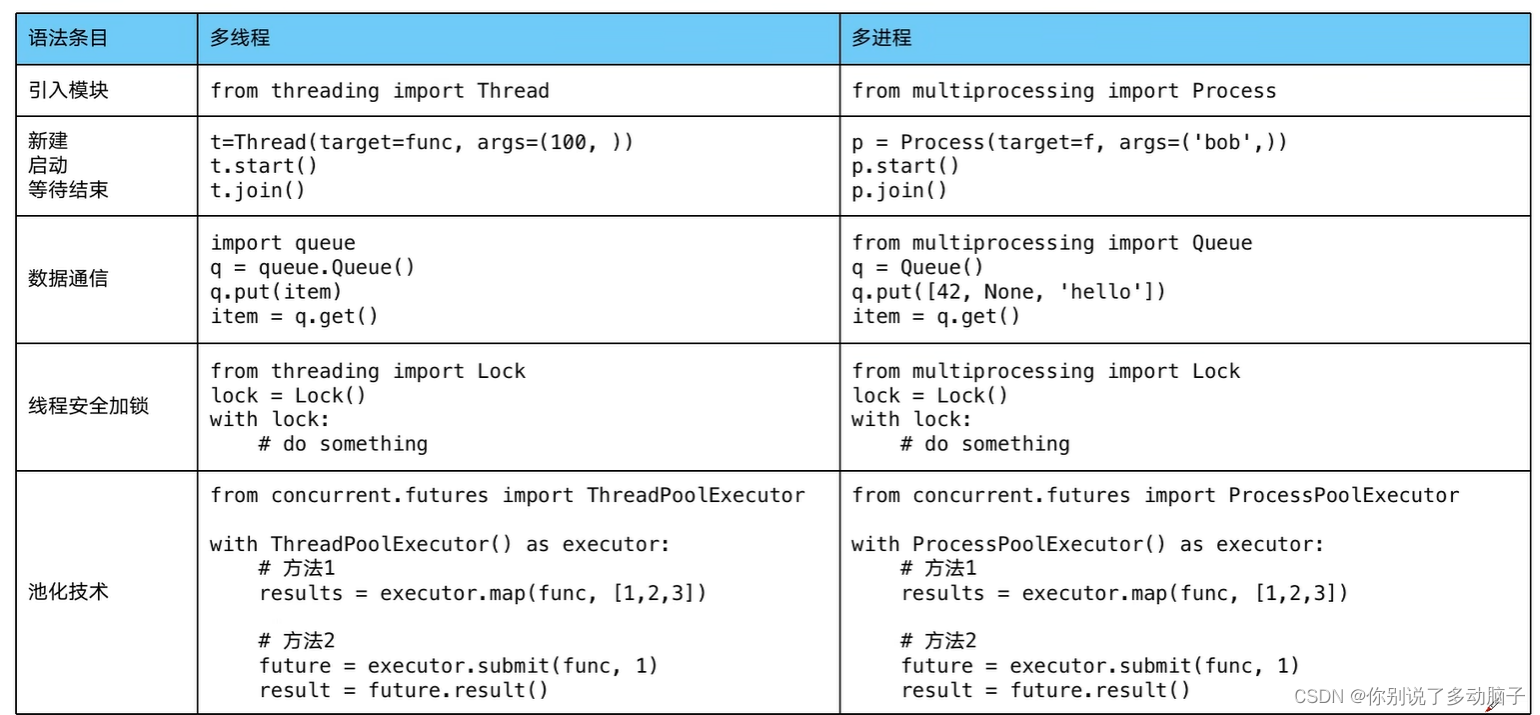

- 二、多进程multiprocessing知识梳理

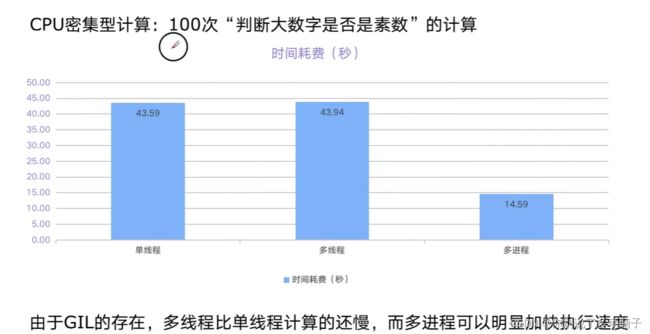

- 三、代码实战:单线程、多线程、多进程对比CPU密集计算速度

- P10: Python在Flask服务中使用多进程池加速程序运行

- P11:异步IO实现并发爬虫

- P12:在异步IO中使用信号量控制爬虫并发度

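P1: Introduction to concurrent programming in Python

I. Typical applications

- Concurrent web crawlers that fetch many pages at the same time
- App backends: a page that takes 3 seconds to open can be brought down to about 200 milliseconds with asynchronous, concurrent calls

II. How the approaches relate, and what Python supports

1) Comparison

Single-threaded serial execution (the unmodified program) >>> multithreaded concurrency (threading) >>> multi-CPU parallelism (multiprocessing) >>> multi-machine parallelism (hadoop/hive/spark)

- Multithreaded concurrency: while one thread waits on I/O, the CPU can run another thread; everything still runs on a single CPU.
- Multi-CPU parallelism: work runs on several cores or CPUs at once.
- Multi-machine parallelism: work is spread across many machines, as in big-data systems.

2) Python support

Multithreading: threading. It relies on the fact that the CPU and I/O can work at the same time, so the CPU does not sit idle waiting for I/O to finish.

Multiprocessing: multiprocessing. It uses multiple CPU cores to run tasks truly in parallel.

Asynchronous I/O: asyncio. Within a single thread, it uses the same overlap of CPU and I/O to run functions asynchronously.

Use Lock to lock a resource and prevent conflicting access (several threads or processes accessing one file at the same time will conflict; with a lock they take turns).

Use Queue to pass data between threads or processes and implement the producer-consumer pattern.

Use a thread pool or process pool (Pool) to simplify submitting tasks, waiting for them to finish, and collecting results.

Use subprocess to start an external program as a child process and interact with its input and output.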
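
Of the tools listed above, subprocess is the only one not revisited later in these notes, so here is a minimal sketch of it. The helper name `run_child` is made up for illustration, and the "external program" is simply the current Python interpreter so that the example runs anywhere.

```python
import subprocess
import sys


def run_child(code: str, stdin_text: str = "") -> str:
    """Run a short Python snippet in a child process and return its stdout."""
    completed = subprocess.run(
        [sys.executable, "-c", code],  # the external program: the current interpreter
        input=stdin_text,              # text sent to the child's stdin
        capture_output=True,           # collect stdout and stderr
        text=True,                     # work with str instead of bytes
        check=True,                    # raise CalledProcessError on a non-zero exit code
    )
    return completed.stdout


if __name__ == "__main__":
    # The child reads one line from stdin and echoes it in upper case.
    print(run_child("print(input().upper())", "hello\n"))
```

In real use the command list would name the external program and its arguments instead of `sys.executable`.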

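P2: How to choose among multithreading, multiprocessing, and coroutines

I. CPU-bound and I/O-bound computation

CPU-bound:

A CPU-bound (compute-bound) task finishes its I/O very quickly and spends its time on heavy computation, so CPU utilization is very high.

Examples: compression and decompression, encryption and decryption, regular-expression search.

I/O-bound:

In an I/O-bound task, the CPU spends most of its time waiting for I/O (disk/memory) reads and writes, so CPU utilization stays low.

Examples: file-processing programs, web crawlers, programs that read and write databases.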
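
One rough way to tell which category a task falls into is to compare the CPU time it consumes with the wall-clock time it takes. The sketch below is only an illustration: `cpu_share` and the two sample tasks are made-up names, and `time.sleep` stands in for real I/O.

```python
import time


def cpu_share(task) -> float:
    """Return CPU time divided by wall-clock time for one call of task()."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    task()
    cpu_used = time.process_time() - cpu_start
    wall_used = time.perf_counter() - wall_start
    return cpu_used / wall_used


def cpu_bound_task():
    # Pure computation: the CPU is busy for the whole call.
    sum(i * i for i in range(2_000_000))


def io_bound_task():
    # time.sleep stands in for waiting on a disk or the network.
    time.sleep(0.3)


if __name__ == "__main__":
    print("cpu-bound share:", round(cpu_share(cpu_bound_task), 2))
    print("io-bound share:", round(cpu_share(io_bound_task), 2))
```

A share close to 1 suggests a CPU-bound task; a share close to 0 suggests the task is mostly waiting.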

二、多线程、多进程、多协程的对比:

1、Python并发编程有三种方式:

多线程Thread、多进程Process、多协程Coroutine

2、 对比

关系:一个进程中,可以启动N个线程;一个线程中,可以启动N个协程

1)多进程Process(multiprocessing)

- 优点:可以利用多核CPU并行运算

- 缺点:占用资源最多、可启动数目比线程少

- 适用于:CPU密集型计算

2)多线程Thread(threading)

- 优点:相比进程、更轻量级、占用资源少

- 缺点:

- 相比进程:多线程只能并发执行,不能利用多CPU(GIL)

- 相比协程:启动数目有限制,占用内存资源,有线程切换开销

- 适用于:IO密集型计算、同时运行的任务数目要求不多

3)多协程Coroutine(asyncio)

- 优点:内存开销最少、启动协程数量最多

- 缺点:支持的库有限制(aiohttp vs requests(不支持协程))、代码实现复杂

- 适用于:IO密集型计算、需要超多任务运行、但有现成库支持的场景

三、怎样根据任务选择对应技术?

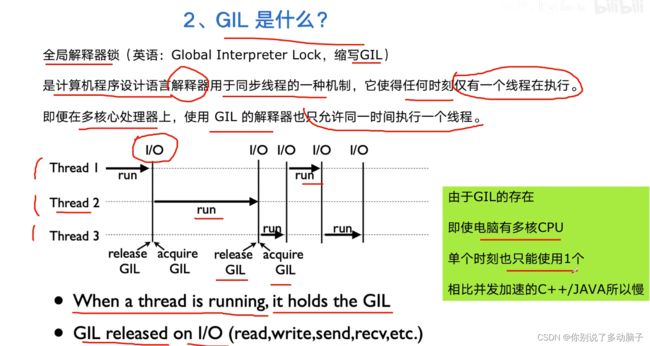

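P3: The global interpreter lock (GIL)

I. Two main reasons Python is slow

- Compared with C, C++, or Java, Python really is slow; in some special scenarios it is 100 to 200 times slower than C++.
- Because of this, many companies still write their infrastructure code in C/C++, for example the performance-critical low-level modules behind the recommendation, search, and storage engines at Alibaba, Tencent, and Kuaishou.

The two reasons:

1) Python is a dynamically typed language that is interpreted as it runs.

2) The GIL prevents Python threads from running in parallel on multiple CPU cores.

II. What is the GIL?

The global interpreter lock (GIL) is a mechanism that a programming-language interpreter uses to synchronize threads so that only one thread executes at any moment. Even on a multi-core processor, an interpreter that uses a GIL allows only one thread to execute at a time.

III. Why does the GIL exist?

- In short: the GIL was introduced early in Python's design to sidestep concurrency problems, and removing it has since proved very difficult.
- The GIL does have a benefit: it simplifies how Python manages shared resources.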
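
The effect of the GIL can be observed with a small experiment: run the same CPU-bound function several times in a row, then run it in several threads, and compare the timings. This is a sketch for illustration only; `count_down` and the workload size are arbitrary choices, and the timings depend on the machine and on the interpreter build.

```python
import threading
import time


def count_down(n: int) -> int:
    """CPU-bound busy loop; returns the number of iterations performed."""
    steps = 0
    while n > 0:
        n -= 1
        steps += 1
    return steps


def run_sequential(n: int, repeats: int) -> float:
    """Call count_down `repeats` times in one thread; return elapsed seconds."""
    start = time.perf_counter()
    for _ in range(repeats):
        count_down(n)
    return time.perf_counter() - start


def run_threaded(n: int, repeats: int) -> float:
    """Call count_down once in each of `repeats` threads; return elapsed seconds."""
    threads = [threading.Thread(target=count_down, args=(n,)) for _ in range(repeats)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return time.perf_counter() - start


if __name__ == "__main__":
    n, repeats = 2_000_000, 4
    print("sequential:", round(run_sequential(n, repeats), 3), "s")
    print("threaded:  ", round(run_threaded(n, repeats), 3), "s")
```

On a standard CPython build with the GIL, the threaded run is usually no faster than the sequential one, because only one thread executes Python bytecode at a time.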

IV. How to work around the limits imposed by the GIL

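P4: Speeding up Python code with multithreading

I. How to create threads in Python

Step 1: Prepare a function

    def my_func(a, b):
        do_craw(a, b)

Step 2: Create a thread

    import threading

    t = threading.Thread(target=my_func, args=(100, 200))  # args holds the function's arguments

Step 3: Start the thread

    t.start()

Step 4: Wait for it to finish

    t.join()

- A one-element args tuple needs a trailing comma, as in args=(url,); without the comma, (url) is just the string itself.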
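
The four steps can be put together into a small runnable sketch. The functions `my_func` and `shout` here are placeholders for real work such as fetching a page; the second thread shows the one-element tuple with its trailing comma.

```python
import threading

results = []


def my_func(a, b):
    # Stand-in for real work such as fetching a page.
    results.append(a + b)


def shout(word):
    results.append(word.upper())


# Two positional arguments: an ordinary two-element tuple.
t1 = threading.Thread(target=my_func, args=(100, 200))
# One positional argument: the trailing comma is what makes this a tuple.
t2 = threading.Thread(target=shout, args=("hello",))

for t in (t1, t2):
    t.start()
for t in (t1, t2):
    t.join()  # block until the thread has finished

print(sorted(results, key=str))
```

Writing `args=("hello")` instead would pass the plain string, which the thread would then unpack character by character into five separate arguments.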

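A concrete example

Create a file named blog_spider.py in the same directory:

    import requests

    # The 50 list pages to crawl
    urls = [
        f"https://www.cnblogs.com/#p{page}"
        for page in range(1, 51)
    ]


    def craw(url):
        """Download one page and print its URL and length."""
        r = requests.get(url)
        print(url, len(r.text))

A second script imports blog_spider and compares a single-threaded crawl with a multithreaded one:

    import threading
    import time

    import blog_spider


    def single_thread():
        print("single_thread begin")
        for url in blog_spider.urls:
            blog_spider.craw(url)
        print("single_thread end")


    def multi_thread():
        print("multi_thread begin")
        threads = []
        for url in blog_spider.urls:
            threads.append(
                # A one-element tuple needs the trailing comma; (url) alone is just a string
                threading.Thread(target=blog_spider.craw, args=(url,))
            )

        for thread in threads:
            thread.start()

        for thread in threads:
            thread.join()
        print("multi_thread end")


    if __name__ == "__main__":
        # Time both versions to compare them
        start = time.time()
        single_thread()
        print("single_thread cost:", time.time() - start, "seconds")

        start = time.time()
        multi_thread()
        print("multi_thread cost:", time.time() - start, "seconds")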

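P5: A producer-consumer crawler in Python

I. Multi-component pipeline architecture

Complex jobs are rarely done in one go; they are split into intermediate steps that are completed one after another. In the crawler below, one step downloads pages and the next step parses them.

II. Code

blog_spider.py, now with a parse function:

    import requests
    from bs4 import BeautifulSoup

    urls = [
        f"https://www.cnblogs.com/#p{page}"
        for page in range(1, 51)
    ]


    def craw(url):
        """Download one page and return its HTML."""
        r = requests.get(url)
        return r.text


    def parse(html):
        """Return the href of every link with class="post-item-title"."""
        soup = BeautifulSoup(html, "html.parser")
        links = soup.find_all("a", class_="post-item-title")
        return [link["href"] for link in links]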

A complex crawler can be split into many modules, and each module can be handled by its own group of threads:

    import queue
    import random
    import threading
    import time

    import blog_spider


    def do_craw(url_queue: queue.Queue, html_queue: queue.Queue):
        """Producer: take a URL, download the page, pass the HTML on."""
        while True:
            url = url_queue.get()
            html = blog_spider.craw(url)
            html_queue.put(html)
            print(threading.current_thread().name, f"craw {url}",
                  "url_queue.size=", url_queue.qsize())
            time.sleep(random.randint(1, 2))


    def do_parse(html_queue: queue.Queue, fout):
        """Consumer: take HTML, extract the links, write them to the file."""
        while True:
            html = html_queue.get()
            results = blog_spider.parse(html)
            for result in results:
                fout.write(str(result) + "\n")
            print(threading.current_thread().name, "results.size", len(results),
                  "html_queue.size=", html_queue.qsize())
            time.sleep(random.randint(1, 2))


    if __name__ == "__main__":
        url_queue = queue.Queue()
        html_queue = queue.Queue()
        for url in blog_spider.urls:
            url_queue.put(url)

        # Three producer threads download pages
        for idx in range(3):
            t = threading.Thread(target=do_craw, args=(url_queue, html_queue),
                                 name=f"craw{idx}")
            t.start()

        # Two consumer threads parse pages and share one output file
        fout = open("o2.data.txt", "w")
        for idx in range(2):
            t = threading.Thread(target=do_parse, args=(html_queue, fout),
                                 name=f"parse{idx}")
            t.start()
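
The worker loops in the crawler run `while True`, so its threads never exit on their own. One common way to let such a pipeline shut down is to push a sentinel value through each queue. The sketch below is not the author's code: it uses made-up names (`STOP`, `run_pipeline`) and replaces downloading and parsing with simple arithmetic so that it runs offline.

```python
import queue
import threading

STOP = object()  # sentinel: tells a worker there is no more work


def producer(in_queue: queue.Queue, out_queue: queue.Queue):
    while True:
        item = in_queue.get()
        if item is STOP:
            break
        out_queue.put(item * item)  # stand-in for downloading a page


def consumer(out_queue: queue.Queue, results: list, lock: threading.Lock):
    while True:
        item = out_queue.get()
        if item is STOP:
            break
        with lock:  # results is shared by all consumers
            results.append(item)


def run_pipeline(items, n_producers=3, n_consumers=2):
    in_queue, out_queue = queue.Queue(), queue.Queue()
    results, lock = [], threading.Lock()
    for item in items:
        in_queue.put(item)
    for _ in range(n_producers):
        in_queue.put(STOP)  # one sentinel per producer, queued after all items

    producers = [threading.Thread(target=producer, args=(in_queue, out_queue))
                 for _ in range(n_producers)]
    consumers = [threading.Thread(target=consumer, args=(out_queue, results, lock))
                 for _ in range(n_consumers)]
    for t in producers + consumers:
        t.start()
    for t in producers:
        t.join()  # every item has now been processed and queued
    for _ in range(n_consumers):
        out_queue.put(STOP)  # one sentinel per consumer
    for t in consumers:
        t.join()
    return sorted(results)


if __name__ == "__main__":
    print(run_pipeline(range(10)))
```

Because each worker stops after reading exactly one sentinel, the number of sentinels must match the number of workers reading from that queue.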

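P6: Thread safety and the Lock solution

I. What thread safety means

- A function or library is thread-safe if, when called from multiple threads, it handles the variables shared between those threads correctly, so the program still does what it should.
- Conversely, code is not thread-safe when a thread switch, which can happen at any point during execution, leads to unpredictable results.

For example, the bank withdrawal problem:

    def draw(account, amount):
        if account.balance >= amount:
            account.balance -= amount

If two threads both pass the balance check before either one subtracts, both withdrawals go through and the balance can become negative.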

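II. Using Lock to solve thread-safety problems

To solve the problem above, the code has to be protected by a lock: acquire the lock first, then run the code. There are two ways to write this.

Usage 1: the try-finally pattern

    import threading

    lock = threading.Lock()

    lock.acquire()
    try:
        pass  # do something
    finally:
        lock.release()

Usage 2: the with pattern

    import threading

    lock = threading.Lock()

    with lock:
        pass  # do something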
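
As a small runnable illustration of the with pattern, the sketch below has several threads increment one shared counter. The `Counter` class and `run` helper are made up for this example.

```python
import threading


class Counter:
    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # The lock is acquired here and released on exit, even if an exception is raised.
        with self._lock:
            self.value += 1


def run(n_threads=8, n_increments=10_000):
    counter = Counter()

    def worker():
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(run())
```

With the lock in place, the final value always equals the number of threads times the number of increments per thread.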

三、实力代码演示问题以及解决方案

- 会出现-600余额的情况,这是有问题的

import threading

import time

**lock = threading.Lock()**

class Account:

def __init__(self, balance):

self.balance = balance

def draw(account, amount):

**with lock:**

if account.balance >= amount:

time.sleep(0.1)

print(threading.current_thread().name, "取钱成功")

account.balance -= amount

print(threading.current_thread().name,

"余额", account.balance)

else:

print(threading.current_thread().name,

"取钱失败,余额不足")

if __name__ == "__main__":

account = Account(1000)

ta = threading.Thread(name="ta", target=draw,args=(account, 800))

tb = threading.Thread(name="tb", target=draw,args=(account, 800))

ta.start()

tb.start()

需要对上面代码进行简要修改,修改部分标粗

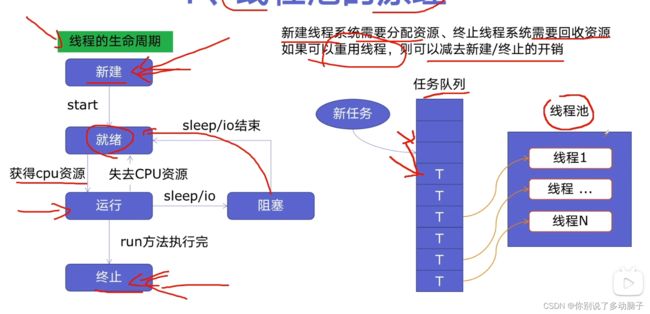

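P7: The thread pool ThreadPoolExecutor

I. How a thread pool works

Creating a thread requires the system to allocate resources, and terminating a thread requires it to reclaim them. If threads are reused, the cost of creating and terminating them is avoided. That is the idea behind a thread pool.

II. Benefits of a thread pool

- Performance: threads are reused, which removes much of the cost of creating and terminating them
- Best fit: bursts of many requests, or work that needs many threads, where each task is short
- Protection: avoids the overload and slow responses caused by creating too many threads
- Simpler code: the thread pool syntax is more concise than creating and running threads by hand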

III. ThreadPoolExecutor usage

There are two ways to use it.

Usage 1: map (simple). The results come back in the same order as the input arguments.

    from concurrent.futures import ThreadPoolExecutor, as_completed

    with ThreadPoolExecutor() as pool:
        results = pool.map(craw, urls)  # map takes a function and an iterable of arguments

        for result in results:
            print(result)

If the function takes several parameters, pass one iterable per parameter, in order; there is no need to build tuples:

    with ThreadPoolExecutor() as pool:
        # results = pool.map(run_noerror, arg)
        results = pool.map(run_noerror, titles, start_list, end_list)  # one iterable per parameter

Usage 2: futures (more powerful). With as_completed, the order of the results is not fixed.

    from concurrent.futures import ThreadPoolExecutor, as_completed

    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(craw, url) for url in urls]

        for future in futures:  # option 1: results in the same order as urls
            print(future.result())

        for future in as_completed(futures):  # option 2: whichever task finishes first, in no fixed order
            print(future.result())
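
The ordering behaviour of both usages can be checked offline with a stand-in for `craw`. In this sketch `fake_craw` and `add` are hypothetical helpers, and the delays are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def fake_craw(delay: float) -> float:
    """Hypothetical stand-in for craw: sleeps, then returns its argument."""
    time.sleep(delay)
    return delay


def add(a: int, b: int) -> int:
    return a + b


delays = [0.3, 0.1, 0.2]

with ThreadPoolExecutor(max_workers=3) as pool:
    # map: results follow the order of the inputs, not the order of completion
    mapped = list(pool.map(fake_craw, delays))

    # map with several iterables: one iterable per parameter
    sums = list(pool.map(add, [1, 2, 3], [10, 20, 30]))

    # submit + as_completed: results arrive as the tasks finish
    futures = [pool.submit(fake_craw, d) for d in delays]
    completed = [f.result() for f in as_completed(futures)]

print(mapped)
print(sums)
print(completed)
```

`mapped` keeps the input order even though the shortest task finishes first, while `completed` typically lists the shortest delay first.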

IV. Rewriting the crawler with a thread pool

Create a Python file named 04.thread_pool.py:

    import concurrent.futures

    import blog_spider

    # craw: usage 1 (map)
    with concurrent.futures.ThreadPoolExecutor() as pool:
        htmls = pool.map(blog_spider.craw, blog_spider.urls)
        htmls = list(zip(blog_spider.urls, htmls))
        for url, html in htmls:
            print(url, len(html))

    print("craw over")

    # parse: usage 2 (futures)
    with concurrent.futures.ThreadPoolExecutor() as pool:
        futures = {}
        for url, html in htmls:
            future = pool.submit(blog_spider.parse, html)
            futures[future] = url

        for future, url in futures.items():  # in submission order
            print(url, future.result())

        for future in concurrent.futures.as_completed(futures):  # in completion order
            url = futures[future]
            print(url, future.result())

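P8: Using a thread pool to speed up a web service

I. Web service architecture and characteristics

Characteristics of a web backend service:

- Response time requirements are strict, for example a response within 200 ms
- A request involves many calls that depend on I/O, such as disk files, databases, and remote APIs
- The service often has to handle simultaneous requests from tens of thousands or even millions of users

II. Speeding up with ThreadPoolExecutor

Benefits of using ThreadPoolExecutor:

- It makes it easy to run I/O calls to disk files, databases, and remote APIs concurrently
- The pool does not create threads without limit (which could bring the system down), so it also protects the service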

III. Code: a Flask web service sped up with a thread pool

Create a Python file named 05.flask_thread_pool.py:

    import json
    import time
    from concurrent.futures import ThreadPoolExecutor

    import flask

    app = flask.Flask(__name__)
    pool = ThreadPoolExecutor()  # one pool shared by all requests


    def read_file():
        time.sleep(0.3)  # simulated file read
        return "file result"


    def read_db():
        time.sleep(0.1)  # simulated database query
        return "db result"


    def read_api():
        time.sleep(0.2)  # simulated remote API call
        return "api result"


    @app.route("/")
    def index():
        # Submit the three I/O calls together so that they run concurrently
        result_file = pool.submit(read_file)
        result_db = pool.submit(read_db)
        result_api = pool.submit(read_api)

        return json.dumps({
            "result_file": result_file.result(),
            "result_db": result_db.result(),
            "result_api": result_api.result()
        })


    if __name__ == "__main__":
        app.run()
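
The gain can be measured without starting a web server. The sketch below reuses the three simulated I/O functions and times a sequential call against a pooled one; `run_sequentially` and `run_concurrently` are names invented for this illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def read_file():
    time.sleep(0.3)  # simulated file read
    return "file result"


def read_db():
    time.sleep(0.1)  # simulated database query
    return "db result"


def read_api():
    time.sleep(0.2)  # simulated remote API call
    return "api result"


def run_sequentially():
    start = time.perf_counter()
    data = {"file": read_file(), "db": read_db(), "api": read_api()}
    return data, time.perf_counter() - start


def run_concurrently(pool):
    start = time.perf_counter()
    futures = {
        "file": pool.submit(read_file),
        "db": pool.submit(read_db),
        "api": pool.submit(read_api),
    }
    data = {name: future.result() for name, future in futures.items()}
    return data, time.perf_counter() - start


if __name__ == "__main__":
    with ThreadPoolExecutor() as pool:
        print("sequential:", round(run_sequentially()[1], 2), "s")
        print("concurrent:", round(run_concurrently(pool)[1], 2), "s")
```

The sequential version takes roughly the sum of the three delays, while the pooled version takes roughly as long as the slowest call.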

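P9: Speeding up programs with the multiprocessing module

I. With threading available, why use multiprocessing?

The multiprocessing module was introduced to work around the limitation of the GIL: it runs multiple processes in parallel on multiple CPUs.

II. multiprocessing at a glance

III. Hands-on code: single thread vs. multithreading vs. multiprocessing on CPU-bound work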

    import math
    import time
    from concurrent.futures import ThreadPoolExecutor, ProcessPoolExecutor

    PRIMES = [112272535095293] * 100


    def is_prime(n):
        """Trial division up to sqrt(n); deliberately CPU-heavy."""
        if n < 2:
            return False
        if n == 2:
            return True
        if n % 2 == 0:
            return False
        sqrt_n = int(math.floor(math.sqrt(n)))
        for i in range(3, sqrt_n + 1, 2):
            if n % i == 0:
                return False
        return True


    # Single thread
    def single_thread():
        for number in PRIMES:
            is_prime(number)


    # Thread pool
    def multi_thread():
        with ThreadPoolExecutor() as pool:
            pool.map(is_prime, PRIMES)


    # Process pool
    def multi_process():
        with ProcessPoolExecutor() as pool:
            pool.map(is_prime, PRIMES)


    if __name__ == "__main__":
        start = time.time()
        single_thread()
        end = time.time()
        print("single_thread, cost:", end - start, "seconds")

        start = time.time()
        multi_thread()
        end = time.time()
        print("multi_thread, cost:", end - start, "seconds")

        start = time.time()
        multi_process()
        end = time.time()
        print("multi_process, cost:", end - start, "seconds")

P10: Using a process pool in a Flask service

Create a Python file named 07.process_pool.py:

    import json
    import math
    from concurrent.futures import ProcessPoolExecutor

    import flask

    app = flask.Flask(__name__)


    def is_prime(n):
        if n < 2:
            return False
        if n == 2:
            return True
        if n % 2 == 0:
            return False
        sqrt_n = int(math.floor(math.sqrt(n)))
        for i in range(3, sqrt_n + 1, 2):
            if n % i == 0:
                return False
        return True


    @app.route("/is_prime/<numbers>")
    def api_is_prime(numbers):
        # numbers is a comma-separated string such as "1,2,3"
        number_list = [int(x) for x in numbers.split(",")]
        results = process_pool.map(is_prime, number_list)  # pass the function itself, do not call it
        return json.dumps(dict(zip(number_list, results)))


    if __name__ == "__main__":
        # Create the pool inside the main guard, after is_prime has been defined
        process_pool = ProcessPoolExecutor()
        app.run()

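P11: A concurrent crawler with asynchronous I/O

The basic syntax (get_url and urls are placeholders):

    import asyncio

    # Get an event loop
    # (calling get_event_loop() without a running loop is deprecated in recent Python versions)
    loop = asyncio.new_event_loop()
    asyncio.set_event_loop(loop)


    # Define a coroutine
    async def myfunc(url):
        await get_url(url)


    # Create the list of tasks
    tasks = [loop.create_task(myfunc(url)) for url in urls]

    # Run all crawl tasks until they complete
    loop.run_until_complete(asyncio.wait(tasks))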
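
The same flow can also be written with `asyncio.run` and `asyncio.gather`, which create and close the event loop for you. The sketch below runs offline: `fake_fetch` is a hypothetical stand-in for a real request, and `crawl` is a made-up wrapper.

```python
import asyncio


async def fake_fetch(url: str) -> str:
    """Hypothetical stand-in for a network request."""
    await asyncio.sleep(0.1)  # hands control back to the event loop while "waiting"
    return f"<html>{url}</html>"


async def main(urls):
    # gather runs all coroutines concurrently and returns results in input order
    return await asyncio.gather(*(fake_fetch(url) for url in urls))


def crawl(urls):
    # asyncio.run creates an event loop, runs main to completion, and closes the loop
    return asyncio.run(main(urls))


if __name__ == "__main__":
    print(crawl(["page1", "page2", "page3"]))
```

All the simulated requests wait at the same time, so the whole crawl takes about as long as one request.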

The crawler itself, using aiohttp:

    import asyncio
    import time

    import aiohttp

    import blog_spider


    async def async_craw(url):
        async with aiohttp.ClientSession() as session:
            async with session.get(url) as resp:
                result = await resp.text()
                print(f"craw url: {url}, {len(result)}")


    loop = asyncio.new_event_loop()
    asyncio.set_event_loop(loop)

    tasks = [
        loop.create_task(async_craw(url))
        for url in blog_spider.urls
    ]

    start = time.time()
    loop.run_until_complete(asyncio.wait(tasks))
    end = time.time()
    print("cost:", end - start, "seconds")

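Notes:

- Any library used in asynchronous I/O code must itself support asynchronous I/O
- For crawlers, the requests library does not support it; aiohttp can be used instead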
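
When no asynchronous version of a library is available, one possible workaround (not covered in the video) is to run the blocking call in a worker thread with `asyncio.to_thread`, available since Python 3.9. In this sketch `blocking_fetch` is a hypothetical stand-in for a blocking call such as `requests.get`.

```python
import asyncio
import time


def blocking_fetch(url: str) -> str:
    """Hypothetical blocking call, standing in for something like requests.get."""
    time.sleep(0.2)
    return f"body of {url}"


async def fetch_all(urls):
    # Each blocking call runs in a worker thread, so the event loop stays free
    # while the calls are waiting.
    return await asyncio.gather(*(asyncio.to_thread(blocking_fetch, url) for url in urls))


def run(urls):
    start = time.perf_counter()
    results = asyncio.run(fetch_all(urls))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = run(["a", "b", "c"])
    print(results, round(elapsed, 2), "s")
```

This gives up the low per-task memory cost of coroutines, since every blocking call still occupies a thread, so a native asynchronous library such as aiohttp remains the better fit when one exists.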

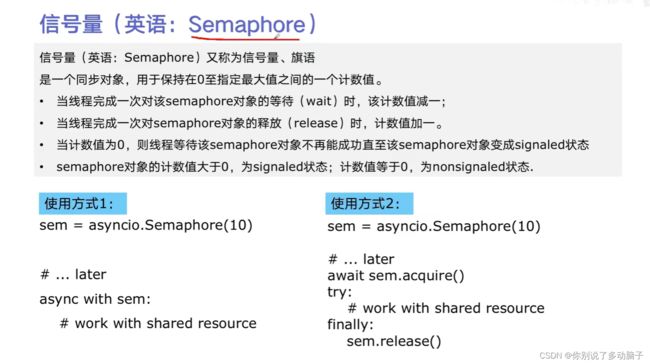

P12: Using a semaphore to limit crawler concurrency in asynchronous I/O

    import asyncio
    import time

    import aiohttp

    import blog_spider

    loop = asyncio.new_event_loop()
    asyncio.set_event_loop(loop)

    semaphore = asyncio.Semaphore(10)  # the semaphore caps the concurrency at 10


    async def async_craw(url):
        async with semaphore:
            print("craw url:", url)
            async with aiohttp.ClientSession() as session:
                async with session.get(url) as resp:
                    result = await resp.text()
                    await asyncio.sleep(5)  # pause so the batches of 10 are visible in the output
                    print(f"craw url: {url}, {len(result)}")


    tasks = [
        loop.create_task(async_craw(url))
        for url in blog_spider.urls
    ]

    start = time.time()
    loop.run_until_complete(asyncio.wait(tasks))
    end = time.time()
    print("cost:", end - start, "seconds")
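
To confirm that a semaphore really caps the concurrency, the number of coroutines inside the guarded block can be tracked. This offline sketch uses made-up names (`worker`, `peak_concurrency`) and `asyncio.sleep` in place of the network request.

```python
import asyncio


async def worker(semaphore, state):
    async with semaphore:  # at most `limit` workers are inside this block at once
        state["active"] += 1
        state["peak"] = max(state["peak"], state["active"])
        await asyncio.sleep(0.05)  # stand-in for the network request
        state["active"] -= 1


async def main(n_tasks: int, limit: int) -> int:
    semaphore = asyncio.Semaphore(limit)  # created inside the running event loop
    state = {"active": 0, "peak": 0}
    await asyncio.gather(*(worker(semaphore, state) for _ in range(n_tasks)))
    return state["peak"]


def peak_concurrency(n_tasks=50, limit=10):
    """Return the highest number of workers that were active at the same time."""
    return asyncio.run(main(n_tasks, limit))


if __name__ == "__main__":
    print("peak concurrency:", peak_concurrency())
```

The peak never exceeds the semaphore's limit, however many tasks are started.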

Video source: https://www.bilibili.com/video/BV1bK411A7tV?p=1&vd_source=63b8ded929e53ceb23c48c6ca09fa194