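Hadoop Production Tuning: Comprehensive Tuning

I. Optimizing Small Files in Hadoop

Drawbacks of small files

Every file on HDFS has a corresponding metadata entry on the NameNode, about 150 bytes in size. A large number of small files therefore produces a large amount of metadata, which on the one hand consumes a lot of NameNode memory and on the other slows down addressing and index lookups. Small files also hurt MR jobs: too many splits are generated, so too many MapTasks must be started. Each MapTask processes so little data that its processing time is shorter than its startup time, which wastes resources.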
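To get a feel for the numbers, the sketch below estimates the NameNode memory taken up by file metadata, taking the figure of roughly 150 bytes per file at face value. The helper name and the file counts are illustrative assumptions, and real usage also depends on block and directory objects, which are not modeled here.

```python
BYTES_PER_FILE = 150  # approximate metadata size per file, as quoted in the text


def namenode_metadata_bytes(num_files: int, bytes_per_file: int = BYTES_PER_FILE) -> int:
    """Rough metadata footprint for num_files files (hypothetical helper)."""
    return num_files * bytes_per_file


if __name__ == "__main__":
    # Illustrative file counts, not measurements from a real cluster.
    for n in (1_000_000, 100_000_000):
        gib = namenode_metadata_bytes(n) / 1024**3
        print(f"{n:>11,} files -> about {gib:.2f} GiB of NameNode memory")
```

The estimate grows linearly with the file count, regardless of how small each file is, which is why merging files helps.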

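Solutions for small files

1) Merge small files or small batches of data into large files at collection time, before uploading them to HDFS (data source).

2) Hadoop Archive (storage)

Hadoop Archive is an efficient archiving tool that packs small files into HDFS blocks. It bundles many small files into a single HAR file, which reduces NameNode memory usage.

3) CombineTextInputFormat (computation)

CombineTextInputFormat combines many small files into a single split, or a small number of splits, during split planning.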

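4) Enable uber mode to reuse the JVM (computation)

By default, every Task starts its own JVM. If the Tasks process only a small amount of data, multiple Tasks of the same Job can run in a single JVM instead of starting one JVM per Task.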

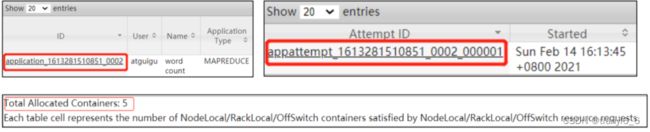

(1) With uber mode disabled, upload several small files to /input and run the wordcount program

[atguigu@hadoop102 hadoop-3.1.3]$ hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount /input /output2

(2) Check the console output

2021-02-14 16:13:50,607 INFO mapreduce.Job: Job job_1613281510851_0002 running in uber mode : false

(3) Check http://hadoop103:8088/cluster

(4) Enable uber mode by adding the following configuration to mapred-site.xml

<property>
  <name>mapreduce.job.ubertask.enable</name>
  <value>true</value>
</property>

<property>
  <name>mapreduce.job.ubertask.maxmaps</name>
  <value>9</value>
</property>

<property>
  <name>mapreduce.job.ubertask.maxreduces</name>
  <value>1</value>
</property>

<property>
  <name>mapreduce.job.ubertask.maxbytes</name>
  <value></value>
</property>

(5) Distribute the configuration

[atguigu@hadoop102 hadoop]$ xsync mapred-site.xml

(6) Run the wordcount program again (delete /output2 first, because the job fails if the output directory already exists)

[atguigu@hadoop102 hadoop-3.1.3]$ hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount /input /output2

(7) Check the console output

2021-02-14 16:28:36,198 INFO mapreduce.Job: Job job_1613281510851_0003 running in uber mode : true

(8) Check http://hadoop103:8088/cluster
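Whether a job actually runs in uber mode depends on the thresholds added to mapred-site.xml in step (4). As a rough illustration, the sketch below checks a job against only those three limits; Hadoop applies further checks (for example on memory and CPU) that are omitted here. The function name is hypothetical, and the 128 MB byte limit is an assumption standing in for the empty `maxbytes` value, which falls back to the HDFS block size.

```python
def fits_uber_mode(num_maps: int, num_reduces: int, input_bytes: int,
                   max_maps: int = 9, max_reduces: int = 1,
                   max_bytes: int = 128 * 1024 * 1024) -> bool:
    """Return True if the job is within all three ubertask thresholds.

    Simplified model: only maxmaps, maxreduces and maxbytes are checked.
    """
    return (num_maps <= max_maps
            and num_reduces <= max_reduces
            and input_bytes <= max_bytes)


if __name__ == "__main__":
    # A handful of small input files, one reducer: small enough.
    print(fits_uber_mode(num_maps=4, num_reduces=1, input_bytes=20 * 1024))
    # Ten map tasks exceed maxmaps=9, so the job runs as a normal job.
    print(fits_uber_mode(num_maps=10, num_reduces=1, input_bytes=20 * 1024))
```

A job that exceeds any one limit runs in the usual way, with one container per Task.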

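II. Testing MapReduce Performance

Benchmarking MapReduce with the Sort program

Note: avoid running this benchmark on virtual machines with less than 150 GB of disk each.

(1) Use RandomWriter to generate random data. Each node runs 10 Map tasks, and each Map produces about 1 GB of random binary data.

[atguigu@hadoop102 mapreduce]$ hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar randomwriter random-data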

(2) Run the Sort program

[atguigu@hadoop102 mapreduce]$ hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar sort random-data sorted-data

(3) Verify that the data is actually sorted

[atguigu@hadoop102 mapreduce]$ hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-3.1.3-tests.jar testmapredsort -sortInput random-data -sortOutput sorted-data
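The disk-space note at the start of this test follows from simple arithmetic. The sketch below estimates the raw HDFS space the benchmark occupies, assuming a 3-node cluster and an HDFS replication factor of 3; neither is stated for this test, so treat both as assumptions. Intermediate shuffle data on local disks is ignored.

```python
def benchmark_disk_gb(nodes: int, maps_per_node: int = 10, gb_per_map: int = 1,
                      replication: int = 3) -> dict:
    """Estimate HDFS space used by RandomWriter input plus Sort output."""
    random_data = nodes * maps_per_node * gb_per_map  # logical size written by RandomWriter
    stored_input = random_data * replication          # replicated copies of random-data
    stored_output = random_data * replication         # Sort writes the same volume to sorted-data
    total = stored_input + stored_output
    return {
        "random_data_gb": random_data,
        "cluster_total_gb": total,
        "per_node_gb": total / nodes,
    }


if __name__ == "__main__":
    print(benchmark_disk_gb(nodes=3))
```

Under these assumptions each node holds tens of gigabytes of benchmark data before any shuffle files are counted, so small virtual disks fill up quickly.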

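III. Enterprise Development Scenario

Requirements

(1) Requirement: count the occurrences of each word in 1 GB of data. There are 3 servers, each with 4 GB of memory and a 4-core, 4-thread CPU.

(2) Analysis:

1 GB / 128 MB = 8 MapTasks; 1 ReduceTask; 1 MRAppMaster

That is 10 tasks in total, so each node runs on average 10 / 3 ≈ 3 tasks (distributed as 4, 3, 3).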
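The analysis above can be reproduced in a few lines. This is a minimal sketch that assumes the split size equals the 128 MB block size and that tasks are spread as evenly as possible across nodes; the function name is hypothetical.

```python
import math


def plan_tasks(input_mb: int, block_mb: int = 128, reduces: int = 1,
               app_masters: int = 1, nodes: int = 3):
    """Return (map task count, total task count, tasks per node)."""
    map_tasks = math.ceil(input_mb / block_mb)  # one MapTask per split
    total = map_tasks + reduces + app_masters
    base, extra = divmod(total, nodes)
    # The first `extra` nodes take one task more than the rest.
    per_node = [base + 1 if i < extra else base for i in range(nodes)]
    return map_tasks, total, per_node


if __name__ == "__main__":
    print(plan_tasks(1024))  # (8, 10, [4, 3, 3])
```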

HDFS parameter tuning

(1) Edit hadoop-env.sh

export HDFS_NAMENODE_OPTS="-Dhadoop.security.logger=INFO,RFAS -Xmx1024m"

export HDFS_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS -Xmx1024m"

(2) Edit hdfs-site.xml

<property>
  <name>dfs.namenode.handler.count</name>
  <value>21</value>
</property>

(3) Edit core-site.xml

<property>
  <name>fs.trash.interval</name>
  <value>60</value>
</property>

(4) Distribute the configuration

[atguigu@hadoop102 hadoop]$ xsync hadoop-env.sh hdfs-site.xml core-site.xml
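The value 21 used for `dfs.namenode.handler.count` in the hdfs-site.xml step is consistent with a commonly cited rule of thumb, 20 × ln(cluster size). This section does not state that rule, so the sketch below applies it purely as an assumption for the 3-server cluster; the function name is hypothetical.

```python
import math


def namenode_handler_count(cluster_size: int) -> int:
    """Rule-of-thumb handler count: 20 * ln(cluster size), truncated to an integer."""
    return int(20 * math.log(cluster_size))


if __name__ == "__main__":
    print(namenode_handler_count(3))  # 21
```

Because the logarithm grows slowly, the suggested thread count rises only modestly as nodes are added.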

MapReduce parameter tuning

(1) Edit mapred-site.xml

<property>
  <name>mapreduce.task.io.sort.mb</name>
  <value>100</value>
</property>

<property>
  <name>mapreduce.map.sort.spill.percent</name>
  <value>0.80</value>
</property>

<property>
  <name>mapreduce.task.io.sort.factor</name>
  <value>10</value>
</property>

<property>
  <name>mapreduce.map.memory.mb</name>
  <value>-1</value>
  <description>The amount of memory to request from the scheduler for each map task. If this is not specified or is non-positive, it is inferred from mapreduce.map.java.opts and mapreduce.job.heap.memory-mb.ratio. If java-opts are also not specified, we set it to 1024.
  </description>
</property>

<property>
  <name>mapreduce.map.cpu.vcores</name>
  <value>1</value>
</property>

<property>
  <name>mapreduce.map.maxattempts</name>
  <value>4</value>
</property>

<property>
  <name>mapreduce.reduce.shuffle.parallelcopies</name>
  <value>5</value>
</property>

<property>
  <name>mapreduce.reduce.shuffle.input.buffer.percent</name>
  <value>0.70</value>
</property>

<property>
  <name>mapreduce.reduce.shuffle.merge.percent</name>
  <value>0.66</value>
</property>

<property>
  <name>mapreduce.reduce.memory.mb</name>
  <value>-1</value>
  <description>The amount of memory to request from the scheduler for each reduce task. If this is not specified or is non-positive, it is inferred from mapreduce.reduce.java.opts and mapreduce.job.heap.memory-mb.ratio. If java-opts are also not specified, we set it to 1024.
  </description>
</property>

<property>
  <name>mapreduce.reduce.cpu.vcores</name>
  <value>2</value>
</property>

<property>
  <name>mapreduce.reduce.maxattempts</name>
  <value>4</value>
</property>

<property>
  <name>mapreduce.job.reduce.slowstart.completedmaps</name>
  <value>0.05</value>
</property>

<property>
  <name>mapreduce.task.timeout</name>
  <value>600000</value>
</property>

(2) Distribute the configuration

[atguigu@hadoop102 hadoop]$ xsync mapred-site.xml
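A few of the mapred-site.xml values are easier to reason about as concrete numbers. The sketch below derives the map-side spill threshold from `mapreduce.task.io.sort.mb` and `mapreduce.map.sort.spill.percent`, and the number of finished MapTasks required before reducers may start from `mapreduce.job.reduce.slowstart.completedmaps`. The helper names are hypothetical, the 8 MapTasks come from the requirement analysis, and rounding up to a whole task is an assumption about how the fraction is applied.

```python
import math


def spill_threshold_mb(sort_mb: int = 100, spill_percent: float = 0.80) -> float:
    """Buffer fill level (in MB) at which a map task starts spilling to disk."""
    return sort_mb * spill_percent


def maps_before_reduce_start(total_maps: int, slowstart: float = 0.05) -> int:
    """Completed MapTasks needed before ReduceTasks are scheduled (rounded up)."""
    return math.ceil(total_maps * slowstart)


if __name__ == "__main__":
    print("spill starts at about", spill_threshold_mb(), "MB of the sort buffer")
    print("reducers may start after", maps_before_reduce_start(8), "completed map task(s)")
```

With only 8 MapTasks, a slowstart of 0.05 means the ReduceTask can be requested almost immediately, so it competes with the remaining MapTasks for containers.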

Yarn parameter tuning

(1) Edit yarn-site.xml as follows:

<property>
  <description>The class to use as the resource scheduler.</description>
  <name>yarn.resourcemanager.scheduler.class</name>
  <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.capacity.CapacityScheduler</value>
</property>

<property>
  <description>Number of threads to handle scheduler interface.</description>
  <name>yarn.resourcemanager.scheduler.client.thread-count</name>
  <value>8</value>
</property>

<property>
  <description>Enable auto-detection of node capabilities such as memory and CPU.
  </description>
  <name>yarn.nodemanager.resource.detect-hardware-capabilities</name>
  <value>false</value>
</property>

<property>
  <description>Flag to determine if logical processors (such as hyperthreads) should be counted as cores. Only applicable on Linux when yarn.nodemanager.resource.cpu-vcores is set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true.
  </description>
  <name>yarn.nodemanager.resource.count-logical-processors-as-cores</name>
  <value>false</value>
</property>

<property>
  <description>Multiplier to determine how to convert physical cores to vcores. This value is used if yarn.nodemanager.resource.cpu-vcores is set to -1 (which implies auto-calculate vcores) and yarn.nodemanager.resource.detect-hardware-capabilities is set to true. The number of vcores will be calculated as number of CPUs * multiplier.
  </description>
  <name>yarn.nodemanager.resource.pcores-vcores-multiplier</name>
  <value>1.0</value>
</property>

<property>
  <description>Amount of physical memory, in MB, that can be allocated for containers. If set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true, it is automatically calculated (in case of Windows and Linux). In other cases, the default is 8192MB.
  </description>
  <name>yarn.nodemanager.resource.memory-mb</name>
  <value>4096</value>
</property>

<property>
  <description>Number of vcores that can be allocated for containers. This is used by the RM scheduler when allocating resources for containers. This is not used to limit the number of CPUs used by YARN containers. If it is set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true, it is automatically determined from the hardware in case of Windows and Linux. In other cases, number of vcores is 8 by default.</description>
  <name>yarn.nodemanager.resource.cpu-vcores</name>
  <value>4</value>
</property>

<property>
  <description>The minimum allocation for every container request at the RM in MBs. Memory requests lower than this will be set to the value of this property. Additionally, a node manager that is configured to have less memory than this value will be shut down by the resource manager.
  </description>
  <name>yarn.scheduler.minimum-allocation-mb</name>
  <value>1024</value>
</property>

<property>
  <description>The maximum allocation for every container request at the RM in MBs. Memory requests higher than this will throw an InvalidResourceRequestException.
  </description>
  <name>yarn.scheduler.maximum-allocation-mb</name>
  <value>2048</value>
</property>

<property>
  <description>The minimum allocation for every container request at the RM in terms of virtual CPU cores. Requests lower than this will be set to the value of this property. Additionally, a node manager that is configured to have fewer virtual cores than this value will be shut down by the resource manager.
  </description>
  <name>yarn.scheduler.minimum-allocation-vcores</name>
  <value>1</value>
</property>

<property>
  <description>The maximum allocation for every container request at the RM in terms of virtual CPU cores. Requests higher than this will throw an InvalidResourceRequestException.</description>
  <name>yarn.scheduler.maximum-allocation-vcores</name>
  <value>2</value>
</property>

<property>
  <description>Whether virtual memory limits will be enforced for containers.</description>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>

<property>
  <description>Ratio between virtual memory to physical memory when setting memory limits for containers. Container allocations are expressed in terms of physical memory, and virtual memory usage is allowed to exceed this allocation by this ratio.
  </description>
  <name>yarn.nodemanager.vmem-pmem-ratio</name>
  <value>2.1</value>
</property>

(2) Distribute the configuration

[atguigu@hadoop102 hadoop]$ xsync yarn-site.xml
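To see how the yarn-site.xml limits fit together, the sketch below applies them to a container memory request as the property descriptions state: requests below the minimum are raised to it, and requests above the maximum are rejected. It also computes how many minimum-size containers fit on one node and the virtual-memory ceiling implied by `yarn.nodemanager.vmem-pmem-ratio`. The function names are hypothetical, a plain `ValueError` stands in for `InvalidResourceRequestException`, and any scheduler-specific rounding is not modeled.

```python
NODE_MEMORY_MB = 4096   # yarn.nodemanager.resource.memory-mb
MIN_ALLOC_MB = 1024     # yarn.scheduler.minimum-allocation-mb
MAX_ALLOC_MB = 2048     # yarn.scheduler.maximum-allocation-mb
VMEM_PMEM_RATIO = 2.1   # yarn.nodemanager.vmem-pmem-ratio


def normalize_request_mb(requested_mb: int) -> int:
    """Apply the min/max allocation rules to one container request."""
    if requested_mb > MAX_ALLOC_MB:
        # Stand-in for InvalidResourceRequestException.
        raise ValueError(f"{requested_mb} MB exceeds the maximum of {MAX_ALLOC_MB} MB")
    return max(requested_mb, MIN_ALLOC_MB)


def max_containers_per_node(container_mb: int = MIN_ALLOC_MB) -> int:
    """How many containers of the given size fit in one node's memory."""
    return NODE_MEMORY_MB // container_mb


def vmem_limit_mb(container_mb: int) -> float:
    """Virtual-memory ceiling; only enforced when vmem-check-enabled is true."""
    return container_mb * VMEM_PMEM_RATIO


if __name__ == "__main__":
    print(normalize_request_mb(512))    # raised to the 1024 MB minimum
    print(max_containers_per_node())    # 1024 MB containers per 4096 MB node
    print(vmem_limit_mb(1024))
```

With 4 GB and 4 vcores per node, memory allows at most four 1 GB containers per node, which matches the 4 / 3 / 3 task distribution from the requirement analysis.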

Running the program

(1) Restart the cluster

[atguigu@hadoop102 hadoop-3.1.3]$ sbin/stop-yarn.sh

[atguigu@hadoop103 hadoop-3.1.3]$ sbin/start-yarn.sh

(2) Run the WordCount program

[atguigu@hadoop102 hadoop-3.1.3]$ hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount /input /output

(3) Check the Yarn application page

http://hadoop103:8088/cluster/apps