Ceph Cluster Installation and Deployment

目录

- Ceph集群安装部署

- 1、环境准备

- 1.1 环境简介

- 1.2 配置hosts解析(所有节点)

- 1.3 配置时间同步

- 2、安装docker(所有节点)

- 3、配置镜像

- 3.1 下载ceph镜像(所有节点执行)

- 3.2 搭建制作本地仓库(ceph-01节点执行)

- 3.3 配置私有仓库(所有节点执行)

- 3.4 为 Docker 镜像打标签(ceph-01节点执行)

- 4、安装ceph工具(所有节点执行)

- 5、引导集群(ceph-01节点执行)

- 6、添加主机到集群(ceph-01节点执行)

- 7、部署OSD,存储数据(ceph-01节点执行)

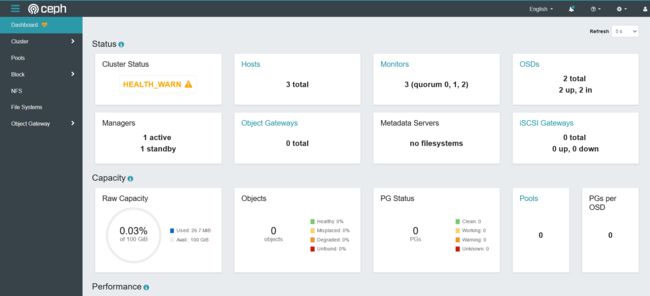

- 8、访问仪表盘查看状态


1. Environment Preparation

1.1 Environment Overview

| Hostname | IP | Disk 1 | Disk 2 | Disk 3 | CPU | Memory | OS | Virtualization |
|---|---|---|---|---|---|---|---|---|
| ceph-01 | 192.168.200.33 | 100G | 50G | 50G | 2C | 4G | Ubuntu 22.04 | VMware15 |
| ceph-02 | 192.168.200.34 | 100G | 50G | 50G | 2C | 4G | Ubuntu 22.04 | VMware15 |
| ceph-03 | 192.168.200.35 | 100G | 50G | 50G | 2C | 4G | Ubuntu 22.04 | VMware15 |

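1.2 Configure hosts Resolution (all nodes)

root@ceph-01:~# cat >> /etc/hosts << EOF

192.168.200.33 ceph-01

192.168.200.34 ceph-02

192.168.200.35 ceph-03

EOF

1.3 Configure Time Synchronization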

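Run on all nodes

# Enable NTP synchronization (optional)

root@ceph-01:~# timedatectl set-ntp true

# Set the time zone to Asia/Shanghai

root@ceph-01:~# timedatectl set-timezone Asia/Shanghai

# Write the system time to the hardware clock

root@ceph-01:~# hwclock --systohc

Run on ceph-01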

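# Install chrony

root@ceph-01:~# apt install -y chrony

# Configure chrony: accept clients and keep serving local time (stratum 10) when no upstream source is reachable

root@ceph-01:~# cat >> /etc/chrony/chrony.conf << EOF

server ceph-01 iburst maxsources 2

allow all

local stratum 10

EOF

# Restart the service

root@ceph-01:~# systemctl restart chronyd

Run on ceph-02 and ceph-03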

# Install chrony

root@ceph-02:~# apt install -y chrony

# Configure chrony to use ceph-01 as the time source

root@ceph-02:~# cat >> /etc/chrony/chrony.conf << EOF

pool ceph-01 iburst maxsources 4

EOF

# Restart the service

root@ceph-02:~# systemctl restart chronyd

2. Install Docker (all nodes)

# Install the prerequisite packages

root@ceph-01:~# apt -y install ca-certificates curl gnupg lsb-release

# Add Docker's official GPG key

root@ceph-01:~# mkdir -p /etc/apt/keyrings

root@ceph-01:~# curl -fsSL https://download.docker.com/linux/ubuntu/gpg | gpg --dearmor -o /etc/apt/keyrings/docker.gpg

# Add the Docker apt repository

root@ceph-01:~# echo "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | tee /etc/apt/sources.list.d/docker.list > /dev/null

# Refresh the package index

root@ceph-01:~# apt update

# Install Docker

root@ceph-01:~# apt -y install docker-ce

# Enable Docker at boot

root@ceph-01:~# systemctl enable docker

3. Configure Images

3.1 Pull the Ceph Images (run on all nodes)

root@ceph-01:~# docker pull quay.io/ceph/ceph:v17

root@ceph-01:~# docker pull quay.io/ceph/ceph-grafana:8.3.5

root@ceph-01:~# docker pull quay.io/prometheus/prometheus:v2.33.4

root@ceph-01:~# docker pull quay.io/prometheus/node-exporter:v1.3.1

root@ceph-01:~# docker pull quay.io/prometheus/alertmanager:v0.23.0

3.2 Set Up a Local Registry (run on ceph-01)

# Pull the registry image

root@ceph-01:~# docker pull registry

# Start the registry container (3a0f7b0a13ef is the ID of the registry image pulled above; yours may differ)

root@ceph-01:~# docker run -d --name registry -p 5000:5000 --restart always 3a0f7b0a13ef

root@ceph-01:~# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

04b3ea9c4c08 3a0f7b0a13ef "/entrypoint.sh /etc…" 1 second ago Up Less than a second 0.0.0.0:5000->5000/tcp, :::5000->5000/tcp registry

3.3 Configure the Private Registry (run on all nodes)

# Register the local registry address as an insecure (plain HTTP) registry

root@ceph-01:~# cat > /etc/docker/daemon.json << EOF

{

"insecure-registries":["192.168.200.33:5000"]

}

EOF
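A malformed /etc/docker/daemon.json keeps the Docker daemon from starting, so it can be worth checking the file before the restart below. The following is a minimal sketch, assuming `python3` is available on the node (it ships with Ubuntu 22.04); the helper name `valid_json` is hypothetical and not part of Docker.

```shell
#!/usr/bin/env bash
# valid_json FILE: succeed only if FILE parses as JSON.
# Hypothetical helper; relies on Python's standard json.tool module.
valid_json() {
  python3 -m json.tool "$1" > /dev/null 2>&1
}

# Usage on a node:
#   valid_json /etc/docker/daemon.json && echo "daemon.json OK"
```

Note that `cat >` replaces any existing daemon.json, so settings already present in that file need to be merged by hand.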

# Restart Docker

root@ceph-01:~# systemctl daemon-reload

root@ceph-01:~# systemctl restart docker

3.4 Tag the Docker Image (run on ceph-01)

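# Tag the image with the local registry address (0912465dcea5 is the ID of quay.io/ceph/ceph:v17 on this machine)

root@ceph-01:~# docker tag 0912465dcea5 192.168.200.33:5000/ceph:v17

# List the resulting tags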

root@ceph-01:~# docker images | grep 0912465dcea5

192.168.200.33:5000/ceph v17 0912465dcea5 12 months ago 1.34GB

quay.io/ceph/ceph v17 0912465dcea5 12 months ago 1.34GB
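The image ID behind a tag such as v17 changes whenever the tag is updated upstream, so tagging by name tends to be easier to reproduce than tagging by ID. A small sketch of deriving the local registry reference from the upstream name follows; the function `local_ref` is a hypothetical helper, and the mapping it uses (keep only the last path component) is an assumption that mirrors the single tag shown above.

```shell
#!/usr/bin/env bash
# local_ref REGISTRY IMAGE: print IMAGE re-homed under REGISTRY,
# keeping only the final "name:tag" path component.
local_ref() {
  local registry=$1 image=$2
  printf '%s/%s\n' "$registry" "${image##*/}"
}

# Usage on ceph-01:
#   src=quay.io/ceph/ceph:v17
#   docker tag "$src" "$(local_ref 192.168.200.33:5000 "$src")"
```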

# Push the image to the local registry

root@ceph-01:~# docker push 192.168.200.33:5000/ceph:v17

4. Install the Ceph Tools (run on all nodes)

root@ceph-01:~# apt install -y cephadm ceph-common

5. Bootstrap the Cluster (run on ceph-01)

# Initialize the first mon node

root@ceph-01:~# mkdir -p /etc/ceph

root@ceph-01:~# cephadm --image 192.168.200.33:5000/ceph:v17 bootstrap --mon-ip 192.168.200.33 --initial-dashboard-user admin --initial-dashboard-password 000000 --skip-pull

6. Add Hosts to the Cluster (run on ceph-01)

# Copy the cluster's SSH public key to the other nodes

root@ceph-01:~# ssh-copy-id -f -i /etc/ceph/ceph.pub ceph-02

root@ceph-01:~# ssh-copy-id -f -i /etc/ceph/ceph.pub ceph-03

# Add the hosts to the cluster

root@ceph-01:~# ceph orch host add ceph-02

root@ceph-01:~# ceph orch host add ceph-03

# List the hosts

root@ceph-01:~# ceph orch host ls

HOST ADDR LABELS STATUS

ceph-01 192.168.200.33 _admin

ceph-02 192.168.200.34

ceph-03 192.168.200.35

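3 hosts in cluster

PS:

# To deploy additional monitors

ceph orch apply mon "ceph-01,ceph-02,ceph-03"

# To remove the cluster (substitute your own fsid, as printed by `ceph fsid`)

cephadm rm-cluster --fsid d92b85c0-3ecd-11ed-a617-3f7cf3e2d6d8 --force

7. Deploy OSDs to Store Data (run on ceph-01)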

# List the available disk devices

root@ceph-01:~# ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

ceph-01 /dev/sdb hdd 53.6G Yes 18m ago

ceph-01 /dev/sdc hdd 53.6G Yes 18m ago

ceph-02 /dev/sdb hdd 53.6G Yes 45s ago

ceph-02 /dev/sdc hdd 53.6G Yes 45s ago

ceph-03 /dev/sdb hdd 53.6G Yes 32s ago

ceph-03 /dev/sdc hdd 53.6G Yes 32s ago

# Add them to the Ceph cluster: automatically create OSDs on all unused devices

root@ceph-01:~# ceph orch apply osd --all-available-devices
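`--all-available-devices` keeps claiming every eligible disk, including disks attached later. Where more control is wanted, cephadm also accepts an OSD service specification. The sketch below writes one that limits OSDs to /dev/sdb and /dev/sdc, the devices listed above; the file name `osd_spec.yaml`, the `service_id` value, the host pattern, and the helper `write_osd_spec` are illustrative assumptions.

```shell
#!/usr/bin/env bash
# write_osd_spec FILE: write an OSD service specification to FILE.
# Hypothetical helper; the spec restricts OSDs to two named devices
# on hosts whose names match ceph-*.
write_osd_spec() {
  cat > "$1" << 'EOF'
service_type: osd
service_id: two_data_disks
placement:
  host_pattern: 'ceph-*'
spec:
  data_devices:
    paths:
      - /dev/sdb
      - /dev/sdc
EOF
}

# Usage on ceph-01 (needs a running cluster):
#   write_osd_spec osd_spec.yaml
#   ceph orch apply -i osd_spec.yaml --dry-run   # preview the result
#   ceph orch apply -i osd_spec.yaml             # apply it
```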

PS:

# To create an OSD from a specific device on a specific host:

ceph orch daemon add osd ceph-01:/dev/sdb

ceph orch daemon add osd ceph-02:/dev/sdb

ceph orch daemon add osd ceph-03:/dev/sdb

# Check the OSD status

root@ceph-01:~# ceph -s

cluster:

id: cf4e18fa-36a8-11ee-b041-03777440eaac

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph-01,ceph-02,ceph-03 (age 3m)

mgr: ceph-01.ydxjzm(active, since 7m), standbys: ceph-02.zpbmpm

osd: 6 osds: 0 up, 6 in (since 1.16241s)

data:

pools: 1 pools, 1 pgs

objects: 2 objects, 449 KiB

usage: 120 MiB used, 300 GiB / 300 GiB avail

pgs: 1 active+clean
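The status above reports `0 up`, apparently because it was captured moments after the OSDs were created. A small sketch for re-checking until every OSD is up follows; `osd_counts` and `all_osds_up` are hypothetical helpers that parse the plain-text `osd:` line, on the assumption that the line keeps the format shown above.

```shell
#!/usr/bin/env bash
# osd_counts: read `ceph -s` text on stdin and print "<total> <up> <in>".
osd_counts() {
  sed -nE 's/^[[:space:]]*osd:[[:space:]]*([0-9]+) osds: ([0-9]+) up[^,]*, ([0-9]+) in.*/\1 \2 \3/p'
}

# all_osds_up: succeed only when at least one OSD exists and all are up.
all_osds_up() {
  local counts total up in_count
  counts=$(osd_counts)
  read -r total up in_count <<< "$counts"
  [ -n "$total" ] && [ "$total" -gt 0 ] && [ "$total" -eq "$up" ]
}

# Usage on ceph-01 (needs a running cluster):
#   until ceph -s | all_osds_up; do sleep 5; done
```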

root@ceph-01:~# ceph df

--- RAW STORAGE ---

CLASS SIZE AVAIL USED RAW USED %RAW USED

hdd 300 GiB 300 GiB 120 MiB 120 MiB 0.04

TOTAL 300 GiB 300 GiB 120 MiB 120 MiB 0.04

--- POOLS ---

POOL ID PGS STORED OBJECTS USED %USED MAX AVAIL

.mgr 1 1 449 KiB 2 449 KiB 0 95 GiB

8. Access the Dashboard to Check Status

Ceph dashboard: https://192.168.200.33:8443/

Grafana: https://192.168.200.33:3000/
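If the dashboard does not answer at the address above, the active manager may have moved to another host. `ceph mgr services` prints, as JSON, the URLs that the manager modules currently serve; the sketch below extracts one entry. The helper `service_url` is hypothetical and assumes `python3` is present on the node.

```shell
#!/usr/bin/env bash
# service_url NAME: read `ceph mgr services` JSON on stdin and print
# the URL registered under NAME (an empty line if NAME is absent).
service_url() {
  python3 -c 'import json, sys; print(json.load(sys.stdin).get(sys.argv[1], ""))' "$1"
}

# Usage on ceph-01 (needs a running cluster):
#   ceph mgr services | service_url dashboard
```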