triton客户端使用

model_analyzer

简介:

Triton Model Analyzer is a CLI tool which can help you find a more optimal configuration, on a given piece of hardware, for single, multiple, ensemble, or BLS models running on a Triton Inference Server. Model Analyzer will also generate reports to help you better understand the trade-offs of the different configurations along with their compute and memory requirements.

代码地址:https://github.com/triton-inference-server/model_analyzer

测试方法:

启docker

docker run --name fan-triton -it --gpus device=0 \

-v /var/run/docker.sock:/var/run/docker.sock \

-v $(pwd)/examples/quick-start:$(pwd)/examples/quick-start \

-v /home/fanz/thirdparty/triton:/home/fanz/thirdparty/triton \

--net=host nvcr.io/nvidia/tritonserver:23.04-py3-sdk

测试

/usr/local/bin/model-analyzer profile \

--model-repository /home/xx/thirdparty/triton/models \

--profile-models Primary_Detector --triton-launch-mode=docker \

--output-model-repository-path /home/xx/thirdparty/triton/prm-out2 \

--export-path .

models下包含Primary_Detector等模型文件。Primary_Detector下包含模型配置文件config.pbtxt和模型版本目录1,1下就是模型的engine文件。

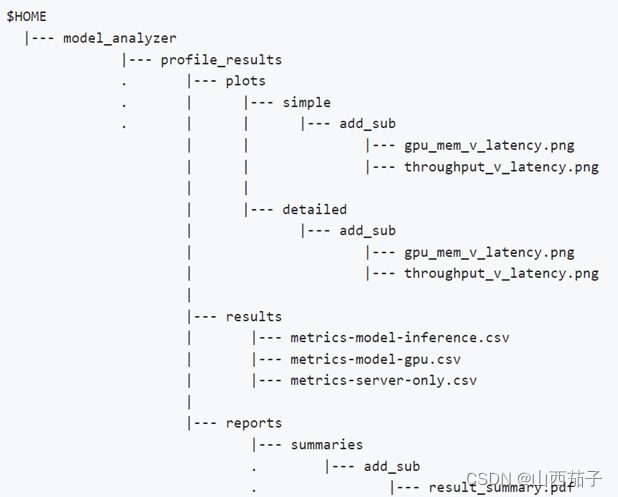

跑的时间会很长,因为在运行所有的配置。最后生成如下几个文件,具体意义详见文档:https://github.com/triton-inference-server/model_analyzer/blob/main/docs/report.md

perf_analyzer

perf_analyzer是个功能强大的测试工具,支持http, grpc, capi三种模式的测试。http, grpc方式需要启动tritonserver, 客户端发送命令包给服务端,服务端调triton的底层接口进行推理。capi方式不需要启动tritonserver,程序直接调接口进行推理。

perf_analyzer的源码:client/src/c++/perf_analyzer at main · triton-inference-server/client · GitHub。

capi方式的使用:

./perf_analyzer --service-kind=triton_c_api --triton-server-directory=/opt/tritonserver --model-repository=/opt/nvidia/deepstream/deepstream/samples/models -m Primary_Detector

model-repository的意义详见上一章节。