Deep Neural Network for Image Classification (Andrew Ng course assignment)


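1. Preface

This is the lab assignment for week 4 of part 1 of Andrew Ng's course. It builds on the previous assignment (binary classification with a logistic regression model), so the two form a gradual progression. This assignment first builds a two-layer neural network and then moves on to a multi-layer network, going from shallow to deep. The Jupyter notebook provided by the instructor spells out every step in detail, which makes it very friendly to beginners. I recorded the whole process, added some of my own understanding in places (please point out any mistakes), and noted the problems I ran into and my impressions along the way. I hope this helps others who are studying the same material.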

2. Code implementation

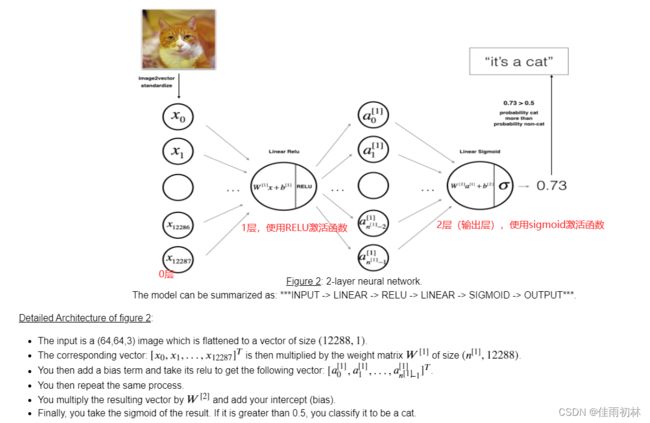

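2.1 Implementing a two-layer neural network

A two-layer network for binary classification has one more hidden layer than the single-layer network from the previous assignment. The structure of the network is shown in the figure.

Import the dependencies needed for the experiment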

# Import the dependencies needed for the experiment
import time
import datetime
import numpy as np
import h5py
import matplotlib.pyplot as plt
from PIL import Image
from scipy import ndimage
from dnn_app_utils_v2 import *

%matplotlib inline
# rc: run configuration; set some default display parameters for figures
plt.rcParams['figure.figsize'] = (5.0, 4.0) # set default size of plots
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload
%autoreload 2

# Fix the random seed
np.random.seed(1)

The utility module dnn_app_utils_v2 used here was prepared in advance by the instructor:

import numpy as np
import matplotlib.pyplot as plt
import h5py

def sigmoid(Z):
    """
    Implements the sigmoid activation in numpy

    Arguments:
    Z -- numpy array of any shape

    Returns:
    A -- output of sigmoid(z), same shape as Z
    cache -- returns Z as well, useful during backpropagation
    """
    A = 1/(1+np.exp(-Z))
    cache = Z
    return A, cache

def relu(Z):
    """
    Implement the RELU function.

    Arguments:
    Z -- Output of the linear layer, of any shape

    Returns:
    A -- Post-activation parameter, of the same shape as Z
    cache -- Z, stored for computing the backward pass efficiently
    """
    A = np.maximum(0,Z)
    assert(A.shape == Z.shape)
    cache = Z
    return A, cache

def relu_backward(dA, cache):
    """
    Implement the backward propagation for a single RELU unit.

    Arguments:
    dA -- post-activation gradient, of any shape
    cache -- 'Z' where we store for computing backward propagation efficiently

    Returns:
    dZ -- Gradient of the cost with respect to Z
    """
    Z = cache
    dZ = np.array(dA, copy=True) # just converting dz to a correct object.
    # When z <= 0, you should set dz to 0 as well.
    dZ[Z <= 0] = 0
    assert (dZ.shape == Z.shape)
    return dZ

def sigmoid_backward(dA, cache):
    """
    Implement the backward propagation for a single SIGMOID unit.

    Arguments:
    dA -- post-activation gradient, of any shape
    cache -- 'Z' where we store for computing backward propagation efficiently

    Returns:
    dZ -- Gradient of the cost with respect to Z
    """
    Z = cache
    s = 1/(1+np.exp(-Z))
    dZ = dA * s * (1-s)
    assert (dZ.shape == Z.shape)
    return dZ

def load_data():
    train_dataset = h5py.File('datasets/train_catvnoncat.h5', "r")
    train_set_x_orig = np.array(train_dataset["train_set_x"][:]) # your train set features
    train_set_y_orig = np.array(train_dataset["train_set_y"][:]) # your train set labels

    test_dataset = h5py.File('datasets/test_catvnoncat.h5', "r")
    test_set_x_orig = np.array(test_dataset["test_set_x"][:]) # your test set features
    test_set_y_orig = np.array(test_dataset["test_set_y"][:]) # your test set labels

    classes = np.array(test_dataset["list_classes"][:]) # the list of classes

    train_set_y_orig = train_set_y_orig.reshape((1, train_set_y_orig.shape[0]))
    test_set_y_orig = test_set_y_orig.reshape((1, test_set_y_orig.shape[0]))

    return train_set_x_orig, train_set_y_orig, test_set_x_orig, test_set_y_orig, classes

def initialize_parameters(n_x, n_h, n_y):
    """
    Argument:
    n_x -- size of the input layer
    n_h -- size of the hidden layer
    n_y -- size of the output layer

    Returns:
    parameters -- python dictionary containing your parameters:
                    W1 -- weight matrix of shape (n_h, n_x)
                    b1 -- bias vector of shape (n_h, 1)
                    W2 -- weight matrix of shape (n_y, n_h)
                    b2 -- bias vector of shape (n_y, 1)
    """
    np.random.seed(1)

    W1 = np.random.randn(n_h, n_x)*0.01
    b1 = np.zeros((n_h, 1))
    W2 = np.random.randn(n_y, n_h)*0.01
    b2 = np.zeros((n_y, 1))

    assert(W1.shape == (n_h, n_x))
    assert(b1.shape == (n_h, 1))
    assert(W2.shape == (n_y, n_h))
    assert(b2.shape == (n_y, 1))

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}
    return parameters

def initialize_parameters_deep(layer_dims):
    """
    Arguments:
    layer_dims -- python array (list) containing the dimensions of each layer in our network

    Returns:
    parameters -- python dictionary containing your parameters "W1", "b1", ..., "WL", "bL":
                    Wl -- weight matrix of shape (layer_dims[l], layer_dims[l-1])
                    bl -- bias vector of shape (layer_dims[l], 1)
    """
    np.random.seed(1)
    parameters = {}
    L = len(layer_dims) # number of layers in the network

    for l in range(1, L):
        parameters['W' + str(l)] = np.random.randn(layer_dims[l], layer_dims[l-1]) / np.sqrt(layer_dims[l-1]) #*0.01
        parameters['b' + str(l)] = np.zeros((layer_dims[l], 1))

        assert(parameters['W' + str(l)].shape == (layer_dims[l], layer_dims[l-1]))
        assert(parameters['b' + str(l)].shape == (layer_dims[l], 1))

    return parameters

def linear_forward(A, W, b):
    """
    Implement the linear part of a layer's forward propagation.

    Arguments:
    A -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)

    Returns:
    Z -- the input of the activation function, also called pre-activation parameter
    cache -- a tuple containing "A", "W" and "b" ; stored for computing the backward pass efficiently
    """
    Z = W.dot(A) + b
    assert(Z.shape == (W.shape[0], A.shape[1]))
    cache = (A, W, b)
    return Z, cache

def linear_activation_forward(A_prev, W, b, activation):
    """
    Implement the forward propagation for the LINEAR->ACTIVATION layer

    Arguments:
    A_prev -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    A -- the output of the activation function, also called the post-activation value
    cache -- a tuple containing "linear_cache" and "activation_cache";
             stored for computing the backward pass efficiently
    """
    if activation == "sigmoid":
        # Inputs: "A_prev, W, b". Outputs: "A, activation_cache".
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        # Inputs: "A_prev, W, b". Outputs: "A, activation_cache".
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = relu(Z)

    assert (A.shape == (W.shape[0], A_prev.shape[1]))
    cache = (linear_cache, activation_cache)
    return A, cache

def L_model_forward(X, parameters):
    """
    Implement forward propagation for the [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID computation

    Arguments:
    X -- data, numpy array of shape (input size, number of examples)
    parameters -- output of initialize_parameters_deep()

    Returns:
    AL -- last post-activation value
    caches -- list of caches containing:
                every cache of linear_relu_forward() (there are L-1 of them, indexed from 0 to L-2)
                the cache of linear_sigmoid_forward() (there is one, indexed L-1)
    """
    caches = []
    A = X
    L = len(parameters) // 2 # number of layers in the neural network

    # Implement [LINEAR -> RELU]*(L-1). Add "cache" to the "caches" list.
    for l in range(1, L):
        A_prev = A
        A, cache = linear_activation_forward(A_prev, parameters['W' + str(l)], parameters['b' + str(l)], activation = "relu")
        caches.append(cache)

    # Implement LINEAR -> SIGMOID. Add "cache" to the "caches" list.
    AL, cache = linear_activation_forward(A, parameters['W' + str(L)], parameters['b' + str(L)], activation = "sigmoid")
    caches.append(cache)

    assert(AL.shape == (1,X.shape[1]))
    return AL, caches

def compute_cost(AL, Y):
    """
    Implement the cost function defined by equation (7).

    Arguments:
    AL -- probability vector corresponding to your label predictions, shape (1, number of examples)
    Y -- true "label" vector (for example: containing 0 if non-cat, 1 if cat), shape (1, number of examples)

    Returns:
    cost -- cross-entropy cost
    """
    m = Y.shape[1]

    # Compute loss from aL and y.
    cost = (1./m) * (-np.dot(Y,np.log(AL).T) - np.dot(1-Y, np.log(1-AL).T))

    cost = np.squeeze(cost) # To make sure your cost's shape is what we expect (e.g. this turns [[17]] into 17).
    assert(cost.shape == ())
    return cost

def linear_backward(dZ, cache):
    """
    Implement the linear portion of backward propagation for a single layer (layer l)

    Arguments:
    dZ -- Gradient of the cost with respect to the linear output (of current layer l)
    cache -- tuple of values (A_prev, W, b) coming from the forward propagation in the current layer

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    A_prev, W, b = cache
    m = A_prev.shape[1]

    dW = 1./m * np.dot(dZ,A_prev.T)
    db = 1./m * np.sum(dZ, axis = 1, keepdims = True)
    dA_prev = np.dot(W.T,dZ)

    assert (dA_prev.shape == A_prev.shape)
    assert (dW.shape == W.shape)
    assert (db.shape == b.shape)
    return dA_prev, dW, db

def linear_activation_backward(dA, cache, activation):
    """
    Implement the backward propagation for the LINEAR->ACTIVATION layer.

    Arguments:
    dA -- post-activation gradient for current layer l
    cache -- tuple of values (linear_cache, activation_cache) we store for computing backward propagation efficiently
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    linear_cache, activation_cache = cache

    if activation == "relu":
        dZ = relu_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)

    return dA_prev, dW, db

def L_model_backward(AL, Y, caches):
    """
    Implement the backward propagation for the [LINEAR->RELU] * (L-1) -> LINEAR -> SIGMOID group

    Arguments:
    AL -- probability vector, output of the forward propagation (L_model_forward())
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat)
    caches -- list of caches containing:
                every cache of linear_activation_forward() with "relu" (there are (L-1) of them, indexes from 0 to L-2)
                the cache of linear_activation_forward() with "sigmoid" (there is one, index L-1)

    Returns:
    grads -- A dictionary with the gradients
             grads["dA" + str(l)] = ...
             grads["dW" + str(l)] = ...
             grads["db" + str(l)] = ...
    """
    grads = {}
    L = len(caches) # the number of layers
    m = AL.shape[1]
    Y = Y.reshape(AL.shape) # after this line, Y is the same shape as AL

    # Initializing the backpropagation
    dAL = - (np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))

    # Lth layer (SIGMOID -> LINEAR) gradients. Inputs: "AL, Y, caches". Outputs: "grads["dAL"], grads["dWL"], grads["dbL"]
    current_cache = caches[L-1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(dAL, current_cache, activation = "sigmoid")

    for l in reversed(range(L-1)):
        # lth layer: (RELU -> LINEAR) gradients.
        current_cache = caches[l]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(grads["dA" + str(l + 2)], current_cache, activation = "relu")
        grads["dA" + str(l + 1)] = dA_prev_temp
        grads["dW" + str(l + 1)] = dW_temp
        grads["db" + str(l + 1)] = db_temp

    return grads

def update_parameters(parameters, grads, learning_rate):
    """
    Update parameters using gradient descent

    Arguments:
    parameters -- python dictionary containing your parameters
    grads -- python dictionary containing your gradients, output of L_model_backward

    Returns:
    parameters -- python dictionary containing your updated parameters
                  parameters["W" + str(l)] = ...
                  parameters["b" + str(l)] = ...
    """
    L = len(parameters) // 2 # number of layers in the neural network

    # Update rule for each parameter. Use a for loop.
    for l in range(L):
        parameters["W" + str(l+1)] = parameters["W" + str(l+1)] - learning_rate * grads["dW" + str(l+1)]
        parameters["b" + str(l+1)] = parameters["b" + str(l+1)] - learning_rate * grads["db" + str(l+1)]

    return parameters

def predict(X, y, parameters):
    """
    This function is used to predict the results of a L-layer neural network.

    Arguments:
    X -- data set of examples you would like to label
    parameters -- parameters of the trained model

    Returns:
    p -- predictions for the given dataset X
    """
    m = X.shape[1]
    n = len(parameters) // 2 # number of layers in the neural network
    p = np.zeros((1,m))

    # Forward propagation
    probas, caches = L_model_forward(X, parameters)

    # convert probas to 0/1 predictions
    for i in range(0, probas.shape[1]):
        if probas[0,i] > 0.5:
            p[0,i] = 1
        else:
            p[0,i] = 0

    #print results
    #print ("predictions: " + str(p))
    #print ("true labels: " + str(y))
    print("Accuracy: " + str(np.sum((p == y)/m)))

    return p

def print_mislabeled_images(classes, X, y, p):
    """
    Plots images where predictions and truth were different.
    X -- dataset
    y -- true labels
    p -- predictions
    """
    a = p + y
    mislabeled_indices = np.asarray(np.where(a == 1))
    plt.rcParams['figure.figsize'] = (40.0, 40.0) # set default size of plots
    num_images = len(mislabeled_indices[0])
    for i in range(num_images):
        index = mislabeled_indices[1][i]

        plt.subplot(2, num_images, i + 1)
        plt.imshow(X[:,index].reshape(64,64,3), interpolation='nearest')
        plt.axis('off')
        plt.title("Prediction: " + classes[int(p[0,index])].decode("utf-8") + " \n Class: " + classes[y[0,index]].decode("utf-8"))

Load the training and test sets

# Load the training and test sets; the utility module already defines the loading function
train_x_orig, train_y, test_x_orig, test_y, classes = load_data()

# Pick one image and take a look at it
index = 1
plt.imshow(train_x_orig[index])
print ("y = " + str(train_y[0,index]) + ". It's a " + classes[train_y[0,index]].decode("utf-8") + " picture.")

Check the dimensions of the data often to avoid unnecessary errors

# Explore your dataset
m_train = train_x_orig.shape[0]
num_px = train_x_orig.shape[1]
m_test = test_x_orig.shape[0]

# Print the dimensions to inspect them
print ("Number of training examples: " + str(m_train))
print ("Number of testing examples: " + str(m_test))
print ("Each image is of size: (" + str(num_px) + ", " + str(num_px) + ", 3)")
print ("train_x_orig shape: " + str(train_x_orig.shape))
print ("train_y shape: " + str(train_y.shape))
print ("test_x_orig shape: " + str(test_x_orig.shape))
print ("test_y shape: " + str(test_y.shape))

# Reshape the training and test sets into the layout the model needs (the features of each example become one column vector)
train_x_flatten = train_x_orig.reshape(train_x_orig.shape[0], -1).T # The "-1" makes reshape flatten the remaining dimensions
test_x_flatten = test_x_orig.reshape(test_x_orig.shape[0], -1).T

# Scale all features to the range [0, 1]
train_x = train_x_flatten/255.
test_x = test_x_flatten/255.

print ("train_x's shape: " + str(train_x.shape))
print ("test_x's shape: " + str(test_x.shape))
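To see why the reshape is followed by a transpose, here is a small sketch on a made-up array. The tiny shapes and the names `imgs` and `flat` are assumptions for illustration and are not part of the assignment. Each column of the result holds all the pixel values of one image:

```python
import numpy as np

# Hypothetical mini "dataset": 2 images of 2x2 pixels with 3 channels.
imgs = np.arange(2 * 2 * 2 * 3).reshape(2, 2, 2, 3)

# Same pattern as in the notebook: flatten every image into one row,
# then transpose so that each column is one example.
flat = imgs.reshape(imgs.shape[0], -1).T

print(flat.shape)                                   # (12, 2)
print(np.array_equal(flat[:, 1], imgs[1].ravel()))  # True
```

With the real data the same two steps turn an array of shape (m, 64, 64, 3) into one of shape (12288, m).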

# Next we build a two-layer neural network
'''
# Define a function that initializes the parameters of the two-layer network
# n_x is the number of input features (layer 0)
# n_h is the number of units in layer 1
# n_y is the number of units in layer 2 (this is binary classification, so this layer has a single output unit)
'''
def initialize_parameters(n_x, n_h, n_y):
    np.random.seed(1)
    W1 = np.random.randn(n_h, n_x) * 0.01
    b1 = np.zeros((n_h, 1))
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros((n_y, 1))

    # The asserts make sure the shapes are correct
    assert((n_h, n_x) == W1.shape)
    assert((n_h, 1) == b1.shape)
    assert((n_y, n_h) == W2.shape)
    assert((n_y, 1) == b2.shape)

    parameters = {
        "W1": W1,
        "b1": b1,
        "W2": W2,
        "b2": b2
    }
    return parameters

# Forward propagation through the linear step and the activation function
def linear_activation_forward(A_prev, W, b, activation):
    if activation == "sigmoid":
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = relu(Z)

    assert(A.shape == (W.shape[0], A_prev.shape[1]))
    # Keep the cache so that backward propagation can reuse it (avoids recomputation)
    cache = (linear_cache, activation_cache)
    return A, cache

# Function that computes the cost
def compute_cost(AL, Y):
    m = Y.shape[1]
    cost = -(np.dot(np.log(AL), Y.T) + np.dot(np.log(1 - AL), (1 - Y).T)) / (1.0 * m)
    #print(cost.shape)
    cost = np.squeeze(cost)
    #print(cost.shape)
    #print(cost)
    assert(cost.shape == ( ))
    return cost

# Backward propagation through the activation function and the linear step
def linear_activation_backward(dA, cache, activation):
    linear_cache, activation_cache = cache
    if activation == "relu":
        dZ = relu_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
    return dA_prev, dW, db

# Parameter update function
def update_parameters(parameters, grads, learning_rate):
    # // 2 is floor division
    L = len(parameters) // 2
    for i in range(1, L + 1):
        parameters["W" + str(i)] -= learning_rate * grads["dW" + str(i)]
        parameters["b" + str(i)] -= learning_rate * grads["db" + str(i)]
    return parameters
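As a quick sanity check of the formula used in `compute_cost`, the following sketch evaluates the cross-entropy cost in plain Python for three made-up predictions. The numbers and the helper name `cross_entropy_cost` are assumptions for illustration; the notebook itself uses the vectorized NumPy version.

```python
import math

def cross_entropy_cost(probs, labels):
    """Mean binary cross-entropy: -(1/m) * sum(y*log(a) + (1-y)*log(1-a))."""
    m = len(labels)
    total = 0.0
    for a, y in zip(probs, labels):
        total += y * math.log(a) + (1 - y) * math.log(1 - a)
    return -total / m

probs = [0.9, 0.2, 0.6]   # hypothetical sigmoid outputs
labels = [1, 0, 1]        # 1 = cat, 0 = non-cat

cost = cross_entropy_cost(probs, labels)
print(round(cost, 4))     # 0.2798
```

A confident correct prediction (0.9 for a cat) contributes little to the cost, while the hesitant 0.6 contributes the most.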

Implement the two-layer neural network model

# GRADED FUNCTION: two_layer_model
def two_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False):
    """
    Implements a two-layer neural network: LINEAR->RELU->LINEAR->SIGMOID.

    Arguments:
    X -- input data, of shape (n_x, number of examples)
    Y -- true "label" vector (containing 1 if cat, 0 if non-cat), of shape (1, number of examples)
    layers_dims -- dimensions of the layers (n_x, n_h, n_y)
    num_iterations -- number of iterations of the optimization loop
    learning_rate -- learning rate of the gradient descent update rule
    print_cost -- If set to True, this will print the cost every 100 iterations

    Returns:
    parameters -- a dictionary containing W1, W2, b1, and b2
    """
    np.random.seed(1)
    grads = {}
    costs = [] # to keep track of the cost
    m = X.shape[1] # number of examples
    (n_x, n_h, n_y) = layers_dims

    # Initialize parameters dictionary, by calling one of the functions you'd previously implemented
    ### START CODE HERE ### (≈ 1 line of code)
    parameters = initialize_parameters(n_x, n_h, n_y)
    ### END CODE HERE ###

    # Get W1, b1, W2 and b2 from the dictionary parameters.
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]

    # Loop (gradient descent)
    for i in range(0, num_iterations):

        # Forward propagation: LINEAR -> RELU -> LINEAR -> SIGMOID. Inputs: "X, W1, b1". Output: "A1, cache1, A2, cache2".
        ### START CODE HERE ### (≈ 2 lines of code)
        A1, cache1 = linear_activation_forward(X, parameters["W1"], parameters["b1"], activation="relu")
        A2, cache2 = linear_activation_forward(A1, parameters["W2"], parameters["b2"], activation="sigmoid")
        ### END CODE HERE ###

        # Compute cost
        ### START CODE HERE ### (≈ 1 line of code)
        cost = compute_cost(A2, Y)
        ### END CODE HERE ###

        # Initializing backward propagation
        dA2 = - (np.divide(Y, A2) - np.divide(1 - Y, 1 - A2))

        # Backward propagation. Inputs: "dA2, cache2, cache1". Outputs: "dA1, dW2, db2; also dA0 (not used), dW1, db1".
        ### START CODE HERE ### (≈ 2 lines of code)
        dA1, dW2, db2 = linear_activation_backward(dA2, cache2, activation="sigmoid")
        dA0, dW1, db1 = linear_activation_backward(dA1, cache1, activation="relu")
        ### END CODE HERE ###

        # Set grads['dW1'] to dW1, grads['db1'] to db1, grads['dW2'] to dW2, grads['db2'] to db2
        grads['dW1'] = dW1
        grads['db1'] = db1
        grads['dW2'] = dW2
        grads['db2'] = db2

        # Update parameters.
        ### START CODE HERE ### (approx. 1 line of code)
        parameters = update_parameters(parameters, grads, learning_rate)
        ### END CODE HERE ###

        # Retrieve W1, b1, W2, b2 from parameters
        W1 = parameters["W1"]
        b1 = parameters["b1"]
        W2 = parameters["W2"]
        b2 = parameters["b2"]

        # Print the cost every 100 iterations
        if print_cost and i % 100 == 0:
            print("Cost after iteration {}: {}".format(i, np.squeeze(cost)))
        if i % 100 == 0:
            costs.append(cost)

    if not(print_cost):
        print("The final cost = %f" %(cost))

    # plot the cost
    plt.plot(np.squeeze(costs))
    plt.ylabel('cost')
    plt.xlabel('iterations (per hundreds)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()

    return parameters

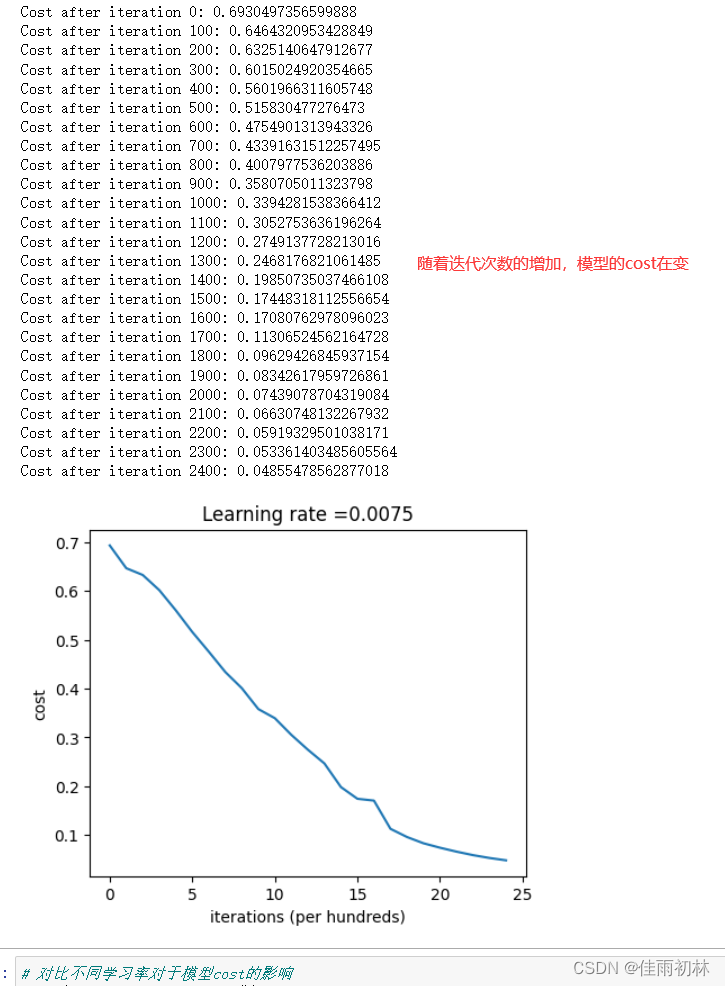

Train and test the model

# Set the layer dimensions of the two-layer network
n_x = 12288 # num_px * num_px * 3
n_h = 7
n_y = 1
layers_dims = (n_x, n_h, n_y)

# Run the model
parameters = two_layer_model(train_x, train_y, layers_dims = (n_x, n_h, n_y), num_iterations = 2500, print_cost=True)

# Custom predict function that reports the prediction accuracy
def predict(X, y, parameters):
    m = X.shape[1]
    n = len(parameters) // 2
    p = np.zeros((1, m))

    probas, caches = L_model_forward(X, parameters)

    for i in range(probas.shape[1]):
        if probas[0,i] > 0.5:
            p[0, i] = 1
        else:
            p[0, i] = 0

    print("Accuracy: " + str(np.sum(p == y) / (1.0 * m)*100) + "%")
    return p

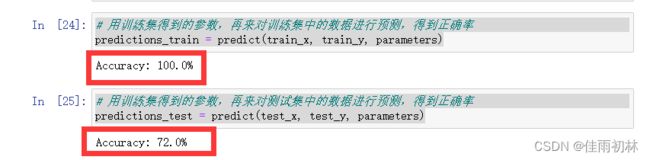

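# Use the parameters learned on the training set to predict on the training set and report the accuracy
predictions_train = predict(train_x, train_y, parameters)

# Use the parameters learned on the training set to predict on the test set and report the accuracy
predictions_test = predict(test_x, test_y, parameters)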

2.2 Implementing an L-layer neural network

Building on the two-layer network above, we can generalize the model to L layers. Some of the earlier functions need to be adjusted.

Core parts: forward propagation, backward propagation, and the parameter update

# Implement an L-layer neural network; the earlier code needs to be modified
def initialize_parameters_deep(layer_dims):
    np.random.seed(1)
    parameters = {}
    L = len(layer_dims)
    for i in range(1, L):
        # Be careful, the scaler is np.sqrt(layer_dims[i - 1]), not constant 0.01
        parameters['W' + str(i)] = np.random.randn(layer_dims[i], layer_dims[i - 1]) / np.sqrt(layer_dims[i - 1])
        parameters["b" + str(i)] = np.zeros((layer_dims[i], 1))

        assert((layer_dims[i], layer_dims[i - 1]) == parameters["W" + str(i)].shape)
        assert((layer_dims[i], 1) == parameters["b" + str(i)].shape)
    return parameters

def L_model_forward(X, parameters):
    caches = []
    A = X
    L = len(parameters) // 2
    for i in range(1, L):
        A_prev = A
        A, cache = linear_activation_forward(A_prev, parameters["W" + str(i)], parameters["b" + str(i)], "relu")
        caches.append(cache)

    AL, cache = linear_activation_forward(A, parameters["W" + str(L)], parameters["b" + str(L)], "sigmoid")
    caches.append(cache)

    assert(AL.shape == (1, X.shape[1]))
    return AL, caches

def compute_cost(AL, Y):
    m = Y.shape[1]
    cost = -(np.dot(np.log(AL), Y.T) + np.dot(np.log(1 - AL), (1 - Y).T)) / (m * 1.0)
    #print(cost.shape)
    cost = np.squeeze(cost)
    #print(cost.shape)
    #print(cost)
    assert(cost.shape == ( ))
    return cost

def L_model_backward(AL, Y, caches):
    grads = {}
    L = len(caches)
    m = AL.shape[1]
    Y = Y.reshape(AL.shape)

    dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))

    current_cache = caches[L - 1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(dAL, current_cache, activation="sigmoid")

    for i in reversed(range(L - 1)):
        current_cache = caches[i]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(grads["dA" + str(i + 2)], current_cache, activation="relu")
        grads["dA" + str(i + 1)] = dA_prev_temp
        grads["dW" + str(i + 1)] = dW_temp
        grads["db" + str(i + 1)] = db_temp
    return grads

def update_parameters(parameters, grads, learning_rate):
    L = len(parameters) // 2
    for i in range(1, L + 1):
        parameters["W" + str(i)] -= learning_rate * grads["dW" + str(i)]
        parameters["b" + str(i)] -= learning_rate * grads["db" + str(i)]
    return parameters
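The comment in `initialize_parameters_deep` warns that the weights are divided by the square root of the previous layer's size instead of being multiplied by a constant 0.01. A rough sketch of why the scale matters, in plain Python with made-up sizes (the function name `preactivation_std`, the fan-in of 100 and the all-ones input are assumptions for illustration): it estimates the spread of z = Σ wᵢxᵢ under both scalings.

```python
import math
import random

def preactivation_std(n_inputs, scale, trials=2000, seed=1):
    """Empirical std of z = sum(w_i * x_i) with x_i = 1 and w_i ~ N(0, scale^2)."""
    rng = random.Random(seed)
    zs = [sum(rng.gauss(0.0, scale) for _ in range(n_inputs))
          for _ in range(trials)]
    mean = sum(zs) / trials
    return math.sqrt(sum((z - mean) ** 2 for z in zs) / trials)

n = 100  # hypothetical number of units in the previous layer
std_const = preactivation_std(n, 0.01)               # constant 0.01 scaling
std_scaled = preactivation_std(n, 1 / math.sqrt(n))  # 1/sqrt(n) scaling

print(round(std_const, 2), round(std_scaled, 2))
```

In theory the two values are 0.01·√100 = 0.1 and 1.0, so the estimates should land close to those. With the constant factor the signal shrinks at every layer, which is one plausible reason the deeper model uses the 1/√n scaling.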

Implement the L-layer neural network model

# GRADED FUNCTION: L_layer_model
def L_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False):#lr was 0.009
    """
    Implements a L-layer neural network: [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID.

    Arguments:
    X -- data, numpy array of shape (num_px * num_px * 3, number of examples)
    Y -- true "label" vector (containing 1 if cat, 0 if non-cat), of shape (1, number of examples)
    layers_dims -- list containing the input size and each layer size, of length (number of layers + 1).
    learning_rate -- learning rate of the gradient descent update rule
    num_iterations -- number of iterations of the optimization loop
    print_cost -- if True, it prints the cost every 100 steps

    Returns:
    parameters -- parameters learnt by the model. They can then be used to predict.
    """
    np.random.seed(1)
    costs = [] # keep track of cost

    # Parameters initialization.
    ### START CODE HERE ###
    parameters = initialize_parameters_deep(layers_dims)
    ### END CODE HERE ###

    # Loop (gradient descent)
    for i in range(0, num_iterations):

        # Forward propagation: [LINEAR -> RELU]*(L-1) -> LINEAR -> SIGMOID.
        ### START CODE HERE ### (≈ 1 line of code)
        AL, caches = L_model_forward(X, parameters)
        ### END CODE HERE ###

        # Compute cost.
        ### START CODE HERE ### (≈ 1 line of code)
        cost = compute_cost(AL, Y)
        ### END CODE HERE ###

        # Backward propagation.
        ### START CODE HERE ### (≈ 1 line of code)
        grads = L_model_backward(AL, Y, caches)
        ### END CODE HERE ###

        # Update parameters.
        ### START CODE HERE ### (≈ 1 line of code)
        parameters = update_parameters(parameters, grads, learning_rate)
        ### END CODE HERE ###

        # Print the cost every 100 iterations
        if print_cost and i % 100 == 0:
            print ("Cost after iteration %i: %f" %(i, cost))
        # Record the cost every 100 iterations so the plot is not empty when print_cost is False
        if i % 100 == 0:
            costs.append(cost)

    # plot the cost
    plt.plot(np.squeeze(costs))
    plt.ylabel('cost')
    plt.xlabel('iterations (per hundreds)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()

    return parameters

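Here we run the L-layer model with the layer sizes below and look at the results. The post calls this the 5-layer model, which counts the input layer; the number of layers with parameters is L = 4.

### CONSTANTS ###
layers_dims = [12288, 20, 7, 5, 1] # input layer + 4 layers with parameters (the "5-layer" model)

parameters = L_layer_model(train_x, train_y, layers_dims, num_iterations = 2500, print_cost = True)

# Report the model's prediction accuracy on the training set
pred_train = predict(train_x, train_y, parameters)

# Report the model's prediction accuracy on the test set
pred_test = predict(test_x, test_y, parameters)

# Show all the images that were predicted incorrectly
print_mislabeled_images(classes, test_x, test_y, pred_test)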

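3. Experiment summary

- The multi-layer networks do better at this binary classification task than the single-neuron model from the previous assignment. Test-set accuracy went from 68% (single neuron) to 72% (two-layer network) to 80% (the deeper network with layers_dims = [12288, 20, 7, 5, 1], called the 5-layer network here). This is only a rough comparison, because the learning rate and other factors were not held constant across all runs.

- In the experiments above, with the same learning rate, the 5-layer model performs better than the two-layer model. Does that mean that more layers always give better performance? (The answer is no.)

- I also experimented with the learning rate of the two-layer model and found that its choice matters a great deal: a value that is too high or too low hurts the result.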

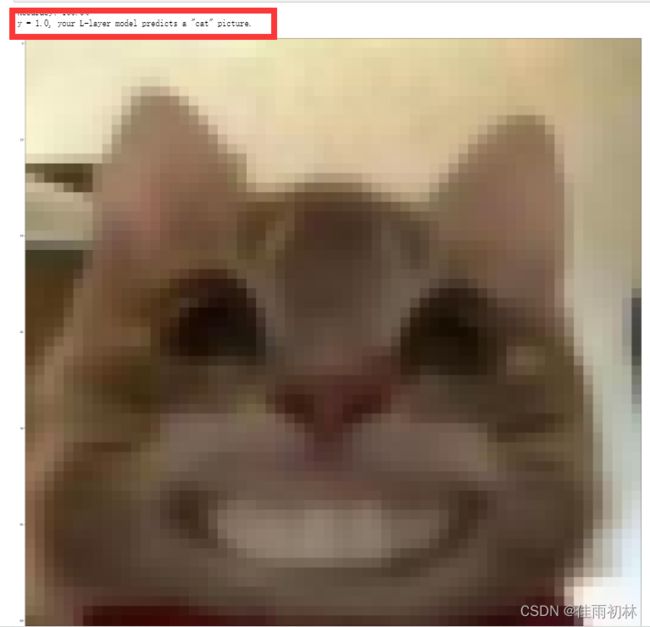

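- With the single-layer model from the last assignment I tested one of my own pictures, and that model decided it was not a cat. This time I tested the same picture with the 5-layer model, and the model decided it is a cat. Haha, the picture is not a serious one, but the comparison is still fun.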

# Feed a local image from my own computer into the model for prediction
local_file_path = 'C:\\Users\\佳雨初林\\Desktop\\猫头.jpg'
local_image = plt.imread(local_file_path)

# Shape of the local image: (200, 197, 3)
local_image.shape

# Resize the image to the size used in the dataset: (200, 197, 3) -> (64, 64, 3)
# transform.resize returns floats in [0, 1] by default, so no extra division by 255 is needed
from skimage import transform
local_image_tran = transform.resize(local_image, (64, 64, 3))

# Display the resized image
plt.imshow(local_image_tran)

# Finally reshape the resized image into the column vector the model expects: (12288, 1)
local_test = local_image_tran.reshape(64*64*3, 1)
my_predicted_image = predict(local_test, [1], parameters)
print ("y = " + str(np.squeeze(my_predicted_image)) + ", your L-layer model predicts a \"" + classes[int(np.squeeze(my_predicted_image)),].decode("utf-8") + "\" picture.")
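The learning-rate remark in the summary can be illustrated without the cat dataset. This is a toy sketch, not the experiment from this post: plain gradient descent on the made-up function f(w) = w², whose gradient is 2w, with three hypothetical learning rates.

```python
def gradient_descent(learning_rate, steps=20, w0=1.0):
    """Minimize f(w) = w**2 with plain gradient descent; return the final w."""
    w = w0
    for _ in range(steps):
        grad = 2 * w                  # derivative of w**2
        w = w - learning_rate * grad
    return w

too_small = gradient_descent(0.001)   # barely moves away from w0 = 1
reasonable = gradient_descent(0.1)    # approaches the minimum at w = 0
too_large = gradient_descent(1.1)     # overshoots further on every step

print(too_small, reasonable, too_large)
```

Each step multiplies w by (1 - 2·learning_rate), so after 20 steps the three runs end near 0.96, 0.012 and 38: too small a rate makes almost no progress, and too large a rate diverges.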

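4. Problems encountered

4.1 Importing resources

When I re-implemented the code, the dataset and the utility module were not in my Jupyter working directory. To import a module from a different folder, you can use sys to extend the import search path (tested, it works). sys.path.append accepts relative paths as well as absolute ones.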

import sys

sys.path.append('C://Users//Desktop//研究生学习//deeplearning.ai-andrewNG-master//COURSE 1 Neural Networks and Deep Learning//Week 4//Deep Neural Network Application_ Image Classification')

from dnn_app_utils_v2 import *

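This does import the utility module dnn_app_utils_v2, but the data paths inside its load_data() function then have to be changed as well. To avoid the hassle, I simply copied the dataset and the utility module into my Jupyter working directory.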
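A minimal sketch of the relative-path variant, using `pathlib` from the standard library. The folder name in `utils_dir` is hypothetical; replace it with wherever `dnn_app_utils_v2.py` actually lives.

```python
import sys
from pathlib import Path

# Hypothetical folder, relative to the notebook, that would hold dnn_app_utils_v2.py.
utils_dir = Path("..") / "Week 4" / "assignment"

# sys.path entries are strings; resolve() makes the relative path absolute.
utils_path = str(utils_dir.resolve())
if utils_path not in sys.path:
    sys.path.append(utils_path)

print(utils_path in sys.path)   # True
```

This only affects where Python looks for modules. The relative path 'datasets/train_catvnoncat.h5' inside load_data() is still resolved against the current working directory, which is why the data paths needed changing as well.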

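4.2 Explanation of some code

The two statements below automatically reload the Python modules you have imported, so the notebook always uses the latest version of a module. After you edit the module's code you do not need to restart the kernel. (These commands only exist in Jupyter; in a regular script run from PyCharm they raise an error.)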

%load_ext autoreload

%autoreload 2

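About assert: assert statements show up throughout the assignment. They are a useful way to reduce mistakes, because they catch some coding errors, such as wrong array shapes, as soon as they happen.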
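A small sketch of the kind of mistake such a shape assert catches, written in plain Python so it runs on its own. The helper `matmul_shape` and the example count m = 5 are assumptions for illustration; n_h = 7 and n_x = 12288 are the values used earlier in this post.

```python
def matmul_shape(a_shape, b_shape):
    """Return the shape of A @ B, asserting that the inner dimensions match."""
    assert a_shape[1] == b_shape[0], (
        f"inner dimensions differ: {a_shape} vs {b_shape}"
    )
    return (a_shape[0], b_shape[1])

# W1 is (n_h, n_x) and X is (n_x, m), so Z1 = W1 X is (n_h, m).
print(matmul_shape((7, 12288), (12288, 5)))   # (7, 5)

# Forgetting the transpose when flattening gives X of shape (m, n_x):
try:
    matmul_shape((7, 12288), (5, 12288))
except AssertionError as err:
    caught = str(err)
    print("caught:", caught)
```

The assert fails right where the shapes stop matching, instead of letting a wrong array travel further into the model.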

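5. Summary

My command of the relevant Python libraries still has big gaps, and I need to fill them in. If I had to go from the mathematical derivations in Andrew Ng's lectures to building the model and writing all of its code entirely on my own, it would be a real struggle. In the assignment, however, I only had to fill in a small amount of code and follow the guidance in the Jupyter notebook to implement the whole model. That ties the lecture content to the code and deepens my understanding of the theory, which is great. There is a long way to go, so I have to keep working hard.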