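Python Crawler in Practice Pro | (4) Maintaining a Proxy Pool with Flask + Redis

In this post we maintain a proxy pool with Flask and Redis. We already built an IP proxy pool in Python Crawler in Practice (18); the pool built here is an upgrade of that one and collects proxies from a wider range of sources.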

Contents

1. Why use a proxy pool?

2. Proxy pool requirements

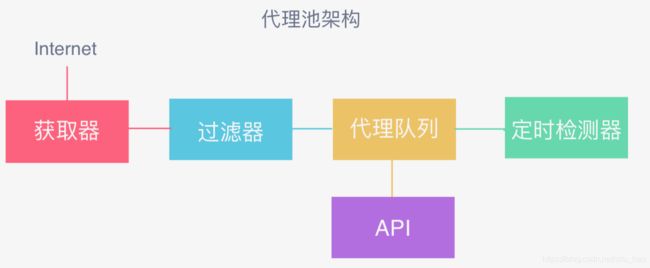

3. Proxy pool architecture

4. Implementing the proxy pool

5. Usage

6. Complete project

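1. Why use a proxy pool?

- Many websites have dedicated anti-crawler measures, so a crawler may run into problems such as IP bans. Sending requests through a proxy hides the real IP and helps avoid being banned.

- A large number of free proxies are published on the internet, and a proxy pool puts them to good use.

- Checking and maintaining these proxies on a schedule keeps a set of usable proxies available at any time.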

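2. Proxy pool requirements

- Crawl multiple sites, check asynchronously

Proxies are collected from several proxy websites, and they are checked asynchronously so that checking stays fast.

- Filter on a schedule, update continuously

At regular intervals every proxy is tested (a request is sent through the proxy to a chosen website to see whether it succeeds). Proxies that work are kept, and the pool is updated continuously.

- Provide an API, easy to fetch from

Flask serves a web API from which a Python program can conveniently fetch a usable proxy.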
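
The asynchronous checking requirement can be sketched as follows. This is only an illustration, not the project's tester: `fake_check` is a hypothetical stand-in for a real HTTP request sent through the proxy, and the proxy addresses are made up.

```python
import asyncio


async def fake_check(proxy):
    """Hypothetical stand-in for a real request sent through the proxy."""
    await asyncio.sleep(0.01)  # simulate network latency
    # Assumption for this demo only: proxies on port 8080 "work".
    return proxy.endswith(":8080")


async def filter_usable(proxies, check=fake_check, limit=10):
    """Check all proxies concurrently, at most `limit` at a time."""
    semaphore = asyncio.Semaphore(limit)

    async def guarded(proxy):
        async with semaphore:
            return proxy, await check(proxy)

    # gather() returns results in the same order as its arguments
    results = await asyncio.gather(*(guarded(p) for p in proxies))
    return [proxy for proxy, ok in results if ok]


candidates = ["10.0.0.1:8080", "10.0.0.2:3128", "10.0.0.3:8080"]
usable = asyncio.run(filter_usable(candidates))
print(usable)
```

Because the checks overlap instead of running one after another, the total time is close to that of the slowest single check rather than the sum of all checks.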

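3. Proxy pool architecture

- Getter: crawls proxies (IP + port) from the proxy websites.

- Filter: sends a request through each proxy returned by the getter, either to the site that will be crawled or to a general-purpose site (Baidu), and discards proxies that do not work.

- Proxy queue: a Redis database that holds the usable proxies.

- Periodic checker: a proxy that worked earlier may stop working later, for example after being used too often. The proxies in the queue are therefore re-tested on a schedule by calling the filter's method, and those that no longer work are filtered out.

- API: Flask serves a web API that hands out usable proxies from the queue, so a Python program can fetch them conveniently.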
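
How these components fit together can be sketched with plain Python objects. Everything in this sketch is hypothetical (the `InMemoryPool` class, the two fake sources, the usability rule); the actual implementation below stores proxies in Redis and lowers a score instead of removing a proxy right away.

```python
class InMemoryPool:
    """Toy stand-in for the Redis-backed proxy queue."""

    def __init__(self):
        self.proxies = set()

    def add(self, proxy):
        self.proxies.add(proxy)

    def remove(self, proxy):
        self.proxies.discard(proxy)


def getter(sources, pool):
    """Getter: collect proxies from every source into the pool."""
    for source in sources:
        for proxy in source():
            pool.add(proxy)


def run_filter(pool, is_usable):
    """Filter / periodic checker: drop proxies that fail the check."""
    for proxy in list(pool.proxies):
        if not is_usable(proxy):
            pool.remove(proxy)


def api_get(pool):
    """API: hand out one proxy (first in sorted order, for a stable demo)."""
    return sorted(pool.proxies)[0] if pool.proxies else None


# Two made-up proxy sites that partly overlap
site_a = lambda: ["1.1.1.1:80", "2.2.2.2:8080"]
site_b = lambda: ["2.2.2.2:8080", "3.3.3.3:3128"]

pool = InMemoryPool()
getter([site_a, site_b], pool)
# Demo rule only: pretend every proxy starting with "1." is dead
run_filter(pool, lambda proxy: not proxy.startswith("1."))
print(sorted(pool.proxies))
print(api_get(pool))
```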

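4. Implementing the proxy pool

- Project directory

- Entry file run.py

Run run.py to start the proxy pool:

    import io
    import sys

    from proxypool.scheduler import Scheduler

    # Force UTF-8 output so that non-ASCII log messages print correctly
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='utf-8')


    # Entry point of the proxy pool
    def main():
        try:
            # Create the scheduler and start its worker processes
            s = Scheduler()
            s.run()
        except Exception:
            # Retry after an unexpected error. Catching Exception rather than
            # using a bare except keeps Ctrl+C able to stop the program.
            main()


    if __name__ == '__main__':
        main()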

- Scheduler file scheduler.py

    import time
    from multiprocessing import Process

    from proxypool.api import app
    from proxypool.getter import Getter
    from proxypool.tester import Tester
    from proxypool.db import RedisClient
    from proxypool.setting import *


    class Scheduler():
        def schedule_tester(self, cycle=TESTER_CYCLE):
            """
            Test the proxies every `cycle` seconds.
            """
            tester = Tester()
            while True:
                print('Tester starts running')
                tester.run()
                time.sleep(cycle)

        def schedule_getter(self, cycle=GETTER_CYCLE):
            """
            Fetch new proxies every `cycle` seconds.
            """
            getter = Getter()
            while True:
                print('Start crawling proxies')
                getter.run()
                time.sleep(cycle)

        def schedule_api(self):
            """
            Start the API server.
            """
            app.run(API_HOST, API_PORT)

        def run(self):
            print('Proxy pool starts running')

            if TESTER_ENABLED:  # start the periodic tester process
                tester_process = Process(target=self.schedule_tester)
                tester_process.start()

            if GETTER_ENABLED:  # start the periodic getter process
                getter_process = Process(target=self.schedule_getter)
                getter_process.start()

            if API_ENABLED:  # start the API process
                api_process = Process(target=self.schedule_api)
                api_process.start()
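
The "run a task, sleep for a cycle, repeat" pattern used by `schedule_tester` and `schedule_getter` can be tried in isolation with the sketch below. It deliberately swaps in a thread and a stop event instead of a `Process` with an endless loop, so that the demo finishes on its own; `run_periodically` and the very short cycle are assumptions made for illustration.

```python
import threading
import time


def run_periodically(task, cycle, stop_event):
    """Call task(), then wait `cycle` seconds, until stop_event is set."""
    while not stop_event.is_set():
        task()
        # Like time.sleep(cycle), but returns early once stop_event is set
        stop_event.wait(cycle)


calls = []
stop = threading.Event()
worker = threading.Thread(
    target=run_periodically,
    args=(lambda: calls.append(time.time()), 0.01, stop),
)
worker.start()
time.sleep(0.1)   # let the task run a few times
stop.set()        # ask the loop to finish
worker.join()
print('task ran', len(calls), 'times')
```

Separate processes, as in the scheduler above, keep a crash or a blocking call in one component from stalling the others; threads are used here only because they are easier to stop in a short demo.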

- Proxy queue db.py

    import re
    from random import choice

    import redis

    from proxypool.error import PoolEmptyError
    from proxypool.setting import REDIS_HOST, REDIS_PORT, REDIS_PASSWORD, REDIS_KEY
    from proxypool.setting import MAX_SCORE, MIN_SCORE, INITIAL_SCORE


    # Maintains the queue of usable proxies (a Redis sorted set ordered by score)
    class RedisClient(object):
        def __init__(self, host=REDIS_HOST, port=REDIS_PORT, password=REDIS_PASSWORD):
            """
            Initialize the client.
            :param host: Redis host
            :param port: Redis port
            :param password: Redis password
            """
            self.db = redis.StrictRedis(host=host, port=port, password=password, decode_responses=True)

        def add(self, proxy, score=INITIAL_SCORE):
            """
            Add a proxy with the initial score.
            :param proxy: proxy as 'ip:port'
            :param score: score
            :return: result of the insert
            """
            if not re.match(r'\d+\.\d+\.\d+\.\d+:\d+', proxy):
                print('Malformed proxy', proxy, 'discarded')
                return
            # Well-formed and not yet stored: insert it with the initial score (10)
            if self.db.zscore(REDIS_KEY, proxy) is None:
                return self.db.zadd(REDIS_KEY, {proxy: score})

        def random(self):
            """
            Get a random usable proxy. Proxies with the maximum score are
            preferred; if there are none, pick among the top-ranked proxies;
            if the pool is empty, raise an error.
            :return: a random proxy
            """
            # Proxies with the maximum score (100)
            result = self.db.zrangebyscore(REDIS_KEY, MAX_SCORE, MAX_SCORE)
            if len(result):
                return choice(result)
            else:
                # No proxy has the maximum score: take the highest-ranked ones
                result = self.db.zrevrange(REDIS_KEY, 0, 100)
                if len(result):
                    return choice(result)
                else:
                    raise PoolEmptyError

        def decrease(self, proxy):
            """
            Subtract one point from a proxy that failed a test; remove it
            once its score is no longer above the minimum.
            :param proxy: proxy
            :return: the updated score
            """
            score = self.db.zscore(REDIS_KEY, proxy)
            if score and score > MIN_SCORE:
                print('Proxy', proxy, 'current score', score, 'minus 1')
                return self.db.zincrby(REDIS_KEY, -1, proxy)
            else:
                print('Proxy', proxy, 'current score', score, 'removed')
                return self.db.zrem(REDIS_KEY, proxy)

        def exists(self, proxy):
            """
            Check whether a proxy is stored.
            :param proxy: proxy
            :return: True if it exists
            """
            return self.db.zscore(REDIS_KEY, proxy) is not None

        def max(self, proxy):
            """
            Set a proxy that passed the test to MAX_SCORE.
            :param proxy: proxy
            :return: result of the update
            """
            print('Proxy', proxy, 'is usable, set to', MAX_SCORE)
            return self.db.zadd(REDIS_KEY, {proxy: MAX_SCORE})

        def count(self):
            """
            Get the number of proxies stored in Redis.
            :return: count
            """
            return self.db.zcard(REDIS_KEY)

        def all(self):
            """
            Get all proxies.
            :return: list of all proxies
            """
            return self.db.zrangebyscore(REDIS_KEY, MIN_SCORE, MAX_SCORE)

        def batch(self, start, stop):
            """
            Get a batch of proxies by rank.
            :param start: start index (inclusive)
            :param stop: stop index (exclusive)
            :return: list of proxies
            """
            return self.db.zrevrange(REDIS_KEY, start, stop - 1)


    if __name__ == '__main__':
        conn = RedisClient()
        result = conn.batch(680, 688)
        print(result)
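
The scoring rules implemented by `add`, `max` and `decrease` can be followed without a Redis server using the small model below. It is a sketch that uses a plain dict in place of the sorted set; `ScoreBoard` is a hypothetical name, while the three score constants are the values from setting.py.

```python
MAX_SCORE, MIN_SCORE, INITIAL_SCORE = 100, 0, 10


class ScoreBoard:
    """In-memory model of the scoring rules (dict instead of a Redis sorted set)."""

    def __init__(self):
        self.scores = {}

    def add(self, proxy):
        # New proxies start at INITIAL_SCORE; known proxies keep their score
        self.scores.setdefault(proxy, INITIAL_SCORE)

    def max(self, proxy):
        # A successful test promotes the proxy straight to MAX_SCORE
        self.scores[proxy] = MAX_SCORE

    def decrease(self, proxy):
        # A failed test costs one point; a proxy at MIN_SCORE is removed
        score = self.scores.get(proxy)
        if score is not None and score > MIN_SCORE:
            self.scores[proxy] = score - 1
        else:
            self.scores.pop(proxy, None)


board = ScoreBoard()
board.add("1.2.3.4:80")

failures = 0
while "1.2.3.4:80" in board.scores:
    board.decrease("1.2.3.4:80")
    failures += 1
print(failures)  # 11: ten failures bring the score from 10 to 0, the next one removes it

board.add("5.6.7.8:8080")
board.max("5.6.7.8:8080")
print(board.scores)  # {'5.6.7.8:8080': 100}
```

A freshly crawled proxy therefore gets several chances before it is dropped, while a proxy that has passed a test once tolerates a long run of failures before it leaves the pool.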

- Fetching proxies: getter.py and crawler.py

    import sys

    from proxypool.tester import Tester
    from proxypool.db import RedisClient
    from proxypool.crawler import Crawler
    from proxypool.setting import *


    # Getter: periodically crawls proxies from the proxy websites
    class Getter():
        def __init__(self):
            self.redis = RedisClient()
            self.crawler = Crawler()

        def is_over_threshold(self):
            """
            Check whether the pool has reached its size limit.
            """
            return self.redis.count() >= POOL_UPPER_THRESHOLD

        def run(self):
            print('Getter starts running')
            if not self.is_over_threshold():  # pool is still below the limit
                for callback_label in range(self.crawler.__CrawlFuncCount__):
                    callback = self.crawler.__CrawlFunc__[callback_label]
                    # Crawl proxies with this crawl_* method
                    proxies = self.crawler.get_proxies(callback)
                    sys.stdout.flush()
                    for proxy in proxies:  # store them in Redis
                        self.redis.add(proxy)

    import json
    import re

    from .utils import get_page
    from pyquery import PyQuery as pq


    # Crawls proxies from the proxy websites.
    # To support another site, add a crawl_* method with its own parsing rules.
    class ProxyMetaclass(type):
        def __new__(cls, name, bases, attrs):
            # Record the name of every method whose name contains 'crawl_'
            count = 0
            attrs['__CrawlFunc__'] = []
            for k, v in attrs.items():
                if 'crawl_' in k:
                    attrs['__CrawlFunc__'].append(k)
                    count += 1
            attrs['__CrawlFuncCount__'] = count
            return type.__new__(cls, name, bases, attrs)


    class Crawler(object, metaclass=ProxyMetaclass):
        def get_proxies(self, callback):
            proxies = []
            # Look the method up by name instead of building a string for eval()
            for proxy in getattr(self, callback)():
                print('Got proxy', proxy)
                proxies.append(proxy)
            return proxies

        def crawl_daili66(self, page_count=4):
            """
            Crawl proxies from 66ip.
            :param page_count: number of pages to crawl
            :return: proxies
            """
            start_url = 'http://www.66ip.cn/{}.html'
            urls = [start_url.format(page) for page in range(1, page_count + 1)]
            for url in urls:
                print('Crawling', url)
                html = get_page(url)
                if html:
                    doc = pq(html)
                    # Skip the header row of the table
                    trs = doc('.containerbox table tr:gt(0)').items()
                    for tr in trs:
                        ip = tr.find('td:nth-child(1)').text()
                        port = tr.find('td:nth-child(2)').text()
                        yield ':'.join([ip, port])

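        # Note: a second crawl_ip3366 is defined further down. Python keeps only
        # the later definition, so rename one of them if both should run.
        def crawl_ip3366(self):
            for page in range(1, 4):
                start_url = 'http://www.ip3366.net/free/?stype=1&page={}'.format(page)
                html = get_page(start_url)
                if html:
                    # Assumes each row looks like <tr><td>IP</td><td>PORT</td>...;
                    # \s* absorbs the whitespace and line breaks between tags
                    ip_address = re.compile(r'<tr>\s*<td>(.*?)</td>\s*<td>(.*?)</td>')
                    re_ip_address = ip_address.findall(html)
                    for address, port in re_ip_address:
                        result = address + ':' + port
                        yield result.replace(' ', '')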

        def crawl_kuaidaili(self):
            for i in range(1, 4):
                start_url = 'http://www.kuaidaili.com/free/inha/{}/'.format(i)
                html = get_page(start_url)
                if html:
                    # Assumes the cells are marked up as <td data-title="IP"> and
                    # <td data-title="PORT">; adjust if the page markup differs
                    ip_address = re.compile(r'<td data-title="IP">(.*?)</td>')
                    re_ip_address = ip_address.findall(html)
                    port = re.compile(r'<td data-title="PORT">(.*?)</td>')
                    re_port = port.findall(html)
                    for address, port in zip(re_ip_address, re_port):
                        address_port = address + ':' + port
                        yield address_port.replace(' ', '')

        def crawl_xicidaili(self):
            for i in range(1, 3):
                start_url = 'http://www.xicidaili.com/nn/{}'.format(i)
                headers = {
                    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
                    'Cookie': '_free_proxy_session=BAh7B0kiD3Nlc3Npb25faWQGOgZFVEkiJWRjYzc5MmM1MTBiMDMzYTUzNTZjNzA4NjBhNWRjZjliBjsAVEkiEF9jc3JmX3Rva2VuBjsARkkiMUp6S2tXT3g5a0FCT01ndzlmWWZqRVJNek1WanRuUDBCbTJUN21GMTBKd3M9BjsARg%3D%3D--2a69429cb2115c6a0cc9a86e0ebe2800c0d471b3',
                    'Host': 'www.xicidaili.com',
                    'Referer': 'http://www.xicidaili.com/nn/3',
                    'Upgrade-Insecure-Requests': '1',
                }
                html = get_page(start_url, options=headers)
                if html:
                    # Assumes data rows are <tr class="..."> elements with plain <td> cells
                    find_trs = re.compile(r'<tr class.*?>(.*?)</tr>', re.S)
                    trs = find_trs.findall(html)
                    for tr in trs:
                        find_ip = re.compile(r'<td>(\d+\.\d+\.\d+\.\d+)</td>')
                        re_ip_address = find_ip.findall(tr)
                        find_port = re.compile(r'<td>(\d+)</td>')
                        re_port = find_port.findall(tr)
                        for address, port in zip(re_ip_address, re_port):
                            address_port = address + ':' + port
                            yield address_port.replace(' ', '')

        def crawl_ip3366(self):
            for i in range(1, 4):
                start_url = 'http://www.ip3366.net/?stype=1&page={}'.format(i)
                html = get_page(start_url)
                if html:
                    # Assumes a plain <tr>/<td> table; the first row is the header
                    find_tr = re.compile(r'<tr>(.*?)</tr>', re.S)
                    trs = find_tr.findall(html)
                    for s in range(1, len(trs)):
                        find_ip = re.compile(r'<td>(\d+\.\d+\.\d+\.\d+)</td>')
                        re_ip_address = find_ip.findall(trs[s])
                        find_port = re.compile(r'<td>(\d+)</td>')
                        re_port = find_port.findall(trs[s])
                        for address, port in zip(re_ip_address, re_port):
                            address_port = address + ':' + port
                            yield address_port.replace(' ', '')

        def crawl_iphai(self):
            start_url = 'http://www.iphai.com/'
            html = get_page(start_url)
            if html:
                # Assumes a plain <tr>/<td> table whose cell text is padded with whitespace
                find_tr = re.compile(r'<tr>(.*?)</tr>', re.S)
                trs = find_tr.findall(html)
                for s in range(1, len(trs)):
                    find_ip = re.compile(r'<td>\s+(\d+\.\d+\.\d+\.\d+)\s+</td>', re.S)
                    re_ip_address = find_ip.findall(trs[s])
                    find_port = re.compile(r'<td>\s+(\d+)\s+</td>', re.S)
                    re_port = find_port.findall(trs[s])
                    for address, port in zip(re_ip_address, re_port):
                        address_port = address + ':' + port
                        yield address_port.replace(' ', '')

        def crawl_data5u(self):
            start_url = 'http://www.data5u.com/free/gngn/index.shtml'
            headers = {
                'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
                'Accept-Encoding': 'gzip, deflate',
                'Accept-Language': 'en-US,en;q=0.9,zh-CN;q=0.8,zh;q=0.7',
                'Cache-Control': 'max-age=0',
                'Connection': 'keep-alive',
                'Cookie': 'JSESSIONID=47AA0C887112A2D83EE040405F837A86',
                'Host': 'www.data5u.com',
                'Referer': 'http://www.data5u.com/free/index.shtml',
                'Upgrade-Insecure-Requests': '1',
                'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.108 Safari/537.36',
            }
            html = get_page(start_url, options=headers)
            if html:
                # Assumes the IP sits in <span><li>...</li> and the port in a later
                # <li class="port ...">; adjust if the page markup differs
                ip_address = re.compile(r'<span><li>(\d+\.\d+\.\d+\.\d+)</li>.*?<li class="port.*?>(\d+)</li>', re.S)
                re_ip_address = ip_address.findall(html)
                for address, port in re_ip_address:
                    result = address + ':' + port
                    yield result.replace(' ', '')
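
What the metaclass does, and why two methods with the same name count only once, can be seen in the reduced sketch below. `CrawlerMeta`, `DemoCrawler`, the `registered` attribute and the yielded addresses are all made up for the demo; only the idea of collecting `crawl_*` names at class-creation time comes from the code above.

```python
class CrawlerMeta(type):
    """Record the names of all methods whose name starts with 'crawl_'."""

    def __new__(mcs, name, bases, attrs):
        # attrs is the class body as a dict, in definition order
        attrs["registered"] = [key for key in attrs if key.startswith("crawl_")]
        return super().__new__(mcs, name, bases, attrs)


class DemoCrawler(metaclass=CrawlerMeta):
    def crawl_site_a(self):
        yield "1.1.1.1:80"

    def crawl_site_b(self):
        yield "2.2.2.2:8080"

    def crawl_site_a(self):  # same name: silently replaces the first definition
        yield "3.3.3.3:3128"

    def helper(self):
        return "not a crawler"

    def get_proxies(self, name):
        # Look the method up by name and collect everything it yields
        return list(getattr(self, name)())


crawler = DemoCrawler()
print(crawler.registered)
print([p for name in crawler.registered for p in crawler.get_proxies(name)])
```

The first `crawl_site_a` never runs, which is exactly what happens to the first `crawl_ip3366` in crawler.py.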

- Testing proxies tester.py

    import asyncio
    import sys
    import time

    import aiohttp
    from aiohttp import ClientError

    from proxypool.db import RedisClient
    from proxypool.setting import *


    # Periodically checks whether the proxies stored in Redis still work
    class Tester(object):
        def __init__(self):
            self.redis = RedisClient()

        async def test_single_proxy(self, proxy):
            """
            Test a single proxy.
            :param proxy: proxy as 'ip:port'
            :return: None
            """
            # ssl=False skips certificate verification (replaces the deprecated verify_ssl=False)
            conn = aiohttp.TCPConnector(ssl=False)
            async with aiohttp.ClientSession(connector=conn) as session:
                try:
                    if isinstance(proxy, bytes):
                        proxy = proxy.decode('utf-8')
                    real_proxy = 'http://' + proxy
                    print('Testing', proxy)
                    timeout = aiohttp.ClientTimeout(total=15)
                    async with session.get(TEST_URL, proxy=real_proxy, timeout=timeout, allow_redirects=False) as response:
                        if response.status in VALID_STATUS_CODES:
                            self.redis.max(proxy)
                            print('Proxy is usable', proxy)
                        else:
                            self.redis.decrease(proxy)
                            print('Invalid response status', response.status, 'IP', proxy)
                # ClientError also covers ClientConnectorError
                except (ClientError, asyncio.TimeoutError, AttributeError):
                    self.redis.decrease(proxy)
                    print('Proxy request failed', proxy)

        async def test_batch(self, proxies):
            """
            Test one batch of proxies concurrently.
            """
            await asyncio.gather(*(self.test_single_proxy(proxy) for proxy in proxies))

        def run(self):
            """
            Main test routine.
            :return: None
            """
            print('Tester starts running')
            try:
                count = self.redis.count()  # number of proxies in the pool
                print(count, 'proxies currently in the pool')
                for i in range(0, count, BATCH_TEST_SIZE):  # test in batches
                    start = i
                    stop = min(i + BATCH_TEST_SIZE, count)
                    print('Testing proxies', start + 1, '-', stop)
                    test_proxies = self.redis.batch(start, stop)
                    # asyncio.wait() no longer accepts bare coroutines on
                    # recent Python versions, so run the batch with asyncio.run()
                    asyncio.run(self.test_batch(test_proxies))
                    sys.stdout.flush()
                    time.sleep(5)
            except Exception as e:
                print('Tester error', e.args)
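
The index arithmetic in `run` is easier to check in isolation. The helper below is a hypothetical extraction of that loop (`batch_ranges` does not exist in the project); it yields the same `(start, stop)` pairs that are passed to `RedisClient.batch`.

```python
def batch_ranges(count, batch_size):
    """Yield (start, stop) pairs that cover range(count) in chunks of batch_size."""
    for start in range(0, count, batch_size):
        # The last chunk may be shorter than batch_size
        yield start, min(start + batch_size, count)


print(list(batch_ranges(25, 10)))  # [(0, 10), (10, 20), (20, 25)]
```

Here `stop` is exclusive, as in Python slicing, while Redis `ZREVRANGE` treats both ends as inclusive; that is why `batch` passes `stop - 1` to `zrevrange`.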

- Web API api.py

    from flask import Flask, g

    from .db import RedisClient

    __all__ = ['app']

    app = Flask(__name__)


    def get_conn():
        # Reuse one Redis client per application context
        if not hasattr(g, 'redis'):
            g.redis = RedisClient()
        return g.redis


    @app.route('/')
    def index():
        return 'Welcome to Proxy Pool System'


    @app.route('/random')
    def get_proxy():
        """
        Get a proxy
        :return: a random proxy
        """
        conn = get_conn()
        return conn.random()


    @app.route('/count')
    def get_counts():
        """
        Get the count of proxies
        :return: total number of proxies in the pool
        """
        conn = get_conn()
        return str(conn.count())


    if __name__ == '__main__':
        app.run()

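- Configuration setting.py

    # Redis host
    REDIS_HOST = '127.0.0.1'

    # Redis port
    REDIS_PORT = 6379

    # Redis password; use None if there is none
    REDIS_PASSWORD = None

    # Key of the sorted set that stores the proxies
    REDIS_KEY = 'ProxiesPro'

    # Proxy scores
    MAX_SCORE = 100
    MIN_SCORE = 0
    INITIAL_SCORE = 10

    # Response status codes that count as a successful test
    VALID_STATUS_CODES = [200, 302]

    # Upper limit on the number of proxies in the pool
    POOL_UPPER_THRESHOLD = 50000

    # Interval between test runs, in seconds
    TESTER_CYCLE = 20

    # Interval between crawl runs, in seconds
    GETTER_CYCLE = 300

    # Test URL; ideally test against the site you are going to crawl
    TEST_URL = 'http://www.baidu.com'  # a general-purpose site such as Baidu also works

    # API settings
    API_HOST = '0.0.0.0'
    API_PORT = 5555

    # Switches
    TESTER_ENABLED = True  # tester
    GETTER_ENABLED = True  # getter
    API_ENABLED = True  # API

    # Maximum number of proxies tested per batch
    BATCH_TEST_SIZE = 10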
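
These settings interact with two values that are hard-coded in tester.py: the 15-second request timeout and the 5-second pause after each batch. As a rough, back-of-the-envelope sketch (the function name is hypothetical, and Redis and scheduling overhead are ignored), the worst-case duration of one full test pass can be estimated like this:

```python
import math


def worst_case_pass_seconds(proxy_count, batch_size=10, timeout=15, pause=5):
    """Upper-bound estimate for one test pass over the whole pool.

    The proxies of a batch are tested concurrently, so a batch takes at
    most `timeout` seconds, followed by a fixed `pause` between batches.
    """
    batches = math.ceil(proxy_count / batch_size)
    return batches * (timeout + pause)


print(worst_case_pass_seconds(1000))  # 2000
```

With 1000 proxies in the pool, a pass can therefore take over half an hour in the worst case, far longer than the 20-second TESTER_CYCLE; raising BATCH_TEST_SIZE is the most direct way to shorten it.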

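5. Usage

Run run.py. It automatically crawls proxies from the proxy websites, stores them in Redis, checks their availability on a schedule, and serves a web API at API_HOST:API_PORT, which is http://0.0.0.0:5555 with the settings above. The address 0.0.0.0 means the server listens on every network interface; if a browser cannot open it, use http://127.0.0.1:5555 on the same machine instead.

Through this API you can get a random usable proxy or look up the number of proxies in the pool.

Open http://0.0.0.0:5555/count in a browser to get the number of proxies:

Open http://0.0.0.0:5555/random in a browser to get a random usable proxy:

Each refresh returns a randomly chosen usable proxy, so the result usually changes from one refresh to the next.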
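
The same two endpoints can be called from Python. The sketch below uses only the standard library; the helper names and the base URL `http://127.0.0.1:5555` are assumptions (adjust them to your API_HOST and API_PORT), and `fake_open` is a made-up stand-in so that the example runs without a live proxy pool.

```python
import io
import urllib.request

BASE_URL = "http://127.0.0.1:5555"  # assumed address of the running proxy pool API


def get_random_proxy(base_url=BASE_URL, open_url=urllib.request.urlopen):
    """Return one proxy ('ip:port') from the /random endpoint."""
    with open_url(base_url + "/random") as response:
        return response.read().decode("utf-8").strip()


def get_proxy_count(base_url=BASE_URL, open_url=urllib.request.urlopen):
    """Return the pool size reported by the /count endpoint."""
    with open_url(base_url + "/count") as response:
        return int(response.read().decode("utf-8"))


def fake_open(url):
    """Offline stand-in for urlopen that returns canned responses."""
    body = b"42" if url.endswith("/count") else b"1.2.3.4:8080"
    return io.BytesIO(body)


print(get_random_proxy(open_url=fake_open))
print(get_proxy_count(open_url=fake_open))
```

Against a running pool, call `get_random_proxy()` and `get_proxy_count()` without the `open_url` argument.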

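- Using the pool in a crawler

In the next post we will combine the pool with a concrete crawler to see how an IP proxy pool is used to hide the crawler's IP and avoid bans.

The general idea: first run run.py to start the web API. The crawler requests this API to get a usable IP and then crawls the target site through it. After some time that IP may be banned, so add exception handling: when an exception occurs, for example because the IP was banned, fetch a new usable IP from the API and continue crawling. Repeating this lets the crawler switch to a new IP whenever the current one is banned, so it can finish collecting the data it needs.

6. Complete project

Complete project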
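
The retry idea described above could look roughly like the following. This is a sketch under stated assumptions rather than the crawler from the next post: `crawl_with_rotation` is a hypothetical helper, `get_proxy` stands for a call to the pool's /random endpoint, and `fetch` stands for whatever function downloads a page through a given proxy. Both are replaced by fakes here so the example runs offline.

```python
def crawl_with_rotation(url, get_proxy, fetch, max_attempts=5):
    """Fetch url through a proxy, switching to a new proxy after each failure."""
    last_error = None
    for _ in range(max_attempts):
        proxy = get_proxy()  # ask the pool for a fresh proxy
        try:
            return fetch(url, proxy)
        except Exception as error:  # e.g. connection error or banned IP
            last_error = error
    raise RuntimeError("all {} attempts failed".format(max_attempts)) from last_error


# Fakes for the demo: the first two proxies are "banned", the third works
proxies = iter(["bad:1", "bad:2", "good:3"])


def fake_fetch(url, proxy):
    if proxy.startswith("bad"):
        raise ConnectionError("blocked")
    return "page from {} via {}".format(url, proxy)


result = crawl_with_rotation("http://example.com", lambda: next(proxies), fake_fetch)
print(result)
```

Limiting the number of attempts keeps the crawler from looping forever when the pool is empty or the target site is down.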