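- Systematic Python Study: Concurrency Models and Asynchronous Programming: Processes, Threads, and the GIL

Category: table of contents for "Systematic Python Study". The article "Concurrency Models and Asynchronous Programming: Basics" briefly introduced processes, threads, and coroutines in Python. This article focuses on the relationship between processes, threads, and the GIL in Python. Every instance of the Python interpreter is a process. Additional Python processes can be started with the multiprocessing or concurrent.futures libraries. Python's subprocess library is used to start processes that run external programs (regardless of which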

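- JavaScript Tree Menu Summary

Auscy

microsoft

Tree menus are a common interactive component in front-end development, used to display hierarchical data (file directories, category lists, organizational structures, and so on). The following summarizes core concepts, implementation approaches, common features, and optimization directions. 1. Core concepts. Hierarchical structure: data is nested in parent-child form, e.g. {id:1,children:[{id:2}]}. Node: the basic unit of the tree, containing its own information and any child nodes. Expand/collapse: toggling the visibility of child nodes, the core interaction of a tree menu. Recursive rendering: because the depth of the data is not fixed,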

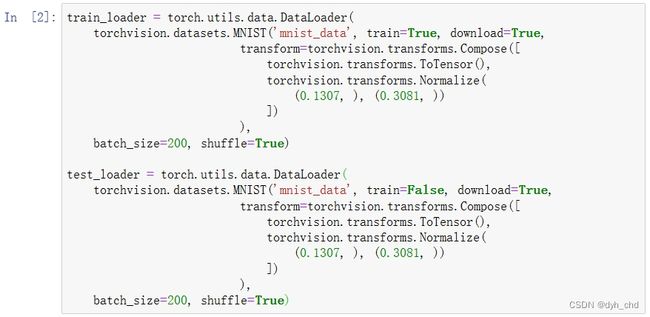

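- PyTorch & TensorFlow Crash Review: From Basic Syntax to Hands-On Model Deployment (with a Bridge to FPGA Porting)

阿牛的药铺

algorithm porting and deployment, pytorch, tensorflow, fpga development

Introduction: why must algorithm-porting engineers master framework basics? For FPGA algorithm-porting roles on optical products (such as visible-light/infrared image processing), deep learning frameworks are the "bridge" that carries an algorithm into production: PyTorch/TensorFlow is used to validate that the algorithm is feasible, and the trained model (a CNN, an object detector) must then be converted into a format deployable on FPGA (ONNX, TFLite). This article takes a "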

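- Algorithm Study Notes 17: The Monte Carlo Algorithm, from Principles to Practice, Covering LeetCode and Postgraduate Exam 408 Problems

In computer science and mathematics, the Monte Carlo algorithm has become a powerful tool for complex problems thanks to its distinctive idea of random sampling. From computing pi to assessing financial risk, from physical simulation to artificial intelligence, Monte Carlo methods play a role that is hard to replace. This article examines the ideas behind the algorithm and how to approach problems with it, combines real application scenarios with Java implementations, ties in the relevant points for the postgraduate entrance exam 408, and uses figures to aid understanding, to help you master this important algorithm. Basic concepts of the Monte Carlo algorithm. Monte Car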

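- Computer Network Technology

CZZDg

computer networks

Contents. I. Network overview: 1. The concept of a network 2. History of network development 3. The four elements of a network 4. Network functions 5. Network types 6. Network protocols and standards 7. Common networking concepts 8. Network topologies. II. Network models: 1. The layering idea 2. The OSI seven-layer model 3. The TCP/IP five-layer model 4. Data encapsulation and decapsulation. III. IP addresses: 1. Base conversion 2. Definition of an IP address 3. Components of an IP address 4. IP address classes 5. Address allocation 6. Related concepts. I. Network overview. 1. The concept of a network: two hosts use a transmission medium and communication protocols to achieve communication and resource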

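- UNIX Domain Sockets

1. Definition of UNIX domain sockets. A UNIX domain socket is a form of inter-process communication (IPC) that does not go through the network protocol stack, so for communication on the same host it is faster and more efficient than network sockets based on TCP/IP. 2. Types of UNIX domain sockets. Stream sockets (SOCK_STREAM): provide connection-oriented, reliable data transfer. Datagram sockets (SOCK_DGRAM): provide connectionless data transfer, with data sent as independent datagrams. 3. UNIX sockets versus TCP/IP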

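- AI Music Simulator: The Intelligent Music Creation Revolution of the AIGC Era

lauo

artificial intelligence, AIGC, open source, front end, robotics

Introduction: a new paradigm for music creation in the AIGC wave. Amid the wave of digital transformation, AI-generated content (AIGC) is reshaping every creative field. The music industry, an important part of the creative economy, is undergoing unprecedented change. According to recent market research, the global AI music market is projected to grow from USD 580 million in 2023 to USD 2.68 billion in 2030, a compound annual growth rate of 24.3%. Behind this fast-growing market, AI music technology is breaking down tra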

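- Common Data Analysis Metrics: Definitions and Formulas

走过冬季

study notes, data analysis, big data

Data analysis relies on many common metrics that help quantify business performance, user behavior, product health, and more. Below are definitions and calculation methods for some core metrics, grouped by common category. 1. Traffic and user-scale metrics. Page views (PV). Definition: each time a user loads or refreshes a page while visiting a site or app counts as one PV. It measures the total number of times pages are opened. Calculation: PV = ∑(number of times each page is loaded) (usually counted directly from event tracking or logs). Unique visitors. Definition: within a given time range (a day, a week, a month), visiting the site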

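- Video Analysis: Letting AI Understand Moving Images

随机森林404

computer vision, audio and video, artificial intelligence, microsoft

Introduction: a revolution in dynamic visual understanding. In an era of exploding digital information, video has become the dominant medium. According to statistics, more than 500 hours of video are uploaded to YouTube every minute, and 82% of global internet traffic comes from video. Faced with this volume, traditional manual processing can no longer keep up, which is exactly where AI video analysis comes in. Video analysis gives machines the ability to "understand" moving images, so that they can automatically understand, interpret, and even predict what happens in a video. This breakthrough is fundamentally changing how we interact with vid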

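- V少 JS Basics Class, Part 5

V少在逆向

JS basics class, javascript, programming languages, ecmascript

Contents: 1. Preface 2. Topics covered in this section 3. Key content. 1 - Defining functions. 2 - Anatomy of a function. 1. Function parameters in detail: 1) variable number of parameters 2) default parameters 3) the arguments object (array-like) 4) rest parameters 5) function parameters are passed by value 6) destructured parameters 7) parameter validation techniques (JavaScript has no type restrictions, so validation must be done by hand). 2. Function return values in detail. 3 - Kinds of functions: 1 - function declarations 2 - function expressions 3 - arrow functions 4 - constructors 5 - IIFE 6 -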

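- Python Web Scraping in Practice: Scraping Bilibili Live-Stream Data with the Latest Techniques

Python爬虫项目

2025 scraping projects, python, web scraping, programming languages, html, Baidu

1. Overview of scraping Bilibili live-stream data. Bilibili (B站, 哔哩哔哩) is one of China's largest youth culture communities and video platforms, and its live-streaming business has grown quickly in recent years. Scraping Bilibili live data helps analyze live-streaming market trends, popular streamer rankings, audience preferences, and other valuable information. Common types of Bilibili live data include: basic room information (title, category, streamer info); real-time viewer counts and bullet comments (danmaku); gift and tipping data; live-stream history; popular streams by section. This article focuses on obtaining basic room information and popular streams by section. 2. Environment setup and tool selection. 2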

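- Object Detection

加油吧zkf

object detection, artificial intelligence, computer vision

Object detection, a core computer vision technology, plays an irreplaceable role in autonomous driving, security surveillance, medical imaging, and other fields. This article systematically covers the concepts, principles, mainstream models, common datasets, and application scenarios of object detection to help readers build a complete picture of the technology. 1. Core concepts of object detection. Object detection is the technique of automatically locating and identifying all objects of interest in an image or video. It must solve two core problems. Classification: determining, for each object in the image, its cla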

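- AI Data Security and Compliance Practices Across Industries: 7 Core Points Explained

观熵

artificial intelligence, DeepSeek private deployment

Keywords: AI data security, industry compliance, private deployment, data classification and grading, domestic large models, privacy protection, DeepSeek deployment. Abstract: As domestic large models are deployed more deeply in key sectors such as finance, healthcare, government, and education, AI systems face higher requirements for data security and industry compliance. Drawing on hands-on DeepSeek private deployments, this article systematically reviews the mainstream data security compliance standards and implementation strategies across industries, from data classification and grading, access control, and audit trails to sensitive information identification and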

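- STM32 ADC Explained

月入鱼饵

stm32, embedded hardware, microcontrollers

This article covers how to use the STM32 ADC. It is fairly long, so use the table of contents to jump to the part you need. ADC conversion principle. The focus here is on using the STM32 ADC; the conversion principle is introduced only to make the STM32 ADC configuration easier to understand, so it is covered briefly. For a detailed treatment, see "看完这篇文章,终于搞懂了ADC原理及分类!" and "ADC基本工作原理-CSDN". In short, an analog signal comes in and, after being preprocessed by a low-pass filter,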

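- Hands-On Tutorial: Deploying the bge-reranker-v2-m3 Model as a Local API Service with vllm

雷 电法王

large-model deployment, linux, python, vscode, language model

Contents: 1. Overview 2. Environment setup: 2.1 Install a virtual environment 2.2 Install vllm 2.3 Install the matching pytorch version 2.4 Install flash_attn 2.5 Download the model 3. Running the code: 3.1 Start the service 3.2 Verify with client code. 1. Overview. This article is a hands-on tutorial on deploying the BAAI/bge-reranker-v2-m3 model as a local API service with vllm. It was reproduced successfully on Ubuntu 24.04 + CUDA 12.8 + Python 3.12. 2. Environment setup. 2.1 Install a vir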

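- Deep Learning Image Classification Dataset: Peach Recognition and Classification

AI街潜水的八角

deep learning image datasets, deep learning, classification, artificial intelligence

This is an image classification dataset suitable for convolutional neural networks such as ResNet and VGG, attention-based methods such as SENet and CBAM, and Transformer-based methods such as Vision Transformer. Dataset details: peach classes: ['B1','M2','R0','S3']. The training set contains 6637 images in total, with one folder per class. Images per subfolder: · B1: 1601 images · M2: 1800 images · R0: 1601 images · S3: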

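- The Nature of Iterators in C++

三月微风

c++, programming languages

The nature and implementation principles of C++ iterators. Iterators are one of the core components of the C++ Standard Template Library (STL). They act as a bridge between containers and algorithms and provide a uniform way to access container elements. The following examines their essential properties from several angles. 1. Basic definition and classification. The nature of an iterator: an iterator is an object that behaves like a pointer and is used to traverse and operate on the elements of a container. It offers a uniform way to access elements in different containers without regard to how each container is implemented. Standard classification: the C++ standard defines 5 iterator categories, which by capability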

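- Ten Lessons from Building AI Agents in Legal Tech: One AI Engineer's Journey Optimizing Legal Workflows Through Lessons Learned Building, Deploying, and Maintaining Agents

知识大胖

NVIDIA GPU and large language model development tutorials, artificial intelligence, ai

Contents: Introduction. What is an agent? Why does it matter so much for law? Example agent use cases in legal tech: contract review agent; legal research agent. Ten lessons from using agents in LegalTech. Lesson 1: agents are cool, but they do not solve every problem. Lesson 2: choose the framework that best fits your use case. Lesson 3: be able to iterate quickly across different models. Lesson 4: start simple and scale when necessary. Lesson 5: use a tracing solution; you will need it. Lesson 6: make sure you track costs, because agent loops can be expensive. Lesson 7: give control to the end user (human in the loop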

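- Llama-Omni, a Talking AI "Speech-to-Speech LLM": Transforming Conversation with Low-Latency, High-Quality Speech-to-Speech AI (Tutorial with Source Code)

知识大胖

NVIDIA GPU and large language model development tutorials, llama, artificial intelligence, nvidia, llm

Introduction. "Technology alone is not enough; it is technology married with the liberal arts and the humanities that yields the results that make our hearts sing." (Steve Jobs) In recent years, human-computer interaction has changed significantly, especially with the emergence of large language models (LLMs) such as ChatGPT and GPT-4. Although these models are primarily text-based, interest in voice interaction is growing as a way to make human-computer dialogue more seamless and natural. However, achieving voice interaction without the latency and errors common in speech-to-text processing remains a challenge. Keywords: Llama-Omni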

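- What Is Thermodynamic Computing, and How Does It Help AI Advance?

知识大胖

NVIDIA GPU and large language model development tutorials, artificial intelligence, quantum computing

Modern computing is built on the transistor, a tiny electronic switch from which logic gates can be built and, from those, complex digital circuits such as CPUs and GPUs. As technology has advanced, transistors have become smaller and smaller. According to Moore's law, the number of transistors in an integrated circuit doubles roughly every two years. This exponential growth has driven exponential progress in computing. But there is a limit to how far transistors can shrink, and we will soon reach the threshold below which they stop working. In addition, advances in AI have made the demand for computing power more urgent than ever. The fundamental problem is that nature is random (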

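- Shanghai Jiao Tong University: Tool-Augmented Reasoning Agent

Title: SciMaster: Towards General-Purpose Scientific AI Agents, Part I. X-Master as Foundation: Can We Lead on Humanity's Last Exam? Source: arXiv, 2507.05241. Abstract: The rapid development of AI agents has inspired the long-held ambition of using them to accelerate scientific discovery. Achieving this goal requires a deep understanding of the frontier of human knowledge. Humanity's Last Exam (HLE) therefore provides, for eval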

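- 微算法科技's Frontier Exploration: Innovative Applications of Quantum Machine Learning Algorithms in Vision Tasks

MicroTech2025

quantum computing, algorithms

With information technology advancing rapidly, computer vision, an important branch of AI, is gradually reaching into every aspect of our lives. From autonomous driving to face recognition, from medical image analysis to security surveillance, computer vision has shown enormous application potential. However, as vision tasks grow more complex, traditional machine learning algorithms hit computational bottlenecks on large-scale, high-dimensional data. Against this background, quantum computing, a disruptive computing paradigm with distinctive parallelism and an exponentially growing computational space, offers a new way to address the problem. 微算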

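- udev Rule File Naming Conventions

奇妙之二进制

#embedded/Linux, linux, networking, operations

Contents: the meaning and conventions of udev rule file names, and why they start with digits. 1. Basic concepts of udev rule files. 2. Conventions and meaning of udev rule file names: 1. File name format 2. Meaning of each part of the name 3. File scan paths. 3. Why do rule file names usually start with a number? 1. Precise control over execution order 2. Easier categorization and management 3. Compatibility and standardization. 4. Examples and practical advice: 1. Common rule file examples 2. Naming advice for custom rules. 5. Summary. The meaning and conventions of udev rule file names, and why they start with digits. 1. udev rule files: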

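- China UnionPay Spends 100 Million Yuan on Hygon C86-Architecture Servers

信创新态势

Hygon chips, C86, domestic chips, Hygon Information

China UnionPay recently finalized a major procurement of domestic servers: server products based on the Hygon C86 architecture won the bid, with a contract value exceeding 100 million yuan. The C86 servers will support China UnionPay's virtualization, big data, AI, and R&D/testing workloads, further improving its transaction processing capacity, customer service efficiency, and information security. As a major bank card organization and piece of financial infrastructure in China, China UnionPay has an acceptance network in 183 countries and regions worldwide and more than 2,600 member institutions at home and abroad, and is one of the world's three major bank card brands. With this move, China UnionPay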

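- The Most Popular Embedding Model on Ollama: Introducing and Using nomic-embed-text

skywalk8163

artificial intelligence, embedding, servers

Introducing nomic-embed-text. nomic-embed-text is a sentence embedding model based on the SentenceTransformers library, designed for feature extraction and sentence similarity. It performs well across many tasks, particularly classification, retrieval, and clustering. Its main strength is producing high-quality sentence embeddings that place semantically similar sentences close together, which is why it does well at similarity computation and classification. This model was chosen because, when looking it up on the Ollama site, it turned out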

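- The Distinctive Strengths of 文心一言 (ERNIE Bot) in the AI Wave

The Distinctive Strengths of 文心一言 in the AI Wave: why is it the "main force" of AI in the Chinese market? Keywords: 文心一言, large AI models, Chinese language processing, multimodal fusion, industrial deployment, security and controllability, Baidu ERNIE. Abstract: In the global wave of large AI models, Baidu's 文心一言 (ERNIE Bot) has become one of the most competitive AI products in the Chinese market on the strength of four core advantages: it understands Chinese, handles multiple modalities, can be deployed in practice, and follows the rules. Using the metaphor of a "super brain", this article looks at four dimensions: Chinese understanding, multimodal capability, integration with the industrial ecosystem, and security and controllability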

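- Flink 2.0 DataStream Operators: The Full Picture

Edingbrugh.南空

big data, flink, artificial intelligence

In real-time stream processing, the operators of Apache Flink's DataStream API are the basic units for building a stream-processing pipeline. Based on Flink 2.0, this article focuses on the core concepts, classification, and advanced features of operators. 1. Core operator concepts: the "atomic operations" of stream processing. 1. Stream topology. Every Flink application can be abstracted as a directed acyclic graph (DAG) made up of source nodes (Source), operator nodes (Operator), and sink nodes (Sink); operators are connected by data streams (S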

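- The Algorithmic Labyrinth of Justice: Technical Paradoxes and Civilizational Trials as AI Reshapes the Judicial System

1. The digital migration of the courtroom. With Wisconsin courts in the United States adopting the COMPAS algorithm to assess defendants' risk of reoffending, and China's "smart court" system handling 120 million cases a year, the judicial system is undergoing a paradigm shift from codes carved in stone to rulings shaped by code. The core driver of this transition is the perennial tension between judicial efficiency and fairness: felony cases in the United States take an average of 18 months to try, and grassroots judges in China each close an average of 357 cases a year (6 times their German counterparts), while an algorithm can compare a million documents in 0.3 seconds. AI's penetration of the judiciary triggers three shifts: evidence analysis moves from experience-based inference to data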

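- [Python in Practice] No Weibo Needed: Get the Real-Time Trending List in a Single Email

一条coding

learning python through practice, artificial intelligence, python, linux, web scraping

❤️ Welcome to subscribe to the column "从实战学python" (Learning Python Through Practice), which implements hands-on cases in office automation, data visualization, AI, and other areas in Python: fun and useful! ❤️ Click here for an overview of more featured columns. Some people are gilded on the outside and rotten within, while others are as brilliant as a rainbow and make your heart skip. Preface. Hello everyone, I'm 一条. In daily life I'm not much for entertainment platforms: I don't have Douyin, Kuaishou, or Weibo on my phone, and I don't even look at WeChat Moments. But since I started blogging, there are some trending topics I simply have to ride. Hence this requirement: is it possible to have Wei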

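- Directory Classification in Practice for a Fiscal Business Knowledge Base

alankuo

artificial intelligence

The directory classification of a fiscal business knowledge base is central to orderly knowledge management, efficient retrieval, and precise application. It must reflect the specialized, systematic, and dynamic nature of fiscal work while balancing business logic, user needs, and management practice. The following covers classification principles, the core framework, and key points of practice, in light of the characteristics of fiscal business. 1. Core principles for directory classification. In practice, classification should follow these principles so that it is logically clear, practical, and efficient. Business relevance: build on core fiscal business processes and management domains so that the classification closely fits real work scen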

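- [Black Holes and Dark Particles] A World Without Light

comsci

Relativity and the rest of modern physics clearly share one shortcoming: they need light in order to calculate anything

But I believe that in our world and our plane of the universe there must exist worlds without light....

So in a world without light, where the laws of photons and other particles cannot be applied or examined, the speed-of-light-centered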

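- jQuery Lazy Load: Delayed Image Loading

aijuans

jquery

A jQuery-based lazy-loading plugin for images that loads an image only once the user has scrolled the page to it.

For pages with many images, lazy loading can noticeably improve page load speed.

Versions:

jQuery v1.4.4+

jQuery Lazy Load v1.7.2

Notes:

For lazy loading to actually take effect, the real image URL must be placed in the data-original attribute. If src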

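- Advantages of Using Jodd

Kai_Ge

jodd

1. Simplifies and unifies controllers: drop extends SimpleFormController and consistently use implements Controller.

2. Simplifies binding in JSP pages: no need to bind field by field.

3. Places no requirements on beans: any bean can serve as the formBean.

Brief usage guide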

- Converting a JPA Query to a Hibernate Query

120153216

Hibernate

public List<Map> getMapList(String hql,

Map map) {

org.hibernate.Query jpaQuery = entityManager.createQuery(hql);

if (null != map) {

for (String parameter : map.keySet()) {

jp

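- Adding MySQL/MariaDB Support to Django on Python 3

2002wmj

mariaDB

Current situation

First,

the default engine in [email protected] is django.db.backends.mysql. On Python 3, however, django.db.backends.mysql depends on MySQLdb[5], and MySQLdb is not compatible with Python 3, so another way is needed to keep using MySQL. MySQL's official solution

First, according to the MySQL documentation[3], since MySQL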

- Finding the Most IO-Intensive SQL in SQL Server

357029540

SQL Server

Returns the 50 statements that perform the most IO, along with their execution plans.

select top 50

(total_logical_reads/execution_count) as avg_logical_reads,

(total_logical_writes/execution_count) as avg_logical_writes,

(tot

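- Spring Unchecked Exceptions: The Official Definition

7454103

spring

If you have worked with Spring's transaction management, you need to understand Spring's unchecked exceptions, because by default Spring automatically rolls back the transaction for this kind of exception.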

public static boolean isCheckedException(Throwable ex)

{

return !(ex instanceof RuntimeExcep

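- mongoDB Getting-Started Guide and Examples

adminjun

java, mongodb, operations

I. Preparation

1. Download mongoDB

Download: http://www.mongodb.org/downloads

Choose the version that suits you

Related documentation: http://www.mongodb.org/display/DOCS/Tutorial

2. Install mongoDB

A. Without-extraction mode:

Open the downloaded mongoDB-xxx.zip, find the bin directory, and run mongod.exe to start the service; by def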

- CUDA 5 Release Candidate Now Available

aijuans

CUDA

The CUDA 5 Release Candidate is now available at http://developer.nvidia.com/cuda/cuda-pre-production. Now applicable to a broader set of algorithms, CUDA 5 has advanced fe

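- Essential Studio for WinRT Grid Control Review

Axiba

JavaScript, html5

Essential Studio for WinRT's UI controls include everything needed to develop commercial tablet applications, such as the fastest-running grid and chart on the market, maps, an RDL report viewer, a rich text viewer, diagrams, and more. The suite also includes a unique set of libraries for generating Excel, Word, and PDF files from WinRT applications. This post is a dedicated review of another powerful control in the suite: the grid control.

Grid control features

1.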

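- Getting Certificates or Certificate Chains Installed on Windows from Java

bewithme

windows

Sometimes you need to get the certificates or certificate chains installed on a Windows system, for example to build a Java keystore from a certificate.

For an explanation of certificate chains, see here.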

public static void main(String[] args) {

SunMSCAPI providerMSCAPI = new SunMSCAPI();

S

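- NoSQL Databases: Redis Database Management (set and zset Types)

bijian1013

redis, databases, NoSQL

4. The sets type

A Set is a collection: an unordered collection of string values. Sets are implemented with a hash table, so adding, removing, and looking up members are all O(1). Sets support union, intersection, and difference, which can be used to implement friend recommendations in a social network (SNS) and tagging for a blog.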
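The union, intersection, and difference operations described above can be sketched with Python's built-in `set` type, which is also hash-based. This is only an illustration of the idea, not Redis itself: the `friends` data and the helper names `common_friends`, `recommend_friends`, and `all_contacts` are hypothetical.

```python
# Hypothetical friend lists; plain Python sets stand in for Redis sets here.
friends = {
    "alice": {"bob", "carol", "dave"},
    "bob": {"alice", "carol", "erin"},
}


def common_friends(a: str, b: str) -> set:
    """Intersection: people that both users already know."""
    return friends[a] & friends[b]


def recommend_friends(user: str, other: str) -> set:
    """Difference: people `other` knows that `user` does not, excluding `user`."""
    return friends[other] - friends[user] - {user}


def all_contacts(a: str, b: str) -> set:
    """Union: everyone known to either user."""
    return friends[a] | friends[b]
```

For example, `recommend_friends("alice", "bob")` yields the people in bob's set who are not yet in alice's, which is the basic shape of a friend recommendation.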

sadd: add an element to the set named key

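- Catching Exceptions: When to Use Exception and When to Use Throwable

bingyingao

When to use Exception

try {

// may throw exceptions such as null pointer or array index out of bounds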

} catch (Exception e) {

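- [Kafka, Part 4] Kafka Pseudo-Distributed Installation

bit1129

kafka

The article at http://bit1129.iteye.com/blog/2174791 covered installing a single Kafka server. In Kafka, each Kafka server is called a broker. This article briefly covers pseudo-distributed installation and verification of Kafka on a single machine. 1. Installation steps

The approach to a pseudo-distributed Kafka installation is exactly the same as for a pseudo-distributed Zookeeper installation, only somewhat simpler than Zookeeper (no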

- Project Euler

bookjovi

haskell

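Project Euler is a site of mathematical problems to solve. It is designed in an interesting way: there are many problems, and until you submit the correct answer you cannot see a problem's overview or its discussion thread; you can only see how many people have solved it so far, and a higher count usually means an easier problem.

Let's look at problem 1: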

Add all the natural num

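- Java Collections Framework Study and Summary: ArrayDeque

BrokenDreams

Collections

Lists, stacks, and queues are three basic data structures. ArrayList and LinkedList, summarized earlier, can each be used as any of them, though because they are implemented differently, the efficiency of operations differs too.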
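As a rough sketch of one container serving as both a stack and a queue, here is the idea in Python using `collections.deque`. This is an analogue chosen for illustration, not the Java classes discussed in the post, and the helper names `drain_as_stack` and `drain_as_queue` are hypothetical.

```python
from collections import deque


def drain_as_stack(items):
    """Push everything, then pop from the same end: last in, first out."""
    d = deque(items)
    out = []
    while d:
        out.append(d.pop())  # remove from the right end
    return out


def drain_as_queue(items):
    """Append at one end, remove from the other: first in, first out."""
    d = deque(items)
    out = []
    while d:
        out.append(d.popleft())  # remove from the left end
    return out
```

Both ends of a deque support constant-time insertion and removal, which is why the same structure fits either role; an array-backed list used as a queue, by contrast, has to shift elements when removing from the front.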

This post looks at java.util.ArrayDeque. Judging from the name

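- Reading 《研磨设计模式》: Code Notes: Decorator Pattern

bylijinnan

java, design patterns

Disclaimer: this post is only for my own reference and understanding. For detailed analysis and the source code, please visit the original author's blog at http://chjavach.iteye.com/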

import java.io.BufferedOutputStream;

import java.io.DataOutputStream;

import java.io.FileOutputStream;

import java.io.Fi

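- Learning Maven (Part 1)

chenyu19891124

Maven, private repository

Learning any technology or tool takes time. In late May and early June I studied a few tools and set up maven + Hudson + nexus. I had only heard of maven before; along the way I set up a maven environment on my own machine, but I knew nothing about this powerful build tool. ant is also a build tool, but it is not as simple and convenient as maven. Put simply, maven is a tool that handles building, testing, packaging, and releasing from the command line, a series of func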

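- [Original] JWFD Workflow Engine Design: Node-Matching Search Algorithm (a First Solution to the Conditional Asynchronous Join Problem), Addendum

comsci

algorithms, work, PHP, search engines, embedded

This post describes a solution to a practical problem encountered in the design of the JWFD workflow engine. See the situation depicted in figure A2 of my post "带条件选择的并行汇聚路由问题" (http://comsci.iteye.com/blog/339756). I am publishing my solution for figure A2 here and welcome feedback

Node-matching search algorithm (an algorithm for the run-control parameters of conditional join points in standard symmetric flowcharts)

Problem to solve: given the branch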

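- Getting Yesterday's, Tomorrow's, or Earlier Dates with the Shell on Linux

daizj

linux, shell, previous years, yesterday, get previous months

On Linux, the date command can return yesterday, tomorrow, last month, next month, last year, and next year

# yesterday

date -d 'yesterday' # or date -d 'last day'

# tomorrow

date -d 'tomorrow' # or date -d 'next day'

# last month

date -d 'last month'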

#

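- Cloud Computing as I Understand It

dongwei_6688

cloud computing

When people first encounter a concept, they tend to look into what it means in order to form an intuitive understanding of it. Wikipedia defines "cloud computing" as follows: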

Cloud computing is a phrase used to describe a variety of computing co

- YII CMenu配置

dcj3sjt126com

yii

Adding id and class names to CMenu

We use the id and htmlOptions to accomplish this. Watch.

//in your view

$this->widget('zii.widgets.CMenu', array(

'id'=>'myMenu',

'items'=>$this-&g

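- Design Patterns: Static and Dynamic Proxies

come_for_dream

design patterns

Static and dynamic proxies

The proxy pattern is one of the more frequently used design patterns in Java development. Its idea is to separate the main business logic from related concerns. In a proxy design, a proxy subject operates on the real subject: the real subject carries out the concrete business operation, while the proxy subject handles the other related concerns. For example, before a delete operation we need to check whether the user is logged in; we can treat the delete as the main business and the login check as its related concern.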
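The delete-with-login-check example can be sketched as a static proxy. The post is about Java; the sketch below uses Python for brevity, and every name in it (`UserService`, `RealUserService`, `LoginCheckProxy`, the `logged_in` flag) is a hypothetical stand-in rather than code from the original article.

```python
from typing import Protocol


class UserService(Protocol):
    """Common interface shared by the real subject and the proxy."""

    def delete(self, user_id: int) -> str: ...


class RealUserService:
    """Real subject: performs only the main business operation."""

    def delete(self, user_id: int) -> str:
        return f"deleted user {user_id}"


class LoginCheckProxy:
    """Proxy subject: handles the related concern, then delegates."""

    def __init__(self, target: UserService, logged_in: bool) -> None:
        self._target = target
        self._logged_in = logged_in  # assumed to come from a session in real code

    def delete(self, user_id: int) -> str:
        # Related concern: refuse the call unless the user is logged in.
        if not self._logged_in:
            raise PermissionError("login required")
        # Main business: delegate to the real subject unchanged.
        return self._target.delete(user_id)
```

Callers depend only on the shared interface, so `LoginCheckProxy(RealUserService(), logged_in=True).delete(7)` behaves like the real service while the login check stays out of the business class.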

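- [Repost] Understanding JavaScript Series

gcc2ge

JavaScript

Understanding JavaScript 13: The Execution Model in Detail

Summary: "Understanding JavaScript 12: A First Look at the Execution Model" introduced the concepts of execution context and scope. This post analyzes in depth how an execution context is built and the relationship among execution contexts, function objects, and scope. Function execution environment. A simple piece of code: when the say method is called, the first step is to create its execution environment; while the environment is being created, a series of operations is performed in the order of definition: 1. First, it creates a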

- Subsets II

hcx2013

set

Given a collection of integers that might contain duplicates, nums, return all possible subsets.

Note:

Elements in a subset must be in non-descending order.

The solution set must not conta

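- New in Spring 4.1: Spring Cache Framework Enhancements

jinnianshilongnian

spring4

Contents

New in Spring 4.1: Overview

New in Spring 4.1: Spring Core and Others

New in Spring 4.1: Spring Cache Framework Enhancements

New in Spring 4.1: Exception Handling for Asynchronous Calls and Event Mechanisms

New in Spring 4.1: Script Initialization for Database Integration Tests

New in Spring 4.1: Spring MVC Enhancements

New in Spring 4.1: Page Automation Testing Framework Spring MVC T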

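- Running Commands with expect Nested in a Shell Script

liyonghui160com

I had long wanted to put expect operations inside a bash script so that I would not need to write two scripts. After an afternoon of tinkering I finally got somewhere, so here it is.

System: centos 5.x

1. Install expect first

yum -y install expect

2. Script contents: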

cat auto_svn.sh

#!/bin/bash

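- A Roundup of Practical Linux Commands

pda158

linux

0. Basic commands: a roundup of basic linux commands

1. Compress and extract

tar -zcvf a.tar.gz a # compress a into a.tar.gz

tar -zxvf a.tar.gz # extract a.tar.gz into a

2. vim summary

2.1 vim substitution :m,ns/word_1/word_2/gc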

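- 29 Tips for Independent Developers on the Road to Success

shoothao

independent development

Overview: this post collects things independent developers should pay attention to on the road to success. Readers interested in the commentary on each tip can follow the original link noted below.

Understand why you are going independent and what you want from it.

Keep up the good habit of making plans.

The first step is the hardest; the first order is key.

Develop a diverse set of business skills.

Deliver excellent service and quality.

Be careful and cautious.

Marketing is an essential skill.

Learn to be organized; orderly work is the most efficient.

"Independent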

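- Stack, Heap, and Memory Allocation in Java

uule

java

1. Stack and heap

1. Registers: the fastest storage, allocated by the compiler as needed; we cannot control them in our programs. 2. Stack: holds variables of primitive types and references to objects; the objects themselves are not on the stack but in the heap (objects created with new) or the constant pool (string constant objects live in the constant pool). 3. Heap: holds all objects created with new. 4. Static area: holds static members (defined with static). 5. Constant pool: holds string constants and primitive-type constants (public static f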
