[Learning Hadoop: MapReduce] 25. MR Data Cleaning Case Study (ETL)

Data cleaning (ETL): Extract-Transform-Load

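Before the core business MapReduce job runs, the data usually has to be cleaned first to remove records that do not meet the requirements. The cleaning step typically needs only a Mapper; no Reducer has to run.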

I. Data Cleaning in Practice: Simple Case

- Requirement

  Remove every entry from the website log that has 11 or fewer fields (each line is split on spaces).

- Input data

      58.177.135.108 - - [19/Sep/2013:06:19:56 +0000] "GET /data-scientist-problems/?cf_action=sync_comments&post_id=59 HTTP/1.1" 200 48 "http://blog.fens.me/data-scientist-problems/" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.65 Safari/537.36"
      111.192.165.229 - - [19/Sep/2013:06:20:16 +0000] "POST /wp-admin/admin-ajax.php HTTP/1.1" 200 95 "http://blog.fens.me/wp-admin/post.php?post=2445&action=edit&message=10" "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1500.95 Safari/537.36"
      58.177.135.108 - - [19/Sep/2013:06:20:33 +0000] "GET /favicon.ico HTTP/1.1" 200 0 "-" "Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) CriOS/30.0.1599.12 Mobile/11A465 Safari/8536.25"
      58.177.135.108 - - [19/Sep/2013:06:20:33 +0000] "GET /wp-content/uploads/2013/05/favicon.ico HTTP/1.1" 304 0 "-" "Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) CriOS/30.0.1599.12 Mobile/11A465 Safari/8536.25"
      58.177.135.108 - - [19/Sep/2013:06:20:52 +0000] "-" 400 0 "-" "-"
      163.177.71.12 - - [19/Sep/2013:06:21:14 +0000] "HEAD / HTTP/1.1" 200 20 "-" "DNSPod-Monitor/1.0"
      ......

- Expected output

  Every output line has more than 11 fields.

- Create the package: com.easysir.etl

创建

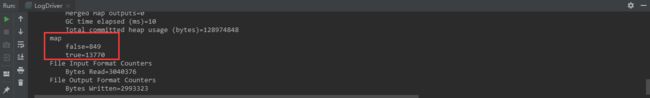

LogMapper类:package com.easysir.etl; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.NullWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Mapper; import java.io.IOException; public class LogMapper extends Mapper<LongWritable, Text, Text, NullWritable> { @Override protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException { // 1 获取一行 String line = value.toString(); // 2 解析数据 boolean result = parseLog(line, context); // 3 解析未通过 if (!result){ return; } // 3 解析通过 context.write(value, NullWritable.get()); } private boolean parseLog(String line, Context context){ String[] fields = line.split(" "); if (fields.length > 11){ // 实现计数器功能 context.getCounter("map", "true").increment(1); return true; }else { // 实现计数器功能 context.getCounter("map", "false").increment(1); return false; } } } -
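The field-count rule can be tried out without a Hadoop cluster. The following is a minimal sketch in plain Java; the class FieldCountFilter and its methods are hypothetical names introduced only for illustration, and the map of counts merely imitates the "map"/"true" and "map"/"false" counters used by the Mapper.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper, not part of the original project: it mirrors the
// field-count rule of parseLog without any Hadoop dependency.
class FieldCountFilter {

    // A line is kept only when it has MORE than this many fields.
    static final int FIELD_THRESHOLD = 11;

    // True when the line has more than 11 space-separated fields.
    static boolean isValid(String line) {
        return line.split(" ").length > FIELD_THRESHOLD;
    }

    // Tallies kept ("true") and dropped ("false") lines, imitating the counters.
    static Map<String, Integer> tally(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        counts.put("true", 0);
        counts.put("false", 0);
        for (String line : lines) {
            counts.merge(String.valueOf(isValid(line)), 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Two lines taken from the sample input above.
        List<String> lines = Arrays.asList(
            "58.177.135.108 - - [19/Sep/2013:06:20:52 +0000] \"-\" 400 0 \"-\" \"-\"",
            "163.177.71.12 - - [19/Sep/2013:06:21:14 +0000] \"HEAD / HTTP/1.1\" 200 20 \"-\" \"DNSPod-Monitor/1.0\"");
        System.out.println(tally(lines)); // prints {true=1, false=1}
    }
}
```

The first sample line splits into 10 fields and is dropped; the second splits into exactly 12 and is kept.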

- Create the LogDriver class:

      package com.easysir.etl;

      import org.apache.hadoop.conf.Configuration;
      import org.apache.hadoop.fs.Path;
      import org.apache.hadoop.io.NullWritable;
      import org.apache.hadoop.io.Text;
      import org.apache.hadoop.mapreduce.Job;
      import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
      import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

      public class LogDriver {

          public static void main(String[] args) throws Exception {
              // Set the input and output paths to match your own machine
              args = new String[] {
                  "E:\\idea-workspace\\mrWordCount\\input\\web.log",
                  "E:\\idea-workspace\\mrWordCount\\output"
              };

              // 1. Get the job instance
              Configuration conf = new Configuration();
              Job job = Job.getInstance(conf);

              // 2. Locate the jar through the driver class
              job.setJarByClass(LogDriver.class);

              // 3. Attach the Mapper
              job.setMapperClass(LogMapper.class);

              // 4. Set the final output types
              job.setOutputKeyClass(Text.class);
              job.setOutputValueClass(NullWritable.class);

              // Map-only job: set the number of reduce tasks to 0
              job.setNumReduceTasks(0);

              // 5. Set the input and output paths
              FileInputFormat.setInputPaths(job, new Path(args[0]));
              FileOutputFormat.setOutputPath(job, new Path(args[1]));

              // 6. Submit the job and exit with its status
              boolean result = job.waitForCompletion(true);
              System.exit(result ? 0 : 1);
          }
      }
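The driver overwrites args with paths that exist only on the author's machine. One possible alternative, sketched below in plain Java, is to use command-line arguments when they are supplied and fall back to local defaults otherwise. JobPaths and resolve are hypothetical names for illustration and are not part of the original project.

```java
// Hypothetical helper: prefers paths passed on the command line and
// falls back to local defaults when fewer than two are given.
class JobPaths {
    final String input;
    final String output;

    JobPaths(String input, String output) {
        this.input = input;
        this.output = output;
    }

    static JobPaths resolve(String[] args, String defaultInput, String defaultOutput) {
        if (args != null && args.length >= 2) {
            return new JobPaths(args[0], args[1]);
        }
        return new JobPaths(defaultInput, defaultOutput);
    }

    public static void main(String[] args) {
        // The defaults are the paths used in the driver above.
        JobPaths paths = resolve(args,
            "E:\\idea-workspace\\mrWordCount\\input\\web.log",
            "E:\\idea-workspace\\mrWordCount\\output");
        System.out.println("input  = " + paths.input);
        System.out.println("output = " + paths.output);
    }
}
```

In the driver, paths.input and paths.output would then take the place of args[0] and args[1].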

II. Data Cleaning in Practice: Complex Case

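- Requirement

  Identify and split the individual fields of the web access log, discard invalid records, and output the data that remains after the cleaning rules are applied.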

- Input data

      58.177.135.108 - - [19/Sep/2013:06:19:56 +0000] "GET /data-scientist-problems/?cf_action=sync_comments&post_id=59 HTTP/1.1" 200 48 "http://blog.fens.me/data-scientist-problems/" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.65 Safari/537.36"
      111.192.165.229 - - [19/Sep/2013:06:20:16 +0000] "POST /wp-admin/admin-ajax.php HTTP/1.1" 200 95 "http://blog.fens.me/wp-admin/post.php?post=2445&action=edit&message=10" "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1500.95 Safari/537.36"
      58.177.135.108 - - [19/Sep/2013:06:20:33 +0000] "GET /favicon.ico HTTP/1.1" 200 0 "-" "Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) CriOS/30.0.1599.12 Mobile/11A465 Safari/8536.25"
      58.177.135.108 - - [19/Sep/2013:06:20:33 +0000] "GET /wp-content/uploads/2013/05/favicon.ico HTTP/1.1" 304 0 "-" "Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) CriOS/30.0.1599.12 Mobile/11A465 Safari/8536.25"
      58.177.135.108 - - [19/Sep/2013:06:20:52 +0000] "-" 400 0 "-" "-"
      163.177.71.12 - - [19/Sep/2013:06:21:14 +0000] "HEAD / HTTP/1.1" 200 20 "-" "DNSPod-Monitor/1.0"
      ......

- Expected output

  Only valid records.

- Create the package: com.easysir.etl2

- Create the LogBean class, which holds the individual fields of a log record:

      package com.easysir.etl2;

      public class LogBean {

          private String remote_addr;      // Client IP address
          private String remote_user;      // Client user name; "-" means it is absent
          private String time_local;       // Access time and time zone
          private String request;          // Requested URL and HTTP protocol
          private String status;           // Response status; 200 means success
          private String body_bytes_sent;  // Size of the body sent to the client
          private String http_referer;     // Page the request was linked from
          private String http_user_agent;  // Client browser information
          private boolean valid = true;    // Whether the record is valid

          public String getRemote_addr() {
              return remote_addr;
          }

          public void setRemote_addr(String remote_addr) {
              this.remote_addr = remote_addr;
          }

          public String getRemote_user() {
              return remote_user;
          }

          public void setRemote_user(String remote_user) {
              this.remote_user = remote_user;
          }

          public String getTime_local() {
              return time_local;
          }

          public void setTime_local(String time_local) {
              this.time_local = time_local;
          }

          public String getRequest() {
              return request;
          }

          public void setRequest(String request) {
              this.request = request;
          }

          public String getStatus() {
              return status;
          }

          public void setStatus(String status) {
              this.status = status;
          }

          public String getBody_bytes_sent() {
              return body_bytes_sent;
          }

          public void setBody_bytes_sent(String body_bytes_sent) {
              this.body_bytes_sent = body_bytes_sent;
          }

          public String getHttp_referer() {
              return http_referer;
          }

          public void setHttp_referer(String http_referer) {
              this.http_referer = http_referer;
          }

          public String getHttp_user_agent() {
              return http_user_agent;
          }

          public void setHttp_user_agent(String http_user_agent) {
              this.http_user_agent = http_user_agent;
          }

          public boolean isValid() {
              return valid;
          }

          public void setValid(boolean valid) {
              this.valid = valid;
          }

          // Joins all fields with the control character \001 (U+0001)
          @Override
          public String toString() {
              StringBuilder sb = new StringBuilder();
              sb.append(this.valid);
              sb.append("\001").append(this.remote_addr);
              sb.append("\001").append(this.remote_user);
              sb.append("\001").append(this.time_local);
              sb.append("\001").append(this.request);
              sb.append("\001").append(this.status);
              sb.append("\001").append(this.body_bytes_sent);
              sb.append("\001").append(this.http_referer);
              sb.append("\001").append(this.http_user_agent);
              return sb.toString();
          }
      }
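LogBean.toString() separates its fields with "\001", the Java octal escape for the control character U+0001 (Ctrl-A), which does not normally occur in log text. A downstream consumer can split a cleaned record on the same character. The sketch below illustrates this in plain Java; the class CtrlADelimiterDemo and its methods are hypothetical, and the record is built by hand from the last sample line rather than taken from real job output.

```java
// Hypothetical demo: joins fields with U+0001 as LogBean.toString() does,
// then splits the record back into its fields.
class CtrlADelimiterDemo {

    // "\001" is an octal escape; it denotes the single character U+0001.
    static final String SEP = "\001";

    static String join(String... fields) {
        return String.join(SEP, fields);
    }

    // The limit -1 keeps trailing empty fields instead of discarding them.
    static String[] split(String record) {
        return record.split(SEP, -1);
    }

    public static void main(String[] args) {
        // Field order follows toString(): valid, remote_addr, remote_user,
        // time_local, request, status, body_bytes_sent, http_referer,
        // http_user_agent.
        String record = join("true", "163.177.71.12", "-", "19/Sep/2013:06:21:14",
            "/", "200", "20", "\"-\"", "\"DNSPod-Monitor/1.0\"");
        String[] fields = split(record);
        System.out.println(fields.length + " fields, status=" + fields[5]);
        // prints: 9 fields, status=200
    }
}
```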

- Create the LogMapper class:

      package com.easysir.etl2;

      import org.apache.hadoop.io.LongWritable;
      import org.apache.hadoop.io.NullWritable;
      import org.apache.hadoop.io.Text;
      import org.apache.hadoop.mapreduce.Mapper;

      import java.io.IOException;

      public class LogMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

          // Reused output key, so no new Text is allocated per record
          private final Text k = new Text();

          @Override
          protected void map(LongWritable key, Text value, Context context)
                  throws IOException, InterruptedException {
              // 1. Read one line
              String line = value.toString();

              // 2. Parse the line and skip it if it is invalid
              LogBean bean = parseLog(line);
              if (!bean.isValid()) {
                  return;
              }

              k.set(bean.toString());

              // 3. Write the cleaned record
              context.write(k, NullWritable.get());
          }

          // Parses one log line into a LogBean
          private LogBean parseLog(String line) {
              LogBean logBean = new LogBean();

              // 1. Split on spaces
              String[] fields = line.split(" ");

              if (fields.length > 11) {
                  // 2. Populate the bean
                  logBean.setRemote_addr(fields[0]);
                  logBean.setRemote_user(fields[1]);
                  logBean.setTime_local(fields[3].substring(1));
                  logBean.setRequest(fields[6]);
                  logBean.setStatus(fields[8]);
                  logBean.setBody_bytes_sent(fields[9]);
                  logBean.setHttp_referer(fields[10]);

                  if (fields.length > 12) {
                      logBean.setHttp_user_agent(fields[11] + " " + fields[12]);
                  } else {
                      logBean.setHttp_user_agent(fields[11]);
                  }

                  // A status of 400 or above is an HTTP error;
                  // a non-numeric status is also treated as invalid
                  try {
                      if (Integer.parseInt(logBean.getStatus()) >= 400) {
                          logBean.setValid(false);
                      }
                  } catch (NumberFormatException e) {
                      logBean.setValid(false);
                  }
              } else {
                  logBean.setValid(false);
              }

              return logBean;
          }
      }
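The two validity rules of the complex case (more than 11 fields, and a status below 400) can be checked in isolation. The following is a minimal sketch in plain Java with no Hadoop dependency. AccessLogCheck is a hypothetical name, and the 404 line and the non-numeric-status line in main are invented for illustration; they are not part of the sample input.

```java
// Hypothetical helper: applies the same two rules as parseLog above.
class AccessLogCheck {

    // Valid means: more than 11 space-separated fields AND a numeric
    // status (field index 8) that is below 400.
    static boolean isValid(String line) {
        String[] fields = line.split(" ");
        if (fields.length <= 11) {
            return false;
        }
        try {
            return Integer.parseInt(fields[8]) < 400;
        } catch (NumberFormatException e) {
            return false; // non-numeric status, treated as invalid here
        }
    }

    // Access time without the leading '[' (field index 3).
    // Assumes the line has at least four space-separated fields.
    static String timeLocal(String line) {
        return line.split(" ")[3].substring(1);
    }

    public static void main(String[] args) {
        String ok = "163.177.71.12 - - [19/Sep/2013:06:21:14 +0000] "
            + "\"HEAD / HTTP/1.1\" 200 20 \"-\" \"DNSPod-Monitor/1.0\"";
        // Invented lines: same shape as the sample, different status values.
        String notFound = ok.replace(" 200 ", " 404 ");
        String garbled = ok.replace(" 200 ", " abc ");

        System.out.println(isValid(ok));       // prints true
        System.out.println(isValid(notFound)); // prints false
        System.out.println(isValid(garbled));  // prints false
        System.out.println(timeLocal(ok));     // prints 19/Sep/2013:06:21:14
    }
}
```

The sample line with status 400 is already rejected by the field-count rule, because it splits into only 10 fields.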

- Create the LogDriver class:

      package com.easysir.etl2;

      import org.apache.hadoop.conf.Configuration;
      import org.apache.hadoop.fs.Path;
      import org.apache.hadoop.io.NullWritable;
      import org.apache.hadoop.io.Text;
      import org.apache.hadoop.mapreduce.Job;
      import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
      import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

      public class LogDriver {

          public static void main(String[] args) throws Exception {
              // Set the input and output paths to match your own machine
              args = new String[] {
                  "E:\\idea-workspace\\mrWordCount\\input\\web.log",
                  "E:\\idea-workspace\\mrWordCount\\output"
              };

              // 1. Get the job instance
              Configuration conf = new Configuration();
              Job job = Job.getInstance(conf);

              // 2. Locate the jar through the driver class
              job.setJarByClass(LogDriver.class);

              // 3. Attach the Mapper
              job.setMapperClass(LogMapper.class);

              // 4. Set the final output types
              job.setOutputKeyClass(Text.class);
              job.setOutputValueClass(NullWritable.class);

              // Map-only job, as in the simple case: cleaning needs no Reducer
              job.setNumReduceTasks(0);

              // 5. Set the input and output paths
              FileInputFormat.setInputPaths(job, new Path(args[0]));
              FileOutputFormat.setOutputPath(job, new Path(args[1]));

              // 6. Submit the job and exit with its status
              boolean result = job.waitForCompletion(true);
              System.exit(result ? 0 : 1);
          }
      }