Complete code for image classification or detection -- building the AlexNet model -- TensorFlow implementation

The code comes from the Bilibili uploader 霹雳吧啦Wz.

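This code classifies images: AlexNet predicts one class label per image and does not output bounding boxes, so on its own it is a classifier rather than a detector. Just prepare your own dataset and it will run!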

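There are three files: building the model (model.py), training the model (train.py for CPU or trainGPU.py for GPU), and prediction/classification (predict.py).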

To classify your own data, change the following:

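1. The original code uses num_classes=5, meaning the images are split into five classes. Set it to the number of classes in your dataset.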

2. In train.py:

data_root = os.path.abspath(os.path.join(os.getcwd(), "../..")) # get data root path

image_path = os.path.join(data_root, "data_set", "flower_data") # flower data set path

train_dir = os.path.join(image_path, "train")

validation_dir = os.path.join(image_path, "val")

# If this is unclear, you can replace it with absolute paths:

train_dir = 'D:/train'  # absolute path of the training set

validation_dir = 'D:/val'  # absolute path of the validation set
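Both directories are expected to contain one subfolder per class, and num_classes has to equal the number of those subfolders. A minimal sketch for checking this before training; `find_classes` and `check_dataset` are hypothetical helpers, not part of the original code:

```python
import os


def find_classes(data_dir):
    """Sorted names of the class subfolders in data_dir (hypothetical helper)."""
    if not os.path.isdir(data_dir):
        raise FileNotFoundError("cannot find {}".format(data_dir))
    return sorted(name for name in os.listdir(data_dir)
                  if os.path.isdir(os.path.join(data_dir, name)))


def check_dataset(train_dir, validation_dir):
    """Verify train/val share the same class folders and return their count (num_classes)."""
    train_classes = find_classes(train_dir)
    val_classes = find_classes(validation_dir)
    if train_classes != val_classes:
        raise ValueError("class folders differ: {} vs {}".format(train_classes, val_classes))
    return len(train_classes)
```

Usage, assuming the absolute paths above: `num_classes = check_dataset('D:/train', 'D:/val')`, then pass that value as `num_classes` when creating the model.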

1. (Build the model) model.py

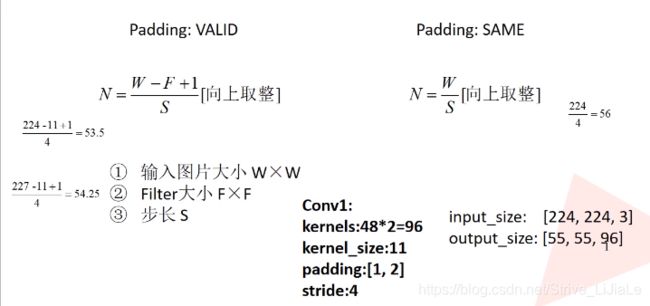

First convolutional layer: input (227,227,3), 48 kernels of size 11*11, stride S=4, VALID padding by default (P=0). N=(W-F+2P)/S+1=(227-11)/4+1=55, so the output is (55,55,48).
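The formula can be checked with a few lines of Python. For VALID padding Keras rounds the division down, which is why the model code pads the 224×224 input to 227×227 first: with 227 the division comes out exact. A small sketch (`conv_output_size` is a hypothetical helper name):

```python
def conv_output_size(w, f, s=1, p=0):
    """N = (W - F + 2P) / S + 1; Keras 'valid' padding rounds the division down."""
    return (w - f + 2 * p) // s + 1


print(conv_output_size(227, 11, s=4))  # (227 - 11) / 4 = 54 exactly, so N = 55
print(conv_output_size(224, 11, s=4))  # (224 - 11) / 4 = 53.25, rounded down, so N = 54
print(conv_output_size(55, 3, s=2))    # first pooling layer: N = 27
```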

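First pooling layer: input (55,55,48). Pooling leaves the number of channels unchanged at 48. Pool size 3*3, stride S=2, VALID padding by default, so N=(W-F+2P)/S+1=(55-3)/2+1=27 and the output is (27,27,48).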

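Second convolutional layer: input (27,27,48), 128 kernels of size 5*5, SAME padding, S=1. With SAME padding N=W/S (rounded up), so with S=1 the height and width are unchanged and the output is (27,27,128).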
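With SAME padding the output size depends only on the stride; TensorFlow then adds just enough zeros for the last window to fit, placing any odd extra zero on the bottom/right. A sketch of that rule (`same_padding` is a hypothetical helper name):

```python
import math


def same_padding(w, f, s=1):
    """Output size and zero padding (before, after) for TensorFlow-style 'same' padding."""
    out = math.ceil(w / s)                     # 'same': N = ceil(W / S); kernel size does not matter
    pad_total = max((out - 1) * s + f - w, 0)  # zeros needed so the last window fits
    pad_before = pad_total // 2
    pad_after = pad_total - pad_before         # an odd extra zero goes after (bottom/right)
    return out, pad_before, pad_after


print(same_padding(27, 5))  # second conv layer: (27, 2, 2)
print(same_padding(13, 3))  # third to fifth conv layers: (13, 1, 1)
```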

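Second pooling layer: input (27,27,128). The number of channels stays at 128. Pool size 3*3, stride S=2, VALID padding by default, so N=(W-F+2P)/S+1=(27-3)/2+1=13 and the output is (13,13,128).

Third convolutional layer: input (13,13,128), 192 kernels of size 3*3, SAME padding, S=1, so N=W/S=W, height and width are unchanged, output (13,13,192).

Fourth convolutional layer: input (13,13,192), 192 kernels of size 3*3, SAME padding, S=1, so N=W/S=W, height and width are unchanged, output (13,13,192).

Fifth convolutional layer: input (13,13,192), 128 kernels of size 3*3, SAME padding, S=1, so N=W/S=W, height and width are unchanged, output (13,13,128).

Third pooling layer: input (13,13,128). The number of channels stays at 128. Pool size 3*3, stride S=2, VALID padding by default, so N=(W-F+2P)/S+1=(13-3)/2+1=6 and the output is (6,6,128).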

Flatten: 6*6*128 = 4608 values
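The flattened size determines how many weights the first fully connected layer needs. A Dense layer has inputs × outputs weights plus one bias per output, so the classifier's parameter counts can be worked out by hand; these should match the "Param #" column of `model.summary()` for AlexNet_v1 with num_classes=5 (`dense_params` is a hypothetical helper name):

```python
def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected (Dense) layer."""
    return n_in * n_out + n_out


flat = 6 * 6 * 128              # 4608 features after Flatten
fc1 = dense_params(flat, 2048)  # 4608 * 2048 + 2048 = 9,439,232
fc2 = dense_params(2048, 2048)  # 2048 * 2048 + 2048 = 4,196,352
fc3 = dense_params(2048, 5)     # 2048 * 5 + 5 = 10,245 (5 classes, as in the flower example)
print(flat, fc1, fc2, fc3)
```

Most of the model's parameters sit in the first Dense layer, which is one reason the classifier uses Dropout.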

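Dropout: randomly drop 20% of the neurons

First fully connected layer: 2048 nodes, ReLU activation, output 2048

Dropout: randomly drop 20% of the neurons

Second fully connected layer: 2048 nodes, ReLU activation, output 2048

Third fully connected layer: num_classes nodes (the number of classes in the dataset, e.g. 5 for the flower dataset used here), output num_classes

A softmax function turns the outputs into a probability distribution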
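Softmax exponentiates each score and divides by the sum, so the outputs are positive and add up to 1. Because the model already ends with a Softmax layer, the training scripts use `CategoricalCrossentropy(from_logits=False)`. A NumPy sketch of the computation (the scores below are made up):

```python
import numpy as np


def softmax(logits):
    """Convert raw class scores into a probability distribution (numerically stable)."""
    z = np.asarray(logits, dtype=np.float64)
    z = z - z.max(axis=-1, keepdims=True)  # subtracting the max avoids overflow and does not change the result
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


probs = softmax([2.0, 1.0, 0.1])
print(probs, probs.sum())
```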

Model code

from tensorflow.keras import layers, models, Model, Sequential


def AlexNet_v1(im_height=224, im_width=224, num_classes=1000):
    # TensorFlow tensors use NHWC ordering (batch, height, width, channels)
    input_image = layers.Input(shape=(im_height, im_width, 3), dtype="float32")  # output(None, 224, 224, 3)
    x = layers.ZeroPadding2D(((1, 2), (1, 2)))(input_image)  # output(None, 227, 227, 3)
    x = layers.Conv2D(48, kernel_size=11, strides=4, activation="relu")(x)  # output(None, 55, 55, 48)
    x = layers.MaxPool2D(pool_size=3, strides=2)(x)  # output(None, 27, 27, 48)
    x = layers.Conv2D(128, kernel_size=5, padding="same", activation="relu")(x)  # output(None, 27, 27, 128)
    x = layers.MaxPool2D(pool_size=3, strides=2)(x)  # output(None, 13, 13, 128)
    x = layers.Conv2D(192, kernel_size=3, padding="same", activation="relu")(x)  # output(None, 13, 13, 192)
    x = layers.Conv2D(192, kernel_size=3, padding="same", activation="relu")(x)  # output(None, 13, 13, 192)
    x = layers.Conv2D(128, kernel_size=3, padding="same", activation="relu")(x)  # output(None, 13, 13, 128)
    x = layers.MaxPool2D(pool_size=3, strides=2)(x)  # output(None, 6, 6, 128)

    x = layers.Flatten()(x)  # output(None, 6*6*128)
    x = layers.Dropout(0.2)(x)
    x = layers.Dense(2048, activation="relu")(x)  # output(None, 2048)
    x = layers.Dropout(0.2)(x)
    x = layers.Dense(2048, activation="relu")(x)  # output(None, 2048)
    x = layers.Dense(num_classes)(x)  # output(None, num_classes)
    predict = layers.Softmax()(x)  # class probabilities

    model = models.Model(inputs=input_image, outputs=predict)
    return model


class AlexNet_v2(Model):
    def __init__(self, num_classes=1000):
        super(AlexNet_v2, self).__init__()
        self.features = Sequential([
            layers.ZeroPadding2D(((1, 2), (1, 2))),  # output(None, 227, 227, 3)
            layers.Conv2D(48, kernel_size=11, strides=4, activation="relu"),  # output(None, 55, 55, 48)
            layers.MaxPool2D(pool_size=3, strides=2),  # output(None, 27, 27, 48)
            layers.Conv2D(128, kernel_size=5, padding="same", activation="relu"),  # output(None, 27, 27, 128)
            layers.MaxPool2D(pool_size=3, strides=2),  # output(None, 13, 13, 128)
            layers.Conv2D(192, kernel_size=3, padding="same", activation="relu"),  # output(None, 13, 13, 192)
            layers.Conv2D(192, kernel_size=3, padding="same", activation="relu"),  # output(None, 13, 13, 192)
            layers.Conv2D(128, kernel_size=3, padding="same", activation="relu"),  # output(None, 13, 13, 128)
            layers.MaxPool2D(pool_size=3, strides=2)])  # output(None, 6, 6, 128)

        self.flatten = layers.Flatten()
        self.classifier = Sequential([
            layers.Dropout(0.2),
            layers.Dense(2048, activation="relu"),  # output(None, 2048)
            layers.Dropout(0.2),
            layers.Dense(2048, activation="relu"),  # output(None, 2048)
            layers.Dense(num_classes),  # output(None, num_classes)
            layers.Softmax()
        ])

    def call(self, inputs, **kwargs):
        x = self.features(inputs)
        x = self.flatten(x)
        x = self.classifier(x)
        return x
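The `# output(...)` comments in the feature extractor can be verified without TensorFlow by walking the layer list and applying the VALID and SAME size rules in turn. A pure-Python sketch that assumes square inputs; the `FEATURE_LAYERS` table and `trace_shapes` function are hypothetical and simply restate the layer settings above:

```python
import math

# (name, padding, kernel size, stride, output channels) for the feature extractor above
FEATURE_LAYERS = [
    ("conv1", "valid", 11, 4, 48),
    ("pool1", "valid", 3, 2, 48),
    ("conv2", "same", 5, 1, 128),
    ("pool2", "valid", 3, 2, 128),
    ("conv3", "same", 3, 1, 192),
    ("conv4", "same", 3, 1, 192),
    ("conv5", "same", 3, 1, 128),
    ("pool3", "valid", 3, 2, 128),
]


def trace_shapes(size=224, pad=(1, 2)):
    """Return (name, height/width, channels) after each layer, assuming square inputs."""
    w = size + pad[0] + pad[1]  # ZeroPadding2D(((1, 2), (1, 2))): 224 -> 227
    shapes = [("zero_pad", w, 3)]
    for name, padding, k, s, c in FEATURE_LAYERS:
        if padding == "valid":
            w = (w - k) // s + 1  # N = (W - F) / S + 1, rounded down
        else:
            w = math.ceil(w / s)  # 'same': N = ceil(W / S)
        shapes.append((name, w, c))
    return shapes


for name, w, c in trace_shapes():
    print("{:9s} -> ({}, {}, {})".format(name, w, w, c))
```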

2. train.py (training, CPU version)

from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt
from model import AlexNet_v1, AlexNet_v2
import tensorflow as tf
import json
import os


def main():
    data_root = os.path.abspath(os.path.join(os.getcwd(), "../.."))  # get data root path
    image_path = os.path.join(data_root, "data_set", "flower_data")  # flower data set path
    train_dir = os.path.join(image_path, "train")
    validation_dir = os.path.join(image_path, "val")
    assert os.path.exists(train_dir), "cannot find {}".format(train_dir)
    assert os.path.exists(validation_dir), "cannot find {}".format(validation_dir)

    # create directory for saving weights
    if not os.path.exists("save_weights"):
        os.makedirs("save_weights")

    im_height = 224
    im_width = 224
    batch_size = 32
    epochs = 10

    # data generator with data augmentation
    train_image_generator = ImageDataGenerator(rescale=1. / 255,
                                               horizontal_flip=True)
    validation_image_generator = ImageDataGenerator(rescale=1. / 255)

    train_data_gen = train_image_generator.flow_from_directory(directory=train_dir,
                                                               batch_size=batch_size,
                                                               shuffle=True,
                                                               target_size=(im_height, im_width),
                                                               class_mode='categorical')
    total_train = train_data_gen.n

    # get class dict
    class_indices = train_data_gen.class_indices

    # swap keys and values of the dict (index -> class name)
    inverse_dict = dict((val, key) for key, val in class_indices.items())

    # write dict into json file
    json_str = json.dumps(inverse_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

    val_data_gen = validation_image_generator.flow_from_directory(directory=validation_dir,
                                                                  batch_size=batch_size,
                                                                  shuffle=False,
                                                                  target_size=(im_height, im_width),
                                                                  class_mode='categorical')
    total_val = val_data_gen.n
    print("using {} images for training, {} images for validation.".format(total_train,
                                                                           total_val))

    # sample_training_images, sample_training_labels = next(train_data_gen)  # label is one-hot coding
    #
    # # This function will plot images in the form of a grid with 1 row
    # # and 5 columns where images are placed in each column.
    # def plotImages(images_arr):
    #     fig, axes = plt.subplots(1, 5, figsize=(20, 20))
    #     axes = axes.flatten()
    #     for img, ax in zip(images_arr, axes):
    #         ax.imshow(img)
    #         ax.axis('off')
    #     plt.tight_layout()
    #     plt.show()
    #
    #
    # plotImages(sample_training_images[:5])

    model = AlexNet_v1(im_height=im_height, im_width=im_width, num_classes=5)
    # model = AlexNet_v2(num_classes=5)
    # model.build((batch_size, 224, 224, 3))  # when using subclass model
    model.summary()

    # using keras high level api for training
    model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=0.0005),
                  loss=tf.keras.losses.CategoricalCrossentropy(from_logits=False),
                  metrics=["accuracy"])

    callbacks = [tf.keras.callbacks.ModelCheckpoint(filepath='./save_weights/myAlex.h5',
                                                    save_best_only=True,
                                                    save_weights_only=True,
                                                    monitor='val_loss')]

    # TensorFlow 2.1 recommends using fit (instead of fit_generator)
    history = model.fit(x=train_data_gen,
                        steps_per_epoch=total_train // batch_size,
                        epochs=epochs,
                        validation_data=val_data_gen,
                        validation_steps=total_val // batch_size,
                        callbacks=callbacks)

    # plot loss and accuracy curves
    history_dict = history.history
    train_loss = history_dict["loss"]
    train_accuracy = history_dict["accuracy"]
    val_loss = history_dict["val_loss"]
    val_accuracy = history_dict["val_accuracy"]

    # figure 1
    plt.figure()
    plt.plot(range(epochs), train_loss, label='train_loss')
    plt.plot(range(epochs), val_loss, label='val_loss')
    plt.legend()
    plt.xlabel('epochs')
    plt.ylabel('loss')

    # figure 2
    plt.figure()
    plt.plot(range(epochs), train_accuracy, label='train_accuracy')
    plt.plot(range(epochs), val_accuracy, label='val_accuracy')
    plt.legend()
    plt.xlabel('epochs')
    plt.ylabel('accuracy')
    plt.show()

    # history = model.fit_generator(generator=train_data_gen,
    #                               steps_per_epoch=total_train // batch_size,
    #                               epochs=epochs,
    #                               validation_data=val_data_gen,
    #                               validation_steps=total_val // batch_size,
    #                               callbacks=callbacks)

    # # using keras low level api for training
    # loss_object = tf.keras.losses.CategoricalCrossentropy(from_logits=False)
    # optimizer = tf.keras.optimizers.Adam(learning_rate=0.0005)
    #
    # train_loss = tf.keras.metrics.Mean(name='train_loss')
    # train_accuracy = tf.keras.metrics.CategoricalAccuracy(name='train_accuracy')
    #
    # test_loss = tf.keras.metrics.Mean(name='test_loss')
    # test_accuracy = tf.keras.metrics.CategoricalAccuracy(name='test_accuracy')
    #
    #
    # @tf.function
    # def train_step(images, labels):
    #     with tf.GradientTape() as tape:
    #         predictions = model(images, training=True)
    #         loss = loss_object(labels, predictions)
    #     gradients = tape.gradient(loss, model.trainable_variables)
    #     optimizer.apply_gradients(zip(gradients, model.trainable_variables))
    #
    #     train_loss(loss)
    #     train_accuracy(labels, predictions)
    #
    #
    # @tf.function
    # def test_step(images, labels):
    #     predictions = model(images, training=False)
    #     t_loss = loss_object(labels, predictions)
    #
    #     test_loss(t_loss)
    #     test_accuracy(labels, predictions)
    #
    #
    # best_test_loss = float('inf')
    # for epoch in range(1, epochs+1):
    #     train_loss.reset_states()  # clear history info
    #     train_accuracy.reset_states()  # clear history info
    #     test_loss.reset_states()  # clear history info
    #     test_accuracy.reset_states()  # clear history info
    #     for step in range(total_train // batch_size):
    #         images, labels = next(train_data_gen)
    #         train_step(images, labels)
    #
    #     for step in range(total_val // batch_size):
    #         test_images, test_labels = next(val_data_gen)
    #         test_step(test_images, test_labels)
    #
    #     template = 'Epoch {}, Loss: {}, Accuracy: {}, Test Loss: {}, Test Accuracy: {}'
    #     print(template.format(epoch,
    #                           train_loss.result(),
    #                           train_accuracy.result() * 100,
    #                           test_loss.result(),
    #                           test_accuracy.result() * 100))
    #     if test_loss.result() < best_test_loss:
    #         best_test_loss = test_loss.result()  # remember the best validation loss so far
    #         model.save_weights("./save_weights/myAlex.ckpt", save_format='tf')


if __name__ == '__main__':
    main()

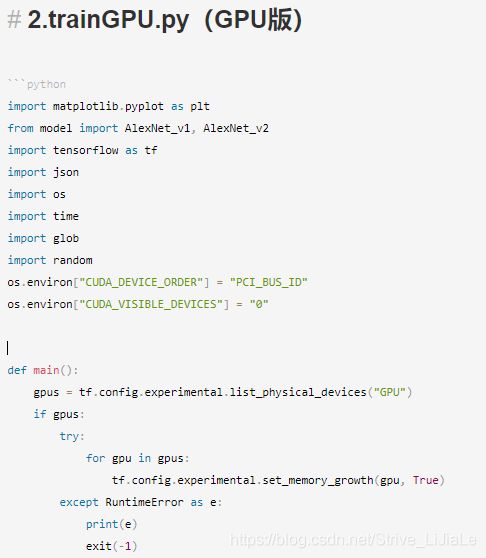
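`flow_from_directory` assigns label indices to the class subfolders in alphanumeric order, and the script saves the inverted mapping (index -> class name) as JSON. One detail matters later: JSON object keys are always strings, so after reloading, the index has to be looked up as a string, which is why predict.py uses `class_indict[str(predict_class)]`. A pure-Python sketch of the round trip (`make_index_json` is a hypothetical helper, and the class names are only an example):

```python
import json


def make_index_json(class_names):
    """Mimic the class_indices -> inverse dict -> JSON step of train.py (hypothetical helper)."""
    class_indices = {name: i for i, name in enumerate(sorted(class_names))}  # alphanumeric order
    inverse_dict = {idx: name for name, idx in class_indices.items()}
    return json.dumps(inverse_dict, indent=4)


json_str = make_index_json(["tulips", "daisy", "roses", "sunflowers", "dandelion"])
loaded = json.loads(json_str)
print(loaded["0"], loaded["4"])  # daisy tulips
```

Keeping the order sorted also makes the labels reproducible; `os.listdir` on its own returns entries in arbitrary order.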

2. trainGPU.py (training, GPU version)

import matplotlib.pyplot as plt
from model import AlexNet_v1, AlexNet_v2
import tensorflow as tf
import json
import os
import time
import glob
import random

os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
os.environ["CUDA_VISIBLE_DEVICES"] = "0"


def main():
    # allocate GPU memory on demand instead of reserving all of it up front
    gpus = tf.config.experimental.list_physical_devices("GPU")
    if gpus:
        try:
            for gpu in gpus:
                tf.config.experimental.set_memory_growth(gpu, True)
        except RuntimeError as e:
            print(e)
            exit(-1)

    data_root = os.path.abspath(os.path.join(os.getcwd(), "../.."))  # get data root path
    image_path = os.path.join(data_root, "data_set", "flower_data")  # flower data set path
    train_dir = os.path.join(image_path, "train")
    validation_dir = os.path.join(image_path, "val")
    assert os.path.exists(train_dir), "cannot find {}".format(train_dir)
    assert os.path.exists(validation_dir), "cannot find {}".format(validation_dir)

    # create directory for saving weights
    if not os.path.exists("save_weights"):
        os.makedirs("save_weights")

    im_height = 224
    im_width = 224
    batch_size = 32
    epochs = 10

    # class dict (sorted, because os.listdir returns entries in arbitrary order)
    data_class = sorted(cla for cla in os.listdir(train_dir) if os.path.isdir(os.path.join(train_dir, cla)))
    class_num = len(data_class)
    class_dict = dict((value, index) for index, value in enumerate(data_class))

    # reverse value and key of dict
    inverse_dict = dict((val, key) for key, val in class_dict.items())
    # write dict into json file
    json_str = json.dumps(inverse_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

    # load train images list (label = name of the parent folder)
    train_image_list = glob.glob(train_dir + "/*/*.jpg")
    random.shuffle(train_image_list)
    train_num = len(train_image_list)
    assert train_num > 0, "cannot find any .jpg file in {}".format(train_dir)
    train_label_list = [class_dict[path.split(os.path.sep)[-2]] for path in train_image_list]

    # load validation images list
    val_image_list = glob.glob(validation_dir + "/*/*.jpg")
    random.shuffle(val_image_list)
    val_num = len(val_image_list)
    assert val_num > 0, "cannot find any .jpg file in {}".format(validation_dir)
    val_label_list = [class_dict[path.split(os.path.sep)[-2]] for path in val_image_list]

    print("using {} images for training, {} images for validation.".format(train_num,
                                                                           val_num))

    def process_path(img_path, label):
        label = tf.one_hot(label, depth=class_num)
        image = tf.io.read_file(img_path)
        image = tf.image.decode_jpeg(image, channels=3)  # force 3 channels (RGB) even for grayscale JPEGs
        image = tf.image.convert_image_dtype(image, tf.float32)  # uint8 -> float32 in [0, 1]
        image = tf.image.resize(image, [im_height, im_width])
        return image, label

    AUTOTUNE = tf.data.experimental.AUTOTUNE

    # load train dataset
    train_dataset = tf.data.Dataset.from_tensor_slices((train_image_list, train_label_list))
    train_dataset = train_dataset.shuffle(buffer_size=train_num)\
                                 .map(process_path, num_parallel_calls=AUTOTUNE)\
                                 .repeat().batch(batch_size).prefetch(AUTOTUNE)

    # load validation dataset
    val_dataset = tf.data.Dataset.from_tensor_slices((val_image_list, val_label_list))
    val_dataset = val_dataset.map(process_path, num_parallel_calls=AUTOTUNE)\
                             .repeat().batch(batch_size)

    # instantiate the model (output size must match the depth of the one-hot labels)
    model = AlexNet_v1(im_height=im_height, im_width=im_width, num_classes=class_num)
    # model = AlexNet_v2(num_classes=class_num)
    # model.build((batch_size, 224, 224, 3))  # when using subclass model
    model.summary()

    # using keras low level api for training
    loss_object = tf.keras.losses.CategoricalCrossentropy(from_logits=False)
    optimizer = tf.keras.optimizers.Adam(learning_rate=0.0005)

    train_loss = tf.keras.metrics.Mean(name='train_loss')
    train_accuracy = tf.keras.metrics.CategoricalAccuracy(name='train_accuracy')

    test_loss = tf.keras.metrics.Mean(name='test_loss')
    test_accuracy = tf.keras.metrics.CategoricalAccuracy(name='test_accuracy')

    @tf.function
    def train_step(images, labels):
        with tf.GradientTape() as tape:
            predictions = model(images, training=True)
            loss = loss_object(labels, predictions)
        gradients = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(zip(gradients, model.trainable_variables))

        train_loss(loss)
        train_accuracy(labels, predictions)

    @tf.function
    def test_step(images, labels):
        predictions = model(images, training=False)
        t_loss = loss_object(labels, predictions)

        test_loss(t_loss)
        test_accuracy(labels, predictions)

    best_test_loss = float('inf')
    train_step_num = train_num // batch_size
    val_step_num = val_num // batch_size
    for epoch in range(1, epochs+1):
        train_loss.reset_states()  # clear history info
        train_accuracy.reset_states()  # clear history info
        test_loss.reset_states()  # clear history info
        test_accuracy.reset_states()  # clear history info

        t1 = time.perf_counter()
        for index, (images, labels) in enumerate(train_dataset):
            train_step(images, labels)
            if index+1 == train_step_num:
                break
        print(time.perf_counter()-t1)  # seconds spent on this epoch's training steps

        for index, (images, labels) in enumerate(val_dataset):
            test_step(images, labels)
            if index+1 == val_step_num:
                break

        template = 'Epoch {}, Loss: {}, Accuracy: {}, Test Loss: {}, Test Accuracy: {}'
        print(template.format(epoch,
                              train_loss.result(),
                              train_accuracy.result() * 100,
                              test_loss.result(),
                              test_accuracy.result() * 100))
        if test_loss.result() < best_test_loss:
            best_test_loss = test_loss.result()  # remember the best validation loss so far
            model.save_weights("./save_weights/myAlex.ckpt", save_format='tf')

    # # using keras high level api for training
    # model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=0.0005),
    #               loss=tf.keras.losses.CategoricalCrossentropy(from_logits=False),
    #               metrics=["accuracy"])
    #
    # callbacks = [tf.keras.callbacks.ModelCheckpoint(filepath='./save_weights/myAlex_{epoch}.h5',
    #                                                 save_best_only=True,
    #                                                 save_weights_only=True,
    #                                                 monitor='val_loss')]
    #
    # # TensorFlow 2.1 recommends using fit
    # history = model.fit(x=train_dataset,
    #                     steps_per_epoch=train_num // batch_size,
    #                     epochs=epochs,
    #                     validation_data=val_dataset,
    #                     validation_steps=val_num // batch_size,
    #                     callbacks=callbacks)


if __name__ == '__main__':
    main()
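The custom loop saves weights when the validation loss beats `best_test_loss`. For that to mean "best so far", the variable has to be updated on every improvement; otherwise every epoch beats `inf` and is saved. This is the same idea as `ModelCheckpoint(save_best_only=True, monitor='val_loss')` in train.py. A pure-Python sketch of the bookkeeping, with made-up loss values (`checkpoint_epochs` is a hypothetical name):

```python
def checkpoint_epochs(val_losses):
    """Return the 1-based epochs at which 'save only when validation loss improves' would save."""
    best_loss = float("inf")
    saved = []
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss = loss  # update the best value so later epochs must beat it
            saved.append(epoch)
    return saved


print(checkpoint_epochs([0.92, 0.71, 0.80, 0.64]))  # [1, 2, 4]
```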

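3. predict.py (if you trained with the GPU version, copy the GPU setup code from trainGPU.py, i.e. the CUDA environment variables and the set_memory_growth block, into this script as well, otherwise you may get a GPU memory error. Note also that trainGPU.py saves TensorFlow-format weights to ./save_weights/myAlex.ckpt, written as files such as myAlex.ckpt.index, rather than myAlex.h5, so weights_path and its existence check must be changed accordingly.)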

import os
import json

from PIL import Image
import numpy as np
import matplotlib.pyplot as plt

from model import AlexNet_v1, AlexNet_v2


def main():
    im_height = 224
    im_width = 224

    # load image
    img_path = "../tulip.jpg"
    assert os.path.exists(img_path), "file: '{}' does not exist.".format(img_path)
    img = Image.open(img_path).convert("RGB")  # make sure the image has exactly 3 channels

    # resize image to 224x224
    img = img.resize((im_width, im_height))
    plt.imshow(img)

    # scale pixel values to [0, 1], as during training
    img = np.array(img) / 255.

    # add the image to a batch where it's the only member
    img = np.expand_dims(img, 0)

    # read class_indict
    json_path = './class_indices.json'
    assert os.path.exists(json_path), "file: '{}' does not exist.".format(json_path)
    with open(json_path, "r") as json_file:
        class_indict = json.load(json_file)

    # create model (number of outputs must match the trained weights)
    model = AlexNet_v1(num_classes=len(class_indict))
    weights_path = "./save_weights/myAlex.h5"
    assert os.path.exists(weights_path), "file: '{}' does not exist.".format(weights_path)
    model.load_weights(weights_path)

    # prediction
    result = np.squeeze(model.predict(img))
    predict_class = np.argmax(result)

    print_res = "class: {}   prob: {:.3}".format(class_indict[str(predict_class)],
                                                 result[predict_class])
    plt.title(print_res)
    print(print_res)
    plt.show()


if __name__ == '__main__':
    main()
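The preprocessing in predict.py has to match training: train.py rescales by 1/255 and trainGPU.py uses `tf.image.convert_image_dtype`, which maps uint8 pixels to [0, 1] as well. The network also expects exactly 3 channels and a leading batch axis. A NumPy-only sketch of those steps for an already-resized image array; `to_batch` is a hypothetical helper, and the grayscale/alpha handling is an assumption covering what PIL's `convert("RGB")` would otherwise do:

```python
import numpy as np


def to_batch(img_array):
    """(H, W), (H, W, 3) or (H, W, 4) uint8 pixels -> float32 batch of shape (1, H, W, 3) in [0, 1]."""
    x = np.asarray(img_array)
    if x.ndim == 2:                    # grayscale: repeat the single channel three times
        x = np.stack([x] * 3, axis=-1)
    x = x[..., :3]                     # drop an alpha channel if present
    x = x.astype(np.float32) / 255.0   # same scaling as in training
    return np.expand_dims(x, 0)        # add the batch axis


fake_img = np.full((224, 224, 3), 255, dtype=np.uint8)  # stand-in for the resized photo
batch = to_batch(fake_img)
print(batch.shape, batch.max())
```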