Removing stop words in Python with NLTK: a quick introduction to NLTK and data cleaning

NLTK: a long-established toolkit for English tokenization

# Import the tokenizer module

from nltk.tokenize import word_tokenize

from nltk.text import Text

text = '''

There were a sensitivity and a beauty to her that have nothing to do with looks. She was one to be listened to, whose words were so easy to take to heart.

'''

tokens = word_tokenize(text)

# Print the first 5 tokens

print(tokens[:5])

# Lowercase every token so that "There" and "there" count as the same word

tokens = [w.lower() for w in tokens]

# Create a Text object

t = Text(tokens)

# Count how many times a word occurs

print(t.count('beauty'))

# Find the index of a word's first occurrence

print(t.index('beauty'))

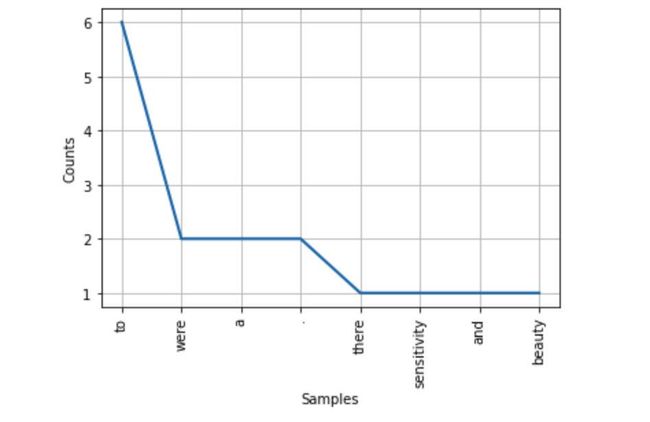

# 出现最多的前8个词画一个图

# 需要安装matplotlib pip install matplotlib

t.plot(8)['There', 'were', 'a', 'sensitivity', 'and']
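The counting behind `t.count`, `t.index` and the frequency plot can be sketched with the standard library alone. This is only an illustration, not NLTK's own code: the token list is hard-coded (it is what the first sentence of the sample text is assumed to look like after tokenizing and lowercasing), so the sketch runs without any NLTK data.

```python
from collections import Counter

# Hard-coded tokens for the first sample sentence (an assumption made so
# that this sketch does not need NLTK or its downloaded data).
tokens = ['there', 'were', 'a', 'sensitivity', 'and', 'a', 'beauty', 'to',
          'her', 'that', 'have', 'nothing', 'to', 'do', 'with', 'looks', '.']

counts = Counter(tokens)

# Plain-list equivalents of t.count('beauty') and t.index('beauty').
beauty_count = counts['beauty']
beauty_index = tokens.index('beauty')

# The most frequent tokens: the kind of data a frequency plot draws.
top_two = counts.most_common(2)

print(beauty_count, beauty_index, top_two)
# → 1 6 [('a', 2), ('to', 2)]
```

Note that the most frequent tokens here are stop words, which is why the next step filters them out.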

Stop words

from nltk.corpus import stopwords

# List every language that has a stop word list; we use "english"

stopwords.fileids()

Output:

['arabic', 'azerbaijani', 'danish', 'dutch', 'english', 'finnish', 'french', 'german', 'greek', 'hungarian', 'indonesian', 'italian', 'kazakh', 'nepali', 'norwegian', 'portuguese', 'romanian', 'russian', 'spanish', 'swedish', 'turkish']

# Print all the English stop words

stopwords.raw('english').replace('\n',' ')

Output:

"i me my myself we our ours ourselves you you're you've you'll you'd your yours yourself yourselves he him his himself she she's her hers herself it it's its itself they them their theirs themselves what which who whom this that that'll these those am is are was were be been being have has had having do does did doing a an the and but if or because as until while of at by for with about against between into through during before after above below to from up down in out on off over under again further then once here there when where why how all any both each few more most other some such no nor not only own same so than too very s t can will just don don't should should've now d ll m o re ve y ain aren aren't couldn couldn't didn didn't doesn doesn't hadn hadn't hasn hasn't haven haven't isn isn't ma mightn mightn't mustn mustn't needn needn't shan shan't shouldn shouldn't wasn wasn't weren weren't won won't wouldn wouldn't "

# Filter out the stop words

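# Build the stop word set once instead of calling stopwords.words('english') for every token

stop_words = set(stopwords.words('english'))

# set() removes duplicate tokens, so the order of the result is arbitrary

tokens = set(tokens)

filtered = [w for w in tokens if w not in stop_words]

print(filtered)

Output:

['nothing', 'sensitivity', ',', 'one', 'beauty', 'words', 'heart', 'looks', 'take', 'whose', '.', 'listened', 'easy']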
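Converting the tokens to a set discards both their order and their duplicates. If the original word order matters, the filter can run over the list directly. The sketch below assumes a small hand-picked subset of the English stop words (all of them appear in the list printed above) so that it runs without the NLTK corpus; `remove_stop_words` is a hypothetical helper name.

```python
# A hand-picked subset of the English stop words, used only so that this
# sketch runs without downloading the NLTK stopwords corpus.
STOP_WORDS = {'there', 'were', 'a', 'and', 'to', 'her', 'that', 'have', 'do', 'with'}

def remove_stop_words(tokens, stop_words=STOP_WORDS):
    """Return the tokens that are not stop words, keeping order and duplicates."""
    return [w for w in tokens if w.lower() not in stop_words]

tokens = ['there', 'were', 'a', 'sensitivity', 'and', 'a', 'beauty', 'to',
          'her', 'that', 'have', 'nothing', 'to', 'do', 'with', 'looks', '.']

filtered_in_order = remove_stop_words(tokens)
print(filtered_in_order)
# → ['sensitivity', 'beauty', 'nothing', 'looks', '.']
```

With the real corpus, `set(stopwords.words('english'))` could be passed as `stop_words` in place of the subset.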

Part-of-speech tagging

# The first run needs the tagger data, which can be downloaded with nltk.download()

from nltk import pos_tag

pos_tag(filtered)

Output:

[('nothing', 'NN'), ('sensitivity', 'NN'), (',', ','), ('one', 'CD'), ('beauty', 'NN'), ('words', 'NNS'),
('heart', 'NN'), ('looks', 'VBZ'), ('take', 'VB'), ('whose', 'WP$'), ('.', '.'), ('listened', 'VBN'),
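('easy', 'JJ')]

| POS tag | Meaning |
| --- | --- |
| CC | coordinating conjunction |
| CD | cardinal number |
| DT | determiner |
| EX | existential "there" |
| FW | foreign word |
| IN | preposition or subordinating conjunction |
| JJ | adjective |
| JJR | adjective, comparative |
| JJS | adjective, superlative |
| LS | list item marker |
| MD | modal verb |
| NN | noun, singular |
| NNS | noun, plural |
| NNP | proper noun |
| PDT | predeterminer |
| POS | possessive ending |
| PRP | personal pronoun |
| PRP$ | possessive pronoun |
| RB | adverb |
| RBR | adverb, comparative |
| RBS | adverb, superlative |
| RP | particle |
| UH | interjection |
| VB | verb, base form |
| VBD | verb, past tense |
| VBG | gerund or present participle |
| VBN | verb, past participle |
| VBP | verb, non-3rd person singular present |
| VBZ | verb, 3rd person singular present |
| WDT | wh-determiner |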
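To make tagger output easier to read, the tag meanings can be kept in a small lookup table. The sketch below is a convenience of my own, not part of NLTK: `TAG_MEANINGS` holds only a few of the tags listed above and `describe_tags` is a hypothetical helper. Tags missing from the dictionary, such as `WP$`, fall back to a placeholder.

```python
# Meanings for a few of the tags listed above; extend as needed.
TAG_MEANINGS = {
    'CD': 'cardinal number',
    'JJ': 'adjective',
    'NN': 'noun, singular',
    'NNS': 'noun, plural',
    'VB': 'verb, base form',
    'VBN': 'verb, past participle',
    'VBZ': 'verb, 3rd person singular present',
}

def describe_tags(tagged, meanings=TAG_MEANINGS):
    """Attach a readable meaning to each (word, tag) pair."""
    return [(word, tag, meanings.get(tag, 'not in the table'))
            for word, tag in tagged]

# A few pairs taken from the pos_tag output shown earlier.
tagged = [('nothing', 'NN'), ('looks', 'VBZ'), ('whose', 'WP$'), ('easy', 'JJ')]

described = describe_tags(tagged)
for word, tag, meaning in described:
    print(f'{word:10} {tag:4} {meaning}')
```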

Chunking

from nltk.chunk import RegexpParser

sentence = [('the','DT'),('little','JJ'),('yellow','JJ'),('dog','NN'),('died','VBD')]

grammar = "MY_NP: {<DT>?<JJ>*<NN>}"

cp = RegexpParser(grammar) # Build the chunker from the rule

result = cp.parse(sentence) # Chunk the sentence

print(result)

result.draw() # Draw the tree in a pop-up window (uses Tkinter, not matplotlib)

Output:

(S (MY_NP the/DT little/JJ yellow/JJ dog/NN) died/VBD)

After result.draw(), the notebook session also reported:

An exception has occurred, use %tb to see the full traceback.

SystemExit: 0
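The rule used for the `MY_NP` chunk reads as "an optional determiner, any number of adjectives, then a singular noun". To show what that pattern matches, here is a simplified stand-in written with the standard `re` module. It is not NLTK's implementation, and `chunk_noun_phrases` is a hypothetical name: it encodes the tag sequence as one string and groups the tokens covered by each match.

```python
import re

# Optional <DT>, any number of <JJ>, then one <NN>.
PATTERN = re.compile(r'(?:<DT>)?(?:<JJ>)*<NN>')

def chunk_noun_phrases(tagged):
    """Group tokens whose tags match the pattern into ('MY_NP', [...]) chunks."""
    tags = [f'<{tag}>' for _, tag in tagged]
    tag_string = ''.join(tags)          # e.g. "<DT><JJ><JJ><NN><VBD>"

    # Map each character offset where a tag starts to its token index.
    offset_to_index = {}
    offset = 0
    for index, tag in enumerate(tags):
        offset_to_index[offset] = index
        offset += len(tag)
    offset_to_index[offset] = len(tags)  # sentinel for the end of the string

    chunks = []
    position = 0
    for match in PATTERN.finditer(tag_string):
        first = offset_to_index[match.start()]
        last = offset_to_index[match.end()]
        chunks.extend(tagged[position:first])           # tokens outside a chunk
        chunks.append(('MY_NP', tagged[first:last]))    # the chunk itself
        position = last
    chunks.extend(tagged[position:])
    return chunks

sentence = [('the', 'DT'), ('little', 'JJ'), ('yellow', 'JJ'),
            ('dog', 'NN'), ('died', 'VBD')]
chunks = chunk_noun_phrases(sentence)
print(chunks)
```

For the sample sentence the first four tokens end up in one `MY_NP` chunk and `died` stays outside, which mirrors the tree printed by the parser.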

Named entity recognition

# The first run needs the chunker data, which can be downloaded with nltk.download()

from nltk import ne_chunk

text = "Edison went to Tsinghua University today."

print(ne_chunk(pos_tag(word_tokenize(text))))

Output (the first line is printed by nltk.download()):

showing info https://raw.githubusercontent.com/nltk/nltk_data/gh-pages/index.xml

(S
  (PERSON Edison/NNP)
  went/VBD
  to/TO
  (ORGANIZATION Tsinghua/NNP University/NNP)
  today/NN
  ./.)

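Data cleaning

import re

from nltk.corpus import stopwords

from nltk.tokenize import word_tokenize

# Input data

s = ' RT @Amila #Test\nTom\'s newly listed Co &amp; Mary\'s unlisted Group to supply tech for nlTK.\nh $TSLA $AAPL https:// t.co/x34afsfQsh'

# Remove HTML entities, @mentions and #hashtags

s = re.sub(r'&\w*;|@\w*|#\w*', '', s)

# Remove ticker symbols such as $TSLA

s = re.sub(r'\$\w*', '', s)

# Remove hyperlinks

s = re.sub(r'https?:\/\/.*\/\w*', '', s)

# Remove words of one or two characters (\b is a word boundary)

s = re.sub(r'\b\w{1,2}\b', '', s)

# Collapse runs of whitespace into a single space

s = re.sub(r'\s+', ' ', s).strip()

# Tokenize

tokens = word_tokenize(s)

# Remove stop words

stop_words = set(stopwords.words('english'))

tokens = [w for w in tokens if w not in stop_words]

# Final result

print(' '.join(tokens))

Output:

Tom ' newly listed Mary ' unlisted Group supply tech nlTK .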
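The regular-expression steps above can be bundled into one reusable function. The sketch below uses only the standard `re` module and stops before tokenizing, so it runs without NLTK. `clean_tweet` is a hypothetical name, and the hyperlink pattern is a simpler variant (`https?://\S+`) that assumes the URL contains no spaces, unlike the sample input above.

```python
import re

def clean_tweet(text):
    """Strip tweet noise and return a whitespace-normalized string."""
    text = re.sub(r'&\w*;|@\w*|#\w*', '', text)  # HTML entities, @mentions, #hashtags
    text = re.sub(r'\$\w*', '', text)            # ticker symbols such as $TSLA
    text = re.sub(r'https?://\S+', '', text)     # hyperlinks without spaces (assumption)
    text = re.sub(r'\b\w{1,2}\b', '', text)      # words of one or two characters
    return re.sub(r'\s+', ' ', text).strip()     # collapse whitespace runs

cleaned = clean_tweet("RT @Amila #Test Tom's new Co &amp; Mary's Group $TSLA https://t.co/x34afsfQsh")
print(cleaned)
# → Tom' new Mary' Group
```

The order of the steps matters: removing short words before the hyperlink step would break the URL into fragments that the hyperlink pattern no longer matches.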