NLP之LSTM与BiLSTM

文章目录

- 代码展示

- 代码解读

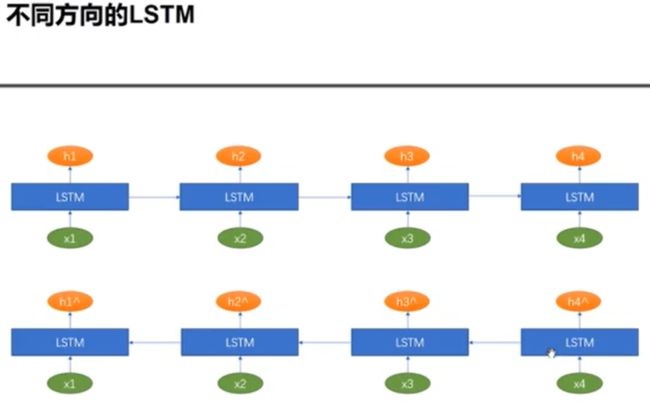

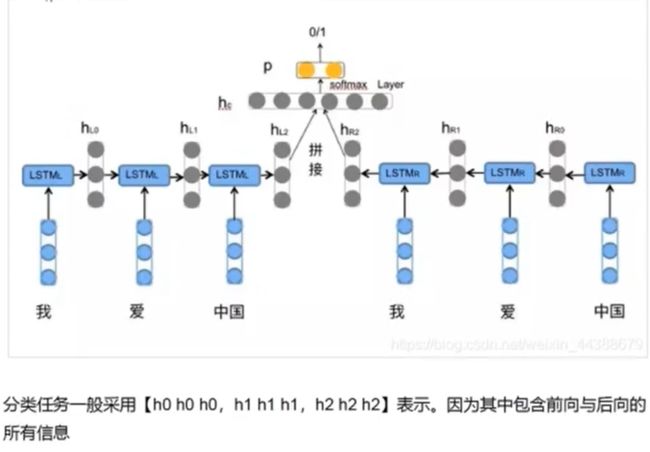

- 双向LSTM介绍(BiLSTM)

代码展示

import pandas as pd

import tensorflow as tf

tf.random.set_seed(1)

df = pd.read_csv("../data/Clothing Reviews.csv")

print(df.info())

df['Review Text'] = df['Review Text'].astype(str)

x_train = df['Review Text']

y_train = df['Rating']

print(y_train.unique())

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 23486 entries, 0 to 23485

Data columns (total 11 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 Unnamed: 0 23486 non-null int64

1 Clothing ID 23486 non-null int64

2 Age 23486 non-null int64

3 Title 19676 non-null object

4 Review Text 22641 non-null object

5 Rating 23486 non-null int64

6 Recommended IND 23486 non-null int64

7 Positive Feedback Count 23486 non-null int64

8 Division Name 23472 non-null object

9 Department Name 23472 non-null object

10 Class Name 23472 non-null object

[4 5 3 2 1]

from tensorflow.keras.preprocessing.text import Tokenizer

dict_size = 14848

tokenizer = Tokenizer(num_words=dict_size)

tokenizer.fit_on_texts(x_train)

print(len(tokenizer.word_index),tokenizer.index_word)

x_train_tokenized = tokenizer.texts_to_sequences(x_train)

from tensorflow.keras.preprocessing.sequence import pad_sequences

max_comment_length = 120

x_train = pad_sequences(x_train_tokenized,maxlen=max_comment_length)

for v in x_train[:10]:

print(v,len(v))

# 构建RNN神经网络

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense,SimpleRNN,Embedding,LSTM,Bidirectional

import tensorflow as tf

rnn = Sequential()

# 对于rnn来说首先进行词向量的操作

rnn.add(Embedding(input_dim=dict_size,output_dim=60,input_length=max_comment_length))

# RNN:simple_rnn (SimpleRNN) (None, 100) 16100

# LSTM:simple_rnn (SimpleRNN) (None, 100) 64400

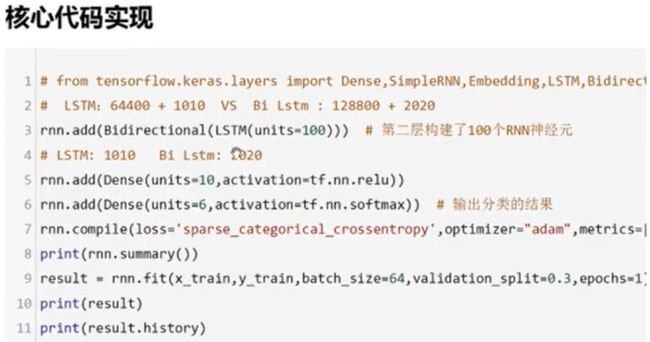

rnn.add(Bidirectional(LSTM(units=100))) # 第二层构建了100个RNN神经元

rnn.add(Dense(units=10,activation=tf.nn.relu))

rnn.add(Dense(units=6,activation=tf.nn.softmax)) # 输出分类的结果

rnn.compile(loss='sparse_categorical_crossentropy',optimizer="adam",metrics=['accuracy'])

print(rnn.summary())

result = rnn.fit(x_train,y_train,batch_size=64,validation_split=0.3,epochs=10)

print(result)

print(result.history)

代码解读

首先,我们来总结这段代码的流程:

- 导入了必要的TensorFlow Keras模块。

- 初始化了一个Sequential模型,这表示我们的模型会按顺序堆叠各层。

- 添加了一个Embedding层,用于将整数索引(对应词汇)转换为密集向量。

- 添加了一个双向LSTM层,其中包含100个神经元。

- 添加了两个Dense全连接层,分别包含10个和6个神经元。

- 使用

sparse_categorical_crossentropy损失函数编译了模型。 - 打印了模型的摘要。

- 使用给定的训练数据和验证数据对模型进行了训练。

- 打印了训练的结果。

现在,让我们逐行解读代码:

- 导入依赖:

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense,SimpleRNN,Embedding,LSTM,Bidirectional

import tensorflow as tf

你导入了创建和训练RNN模型所需的TensorFlow Keras库。

- 初始化模型:

rnn = Sequential()

你选择了一个顺序模型,这意味着你可以简单地按顺序添加层。

- 添加Embedding层:

rnn.add(Embedding(input_dim=dict_size,output_dim=60,input_length=max_comment_length))

此层将整数索引转换为固定大小的向量。dict_size是词汇表的大小,max_comment_length是输入评论的最大长度。

- 添加LSTM层:

rnn.add(Bidirectional(LSTM(units=100)))

你选择了双向LSTM,这意味着它会考虑过去和未来的信息。它有100个神经元。

- 添加全连接层:

rnn.add(Dense(units=10,activation=tf.nn.relu))

rnn.add(Dense(units=6,activation=tf.nn.softmax))

这两个Dense层用于模型的输出,最后一层使用softmax激活函数进行6类的分类。

- 编译模型:

rnn.compile(loss='sparse_categorical_crossentropy',optimizer="adam",metrics=['accuracy'])

你选择了一个适合分类问题的损失函数,并选择了adam优化器。

- 显示模型摘要:

print(rnn.summary())

这将展示模型的结构和参数数量。

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

embedding (Embedding) (None, 120, 60) 890880

bidirectional (Bidirectiona (None, 200) 128800

l)

dense (Dense) (None, 10) 2010

dense_1 (Dense) (None, 6) 66

=================================================================

- 训练模型:

result = rnn.fit(x_train,y_train,batch_size=64,validation_split=0.3,epochs=10)

你用训练数据集训练了模型,其中30%的数据用作验证,训练了10个周期。

Epoch 1/10

257/257 [==============================] - 74s 258ms/step - loss: 1.2142 - accuracy: 0.5470 - val_loss: 1.0998 - val_accuracy: 0.5521

Epoch 2/10

257/257 [==============================] - 57s 221ms/step - loss: 0.9335 - accuracy: 0.6293 - val_loss: 0.9554 - val_accuracy: 0.6094

Epoch 3/10

257/257 [==============================] - 59s 229ms/step - loss: 0.8363 - accuracy: 0.6616 - val_loss: 0.9321 - val_accuracy: 0.6168

Epoch 4/10

257/257 [==============================] - 61s 236ms/step - loss: 0.7795 - accuracy: 0.6833 - val_loss: 0.9812 - val_accuracy: 0.6089

Epoch 5/10

257/257 [==============================] - 56s 217ms/step - loss: 0.7281 - accuracy: 0.7010 - val_loss: 0.9559 - val_accuracy: 0.6043

Epoch 6/10

257/257 [==============================] - 56s 219ms/step - loss: 0.6934 - accuracy: 0.7156 - val_loss: 1.0197 - val_accuracy: 0.5999

Epoch 7/10

257/257 [==============================] - 57s 220ms/step - loss: 0.6514 - accuracy: 0.7364 - val_loss: 1.1192 - val_accuracy: 0.6080

Epoch 8/10

257/257 [==============================] - 57s 222ms/step - loss: 0.6258 - accuracy: 0.7486 - val_loss: 1.1350 - val_accuracy: 0.6100

Epoch 9/10

257/257 [==============================] - 57s 220ms/step - loss: 0.5839 - accuracy: 0.7749 - val_loss: 1.1537 - val_accuracy: 0.6019

Epoch 10/10

257/257 [==============================] - 57s 222ms/step - loss: 0.5424 - accuracy: 0.7945 - val_loss: 1.1715 - val_accuracy: 0.5744

<keras.callbacks.History object at 0x00000244DCE06D90>

- 显示训练结果:

print(result)

print(result.history)

这将展示训练过程中的损失和准确性等信息。