pytorch网络模型结构的总结打印

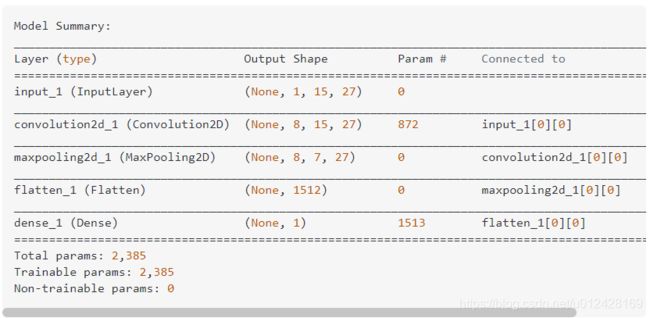

在keras中可以通过model.summary()打印出模型的结构,类似这样:

在pytorch中想要实现类似的功能,直接打印模型就可以了。例如

    from torchvision import models

    # Build VGG-16 with randomly initialized weights
    model = models.vgg16()
    print(model)

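Output (the exact formatting depends on the PyTorch version; newer releases print more detail for each layer):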

    VGG (
      (features): Sequential (
        (0): Conv2d(3, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (1): ReLU (inplace)
        (2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (3): ReLU (inplace)
        (4): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
        (5): Conv2d(64, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (6): ReLU (inplace)
        (7): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (8): ReLU (inplace)
        (9): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
        (10): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (11): ReLU (inplace)
        (12): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (13): ReLU (inplace)
        (14): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (15): ReLU (inplace)
        (16): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
        (17): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (18): ReLU (inplace)
        (19): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (20): ReLU (inplace)
        (21): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (22): ReLU (inplace)
        (23): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
        (24): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (25): ReLU (inplace)
        (26): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (27): ReLU (inplace)
        (28): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (29): ReLU (inplace)
        (30): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
      )
      (classifier): Sequential (
        (0): Dropout (p = 0.5)
        (1): Linear (25088 -> 4096)
        (2): ReLU (inplace)
        (3): Dropout (p = 0.5)
        (4): Linear (4096 -> 4096)
        (5): ReLU (inplace)
        (6): Linear (4096 -> 1000)
      )
    )
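
Printing the model lists the layers, but unlike Keras's summary() it does not show output shapes or parameter counts. Below is a minimal sketch of one way to collect those, using forward hooks on the leaf modules and a single dummy forward pass. The helper name summarize, its input_size argument, and the small toy model are all made up for this illustration; they are not part of PyTorch or of the original post.

```python
import torch
import torch.nn as nn


def summarize(model, input_size):
    """Hypothetical helper: return (rows, total_params).

    Each row is (module name, layer type, output shape, parameter count).
    input_size is the shape of one sample, without the batch dimension.
    """
    rows = []
    hooks = []

    def make_hook(name):
        def hook(module, inputs, output):
            # Count only this module's own parameters, not its submodules'.
            n_params = sum(p.numel() for p in module.parameters(recurse=False))
            shape = tuple(output.shape) if isinstance(output, torch.Tensor) else None
            rows.append((name, type(module).__name__, shape, n_params))
        return hook

    for name, module in model.named_modules():
        # Hook leaf modules only, skipping containers such as Sequential.
        if len(list(module.children())) == 0:
            hooks.append(module.register_forward_hook(make_hook(name)))

    was_training = model.training
    model.eval()  # keep dropout / batch norm from behaving as in training
    try:
        with torch.no_grad():
            model(torch.zeros(1, *input_size))  # dummy batch of one sample
    finally:
        for h in hooks:
            h.remove()  # always detach the hooks again
        model.train(was_training)

    total = sum(p.numel() for p in model.parameters())
    return rows, total


# Small stand-in model (an assumption, chosen so the example runs quickly).
toy = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.MaxPool2d(2),
    nn.Flatten(),
    nn.Linear(8 * 16 * 16, 10),
)

rows, total = summarize(toy, (3, 32, 32))
for name, kind, shape, n_params in rows:
    print(f"{name:<4} {kind:<10} {str(shape):<18} {n_params:>8}")
print("Total params:", total)
```

The same helper should work on the VGG model above, for example summarize(models.vgg16(), (3, 224, 224)), although the dummy forward pass takes noticeably longer there. Rows appear in the order the layers run, and a module that is called more than once in forward would produce more than one row.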
