Reading notes: Python for Data Analysis, 2nd Edition, Chapter 8, Data Wrangling: Join, Combine, and Reshape

Contents

- I. Hierarchical Indexing

- II. Combining and Merging Datasets

- III. Reshaping and Pivoting

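I. Hierarchical Indexing

- Hierarchical indexing is an important pandas feature that lets you have multiple (two or more) index levels on a single axis. Put more abstractly, it lets you work with higher-dimensional data in a lower-dimensional form.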

import pandas as pd

import numpy as np

data = pd.Series(np.random.randn(9), index=[['a', 'a', 'a', 'b', 'b', 'c', 'c', 'd', 'd'], [1, 2, 3, 1, 3, 1, 2, 2, 3]])

In [10]: data

Out[10]:

a 1 -0.204708

2 0.478943

3 -0.519439

b 1 -0.555730

3 1.965781

c 1 1.393406

2 0.092908

d 2 0.281746

3 0.769023

dtype: float64

In [12]: data['b']

Out[12]:

1 -0.555730

3 1.965781

dtype: float64

In [15]: data.loc[:, 2]

Out[15]:

a 0.478943

c 0.092908

d 0.281746

dtype: float64
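A deterministic variant of the session above makes the selection rules easier to check by hand. The sketch below swaps the random values for `np.arange(9)` (an assumption made only for reproducibility) and shows outer-level, label-range, and inner-level selection on the same index.

```python
import numpy as np
import pandas as pd

# Same two-level index as above; values 0..8 replace the random draws.
data = pd.Series(
    np.arange(9),
    index=[['a', 'a', 'a', 'b', 'b', 'c', 'c', 'd', 'd'],
           [1, 2, 3, 1, 3, 1, 2, 2, 3]],
)

outer_b = data.loc['b']          # partial indexing on the outer level
outer_range = data.loc['b':'c']  # label slice on the outer level (both ends included)
inner_2 = data.loc[:, 2]         # every outer key that has inner label 2

print(outer_b)
print(outer_range)
print(inner_2)
```

Selecting on the outer level drops that level from the result, which is why `outer_b` is indexed only by the inner labels.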

- Hierarchical indexing plays an important role in reshaping data and in group-based operations such as building pivot tables.

In [16]: data.unstack()

Out[16]:

1 2 3

a -0.204708 0.478943 -0.519439

b -0.555730 NaN 1.965781

c 1.393406 0.092908 NaN

d NaN 0.281746 0.769023

data.unstack().stack()

- With a DataFrame, either axis can have a hierarchical index:

In [18]: frame = pd.DataFrame(np.arange(12).reshape((4, 3)), index=[['a', 'a', 'b', 'b'], [1, 2, 1, 2]], columns=[['Ohio', 'Ohio', 'Colorado'], ['Green', 'Red', 'Green']])

In [19]: frame

Out[19]:

Ohio Colorado

Green Red Green

a 1 0 1 2

2 3 4 5

b 1 6 7 8

2 9 10 11

- Each level can have a name (a string or any other Python object).

In [20]: frame.index.names = ['key1', 'key2']

In [21]: frame.columns.names = ['state', 'color']

In [22]: frame

Out[22]:

state Ohio Colorado

color Green Red Green

key1 key2

a 1 0 1 2

2 3 4 5

b 1 6 7 8

2 9 10 11

- A MultiIndex can be created on its own and then reused. The columns of the DataFrame above, with their level names, could be created like this:

pd.MultiIndex.from_arrays([['Ohio', 'Ohio', 'Colorado'], ['Green', 'Red', 'Green']], names=['state', 'color'])
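To illustrate the reuse mentioned above, here is a minimal sketch that builds the column index once and attaches it to two frames. The names `frame_a` and `frame_b` and their values are hypothetical, chosen only for illustration.

```python
import numpy as np
import pandas as pd

# Build the two-level column index once.
columns = pd.MultiIndex.from_arrays(
    [['Ohio', 'Ohio', 'Colorado'], ['Green', 'Red', 'Green']],
    names=['state', 'color'],
)

# Reuse it for any frame with three columns (hypothetical data).
frame_a = pd.DataFrame(np.arange(6).reshape(2, 3), columns=columns)
frame_b = pd.DataFrame(np.ones((2, 3)), columns=columns)

# Partial column selection on the outer level leaves only the 'color' level.
ohio = frame_a['Ohio']
print(ohio)
```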

- swaplevel takes two level numbers or names and returns a new object with those levels interchanged (the data itself is otherwise unchanged):

In [24]: frame.swaplevel('key1', 'key2')

Out[24]:

state Ohio Colorado

color Green Red Green

key2 key1

1 a 0 1 2

2 a 3 4 5

1 b 6 7 8

2 b 9 10 11

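- sort_index, on the other hand, sorts the data using only the values in a single level. When swapping levels, it is common to also call sort_index so that the result is lexicographically sorted by the indicated level: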

In [25]: frame.sort_index(level=1)

Out[25]:

state Ohio Colorado

color Green Red Green

key1 key2

a 1 0 1 2

b 1 6 7 8

a 2 3 4 5

b 2 9 10 11

In [26]: frame.swaplevel(0, 1).sort_index(level=0)

Out[26]:

state Ohio Colorado

color Green Red Green

key2 key1

1 a 0 1 2

b 6 7 8

2 a 3 4 5

b 9 10 11
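The effect of combining the two calls can be checked directly on the index. The following sketch rebuilds the frame from above and uses `is_monotonic_increasing` as a simple way to confirm whether the index is lexicographically sorted; treating that property as the check is my choice for illustration, not something the notes prescribe.

```python
import numpy as np
import pandas as pd

frame = pd.DataFrame(
    np.arange(12).reshape((4, 3)),
    index=[['a', 'a', 'b', 'b'], [1, 2, 1, 2]],
    columns=[['Ohio', 'Ohio', 'Colorado'], ['Green', 'Red', 'Green']],
)
frame.index.names = ['key1', 'key2']
frame.columns.names = ['state', 'color']

# Swapping levels reorders the labels but not the rows.
swapped = frame.swaplevel('key1', 'key2')
# Sorting on the new outer level restores lexicographic order.
sorted_frame = swapped.sort_index(level=0)

print(swapped.index.is_monotonic_increasing)
print(sorted_frame.index.is_monotonic_increasing)
print(sorted_frame)
```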

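- Many descriptive and summary statistics on DataFrame and Series have a level option for specifying the level you want to aggregate by on a particular axis. (The level argument of sum was removed in pandas 2.0, so the calls below run as written only on older releases such as the one the book targets.)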

In [27]: frame.sum(level='key2')

Out[27]:

state Ohio Colorado

color Green Red Green

key2

1 6 8 10

2 12 14 16

In [28]: frame.sum(level='color', axis=1)

Out[28]:

color Green Red

key1 key2

a 1 2 1

2 8 4

b 1 14 7

2 20 10

Under the hood, this uses pandas's groupby machinery.
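Since the aggregation is a groupby underneath, the same results can be written with an explicit `groupby(level=...)`. This is a sketch of one equivalent spelling, which should also work on pandas versions where `sum(level=...)` is no longer available; the transpose-based form for the column axis is one option among several.

```python
import numpy as np
import pandas as pd

frame = pd.DataFrame(
    np.arange(12).reshape((4, 3)),
    index=[['a', 'a', 'b', 'b'], [1, 2, 1, 2]],
    columns=[['Ohio', 'Ohio', 'Colorado'], ['Green', 'Red', 'Green']],
)
frame.index.names = ['key1', 'key2']
frame.columns.names = ['state', 'color']

# Row-wise: sum rows that share the same 'key2' label.
by_key2 = frame.groupby(level='key2').sum()

# Column-wise: transpose, group on the 'color' level, transpose back.
by_color = frame.T.groupby(level='color').sum().T

print(by_key2)
print(by_color)
```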

- It is common to want to use one or more columns of a DataFrame as the row index, or conversely to move the row index into the DataFrame's columns.

frame = pd.DataFrame({'a': range(7), 'b': range(7, 0, -1), 'c': ['one', 'one', 'one', 'two', 'two', 'two', 'two'], 'd': [0, 1, 2, 0, 1, 2, 3]})

frame2 = frame.set_index(['c', 'd'])

frame.set_index(['c', 'd'], drop=False) # drop=False keeps c and d as columns as well

frame2.reset_index() # the opposite of set_index: the hierarchical index levels are moved into columns
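A quick round trip shows what each call changes. The sketch below reuses the frame defined above; the variable names `restored` and `kept` are introduced here only for illustration.

```python
import pandas as pd

frame = pd.DataFrame({
    'a': range(7),
    'b': range(7, 0, -1),
    'c': ['one', 'one', 'one', 'two', 'two', 'two', 'two'],
    'd': [0, 1, 2, 0, 1, 2, 3],
})

frame2 = frame.set_index(['c', 'd'])             # c and d move into the index
kept = frame.set_index(['c', 'd'], drop=False)   # c and d stay as columns too
restored = frame2.reset_index()                  # index levels become columns again

print(list(frame2.columns))
print(list(kept.columns))
print(list(restored.columns))
```

Note that `reset_index` puts the former index levels first, so the column order of `restored` differs from the original even though the data is the same.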

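II. Combining and Merging Datasets

Data contained in pandas objects can be combined in several ways:

- pandas.merge connects rows in different DataFrames based on one or more keys, equivalent to a database join operation.

- pandas.concat concatenates or stacks objects together along an axis.

- The combine_first instance method splices together overlapping data, filling missing values in one object with values from another.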

- Many-to-one merge

df1 = pd.DataFrame({'key': ['b', 'b', 'a', 'c', 'a', 'a', 'b'], 'data1': range(7)})

df2 = pd.DataFrame({'key': ['a', 'b', 'd'], 'data2': range(3)})

# Note: if the column to join on is not specified, merge uses the overlapping column names as the keys.

In [39]: pd.merge(df1, df2)

Out[39]:

data1 key data2

0 0 b 1

1 1 b 1

2 6 b 1

3 2 a 0

4 4 a 0

5 5 a 0

If the column names differ between the two objects, you can specify them separately:

In [41]: df3 = pd.DataFrame({'lkey': ['b', 'b', 'a', 'c', 'a', 'a', 'b'], 'data1': range(7)})

In [42]: df4 = pd.DataFrame({'rkey': ['a', 'b', 'd'], 'data2': range(3)})

In [43]: pd.merge(df3, df4, left_on='lkey', right_on='rkey')

Out[43]:

data1 lkey data2 rkey

0 0 b 1 b

1 1 b 1 b

2 6 b 1 b

3 2 a 0 a

4 4 a 0 a

5 5 a 0 a

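By default, merge does an inner join; the keys in the result are the intersection of the keys found in both tables. The other options are 'left', 'right', and 'outer'. An outer join takes the union of the keys, combining the effect of applying both the left and right joins.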
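The four join types can be compared side by side on the many-to-one frames defined earlier. This is a small sketch; the `row_counts` dictionary is a helper introduced here, and `indicator=True` is an optional merge argument used only to show where each row came from.

```python
import pandas as pd

df1 = pd.DataFrame({'key': ['b', 'b', 'a', 'c', 'a', 'a', 'b'], 'data1': range(7)})
df2 = pd.DataFrame({'key': ['a', 'b', 'd'], 'data2': range(3)})

row_counts = {}
for how in ['inner', 'left', 'right', 'outer']:
    merged = pd.merge(df1, df2, on='key', how=how)
    row_counts[how] = len(merged)
    # Show which keys survive each join type.
    print(how, sorted(merged['key'].unique()), len(merged))

# indicator=True adds a '_merge' column: 'left_only', 'right_only', or 'both'.
outer = pd.merge(df1, df2, on='key', how='outer', indicator=True)
print(outer['_merge'].value_counts())
```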

- Many-to-many merge

In [45]: df1 = pd.DataFrame({'key': ['b', 'b', 'a', 'c', 'a', 'b'], 'data1': range(6)})

In [46]: df2 = pd.DataFrame({'key': ['a', 'b', 'a', 'b', 'd'], 'data2': range(5)})

In [49]: pd.merge(df1, df2, on='key', how='left') # left join: keeps c (only in df1), drops d (only in df2)

Out[49]:

data1 key data2

0 0 b 1.0

1 0 b 3.0

2 1 b 1.0

3 1 b 3.0

4 2 a 0.0

5 2 a 2.0

6 3 c NaN

7 4 a 0.0

8 4 a 2.0

9 5 b 1.0

10 5 b 3.0
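A many-to-many join forms the Cartesian product of the matching rows, which explains the row count above. The sketch below verifies the arithmetic for the inner join and, as an optional safeguard, uses merge's `validate` argument to reject an unexpectedly non-unique right-hand key; using `validate` here is my suggestion, not part of the original notes.

```python
import pandas as pd

df1 = pd.DataFrame({'key': ['b', 'b', 'a', 'c', 'a', 'b'], 'data1': range(6)})
df2 = pd.DataFrame({'key': ['a', 'b', 'a', 'b', 'd'], 'data2': range(5)})

inner = pd.merge(df1, df2, on='key', how='inner')
# 3 'b' rows on the left x 2 on the right, plus 2 'a' rows x 2 on the right.
expected_rows = 3 * 2 + 2 * 2
print(len(inner), expected_rows)

# validate='many_to_one' raises if the right-hand keys are not unique.
try:
    pd.merge(df1, df2, on='key', validate='many_to_one')
    rejected = False
except pd.errors.MergeError:
    rejected = True
print(rejected)
```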

- To merge on multiple keys, pass a list of column names:

In [51]: left = pd.DataFrame({'key1': ['foo', 'foo', 'bar'], 'key2': ['one', 'two', 'one'], 'lval': [1, 2, 3]})

In [52]: right = pd.DataFrame({'key1': ['foo', 'foo', 'bar', 'bar'], 'key2': ['one', 'one', 'one', 'two'], 'rval': [4, 5, 6, 7]})

In [53]: pd.merge(left, right, on=['key1', 'key2'], how='outer')

Out[53]:

key1 key2 lval rval

0 foo one 1.0 4.0

1 foo one 1.0 5.0

2 foo two 2.0 NaN

3 bar one 3.0 6.0

4 bar two NaN 7.0

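- The last issue to consider in merge operations is the treatment of overlapping column names. You can address the overlap manually (see the earlier material on renaming axis labels), but merge has a more practical suffixes option for specifying strings to append to the overlapping names in the left and right DataFrame objects: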

In [54]: pd.merge(left, right, on='key1')

Out[54]:

key1 key2_x lval key2_y rval

0 foo one 1 one 4

1 foo one 1 one 5

2 foo two 2 one 4

3 foo two 2 one 5

4 bar one 3 one 6

5 bar one 3 two 7

In [55]: pd.merge(left, right, on='key1', suffixes=('_left', '_right'))

Out[55]:

key1 key2_left lval key2_right rval

0 foo one 1 one 4

1 foo one 1 one 5

2 foo two 2 one 4

3 foo two 2 one 5

4 bar one 3 one 6

5 bar one 3 two 7

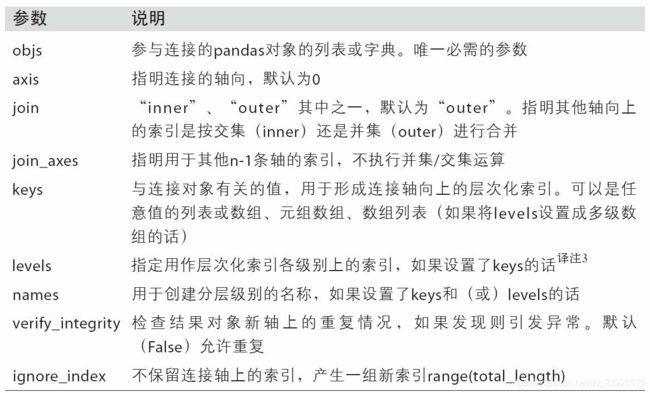

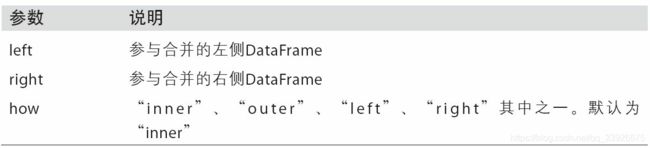

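- Arguments of the merge function:

- on: column names to join on

- left_on/right_on: columns in the left/right DataFrame to use as join keys

- left_index/right_index: use the row index of the left/right DataFrame as its join key

- how: the join type, one of inner, outer, left, right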

- Merging on index

In [56]: left1 = pd.DataFrame({'key': ['a', 'b', 'a', 'a', 'b', 'c'], 'value': range(6)})

In [57]: right1 = pd.DataFrame({'group_val': [3.5, 7]}, index=['a', 'b'])

In [60]: pd.merge(left1, right1, left_on='key', right_index=True)

Out[60]:

key value group_val

0 a 0 3.5

2 a 2 3.5

3 a 3 3.5

1 b 1 7.0

4 b 4 7.0

In [61]: pd.merge(left1, right1, left_on='key', right_index=True, how='outer') # an outer join gives the union of the keys

- Merging on a hierarchical index

In [62]: lefth = pd.DataFrame({'key1': ['Ohio', 'Ohio', 'Ohio', 'Nevada', 'Nevada'],

....: 'key2': [2000, 2001, 2002, 2001, 2002],

....: 'data': np.arange(5.)})

In [63]: righth = pd.DataFrame(np.arange(12).reshape((6, 2)),

....: index=[['Nevada', 'Nevada', 'Ohio', 'Ohio', 'Ohio', 'Ohio'],

....: [2001, 2000, 2000, 2000, 2001, 2002]],

....: columns=['event1', 'event2'])

In [64]: lefth

Out[64]:

data key1 key2

0 0.0 Ohio 2000

1 1.0 Ohio 2001

2 2.0 Ohio 2002

3 3.0 Nevada 2001

4 4.0 Nevada 2002

In [65]: righth

Out[65]:

event1 event2

Nevada 2001 0 1

2000 2 3

Ohio 2000 4 5

2000 6 7

2001 8 9

2002 10 11

# The columns to merge on must be given as a list (note how how='outer' handles duplicate index values)

In [66]: pd.merge(lefth, righth, left_on=['key1', 'key2'], right_index=True)

Out[66]:

data key1 key2 event1 event2

0 0.0 Ohio 2000 4 5

0 0.0 Ohio 2000 6 7

1 1.0 Ohio 2001 8 9

2 2.0 Ohio 2002 10 11

3 3.0 Nevada 2001 0 1

In [67]: pd.merge(lefth, righth, left_on=['key1', 'key2'], right_index=True, how='outer')

Out[67]:

data key1 key2 event1 event2

0 0.0 Ohio 2000 4.0 5.0

0 0.0 Ohio 2000 6.0 7.0

1 1.0 Ohio 2001 8.0 9.0

2 2.0 Ohio 2002 10.0 11.0

3 3.0 Nevada 2001 0.0 1.0

4 4.0 Nevada 2002 NaN NaN

4 NaN Nevada 2000 2.0 3.0

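DataFrame also has a convenient join instance method for merging by index. It can also be used to combine several DataFrame objects that have the same or similar indexes but non-overlapping columns.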

In [68]: left2 = pd.DataFrame([[1., 2.], [3., 4.], [5., 6.]], index=['a', 'c', 'e'], columns=['Ohio', 'Nevada'])

In [69]: right2 = pd.DataFrame([[7., 8.], [9., 10.], [11., 12.], [13, 14]], index=['b', 'c', 'd', 'e'], columns=['Missouri', 'Alabama'])

In [72]: pd.merge(left2, right2, how='outer', left_index=True, right_index=True)

In [73]: left2.join(right2, how='outer') # equivalent to the line above

Out[73]:

Ohio Nevada Missouri Alabama

a 1.0 2.0 NaN NaN

b NaN NaN 7.0 8.0

c 3.0 4.0 9.0 10.0

d NaN NaN 11.0 12.0

e 5.0 6.0 13.0 14.0

DataFrame's join method performs a left join by default, preserving the row index of the left frame.
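The relationship between `join` and an index-on-index `merge` can be confirmed on the two frames above. This is a minimal sketch; sorting both results before comparing is a precaution I added so the check does not depend on row order.

```python
import pandas as pd

left2 = pd.DataFrame([[1., 2.], [3., 4.], [5., 6.]],
                     index=['a', 'c', 'e'], columns=['Ohio', 'Nevada'])
right2 = pd.DataFrame([[7., 8.], [9., 10.], [11., 12.], [13, 14]],
                      index=['b', 'c', 'd', 'e'], columns=['Missouri', 'Alabama'])

via_merge = pd.merge(left2, right2, how='outer', left_index=True, right_index=True)
via_join = left2.join(right2, how='outer')

# With no 'how', join keeps exactly the left frame's index.
default_join = left2.join(right2)

print(via_merge.sort_index().equals(via_join.sort_index()))
print(default_join)
```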

- For simple index-on-index merges, you can also pass a list of DataFrames to join:

another = pd.DataFrame([[7., 8.], [9., 10.], [11., 12.], [16., 17.]], index=['a', 'c', 'e', 'f'], columns=['New York', 'Oregon'])

In [77]: left2.join([right2, another])

Out[77]:

Ohio Nevada Missouri Alabama New York Oregon

a 1.0 2.0 NaN NaN 7.0 8.0

c 3.0 4.0 9.0 10.0 9.0 10.0

e 5.0 6.0 13.0 14.0 11.0 12.0

In [78]: left2.join([right2, another], how='outer')

Out[78]:

Ohio Nevada Missouri Alabama New York Oregon

a 1.0 2.0 NaN NaN 7.0 8.0

b NaN NaN 7.0 8.0 NaN NaN

c 3.0 4.0 9.0 10.0 9.0 10.0

d NaN NaN 11.0 12.0 NaN NaN

e 5.0 6.0 13.0 14.0 11.0 12.0

f NaN NaN NaN NaN 16.0 17.0

- Another kind of data combination operation is referred to interchangeably as concatenation, binding, or stacking. NumPy's concatenate function does this with NumPy arrays:

arr = np.arange(12).reshape((3, 4))

np.concatenate([arr, arr], axis=1)

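- For pandas objects, having labeled axes lets you generalize array concatenation further. There are several things to consider:

- If the objects are indexed differently on the other axes, should we combine the distinct elements of those axes or use only the shared values (the intersection)?

- Do the concatenated chunks of data need to be identifiable in the resulting object?

- Does the concatenation axis contain data that needs to be preserved? In many cases, the default integer labels of a DataFrame are best discarded during concatenation.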

- By default, concat works along axis=0, producing a new Series:

In [82]: s1 = pd.Series([0, 1], index=['a', 'b'])

In [83]: s2 = pd.Series([2, 3, 4], index=['c', 'd', 'e'])

In [84]: s3 = pd.Series([5, 6], index=['f', 'g'])

In [85]: pd.concat([s1, s2, s3])

Out[85]:

a 0

b 1

c 2

d 3

e 4

f 5

g 6

dtype: int64

- If you pass axis=1, the result is instead a DataFrame (axis=1 is the columns):

In [86]: pd.concat([s1, s2, s3], axis=1)

Out[86]:

0 1 2

a 0.0 NaN NaN

b 1.0 NaN NaN

c NaN 2.0 NaN

d NaN 3.0 NaN

e NaN 4.0 NaN

f NaN NaN 5.0

g NaN NaN 6.0

In [87]: s4 = pd.concat([s1, s3])

In [89]: pd.concat([s1, s4], axis=1) # default join='outer': union of the indexes

Out[89]:

0 1

a 0.0 0

b 1.0 1

f NaN 5

g NaN 6

In [90]: pd.concat([s1, s4], axis=1, join='inner') # intersection of the indexes

Out[90]:

0 1

a 0 0

b 1 1

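- The join_axes argument specifies the index to use on the other axes. (join_axes was removed in pandas 1.0, so the call below only runs on older releases; on current versions, reindex the concatenated result instead.)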

In [91]: pd.concat([s1, s4], axis=1, join_axes=[['a', 'c', 'b', 'e']])

Out[91]:

0 1

a 0.0 0.0

c NaN NaN

b 1.0 1.0

e NaN NaN
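On pandas versions without `join_axes`, the same table can be obtained by concatenating first and then calling `reindex` with the desired labels. The sketch below assumes that substitution and reuses `s1`, `s3`, and `s4` from above.

```python
import pandas as pd

s1 = pd.Series([0, 1], index=['a', 'b'])
s3 = pd.Series([5, 6], index=['f', 'g'])
s4 = pd.concat([s1, s3])

# Concatenate along the columns, then pick the row labels explicitly.
aligned = pd.concat([s1, s4], axis=1).reindex(['a', 'c', 'b', 'e'])
print(aligned)
```

Labels that appear in neither input ('c' and 'e' here) come back as rows of NaN, matching the output shown above.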

- Suppose you want to create a hierarchical index on the concatenation axis. The keys argument does this:

In [92]: result = pd.concat([s1, s1, s3], keys=['one','two', 'three'])

In [93]: result

Out[93]:

one a 0

b 1

two a 0

b 1

three f 5

g 6

dtype: int64

In [94]: result.unstack()

Out[94]:

a b f g

one 0.0 1.0 NaN NaN

two 0.0 1.0 NaN NaN

three NaN NaN 5.0 6.0

When combining Series along axis=1, the keys become the DataFrame column headers:

In [95]: pd.concat([s1, s2, s3], axis=1, keys=['one','two', 'three'])

Out[95]:

one two three

a 0.0 NaN NaN

b 1.0 NaN NaN

c NaN 2.0 NaN

d NaN 3.0 NaN

e NaN 4.0 NaN

f NaN NaN 5.0

g NaN NaN 6.0

- The same logic extends to DataFrame objects:

In [96]: df1 = pd.DataFrame(np.arange(6).reshape(3, 2), index=['a', 'b', 'c'], columns=['one', 'two'])

In [97]: df2 = pd.DataFrame(5 + np.arange(4).reshape(2, 2), index=['a', 'c'], columns=['three', 'four'])

In [100]: pd.concat([df1, df2], axis=1, keys=['level1', 'level2'])

Out[100]:

level1 level2

one two three four

a 0 1 5.0 6.0

b 2 3 NaN NaN

c 4 5 7.0 8.0

result = pd.concat([df1, df2], axis=1, keys=['level1', 'level2']) # name the frame shown above

In [101]: result['level1']

Out[101]:

one two

a 0 1

b 2 3

c 4 5

In [102]: result['level1'].loc['a']

Out[102]:

one 0

two 1

Name: a, dtype: int32

If you pass a dict of objects instead of a list, the dict's keys are used for the keys option:

In [101]: pd.concat({'level1': df1, 'level2': df2}, axis=1)

Out[101]:

level1 level2

one two three four

a 0 1 5.0 6.0

b 2 3 NaN NaN

c 4 5 7.0 8.0

- The names argument names the axis levels that are created:

pd.concat([df1, df2], axis=1, keys=['level1', 'level2'], names=['upper', 'lower'])

- If the row index of the DataFrame does not contain any relevant data, pass ignore_index=True:

In [103]: df1 = pd.DataFrame(np.random.randn(3, 4), columns=['a', 'b', 'c', 'd'])

In [104]: df2 = pd.DataFrame(np.random.randn(2, 3), columns=['b', 'd', 'a'])

In [107]: pd.concat([df1, df2], ignore_index=True)

Out[107]:

a b c d

0 1.246435 1.007189 -1.296221 0.274992

1 0.228913 1.352917 0.886429 -2.001637

2 -0.371843 1.669025 -0.438570 -0.539741

3 -1.021228 0.476985 NaN 3.248944

4 0.302614 -0.577087 NaN 0.124121
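Both options can be tried on small deterministic frames, which makes the alignment easier to follow than with random values. In this sketch the integer data is an assumption made for readability; the column layouts match the frames above.

```python
import numpy as np
import pandas as pd

df1 = pd.DataFrame(np.arange(12).reshape(3, 4), columns=['a', 'b', 'c', 'd'])
df2 = pd.DataFrame(np.arange(6).reshape(2, 3), columns=['b', 'd', 'a'])

# ignore_index=True discards the original row labels and renumbers from 0.
stacked = pd.concat([df1, df2], ignore_index=True)
print(stacked)

# names= labels the column levels created by keys=.
labeled = pd.concat([df1, df2], axis=1,
                    keys=['level1', 'level2'], names=['upper', 'lower'])
print(labeled.columns.names)
```

Columns are matched by name rather than position, so `df2`'s 'a' values land under 'a' and its missing 'c' column is filled with NaN.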

- Combining data with overlap

a = pd.Series([np.nan, 2.5, np.nan, 3.5, 4.5, np.nan], index=['f', 'e', 'd', 'c', 'b', 'a'])

b = pd.Series(np.arange(len(a), dtype=np.float64), index=['f', 'e', 'd', 'c', 'b', 'a'])

b.iloc[-1] = np.nan # set the last element by position

In [113]: np.where(pd.isnull(a), b, a)

Out[113]: array([ 0. , 2.5, 2. , 3.5, 4.5, nan])

Series has a combine_first method that performs the equivalent of this operation, along with pandas's usual data alignment logic:

In [114]: b[:-2].combine_first(a[2:])

Out[114]:

a NaN

b 4.5

c 3.0

d 2.0

e 1.0

f 0.0

dtype: float64

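- With DataFrames, combine_first does the same thing column by column, so you can think of it as "patching" missing data in the calling object with data from the object you pass: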

In [115]: df1 = pd.DataFrame({'a': [1., np.nan, 5., np.nan],

.....: 'b': [np.nan, 2., np.nan, 6.],

.....: 'c': range(2, 18, 4)})

In [116]: df2 = pd.DataFrame({'a': [5., 4., np.nan, 3., 7.],

.....: 'b': [np.nan, 3., 4., 6., 8.]})

In [119]: df1.combine_first(df2)

Out[119]:

a b c

0 1.0 NaN 2.0

1 4.0 2.0 6.0

2 5.0 4.0 10.0

3 3.0 6.0 14.0

4 7.0 8.0 NaN
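To connect the `np.where` expression with `combine_first`, the sketch below computes the patch both ways on the two Series from above and compares them. Sorting before the comparison is a precaution I added so the check does not depend on the order of the resulting index.

```python
import numpy as np
import pandas as pd

labels = ['f', 'e', 'd', 'c', 'b', 'a']
a = pd.Series([np.nan, 2.5, np.nan, 3.5, 4.5, np.nan], index=labels)
b = pd.Series(np.arange(len(a), dtype=np.float64), index=labels)
b.iloc[-1] = np.nan

# Element-wise: take b where a is missing, otherwise keep a.
via_where = pd.Series(np.where(pd.isnull(a), b, a), index=a.index)

# Label-aligned: patch holes in a with values from b.
patched = a.combine_first(b)

print(patched.sort_index())
print(patched.sort_index().equals(via_where.sort_index()))
```

The `np.where` version relies on both Series being in the same order, while `combine_first` aligns on labels and therefore also works when the indexes only partly overlap.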

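III. Reshaping and Pivoting

There are a number of basic operations for rearranging tabular data. These are alternately referred to as reshape or pivot operations.

- Hierarchical indexing provides a consistent way to rearrange data in a DataFrame. There are two primary actions:

- stack: "rotates" or pivots from the columns in the data to the rows.

- unstack: pivots from the rows into the columns.

- Reshaping with hierarchical indexing: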


In [120]: data = pd.DataFrame(np.arange(6).reshape((2, 3)),

.....: index=pd.Index(['Ohio','Colorado'], name='state'),

.....: columns=pd.Index(['one', 'two', 'three'],

.....: name='number'))

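Using the stack method on this data pivots the columns into the rows, producing a Series; from a hierarchically indexed Series, you can rearrange the data back into a DataFrame with unstack: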

In [122]: result = data.stack()

In [123]: result

Out[123]:

state number

Ohio one 0

two 1

three 2

Colorado one 3

two 4

three 5

dtype: int64

In [124]: result.unstack()

Out[124]:

number one two three

state

Ohio 0 1 2

Colorado 3 4 5

- By default, unstack operates on the innermost level (as does stack). You can unstack a different level by passing a level number or name:

In [125]: result.unstack(0)

Out[125]:

state Ohio Colorado

number

one 0 3

two 1 4

three 2 5

In [126]: result.unstack('state')

Out[126]:

state Ohio Colorado

number

one 0 3

two 1 4

three 2 5

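- Unstacking might introduce missing data if not all of the values in the level are found in each of the subgroups. Stacking filters out missing data by default, so the operation is more easily invertible: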

In [132]: data2.unstack()

Out[132]:

a b c d e

one 0.0 1.0 2.0 3.0 NaN

two NaN NaN 4.0 5.0 6.0

In [133]: data2.unstack().stack()

Out[133]:

one a 0.0

b 1.0

c 2.0

d 3.0

two c 4.0

d 5.0

e 6.0

dtype: float64

In [134]: data2.unstack().stack(dropna=False)

Out[134]:

one a 0.0

b 1.0

c 2.0

d 3.0

e NaN

two a NaN

b NaN

c 4.0

d 5.0

e 6.0

dtype: float64
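The notes never define `data2`, so the sketch below reconstructs one definition that is consistent with the output shown; the helper Series `s1` and `s2` are assumptions. It then checks the round trip, with an explicit `dropna()` added so the result does not depend on whether the installed pandas version drops missing values inside `stack`.

```python
import pandas as pd

# Assumed inputs: two groups that share only the labels 'c' and 'd'.
s1 = pd.Series([0, 1, 2, 3], index=['a', 'b', 'c', 'd'])
s2 = pd.Series([4, 5, 6], index=['c', 'd', 'e'])
data2 = pd.concat([s1, s2], keys=['one', 'two'])

# Unstacking introduces NaN where a group lacks a label.
wide = data2.unstack()
print(wide)

# Stacking back and dropping the NaN entries recovers the original labels.
round_trip = wide.stack().dropna()
print(round_trip)
```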

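DataFrame's pivot method reshapes data from long format to wide format, and the melt function reshapes it from wide format to long format; the two operations are inverses of each other.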

In[157]: df = pd.DataFrame({'key':['foo','bar','baz'], 'A':[1,2,3], 'B':[4,5,6], 'C':[7,8,9]})

In[158]: df

Out[158]:

A B C key

0 1 4 7 foo

1 2 5 8 bar

2 3 6 9 baz

# When using pandas.melt, we must indicate which columns (if any) are group indicators. Here key is the only group indicator:

In[159]: melted = pd.melt(df,['key'])

In[160]: melted

Out[160]:

key variable value

0 foo A 1

1 bar A 2

2 baz A 3

3 foo B 4

4 bar B 5

5 baz B 6

6 foo C 7

7 bar C 8

8 baz C 9

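Using pivot, we can reshape back to the original layout: reshaped = melted.pivot(index='key', columns='variable', values='value')

Note that pivot is equivalent to creating a hierarchical index with set_index followed by a call to unstack: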

melted.set_index(['key', 'variable']).unstack('variable')

value

variable A B C

key

bar 2 5 8

baz 3 6 9

foo 1 4 7
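The full round trip from wide to long and back can be sketched as follows. Keyword arguments are used for `pivot` because recent pandas versions no longer accept them positionally; the final clean-up steps (`reset_index` and clearing the columns' name) are my additions to make the result comparable with the original frame.

```python
import pandas as pd

df = pd.DataFrame({'key': ['foo', 'bar', 'baz'],
                   'A': [1, 2, 3], 'B': [4, 5, 6], 'C': [7, 8, 9]})

# Wide -> long: one row per (key, variable) pair.
melted = pd.melt(df, id_vars=['key'])

# Long -> wide: 'key' becomes the index, 'variable' the columns.
reshaped = melted.pivot(index='key', columns='variable', values='value')

# Move 'key' back into a column and drop the leftover 'variable' label.
restored = reshaped.reset_index()
restored.columns.name = None
print(restored)
```

The rows of `restored` come back sorted by key (bar, baz, foo), so the data matches the original frame up to row order.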

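Now that you have covered the pandas basics for data import, cleaning, and reshaping, we can move on to data visualization with matplotlib. We will return to pandas later to learn more advanced analytics.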