Kubernetes cluster orchestration: k8s storage (volumes, persistent volumes, StatefulSet controller)

volumes

emptyDir volume

vim emptydir.yaml

apiVersion: v1
kind: Pod
metadata:
  name: vol1
spec:
  containers:
  - image: busyboxplus
    name: vm1
    command: ["sleep", "300"]
    volumeMounts:
    - mountPath: /cache
      name: cache-volume
  - name: vm2
    image: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: cache-volume
  volumes:
  - name: cache-volume
    emptyDir:
      medium: Memory
      sizeLimit: 100Mi

kubectl apply -f emptydir.yaml

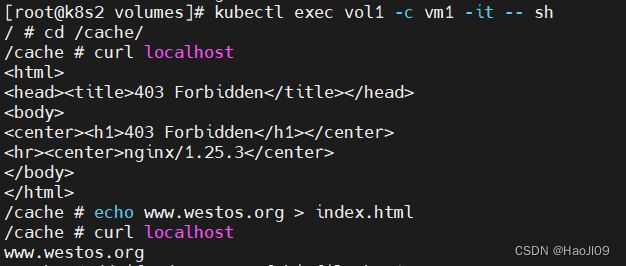
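The `sizeLimit: 100Mi` in the manifest uses Kubernetes quantity notation, where `Mi` means mebibytes. As a rough illustration of why the 200 MiB `dd` write in the test that follows cannot fit in this volume, the Python sketch below converts binary-suffix quantities to bytes. `parse_binary_quantity` is a hypothetical helper written for this example; it handles only the `Ki`/`Mi`/`Gi`/`Ti` suffixes, not the full Kubernetes quantity grammar.

```python
# Simplified sketch: convert Kubernetes-style binary quantities to bytes.
# parse_binary_quantity is a hypothetical helper, not part of any Kubernetes client.
BINARY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def parse_binary_quantity(quantity: str) -> int:
    """Return the number of bytes for values such as '100Mi' or '1Gi'."""
    for suffix, factor in BINARY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # plain byte count without a suffix


size_limit = parse_binary_quantity("100Mi")    # emptyDir sizeLimit from the manifest
dd_bytes = 200 * parse_binary_quantity("1Mi")  # dd bs=1M count=200

print(size_limit, dd_bytes, dd_bytes > size_limit)
```

The write is twice the size of the limit, so the `bigfile` test is expected to hit the volume's size restriction.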

kubectl get pod

kubectl exec vol1 -c vm1 -it -- sh

/ # cd /cache/

/cache # curl localhost

/cache # echo www.westos.org > index.html

/cache # curl localhost

/cache # dd if=/dev/zero of=bigfile bs=1M count=200

/cache # du -h bigfile

hostPath volume

vim hostpath.yaml

apiVersion: v1
kind: Pod
metadata:
  name: vol2
spec:
  nodeName: k8s4
  containers:
  - image: nginx
    name: test-container
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: test-volume
  volumes:
  - name: test-volume
    hostPath:
      path: /data
      type: DirectoryOrCreate

kubectl apply -f hostpath.yaml

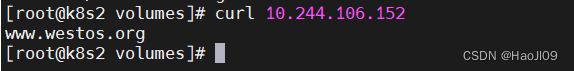

kubectl get pod -o wide

[root@k8s4 data]# echo www.westos.org > index.html

curl 10.244.106.152

NFS volume

Configure the NFS server

[root@k8s1 ~]# yum install -y nfs-utils

[root@k8s1 ~]# vim /etc/exports

/nfsdata *(rw,sync,no_root_squash)

[root@k8s1 ~]# mkdir -m 777 /nfsdata

[root@k8s1 ~]# systemctl enable --now nfs

[root@k8s1 ~]# showmount -e

vim nfs.yaml

apiVersion: v1
kind: Pod
metadata:
  name: nfs
spec:
  containers:
  - image: nginx
    name: test-container
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: test-volume
  volumes:
  - name: test-volume
    nfs:
      server: 192.168.92.11
      path: /nfsdata

The nfs-utils package must be installed on every k8s node:

yum install -y nfs-utils

If it is not installed, the Pod fails with the following error:

kubectl apply -f nfs.yaml

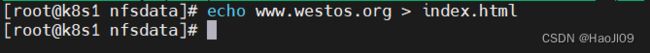

kubectl get pod -o wide

Create a test page on the NFS server

[root@k8s1 ~]# cd /nfsdata/

[root@k8s1 nfsdata]# echo www.westos.org > index.html

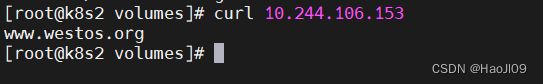

[root@k8s2 volumes]# curl 10.244.106.153

Persistent volumes

Create the NFS export directories

[root@k8s1 ~]# cd /nfsdata/

[root@k8s1 nfsdata]# mkdir pv1 pv2 pv3

Create static PVs

vim pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv1
spec:
  capacity:
    storage: 5Gi
  volumeMode: Filesystem
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs
  nfs:
    path: /nfsdata/pv1
    server: 192.168.92.11
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv2
spec:
  capacity:
    storage: 10Gi
  volumeMode: Filesystem
  accessModes:
  - ReadWriteMany
  persistentVolumeReclaimPolicy: Delete
  storageClassName: nfs
  nfs:
    path: /nfsdata/pv2
    server: 192.168.92.11
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv3
spec:
  capacity:
    storage: 15Gi
  volumeMode: Filesystem
  accessModes:
  - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  storageClassName: nfs
  nfs:
    path: /nfsdata/pv3
    server: 192.168.92.11

kubectl apply -f pv.yaml

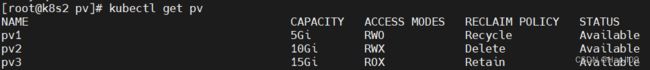

kubectl get pv

Create PVCs

vim pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc1
spec:
  storageClassName: nfs
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc2
spec:
  storageClassName: nfs
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 10Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc3
spec:
  storageClassName: nfs
  accessModes:
  - ReadOnlyMany
  resources:
    requests:
      storage: 15Gi

kubectl apply -f pvc.yaml

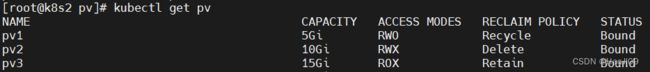
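Each claim binds to a PV with the same storage class, a compatible access mode, and at least the requested capacity. The following Python sketch is a simplified model of that matching, not the actual controller logic; the `Volume` and `Claim` classes and the `find_volume` helper are hypothetical names introduced for illustration, populated with the values from pv.yaml and pvc.yaml.

```python
# Simplified model of static PV/PVC matching; the real controller applies more rules.
from dataclasses import dataclass
from typing import Optional

GI = 2**30


@dataclass
class Volume:
    name: str
    capacity: int
    access_modes: frozenset
    storage_class: str


@dataclass
class Claim:
    name: str
    request: int
    access_modes: frozenset
    storage_class: str


def find_volume(claim: Claim, volumes: list) -> Optional[Volume]:
    """Pick the smallest available volume that satisfies the claim."""
    candidates = [
        v for v in volumes
        if v.storage_class == claim.storage_class
        and claim.access_modes <= v.access_modes
        and v.capacity >= claim.request
    ]
    return min(candidates, key=lambda v: v.capacity, default=None)


volumes = [
    Volume("pv1", 5 * GI, frozenset({"ReadWriteOnce"}), "nfs"),
    Volume("pv2", 10 * GI, frozenset({"ReadWriteMany"}), "nfs"),
    Volume("pv3", 15 * GI, frozenset({"ReadOnlyMany"}), "nfs"),
]
claims = [
    Claim("pvc1", 1 * GI, frozenset({"ReadWriteOnce"}), "nfs"),
    Claim("pvc2", 10 * GI, frozenset({"ReadWriteMany"}), "nfs"),
    Claim("pvc3", 15 * GI, frozenset({"ReadOnlyMany"}), "nfs"),
]

bindings = {}
available = list(volumes)
for claim in claims:
    match = find_volume(claim, available)
    if match is not None:
        bindings[claim.name] = match.name
        available.remove(match)  # a PV can be bound to only one claim

print(bindings)
```

Note that pvc1 asks for only 1Gi but still takes the whole 5Gi pv1, because it is the only volume offering ReadWriteOnce.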

kubectl get pvc

kubectl get pv创建pod

vim pod.yamlapiVersion: v1

kind: Pod

metadata:

name: test-pod1

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- mountPath: /usr/share/nginx/html

name: vol1

volumes:

- name: vol1

persistentVolumeClaim:

claimName: pvc1

---

apiVersion: v1

kind: Pod

metadata:

name: test-pod2

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- mountPath: /usr/share/nginx/html

name: vol1

volumes:

- name: vol1

persistentVolumeClaim:

claimName: pvc2

---

apiVersion: v1

kind: Pod

metadata:

name: test-pod3

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- mountPath: /usr/share/nginx/html

name: vol1

volumes:

- name: vol1

persistentVolumeClaim:

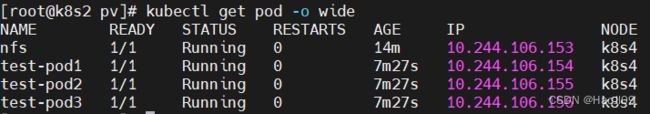

claimName: pvc3kubectl apply -f pod.yaml

kubectl get pod -o wide

Create test pages in the NFS export directories

echo pv1 > pv1/index.html

echo pv2 > pv2/index.html

echo pv3 > pv3/index.html

[root@k8s2 pv]# curl 10.244.106.154

[root@k8s2 pv]# curl 10.244.106.155

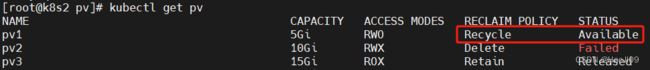

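[root@k8s2 pv]# curl 10.244.106.156

Clean up the resources. They must be deleted in order: pod -> pvc -> pv

kubectl delete -f pod.yaml

kubectl delete -f pvc.yaml

After the PVCs are deleted, the PVs are reclaimed; a PV whose reclaim policy is Recycle is scrubbed and made available for reuse.

kubectl get pv

Recycling a PV requires pulling a helper image, so import that image on the worker nodes in advance.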

Import the image with containerd

[root@k8s3 ~]# ctr -n=k8s.io image import debian-base.tar

[root@k8s4 ~]# ctr -n=k8s.io image import debian-base.tar

Delete the PVs

kubectl delete -f pv.yaml

StorageClass

Project page: GitHub - kubernetes-sigs/nfs-subdir-external-provisioner: Dynamic sub-dir volume provisioner on a remote NFS server.

Upload the image

Create the ServiceAccount and grant it permissions

[root@k8s2 storageclass]# vim nfs-client.yaml

apiVersion: v1
kind: Namespace
metadata:
  labels:
    kubernetes.io/metadata.name: nfs-client-provisioner
  name: nfs-client-provisioner
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: nfs-client-provisioner
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: nfs-client-provisioner
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: nfs-client-provisioner
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: nfs-client-provisioner
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  namespace: nfs-client-provisioner
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: sig-storage/nfs-subdir-external-provisioner:v4.0.2
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.92.11
            - name: NFS_PATH
              value: /nfsdata
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.92.11
            path: /nfsdata
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  archiveOnDelete: "false"

kubectl apply -f nfs-client.yaml

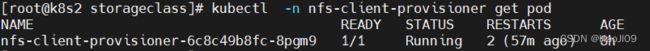

kubectl -n nfs-client-provisioner get pod

kubectl get sc

Create a PVC

vim pvc.yaml

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: test-claim
spec:
  storageClassName: nfs-client
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 1Gi

kubectl apply -f pvc.yaml

kubectl get pvc创建pod

vim pod.yamlkind: Pod

apiVersion: v1

metadata:

name: test-pod

spec:

containers:

- name: test-pod

image: busybox

command:

- "/bin/sh"

args:

- "-c"

- "touch /mnt/SUCCESS && exit 0 || exit 1"

volumeMounts:

- name: nfs-pvc

mountPath: "/mnt"

restartPolicy: "Never"

volumes:

- name: nfs-pvc

persistentVolumeClaim:

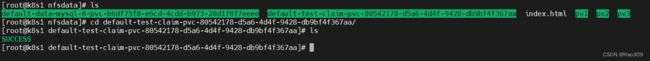

claimName: test-claimkubectl apply -f pod.yamlpod会在pv中创建一个文件

回收

kubectl delete -f pod.yaml

kubectl delete -f pvc.yaml

Set the default StorageClass so that storageClassName can be omitted when creating a PVC:

kubectl patch storageclass nfs-client -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

kubectl get sc

StatefulSet controller

vim headless.yamlapiVersion: v1

kind: Service

metadata:

name: nginx-svc

labels:

app: nginx

spec:

ports:

- port: 80

name: web

clusterIP: None

selector:

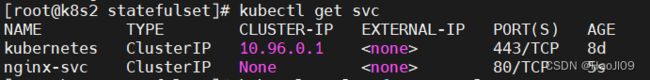

app: nginxkubectl apply -f headless.yaml

kubectl get svcvim statefulset.yamlapiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

serviceName: "nginx-svc"

replicas: 3

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

volumeMounts:

- name: www

mountPath: /usr/share/nginx/html

volumeClaimTemplates:

- metadata:

name: www

spec:

storageClassName: nfs-client

accessModes:

- ReadWriteOnce

resources:

requests:

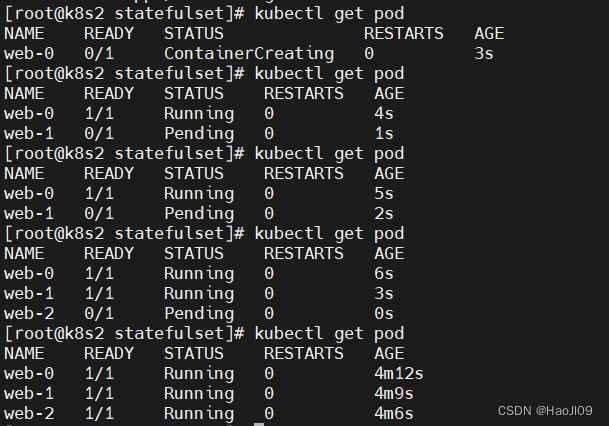

storage: 1Gikubectl apply -f statefulset.yaml

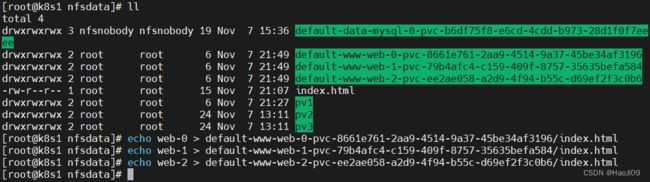

kubectl get pod

Create test pages in the NFS export directory

echo web-0 > default-www-web-0-pvc-8661e761-2aa9-4514-9a37-45be34af3196/index.html

echo web-1 > default-www-web-1-pvc-79b4afc4-c159-409f-8757-35635befa584/index.html

echo web-2 > default-www-web-2-pvc-ee2ae058-a2d9-4f94-b55c-d69ef2f3c0b6/index.html

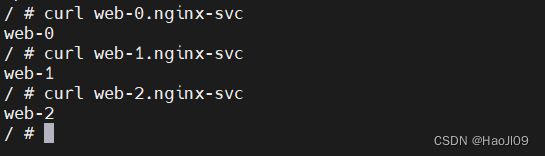
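The directory names used above follow a predictable pattern. A StatefulSet names each PVC `<claim template>-<StatefulSet name>-<ordinal>`, and the nfs-subdir-external-provisioner, in its default configuration, creates a directory named `<namespace>-<PVC name>-<PV name>`. The Python sketch below reproduces the first directory name; the two helper functions are hypothetical names for illustration, and the PV name is generated by the cluster, so it is passed in as-is.

```python
# Sketch of the naming scheme behind the per-Pod NFS directories.
def statefulset_pvc_name(template: str, statefulset: str, ordinal: int) -> str:
    """PVC name created from a volumeClaimTemplate for one replica."""
    return f"{template}-{statefulset}-{ordinal}"


def provisioner_dir(namespace: str, pvc_name: str, pv_name: str) -> str:
    """Directory created on the NFS export (default naming, assumed here)."""
    return f"{namespace}-{pvc_name}-{pv_name}"


pvc = statefulset_pvc_name("www", "web", 0)
directory = provisioner_dir(
    "default", pvc, "pvc-8661e761-2aa9-4514-9a37-45be34af3196"
)
print(directory)
```

Because the PVC name depends only on the template, the StatefulSet name, and the ordinal, a recreated `web-0` Pod reattaches to the same claim and therefore the same directory.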

kubectl run demo --image busyboxplus -it

/ # curl web-0.nginx-svc

/ # curl web-1.nginx-svc

/ # curl web-2.nginx-svc
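The short names resolve because every StatefulSet Pod gets a stable DNS record of the form `<pod>.<headless service>` under its headless Service. The Python sketch below builds the fully qualified names; `pod_fqdn` is a hypothetical helper, and the `default` namespace and `cluster.local` cluster domain are assumptions (common defaults, not stated in this walkthrough).

```python
# Sketch: stable DNS names for StatefulSet Pods behind a headless Service.
def pod_fqdn(statefulset: str, ordinal: int, service: str,
             namespace: str = "default",
             cluster_domain: str = "cluster.local") -> str:
    """Build <pod>.<service>.<namespace>.svc.<cluster domain>."""
    return f"{statefulset}-{ordinal}.{service}.{namespace}.svc.{cluster_domain}"


names = [pod_fqdn("web", i, "nginx-svc") for i in range(3)]
for name in names:
    print(name)
```

From a Pod in the same namespace, the DNS search path lets the shorter `web-0.nginx-svc` form work, which is what the `curl` commands rely on.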

Ordered StatefulSet teardown

kubectl scale statefulsets web --replicas=0

kubectl delete -f statefulset.yaml

kubectl delete pvc --all

MySQL primary-replica deployment

Official documentation: https://v1-25.docs.kubernetes.io/zh-cn/docs/tasks/run-application/run-replicated-stateful-application/

Upload the images

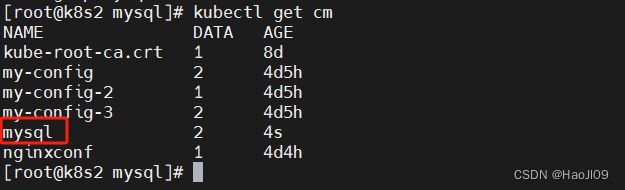

vim configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql
  labels:
    app: mysql
    app.kubernetes.io/name: mysql
data:
  primary.cnf: |
    [mysqld]
    log-bin
  replica.cnf: |
    [mysqld]
    super-read-only

kubectl apply -f configmap.yaml

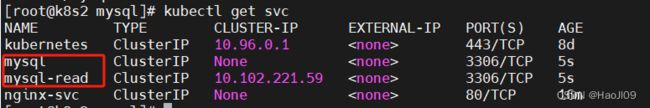
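The `init-mysql` init container of the StatefulSet defined later in this section chooses between these two files by parsing the Pod ordinal from the hostname, and derives the MySQL `server-id` as 100 plus the ordinal. The following Python sketch mirrors that shell logic for illustration only; `mysql_settings` is a hypothetical helper, not something that runs in the cluster.

```python
# Sketch of the init-mysql logic: hostname -> ordinal -> server-id and config file.
import re


def mysql_settings(hostname: str) -> dict:
    """Mirror the bash regex [[ $HOSTNAME =~ -([0-9]+)$ ]] from the init script."""
    match = re.search(r"-([0-9]+)$", hostname)
    if match is None:
        raise ValueError(f"no ordinal suffix in hostname: {hostname}")
    ordinal = int(match.group(1))
    return {
        # The offset avoids the reserved value server-id=0.
        "server_id": 100 + ordinal,
        # Ordinal 0 is the primary; every other replica is read-only.
        "config_file": "primary.cnf" if ordinal == 0 else "replica.cnf",
    }


for host in ("mysql-0", "mysql-1", "mysql-2"):
    print(host, mysql_settings(host))
```

This is why the StatefulSet's stable Pod names matter here: `mysql-0` always becomes the primary with binary logging enabled, regardless of which node it is scheduled on.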

kubectl get cm

vim svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: mysql
  labels:
    app: mysql
    app.kubernetes.io/name: mysql
spec:
  ports:
  - name: mysql
    port: 3306
  clusterIP: None
  selector:
    app: mysql
---
apiVersion: v1
kind: Service
metadata:
  name: mysql-read
  labels:
    app: mysql
    app.kubernetes.io/name: mysql
    readonly: "true"
spec:
  ports:
  - name: mysql
    port: 3306
  selector:
    app: mysql

kubectl apply -f svc.yaml

kubectl get svc

vim statefulset.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mysql
spec:
  selector:
    matchLabels:
      app: mysql
      app.kubernetes.io/name: mysql
  serviceName: mysql
  replicas: 3
  template:
    metadata:
      labels:
        app: mysql
        app.kubernetes.io/name: mysql
    spec:
      initContainers:
      - name: init-mysql
        image: mysql:5.7
        command:
        - bash
        - "-c"
        - |
          set -ex
          # Generate the MySQL server-id from the Pod ordinal index.
          [[ $HOSTNAME =~ -([0-9]+)$ ]] || exit 1
          ordinal=${BASH_REMATCH[1]}
          echo [mysqld] > /mnt/conf.d/server-id.cnf
          # Add an offset to avoid the reserved server-id=0 value.
          echo server-id=$((100 + $ordinal)) >> /mnt/conf.d/server-id.cnf
          # Copy the appropriate conf.d file from the config-map to the emptyDir.
          if [[ $ordinal -eq 0 ]]; then
            cp /mnt/config-map/primary.cnf /mnt/conf.d/
          else
            cp /mnt/config-map/replica.cnf /mnt/conf.d/
          fi
        volumeMounts:
        - name: conf
          mountPath: /mnt/conf.d
        - name: config-map
          mountPath: /mnt/config-map
      - name: clone-mysql
        image: xtrabackup:1.0
        command:
        - bash
        - "-c"
        - |
          set -ex
          # Skip the clone if data already exists.
          [[ -d /var/lib/mysql/mysql ]] && exit 0
          # Skip the clone on the primary (ordinal index 0).
          [[ `hostname` =~ -([0-9]+)$ ]] || exit 1
          ordinal=${BASH_REMATCH[1]}
          [[ $ordinal -eq 0 ]] && exit 0
          # Clone data from the previous peer.
          ncat --recv-only mysql-$(($ordinal-1)).mysql 3307 | xbstream -x -C /var/lib/mysql
          # Prepare the backup.
          xtrabackup --prepare --target-dir=/var/lib/mysql
        volumeMounts:
        - name: data
          mountPath: /var/lib/mysql
          subPath: mysql
        - name: conf
          mountPath: /etc/mysql/conf.d
      containers:
      - name: mysql
        image: mysql:5.7
        env:
        - name: MYSQL_ALLOW_EMPTY_PASSWORD
          value: "1"
        ports:
        - name: mysql
          containerPort: 3306
        volumeMounts:
        - name: data
          mountPath: /var/lib/mysql
          subPath: mysql
        - name: conf
          mountPath: /etc/mysql/conf.d
        resources:
          requests:
            cpu: 500m
            memory: 512Mi
        livenessProbe:
          exec:
            command: ["mysqladmin", "ping"]
          initialDelaySeconds: 30
          periodSeconds: 10
          timeoutSeconds: 5
        readinessProbe:
          exec:
            # Check that we can execute queries over TCP (skip-networking is off).
            command: ["mysql", "-h", "127.0.0.1", "-e", "SELECT 1"]
          initialDelaySeconds: 5
          periodSeconds: 2
          timeoutSeconds: 1
      - name: xtrabackup
        image: xtrabackup:1.0
        ports:
        - name: xtrabackup
          containerPort: 3307
        command:
        - bash
        - "-c"
        - |

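          set -ex
          cd /var/lib/mysql

          # Determine the binlog position of the cloned data, if any.
          if [[ -f xtrabackup_slave_info && "x$(<xtrabackup_slave_info)" != "x" ]]; then
            # XtraBackup already generated a partial "CHANGE MASTER TO" query
            # because we are cloning from an existing replica. Strip the trailing semicolon.
            cat xtrabackup_slave_info | sed -E 's/;$//g' > change_master_to.sql.in
            # Ignore xtrabackup_binlog_info in this case (it is useless).
            rm -f xtrabackup_slave_info xtrabackup_binlog_info
          elif [[ -f xtrabackup_binlog_info ]]; then
            # We are cloning directly from the primary. Parse the binlog position.
            [[ `cat xtrabackup_binlog_info` =~ ^(.*?)[[:space:]]+(.*?)$ ]] || exit 1
            rm -f xtrabackup_binlog_info xtrabackup_slave_info
            echo "CHANGE MASTER TO MASTER_LOG_FILE='${BASH_REMATCH[1]}',\
                  MASTER_LOG_POS=${BASH_REMATCH[2]}" > change_master_to.sql.in
          fi

          # Check whether we need to complete a clone by starting replication.
          if [[ -f change_master_to.sql.in ]]; then
            echo "Waiting for mysqld to be ready (accepting connections)"
            until mysql -h 127.0.0.1 -e "SELECT 1"; do sleep 1; done

            echo "Initializing replication from clone position"
            mysql -h 127.0.0.1 \
                  -e "$(<change_master_to.sql.in), \
                          MASTER_HOST='mysql-0.mysql', \
                          MASTER_USER='root', \
                          MASTER_PASSWORD='', \
                          MASTER_CONNECT_RETRY=10; \
                        START SLAVE;" || exit 1
            # In case of container restart, attempt this at most once.
            mv change_master_to.sql.in change_master_to.sql.in.orig
          fi

          # Start a server to send backups when requested by peers.
          exec ncat --listen --keep-open --send-only --max-conns=1 3307 -c \
            "xtrabackup --backup --slave-info --stream=xbstream --host=127.0.0.1 --user=root"
        volumeMounts:
        - name: data
          mountPath: /var/lib/mysql
          subPath: mysql
        - name: conf
          mountPath: /etc/mysql/conf.d
      volumes:
      - name: conf
        emptyDir: {}
      - name: config-map
        configMap:
          name: mysql
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          # 10Gi is the value used in the upstream Kubernetes example; adjust as needed.
          storage: 10Gi

kubectl apply -f statefulset.yaml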

kubectl get pod

Connection test

kubectl run demo --image mysql:5.7 -it -- bash

root@demo:/# mysql -h mysql-0.mysql

mysql> show databases;

Cleanup

kubectl delete -f statefulset.yaml

kubectl delete pvc --all