Deep Learning: Gradient Descent

Gradient Descent

- Gradient descent
- Mathematical formulas
- Results
- Problems with gradient descent
- Stochastic gradient descent

Gradient Descent

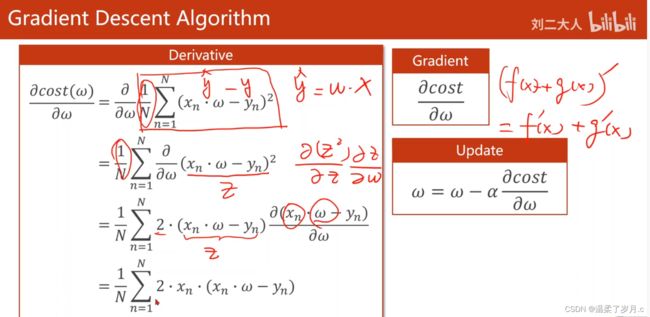

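Mathematical formulas

This example uses gradient descent to fit the linear model y = w * x, that is, to find the weight w that minimizes the mean squared error (MSE) on the training data.

Each step first computes the gradient of the loss with respect to w, then updates w using that gradient.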
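The block below is a sketch of the formulas that the code implements. Here α is the learning rate (0.01 in the code) and N is the number of samples:

$$
\begin{aligned}
\hat{y}_n &= w \cdot x_n \\
\text{cost}(w) &= \frac{1}{N}\sum_{n=1}^{N}\left(\hat{y}_n - y_n\right)^2 \\
\frac{\partial\,\text{cost}}{\partial w} &= \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\left(w x_n - y_n\right) \\
w &\leftarrow w - \alpha\,\frac{\partial\,\text{cost}}{\partial w}
\end{aligned}
$$

You can check the first step by hand. With x = 1, 2, 3, 4, y = 2x and w = 1, the gradient is (1/4)·Σ 2x_n(x_n − 2x_n) = −(2/4)(1 + 4 + 9 + 16) = −15. The update therefore gives w = 1 − 0.01·(−15) = 1.15, which is the value printed for epoch 0.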

```python
import numpy as np
import matplotlib.pyplot as plt

x_data = [1.0, 2.0, 3.0, 4.0]
y_data = [2.0, 4.0, 6.0, 8.0]

mse_list = []  # MSE recorded at every epoch, for plotting

w = 1.0  # note: the initial weight is set to 1.0

def forward(x):
    return w * x

# Mean squared error over the whole dataset
def cost(xs, ys):
    total = 0
    for x, y in zip(xs, ys):
        y_pred = forward(x)
        total += (y_pred - y) ** 2
    return total / len(xs)

# Gradient of the MSE with respect to w, averaged over all samples
def gradient(xs, ys):
    grad = 0
    for x, y in zip(xs, ys):
        grad += 2 * x * (w * x - y)
    return grad / len(xs)

print('Predict (before training)', 4, forward(4))

# Train for 100 epochs
for epoch in range(100):
    cost_val = cost(x_data, y_data)      # loss value, computed only for plotting
    grad_val = gradient(x_data, y_data)  # gradient of the loss
    # learning rate 0.01: it must be small enough, otherwise w overshoots and can diverge
    w = w - 0.01 * grad_val
    mse_list.append(cost_val)
    print('Epoch:', epoch, 'w=', w, 'cost=', cost_val)

print('Predict (after training)', 4, forward(4))

# Plot the loss curve
epoch_list = np.arange(0, 100, 1)
plt.plot(epoch_list, mse_list)
plt.xlabel("epoch")
plt.ylabel("mse")
plt.show()
```

Results

```text
Predict (before training) 4 4.0
Epoch: 0 w= 1.15 cost= 7.5
Epoch: 1 w= 1.2774999999999999 cost= 5.418750000000001
Epoch: 2 w= 1.385875 cost= 3.9150468750000016
Epoch: 3 w= 1.47799375 cost= 2.828621367187501
Epoch: 4 w= 1.5562946874999999 cost= 2.0436789377929685
Epoch: 5 w= 1.6228504843749998 cost= 1.4765580325554204
Epoch: 6 w= 1.6794229117187498 cost= 1.0668131785212922
Epoch: 7 w= 1.7275094749609374 cost= 0.7707725214816338
Epoch: 8 w= 1.7683830537167968 cost= 0.55688314677048
Epoch: 9 w= 1.8031255956592773 cost= 0.40234807354167157
Epoch: 10 w= 1.8326567563103857 cost= 0.29069648313385765
Epoch: 11 w= 1.857758242863828 cost= 0.21002820906421227
Epoch: 12 w= 1.8790945064342537 cost= 0.15174538104889318
Epoch: 13 w= 1.8972303304691156 cost= 0.10963603780782546
Epoch: 14 w= 1.9126457808987483 cost= 0.07921203731615392
Epoch: 15 w= 1.925748913763936 cost= 0.05723069696092115
Epoch: 16 w= 1.9368865766993457 cost= 0.041349178554265495
Epoch: 17 w= 1.9463535901944438 cost= 0.02987478150545676
Epoch: 18 w= 1.9544005516652772 cost= 0.021584529637692605
Epoch: 19 w= 1.9612404689154856 cost= 0.015594822663232907
Epoch: 20 w= 1.9670543985781628 cost= 0.011267259374185785
Epoch: 21 w= 1.9719962387914383 cost= 0.00814059489784921
Epoch: 22 w= 1.9761968029727226 cost= 0.0058815798136960945
Epoch: 23 w= 1.9797672825268142 cost= 0.004249441415395416
Epoch: 24 w= 1.9828021901477921 cost= 0.0030702214226231784
Epoch: 25 w= 1.9853818616256234 cost= 0.0022182349778452353
Epoch: 26 w= 1.9875745823817799 cost= 0.0016026747714931776
Epoch: 27 w= 1.989438395024513 cost= 0.0011579325224038112
Epoch: 28 w= 1.991022635770836 cost= 0.0008366062474367442
Epoch: 29 w= 1.9923692404052107 cost= 0.0006044480137730437
Epoch: 30 w= 1.993513854344429 cost= 0.0004367136899510165
Epoch: 31 w= 1.9944867761927647 cost= 0.00031552564098961234
Epoch: 32 w= 1.99531375976385 cost= 0.00022796727561499308
Epoch: 33 w= 1.9960166957992724 cost= 0.0001647063566318346
Epoch: 34 w= 1.9966141914293816 cost= 0.00011900034266650408
Epoch: 35 w= 1.9971220627149744 cost= 8.597774757655033e-05
Epoch: 36 w= 1.9975537533077283 cost= 6.211892262405537e-05
Epoch: 37 w= 1.9979206903115692 cost= 4.488092159587483e-05
Epoch: 38 w= 1.9982325867648338 cost= 3.242646585301842e-05
Epoch: 39 w= 1.9984976987501089 cost= 2.3428121578803835e-05
Epoch: 40 w= 1.9987230439375925 cost= 1.692681784068377e-05
Epoch: 41 w= 1.9989145873469536 cost= 1.2229625889894448e-05
Epoch: 42 w= 1.9990773992449105 cost= 8.835904705448865e-06
Epoch: 43 w= 1.999215789358174 cost= 6.383941149688757e-06
Epoch: 44 w= 1.9993334209544478 cost= 4.612397480649774e-06
Epoch: 45 w= 1.9994334078112805 cost= 3.33245717977035e-06
Epoch: 46 w= 1.9995183966395884 cost= 2.4077003123843227e-06
Epoch: 47 w= 1.9995906371436503 cost= 1.7395634756983151e-06
Epoch: 48 w= 1.9996520415721026 cost= 1.2568346111911193e-06
Epoch: 49 w= 1.9997042353362873 cost= 9.080630065859313e-07
Epoch: 50 w= 1.9997486000358442 cost= 6.560755222580743e-07
Epoch: 51 w= 1.9997863100304676 cost= 4.7401456483160105e-07
Epoch: 52 w= 1.9998183635258975 cost= 3.4247552309066444e-07
Epoch: 53 w= 1.999845608997013 cost= 2.4743856543302625e-07
Epoch: 54 w= 1.999868767647461 cost= 1.7877436352529204e-07
Epoch: 55 w= 1.9998884525003418 cost= 1.2916447764716773e-07
Epoch: 56 w= 1.9999051846252904 cost= 9.332133510001552e-08
Epoch: 57 w= 1.999919406931497 cost= 6.742466460983543e-08
Epoch: 58 w= 1.9999314958917724 cost= 4.8714320180508126e-08
Epoch: 59 w= 1.9999417715080066 cost= 3.5196096330379474e-08
Epoch: 60 w= 1.9999505057818057 cost= 2.542917959872535e-08
Epoch: 61 w= 1.999957929914535 cost= 1.8372582260029613e-08
Epoch: 62 w= 1.9999642404273548 cost= 1.327419068279643e-08
Epoch: 63 w= 1.9999696043632516 cost= 9.590602768272778e-09
Epoch: 64 w= 1.9999741637087638 cost= 6.929210500056835e-09
Epoch: 65 w= 1.9999780391524493 cost= 5.006354586314298e-09
Epoch: 66 w= 1.999981333279582 cost= 3.617091188568193e-09
Epoch: 67 w= 1.9999841332876447 cost= 2.6133483837386546e-09
Epoch: 68 w= 1.999986513294498 cost= 1.888144207242458e-09
Epoch: 69 w= 1.9999885363003234 cost= 1.3641841897252644e-09
Epoch: 70 w= 1.999990255855275 cost= 9.856230770713489e-10
Epoch: 71 w= 1.9999917174769837 cost= 7.121126731808042e-10
Epoch: 72 w= 1.9999929598554362 cost= 5.145014063749241e-10
Epoch: 73 w= 1.9999940158771208 cost= 3.7172726609486193e-10
Epoch: 74 w= 1.9999949134955526 cost= 2.6857294975413565e-10
Epoch: 75 w= 1.9999956764712197 cost= 1.9404395619846422e-10
Epoch: 76 w= 1.9999963250005368 cost= 1.4019675835727846e-10
Epoch: 77 w= 1.9999968762504563 cost= 1.0129215790946163e-10
Epoch: 78 w= 1.9999973448128878 cost= 7.318358408922187e-11
Epoch: 79 w= 1.9999977430909546 cost= 5.2875139505922e-11
Epoch: 80 w= 1.9999980816273113 cost= 3.820228829502065e-11
Epoch: 81 w= 1.9999983693832146 cost= 2.7601153294430312e-11
Epoch: 82 w= 1.9999986139757324 cost= 1.994183325506297e-11
Epoch: 83 w= 1.9999988218793725 cost= 1.4407974526944569e-11
Epoch: 84 w= 1.9999989985974667 cost= 1.0409761596639575e-11
Epoch: 85 w= 1.9999991488078466 cost= 7.521052753355296e-12
Epoch: 86 w= 1.9999992764866696 cost= 5.43396061571672e-12
Epoch: 87 w= 1.9999993850136693 cost= 3.926036544289031e-12
Epoch: 88 w= 1.999999477261619 cost= 2.8365614029723025e-12
Epoch: 89 w= 1.9999995556723762 cost= 2.0494156128291866e-12
Epoch: 90 w= 1.9999996223215197 cost= 1.480702779721521e-12
Epoch: 91 w= 1.9999996789732917 cost= 1.0698077583047718e-12
Epoch: 92 w= 1.999999727127298 cost= 7.729361059472377e-13
Epoch: 93 w= 1.9999997680582033 cost= 5.584463360924549e-13
Epoch: 94 w= 1.9999998028494728 cost= 4.034774778538369e-13
Epoch: 95 w= 1.9999998324220518 cost= 2.915124779890719e-13
Epoch: 96 w= 1.999999857558744 cost= 2.1061776543919866e-13
Epoch: 97 w= 1.9999998789249325 cost= 1.521713353234463e-13
Epoch: 98 w= 1.9999998970861925 cost= 1.0994378986595627e-13
Epoch: 99 w= 1.9999999125232637 cost= 7.943438830326513e-14
Predict (after training) 4 7.999999650093055
```

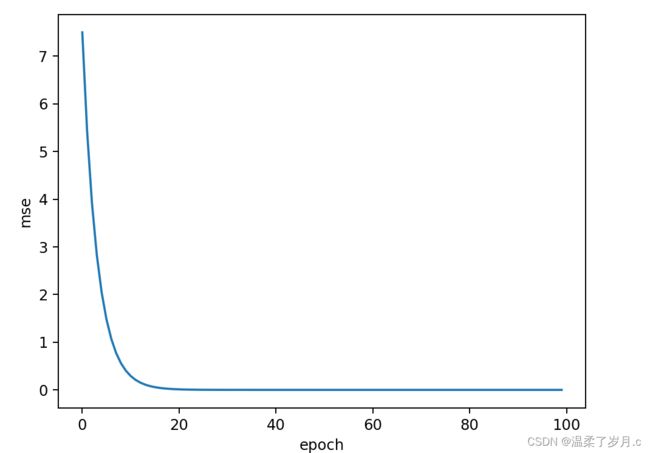

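In the plot, the x-axis is the training epoch and the y-axis is the loss (MSE). The loss falls steadily as training proceeds and approaches 0, although it may never reach exactly 0.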
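The sketch below shows why the curve flattens toward 0 without necessarily reaching it, and why the learning rate matters. For this dataset the averaged gradient simplifies to 15(w − 2), because the mean of 2x_n² over x = 1, 2, 3, 4 is 15. Each step therefore multiplies the error w − 2 by (1 − 15α). The code reruns the same full-batch update with a few learning rates. The helper name `train` and the extra learning rates are illustrative choices, not from the original.

```python
def train(lr, epochs=100, w=1.0):
    """Hypothetical helper: full-batch gradient descent for y = w * x on the dataset above."""
    x_data = [1.0, 2.0, 3.0, 4.0]
    y_data = [2.0, 4.0, 6.0, 8.0]
    for _ in range(epochs):
        # Gradient of the MSE, averaged over all samples (same formula as gradient() above)
        grad = sum(2 * x * (w * x - y) for x, y in zip(x_data, y_data)) / len(x_data)
        w -= lr * grad
    return w

for lr in (0.001, 0.01, 0.1, 0.2):
    print(f"lr={lr}: w after 100 epochs = {train(lr):.6g}")
```

With α = 0.01 the factor is 0.85, and the result matches the final w printed above. With α = 0.001 the factor is 0.985, so after 100 epochs w is still far from 2. Any α above 2/15 ≈ 0.133 makes |1 − 15α| > 1. For example, α = 0.2 gives a factor of −3, so w oscillates with growing amplitude and diverges.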

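Problems with gradient descent

Gradient descent can get stuck at a saddle point, where the overall (averaged) gradient is 0. At that point the update w = w - learning_rate * gradient leaves w unchanged, so w stops updating. Stochastic gradient descent (SGD) was proposed to address this. Instead of the gradient averaged over all samples, it uses the gradient of one randomly chosen sample (x, y) for each update.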
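Here is a minimal sketch of the saddle-point problem, on a toy function chosen only for illustration (it is not from the original). The function f(w1, w2) = w1² − w2² has zero gradient at the origin, so plain gradient descent started there never moves. Adding a little random noise to the gradient is enough to push w2 off the saddle. This noise is a simplified stand-in for the sample-to-sample fluctuation of stochastic gradients. The function names, the Gaussian noise model and the hyperparameters are all assumptions.

```python
import random

def toy_grad(w1, w2):
    # Gradient of the toy function f(w1, w2) = w1**2 - w2**2 (saddle point at the origin)
    return 2 * w1, -2 * w2

def descend(noise, steps=100, lr=0.1, seed=0):
    rng = random.Random(seed)
    w1, w2 = 0.0, 0.0  # start exactly on the saddle point
    for _ in range(steps):
        g1, g2 = toy_grad(w1, w2)
        # Assumed noise model: Gaussian noise standing in for stochastic-gradient fluctuation
        g1 += rng.gauss(0, noise)
        g2 += rng.gauss(0, noise)
        w1 -= lr * g1
        w2 -= lr * g2
    return w1, w2

print(descend(noise=0.0))   # exact gradient: stuck at the origin
print(descend(noise=0.01))  # noisy gradient: w2 moves far away from the saddle
```

Without noise, every step sees a zero gradient. With noise, any small offset in w2 is amplified by a factor of 1.2 per step, because the function curves downward in that direction.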

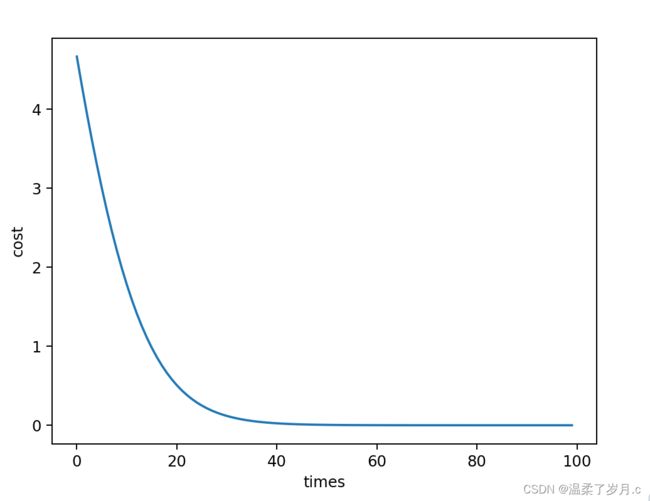

Stochastic Gradient Descent
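Full-batch gradient descent averages 2x_n(w x_n − y_n) over all N samples before each update. SGD instead updates w with the gradient of a single sample (x_n, y_n), where α is the learning rate:

$$
w \leftarrow w - \alpha \cdot 2\,x_n\left(w x_n - y_n\right), \qquad (x_n, y_n) \text{ chosen at random from the training set}
$$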


```python
# Stochastic gradient descent (SGD)
import random

import numpy as np
import matplotlib.pyplot as plt

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

w = 1.0  # initial weight

def forward(x):
    return w * x

# MSE over the whole dataset (used only for monitoring and plotting)
def cost(xs, ys):
    total = 0
    for x, y in zip(xs, ys):
        y_pred = forward(x)
        total += (y_pred - y) ** 2
    return total / len(xs)

# Gradient of a single sample's loss (w*x - y)**2 with respect to w
def gradient(x, y):
    return 2 * x * (w * x - y)

mse_list = []
for epoch in range(100):
    cost_val = cost(x_data, y_data)  # computed only for plotting
    # Visit the samples in random order and update w after every single sample
    samples = list(zip(x_data, y_data))
    random.shuffle(samples)
    for x, y in samples:
        grad_val = gradient(x, y)
        w -= 0.01 * grad_val
    mse_list.append(cost_val)
    print('Epoch:', epoch, 'w=', w, 'cost=', cost_val)

print('Predict (after training)', 4, forward(4))

epoch_list = np.arange(0, 100, 1)
plt.plot(epoch_list, mse_list)
plt.ylabel('cost')
plt.xlabel('epoch')
plt.show()
```
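Why can one random sample stand in for the whole batch? The check below uses the same data and starting weight (w = 1.0) as the SGD code. The per-sample gradients differ from one another, and that spread is the "noise" in SGD. On average, however, a uniformly drawn sample gives exactly the full-batch gradient. The helper name `sample_gradient`, the seed and the number of draws are illustrative assumptions.

```python
import random

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]
w = 1.0  # same starting weight as the SGD code
samples = list(zip(x_data, y_data))

def sample_gradient(x, y):
    # Gradient of a single sample's loss (w*x - y)**2 with respect to w
    return 2 * x * (w * x - y)

per_sample = [sample_gradient(x, y) for x, y in samples]
batch_grad = sum(per_sample) / len(per_sample)  # full-batch (averaged) gradient

# Draw samples uniformly at random, as SGD does, and average their gradients
rng = random.Random(0)
draws = [sample_gradient(*rng.choice(samples)) for _ in range(30000)]
sgd_mean = sum(draws) / len(draws)

print(per_sample)            # [-2.0, -8.0, -18.0]
print(batch_grad, sgd_mean)  # the random-draw average lands close to the batch gradient
```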