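Differentiable Physical-Transformation Neural Algorithms in Python (PyTorch)

Key points

Trainable hierarchical physical computation built from sequences of controlled physical transformations | Physical neural algorithms validated on multimode mechanical oscillation, nonlinear electronic oscillators, and optical second-harmonic generation | Training: input data is fed in, the physical system's transformation produces the output, and a differentiable digital model estimates the gradient of the loss | Multimode oscillation performs a controllable convolution on the input data | Mathematical representation of the physical neural algorithm and its differentiable mathematical model | Evaluation on the MNIST and vowel datasets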
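The training scheme in the third key point (forward pass through the physical system, gradient from a differentiable digital model) can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: `physical_system`, `digital_model_grad`, the tanh response, and the 5% gain mismatch are hypothetical stand-ins chosen only for illustration.

```python
import math


def physical_system(x, theta):
    """Hypothetical 'physical' transformation: a tanh response with a 5% gain
    error that the digital model does not capture."""
    return 1.05 * math.tanh(theta * x)


def digital_model_grad(x, theta):
    """Derivative w.r.t. theta of the hypothetical digital model tanh(theta * x)."""
    return x * (1.0 - math.tanh(theta * x) ** 2)


def train(x, target, theta=0.1, lr=0.5, steps=200):
    for _ in range(steps):
        y = physical_system(x, theta)                    # forward: physical output
        dloss_dy = 2.0 * (y - target)                    # gradient of squared error
        grad = dloss_dy * digital_model_grad(x, theta)   # backward: digital model
        theta -= lr * grad
    return theta


theta = train(x=1.0, target=0.5)
print(abs(physical_system(1.0, theta) - 0.5) < 1e-6)
```

Because the loss is computed from the physical output, the parameter settles where the physical system hits the target, even though the gradient comes from an imperfect model.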

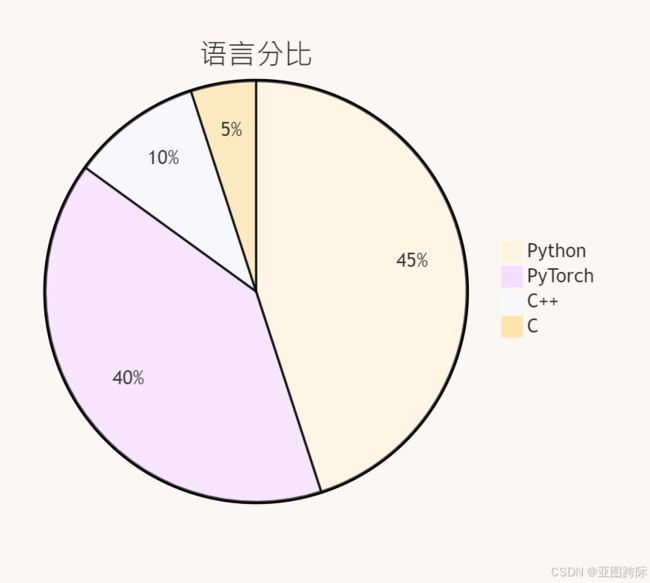

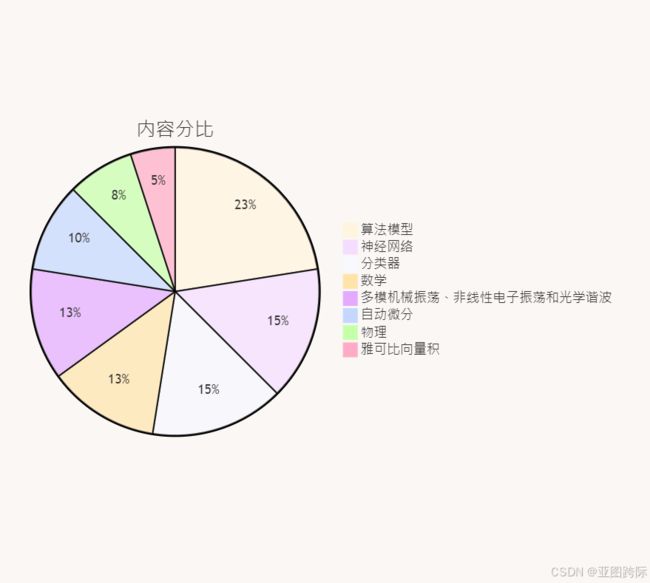

Language content breakdown

Differentiable optimization in PyTorch

Suppose the tensor $x$ is a meta-parameter and $a$ is an ordinary parameter (for example, a network parameter). The inner loss is $\mathcal{L}^{\text{in}} = a_0 \cdot x^2$, and we use its gradient $\frac{\partial \mathcal{L}^{\text{in}}}{\partial a_0} = x^2$ to update $a$, giving $a_1 = a_0 - \eta \frac{\partial \mathcal{L}^{\text{in}}}{\partial a_0} = a_0 - \eta x^2$. We then compute the outer loss $\mathcal{L}^{\text{out}} = a_1 \cdot x^2$. The gradient of the outer loss with respect to $x$ is therefore:

$$
\begin{aligned}
\frac{\partial \mathcal{L}^{\text{out}}}{\partial x}
& = \frac{\partial\left(a_1 \cdot x^2\right)}{\partial x} \\
& = \frac{\partial a_1}{\partial x} \cdot x^2 + a_1 \cdot \frac{\partial\left(x^2\right)}{\partial x} \\
& = \frac{\partial\left(a_0 - \eta x^2\right)}{\partial x} \cdot x^2 + \left(a_0 - \eta x^2\right) \cdot 2x \\
& = (-\eta \cdot 2x) \cdot x^2 + \left(a_0 - \eta x^2\right) \cdot 2x \\
& = -4 \eta x^3 + 2 a_0 x
\end{aligned}
$$
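As a quick sanity check on this derivation, the closed-form gradient can be compared with a central finite difference. The sketch below uses only the Python standard library; the function names are illustrative, and the values $a_0 = 1$, $\eta = 1$, $x = 2$ match the example used later in this section.

```python
def outer_loss(x, a0=1.0, eta=1.0):
    a1 = a0 - eta * x**2      # one inner SGD step on L_in = a0 * x^2
    return a1 * x**2          # outer loss L_out = a1 * x^2


def analytic_grad(x, a0=1.0, eta=1.0):
    # Closed form derived above: -4 * eta * x^3 + 2 * a0 * x
    return -4.0 * eta * x**3 + 2.0 * a0 * x


def numeric_grad(f, x, h=1e-5):
    # Central finite difference approximation of df/dx
    return (f(x + h) - f(x - h)) / (2.0 * h)


x = 2.0
print(analytic_grad(x))
print(numeric_grad(outer_loss, x))
```

The two printed values should agree up to the finite-difference error.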

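Given the analytical solution above, let us verify it with MetaOptimizer from TorchOpt. MetaOptimizer is the main class of TorchOpt's differentiable optimizers. Combined with the functional optimizers torchopt.sgd and torchopt.adam, it defines the high-level APIs torchopt.MetaSGD and torchopt.MetaAdam.

First, define the network.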

    from IPython.display import display

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    import torchopt


    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.a = nn.Parameter(torch.tensor(1.0), requires_grad=True)

        def forward(self, x):
            return self.a * (x**2)

Next, we declare the network (parameterized by a) and the meta-parameter x. Do not forget to set the flag requires_grad=True for x.

    net = Net()
    x = nn.Parameter(torch.tensor(2.0), requires_grad=True)

We then declare the meta-optimizer. Two equivalent ways of defining it are shown here; either one is sufficient.

    # Option 1: wrap a functional optimizer
    optim = torchopt.MetaOptimizer(net, torchopt.sgd(lr=1.0))
    # Option 2: the equivalent high-level API
    optim = torchopt.MetaSGD(net, lr=1.0)

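The meta-optimizer takes the network as input and updates it (that is, the parameter a) through its step method. Finally, we show how the bi-level process works.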

    inner_loss = net(x)
    optim.step(inner_loss)

    outer_loss = net(x)
    outer_loss.backward()
    # x.grad = - 4 * lr * x^3 + 2 * a_0 * x
    #        = - 4 * 1 * 2^3 + 2 * 1 * 2
    #        = -32 + 4
    #        = -28
    print(f'x.grad = {x.grad!r}')

Output:

    x.grad = tensor(-28.)
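For comparison, the same value can be reproduced without TorchOpt by performing the inner update by hand with `torch.autograd.grad(..., create_graph=True)`, which keeps the inner gradient in the computation graph. This is a plain-PyTorch sketch of the same bi-level computation, not a description of how TorchOpt works internally; the variable names are illustrative.

```python
import torch

a0 = torch.tensor(1.0, requires_grad=True)   # ordinary parameter
x = torch.tensor(2.0, requires_grad=True)    # meta-parameter
lr = 1.0

# Inner step: keep the graph so the update itself stays differentiable w.r.t. x
inner_loss = a0 * x**2
(grad_a,) = torch.autograd.grad(inner_loss, a0, create_graph=True)
a1 = a0 - lr * grad_a                        # a1 = a0 - lr * x^2

# Outer loss evaluated with the updated parameter
outer_loss = a1 * x**2
outer_loss.backward()

print(f'x.grad = {x.grad!r}')
```

Without `create_graph=True`, `grad_a` would be treated as a constant and the term $-2 \eta x^3$ contributed by $\partial a_1 / \partial x$ would be lost.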

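We now turn to the core idea of Model-Agnostic Meta-Learning (MAML). Because the algorithm is model-agnostic, it is compatible with any model trained by gradient descent and applies to a range of learning problems, including classification, regression, and reinforcement learning. The goal of meta-learning is to train a model on a variety of learning tasks so that it can solve a new task using only a small number of training samples.

The update rule is defined as follows.

Given the learning rate $\alpha$ of the fine-tuning step, $\theta$ should minimize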

$$
\mathcal{L}(\theta) = \mathbb{E}_{\mathcal{T}_i \sim p(\mathcal{T})}\left[\mathcal{L}_{\mathcal{T}_i}\left(\theta_i^{\prime}\right)\right] = \mathbb{E}_{\mathcal{T}_i \sim p(\mathcal{T})}\left[\mathcal{L}_{\mathcal{T}_i}\left(\theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(\theta)\right)\right]
$$
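To make this objective concrete, here is a minimal sketch on a toy problem in which each task loss is a one-dimensional quadratic $(\theta - c_i)^2$. The task optima `centers` and the step sizes are hypothetical values chosen for illustration. The meta-gradient is computed with the chain rule through the inner step, which is where the second-order factor $1 - \alpha \cdot L''(\theta)$ appears.

```python
def task_loss_grad(theta, c):
    """Gradient of the toy task loss L_c(theta) = (theta - c)^2."""
    return 2.0 * (theta - c)


def meta_grad(theta, centers, alpha):
    """Gradient w.r.t. theta of mean_i L_i(theta - alpha * grad L_i(theta))."""
    total = 0.0
    for c in centers:
        theta_adapted = theta - alpha * task_loss_grad(theta, c)  # inner step
        d_adapted_d_theta = 1.0 - alpha * 2.0                     # 1 - alpha * L''(theta)
        total += task_loss_grad(theta_adapted, c) * d_adapted_d_theta  # chain rule
    return total / len(centers)


centers = [-1.0, 0.5, 2.0]           # hypothetical task optima
theta, alpha, beta = 3.0, 0.1, 0.1   # initial value, inner lr, outer lr
for _ in range(200):
    theta -= beta * meta_grad(theta, centers, alpha)

print(round(theta, 4))
```

For this symmetric toy problem the meta-objective is minimized at the mean of the task optima, so `theta` converges towards that value.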

We first define some parameters related to tasks, trajectories, states, actions, and iterations.

    import argparse
    from typing import NamedTuple

    import gym
    import numpy as np
    import torch
    import torch.optim as optim

    import torchopt
    from helpers.policy import CategoricalMLPPolicy

    TASK_NUM = 40
    TRAJ_NUM = 20
    TRAJ_LEN = 10

    STATE_DIM = 10
    ACTION_DIM = 5

    GAMMA = 0.99
    LAMBDA = 0.95

    outer_iters = 500
    inner_iters = 1

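Next, we define a class named Traj to represent a trajectory. It holds the observed states, the actions taken, the states observed after taking those actions, the rewards received, and the gamma values used to discount future rewards.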

    class Traj(NamedTuple):
        obs: np.ndarray
        acs: np.ndarray
        next_obs: np.ndarray
        rews: np.ndarray
        gammas: np.ndarray
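The short sketch below shows how such a container might be filled and how the reward summary `np.sum(traj.rews, axis=0).mean()` used later in this section behaves. The array layout (time steps along axis 0, trajectories along axis 1) is an assumption inferred from that `axis=0` sum, and the sizes and values are made up for illustration.

```python
from typing import NamedTuple

import numpy as np


class Traj(NamedTuple):
    obs: np.ndarray
    acs: np.ndarray
    next_obs: np.ndarray
    rews: np.ndarray
    gammas: np.ndarray


traj_len, traj_num = 3, 2            # small hypothetical sizes
rews = np.array([[1.0, 0.0],
                 [1.0, 1.0],
                 [1.0, 0.0]])        # rews[t, k]: reward at step t of trajectory k

traj = Traj(
    obs=np.zeros((traj_len, traj_num), dtype=np.int64),
    acs=np.zeros((traj_len, traj_num), dtype=np.int64),
    next_obs=np.zeros((traj_len, traj_num), dtype=np.int64),
    rews=rews,
    gammas=np.full((traj_len, traj_num), 0.99),
)

# Sum rewards over time for each trajectory, then average over trajectories
mean_return = np.sum(traj.rews, axis=0).mean()
print(mean_return)
```

Here the two trajectories have undiscounted returns 3 and 1, so the summary is their average.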

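The evaluate function measures the policy's performance on different tasks. It uses the inner optimizer to fine-tune the policy on each task and then records the rewards before and after fine-tuning.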

    # sample_traj and a2c_loss are helper functions that are not shown in this section.
    def evaluate(env, seed, task_num, policy):
        pre_reward_ls = []
        post_reward_ls = []
        inner_opt = torchopt.MetaSGD(policy, lr=0.1)
        # Note: this re-creates the environment and shadows the `env` argument
        env = gym.make(
            'TabularMDP-v0',
            num_states=STATE_DIM,
            num_actions=ACTION_DIM,
            max_episode_steps=TRAJ_LEN,
            seed=seed,  # use the function argument instead of the global `args`
        )
        tasks = env.sample_tasks(num_tasks=task_num)
        # Snapshot the policy and optimizer state before any fine-tuning
        policy_state_dict = torchopt.extract_state_dict(policy)
        optim_state_dict = torchopt.extract_state_dict(inner_opt)
        for idx in range(task_num):
            for _ in range(inner_iters):
                pre_trajs = sample_traj(env, tasks[idx], policy)
                inner_loss = a2c_loss(pre_trajs, policy, value_coef=0.5)
                inner_opt.step(inner_loss)
            post_trajs = sample_traj(env, tasks[idx], policy)

            # Logging
            pre_reward_ls.append(np.sum(pre_trajs.rews, axis=0).mean())
            post_reward_ls.append(np.sum(post_trajs.rews, axis=0).mean())

            # Restore the snapshot so the next task starts from the same policy
            torchopt.recover_state_dict(policy, policy_state_dict)
            torchopt.recover_state_dict(inner_opt, optim_state_dict)
        return pre_reward_ls, post_reward_ls
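The snapshot, fine-tune, restore pattern used in this function can be illustrated with plain PyTorch. The sketch below is only an analogy built on `state_dict` and `load_state_dict`; it makes no claim about how `torchopt.extract_state_dict` and `torchopt.recover_state_dict` are implemented. The linear layer and the loss are hypothetical stand-ins for the policy and the fine-tuning objective.

```python
import copy

import torch
import torch.nn as nn

torch.manual_seed(0)
policy = nn.Linear(4, 2)                        # hypothetical stand-in for the policy
opt = torch.optim.SGD(policy.parameters(), lr=0.1)

# state_dict() tensors share storage with the parameters, hence the deep copy
snapshot = copy.deepcopy(policy.state_dict())

loss = policy(torch.ones(1, 4)).pow(2).sum()    # hypothetical fine-tuning loss
opt.zero_grad()
loss.backward()
opt.step()                                      # weights now differ from the snapshot
changed = not torch.equal(policy.weight, snapshot['weight'])

policy.load_state_dict(snapshot)                # restore before the next task
restored = torch.equal(policy.weight, snapshot['weight'])
print(changed, restored)
```

Restoring after every task ensures that each task is fine-tuned from the same starting parameters, which is what makes the before/after rewards comparable across tasks.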

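In the main function, we initialize the environment, the policy, and the optimizers. The policy is a simple MLP that outputs a categorical distribution over actions. The inner optimizer updates the policy parameters during the fine-tuning phase, and the outer optimizer updates them during the meta-training phase. Performance is measured by the rewards before and after fine-tuning, and the training progress is recorded and printed at every outer iteration.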

    def main(args):
        # Seed the random number generators
        torch.manual_seed(args.seed)
        torch.cuda.manual_seed_all(args.seed)

        # Environment
        env = gym.make(
            'TabularMDP-v0',
            num_states=STATE_DIM,
            num_actions=ACTION_DIM,
            max_episode_steps=TRAJ_LEN,
            seed=args.seed,
        )

        # Policy, inner (fine-tuning) optimizer, and outer (meta) optimizer
        policy = CategoricalMLPPolicy(input_size=STATE_DIM, output_size=ACTION_DIM)
        inner_opt = torchopt.MetaSGD(policy, lr=0.1)
        outer_opt = optim.Adam(policy.parameters(), lr=1e-3)

        train_pre_reward = []
        train_post_reward = []
        test_pre_reward = []
        test_post_reward = []

        for i in range(outer_iters):
            tasks = env.sample_tasks(num_tasks=TASK_NUM)
            train_pre_reward_ls = []
            train_post_reward_ls = []

            outer_opt.zero_grad()

            policy_state_dict = torchopt.extract_state_dict(policy)
            optim_state_dict = torchopt.extract_state_dict(inner_opt)
            for idx in range(TASK_NUM):
                # Inner loop: fine-tune the policy on the current task
                for _ in range(inner_iters):
                    pre_trajs = sample_traj(env, tasks[idx], policy)
                    inner_loss = a2c_loss(pre_trajs, policy, value_coef=0.5)
                    inner_opt.step(inner_loss)
                # Outer loss on fresh trajectories; gradients accumulate across tasks
                post_trajs = sample_traj(env, tasks[idx], policy)
                outer_loss = a2c_loss(post_trajs, policy, value_coef=0.5)
                outer_loss.backward()
                torchopt.recover_state_dict(policy, policy_state_dict)
                torchopt.recover_state_dict(inner_opt, optim_state_dict)

                # Logging
                train_pre_reward_ls.append(np.sum(pre_trajs.rews, axis=0).mean())
                train_post_reward_ls.append(np.sum(post_trajs.rews, axis=0).mean())

            # Meta-update using the gradients accumulated over all tasks
            outer_opt.step()

            test_pre_reward_ls, test_post_reward_ls = evaluate(env, args.seed, TASK_NUM, policy)

            train_pre_reward.append(sum(train_pre_reward_ls) / TASK_NUM)
            train_post_reward.append(sum(train_post_reward_ls) / TASK_NUM)
            test_pre_reward.append(sum(test_pre_reward_ls) / TASK_NUM)
            test_post_reward.append(sum(test_post_reward_ls) / TASK_NUM)

            print('Train_iters', i)
            print('train_pre_reward', sum(train_pre_reward_ls) / TASK_NUM)
            print('train_post_reward', sum(train_post_reward_ls) / TASK_NUM)
            print('test_pre_reward', sum(test_pre_reward_ls) / TASK_NUM)
            print('test_post_reward', sum(test_post_reward_ls) / TASK_NUM)
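The main function reads `args.seed`, but the argument parser itself is not shown in this section. A minimal entry point might look like the sketch below. The helper name `build_parser`, the description string, and the default seed are assumptions made for illustration, not taken from the original code.

```python
import argparse


def build_parser():
    # Hypothetical parser; only the --seed option is implied by main(args)
    parser = argparse.ArgumentParser(description='MAML reinforcement-learning example (sketch)')
    parser.add_argument('--seed', type=int, default=1,
                        help='random seed (the default value is an assumption)')
    return parser


# Passing an explicit list avoids reading sys.argv in this demonstration
args = build_parser().parse_args(['--seed', '3'])
print(args.seed)

# In a script one would typically finish with:
#     if __name__ == '__main__':
#         main(build_parser().parse_args())
```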