Building an ELK Log Analysis Platform

Overview:

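Log processing is an indispensable part of operations work. At scale, however, grep and awk are no longer fast enough, and Hadoop is better suited to offline statistics over fixed patterns. We need an efficient, flexible way to analyze logs that gives second-level responses for incident handling and root-cause analysis. The ELK stack, built on the full-text search engine Lucene, is currently the open-source implementation closest to that goal.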

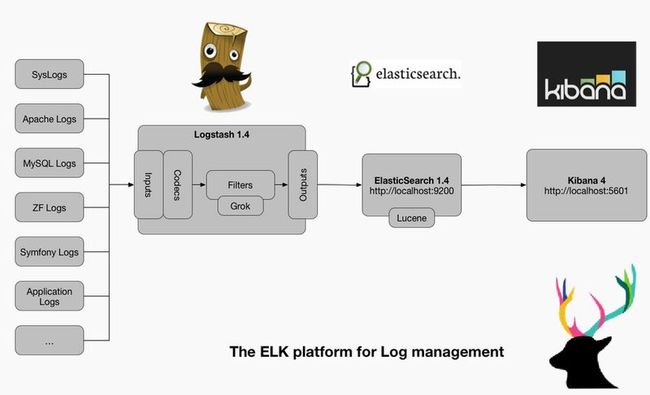

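Elasticsearch + Logstash + Kibana (ELK) is an open-source log management solution. To analyze website traffic we usually embed JavaScript from services such as Google, Baidu, or CNZZ to collect statistics. When the site behaves abnormally or is under attack, however, we need to analyze the raw logs on the back end, for example the Nginx logs. Nginx log splitting, GoAccess, and Awstats are relatively simple single-node solutions that fall short for distributed clusters or large data volumes. ELK lets us handle these cases comfortably.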

- Logstash: collects and processes logs, then ships them to storage

- Elasticsearch: stores, indexes, searches, and analyzes logs

- Kibana: visualizes logs

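ELK use cases

Logs are something operations engineers deal with constantly. Generally speaking, logs serve three purposes:

- Troubleshooting, using data to guide the investigation

- Monitoring

- Security auditing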

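Splunk is currently the standout product in this area of big-data analysis, and its market capitalization has reached roughly ten billion US dollars since its IPO. Splunk is expensive, though: as much as USD 4,500 per GB. ELK is the open-source alternative to Splunk for this scenario.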

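The ELK stack is a data processing toolchain built mainly on three open-source projects: Elasticsearch, Logstash, and Kibana. The three are usually used together, and one after another they have all come under Elastic.co.

The ELK stack has the following advantages:

1. Flexible processing. Elasticsearch is a real-time full-text index and, unlike Storm, does not require any programming in advance before it can be used.

2. Easy configuration. Elasticsearch exposes JSON interfaces throughout, and Logstash is configured with a Ruby DSL.

3. Efficient search. Although every query is computed in real time, good design and implementation can generally deliver second-level responses on queries over roughly ten billion records.

4. Linear cluster scaling. Both Elasticsearch clusters and Logstash clusters scale linearly.

5. Convenient visual front end. Searching, aggregating, and building dashboards can all be done with mouse clicks.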

ELK architecture:

Deployment machines:

Server:

dev-vhost031 10.59.74.54 ( logstash-1.5.4,elasticsearch-1.7.1,kibana-4.1.1 )

Client:

dev-vhost011 10.59.74.33 (logstash-forwarder-0.4.0-1)

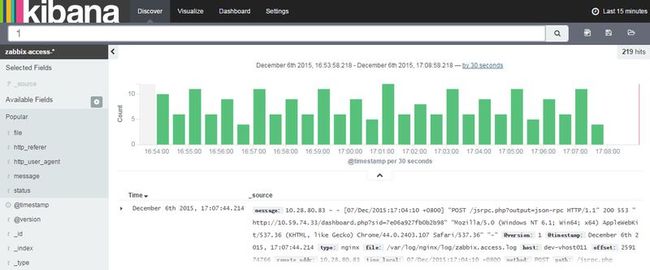

Goal: display the Zabbix server access logs from the client in ELK.

Installing ELK:

Server:

Set the FQDN (it is needed when creating the SSL certificate):

[root@dev-vhost011 ~]# hostname dev-vhost011

[root@dev-vhost011 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4

10.59.74.54 elk.test.com elk
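As a quick sanity check, the sketch below looks up a name in a hosts-format file and prints the address it maps to. The function name `hosts_lookup` is made up for this illustration; on a real machine `getent hosts elk.test.com` answers the same question through the system resolver.

```shell
# hosts_lookup FILE NAME: print the first address that FILE maps NAME to.
# (Hypothetical helper for illustration; not part of any ELK tooling.)
hosts_lookup() {
  awk -v name="$2" '
    /^[[:space:]]*#/ { next }        # skip comment lines
    {
      for (i = 2; i <= NF; i++)      # every field after the address is a name
        if ($i == name) { print $1; exit }
    }
  ' "$1"
}

# Example with a throwaway copy of the entries shown above.
tmp_hosts=$(mktemp)
printf '%s\n' \
  '127.0.0.1 localhost localhost.localdomain localhost4' \
  '10.59.74.54 elk.test.com elk' > "$tmp_hosts"

hosts_lookup "$tmp_hosts" elk.test.com   # prints 10.59.74.54
rm -f "$tmp_hosts"
```

If the lookup prints nothing, the client cannot resolve the name that the certificate will be issued for.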

Install Java 1.8:

[root@dev-vhost031 elk]# cat /etc/redhat-release

CentOS release 6.6 (Final)

[root@dev-vhost031 elk]# yum install java-1.8.0-openjdk.x86_64

[root@dev-vhost031 elk]# java -version

openjdk version "1.8.0_65"

OpenJDK Runtime Environment (build 1.8.0_65-b17)

OpenJDK 64-Bit Server VM (build 25.65-b01, mixed mode)

Install elasticsearch-1.7.1:

# Download and install

[root@dev-vhost031 elk]# wget https://download.elastic.co/elasticsearch/elasticsearch/elasticsearch-1.7.1.noarch.rpm

[root@dev-vhost031 elk]# yum localinstall elasticsearch-1.7.1.noarch.rpm

# Start or stop the service

[root@dev-vhost031 elk]# /etc/init.d/elasticsearch start

[root@dev-vhost031 elk]# /etc/init.d/elasticsearch stop

# List the elasticsearch configuration files

[root@dev-vhost031 elk]# rpm -qc elasticsearch

/etc/elasticsearch/elasticsearch.yml

/etc/elasticsearch/logging.yml

/etc/init.d/elasticsearch

/etc/sysconfig/elasticsearch

/usr/lib/sysctl.d/elasticsearch.conf

/usr/lib/systemd/system/elasticsearch.service

/usr/lib/tmpfiles.d/elasticsearch.conf

# Check the listening ports (with the service running)

[root@dev-vhost031 elk]# netstat -tunlp

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 :::9300 :::* LISTEN 14585/java

tcp 0 0 :::9200 :::* LISTEN 14585/java

Install Kibana 4.1.1:

# Download the tarball

[root@dev-vhost031 elk]# wget https://download.elastic.co/kibana/kibana/kibana-4.1.1-linux-x64.tar.gz

# Extract it

[root@dev-vhost031 elk]# pwd

/data1/elk

[root@dev-vhost031 elk]# tar xf kibana-4.1.1-linux-x64.tar.gz

[root@dev-vhost031 elk]# ln -s /data1/elk/kibana-4.1.1-linux-x64 kibana

# Create the kibana service script

[root@dev-vhost031 elk]# cat /etc/init.d/kibana

#!/bin/bash

### BEGIN INIT INFO

# Provides: kibana

# Default-Start: 2 3 4 5

# Default-Stop: 0 1 6

# Short-Description: Runs kibana daemon

# Description: Runs the kibana daemon as a non-root user

### END INIT INFO

# Process name

NAME=kibana

DESC="Kibana4"

PROG="/etc/init.d/kibana"

# Configure location of Kibana bin

KIBANA_BIN=/data1/elk/kibana/bin # adjust to your install path

# PID Info

PID_FOLDER=/var/run/kibana/

PID_FILE=/var/run/kibana/$NAME.pid

LOCK_FILE=/var/lock/subsys/$NAME

PATH=/bin:/usr/bin:/sbin:/usr/sbin:$KIBANA_BIN

DAEMON=$KIBANA_BIN/$NAME

# Configure User to run daemon process

DAEMON_USER=root

# Configure logging location

KIBANA_LOG=/var/log/kibana.log

# Begin Script

RETVAL=0

if [ `id -u` -ne 0 ]; then

echo "You need root privileges to run this script"

exit 1

fi

# Function library

. /etc/init.d/functions

start() {

echo -n "Starting $DESC : "

pid=`pidofproc -p $PID_FILE kibana`

if [ -n "$pid" ] ; then

echo "Already running."

exit 0

else

# Start Daemon

if [ ! -d "$PID_FOLDER" ] ; then

mkdir $PID_FOLDER

fi

daemon --user=$DAEMON_USER --pidfile=$PID_FILE $DAEMON 1>"$KIBANA_LOG" 2>&1 &

sleep 2

pidofproc node > $PID_FILE

RETVAL=$?

[[ $RETVAL -eq 0 ]] && success || failure

echo

[ $RETVAL = 0 ] && touch $LOCK_FILE

return $RETVAL

fi

}

reload()

{

echo "Reload command is not implemented for this service."

return $RETVAL

}

stop() {

echo -n "Stopping $DESC : "

killproc -p $PID_FILE $DAEMON

RETVAL=$?

echo

[ $RETVAL = 0 ] && rm -f $PID_FILE $LOCK_FILE

}

case "$1" in

start)

start

;;

stop)

stop

;;

status)

status -p $PID_FILE $DAEMON

RETVAL=$?

;;

restart)

stop

start

;;

reload)

reload

;;

*)

# Invalid Arguments, print the following message.

echo "Usage: $0 {start|stop|status|restart|reload}" >&2

exit 2

;;

esac

# Make the script executable

[root@dev-vhost031 elk]# chmod +x /etc/init.d/kibana

# Start the kibana service

[root@dev-vhost031 elk]# /etc/init.d/kibana start

[root@dev-vhost031 elk]# /etc/init.d/kibana status

# Check the listening port

[root@dev-vhost031 elk]# netstat -tunlp

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 0.0.0.0:5601 0.0.0.0:* LISTEN 15128/node

(5601 is the default port; it can be changed to 80.)

Install logstash 1.5.4:

# Download and install

[root@dev-vhost031 elk]# wget https://download.elastic.co/logstash/logstash/packages/centos/logstash-1.5.4-1.noarch.rpm

[root@dev-vhost031 elk]# yum localinstall logstash-1.5.4-1.noarch.rpm

# Set up SSL; the FQDN configured earlier is elk.test.com

[root@dev-vhost031 tls]# pwd

/etc/pki/tls

[root@dev-vhost031 tls]# openssl req -subj '/CN=elk.test.com/' -x509 -days 3650 -batch -nodes -newkey rsa:2048 -keyout private/logstash-forwarder.key -out certs/logstash-forwarder.crt

[root@dev-vhost031 certs]# pwd

/etc/pki/tls/certs

[root@dev-vhost031 certs]# ls -l logstash-forwarder.crt

-rw-r--r-- 1 root root 1103 Nov 23 22:46 logstash-forwarder.crt
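Since the certificate is issued for the FQDN, it can be worth confirming that the generated file really carries the expected CN before copying it to clients. A minimal sketch, assuming `openssl` is on the PATH; the helper name `cert_cn` is hypothetical.

```shell
# cert_cn CERT: print the CN from the subject of a PEM certificate.
# The subject line is formatted differently across OpenSSL versions
# ("subject= /CN=name" vs "subject=CN = name"), so spaces are stripped
# before the CN value is extracted.
cert_cn() {
  openssl x509 -in "$1" -noout -subject \
    | tr -d ' ' \
    | sed -n 's/.*CN=\([^,/]*\).*/\1/p'
}

# Usage on the server (path taken from the steps above):
#   [ "$(cert_cn /etc/pki/tls/certs/logstash-forwarder.crt)" = "elk.test.com" ] \
#     && echo "CN matches"
```

The client configuration later refers to the server as `elk.test.com:5000`, so this is the name the CN should show.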

# Create a 01-logstash-initial.conf file

[root@dev-vhost031 conf.d]# cat 01-logstash-initial.conf

input {

lumberjack {

port => 5000

type => "logs"

ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"

ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"

}

}

filter {

if [type] == "syslog" {

grok {

match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }

add_field => [ "received_at", "%{@timestamp}" ]

add_field => [ "received_from", "%{host}" ]

}

syslog_pri { }

date {

match => [ "syslog_timestamp", "MMM d HH:mm:ss", "MMM dd HH:mm:ss" ]

}

}

if [type] == "nginx" {

grok {

match => { "message" => "%{NGINXACCESS}" }

}

}

}

output {

elasticsearch {

index => "zabbix-access-%{+YYYY.MM.dd}"

host => localhost

}

stdout { codec => rubydebug }

}
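The `index` setting in the output contains a date pattern, so Logstash writes each day's events to a separate index named after the event's `@timestamp` (in UTC). The sketch below only illustrates the resulting names. It assumes GNU `date`, and `daily_index` is a hypothetical helper, not a Logstash command.

```shell
# daily_index PREFIX DATE: print the index name that the pattern
# "PREFIX-%{+YYYY.MM.dd}" yields for an event whose UTC date is DATE.
daily_index() {
  printf '%s-%s\n' "$1" "$(date -u -d "$2" +%Y.%m.%d)"
}

daily_index zabbix-access 2015-11-23   # prints zabbix-access-2015.11.23
```

With daily indices, old data can be dropped by deleting whole indices, and a Kibana index pattern such as `zabbix-access-*` covers all of them.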

# Start or stop the logstash service

[root@dev-vhost031 conf.d]# /etc/init.d/logstash start

[root@dev-vhost031 conf.d]# /etc/init.d/logstash stop

# Check the listening ports

[root@dev-vhost031 conf.d]# netstat -tunlp

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 :::9301 :::* LISTEN 4381/java

tcp 0 0 :::5000 :::* LISTEN 4381/java

# Start logstash-forwarder on the client (client setup is covered below)

[root@dev-vhost011 ~]# /etc/init.d/logstash-forwarder start

[root@dev-vhost011 ~]# /etc/init.d/logstash-forwarder status

# Open Kibana

http://10.59.74.54:5601/

# Additional nodes are configured the same way as the client; remember to copy the certificate to each of them

/etc/pki/tls/certs/logstash-forwarder.crt

Install logstash-forwarder on the client:

# Install the client package

[root@dev-vhost011 opt]# wget https://download.elastic.co/logstash-forwarder/binaries/logstash-forwarder-0.4.0-1.x86_64.rpm

[root@dev-vhost011 opt]# yum localinstall logstash-forwarder-0.4.0-1.x86_64.rpm

# List the configuration files

[root@dev-vhost011 opt]# rpm -qc logstash-forwarder

/etc/logstash-forwarder.conf

# Back up the configuration file

[root@dev-vhost011 opt]# cp /etc/logstash-forwarder.conf /etc/logstash-forwarder.conf.save

# Edit the configuration file

[root@dev-vhost011 opt]# cat /etc/logstash-forwarder.conf

{

"network": {

"servers": [ "elk.test.com:5000" ],

"ssl ca": "/etc/pki/tls/certs/logstash-forwarder.crt",

"timeout": 15

},

"files": [

{

"paths": [

"/var/log/messages",

"/var/log/secure"

],

"fields": { "type": "syslog" }

}, {

"paths": [

"/var/log/nginx/log/zabbix.access.log"

],

"fields": { "type": "nginx" }

}

]

}

Configure the Nginx pattern

# Add the patterns on the server

[root@dev-vhost031 ]# mkdir /opt/logstash/patterns/

[root@dev-vhost031 patterns]# cat nginx

NGUSERNAME [a-zA-Z\.\@\-\+_%]+

NGUSER %{NGUSERNAME}

NGINXACCESS %{IPORHOST:remote_addr} - - \[%{HTTPDATE:time_local}\] "%{WORD:method} %{URIPATH:path}(?:%{URIPARAM:param})? HTTP/%{NUMBER:httpversion}" %{INT:status} %{INT:body_bytes_sent} %{QS:http_referer} %{QS:http_user_agent}
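To make the custom pattern easier to read, here is a rough POSIX ERE equivalent applied with `sed`. It is a simplified approximation of `NGINXACCESS`, not what grok compiles internally. The sample log line, the variable `NGINX_RE`, and the function `parse_nginx_line` are made up for illustration.

```shell
# Simplified ERE approximating NGINXACCESS. Capture groups:
#   1 remote_addr, 2 time_local, 3 method, 4 path, 5 param (optional),
#   6 httpversion, 7 status, 8 body_bytes_sent
# The quoted referer and user agent are matched but not captured.
NGINX_RE='^([^ ]+) - - \[([^]]+)\] "([A-Z]+) ([^ ?"]+)(\?[^ "]*)? HTTP/([0-9.]+)" ([0-9]+) ([0-9]+) "[^"]*" "[^"]*"$'

# parse_nginx_line LINE: print selected fields, or nothing if LINE does not match.
parse_nginx_line() {
  printf '%s\n' "$1" |
    sed -nE "s#${NGINX_RE}#remote_addr=\1 method=\3 path=\4 status=\7 bytes=\8#p"
}

# Hypothetical access-log line in the format the pattern expects.
sample='10.59.74.33 - - [23/Nov/2015:22:46:01 +0800] "GET /zabbix/index.php?x=1 HTTP/1.1" 200 512 "-" "curl/7.19.7"'

parse_nginx_line "$sample"
# prints: remote_addr=10.59.74.33 method=GET path=/zabbix/index.php status=200 bytes=512
```

A line that does not fit the pattern produces no output here. In Logstash the corresponding event would instead be tagged `_grokparsefailure`.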

# Online debugging tool for grok patterns

https://grokdebug.herokuapp.com/

# Give the logstash user ownership of the patterns directory

[root@dev-vhost031 patterns]# chown -R logstash:logstash /opt/logstash/patterns/

# Update the server configuration

[root@dev-vhost031 patterns]# cat /etc/logstash/conf.d/01-logstash-initial.conf

input {

lumberjack {

port => 5000

type => "logs"

ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"

ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"

}

}

filter {

if [type] == "syslog" {

grok {

match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }

add_field => [ "received_at", "%{@timestamp}" ]

add_field => [ "received_from", "%{host}" ]

}

syslog_pri { }

date {

match => [ "syslog_timestamp", "MMM d HH:mm:ss", "MMM dd HH:mm:ss" ]

}

}

if [type] == "nginx" {

grok {

match => { "message" => "%{NGINXACCESS}" }

}

}

}

output {

elasticsearch {

index => "zabbix-access-%{+YYYY.MM.dd}"

host => localhost

}

stdout { codec => rubydebug }

}

Change the Kibana port

[root@dev-vhost031 config]# pwd

/data1/elk/kibana/config

[root@dev-vhost031 config]# cat kibana.yml | grep port

# Kibana is served by a back end server. This controls which port to use.

#port: 5601

port: 80

http://10.59.74.54

References:

https://www.digitalocean.com/community/tutorials/how-to-use-logstash-and-kibana-to-centralize-logs-on-centos-6

http://blog.csdn.net/longxibendi/article/details/35237543/

https://www.elastic.co/guide