- 问题解决方案

cd hadoop安装路径/ect/hadoop

修改hdfs-site.xml加上以下内容

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

旨在取消权限检查,原因是为了解决我在windows机器上配置eclipse连接hadoop服务器时,配置map/reduce连接后报以下错误,org.apache.hadoop.security.AccessControlException: Permission denied:

修改hdfs-site.xml加上以下内容 (目前测试可选 根据具体情况)

<property>

<name>dfs.web.ugi</name>

<value>228238,supergroup</value>

</property>

原因是运行时,报如下错误 WARN org.apache.hadoop.security.ShellBasedUnixGroupsMapping: got exception trying to get groups for user 228238 (228238 机器用户名)

配置修改完后重启hadoop集群:

[root@supervisor-84 sbin]# ./stop-dfs.sh

[root@supervisor-84 sbin]# ./sbin/stop-yarn.sh

[root@supervisor-84 sbin]#./sbin/start-dfs.sh

[root@supervisor-84 sbin]# ./sbin/start-yarn.sh

- windows基础环境准备

windows7(x64),jdk,ant,eclipse,hadoop

jdk环境配置

jdk-6u26-windows-i586.exe安装后好后配置相关JAVA_HOME环境变量,并将bin目录配置到path

eclipse环境配置

eclipse-standard-luna-SR1-win32.zip解压到F:\eclipse\

下载地址:http://developer.eclipsesource.com/technology/epp/luna/eclipse-standard-luna-SR1-win32.zip

ant环境配置

apache-ant-1.9.4-bin.zip解压到D:\apache-ant\,配置环境变量ANT_HOME,并将bin目录配置到path

下载地址:http://mirror.bit.edu.cn/apache//ant/binaries/apache-ant-1.9.4-bin.zip

下载hadoop-2.5.2.tar.gz

http://mirror.bit.edu.cn/apache/hadoop/common/hadoop-2.5.2/hadoop-2.5.2.tar.gz

下载hadoop-2.5.2-src.tar.gz

http://mirror.bit.edu.cn/apache/hadoop/common/hadoop-2.5.2/hadoop-2.5.2-src.tar.gz

下载hadoop2x-eclipse-plugin

https://github.com/winghc/hadoop2x-eclipse-plugin

下载hadoop-common-2.2.0-bin

https://github.com/srccodes/hadoop-common-2.2.0-bin

分别将hadoop-2.5.2.tar.gz、hadoop-2.5.2-src.tar.gz、hadoop2x-eclipse-plugin、hadoop-common-2.2.0-bin下载解压到F:\hadoop\目录下

注: 解压后 hadoop-2.5.2 \share\hadoop\common\lib 中缺少 htrace-core-3.0.4.jar, 可以从网上下载放到该目录。

- 编译hadoop-eclipse-plugin-2.5.2.jar配置

添加环境变量HADOOP_HOME=F:\hadoop\hadoop-2.5.2\

追加环境变量path内容:%HADOOP_HOME%/bin

修改编译包及依赖包版本信息

修改F:\hadoop\hadoop2x-eclipse-plugin-master\ivy\libraries.properties

hadoop.version=2.5.2

jackson.version=1.9.13

ant编译

F:\hadoop\hadoop2x-eclipse-plugin-master\src\contrib\eclipse-plugin>

ant jar -Dversion=2.5.2 -Declipse.home=F:\eclipse\eclipse-hadoop\eclipse -Dhadoop.home=F:\hadoop\hadoop-2.5.2

编译好后hadoop-eclipse-plugin-2.5.2.jar会在F:\hadoop\hadoop2x-eclipse-plugin-master\build\contrib\eclipse-plugin目录下

- eclipse环境配置

将编译好的hadoop-eclipse-plugin-2.5.2.jar拷贝至eclipse的plugins目录下,然后重启eclipse

2.打开菜单Window--Preference--Hadoop Map/Reduce进行配置,如下图所示:

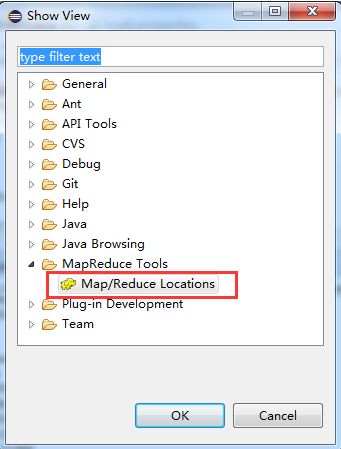

显示Hadoop连接配置窗口:Window--Show View--Other-MapReduce Tools,如下图所示:

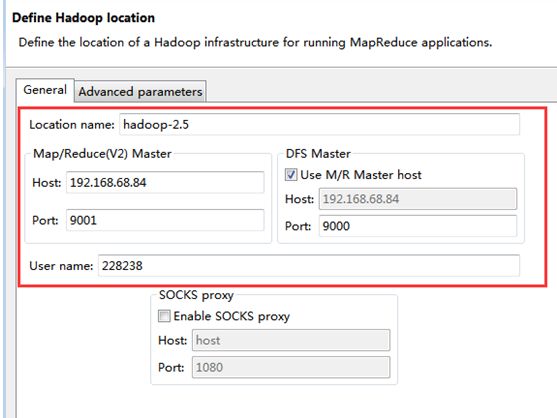

配置连接Hadoop,如下图所示:

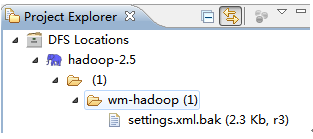

查看是否连接成功,新建文件夹并上传文件能看到如下类似信息,则表示连接成功:

- Map/Reduce Project 工程创建

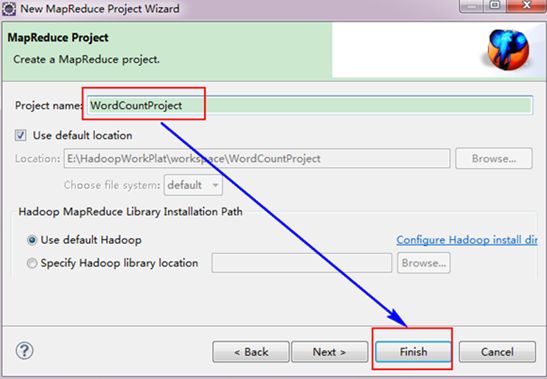

在工程栏中右击鼠标,选择new –-〉 other –〉 Map/Reduce Project

接着,填写MapReduce工程的名字为"WordCountProject",点击"finish"完成。

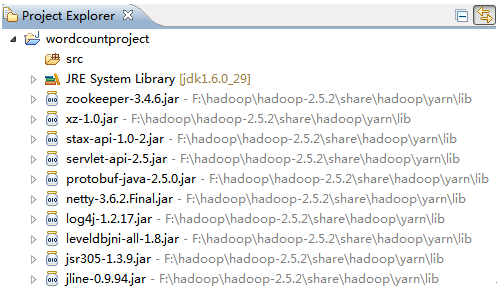

目前为止我们已经成功创建了MapReduce项目,我们发现在Eclipse软件的左侧多了我们的刚才建立的项目。

创建log4j.properties文件 目的是在eclipse 控制台有日志输出

在src目录下创建log4j.properties文件,内容如下:

log4j.rootLogger=debug,stdout,R

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%5p - %m%n

log4j.appender.R=org.apache.log4j.RollingFileAppender

log4j.appender.R.File=mapreduce_test.log

log4j.appender.R.MaxFileSize=1MB

log4j.appender.R.MaxBackupIndex=1

log4j.appender.R.layout=org.apache.log4j.PatternLayout

log4j.appender.R.layout.ConversionPattern=%p %t %c - %m%n

log4j.logger.com.codefutures=DEBUG

注: 把 winutils.exe 和 hadoop.dll (上面说到hadoop-common-2.2.0-bin 中可以找到,) 放入到 F:\hadoop\hadoop-2.5.2\bin 目录下

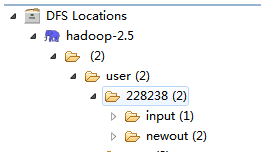

在 DFS Locations 的 user 目录下新建 228238 (PC 的用户名) 文件夹,在该文件夹新建 input 文件夹;运行 后的结果为 newout 目录下。

新建 类 WordCount,包名:org.apache.hadoop.examples

public class WordCount {

public static class TokenizerMapper

extends Mapper<Object, Text, Text, IntWritable>{

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context

) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one); }

}

}

public static class IntSumReducer extends

Reducer<Text, IntWritable, Text, IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values, Context context)

throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

result.set(sum);

context.write(key, result);

}

}

public static void main(String[] args) throws Exception {

// 初始化Configuration

Configuration conf = new Configuration();

conf.set("mapred.job.tracker", "192.168.68.84:9001");

String[] ars=new String[]{"input","newout"};

// GenericOptionsParser类,它是用来解释常用hadoop命令,

// 并根据需要为Configuration对象设置相应的值,其实平时开发里我们不太常用它,

// 而是让类实现Tool接口,然后再main函数里使用ToolRunner运行程序,

// 而ToolRunner内部会调用GenericOptionsParser

String[] otherArgs = new GenericOptionsParser(conf, ars).getRemainingArgs();

// 运行WordCount程序时候一定是两个参数,如果不是就会报错退出

if (otherArgs.length != 2) {

System.err.println("Usage: wordcount ");

System.exit(2);

}

// 在构建一个job

Job job = new Job(conf, "word count");

// 装载编写好的计算程序

job.setJarByClass(WordCount.class);

// 装载map函数

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

// 装载reduce函数实现类

job.setReducerClass(IntSumReducer.class);

// 定义输出的key/value的类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// 构建输入的数据文件

FileInputFormat.addInputPath(job, new Path(otherArgs[0]));

// 构建输出的数据文件

FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));

// 如果job运行成功了,我们的程序就会正常退出

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

右击 Run AS Run on hadoop

Debug 调试 运行:

修改mapred-site.xml文件,添加如下配置:

<property>

<name>mapred.child.java.opts</name>

<value>-agentlib:jdwp=transport=dt_socket,address=8883,server=y,suspend=y</value>

</property>

右键hadoop src项目,右键“Debug As”,选择“Debug Configurations”,选择“Remote Java Application”,添加一个新的测试,输入远程host ip和监听端口,例为8883,然后点击“Debug”按钮