Nodes in a Hadoop cluster typically run both a datanode and a tasktracker, so the two are usually commissioned or decommissioned in tandem.

Commissioning new nodes

Commissioning a new node can be as simple as configuring the hdfs-site.xml file to point to the namenode, configuring the mapred-site.xml file to point to the jobtracker, and starting the datanode and tasktracker daemons. From a security perspective, however, it is better to maintain a list of authorized nodes.

Datanodes that are permitted to connect to the namenode are listed in a file whose name is given by the dfs.hosts property. The file resides on the namenode's local filesystem and contains one line for each datanode, identified by network address. If you need to specify multiple network addresses for a datanode, put them on one line, separated by whitespace.

Tasktrackers that may connect to the jobtracker are listed in a file whose name is given by the mapred.hosts property. In most cases there is one shared file, referred to as the include file, that both dfs.hosts and mapred.hosts point to, since nodes in the cluster run both datanode and tasktracker daemons.
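As a rough illustration, the two properties might be set as follows. The property names come from the text above; the path /etc/hadoop/conf/include is only an assumed location, so substitute whatever path your namenode and jobtracker actually use.

```xml
<!-- hdfs-site.xml: datanodes allowed to connect to the namenode -->
<property>
  <name>dfs.hosts</name>
  <!-- assumed path, for illustration only -->
  <value>/etc/hadoop/conf/include</value>
</property>

<!-- mapred-site.xml: tasktrackers allowed to connect to the jobtracker -->
<property>
  <name>mapred.hosts</name>
  <!-- same shared include file (assumed path) -->
  <value>/etc/hadoop/conf/include</value>
</property>
```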

To add new nodes to the cluster:

- Add the network addresses of the new nodes to the include file.

- Update the namenode with the new set of permitted datanodes using this command:

$ hadoop dfsadmin -refreshNodes

- Update the jobtracker with the new set of permitted tasktrackers using:

$ hadoop mradmin -refreshNodes

- Update the slaves file with the new nodes, so that they are included in future operations performed by the hadoop control scripts.

- Start the new datanodes and tasktrackers.

- Check that the new datanodes and tasktrackers appear in the web UI.
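The first steps in the list above can be scripted. The following is a minimal sketch rather than a definitive procedure: the helper name add_hosts, the include file path, and the hostnames are assumptions made for illustration, and it assumes one address per line. Only the -refreshNodes commands come from the steps above.

```shell
# Hypothetical helper: append each given host to a hosts file (include or
# exclude) unless an identical line is already present.
add_hosts() {
  local file host
  file=$1
  shift
  touch "$file"
  # Make sure the file ends with a newline so new entries start on their own line.
  if [ -s "$file" ] && [ -n "$(tail -c 1 "$file")" ]; then
    printf '\n' >> "$file"
  fi
  for host in "$@"; do
    # -x: match the whole line, -F: treat the host as a literal string
    grep -qxF -e "$host" "$file" || printf '%s\n' "$host" >> "$file"
  done
}

# Example usage (path and hostnames are assumptions; adjust for your cluster):
#   add_hosts /etc/hadoop/conf/include node5.example.com node6.example.com
#   hadoop dfsadmin -refreshNodes
#   hadoop mradmin -refreshNodes
```

Because the helper skips addresses that are already listed, running it twice with the same hosts leaves the file unchanged.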

HDFS will not move blocks from old datanodes to new datanodes to balance the cluster. To do this, run the balancer manually:

$ start-balancer.sh

The optional -threshold argument specifies the threshold percentage that defines what it means for the cluster to be balanced. If it is omitted, the threshold defaults to 10%.
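To make the threshold concrete: the cluster counts as balanced when every datanode's utilization (used space as a percentage of its capacity) is within the threshold of the cluster's overall utilization. The sketch below works through that check on made-up numbers. The function name is_balanced and the figures are illustrative assumptions, and it approximates the cluster utilization by the plain mean of the per-node values, which is only accurate when all nodes have the same capacity.

```shell
# is_balanced THRESHOLD UTIL...
# Succeeds (exit status 0) if every per-node utilization, in percent, lies
# within THRESHOLD percentage points of the mean utilization.
is_balanced() {
  local threshold
  [ "$#" -ge 2 ] || return 2   # need a threshold and at least one node
  threshold=$1
  shift
  printf '%s\n' "$@" | awk -v t="$threshold" '
    { u[NR] = $1; sum += $1 }
    END {
      mean = sum / NR
      for (i = 1; i <= NR; i++) {
        d = u[i] - mean
        if (d < 0) d = -d
        if (d > t) exit 1      # this node is too far from the mean
      }
      exit 0
    }'
}

# Two full nodes plus a freshly added, nearly empty one: mean is 50%,
# and the new node is 35 points away, so the cluster is not balanced at 10%.
#   is_balanced 10 70 65 15    # fails
# After rebalancing, all nodes sit within 2 points of the mean.
#   is_balanced 10 52 50 48    # succeeds
```

A tighter target can be requested from the balancer itself, for example `start-balancer.sh -threshold 5`, at the cost of a longer run.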

Decommissioning old nodes

To decommission datanodes, you inform the namenode of the nodes that you wish to take out of circulation, so that it can replicate their blocks to other datanodes before the datanodes are shut down.

The decommissioning process is controlled by an exclude file, which is set for HDFS by the dfs.hosts.exclude property and for MapReduce by the mapred.hosts.exclude property. These properties often refer to the same file. The exclude file lists the nodes that are not permitted to connect to the cluster.
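A shared exclude file could be configured along these lines. The property names are the ones given above; the path /etc/hadoop/conf/exclude is an assumption for illustration only.

```xml
<!-- hdfs-site.xml: datanodes that are being decommissioned -->
<property>
  <name>dfs.hosts.exclude</name>
  <!-- assumed path, for illustration only -->
  <value>/etc/hadoop/conf/exclude</value>
</property>

<!-- mapred-site.xml: tasktrackers that are being decommissioned -->
<property>
  <name>mapred.hosts.exclude</name>
  <!-- same shared exclude file (assumed path) -->
  <value>/etc/hadoop/conf/exclude</value>
</property>
```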

To remove nodes from the cluster:

- Add the network addresses of the nodes to be decommissioned to the exclude file. Do not update the include file at this point.

- Update the namenode with the new set of permitted datanodes, using this command:

$ hadoop dfsadmin -refreshNodes

- Update the jobtracker with the new set of permitted tasktrackers using:

$ hadoop mradmin -refreshNodes

- Go to the web UI and check whether the admin state has changed to "Decommission in Progress" for the datanodes being decommissioned. They will start copying their blocks to other datanodes in the cluster.

- When all the datanodes report their state as "Decommissioned", all the blocks have been replicated. Shut down the decommissioned nodes.

- Remove the nodes from the include file, and run:

$ hadoop dfsadmin -refreshNodes

$ hadoop mradmin -refreshNodes

- Remove the nodes from the slaves file.
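Rather than watching the web UI, the decommissioning state can also be checked from the command line. The sketch below assumes that `hadoop dfsadmin -report` prints a "Decommission Status : ..." line for each datanode, with the values Normal, Decommission in progress, and Decommissioned, as Hadoop 1.x releases do; check the output of your own version before relying on it. The function name count_in_progress is hypothetical.

```shell
# count_in_progress: read a dfsadmin report on stdin and print how many
# datanodes are still being decommissioned.
# Assumes report lines of the form "Decommission Status : <state>".
count_in_progress() {
  # grep -c prints the count but returns non-zero when it is 0, hence "|| true"
  grep -ci 'Decommission Status *: *Decommission in progress' || true
}

# Example usage (requires a working hadoop client on the PATH):
#   hadoop dfsadmin -report | count_in_progress
#
# Polling until no node is left in progress, checking once a minute:
#   while [ "$(hadoop dfsadmin -report | count_in_progress)" -gt 0 ]; do
#     sleep 60
#   done
```

Note that a count of 0 is also printed if the report command itself fails, so it is worth confirming the final state in the web UI before shutting the nodes down.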

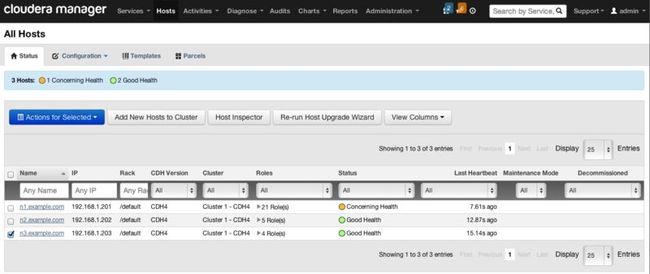

Decommissioning or recommissioning a node in a Hadoop cluster by hand involves quite a few steps. Cloudera Manager makes the job easier by taking care of the fiddly configuration for you. Below are the steps to decommission and recommission a node in a Hadoop cluster using Cloudera Manager.

Decommission one or more hosts:

- Click the Hosts tab.

- Select the host(s) you want to decommission.

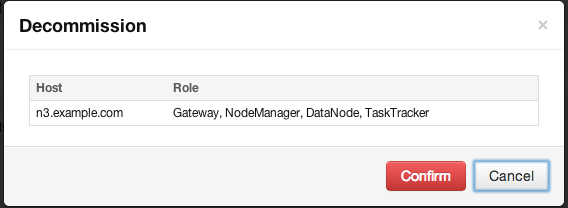

- From the Actions for Selected menu, click Decommission.

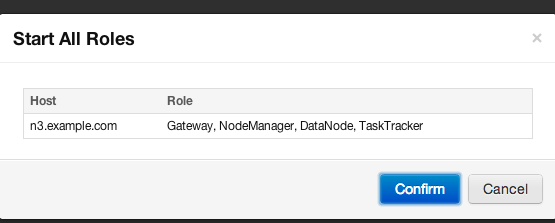

- A confirmation pop-up informs you of the roles that will be decommissioned or stopped on the nodes you have selected. To proceed with the decommissioning, click Confirm.

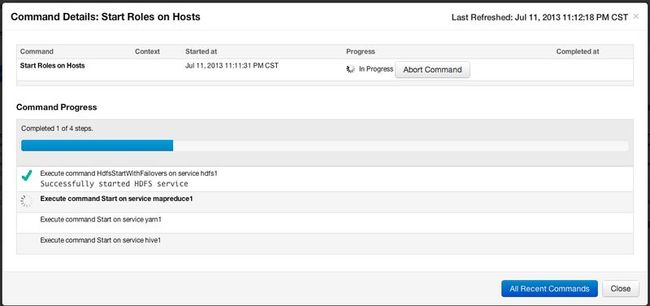

- A Command Details window appears that will show each stop or decommission command as it is run, service by service. You can click one of the decommission links to see the subcommands that are run for decommissioning a given role. Depending on the role, the steps may include adding the node to an "exclusions list" and refreshing the NameNode, JobTracker, or NodeManager, stopping the Balancer (if it is running), and moving data blocks or regions. Roles that do not have specific decommission actions are just stopped.

While decommissioning is in progress, the host is marked Decommissioning in the list under the Hosts tab. Once all roles have been decommissioned or stopped, the host is marked Decommissioned.

Roles on a decommissioned host cannot be restarted until the host is recommissioned.

Recommissioning a Host

Only hosts that are decommissioned using Cloudera Manager can be recommissioned.

- Click the Hosts tab.

- Select the host(s) you want to recommission.

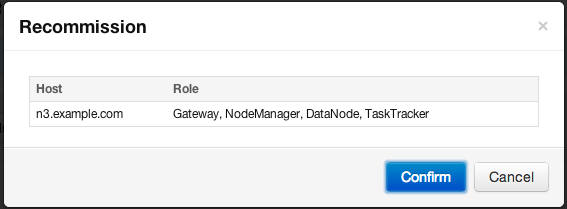

- From the Actions for Selected menu, click Recommission.

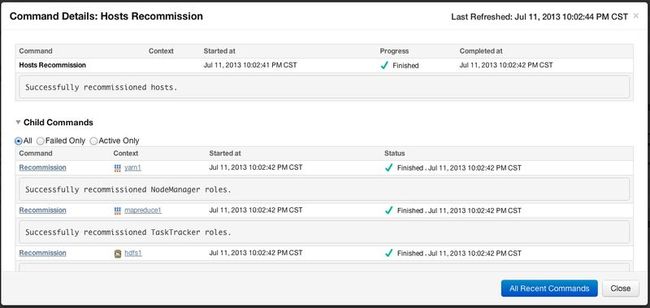

This will recommission the host (i.e. remove it from the exclusion lists and run the appropriate refresh) so that the roles that reside on it can be restarted. The Decommissioned indicator is removed from the host. It also removes the Decommissioned indicator from the roles that reside on the host. However, the roles themselves are NOT restarted by the recommission command.

You can restart all the roles on a recommissioned host in a single command from the Hosts page:

- Select the host(s) on which you want to start the decommissioned roles.

- From the Actions for Selected menu, click Start All Roles.

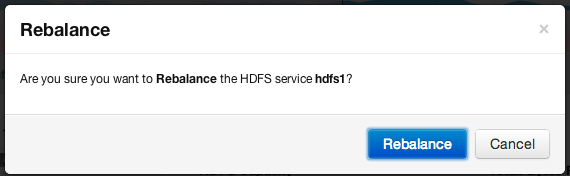

Run the Balancer to rebalance the cluster:

1. On the HDFS > Status tab, choose Rebalance on the Actions menu.

2. Click the Rebalance button that appears on the next screen to confirm.

If you see a Finished status, the Balancer ran and completed successfully.