corosync (openais) + mysql + drbd

Building a highly available server cluster with corosync (openais) + mysql + drbd

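Lab environment: two Red Hat Enterprise Linux 5.4 hosts

Things to note

1: Set up a yum server (repository)

2: Keep the time consistent across all nodes (hwclock -s, or set up an NTP server)

3: Services defined as cluster resources must not be started locally on the hosts; they must be managed by the CRM.

4: drbd, corosync, pacemaker and the other cluster services all identify nodes by hostname, so every host name must resolve correctly (via the hosts file).

5: Packages used in this lab.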

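//************* The drbd kernel module code is only included in Linux kernel 2.6.33 and later (RHEL 5.4 ships a 2.6.18 kernel), so we install both the kernel module and the management tools *********//

drbd83-8.3.8-1.el5.centos.i386.rpm drbd management tools

kmod-drbd83-8.3.8-1.el5.centos.i686.rpm drbd kernel module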


cluster-glue-1.0.6-1.6.el5.i386.rpm common cluster components (local resource manager, STONITH plugins, etc.)

cluster-glue-libs-1.0.6-1.6.el5.i386.rpm

corosync-1.2.7-1.1.el5.i386.rpm corosync main package (cluster messaging layer)

corosynclib-1.2.7-1.1.el5.i386.rpm corosync libraries

heartbeat-3.0.3-2.3.el5.i386.rpm heartbeat; used here for the layer-4 resource agents

heartbeat-libs-3.0.3-2.3.el5.i386.rpm heartbeat libraries

ldirectord-1.0.1-1.el5.i386.rpm probes the backend real servers in a highly available (LVS) cluster

libesmtp-1.0.4-5.el5.i386.rpm

openais-1.1.3-1.6.el5.i386.rpm extends pacemaker's functionality

openaislib-1.1.3-1.6.el5.i386.rpm openais libraries

pacemaker-1.1.5-1.1.el5.i386.rpm pacemaker main package

pacemaker-libs-1.1.5-1.1.el5.i386.rpm pacemaker libraries

pacemaker-cts-1.1.5-1.1.el5.i386.rpm

perl-TimeDate-1.16-5.el5.noarch.rpm

resource-agents-1.0.4-1.1.el5.i386.rpm provides the resource agents

mysql-5.5.15-linux2.6-i686.tar.gz MySQL binary tarball (runs without installation)

Note: the packages can be downloaded from http://down.51cto.com/data/402802

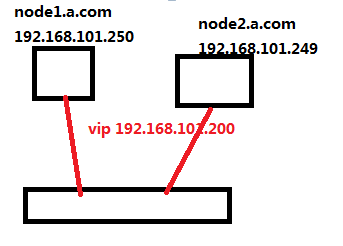

I: Modify the network settings of each cluster node

node1 configuration:

[root@jun ~]# vim /etc/sysconfig/network

NETWORKING=yes

NETWORKING_IPV6=yes

HOSTNAME=node1.a.com

[root@jun ~]# vim /etc/hosts

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

192.168.101.250 node1.a.com node1

192.168.101.249 node2.a.com node2

[root@jun ~]# init 6

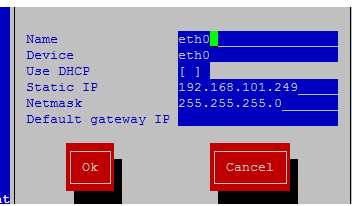

Node2 configuration:

[root@jun ~]# vim /etc/sysconfig/network

NETWORKING=yes

NETWORKING_IPV6=yes

HOSTNAME=node2.a.com

[root@jun ~]# vim /etc/hosts

# Do not remove the following line, or various programs

# that require network functionality will fail.

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

192.168.101.250 node1.a.com node1

192.168.101.249 node2.a.com node2

[root@jun ~]# init 6
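Every cluster component resolves its peers by hostname, so it can help to check the hosts entries before going further. Below is a minimal POSIX shell sketch that is not part of the original procedure. `host_ip` and `check_nodes` are hypothetical helper names. The sketch only reads a hosts-format file (by default /etc/hosts) and does not query DNS.

```shell
# host_ip NAME [FILE]: print the first address mapped to NAME in a
# hosts-format file (default /etc/hosts); return 1 if NAME is absent.
host_ip() {
    local name=$1 file=${2:-/etc/hosts}
    # Skip comment lines; field 1 is the address, the rest are names/aliases.
    awk -v n="$name" '
        /^[ \t]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) { print $1; found = 1; exit } }
        END { exit !found }
    ' "$file"
}

# check_nodes FILE NAME...: print "NAME -> ADDRESS" for each name,
# report missing names on stderr, and fail if any name is missing.
check_nodes() {
    local hosts_file=$1 rc=0 node addr
    shift
    for node in "$@"; do
        if addr=$(host_ip "$node" "$hosts_file"); then
            echo "$node -> $addr"
        else
            echo "$node: not found in $hosts_file" >&2
            rc=1
        fi
    done
    return $rc
}
```

For example, running `check_nodes /etc/hosts node1.a.com node2.a.com` on each node should print both addresses. Also check that `uname -n` returns the same name used in the hosts file, because drbd and pacemaker identify the local node by that name.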

II: Synchronize the time of the cluster nodes

node1:

[root@node1 ~]# hwclock -s

node2:

[root@node2 ~]# hwclock -s

III: Generate keys on each node for password-less SSH communication

node1:

[root@node1 ~]# ssh-keygen -t rsa    # generate an RSA key pair

[root@node1 ~]# ssh-copy-id -i .ssh/id_rsa.pub node2    # copy the public key to node2

node2:

[root@node2 ~]# ssh-keygen -t rsa    # generate an RSA key pair

[root@node2 ~]# ssh-copy-id -i .ssh/id_rsa.pub node1    # copy the public key to node1

IV: Configure the yum client on each node

Node1:

[root@node1 ~]# vim /etc/yum.repos.d/server.repo

[rhel-server]

name=Red Hat Enterprise Linux server

baseurl=file:///mnt/cdrom/Server

enabled=1

gpgcheck=1

gpgkey=file:///mnt/cdrom/RPM-GPG-KEY-redhat-release

# repository used for virtualization

[rhel-vt]

name=Red Hat Enterprise Linux vt

baseurl=file:///mnt/cdrom/VT

enabled=1

gpgcheck=1

gpgkey=file:///mnt/cdrom/RPM-GPG-KEY-redhat-release

# repository needed for clustering

[rhel-cluster]

name=Red Hat Enterprise Linux cluster

baseurl=file:///mnt/cdrom/Cluster

enabled=1

gpgcheck=1

gpgkey=file:///mnt/cdrom/RPM-GPG-KEY-redhat-release

# repository needed for cluster storage

[rhel-clusterstorage]

name=Red Hat Enterprise Linux clusterstorage

baseurl=file:///mnt/cdrom/ClusterStorage

enabled=1

gpgcheck=1

gpgkey=file:///mnt/cdrom/RPM-GPG-KEY-redhat-release
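The four repository stanzas differ only in their id and subdirectory. The sketch below generates them, which avoids the copy-and-edit mistakes that are easy to make in this file. It assumes the DVD is mounted at /mnt/cdrom as above, and `repo_stanza` and `make_repo` are hypothetical helper names.

```shell
# Mount point of the RHEL 5.4 DVD, as used in server.repo above.
MEDIA=${MEDIA:-/mnt/cdrom}

# repo_stanza ID SUBDIR: print one [rhel-ID] repository definition.
repo_stanza() {
    cat <<EOF
[rhel-$1]
name=Red Hat Enterprise Linux $1
baseurl=file://$MEDIA/$2
enabled=1
gpgcheck=1
gpgkey=file://$MEDIA/RPM-GPG-KEY-redhat-release

EOF
}

# make_repo: print the four repositories used in this lab.
make_repo() {
    repo_stanza server Server
    repo_stanza vt VT
    repo_stanza cluster Cluster
    repo_stanza clusterstorage ClusterStorage
}
```

Usage: `make_repo > /etc/yum.repos.d/server.repo`, then `yum repolist` to confirm the repositories are visible.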

Copy node1's yum configuration to node2:

[root@node1 ~]# scp /etc/yum.repos.d/server.repo node2:/etc/yum.repos.d/

V: Upload the downloaded rpm packages to each node

Node1:

-rw-r--r-- 1 root root 125974 May 12 10:26 kmod-drbd83-8.3.8-1.el5.centos.i686.rpm

-rw-r--r-- 1 root root 60458 May 12 09:24 libesmtp-1.0.4-5.el5.i386.rpm

-rw-r--r-- 1 root root 126663 May 12 09:25 libmcrypt-2.5.7-5.el5.i386.rpm

-rw-r--r-- 1 root root 207085 May 12 09:24 openais-1.1.3-1.6.el5.i386.rpm

-rw-r--r-- 1 root root 94614 May 12 09:24 openaislib-1.1.3-1.6.el5.i386.rpm

-rw-r--r-- 1 root root 796813 May 12 09:24 pacemaker-1.1.5-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 207925 May 12 09:24 pacemaker-cts-1.1.5-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 332026 May 12 09:24 pacemaker-libs-1.1.5-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 32818 May 12 09:24 perl-TimeDate-1.16-5.el5.noarch.rpm

-rw-r--r-- 1 root root 388632 May 12 09:24 resource-agents-1.0.4-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 271360 May 12 09:21 cluster-glue-1.0.6-1.6.el5.i386.rpm

-rw-r--r-- 1 root root 133254 May 12 09:21 cluster-glue-libs-1.0.6-1.6.el5.i386.rpm

-rw-r--r-- 1 root root 170052 May 12 09:22 corosync-1.2.7-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 158502 May 12 09:22 corosynclib-1.2.7-1.1.el5.i386.rpm

-rw-r--r-- 1 root root 221868 May 12 09:21 drbd83-8.3.8-1.el5.centos.i386.rpm

-rw-r--r-- 1 root root 165591 May 12 09:24 heartbeat-3.0.3-2.3.el5.i386.rpm

-rw-r--r-- 1 root root 289600 May 12 09:24 heartbeat-libs-3.0.3-2.3.el5.i386.rpm

-rw-r--r-- 1 root root 162247449 May 12 09:27 mysql-5.5.15-linux2.6-i686.tar.gz

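Copy the rpm packages from node1 to node2 in the same way (for example with scp).

VI: Install all the rpm packages on each node

node1: [root@node1 ~]# yum localinstall *.rpm -y --nogpgcheck

node2: [root@node2 ~]# yum localinstall *.rpm -y --nogpgcheck

VII: On each node, add a partition of the same size and type (sdb1) to back the drbd device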

Node1:

[root@node1 ~]# fdisk /dev/sdb

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-2610, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-2610, default 2610): +1g

Command (m for help): w

[root@node1 ~]# partprobe /dev/sdb    # re-read the partition table so the kernel sees sdb1

[root@node1 ~]# cat /proc/partitions

major minor #blocks name

8 0 20971520 sda

8 1 104391 sda1

8 2 1052257 sda2

8 3 19808145 sda3

Node2:

[root@node2 ~]# fdisk /dev/sdb

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-2610, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-2610, default 2610): +1g

Command (m for help): w

[root@node2 ~]# partprobe /dev/sdb    # re-read the partition table so the kernel sees sdb1

[root@node2 ~]# cat /proc/partitions

major minor #blocks name

8 0 20971520 sda

8 1 104391 sda1

8 2 1052257 sda2

8 3 19808145 sda3

8 16 4194304 sdb

8 17 987966 sdb1
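DRBD sizes the replicated device to the smaller of the two backing partitions, so both sdb1 partitions should be the same size. The sketch below reads a partition's size from /proc/partitions (the `#blocks` column, in 1 KiB units) so the two nodes can be compared. `part_blocks` and `same_size` are hypothetical helper names.

```shell
# part_blocks NAME [FILE]: print the #blocks column (1 KiB units) for a
# partition in /proc/partitions format; return 1 if it is not listed.
part_blocks() {
    awk -v n="$1" '$4 == n { print $3; found = 1 } END { exit !found }' \
        "${2:-/proc/partitions}"
}

# same_size NAME FILE1 FILE2: succeed if NAME has the same size in both files.
same_size() {
    local a b
    a=$(part_blocks "$1" "$2") || return 1
    b=$(part_blocks "$1" "$3") || return 1
    [ "$a" = "$b" ]
}
```

For example, on node1: `ssh node2 cat /proc/partitions > /tmp/node2.partitions && same_size sdb1 /proc/partitions /tmp/node2.partitions && echo sizes match`.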

VIII: Configure drbd

Node1:

1: Copy the sample configuration file into place as the configuration file to use.

[root@node1 ~]# cp /usr/share/doc/drbd83-8.3.8/drbd.conf /etc/

2: Back up global_common.conf

[root@node1 ~]# cd /etc/drbd.d/

[root@node1 drbd.d]# ll

-rwxr-xr-x 1 root root 1418 Jun 4 2010 global_common.conf

[root@node1 drbd.d]# cp global_common.conf global_common.conf.bak

3: Edit global_common.conf

[root@node1 drbd.d]# vim global_common.conf

global {

}

common {

protocol C;

handlers {

pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

}

startup {

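wfc-timeout 120;    # timeout (seconds) waiting for the peer to connect at startup

degr-wfc-timeout 120;    # connection timeout used when this node was part of a degraded cluster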

}

disk {

on-io-error detach;    # on an I/O error, detach the backing device from drbd

}

net {

cram-hmac-alg "sha1";    # authenticate the peer with an HMAC based on sha1

shared-secret "mydrbdlab";    # shared secret; must be identical on both nodes

}

syncer {

rate 100M;    # bandwidth used when synchronizing data

}

}

4: Define the mysql resource

[root@node1 drbd.d]# vim mysql.res

resource mysql {

on node1.a.com {

device /dev/drbd0;

disk /dev/sdb1;

address 192.168.101.250:7789;

meta-disk internal;

}

on node2.a.com {

device /dev/drbd0;

disk /dev/sdb1;

address 192.168.101.249:7789;

meta-disk internal;

}

}
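A DRBD resource file wraps the per-host `on` sections in a `resource NAME { ... }` block. That name is what `drbdadm` commands such as `drbdadm create-md mysql` refer to. The sketch below generates the file from a few parameters. `make_drbd_res` is a hypothetical helper, and the host names passed to it must match `uname -n` on each node.

```shell
# make_drbd_res RES DEVICE DISK PORT HOST1 IP1 [HOST2 IP2 ...]:
# print a drbd resource definition with one "on HOST" section per pair.
make_drbd_res() {
    local res=$1 dev=$2 disk=$3 port=$4
    shift 4
    echo "resource $res {"
    while [ $# -ge 2 ]; do
        cat <<EOF
    on $1 {
        device    $dev;
        disk      $disk;
        address   $2:$port;
        meta-disk internal;
    }
EOF
        shift 2
    done
    echo "}"
}
```

Usage: `make_drbd_res mysql /dev/drbd0 /dev/sdb1 7789 node1.a.com 192.168.101.250 node2.a.com 192.168.101.249 > /etc/drbd.d/mysql.res`. Then run `drbdadm dump mysql` so drbdadm parses the file and reports any syntax error.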

5: Copy all of the drbd.* files above to node2

[root@node1 drbd.d]# scp -r /etc/drbd.* node2:/etc/

6: On node1, initialize the metadata of the mysql resource and start the service

[root@node1 drbd.d]# drbdadm create-md mysql

Writing meta data...

initializing activity log

NOT initialized bitmap

New drbd meta data block successfully created.

[root@node1 drbd.d]# service drbd start

7: On node2, initialize the metadata of the mysql resource and start the service

[root@node2 drbd.d]# drbdadm create-md mysql

Writing meta data...

initializing activity log

NOT initialized bitmap

New drbd meta data block successfully created.

[root@node2 drbd.d]# service drbd start

8: Check the status with drbd-overview

[root@node1 drbd.d]# drbd-overview

0:mysql Connected Secondary/Secondary Inconsistent/Inconsistent C r----

The output above shows that both nodes are Secondary. Next, one of them must become Primary. Here node1 becomes the primary node, so run the following on node1:

[root@node1 drbd.d]# drbdadm -- --overwrite-data-of-peer primary mysql

[root@node1 drbd.d]# drbd-overview    # check the status again

0:mysql SyncSource Primary/Secondary UpToDate/Inconsistent C r----

[=========>..........] sync'ed: 50.9% (490136/987896)K delay_probe: 30

Check the drbd status on node2:

[root@node2 drbd.d]# cat /proc/drbd

version: 8.3.8 (api:88/proto:86-94)

GIT-hash: d78846e52224fd00562f7c225bcc25b2d422321d build by [email protected], 2010-06-04 08:04:16

0: cs:Connected ro:Secondary/Primary ds:UpToDate/UpToDate C r----

ns:0 nr:987896 dw:987896 dr:0 al:0 bm:61 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:0

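Note:

Primary/Secondary means the current (local) node is the primary

Secondary/Primary means the current node is the secondary (the local role is always listed first, the peer's role second)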
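A script can use the local/peer ordering directly. The sketch below assumes the drbd 8.3 drbd-overview layout shown above, where the roles are the third whitespace-separated field. `drbd_roles` and `is_local_primary` are hypothetical helper names.

```shell
# drbd_roles LINE: print "LOCAL PEER" from one drbd-overview line.
drbd_roles() {
    # Field 3 looks like Primary/Secondary: local role first, then the peer's.
    echo "$1" | awk '{ split($3, r, "/"); print r[1], r[2] }'
}

# is_local_primary LINE: succeed only when this node is Primary.
is_local_primary() {
    [ "$(drbd_roles "$1" | cut -d' ' -f1)" = "Primary" ]
}
```

For example, `is_local_primary "$(drbd-overview | grep ':mysql ')" && mount /dev/drbd0 /mysqldata` mounts the device only on the primary. Once the cluster manages drbd, the Filesystem resource defined later takes over this job.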

9: Then watch the synchronization progress

[root@node1 drbd.d]# watch -n 1 'cat /proc/drbd'
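Instead of watching /proc/drbd by eye, a script can wait until the initial sync finishes. This is a minimal sketch that assumes the drbd 8.3 progress line of the form `sync'ed: 50.9%` shown above. `sync_percent` is a hypothetical helper that prints 100 when no resync line is present.

```shell
# sync_percent [FILE]: print the resync percentage from /proc/drbd-style
# text, or 100 when no "sync'ed:" progress line is present.
sync_percent() {
    # "sync.ed" matches "sync'ed" without quoting problems inside awk.
    awk '
        match($0, /sync.ed: *[0-9.]+%/) {
            s = substr($0, RSTART, RLENGTH)
            sub(/^sync.ed: */, "", s)
            sub(/%$/, "", s)
            print s
            found = 1
            exit
        }
        END { if (!found) print 100 }
    ' "${1:-/proc/drbd}"
}
```

Usage: `until [ "$(sync_percent)" = 100 ]; do sleep 5; done`. A disconnected resource also has no progress line, so confirm `cs:Connected` as well before relying on the result.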

10: Create the file system (this can only be done on the primary node)

[root@node1 drbd.d]# mkfs -t ext3 /dev/drbd0    # format the device

[root@node1 drbd.d]# mkdir /mysqldata    # create the mount point

[root@node1 drbd.d]# mount /dev/drbd0 /mysqldata/    # mount it

[root@node1 drbd.d]# cd /mysqldata

[root@node1 mysqldata]# touch f1 f2    # create two test files

[root@node1 mysqldata]# ll /mysqldata/

-rw-r--r-- 1 root root 0 May 9 15:45 f1

-rw-r--r-- 1 root root 0 May 9 15:45 f2

drwx------ 2 root root 16384 May 9 15:41 lost+found

[root@node1 ~]# umount /mysqldata    # unmount the drbd device (run it from outside /mysqldata)

[root@node1 ~]# drbdadm secondary mysql    # demote node1 to secondary

[root@node1 ~]# drbd-overview

0:mysql Connected Secondary/Secondary UpToDate/UpToDate C r----

11: Promote node2 to primary

[root@node2 ~]# drbdadm primary mysql

[root@node2 ~]# drbd-overview

0:mysql Connected Primary/Secondary UpToDate/UpToDate C r----

[root@node2 ~]# mkdir /mysqldata

[root@node2 ~]# mount /dev/drbd0 /mysqldata

[root@node2 ~]# ll /mysqldata/

total 16

-rw-r--r-- 1 root root 0 May 9 15:45 f1

-rw-r--r-- 1 root root 0 May 9 15:45 f2

drwx------ 2 root root 16384 May 9 15:41 lost+found

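[root@node2 ~]# umount /mysqldata/    # unmount the device

At this point drbd is installed and working correctly!

IX: Installing and configuring MySQL

1, Configuration on node1

Add the user and group: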

# groupadd -r mysql

# useradd -g mysql -r mysql

Only the primary device can be read, written and mounted, so make node1 the primary again and node2 the secondary:

On node2:

# drbdadm secondary mysql

On node1:

# drbdadm primary mysql

[root@node1 ~]# drbd-overview

Mount the drbd device:

[root@node1 ~]# mount /dev/drbd0 /mysqldata/

[root@node1 ~]# mkdir /mysqldata/data

The data directory will hold the MySQL data, so change its owner and group:

[root@node1 ~]# chown -R mysql:mysql /mysqldata/data

[root@node1 ~]# ls /mysqldata/

data f1 f2 lost+found

node1:

Install MySQL:

# tar zxvf mysql-5.5.15-linux2.6-i686.tar.gz -C /usr/local

# cd /usr/local/

# ln -sv mysql-5.5.15-linux2.6-i686 mysql

# cd mysql

# chown -R mysql:mysql .

Initialize the MySQL database:

# scripts/mysql_install_db --user=mysql --datadir=/mysqldata/data

# chown -R root .

Provide the main MySQL configuration file:

# cp support-files/my-large.cnf /etc/my.cnf

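Then set thread_concurrency in this file to the number of CPUs times 2. Here we use the following line:

# vim /etc/my.cnf

thread_concurrency = 2

Also add the following line (in the [mysqld] section) to specify where the MySQL data files are stored:

datadir = /mysqldata/data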
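The "CPUs times 2" rule can be computed rather than typed. The sketch below counts `processor` entries in /proc/cpuinfo. `cpu_count` and `thread_concurrency_line` are hypothetical helper names. As far as we know, the MySQL 5.5 manual describes thread_concurrency as specific to Solaris 8 and earlier, so on Linux this value is not expected to change behavior.

```shell
# cpu_count [FILE]: number of "processor" entries in cpuinfo-format text.
cpu_count() {
    grep -c '^processor' "${1:-/proc/cpuinfo}"
}

# thread_concurrency_line [FILE]: print the my.cnf line with CPUs x 2.
thread_concurrency_line() {
    local n
    n=$(cpu_count "$1") || return 1
    echo "thread_concurrency = $((n * 2))"
}
```

For example: `sed -i "s/^thread_concurrency.*/$(thread_concurrency_line)/" /etc/my.cnf`.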

Install a SysV init script for mysql so that it can be managed with the service command:

# cp support-files/mysql.server /etc/rc.d/init.d/mysqld

The configuration file and SysV init script are the same on node2, so copy them over from node1 directly:

# scp /etc/my.cnf node2:/etc/

# scp /etc/rc.d/init.d/mysqld node2:/etc/rc.d/init.d

Add it to the service list:

# chkconfig --add mysqld

Make sure it does not start at boot, because the CRM will control it:

# chkconfig mysqld off

Then start the service to test it:

# service mysqld start

Check that the data directory has been populated:

[root@node1 mysql]# ls /mysqldata/data/

ib_logfile0 ibdata1 mysql-bin.000001 node1.a.com.err performance_schema

ib_logfile1 mysql mysql-bin.index node1.a.com.pid test

After testing, stop the service:

# service mysqld stop

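To make the MySQL installation follow system conventions and export its development components to the system, the following steps are also needed:

Add the MySQL man pages to the man search path: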

# vim /etc/man.config

Add the following line:

MANPATH /usr/local/mysql/man

Export the MySQL header files to the system header path /usr/include by creating a symlink:

# ln -sv /usr/local/mysql/include /usr/include/mysql

Add the MySQL libraries to the system library search path (any file under /etc/ld.so.conf.d/ with a .conf suffix works):

# echo '/usr/local/mysql/lib' > /etc/ld.so.conf.d/mysql.conf

Then have the system reload its library cache:

# ldconfig

Modify the PATH environment variable so that all users on the system can run the MySQL commands directly:

# vim /etc/profile

PATH=$PATH:/usr/local/mysql/bin

[root@node1 ~]# . /etc/profile    # re-read the environment variables

[root@node1 mysql]# echo $PATH

/usr/kerberos/sbin:/usr/kerberos/bin:/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin:/usr/local/mysql/bin
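Appending to PATH unconditionally, as the /etc/profile line above does, adds the directory again each time the file is sourced. A common guard appends it only when it is missing. This POSIX sketch uses a hypothetical function name, `path_append`.

```shell
# path_append DIR: append DIR to PATH only if it is not already present,
# so re-sourcing /etc/profile does not keep growing PATH.
path_append() {
    case ":$PATH:" in
        *":$1:"*) ;;                      # already present: leave PATH alone
        *) PATH=${PATH:+$PATH:}$1 ;;      # append, avoiding a leading ":"
    esac
    export PATH
}
```

Placed in /etc/profile, the function would be followed by a call to `path_append /usr/local/mysql/bin` in place of the plain `PATH=$PATH:/usr/local/mysql/bin` line.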

Unmount the drbd device:

# umount /mysqldata

2, Configuration on node2:

Add the user and group:

# groupadd -r mysql

# useradd -g mysql -r mysql

Only the primary device can be read, written and mounted, so now make node2 the primary and node1 the secondary:

On node1:

# drbdadm secondary mysql

On node2:

# drbdadm primary mysql

Mount the drbd device:

# mount /dev/drbd0 /mysqldata

Check:

[root@node2 ~]# ls /mysqldata/

data f1 f2 lost+found

Install MySQL:

# tar zxfv mysql-5.5.15-linux2.6-i686.tar.gz -C /usr/local

# cd /usr/local/

# ln -sv mysql-5.5.15-linux2.6-i686 mysql

# cd mysql

Do NOT initialize the database here, because it was already initialized on node1:

# chown -R root:mysql .

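The MySQL main configuration file and SysV init script were already copied from node1, so they do not need to be added again.

Add it to the service list:

# chkconfig --add mysqld

Make sure it does not start at boot, because the CRM will control it:

# chkconfig mysqld off

Then start the service to test it (make sure mysqld on node1 is stopped first):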

# service mysqld start

Check that the data directory contains the expected files:

[root@node2 mysql]# ls /mysqldata/data/

ib_logfile0 ibdata1 mysql-bin.000001 mysql-bin.index node2.a.com.err performance_schema

ib_logfile1 mysql mysql-bin.000002 node1.a.com.err node2.a.com.pid test

After testing, stop the service:

# service mysqld stop

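To make the installation follow system conventions and export MySQL's development components to the system, repeat the corresponding steps from node1. They are identical, so they are not repeated here.

Unmount the device:

# umount /dev/drbd0

X: Configuring corosync + pacemaker

The required rpm packages were installed earlier, so they are not installed again here.

1: Change to the configuration directory and create the main configuration file from the example

[root@node1 ~]# cd /etc/corosync/

[root@node1 corosync]# cp corosync.conf.example corosync.conf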

[root@node1 corosync]# vim corosync.conf

compatibility: whitetank

# totem: settings for the protocol used to pass heartbeat messages

totem {

version: 2

secauth: off

threads: 0

interface {

ringnumber: 0

# network address of the cluster interface; this is the only setting we need to change

bindnetaddr: 192.168.101.0

mcastaddr: 226.94.1.1

mcastport: 5405

}

}

logging {

fileline: off

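# whether to log to standard error

to_stderr: no

# write the log to a file

to_logfile: yes

# also log to syslog (logging to both lowers performance, so consider turning one off)

to_syslog: yes

# the /var/log/cluster directory must be created manually

logfile: /var/log/cluster/corosync.log

# turn on when troubleshooting

debug: off

# whether to record timestamps in the log

timestamp: on

# the following settings belong to openais and do not need to be enabled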

logger_subsys {

subsys: AMF

debug: off

}

}

amf {

mode: disabled

}

# additional settings: everything above is the underlying messaging layer; this block is needed because we use pacemaker

service {

ver: 0

name: pacemaker

use_mgmtd: yes

}

# openais itself is not used, but some of its sub-options are

aisexec {

user: root

group: root

}
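`bindnetaddr` is the network address of the interface corosync binds to: the node's IP address ANDed with its netmask. Both nodes sit in 192.168.101.0/24, so the same 192.168.101.0 works on each of them. The sketch below performs that calculation with POSIX shell arithmetic. `ip_to_int`, `int_to_ip` and `network_addr` are hypothetical helper names.

```shell
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
    local IFS=.
    set -- $1        # split the dotted quad into $1..$4
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# int_to_ip N: print a 32-bit integer as a dotted quad.
int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# network_addr IP NETMASK: print the network address (IP AND NETMASK).
network_addr() {
    int_to_ip $(( $(ip_to_int "$1") & $(ip_to_int "$2") ))
}
```

For example, `network_addr 192.168.101.250 255.255.255.0` on node1 prints the value used above for bindnetaddr.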

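2: Create the cluster log directory

[root@node1 corosync]# mkdir /var/log/cluster

3: Generate an authkey so that other hosts cannot join the cluster without authentication (the key is only used when secauth is set to on)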

[root@node1 corosync]# corosync-keygen

[root@node1 corosync]# ll

-rw-r--r-- 1 root root 5384 Jul 28 2010 amf.conf.example

-r-------- 1 root root 128 May 8 14:09 authkey

-rw-r--r-- 1 root root 538 May 8 14:08 corosync.conf

-rw-r--r-- 1 root root 436 Jul 28 2010 corosync.conf.example

drwxr-xr-x 2 root root 4096 Jul 28 2010 service.d

drwxr-xr-x 2 root root 4096 Jul 28 2010 uidgid.d

4: Copy the files from node1 to node2 (use -p to preserve the permissions)

[root@node1 corosync]# scp -p authkey corosync.conf node2:/etc/corosync/

authkey 100% 128 0.1KB/s 00:00

corosync.conf 100% 513 0.5KB/s 00:00

[root@node1 corosync]# ssh node2 'mkdir /var/log/cluster'

5: Start the corosync service on node1 and node2

[root@node1 corosync]# service corosync start

6: Verify that the corosync engine started correctly

[root@node1 corosync]# grep -i -e "corosync cluster engine" -e "configuration file" /var/log/messages

Apr 15 09:17:49 jun smartd[2933]: Opened configuration file /etc/smartd.conf

Apr 15 09:17:49 jun smartd[2933]: Configuration file /etc/smartd.conf was parsed, found DEVICESCAN, scanning devices

May 6 10:02:18 jun smartd[2898]: Opened configuration file /etc/smartd.conf

May 6 10:02:18 jun smartd[2898]: Configuration file /etc/smartd.conf was parsed, found DEVICESCAN, scanning devices

May 12 08:55:22 jun smartd[2897]: Opened configuration file /etc/smartd.conf

May 12 08:55:23 jun smartd[2897]: Configuration file /etc/smartd.conf was parsed, found DEVICESCAN, scanning devices

May 12 09:04:09 node1 smartd[2873]: Opened configuration file /etc/smartd.conf

May 12 09:04:09 node1 smartd[2873]: Configuration file /etc/smartd.conf was parsed, found DEVICESCAN, scanning devices

May 12 09:41:20 node1 smartd[2958]: Opened configuration file /etc/smartd.conf

May 12 09:41:20 node1 smartd[2958]: Configuration file /etc/smartd.conf was parsed, found DEVICESCAN, scanning devices

May 12 12:06:34 node1 corosync[3730]: [MAIN ] Corosync Cluster Engine ('1.2.7'): started and ready to provide service.

May 12 12:06:34 node1 corosync[3730]: [MAIN ] Successfully read main configuration file '/etc/corosync/corosync.conf'.

7: Check that the initial membership notifications were sent

[root@node1 corosync]# grep -i totem /var/log/messages

May 12 12:06:34 node1 corosync[3730]: [TOTEM ] Initializing transport (UDP/IP).

May 12 12:06:34 node1 corosync[3730]: [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0).

May 12 12:06:34 node1 corosync[3730]: [TOTEM ] The network interface [192.168.101.250] is now up.

May 12 12:06:35 node1 corosync[3730]: [TOTEM ] Process pause detected for 855 ms, flushing membership messages.

May 12 12:06:35 node1 corosync[3730]: [TOTEM ] A processor joined or left the membership and a new membership was formed.

May 12 12:06:51 node1 corosync[3730]: [TOTEM ] A processor joined or left the membership and a new membership was formed.

8: Check for errors during startup

[root@node1 corosync]# grep -i error: /var/log/messages | grep -v unpack_resources    # ignore the stonith-related errors

9: Check that pacemaker has started

[root@node1 corosync]# grep -i pcmk_startup /var/log/messages

May 7 16:24:36 node1 corosync[686]: [pcmk ] info: pcmk_startup: CRM: Initialized

May 7 16:24:36 node1 corosync[686]: [pcmk ] Logging: Initialized pcmk_startup

May 7 16:24:36 node1 corosync[686]: [pcmk ] info: pcmk_startup: Maximum core file size is: 4294967295

May 7 16:24:36 node1 corosync[686]: [pcmk ] info: pcmk_startup: Service: 9

May 7 16:24:36 node1 corosync[686]: [pcmk ] info: pcmk_startup: Local hostname: node1.a.com

May 7 16:38:31 node1 corosync[754]: [pcmk ] info: pcmk_startup: CRM: Initialized

May 7 16:38:31 node1 corosync[754]: [pcmk ] Logging: Initialized pcmk_startup

May 7 16:38:31 node1 corosync[754]: [pcmk ] info: pcmk_startup: Maximum core file size is: 4294967295

May 7 16:38:31 node1 corosync[754]: [pcmk ] info: pcmk_startup: Service: 9

May 7 16:38:31 node1 corosync[754]: [pcmk ] info: pcmk_startup: Local hostname: node1.a.com

node2: repeat steps 5-9 above

10: Check the cluster status on node1

[root@node1 corosync]# crm status

============

Last updated: Sat May 12 12:13:09 2012

Stack: openais

Current DC: node1.a.com - partition with quorum

Version: 1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f

2 Nodes configured, 2 expected votes

0 Resources configured.

============

Online: [ node1.a.com node2.a.com ]

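XI: Configure the cluster properties

Pacemaker enables stonith by default, but this cluster has no stonith device, so the default configuration cannot work yet. Disable stonith for now with:

# crm configure property stonith-enabled=false

For a two-node cluster we set this option to ignore quorum, so that votes have no effect and a single remaining node keeps running:

# crm configure property no-quorum-policy=ignore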

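Define a resource stickiness value so that resources do not move freely between nodes, because needless migration wastes system resources.

Resource stickiness values and their effects:

0: the default. The resource is placed at the most suitable location in the system, which means it may move whenever a "better" or less loaded node becomes available. This is almost equivalent to automatic failback, except that the resource may move to a node other than the one it was previously active on;

Greater than 0: the resource prefers to stay where it is, but moves if a more suitable node is available. Higher values mean a stronger preference to stay;

Less than 0: the resource prefers to move away from its current location. Higher absolute values mean a stronger preference to leave;

INFINITY: the resource always stays where it is unless it is forced off because the node can no longer run it (node shutdown, node standby, reaching the migration-threshold, or a configuration change). This is almost equivalent to completely disabling automatic failback;

-INFINITY: the resource always moves away from its current location;

Here we set a default stickiness for all resources as follows:

# crm configure rsc_defaults resource-stickiness=100

XII: Define the cluster services and resources (on node1)

1. Check the current cluster configuration and make sure the global properties for a two-node cluster are in place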

[root@node1 ~]# crm configure show

node node1.a.com

node node2.a.com

property $id="cib-bootstrap-options" \

dc-version="1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

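2. Hand the configured DRBD device /dev/drbd0 over to the cluster: stop drbd and disable it at boot on both nodes, since the cluster will start it from now on;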

# service drbd stop

# chkconfig drbd off

# ssh node2 "service drbd stop"

# ssh node2 "chkconfig drbd off"

# drbd-overview

drbd not loaded

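3: Configure drbd as a cluster resource:

The resource agent (RA) for drbd is currently classified under the OCF provider linbit, at /usr/lib/ocf/resource.d/linbit/drbd. The following commands list this RA and its meta information: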

# crm ra classes

heartbeat

lsb

ocf / heartbeat linbit pacemaker

stonith

# crm ra list ocf linbit

drbd

Show information about the drbd resource agent:

[root@node1 corosync]# crm ra info ocf:linbit:drbd

This resource agent manages a DRBD resource

as a master/slave resource. DRBD is a shared-nothing replicated storage

device. (ocf:linbit:drbd)

Master/Slave OCF Resource Agent for DRBD

Parameters (* denotes required, [] the default):

drbd_resource* (string): drbd resource name

The name of the drbd resource from the drbd.conf file.

drbdconf (string, [/etc/drbd.conf]): Path to drbd.conf

Full path to the drbd.conf file.

Operations' defaults (advisory minimum):

start timeout=240

promote timeout=90

demote timeout=90

notify timeout=90

stop timeout=100

monitor_Slave interval=20 timeout=20 start-delay=1m

monitor_Master interval=10 timeout=20 start-delay=1m

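drbd must run on both nodes at the same time, but only one node (in the primary/secondary model) can be Master while the other is Slave. That makes it a special kind of cluster resource: a multi-state clone, in which each node is either Master or Slave, and both nodes must be Slave when the service first starts.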

[root@node1 ~]# crm

crm(live)# configure

crm(live)configure# primitive mysqldrbd ocf:heartbeat:drbd params drbd_resource="mysql" op monitor role="Master" interval="30s" op monitor role="Slave" interval="31s" op start timeout="240s" op stop timeout="100s"

crm(live)configure# ms MS_mysqldrbd mysqldrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify="true"

crm(live)configure# show mysqldrbd

primitive mysqldrbd ocf:heartbeat:drbd \

params drbd_resource="mysql" \

op monitor interval="30s" role="Master" \

op monitor interval="31s" role="Slave" \

op start interval="0" timeout="240s" \

op stop interval="0" timeout="100s"

crm(live)configure# show MS_mysqldrbd

ms MS_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

Once everything checks out, commit:

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure# exit

Check the current cluster status:

[root@node1 ~]# crm status

============

Last updated: Sat May 12 12:35:33 2012

Stack: openais

Current DC: node1.a.com - partition with quorum

Version: 1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f

2 Nodes configured, 2 expected votes

1 Resources configured.

============

Online: [ node1.a.com node2.a.com ]

Master/Slave Set: MS_mysqldrbd [mysqldrbd]

Masters: [ node1.a.com ]

Slaves: [ node2.a.com ]

The output above shows that the drbd Primary is now node1.a.com and the Secondary is node2.a.com. You can also confirm on node1 that it has become the Primary for the mysql resource:

# drbdadm role mysql

Primary/Secondary

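Next, we have the drbd device mounted automatically on /mysqldata. This mount resource must run on the drbd Master node, and it can only start after drbd has promoted that node to Primary.

Make sure the device is unmounted on both nodes:

# umount /dev/drbd0

The following is still done on node1: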

# crm

crm(live)# configure

crm(live)configure# primitive MysqlFS ocf:heartbeat:Filesystem params device="/dev/drbd0" directory="/mysqldata" fstype="ext3" op start timeout=60s op stop timeout=60s

crm(live)configure# commit

crm(live)configure# exit

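3, Define the MySQL resources (on node1)

First, create an IP address resource for the MySQL cluster. The cluster provides service on this address, and clients use it to reach the MySQL server:

# crm configure primitive myip ocf:heartbeat:IPaddr params ip=192.168.101.200

Configure the mysqld service as a highly available resource: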

# crm configure primitive mysqlserver lsb:mysqld

[root@node1 ~]# crm status

============

Last updated: Sat May 12 12:43:07 2012

Stack: openais

Current DC: node1.a.com - partition with quorum

Version: 1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Online: [ node1.a.com node2.a.com ]

Master/Slave Set: MS_mysqldrbd [mysqldrbd]

Masters: [ node1.a.com ]

Slaves: [ node2.a.com ]

MysqlFS (ocf::heartbeat:Filesystem): Started node1.a.com

myip (ocf::heartbeat:IPaddr): Started node2.a.com

mysqlserver (lsb:mysqld): Started node1.a.com

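4, Configure the resource constraints:

The cluster now has all the resources it needs, but it may not handle them correctly yet. Resource constraints specify which cluster nodes a resource may run on, the order in which resources are started, and which other resources a given resource depends on. Pacemaker provides three kinds of resource constraints:

1) Resource Location: defines on which nodes a resource can, cannot, or preferably should run

2) Resource Colocation: defines whether cluster resources may or may not run together on the same node

3) Resource Order: defines the order in which cluster resources start on a node

When defining constraints you also specify scores. Scores are central to how the cluster works: everything from migrating a resource to deciding which resources to stop in a degraded cluster is done by manipulating scores. Scores are calculated per resource, and a node with a negative score for a resource cannot run it. After computing the scores, the cluster chooses the node with the highest score. INFINITY is currently defined as 1,000,000. Adding and subtracting infinity follows three basic rules:

1) any value + INFINITY = INFINITY

2) any value - INFINITY = -INFINITY

3) INFINITY - INFINITY = -INFINITY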
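The three rules can be modelled in a few lines. This is an illustrative sketch, not Pacemaker's code. `score_add` and `to_num` are hypothetical helpers. -INFINITY is checked first so that it wins over INFINITY (rule 3). Clamping finite sums to +/-1,000,000 is an assumption about how Pacemaker treats overflow.

```shell
INFINITY=1000000

# to_num SCORE: map INFINITY/-INFINITY (or inf/-inf) to numbers.
to_num() {
    case $1 in
        INFINITY|+INFINITY|inf|+inf) echo "$INFINITY" ;;
        -INFINITY|-inf) echo $((-INFINITY)) ;;
        *) echo "$1" ;;
    esac
}

# score_add A B: combine two scores following the rules above.
score_add() {
    local a b s
    a=$(to_num "$1"); b=$(to_num "$2")
    if [ "$a" -le $((-INFINITY)) ] || [ "$b" -le $((-INFINITY)) ]; then
        echo -INFINITY                  # rules 2 and 3: -INFINITY always wins
    elif [ "$a" -ge "$INFINITY" ] || [ "$b" -ge "$INFINITY" ]; then
        echo INFINITY                   # rule 1
    else
        s=$((a + b))
        # Assumption: finite sums are clamped to +/-INFINITY.
        if [ "$s" -ge "$INFINITY" ]; then echo INFINITY
        elif [ "$s" -le $((-INFINITY)) ]; then echo -INFINITY
        else echo "$s"
        fi
    fi
}
```

For example, the resource-stickiness of 100 set earlier plus an `inf:` colocation score gives INFINITY. Any `-inf` score vetoes a node regardless of stickiness.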

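You can also assign a score to each constraint when defining it. Constraints with higher scores are applied before those with lower scores. By creating several location constraints with different scores for the same resource, you can set the order of the nodes it fails over to.

We define the following constraints: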

# crm

crm(live)# configure

crm(live)configure# colocation MysqlFS_with_mysqldrbd inf: MysqlFS MS_mysqldrbd:Master myip mysqlserver

crm(live)configure# order MysqlFS_after_mysqldrbd inf: MS_mysqldrbd:promote MysqlFS:start

crm(live)configure# order myip_after_MysqlFS mandatory: MysqlFS myip

crm(live)configure# order mysqlserver_after_myip mandatory: myip mysqlserver

Check for errors:


crm(live)configure# verify

Commit:

crm(live)configure# commit

crm(live)configure# exit

View the configuration:

[root@node1 ~]# crm configure show

node node1.a.com

node node2.a.com

primitive MysqlFS ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/mysqldata" fstype="ext3" \

op start interval="0" timeout="60s" \

op stop interval="0" timeout="60s"

primitive myip ocf:heartbeat:IPaddr \

params ip="192.168.101.200"

primitive mysqldrbd ocf:heartbeat:drbd \

params drbd_resource="mysql" \

op monitor interval="30s" role="Master" \

op monitor interval="31s" role="Slave" \

op start interval="0" timeout="240s" \

op stop interval="0" timeout="100s"

primitive mysqlserver lsb:mysqld

ms MS_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

colocation MysqlFS_with_mysqldrbd inf: MysqlFS MS_mysqldrbd:Master myip mysqlserver

order MysqlFS_after_mysqldrbd inf: MS_mysqldrbd:promote MysqlFS:start

order myip_after_MysqlFS inf: MysqlFS myip

order mysqlserver_after_myip inf: myip mysqlserver

property $id="cib-bootstrap-options" \

dc-version="1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

Check the crm status:

[root@node1 ~]# crm status

============

Last updated: Sat May 12 12:47:02 2012

Stack: openais

Current DC: node1.a.com - partition with quorum

Version: 1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Online: [ node1.a.com node2.a.com ]

Master/Slave Set: MS_mysqldrbd [mysqldrbd]

Masters: [ node1.a.com ]

Slaves: [ node2.a.com ]

MysqlFS (ocf::heartbeat:Filesystem): Started node1.a.com

myip (ocf::heartbeat:IPaddr): Started node1.a.com

mysqlserver (lsb:mysqld): Started node1.a.com
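The colocation constraint should keep MysqlFS, myip and mysqlserver together. The sketch below checks this from saved `crm status` output. It assumes the one-line `NAME (agent): Started NODE` format shown above, and `resource_node` and `all_on_same_node` are hypothetical helper names.

```shell
# resource_node NAME [FILE]: print the node a primitive is "Started" on.
resource_node() {
    awk -v r="$1" '$1 == r && /Started/ { print $NF; found = 1; exit }
                   END { exit !found }' "${2:-/dev/stdin}"
}

# all_on_same_node FILE NAME...: print the common node and succeed only if
# every named resource is started on that same node.
all_on_same_node() {
    local file=$1 first= node name
    shift
    for name in "$@"; do
        node=$(resource_node "$name" "$file") || return 1
        [ -n "$first" ] || first=$node
        [ "$node" = "$first" ] || return 1
    done
    echo "$first"
}
```

Usage: `crm status > /tmp/st && all_on_same_node /tmp/st MysqlFS myip mysqlserver`. It should print node1.a.com here, and node2.a.com after the standby test below.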

As shown, the services are now running normally on node1.

On node1, check the MySQL status:

# service mysqld status

MySQL running (5345) [ OK ]

Check whether the device was mounted automatically:

[root@node1 corosync]# mount

/dev/hdc on /media/RHEL_5.4 i386 DVD type iso9660 (ro,noexec,nosuid,nodev,uid=0)

/dev/hdc on /mnt/cdrom type iso9660 (ro)

/dev/drbd0 on /mysqldata type ext3 (rw)

Check the directory:

[root@node1 ~]# ls /mysqldata/

data f1 f2 lost+found

Check the VIP:

[root@node1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:73:46:87

inet addr:192.168.101.250 Bcast:192.168.101.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe73:4687/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:126339 errors:0 dropped:0 overruns:0 frame:0

TX packets:787206 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:13314481 (12.6 MiB) TX bytes:1144350819 (1.0 GiB)

Interrupt:67 Base address:0x2000

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:73:46:87

inet addr:192.168.101.200 Bcast:192.168.101.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

Interrupt:67 Base address:0x2000

Continue testing:

On node1, put node1 into standby (take it offline):

# crm node standby

Check the cluster status:

[root@node1 ~]# crm status

============

Last updated: Sat May 12 12:49:56 2012

Stack: openais

Current DC: node1.a.com - partition with quorum

Version: 1.1.5-1.1.el5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Node node1.a.com: standby

Online: [ node2.a.com ]

Master/Slave Set: MS_mysqldrbd [mysqldrbd]

Masters: [ node2.a.com ]    (the master is now node2)

Stopped: [ mysqldrbd:0 ]

MysqlFS (ocf::heartbeat:Filesystem): Started node2.a.com

myip (ocf::heartbeat:IPaddr): Started node2.a.com

As shown, all the resources have switched over to node2.

Check the status on node2:

# service mysqld status

MySQL running (7585) [ OK ]

Check the directory:

[root@node2 ~]# ls /mysqldata/

data f1 f2 lost+found

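OK, everything is working. Now verify that the MySQL service can be accessed normally.

Clients reach MySQL through the VIP 192.168.101.200. On node2, create an account that hosts in a given network range can use (the change is written to the drbd device, so it is replicated to node1 as well):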

Node2:


[root@node2 ~]# /usr/local/mysql/bin/mysql -u root

mysql> grant all on *.* to test@'192.168.%.%' identified by '123456';

Query OK, 0 rows affected (0.08 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)
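In the host part of a MySQL account, `%` matches any sequence of characters and `_` matches exactly one. So `test@'192.168.%.%'` accepts connections from any 192.168.x.y address, including both nodes. The sketch below models that matching with a shell `case` pattern. `host_matches` is a hypothetical helper, and it ignores MySQL's rule that more specific host entries are checked first.

```shell
# host_matches PATTERN HOST: succeed if HOST matches a MySQL account host
# pattern, translating % -> * and _ -> ? into a shell glob.
host_matches() {
    local glob
    glob=$(printf '%s' "$1" | sed 's/%/*/g; s/_/?/g')
    # An unquoted variable in a case pattern is matched as a glob.
    case $2 in
        $glob) return 0 ;;
    esac
    return 1
}
```

Because more specific entries win, the anonymous accounts that mysql_install_db creates for localhost and the install host's name can match before `test@'192.168.%.%'`. Logging in with the test account's password is the reliable way to confirm the grant.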

Run the following test from node1 (the test account's password is 123456; -p prompts for it):

[root@node1 ~]# mysql -u test -p -h 192.168.101.200

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 13

Server version: 5.5.15-log MySQL Community Server (GPL)

Copyright (c) 2000, 2010, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql>