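- RabbitMQ in Practice (2): Message Persistence Strategies, Transactions, and Confirm-Based Acknowledgement

Java思享汇

RabbitMQ study, RabbitMQ, message persistence, transactions, confirm, ack

RabbitMQ study list: RabbitMQ in Practice (1): Basic Concepts of Message Communication. Having covered the basic concepts of RabbitMQ communication in the previous post, we continue with message persistence and a code implementation of RabbitMQ communication. In a normal production environment, restarting the RabbitMQ server cannot be avoided. So if RabbitMQ restarts, those queues and exchanges will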

- Zookeeper性能优化与调优技巧精讲

AI天才研究院

AI大模型企业级应用开发实战DeepSeekR1&大数据AI人工智能大模型计算科学神经计算深度学习神经网络大数据人工智能大型语言模型AIAGILLMJavaPython架构设计AgentRPA

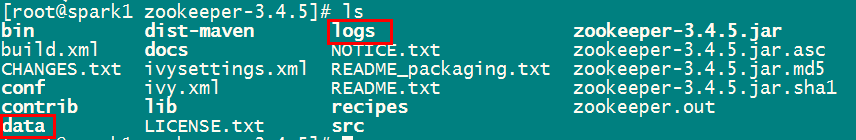

Zookeeper性能优化与调优技巧精讲1.背景介绍1.1什么是Zookeeper?ApacheZooKeeper是一个开源的分布式协调服务,为分布式应用程序提供高可用性和强一致性的协调服务。它主要用于解决分布式环境中的数据管理问题,如统一命名服务、配置管理、分布式锁、集群管理等。ZooKeeper的设计目标是构建一个简单且高效的核心,以确保最大程度的可靠性和可扩展性。1.2Zookeeper的应

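- A Networking Problem Between VMware and an Ubuntu 18 Virtual Machine

海洋猿

ubuntu, linux, operations, networking

Here I record a problem I ran into recently. I had set up a ZooKeeper cluster on virtual machines using bridged networking. Because my host connects through a phone hotspot, bridged mode probably caused the subnet of my assigned IP to change. After some searching on Baidu, I decided to switch to NAT mode. This is the difference between the two. Put simply, I want the virtual machines' IPs to go back to what they were, so that I do not have to modify a pile of ZooKeeper configuration again or worry about the host and the virtual machines being on different subnets. 1. Bridged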

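- Getting Started with Spark ML: A Plain-Language Explanation of Machine Learning Frameworks and Algorithms

Tometor

spark-ml, machine learning, algorithms, regression, data mining, artificial intelligence, scala

1. The overall framework of machine learning (a cooking analogy). Suppose you want to cook a dish; the machine learning process maps onto it as follows. Step | Cooking analogy | Machine learning counterpart. 1. Set the goal | Decide which dish to make (braised pork / salad) | Define the task (classification / regression / clustering). 2. Prepare ingredients | Buy, wash, and chop vegetables | Data collection and preprocessing. 3. Design the recipe | Decide the cooking steps and seasoning | Choose the algorithm and design the model. 4. Trial cook and taste | Adjust heat and seasoning | Model training and hyperparameter tuning. 5. Final product | The dish served at the table | Model deployment and application. 2. The core machine learning workflow. 1.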

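- Zookeeper (103): How to Use the Zookeeper Command-Line Tool in Production?

辞暮尔尔-烟火年年

microservices, zookeeper, distributed systems, cloud native

Using ZooKeeper's command-line tool in production is an important way to manage and debug a ZooKeeper cluster. ZooKeeper ships a command-line tool named zkCli.sh that can perform operations such as creating nodes, reading data, and setting ACLs. Below are detailed steps and code examples showing how to use it. Connecting to ZooKeeper: first, make sure ZooKeeper is installed and the ZooKeeper service is running. You can use Zoo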

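- Zookeeper (102): How to Set Zookeeper ACLs in Production?

辞暮尔尔-烟火年年

microservices, zookeeper, debian, distributed systems

Setting ZooKeeper access control lists (ACLs) in production is a key step in securing data and controlling access. An ACL defines who may access a ZooKeeper node and which operations they may perform. Below are detailed steps and code examples for setting ZooKeeper ACLs. ACL basics: ZooKeeper supports the following permissions. CREATE: allows creating child nodes. READ: allows reading node data and listing child nodes. WRITE: allows setting node data. DELETE: allows deleting child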

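- Installing zookeeper, kafka, and flink with Local Docker

a724952091

flink, kafka, docker

First install ZooKeeper. ZooKeeper is installed here only so that Kafka can be used. We use the wurstmeister Kafka and ZooKeeper images, which are also the most-starred Kafka images on hub.docker.com. Run the run command directly in cmd (assuming Docker is installed locally). On first use the image is downloaded because it is not yet available locally. docker run -d --name zookeeper -p 2181 -t wurstme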

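- Deploying a Pseudo-Distributed Kafka Cluster with Docker-compose (Base Environment for Later Experiments)

F_Hello_World

Kafka, kafka, docker

This experiment follows the official documentation at http://kafka.apache.org/documentation/ and prepares the environment for later work with Kafka applications. Environment: macOS 10.15, docker 19.03.4, docker-compose version 1.24.1, JDK 1.8 or later (Kafka 2.x and later have dropped support for JDK 1.7), zookeeper-3.4.14 (an external zk is used here rather than the one bundled with Kafka; the zk here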

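- The Road to Big Data Interviews (3): mysql

愿与狸花过一生

big data, interviews, career and development

Technology selection is another frequently asked topic. It tests both how well the candidate knows the technology and how well they understand and can summarize the project. Introducing a project by walking through its data pipeline works well, because it shows clear thinking and a thorough grasp of the project. Data processed by Spark SQL is usually written to MySQL for the following key reasons. 1. Fit for the data's use cases. Division of labor between OLTP and OLAP: Spark SQL excels at OLAP (analytical) tasks over large data volumes, while MySQL, as an OLTP (transactional) database,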

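- How to Use SparkLLM for Natural Language Processing

shuoac

python

In contemporary natural language processing, models with strong cross-domain knowledge and language understanding are essential. SparkLLM, developed by iFLYTEK, is one such large-scale cognitive model. By learning from large amounts of text, code, and images, SparkLLM can understand and carry out tasks expressed in natural dialogue. In this article we look at how to configure and use SparkLLM for natural language tasks. Technical background: large language models (LLMs) have been widely adopted across many fields in recent years and perform well on natural language tasks. iF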

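- RDD Action Operators

阿强77

RDD, Spark

In Apache Spark, the RDD (Resilient Distributed Dataset) is one of the core data structures. Action operators trigger the actual computation and either return a result or perform some operation. Common RDD action operators in Scala: 1. collect() gathers all data in the RDD to the driver and returns an array. Note: if the dataset is large, this can cause an out-of-memory error. val data: Array[T] = rdd.collect() 2. count() returns the total number of elements in the RDD. val count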

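- Integrating Kafka with Spring Boot: A Java Application That Receives Kafka Messages

YazIdris

java, spring boot, kafka

Kafka is a distributed stream-processing platform characterized by high throughput, scalability, and fault tolerance. Spring Boot offers a convenient way to integrate with Kafka, so developers can easily build applications that receive Kafka messages. This article explains how to integrate Kafka with Spring Boot and how to write Java code that receives Kafka messages. First, make sure Kafka and ZooKeeper are installed and running. Next, create a new Spring Boot project and add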

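- Explaining the Spark Shuffle Process

冰火同学

Spark, spark, big data, distributed systems

The shuffle is at the core of Spark's distributed cluster computation. It can be explained from three angles: how the shuffle is divided into stages, how shuffle data is stored, and how shuffle data is fetched. The shuffle is divided into a shuffle write stage and a shuffle read stage. Shuffle write is triggered once the upstream stage's ShuffleMapTask finishes its computation; it then, according to the downstream S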

- Common Spark Interview Questions (1)

冰火同学

Spark, spark, interviews, big data

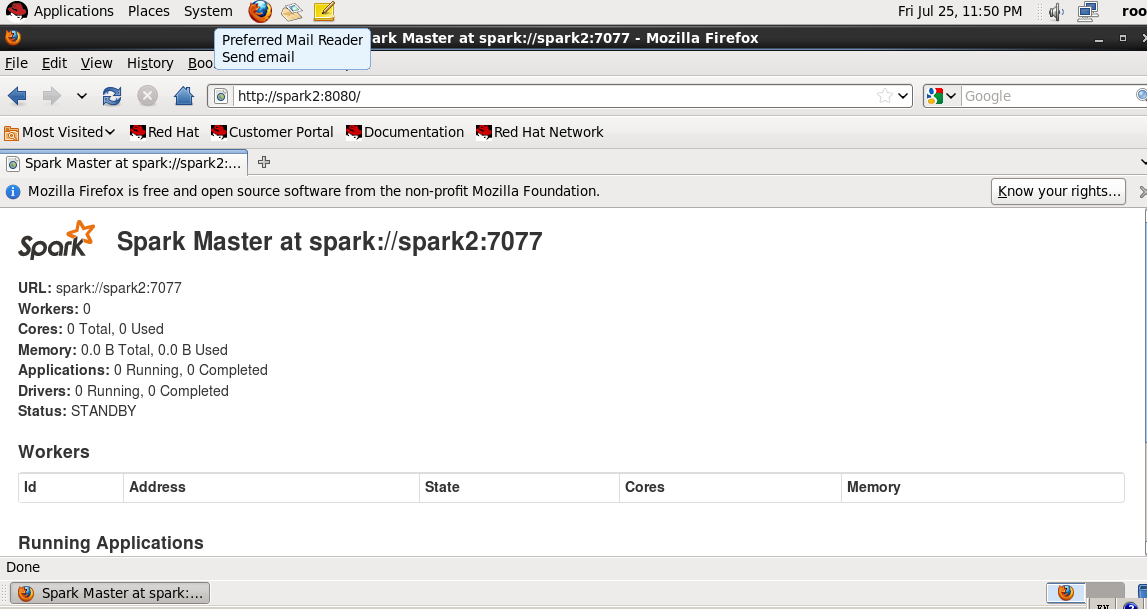

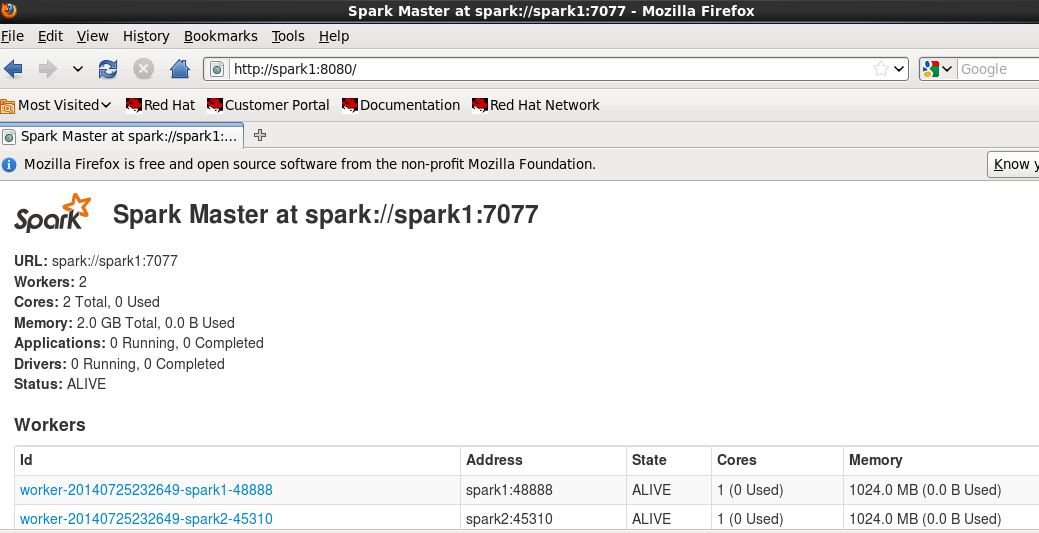

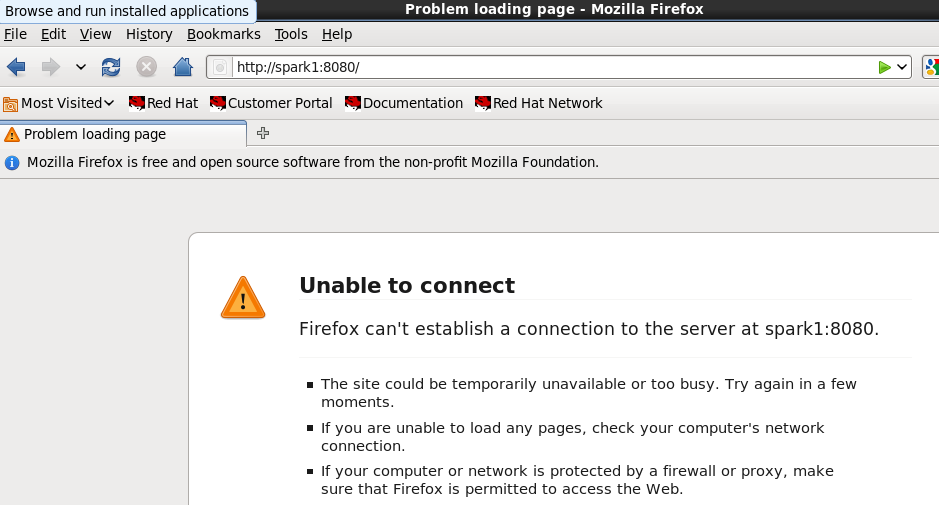

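What deployment modes does Spark have, and what characterizes each? The first is local mode, usually a single machine used for testing. The second is standalone mode, in which one master node controls several worker nodes; on a single machine, standalone mode means the machine is both master and worker. The third is YARN mode, which hands Spark over to YARN for resource scheduling and management. The fourth is Mesos mode, which is rarely seen in China. Spark primary/standby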

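- The Spark Data Skew Problem

冰火同学

Spark, spark, big data, distributed systems

Spark data skew. Business background. Symptoms of Spark data skew: data skew in Spark, including Spark Streaming and Spark SQL, mainly shows up as follows. 1. Executor lost, OOM, or errors during the shuffle. 2. Driver OOM. 3. A single executor keeps running and the whole job is stuck at one stage and cannot finish. 4. A job that was running normally suddenly fails. Causes of data skew: taking Spark usage scenarios as an example, our data computations involve operations like coun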

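- Using PySpark to Export a Difference Analysis of Corresponding Values in Two S3 Directories That Each Contain Multiple Parquet Data Files

weixin_30777913

python, spark, data analysis, cloud computing

Write PySpark code that takes two dimension fields and one measure field from a dataframe loaded from an Amazon S3 directory containing multiple Parquet data files, groups by the two dimension fields, and computes the grouped total of the measure field, producing a dataframe with the two dimension fields and the grouped total of the measure. Then, from a dataframe loaded from another S3 directory containing multiple Parquet data files, take two dimension fields and one measure field to form a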

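- Building a Distributed Hive Cluster

逸曦玥泱

big data, operations, distributed systems, hive, hadoop

title: Building a Distributed Hive Cluster date: 2024-11-29 23:39:00 categories: - Servers tags: - Hive - Big data. Building a distributed Hive cluster. Environment for this experiment: CentOS 7-2009, Hadoop-3.1.4, JDK8, Zookeeper-3.6.3, Mysql-5.7.38, Hive-3.1.2. Functional plan, option 1 (local run mode): Master node (Mysql + Hive) 192.168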

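- The Detailed Relationship Between Hadoop, Spark, and Hive

夜行容忍

hadoop, spark, hive

The detailed relationship between Hadoop, Spark, and Hive. 1. Apache Hadoop. Hadoop is an open-source framework for distributed storage and processing of large datasets. Core components: HDFS (Hadoop Distributed File System), a distributed file system that provides high-throughput data access. YARN (Yet Another Resource Negotiator), the cluster resource management and job scheduling system. MapReduce, a parallel processing framework built on YARN, used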

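- The Big Data Technology Ecosystem: Differences and Relationships Between Hadoop, Hive, and Spark

雨中徜徉的思绪漫溢

big data, hadoop, hive

The big data technology ecosystem: differences and relationships between Hadoop, Hive, and Spark. In the big data field, Hadoop, Hive, and Spark are three commonly used open-source technologies that play important roles in big data processing and analysis. Although all three are designed to handle large datasets, they differ in functionality and in how they are used. This article describes the differences and relationships between Hadoop, Hive, and Spark in detail and provides source code examples. Hadoop: Hadoop is a framework for distributed storage and processing of large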

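- The Road to Big Data Interviews (1): Data Skew

愿与狸花过一生

big data, interviews, career and development

Notes from my big data interviews. Data skew: for big data positions, data skew is a question that always comes up. 1. Symptoms and causes of data skew. Symptoms: one or a few tasks take far too long while the other tasks finish quickly. A Spark/MapReduce job is stuck at one stage (such as the reduce stage), and the logs show a few tasks processing large amounts of data. Resource utilization is uneven (for example, CPU and memory load concentrated on certain nodes). Common scenarios: uneven key distribution, where some keys map to very large amounts of data (for example, records with an empty user ID, or hot events). Data partitioning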

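- ZooKeeper Programmer's Guide

weixin_30326741

java, operations, shell

1. Introduction. This document is for developers who want to build distributed applications that take advantage of ZooKeeper's coordination services. It contains both conceptual and practical information. The first four sections of this guide give a high-level discussion of various ZooKeeper concepts. These concepts are necessary both for understanding how ZooKeeper works and for knowing how to work with it. These sections contain no code, but they do assume familiarity with the problems of distributed computing. Most of the information in this document is written to be usable as standalone reference material. However, before writing your first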

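- ZooKeeper Study Summary (1): An Introduction to ZooKeeper

一杯甜酒

ZooKeeper study summary, Zookeeper

1. Overview. ZooKeeper is a subproject of Hadoop. It is the coordination system in a distributed system, and the services it provides mainly include configuration service, naming service, distributed synchronization, and group services. Its characteristics are as follows. Simple: at its core ZooKeeper is a stripped-down file system that supports a few simple operations and some abstractions such as ordering and notifications. Rich: ZooKeeper's primitives are rich enough to build coordination data structures and protocols, for example distributed queues, distributed locks, and "leader election" among a group of peer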

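- Zookeeper + kafka Study Notes

CHR_YTU

Zookeeper

ZooKeeper is an Apache Java project that belongs to the Hadoop ecosystem and plays the role of administrator. Configuration management: a distributed system has many machines. For example, when I set up Hadoop's HDFS, I had to prepare the various configuration files HDFS needs on one main machine (the Master node) and then copy them to the other nodes with scp, so that every machine had the same configuration and the HDFS service could start successfully. ZooKeeper provides exactly this kind of service: a cen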

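- Zookeeper [Concepts (From Centralized to Distributed, What Is Distributed, CAP Theorem, What Is Zookeeper, Use Cases, Why Choose Zookeeper, Basic Concepts)] (1): A Complete Guide (Study Summary, From Beginner to Advanced)

童小纯

complete middleware guide, zookeeper, distributed systems

About the author: Hi everyone, I'm Xiao Tong, a Java developer, CSDN blogger, and rising-star creator in the Java field. Column series: front end, Java, complete Java middleware guide, WeChat mini programs, WeChat Pay, the RuoYi framework, the Spring family. If any point in the article is wrong, please correct me! Let's learn and improve together. If you like the article, please like, favorite, and follow. The author is working hard on the 2023 plan: ride the dream like a horse and set sail, 2023 dream chaser. Contents: Zookeeper concepts: from centralized to distrib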

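- Zookeeper and Kafka Study Notes

上海研博数据

zookeeper, kafka, study

1. ZooKeeper key points. 1. Core features: a distributed coordination service for maintaining metadata such as configuration, naming, and synchronization. It uses a hierarchical data model (a tree of znodes), and each node can store less than 1 MB of data. Typical use cases: Hadoop NameNode high availability, HBase metadata management, Kafka cluster election and state management. 2. Design limitations: in-memory storage, not suited to large data volumes. Data changes are controlled by a version number (Version), which implements optimistic locking. The ZAB protocol guarantees data consistency. 2. Kafka core architecture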

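- Learning Zookeeper

种豆走天下

zookeeper, study, distributed systems

ZooKeeper is an open-source distributed coordination framework, mainly used to handle common problems in distributed systems such as synchronization, configuration management, naming, and cluster management. ZooKeeper is provided by Apache and is widely used in distributed applications, especially in systems that require high availability, high reliability, and high performance. Main functions of ZooKeeper. Distributed coordination: ZooKeeper provides mechanisms for coordinating the behavior of multiple nodes (servers), for example distributed locks, elections, and configuration management. Naming service: Zookee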

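- A Practical Guide to Zookeeper

Kale又菜又爱玩

zookeeper, distributed systems, java

A practical guide to ZooKeeper. 1. What is ZooKeeper? ZooKeeper is an open-source distributed coordination framework under Apache, mainly used to solve consistency problems in distributed systems and to provide efficient, reliable distributed data management. 1.1 Core features of ZooKeeper. Sequential consistency: update requests from a client are applied in order. Atomicity: an update either succeeds or fails, with no intermediate state. Reliability: once data is written to ZooKeeper it is not lost unless it is deleted explicitly. High availability: uses a leader-follower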

- zookeeper and kafka Cluster Configuration

zhangpeng455547940

computers, linux, java, operations

Basic configuration. Change the IP: vi /etc/sysconfig/network-scripts/ifcfg-ens33 BOOTPROTO=static ONBOOT=yes IPADDR=192.168.139.133 NETMASK=255.255.255.0 GATEWAY=192.168.139.2 DNS1=192.168.1.1 Change the hostname: hostnamectl set-hostname Passwordless SSH login: vi /etc/

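- Importing Complex Data Sources and Handling Garbled Delimiters in scala

Tometor

scala, javascript, backend, java, data structures

Complex data sources and odd data formats are common problems in production. This article looks at how to parse data with inconsistent delimiters and how to import various kinds of data source files. 1. Handling non-standard delimiters. When a data source's delimiters are inconsistent (for example a mix of commas, |, and \t), the following approaches can be used. 1.1 Detecting the delimiter dynamically. // Example: detect the common delimiter automatically from the first 100 lines val sampleLines = spark.read.text("data.csv").limit(100).collect() val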

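- ClickHouse Keeper Source Code Analysis

阿里云云栖号

Yunqi technical sharing, java, programming languages, backend

Summary: the ClickHouse community introduced ClickHouse Keeper in version 21.8. ClickHouse Keeper is a distributed coordination service that is fully compatible with the ZooKeeper protocol. This article analyzes the source code of the open-source release ClickHouse v21.8.10.19-lts. About the author: Fan Zhen (alias Chenfan) leads the OLAP track of Alibaba Cloud's open-source big data team. Outline: background, architecture diagram, core flow chart, walkthrough of the internal code flow, walkthrough of NuRaft, key configuration, pitfalls, conclusion, about us, R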

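- The Java Concurrency Package: Thread Pools and Atomic Counters

lijingyao8206

Java, counting, ThreadPool, concurrency package, java thread pool

For business logic that processes large volumes of related data, the most direct idea is to use the concurrency package provided by the JDK to process the data across multiple threads. A thread pool offers a good way to manage threads and improves thread utilization, and the atomic counters in the concurrency package spare us from writing synchronization code in multithreaded scenarios.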
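As a rough illustration of the combination described above, the following sketch submits jobs to a `ThreadPoolExecutor` and counts completed jobs with an `AtomicInteger`, without any `synchronized` block. The class name `PoolCounterSketch`, the method `countWithPool`, the pool size of 4, and the 30-second timeout are assumptions made for this example and do not come from the article.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class; names and sizes are illustrative assumptions.
class PoolCounterSketch {

    // Runs `tasks` jobs on a fixed-size pool; each job increments a shared atomic counter.
    static int countWithPool(int tasks) {
        AtomicInteger processed = new AtomicInteger();
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                4, 4,                                        // core and maximum pool size
                0L, TimeUnit.MILLISECONDS,                   // keep-alive for idle extra threads
                new ArrayBlockingQueue<>(Math.max(tasks, 1))); // bounded queue large enough for all jobs
        for (int i = 0; i < tasks; i++) {
            // incrementAndGet is atomic, so no explicit locking is needed here
            pool.execute(processed::incrementAndGet);
        }
        pool.shutdown(); // stop accepting new jobs, let queued ones finish
        try {
            if (!pool.awaitTermination(30, TimeUnit.SECONDS)) {
                throw new IllegalStateException("pool did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for the pool", e);
        }
        return processed.get();
    }

    public static void main(String[] args) {
        System.out.println(countWithPool(1000)); // prints 1000
    }
}
```

The queue is sized to hold every job so that `execute` never rejects a task in this sketch; a real workload would need a rejection policy chosen for its own needs.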

Here we first take the JDK concurrency package's thread pool executor ThreadPoolExecutor, the atomic counter class AtomicInteger, and the countdown latch C

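- Thinking in Java: Abstract Classes and Interfaces

百合不是茶

java, abstract class, interface

Interfaces: C++ offers only indirect support for interfaces and inner classes, whereas Java supports them directly.

1. Abstract class: if a class contains one or more abstract methods, the class must be declared abstract (otherwise the compiler reports an error).

Abstract method: a method that has only a declaration and no method body.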

package com.wj.Interface;

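- [Real Estate and Big Data] A Real Estate Data Mining System

comsci

data mining

With a breakthrough in one key core technology, we are now developing some advanced modules independently, but fully implementing them will still take some time...

So, besides working on code, we also need to pay attention to what happens outside the technical field, for example real estate.
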

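- A Summary of Array Queues

沐刃青蛟

array queue

An array queue is an array-like utility whose size can change and whose element type is not fixed. Compared with an array it is more flexible: there is no need to worry about going out of bounds, and thanks to generics (similar to templates in C++) it supports any type.

The following code implements the array queue's functionality: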

import List.Student;

public class

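- Fixing Oracle Stored Procedures That Fail to Compile

IT独行者

oracle, stored procedure

Today a colleague modified an Oracle stored procedure and again left two procedures unable to compile. Dave will not dwell on process and standards here; let's look at how to fix the problem.

1. Check for invalid objects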

XEZF@xezf(qs-xezf-db1)> select object_name,object_type,status from all_objects where status='IN

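- Recovering oracle After Reinstalling the Operating System

文强chu

oracle

A few days ago I was using my computer and had not stopped the various oracle services.

Suddenly Windows 8.1 crashed and could not be repaired. At boot the system reported that it was collecting error information, then rebooted and reported the same thing again, in an endless loop.

With no other choice I took out my system USB drive to reinstall, but to my surprise the machine would not boot from it.

That evening I found a computer repair shop and had them install a system, and before I could react the guy had

formatted my C drive and cleaned the registry before installing.

The result was that my oracl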

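- Learning python (2): Some Basic Syntax

小桔子

python, basic syntax

Picking up where I left off! Yesterday I did not continue with the official django tutorial; instead I learned some basic python syntax, which differs slightly from C-like languages:

1. python source files are encoded in UTF-8

2.

/ division; the result is a float

// floor division; the result is an integer

% modulo; yields the remainder

* multiplication

** exponentiation, e.g. 5**2 is 5 to the power of 2, which is 25
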

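- Common svn Commands

aichenglong

SVN, version rollback

1 Rolling back a version in svn

1) On Windows, choose log, then, depending on what you want to roll back, choose revert this version or revert changes from this version

The difference between the two:

revert this version: rolls back to the selected version (all versions after it are discarded)

revert changes from this versio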

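- Back from an Interview at a Small Company

alafqq

interviews

First a form to fill in, then a written test. I said I was short on time and refused. Time is precious.

They keep using things meant for fresh graduates to intimidate me.

The interviewer was difficult and asked several questions, recorded here:

1. Access scope: public, private, protected. -- That went badly.

2. The difference between the hashCode method and the equals method, and which one overrides which. In the end he said I had it backwards.

3. The most annoying question: can an abstract class extend an abstract class? (Come on, it is usually the one being extended.)

4. stru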

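- Comparing the Storage Speed of Dynamic Arrays: The Collections Framework

百合不是茶

collections framework

Collections framework:

Custom data structures (add, delete, update, query, and so on)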

package 数组;

/**

* Creates a dynamic array

* @author 百合

*

*/

public class ArrayDemo{

// Define an array to hold the data

String[] src = new String[0];

/**

* Adds an element to the container

* @param s the element to add to the container

- Implementing a JS Object with Two Properties and One Method

bijian1013

js, object

<html>

<head>

</head>

<body>

Implement a js object in js code, with two properties and one method

</body>

<script>

var obj={a:'1234567',b:'bbbbbbbbbb',c:function(x){

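- Exploring JUnit4 Extensions: Using Rule

bijian1013

java, unit testing, JUnit, Rule

The previous article discussed extending JUnit4 through a Runner, that is, by directly modifying the Test Runner implementation (BlockJUnit4ClassRunner). That approach clearly makes it hard to add or remove extensions flexibly. Below we use Rule, the extension mechanism introduced in JUnit 4.7, to implement the same extension.

1. Rule
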

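- [Gson 1] Deserializing Non-Generic POJO Objects

bit1129

POJO

To deserialize a JSON string into a POJO that is not itself generic, use the Gson.fromJson(String, Class) method. POJOs that are not themselves generic come in two kinds:

1. The POJO contains no generic fields

2. The POJO contains generic fields, such as generic collections or generic classes

The Data class: a. is not a generic class, b. the List and Map collections in Data are generic, c. Data contains no other POJOs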

- [Kafka 5] Basic Usage of the Kafka Producer and Consumer

bit1129

kafka

0. Kafka server configuration

One Broker,

one Topic

The Topic has only one Partition() 1. Producer:

package kafka.examples.producers;

import kafka.producer.KeyedMessage;

import kafka.javaapi.producer.Producer;

impor

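- A Guide to Setting Up Real-Time Sync with lsyncd: Replacing rsync+inotify

ronin47

1. Comparing several real-time sync tools 1.1 inotify + rsync

I have been looking for an alternative sync solution for our production servers. We originally used inotify + rsync, but as the number of files grew past 1,000,000, the file list for the directory alone reached 20 MB. On a poor or rate-limited network, a change might touch only about 10 files totaling a few MB, yet a 20 MB file list still has to be sent, which seriously reduces bandwidth efficiency and sync efficiency. More importantly, adding inotify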

- java-9. Determine Whether an Integer Sequence Is the Post-Order Traversal of a Binary Search Tree

bylijinnan

java

public class IsBinTreePostTraverse{

static boolean isBSTPostOrder(int[] a){

if(a==null){

return false;

}

/*1. With only one node, it is always a search tree

*2. With only two nodes, it is always a search tree. For example, the BST for {5,6} is 6 the BST for {6,5} is

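- The Return Type of MySQL's sum Function

bylijinnan

java, spring, sql, mysql, jdbc

Today an error occurred when the project switched databases.

The database access code looks roughly like this: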

String sql = "select sum(number) as sumNumberOfOneDay from tableName";

List<Map> rows = getJdbcTemplate().queryForList(sql);

for (Map row : rows

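- Java Design Patterns: The Singleton Pattern

chicony

java, design patterns

Dr. Yan Hong's book "Java and Patterns" opens its description of the singleton pattern as follows:

As a creational pattern, the singleton pattern ensures that a class has only one instance, and that the class instantiates itself and provides this instance to the whole system. Such a class is called a singleton class. Structure of the singleton pattern

Characteristics of the singleton pattern:

A singleton class can have only one instance.

A singleton class must create its own unique instance.

A singleton class must provide this instance to all other objects.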
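The three characteristics above can be sketched as a minimal eager singleton. This is an illustrative sketch and is not the code from the book or the article; the class name `EagerSingleton` and the accessor `getInstance` are names chosen for this example.

```java
// Hypothetical example class; not taken from the original article.
final class EagerSingleton {

    // Exactly one instance, created by the class itself when the class is initialized.
    // JVM class initialization is thread-safe, so no extra locking is needed.
    private static final EagerSingleton INSTANCE = new EagerSingleton();

    // A private constructor prevents any other class from creating instances.
    private EagerSingleton() {
    }

    // The single access point through which other objects obtain the instance.
    static EagerSingleton getInstance() {
        return INSTANCE;
    }

    public static void main(String[] args) {
        // Both calls return the same object, so this prints true.
        System.out.println(EagerSingleton.getInstance() == EagerSingleton.getInstance());
    }
}
```

The trade-off of the eager form is that the instance is built even if it is never used; lazy variants defer creation at the cost of extra care around thread safety.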

Eager singleton class

publ

- Getting the Last Day of the Current Month in javascript

ctrain

JavaScript

<!-- Get the last day of the current month in javascript -->

<script language=javascript>

var current = new Date();

var year = current.getYear();

var month = current.getMonth();

showMonthLastDay(year, mont

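- The linux tune2fs Command Explained

daizj

linux, tune2fs, viewing file system block information

1. Introduction:

tune2fs adjusts and displays the file system parameters of ext2/ext3 file systems. On Windows, after an unexpected power loss or crash, the system usually runs a self-check at the next boot. Linux also has a file system self-check, and the tune2fs command lets you define the check interval and behavior yourself.

2. Usage: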

Usage: tune2fs [-c max_mounts_count] [-e errors_behavior] [-g grou

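- Being a Programmer with Chinese Characteristics

dcj3sjt126com

programmers

Starting with publishing: online works that rank near the top are never too bad. When someone dislikes a particular work it is usually because they dislike the genre, and that person will not dislike all online works. The reason is that online works are all free to read first, and only the good ones rise to the top. Printed works are a different matter: the top of the charts holds good works and junk alike. Many experts started with a blog and later published a book. These books are not bad either. Some people may think they are poor because a technical book is not like a novel: with a novel you read a story, while with a technical book you learn or review knowledge, and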

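- Android: A Complete List of TextView Attributes

dcj3sjt126com

textview

android:autoLink controls whether text that is a URL, email address, phone number, or map address is displayed as a clickable link. Possible values: none/web/email/phone/map/all. android:autoText, if set, automatically corrects spelling in the input. It has no effect here; when the input method is shown and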

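- Installing and Configuring tomcat Virtual Directories

eksliang

tomcat configuration notes, deploying web applications on tomcat, tomcat virtual directory installation

When reposting, please cite the source: http://eksliang.iteye.com/blog/2097184

1.-------------------------------------------tomcat directory structure

conf: stores tomcat's configuration files

temp: stores temporary files created while tomcat is running

work: when a jsp in an application is accessed for the first time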

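- A Brief Look: Which APP Security Vulnerabilities Do Hackers Commonly Exploit?

gg163

APP

First of all, APP security vulnerabilities should be familiar to every programmer. Leaving aside the issues that come from Android itself being open source, they are mainly caused by oversights or sloppy code during development. The blame cannot fall entirely on programmers, though; sometimes there are objective reasons, such as the boss pushing a tight schedule. Here the CTO of ineice (ineice.com), a Chinese mobile application security testing team, briefly discusses the open-source design of the Android system and its ecosystem.

1. Application decompilation vulnerability: APK packages are very easy to decompile into readable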

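- Generating a Static Page from a URL in C#

hvt

Web, .net, C#, asp.net, hovertree

In the HoverTree open-source project, the Index.aspx file of HoverTreeWeb.HVTPanel is the home page of the admin back end. It includes features such as generating the guestbook home page, showing the user name, and logging out. The method that generates a page from a URL: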

bool CreateHtmlFile(string url, string path)

{

//http://keleyi.com/a/bjae/3d10wfax.htm

stri

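- SVG Tutorial (1)

天梯梦

svg

Introduction to SVG

SVG is a language that uses XML to describe two-dimensional graphics and drawing programs. What you should know before you start:

Before you continue, you should have a basic understanding of the following:

HTML

XML basics

If you want to study these first, choose the corresponding tutorials on this site's home page. What is SVG?

SVG stands for Scalable Vector Graphics

SVG is used to define vector-based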

- A Simple java Stack

luyulong

java, data structures, stack

public class MyStack {

private long[] arr;

private int top;

public MyStack() {

arr = new long[10];

top = -1;

}

public MyStack(int maxsize) {

arr = new long[maxsize];

top

- Basic Data Structures and Algorithms (8): Binary search

sunwinner

Algorithm, Binary search

Binary search needs an ordered array so that it can use array indexing to dramatically reduce the number of compares required for each search, using the classic and venerable binary search algori
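As a minimal sketch of the idea in the excerpt, the following method uses array indexing on a sorted `int[]` to halve the search range on every compare. It is a generic textbook formulation, not the code from the linked article; the names `BinarySearchSketch` and `indexOf` are assumptions made for this example.

```java
// Hypothetical example class; a generic formulation, not the article's code.
class BinarySearchSketch {

    // Returns the index of `key` in `sorted` (which must be in ascending order), or -1 if absent.
    static int indexOf(int[] sorted, int key) {
        int lo = 0;
        int hi = sorted.length - 1;
        while (lo <= hi) {
            // Written as lo + (hi - lo) / 2 to avoid int overflow for very large arrays.
            int mid = lo + (hi - lo) / 2;
            if (key < sorted[mid]) {
                hi = mid - 1;   // key can only be in the left half
            } else if (key > sorted[mid]) {
                lo = mid + 1;   // key can only be in the right half
            } else {
                return mid;     // found
            }
        }
        return -1; // range is empty, key is not present
    }

    public static void main(String[] args) {
        int[] data = {1, 3, 5, 7, 9, 11};
        System.out.println(indexOf(data, 7)); // prints 3
        System.out.println(indexOf(data, 4)); // prints -1
    }
}
```

Each iteration discards half of the remaining range, so a search over n elements needs on the order of log2(n) compares.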

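- 12 C Interview Questions Covering Pointers, Processes, Arithmetic, Structs, Functions, and Memory: How Many Can You Solve?

刘星宇

c, interviews

12 C interview questions covering pointers, processes, arithmetic, structs, functions, and memory: how many can you solve?

1. The gets() function

Q: Find the problem in the code below: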

#include<stdio.h>

int main(void)

{

char buff[10];

memset(buff,0,sizeof(buff));

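- ITeye July Technical Book Trial-Reading Contest: Winners Announced

ITeye管理员

events, ITeye, trial reading

The July technical book trial-reading contest, held jointly by ITeye and Turing Education of Posts & Telecom Press, has come to a successful close. Many thanks to all users for following and taking part in the event.

Recap of the July trial-reading event:

http://webmaster.iteye.com/blog/2092746

The winners of the excellence award in this trial-reading event, and their entries, are as follows (there were many excellent articles but only a limited number of awards; not winning does not mean an article was not excellent):

《Java性能优化权威指南》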