【ffmpeg学习】Tutorial 04: Spawning Threads 将解析线程与视频解码线程分开

环境

FFmpeg:2013年9月9日 github master版本

SDL:SDL2

系统:macos 10.8 64位

ffmpeg编译参数:

./configure --cc=clang --disable-everything --enable-libfdk_aac --enable-libmp3lame --enable-protocol=file --enable-protocol=file --enable-decoder=aac --enable-decoder=aac --enable-decoder=mp3 --enable-encoder=libmp3lame --enable-encoder=libfdk_aac --enable-demuxer=aac --enable-demuxer=mp3 --enable-muxer=adts --enable-muxer=mp3 --enable-parser=aac --enable-decoder=flv --enable-decoder=h264 --enable-decoder=mpeg4 --enable-demuxer=avi --enable-demuxer=flv --enable-demuxer=h264 --enable-muxer=mp4 --enable-muxer=flv

代码源自

http://dranger.com/ffmpeg/tutorial04.html

修改后版本

// tutorial04.c

// A pedagogical video player that will stream through every video frame as fast as it can,

// and play audio (out of sync).

//

// This tutorial was written by Stephen Dranger (dranger@gmail.com).

//

// Code based on FFplay, Copyright (c) 2003 Fabrice Bellard,

// and a tutorial by Martin Bohme (boehme@inb.uni-luebeckREMOVETHIS.de)

// Tested on Gentoo, CVS version 5/01/07 compiled with GCC 4.1.1

//

// Use the Makefile to build all the samples.

//

// Run using

// tutorial04 myvideofile.mpg

//

// to play the video stream on your screen.

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libavformat/avio.h>

#include <libswscale/swscale.h>

#include <libavutil/avstring.h>

#include <SDL2/SDL.h>

#include <SDL2/SDL_thread.h>

#ifdef __MINGW32__

#undef main /* Prevents SDL from overriding main() */

#endif

#include <stdio.h>

#include <math.h>

#define SDL_AUDIO_BUFFER_SIZE 1024

#define MAX_AUDIO_FRAME_SIZE 192000

#define MAX_AUDIOQ_SIZE (5 * 16 * 1024)

#define MAX_VIDEOQ_SIZE (5 * 256 * 1024)

#define FF_ALLOC_EVENT (SDL_USEREVENT)

#define FF_REFRESH_EVENT (SDL_USEREVENT + 1)

#define FF_QUIT_EVENT (SDL_USEREVENT + 2)

#define VIDEO_PICTURE_QUEUE_SIZE 1

typedef struct PacketQueue {

AVPacketList *first_pkt, *last_pkt;

int nb_packets;

int size;

SDL_mutex *mutex;

SDL_cond *cond;

} PacketQueue;

typedef struct VideoPicture {

//SDL_Texture *texture;

AVFrame* rawdata;

//SDL_Overlay *bmp;

int width, height; /* source height & width */

int allocated;

} VideoPicture;

typedef struct VideoState {

AVFormatContext *pFormatCtx;

int videoStream, audioStream;

AVStream *audio_st;

PacketQueue audioq;

uint8_t audio_buf[(MAX_AUDIO_FRAME_SIZE * 3) / 2];

unsigned int audio_buf_size;

unsigned int audio_buf_index;

AVFrame audio_frame;

AVPacket audio_pkt;

uint8_t *audio_pkt_data;

int audio_pkt_size;

AVStream *video_st;

PacketQueue videoq;

VideoPicture pictq[VIDEO_PICTURE_QUEUE_SIZE];

int pictq_size, pictq_rindex, pictq_windex;

SDL_mutex *pictq_mutex;

SDL_cond *pictq_cond;

SDL_Thread *parse_tid;

SDL_Thread *video_tid;

char filename[1024];

int quit;

AVIOContext *io_context;

struct SwsContext *sws_ctx;

} VideoState;

/* Since we only have one decoding thread, the Big Struct

can be global in case we need it. */

VideoState *global_video_state;

void packet_queue_init(PacketQueue *q) {

memset(q, 0, sizeof(PacketQueue));

q->mutex = SDL_CreateMutex();

q->cond = SDL_CreateCond();

}

int packet_queue_put(PacketQueue *q, AVPacket *pkt) {

AVPacketList *pkt1;

if(av_dup_packet(pkt) < 0) {

return -1;

}

pkt1 = av_malloc(sizeof(AVPacketList));

if (!pkt1)

return -1;

pkt1->pkt = *pkt;

pkt1->next = NULL;

SDL_LockMutex(q->mutex);

if (!q->last_pkt)

q->first_pkt = pkt1;

else

q->last_pkt->next = pkt1;

q->last_pkt = pkt1;

q->nb_packets++;

q->size += pkt1->pkt.size;

SDL_CondSignal(q->cond);

SDL_UnlockMutex(q->mutex);

return 0;

}

static int packet_queue_get(PacketQueue *q, AVPacket *pkt, int block)

{

AVPacketList *pkt1;

int ret;

SDL_LockMutex(q->mutex);

for(;;) {

if(global_video_state->quit) {

ret = -1;

break;

}

pkt1 = q->first_pkt;

if (pkt1) {

q->first_pkt = pkt1->next;

if (!q->first_pkt)

q->last_pkt = NULL;

q->nb_packets--;

q->size -= pkt1->pkt.size;

*pkt = pkt1->pkt;

av_free(pkt1);

ret = 1;

break;

} else if (!block) {

ret = 0;

break;

} else {

SDL_CondWait(q->cond, q->mutex);

}

}

SDL_UnlockMutex(q->mutex);

return ret;

}

int audio_decode_frame(VideoState *is) {

int len1, data_size = 0;

AVPacket *pkt = &is->audio_pkt;

for(;;) {

while(is->audio_pkt_size > 0) {

int got_frame = 0;

len1 = avcodec_decode_audio4(is->audio_st->codec, &is->audio_frame, &got_frame, pkt);

if(len1 < 0) {

/* if error, skip frame */

is->audio_pkt_size = 0;

break;

}

if (got_frame)

{

data_size =

av_samples_get_buffer_size

(

NULL,

is->audio_st->codec->channels,

is->audio_frame.nb_samples,

is->audio_st->codec->sample_fmt,

1

);

memcpy(is->audio_buf, is->audio_frame.data[0], data_size);

}

is->audio_pkt_data += len1;

is->audio_pkt_size -= len1;

if(data_size <= 0) {

/* No data yet, get more frames */

continue;

}

/* We have data, return it and come back for more later */

return data_size;

}

if(pkt->data)

av_free_packet(pkt);

if(is->quit) {

return -1;

}

/* next packet */

if(packet_queue_get(&is->audioq, pkt, 1) < 0) {

return -1;

}

is->audio_pkt_data = pkt->data;

is->audio_pkt_size = pkt->size;

}

}

void audio_callback(void *userdata, Uint8 *stream, int len) {

VideoState *is = (VideoState *)userdata;

int len1, audio_size;

while(len > 0) {

if(is->audio_buf_index >= is->audio_buf_size) {

/* We have already sent all our data; get more */

audio_size = audio_decode_frame(is);

if(audio_size < 0) {

/* If error, output silence */

is->audio_buf_size = 1024;

memset(is->audio_buf, 0, is->audio_buf_size);

} else {

is->audio_buf_size = audio_size;

}

is->audio_buf_index = 0;

}

len1 = is->audio_buf_size - is->audio_buf_index;

if(len1 > len)

len1 = len;

memcpy(stream, (uint8_t *)is->audio_buf + is->audio_buf_index, len1);

len -= len1;

stream += len1;

is->audio_buf_index += len1;

}

}

static Uint32 sdl_refresh_timer_cb(Uint32 interval, void *opaque) {

SDL_Event event;

event.type = FF_REFRESH_EVENT;

event.user.data1 = opaque;

SDL_PushEvent(&event);

return 0; /* 0 means stop timer */

}

/* schedule a video refresh in 'delay' ms */

static void schedule_refresh(VideoState *is, int delay) {

SDL_AddTimer(delay, sdl_refresh_timer_cb, is);

}

void video_display(VideoState *is,SDL_Renderer* renderer,SDL_Texture* texture) {

SDL_Rect rect;

VideoPicture *vp;

//AVPicture pict;

float aspect_ratio;

//int w=0, h=0, x=0, y=0;

//screen->w;

//int i;

//renderer->w;

vp = &is->pictq[is->pictq_rindex];

if(vp->rawdata) {

if(is->video_st->codec->sample_aspect_ratio.num == 0) {

aspect_ratio = 0;

} else {

aspect_ratio = av_q2d(is->video_st->codec->sample_aspect_ratio) *

is->video_st->codec->width / is->video_st->codec->height;

}

if(aspect_ratio <= 0.0) {

aspect_ratio = (float)is->video_st->codec->width /

(float)is->video_st->codec->height;

}

//screen->h;

// h = screen->h;

// w = ((int)rint(h * aspect_ratio)) & -3;

// if(w > screen->w) {

// w = screen->w;

// h = ((int)rint(w / aspect_ratio)) & -3;

// }

// x = (screen->w - w) / 2;

// y = (screen->h - h) / 2;

rect.x = 0;

rect.y = 0;

rect.w = vp->width;

rect.h = vp->height;

//SDL_SetRenderDrawColor(renderer, 120, 0, 0, 255);

//SDL_RenderFillRect(renderer, &rect);

SDL_UpdateTexture( texture, &rect, vp->rawdata->data[0], vp->rawdata->linesize[0] );

SDL_RenderClear( renderer );

SDL_RenderCopy( renderer,texture , &rect, &rect );

SDL_RenderPresent( renderer );

//SDL_DisplayYUVOverlay(vp->bmp, &rect);

}

}

void video_refresh_timer(void *userdata,SDL_Renderer* renderer,SDL_Texture* texture) {

VideoState *is = (VideoState *)userdata;

// vp is used in later tutorials for synchronization

//VideoPicture *vp;

if(is->video_st) {

if(is->pictq_size == 0) {

schedule_refresh(is, 1);

} else {

//vp = &is->pictq[is->pictq_rindex];

/* Now, normally here goes a ton of code

about timing, etc. we're just going to

guess at a delay for now. You can

increase and decrease this value and hard code

the timing - but I don't suggest that ;)

We'll learn how to do it for real later.

*/

schedule_refresh(is, 30);

/* show the picture! */

video_display(is,renderer,texture);

/* update queue for next picture! */

if(++is->pictq_rindex == VIDEO_PICTURE_QUEUE_SIZE) {

is->pictq_rindex = 0;

}

SDL_LockMutex(is->pictq_mutex);

is->pictq_size--;

SDL_CondSignal(is->pictq_cond);

SDL_UnlockMutex(is->pictq_mutex);

}

} else {

schedule_refresh(is, 100);

}

}

void alloc_picture(void *userdata,SDL_Renderer* renderer) {

VideoState *is = (VideoState *)userdata;

VideoPicture *vp;

vp = &is->pictq[is->pictq_windex];

if(vp->rawdata) {

// we already have one make another, bigger/smaller

//SDL_DestroyTexture(vp->texture);

//vp->texture = NULL;

av_free(vp->rawdata);

//SDL_FreeYUVOverlay(vp->bmp);

}

// Allocate a place to put our YUV image on that screen

// vp->texture = SDL_CreateYUVOverlay(is->video_st->codec->width,

// is->video_st->codec->height,

// SDL_YV12_OVERLAY,

// screen);

vp->width = is->video_st->codec->width;

vp->height = is->video_st->codec->height;

AVCodecContext *pCodecCtx = NULL;

pCodecCtx = is->video_st->codec;

AVFrame* pFrameYUV = avcodec_alloc_frame();

if( pFrameYUV == NULL )

return;

int numBytes = avpicture_get_size(PIX_FMT_YUV420P, pCodecCtx->width,

pCodecCtx->height);

uint8_t* buffer = (uint8_t *)av_malloc(numBytes*sizeof(uint8_t));

avpicture_fill((AVPicture *)pFrameYUV, buffer, PIX_FMT_YUV420P,

pCodecCtx->width, pCodecCtx->height);

vp->rawdata = pFrameYUV;

//SDL_CreateTexture(renderer, SDL_PIXELFORMAT_IYUV, SDL_TEXTUREACCESS_STREAMING, vp->width, vp->height);

SDL_LockMutex(is->pictq_mutex);

vp->allocated = 1;

SDL_CondSignal(is->pictq_cond);

SDL_UnlockMutex(is->pictq_mutex);

}

int queue_picture(VideoState *is, AVFrame *pFrame) {

VideoPicture *vp;

//AVCodecContext *pCodecCtx;

/* wait until we have space for a new pic */

SDL_LockMutex(is->pictq_mutex);

while(is->pictq_size >= VIDEO_PICTURE_QUEUE_SIZE &&

!is->quit) {

SDL_CondWait(is->pictq_cond, is->pictq_mutex);

}

SDL_UnlockMutex(is->pictq_mutex);

if(is->quit)

return -1;

// windex is set to 0 initially

vp = &is->pictq[is->pictq_windex];

/* allocate or resize the buffer! */

if(!vp->rawdata ||

vp->width != is->video_st->codec->width ||

vp->height != is->video_st->codec->height) {

SDL_Event event;

vp->allocated = 0;

/* we have to do it in the main thread */

event.type = FF_ALLOC_EVENT;

event.user.data1 = is;

SDL_PushEvent(&event);

/* wait until we have a picture allocated */

SDL_LockMutex(is->pictq_mutex);

while(!vp->allocated && !is->quit) {

SDL_CondWait(is->pictq_cond, is->pictq_mutex);

}

SDL_UnlockMutex(is->pictq_mutex);

if(is->quit) {

return -1;

}

}

/* We have a place to put our picture on the queue */

if(vp->rawdata) {

//SDL_LockYUVOverlay(vp->bmp);

/* point pict at the queue */

// pict.data[0] = vp->bmp->pixels[0];

// pict.data[1] = vp->bmp->pixels[2];

// pict.data[2] = vp->bmp->pixels[1];

//

// pict.linesize[0] = vp->bmp->pitches[0];

// pict.linesize[1] = vp->bmp->pitches[2];

// pict.linesize[2] = vp->bmp->pitches[1];

// Convert the image into YUV format that SDL uses

sws_scale

(

is->sws_ctx,

(uint8_t const * const *)pFrame->data,

pFrame->linesize,

0,

is->video_st->codec->height,

vp->rawdata->data,

vp->rawdata->linesize

);

// SDL_Rect rect;

// rect.x = 0;

// rect.y = 0;

// rect.w = vp->width;

// rect.h = vp->height;

//printf("%d,%d\n",rect.w,rect.h);

//SDL_UpdateTexture( vp->texture, &rect, pFrameYUV->data[0], pFrameYUV->linesize[0] );

//av_free(pFrameYUV);

//SDL_UnlockYUVOverlay(vp->bmp);

/* now we inform our display thread that we have a pic ready */

if(++is->pictq_windex == VIDEO_PICTURE_QUEUE_SIZE) {

is->pictq_windex = 0;

}

SDL_LockMutex(is->pictq_mutex);

is->pictq_size++;

SDL_UnlockMutex(is->pictq_mutex);

}

return 0;

}

int video_thread(void *arg) {

VideoState *is = (VideoState *)arg;

AVPacket pkt1, *packet = &pkt1;

int frameFinished;

AVFrame *pFrame;

pFrame = avcodec_alloc_frame();

for(;;) {

if(packet_queue_get(&is->videoq, packet, 1) < 0) {

// means we quit getting packets

break;

}

// Decode video frame

avcodec_decode_video2(is->video_st->codec, pFrame, &frameFinished,

packet);

// Did we get a video frame?

if(frameFinished) {

//printf("video_thread\n");

if(queue_picture(is, pFrame) < 0) {

break;

}

}

av_free_packet(packet);

}

av_free(pFrame);

return 0;

}

int stream_component_open(VideoState *is, int stream_index) {

AVFormatContext *pFormatCtx = is->pFormatCtx;

AVCodecContext *codecCtx = NULL;

AVCodec *codec = NULL;

AVDictionary *optionsDict = NULL;

SDL_AudioSpec wanted_spec, spec;

if(stream_index < 0 || stream_index >= pFormatCtx->nb_streams) {

return -1;

}

// Get a pointer to the codec context for the video stream

codecCtx = pFormatCtx->streams[stream_index]->codec;

if(codecCtx->codec_type == AVMEDIA_TYPE_AUDIO) {

// Set audio settings from codec info

wanted_spec.freq = codecCtx->sample_rate;

wanted_spec.format = AUDIO_S16SYS;

wanted_spec.channels = codecCtx->channels;

wanted_spec.silence = 0;

wanted_spec.samples = SDL_AUDIO_BUFFER_SIZE;

wanted_spec.callback = audio_callback;

wanted_spec.userdata = is;

if(SDL_OpenAudio(&wanted_spec, &spec) < 0) {

fprintf(stderr, "SDL_OpenAudio: %s\n", SDL_GetError());

return -1;

}

}

codec = avcodec_find_decoder(codecCtx->codec_id);

if(!codec || (avcodec_open2(codecCtx, codec, &optionsDict) < 0)) {

fprintf(stderr, "Unsupported codec!\n");

return -1;

}

switch(codecCtx->codec_type) {

case AVMEDIA_TYPE_AUDIO:

is->audioStream = stream_index;

is->audio_st = pFormatCtx->streams[stream_index];

is->audio_buf_size = 0;

is->audio_buf_index = 0;

memset(&is->audio_pkt, 0, sizeof(is->audio_pkt));

packet_queue_init(&is->audioq);

SDL_PauseAudio(0);

break;

case AVMEDIA_TYPE_VIDEO:

is->videoStream = stream_index;

is->video_st = pFormatCtx->streams[stream_index];

packet_queue_init(&is->videoq);

is->video_tid = SDL_CreateThread(video_thread, "video_thread",is);

is->sws_ctx =

sws_getContext

(

is->video_st->codec->width,

is->video_st->codec->height,

is->video_st->codec->pix_fmt,

is->video_st->codec->width,

is->video_st->codec->height,

PIX_FMT_YUV420P,

SWS_BILINEAR,

NULL,

NULL,

NULL

);

break;

default:

break;

}

return 0;

}

int decode_interrupt_cb(void *opaque) {

return (global_video_state && global_video_state->quit);

}

int decode_thread(void *arg) {

VideoState *is = (VideoState *)arg;

AVFormatContext *pFormatCtx = NULL;

AVPacket pkt1, *packet = &pkt1;

int video_index = -1;

int audio_index = -1;

int i;

AVDictionary *io_dict = NULL;

AVIOInterruptCB callback;

is->videoStream=-1;

is->audioStream=-1;

global_video_state = is;

// will interrupt blocking functions if we quit!

callback.callback = decode_interrupt_cb;

callback.opaque = is;

if (avio_open2(&is->io_context, is->filename, 0, &callback, &io_dict))

{

fprintf(stderr, "Unable to open I/O for %s\n", is->filename);

return -1;

}

// Open video file

if(avformat_open_input(&pFormatCtx, is->filename, NULL, NULL)!=0)

return -1; // Couldn't open file

is->pFormatCtx = pFormatCtx;

// Retrieve stream information

if(avformat_find_stream_info(pFormatCtx, NULL)<0)

return -1; // Couldn't find stream information

// Dump information about file onto standard error

av_dump_format(pFormatCtx, 0, is->filename, 0);

// Find the first video stream

for(i=0; i<pFormatCtx->nb_streams; i++) {

if(pFormatCtx->streams[i]->codec->codec_type==AVMEDIA_TYPE_VIDEO &&

video_index < 0) {

video_index=i;

}

if(pFormatCtx->streams[i]->codec->codec_type==AVMEDIA_TYPE_AUDIO &&

audio_index < 0) {

audio_index=i;

}

}

if(audio_index >= 0) {

stream_component_open(is, audio_index);

}

if(video_index >= 0) {

stream_component_open(is, video_index);

}

if(is->videoStream < 0 || is->audioStream < 0) {

fprintf(stderr, "%s: could not open codecs\n", is->filename);

goto fail;

}

// main decode loop

for(;;) {

if(is->quit) {

break;

}

// seek stuff goes here

if(is->audioq.size > MAX_AUDIOQ_SIZE ||

is->videoq.size > MAX_VIDEOQ_SIZE) {

SDL_Delay(10);

continue;

}

if(av_read_frame(is->pFormatCtx, packet) < 0) {

if(is->pFormatCtx->pb->error == 0) {

SDL_Delay(100); /* no error; wait for user input */

continue;

} else {

break;

}

}

// Is this a packet from the video stream?

if(packet->stream_index == is->videoStream) {

packet_queue_put(&is->videoq, packet);

} else if(packet->stream_index == is->audioStream) {

packet_queue_put(&is->audioq, packet);

} else {

av_free_packet(packet);

}

}

/* all done - wait for it */

while(!is->quit) {

SDL_Delay(100);

}

fail:

if(1){

SDL_Event event;

event.type = FF_QUIT_EVENT;

event.user.data1 = is;

SDL_PushEvent(&event);

}

return 0;

}

int main(int argc, char *argv[]) {

SDL_Event event;

VideoState *is;

struct SDL_Window *pScreen;

struct SDL_Renderer *pRenderer;

is = av_mallocz(sizeof(VideoState));

if(argc < 2) {

fprintf(stderr, "Usage: test <file>\n");

exit(1);

}

// Register all formats and codecs

av_register_all();

if(SDL_Init(SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) {

fprintf(stderr, "Could not initialize SDL - %s\n", SDL_GetError());

exit(1);

}

// Make a screen to put our video

//#ifndef __DARWIN__

// screen = SDL_SetVideoMode(640, 480, 0, 0);

//#else

// screen = SDL_SetVideoMode(640, 480, 24, 0);

//#endif

pScreen = SDL_CreateWindow("audio & video", SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED, 672,376, SDL_WINDOW_OPENGL);

if(!pScreen) {

fprintf(stderr, "SDL: could not set video mode - exiting\n");

exit(1);

}

//SDL_Window *windows = pScreen;

//pScreen->windowed;

pRenderer = SDL_CreateRenderer(pScreen, -1, 0);

SDL_Texture* texture = SDL_CreateTexture(pRenderer, SDL_PIXELFORMAT_IYUV, SDL_TEXTUREACCESS_STREAMING, 672, 376);

av_strlcpy(is->filename, argv[1], 1024);

is->pictq_mutex = SDL_CreateMutex();

is->pictq_cond = SDL_CreateCond();

schedule_refresh(is, 40);

is->parse_tid = SDL_CreateThread(decode_thread,"parse_thread", is);

if(!is->parse_tid) {

av_free(is);

return -1;

}

for(;;) {

SDL_WaitEvent(&event);

switch(event.type) {

case FF_QUIT_EVENT:

case SDL_QUIT:

is->quit = 1;

/*

* If the video has finished playing, then both the picture and

* audio queues are waiting for more data. Make them stop

* waiting and terminate normally.

*/

SDL_CondSignal(is->audioq.cond);

SDL_CondSignal(is->videoq.cond);

SDL_Quit();

return 0;

break;

case FF_ALLOC_EVENT:

alloc_picture(event.user.data1,pRenderer);

break;

case FF_REFRESH_EVENT:

video_refresh_timer(event.user.data1,pRenderer,texture);

break;

default:

break;

}

}

SDL_DestroyTexture(texture);

return 0;

}

这段代码的总体思路是跑一个parse线程解包,当解析到音频包时放入音频队列,当解析到视频包时放入视频队列,

当接到有视频包后,起一个线程用来解码视频包,解码完调用事件让主线程显示解码完的数据包

注意点

opengl相关操作需要在主线程中进行,比如SDL_UpdateTexture函数。

在我修改int queue_picture(VideoState *is,AVFrame *pFrame)代码时,误将SDL_Texure在此函数中写入数据

导致程序运行到SDL_UpdateTexture( vp->texture, &rect, pFrameYUV->data[0], pFrameYUV->linesize[0] );

后renderdata->glEnable(data->type);处报错,报bad_access,起先以为是pFrameYUV为空指针,后四处排查也找不到原因

突然想起opengl的操作是不是需要在主线程中进行?

后来我将SDL_UpdateTexture操作放置到video_display函数中,问题解决。

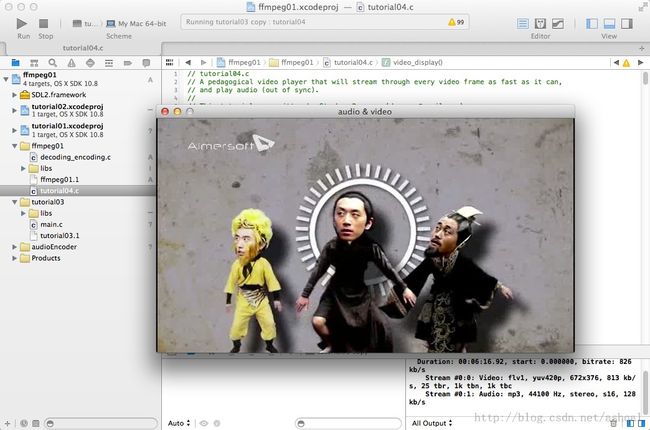

运行结果

引用库如下

结束语

2013年最后半小时了,本命年还算顺利

可喜的是这一年技术接触面很广,学会了ios开发,android水平有进阶,c/c++水平有提高,设计模式提高不少

可悲的是阿里前端实习被刷(死的很惨),论文毫无进展,老板要我搞云计算

最后感谢朱阿姝同学的陪伴

希望明年可以顺利完成论文,在github上多贡献几个项目攒人品,父母身体健康