Facial Landmark Detection with Face++

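I recently read a very interesting article, http://matthewearl.github.io/2015/07/28/switching-eds-with-python/, and wanted to reproduce it myself. It turned out I'm not good enough: a whole day got me less than halfway. I'm about to start job hunting and have grown tired of reading books, so I had hoped that writing some fun code would help me relax. Oh well.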

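On to the main text. The original author uses the dlib library for landmark detection. I tried to install it, failed, and gave up on it. I thought about using my own library, but my own wrapper is too poor, so I switched to someone else's. This also keeps the program much smaller, since trained model files are fairly large.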

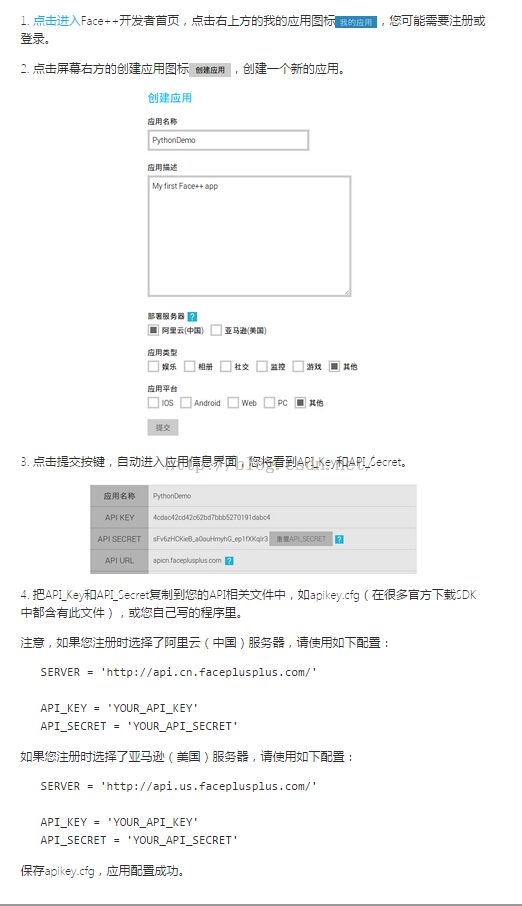

First, register an account on Face++ and create an application to get the access keys. I simply took a screenshot of this step.

I use the Python interface. Download the SDK from GitHub: https://github.com/FacePlusPlus/facepp-python-sdk/tree/v2.0

Edit apikey.cfg, and you can then run the hello.py example.

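Make sure you pick the right server address. Also, the official example contains API_KEY = '<YOUR_API_KEY>'. When you replace the placeholder with your own key, remember to delete the <> as well, otherwise you will get an error.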
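A small guard can catch the leftover-bracket mistake before any request is sent. This is only a sketch: `check_credential` is a hypothetical helper of my own, not part of the Face++ SDK, and it assumes a real key never contains angle brackets or surrounding whitespace.

```python
def check_credential(name, value):
    """Return value unchanged, or raise ValueError if it still looks like a placeholder."""
    if not value or value.strip() != value:
        raise ValueError('%s is empty or has surrounding whitespace' % name)
    if '<' in value or '>' in value:
        # Typical mistake: pasting the key inside the template's <...>
        raise ValueError('%s still contains "<" or ">"; delete the brackets too' % name)
    return value
```

Calling it once on the key and once on the secret at startup turns a confusing server error into a clear local one.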

Around line 350 of the SDK's facepp.py, add the entry '/detection/landmark'. Without it, the landmark detection API cannot be called.
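Why would one missing string disable a whole call? A plausible explanation, and it is only my assumption about how the SDK works, is that calls such as `api.detection.landmark(...)` are generated from a list of path strings. The toy model below is not the SDK's code; `_Node` and `build_api` are made-up names that show the idea.

```python
class _Node(object):
    """One level of the attribute chain, e.g. `api.detection`."""

    def __init__(self, parts):
        self._parts = parts

    def __call__(self, **params):
        # A real client would send an HTTP request here; the toy only
        # reports which endpoint would be hit.
        return '/' + '/'.join(self._parts)


def build_api(paths):
    """Build nested attributes from strings such as '/detection/detect'."""
    root = _Node([])
    for p in paths:
        node = root
        parts = p.strip('/').split('/')
        for depth, part in enumerate(parts):
            if not hasattr(node, part):
                setattr(node, part, _Node(parts[:depth + 1]))
            node = getattr(node, part)
    return root
```

With only `'/detection/detect'` in the list, `api.detection.landmark` does not exist and raises AttributeError. Once `'/detection/landmark'` is added to the list, the attribute appears.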

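A small complaint: the Face++ SDK is really not well written. Many parts lack detail, and the program sometimes fails because of network problems. I also wanted to upload my own images but could not find an interface for it. The official site only gives these few lines:

The explanation seems far too brief, and I did not see a local image upload interface in the SDK either.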

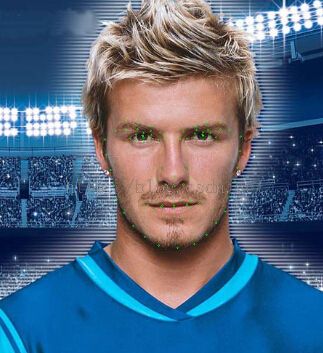

Enough talk. Here is the result, followed by the code.

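The two faces I picked at first were already well aligned, so I swapped one of the images. The result shown here scales and rotates the face in the second image so that it lines up with the face in the first. The remaining steps would be cropping and overlaying.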
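To make "scale and rotate to align" concrete: in 2-D, a rotation plus a uniform scale plus a translation can be written as z -> a*z + b over complex numbers, and the least-squares a and b have a closed form. The sketch below uses that closed form, which is a different route from the SVD-based routine in the listing. `fit_similarity` and `apply_similarity` are names I made up for illustration, and the input needs at least two distinct points.

```python
def fit_similarity(src, dst):
    """Least-squares complex a, b such that a*z + b maps src onto dst.

    src, dst: equal-length lists of (x, y) pairs.
    abs(a) is the scale and the argument of a is the rotation angle.
    """
    zs = [complex(x, y) for x, y in src]
    ws = [complex(x, y) for x, y in dst]
    n = len(zs)
    cz = sum(zs) / n          # centroid of the source points
    cw = sum(ws) / n          # centroid of the target points
    num = sum((w - cw) * (z - cz).conjugate() for z, w in zip(zs, ws))
    den = sum(abs(z - cz) ** 2 for z in zs)
    a = num / den             # rotation and scale
    b = cw - a * cz           # translation
    return a, b


def apply_similarity(a, b, pts):
    """Apply z -> a*z + b to a list of (x, y) pairs."""
    out = []
    for x, y in pts:
        w = a * complex(x, y) + b
        out.append((w.real, w.imag))
    return out
```

For example, if the target points are the source points scaled by 2, rotated by 90 degrees and shifted by (3, 4), the fit recovers a = 2j and b = 3+4j up to rounding.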

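Below is the code. It only covers about half of what the article describes. I'm not familiar with the later parts, and with a job search coming up I'm not in the mood to write code...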

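I suggest registering your own account. Each developer account gets 3 concurrent threads, and if I ever change my keys, the program below will fail with an error and may not run at all.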

#!/usr/bin/env python2
# -*- coding: utf-8 -*-
# You need to register your App first, and enter your API key/secret.

API_KEY = 'de12a860239680fb8aba7c8283efffd9'

API_SECRET = '61MzhMy_j_L8T1-JAzVjlBSsKqy2pUap'

# Import system libraries and define helper functions
import time
import os
import cv2
import numpy
import urllib
import re
from facepp import API

ALIGN_POINTS = list(range(0, 25))
OVERLAY_POINTS = list(range(0, 25))
RIGHT_EYE_POINTS = list(range(2, 6))
LEFT_EYE_POINTS = list(range(4, 8))

FEATHER_AMOUNT = 11
SCALE_FACTOR = 1
COLOUR_CORRECT_BLUR_FRAC = 0.6

def encode(obj):
    # Recursively convert unicode strings in the response to UTF-8 byte strings (Python 2)
    if type(obj) is unicode:
        return obj.encode('utf-8')
    if type(obj) is dict:
        return {encode(k): encode(v) for (k, v) in obj.iteritems()}
    if type(obj) is list:
        return [encode(i) for i in obj]
    return obj

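def getPoints(text):
    # Pull the x and y values out of the printed response with regexes.
    # Each match keeps only the 6 characters after the key, so a value is
    # captured only if its first two decimals fall inside that window.
    a = encode(text)
    a = str(a)
    #print a
    x = re.findall(r'\'x\':......', a)
    for i in range(len(x)):
        x[i] = re.findall(r'\d+\.\d\d', x[i])
    y = re.findall(r'\'y\':......', a)
    for i in range(len(y)):
        y[i] = re.findall(r'\d+\.\d\d', y[i])
    xy = zip(x, y)
    return xy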

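def drawPoints(img, xy):  # Draw the landmarks; used to check that the program is working
    Img = img
    tmp = numpy.array(img)
    h, w, c = tmp.shape
    for i, j in xy:
        # Coordinates are percentages of the image width and height
        xp = float(i[0]) * w / 100.
        yp = float(j[0]) * h / 100.
        point = (int(xp), int(yp))
        cv2.circle(Img, point, 1, (0, 255, 0))
    return Img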

def get_landmarks(path, tmpPic):
    # Uses the module-level `api` object created in the __main__ block
    result = api.detection.detect(url=path, mode='oneface')
    ID = result['face'][0]['face_id']
    points = api.detection.landmark(face_id=ID, type='25p')
    xy = getPoints(points)
    print 'downloading the picture....'
    # Download every time in case the image has changed; can be skipped while debugging
    urllib.urlretrieve(path, tmpPic)
    tmp = cv2.imread(tmpPic)
    # Test: save a copy with the landmarks drawn on it
    Img = drawPoints(tmp, xy)
    cv2.imwrite('point.jpg', Img)
    tmp = numpy.array(tmp)
    h, w, c = tmp.shape
    points = numpy.empty([25, 2], dtype=numpy.int16)
    n = 0
    for i, j in xy:
        xp = float(i[0]) * w / 100.
        yp = float(j[0]) * h / 100.
        points[n][0] = int(xp)
        points[n][1] = int(yp)
        n += 1
    return numpy.matrix([[i[0], i[1]] for i in points])
    #return points

def transformation_from_points(points1, points2):
    """
    Return an affine transformation [s * R | T] such that:

        sum ||s*R*p1,i + T - p2,i||^2

    is minimized.
    """
    # Solve the procrustes problem by subtracting centroids, scaling by the
    # standard deviation, and then using the SVD to calculate the rotation. See
    # the following for more details:
    #   https://en.wikipedia.org/wiki/Orthogonal_Procrustes_problem
    points1 = points1.astype(numpy.float64)
    points2 = points2.astype(numpy.float64)

    c1 = numpy.mean(points1, axis=0)
    c2 = numpy.mean(points2, axis=0)
    points1 -= c1
    points2 -= c2

    s1 = numpy.std(points1)
    s2 = numpy.std(points2)
    points1 /= s1
    points2 /= s2

    U, S, Vt = numpy.linalg.svd(points1.T * points2)

    # The R we seek is in fact the transpose of the one given by U * Vt. This
    # is because the above formulation assumes the matrix goes on the right
    # (with row vectors) where as our solution requires the matrix to be on the
    # left (with column vectors).
    R = (U * Vt).T

    return numpy.vstack([numpy.hstack(((s2 / s1) * R,
                                       c2.T - (s2 / s1) * R * c1.T)),
                         numpy.matrix([0., 0., 1.])])

def warp_im(im, M, dshape):
    output_im = numpy.zeros(dshape, dtype=im.dtype)
    cv2.warpAffine(im,
                   M[:2],
                   (dshape[1], dshape[0]),
                   dst=output_im,
                   borderMode=cv2.BORDER_TRANSPARENT,
                   flags=cv2.WARP_INVERSE_MAP)
    return output_im

if __name__ == '__main__':
    api = API(API_KEY, API_SECRET)

    path1 = 'http://www.faceplusplus.com/static/img/demo/17.jpg'
    path2 = 'http://www.faceplusplus.com/static/img/demo/7.jpg'
    #path2='http://cimg.163.com/auto/2004/8/28/200408281055448e023.jpg'
    tmp1 = './tmp1.jpg'
    tmp2 = './tmp2.jpg'

    landmarks1 = get_landmarks(path1, tmp1)
    landmarks2 = get_landmarks(path2, tmp2)

    im1 = cv2.imread(tmp1, cv2.IMREAD_COLOR)
    im2 = cv2.imread(tmp2, cv2.IMREAD_COLOR)

    M = transformation_from_points(landmarks1[ALIGN_POINTS],
                                   landmarks2[ALIGN_POINTS])
    warped_im2 = warp_im(im2, M, im1.shape)
    cv2.imwrite('wrap.jpg', warped_im2)
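
The regex in `getPoints` depends on how the response happens to print as a string. If the landmark response is a nested dict, the coordinates can be read from it directly. The sketch below assumes a response shaped like `{'result': [{'landmark': {name: {'x': ..., 'y': ...}}}]}`, with x and y given as percentages of the image width and height (the listing above also treats them as percentages). The response shape, the helper name `landmarks_to_pixels` and the landmark names in the example are my assumptions, not taken from the Face++ documentation.

```python
def landmarks_to_pixels(response, width, height):
    """Return [(name, x_px, y_px), ...] sorted by landmark name."""
    landmark = response['result'][0]['landmark']
    points = []
    for name in sorted(landmark):
        p = landmark[name]
        # Convert percentage coordinates to integer pixel positions
        points.append((name,
                       int(p['x'] * width / 100.0),
                       int(p['y'] * height / 100.0)))
    return points
```

Sorting by name puts the points in the same order for both images, which the alignment step needs because it pairs point i of one face with point i of the other.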