Andrew Ng Deep Learning Course 4, Week 2, Assignment 1: Keras Happy House

I. Deep Learning Assignment

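This week's assignment introduces Keras. You will:

- Learn to use Keras, a high-level neural network API (programming framework) written in Python that can run on top of several lower-level frameworks, including TensorFlow and CNTK.

- See how to build a deep learning algorithm in a couple of hours.

Why use Keras? Keras was developed so that deep learning engineers can build and try out different models very quickly. Just as TensorFlow is a higher-level framework than Python, Keras is a still higher-level framework that provides additional abstractions. Getting from an idea to a result in as little time as possible is key to finding good models. However, Keras is more restrictive than the lower-level frameworks, so some very complex models that can be implemented in TensorFlow cannot be implemented in Keras. In other words, Keras mainly works well for common models.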

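Here is the practical problem: there is a house, and anyone who wants to enter it must prove that they are currently happy.

As a deep learning expert, to make sure the "Happy" rule is strictly enforced, you plan to use pictures taken by the front-door camera to check whether the person is happy. The door opens only when the person is happy.

You have gathered pictures of your friends and yourself, taken by the front-door camera. The dataset is labeled.

Details of the "Happy" dataset: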

- Images are of shape (64,64,3)

- Training: 600 pictures

- Test: 150 pictures
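To make these shapes concrete, here is a minimal sketch that builds random stand-in arrays of the same size and applies the usual scaling and label transposition. The random data is purely illustrative, and the `(1, m)` layout of the raw labels is an assumption inferred from the transpose used in the code of section II; the real arrays come from `load_dataset()`.

```python
import numpy as np

rng = np.random.default_rng(0)
m_train = 600  # number of training pictures listed above

# Hypothetical stand-ins for the real data: 8-bit RGB images and 0/1 labels.
X_train_orig = rng.integers(0, 256, size=(m_train, 64, 64, 3), dtype=np.uint8)
Y_train_orig = rng.integers(0, 2, size=(1, m_train))  # assumed raw layout (1, m)

# Scale pixel values from [0, 255] to [0, 1].
X_train = X_train_orig / 255

# Turn the label row vector into a column with one label per example.
Y_train = Y_train_orig.T

print("X_train shape:", X_train.shape)
print("Y_train shape:", Y_train.shape)
```

After this step each example is a 64x64x3 array of floats in [0, 1], paired with a single binary label.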

Keras is well suited to rapid prototyping. In a short time you can build a model and get excellent results.

II. Code

import os

# Set the log level before Keras/TensorFlow is imported so the setting is in place when TensorFlow loads.
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import numpy as np
from keras import layers
from keras.layers import Input, Dense, Activation, ZeroPadding2D, BatchNormalization, Flatten, Conv2D
from keras.layers import AveragePooling2D, MaxPooling2D, Dropout, GlobalMaxPooling2D, GlobalAveragePooling2D
from keras.models import Model
from keras.preprocessing import image
from keras.utils import layer_utils
from keras.utils.data_utils import get_file
from keras.applications.imagenet_utils import preprocess_input
# import pydot
from IPython.display import SVG
from keras.utils.vis_utils import model_to_dot
from keras.utils import plot_model
from kt_utils import *
import keras.backend as K
import matplotlib.pyplot as plt
from matplotlib.pyplot import imshow

# Images are stored as (height, width, channels).
K.set_image_data_format('channels_last')

X_train_orig, Y_train_orig, X_test_orig, Y_test_orig, classes = load_dataset()

# Normalize pixel values to the range [0, 1].
X_train = X_train_orig / 255
X_test = X_test_orig / 255

# Transpose the labels so that each row holds the label of one example.
Y_train = Y_train_orig.T
Y_test = Y_test_orig.T

# print("number of training examples = " + str(X_train.shape[0]))
# print("number of test examples = " + str(X_test.shape[0]))
# print("X_train shape: " + str(X_train.shape))
# print("Y_train shape: " + str(Y_train.shape))
# print("X_test shape: " + str(X_test.shape))
# print("Y_test shape: " + str(Y_test.shape))

# Here is an example of a model in Keras:
def model(input_shape):
    X_input = Input(input_shape)

    # zero-padding
    X = ZeroPadding2D((3, 3))(X_input)

    # conv -> bn -> relu
    X = Conv2D(32, (7, 7), strides=(1, 1), name='conv0')(X)
    X = BatchNormalization(axis=3, name='bn0')(X)
    X = Activation('relu')(X)

    # maxpool
    X = MaxPooling2D((2, 2), name='max_pool')(X)

    # flatten X + fully connected
    X = Flatten()(X)
    X = Dense(1, activation='sigmoid', name='fc')(X)

    model = Model(inputs=X_input, outputs=X, name='HappyModel')
    return model

def HappyModel(input_shape):
    X_input = Input(input_shape)
    X = ZeroPadding2D((3, 3))(X_input)
    X = Conv2D(32, (7, 7), strides=(1, 1), name='conv0')(X)
    X = BatchNormalization(axis=3, name='bn0')(X)
    X = Activation('relu')(X)
    X = MaxPooling2D((2, 2), name='max_pool')(X)
    X = Flatten()(X)
    X = Dense(1, activation='sigmoid', name='fc')(X)
    model = Model(inputs=X_input, outputs=X, name='HappyModel')
    return model
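The tensor shapes and parameter counts of `HappyModel` can be checked by hand. The sketch below redoes that arithmetic in plain Python for a 64x64x3 input. It assumes the Keras defaults that the code above does not override: `'valid'` padding for `Conv2D`, and a pooling stride equal to the pool size. The helper `conv_output_size` is a hypothetical name introduced only for this illustration.

```python
def conv_output_size(size, kernel, stride=1, pad=0):
    """Spatial output size of a convolution or pooling window."""
    return (size + 2 * pad - kernel) // stride + 1

height = 64      # input images are 64 x 64
channels = 3     # RGB
filters = 32     # Conv2D(32, ...)

# ZeroPadding2D((3, 3)) adds 3 pixels on each side.
padded = height + 2 * 3

# Conv2D(32, (7, 7), strides=(1, 1)), assuming the default 'valid' padding.
conv = conv_output_size(padded, kernel=7, stride=1)
conv_params = 7 * 7 * channels * filters + filters  # weights + biases

# BatchNormalization stores gamma, beta, moving mean and moving variance per channel.
bn_params = 4 * filters

# MaxPooling2D((2, 2)), assuming the stride defaults to the pool size.
pooled = conv_output_size(conv, kernel=2, stride=2)

# Flatten, then Dense(1): one weight per flattened feature plus one bias.
flat = pooled * pooled * filters
dense_params = flat + 1

total_params = conv_params + bn_params + dense_params

print("after padding :", (padded, padded, channels))
print("after conv0   :", (conv, conv, filters))
print("after max_pool:", (pooled, pooled, filters))
print("flattened     :", flat)
print("total params  :", total_params)
```

If Keras is installed, these numbers can be compared with the output of `happyModel.summary()`.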

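# Create the model; the input shape is the shape of one image, without the batch dimension.
happyModel = HappyModel(X_train.shape[1:])

# Compile: choose the optimizer, the loss and the metric to report.
happyModel.compile(optimizer="Adam", loss="binary_crossentropy", metrics=["accuracy"])

# Train the model.
happyModel.fit(x=X_train, y=Y_train, epochs=39, batch_size=16)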

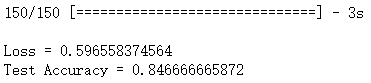

# Evaluate on the test set; preds holds the loss followed by the accuracy.
preds = happyModel.evaluate(x=X_test, y=Y_test)
print("Loss = " + str(preds[0]))
print("Test Accuracy = " + str(preds[1]))

III. Summary

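The key points to remember from this assignment:

- Keras is a tool for building models quickly; it lets you try out different model architectures in a short time.

- Remember the workflow used to train a model on the dataset and reach the desired result: Create -> Compile -> Fit/Train -> Evaluate/Test.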
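To see what the Evaluate step reports, the sketch below recomputes a binary cross-entropy loss and an accuracy in plain Python for four made-up predictions. It is an illustration of the two quantities, not Keras's own implementation. The clipping constant `eps`, the 0.5 threshold and the sample values are assumptions chosen for the example.

```python
import math

def binary_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

def binary_accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of examples whose thresholded prediction matches the label."""
    hits = sum(1 for y, p in zip(y_true, y_prob) if (p > threshold) == bool(y))
    return hits / len(y_true)

# Made-up labels (1 = happy) and sigmoid outputs for four images.
y_true = [1, 0, 1, 1]
y_prob = [0.9, 0.2, 0.4, 0.8]

loss = binary_crossentropy(y_true, y_prob)
acc = binary_accuracy(y_true, y_prob)

print("Loss =", loss)
print("Test Accuracy =", acc)  # 3 of the 4 predictions are correct: 0.75
```

The third example is predicted at 0.4, below the threshold, so it counts as an error and also contributes the largest share of the loss.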