[Study Diary] FaceNet Face Recognition: Understanding the Deep Convolutional Network NN2

This entry walks through NN2, the deep convolutional network used in FaceNet, and implements it in TensorFlow.

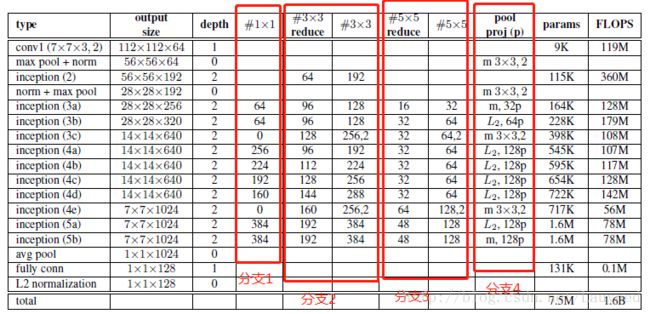

NN2网络的结构如下图所示:

NN2 is almost identical to Inception V1, so without some background in Inception V1 it is rather hard to write the TensorFlow code from the table above alone.

So let us start with the Inception module.

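The Inception module was introduced by Google; GoogLeNet (Inception V1), which is built from it, took first place in the ILSVRC 2014 classification task. It addresses two main problems:

1. Automatic selection among convolution kernels: the network itself learns whether to rely on 1×1, 3×3, or 5×5 kernels, or on a pooling layer.

2. It uses 1×1 convolutions to greatly reduce the time complexity, that is, the number of floating-point operations.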
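To make the second point concrete, here is a rough count of multiply-accumulate operations for a 5×5 convolution with and without a preceding 1×1 reduction. It is only a sketch: the sizes (28×28×192 input, reduction to 16 channels, 32 output channels) are assumed from the 5×5 branch of `inception3a` in the code later in this post, the helper `conv_macs` is a hypothetical name, and biases and padding effects are ignored.

```python
def conv_macs(height, width, in_channels, out_channels, kernel):
    """Multiply-accumulates of a stride-1, SAME-padded convolution (bias ignored)."""
    return height * width * out_channels * kernel * kernel * in_channels


# Assumed sizes: the 5x5 branch of inception3a (28x28x192 input, 16 -> 32 channels).
h, w, c_in = 28, 28, 192

# 5x5 convolution applied directly to all 192 input channels.
direct = conv_macs(h, w, c_in, 32, 5)

# 1x1 reduction to 16 channels first, then the 5x5 convolution.
reduced = conv_macs(h, w, c_in, 16, 1) + conv_macs(h, w, 16, 32, 5)

print("direct :", direct)
print("reduced:", reduced)
print("ratio  :", direct / reduced)
```

Under these assumptions the reduced path needs roughly an order of magnitude fewer operations, which is why every branch of the module includes a 1×1 convolution.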

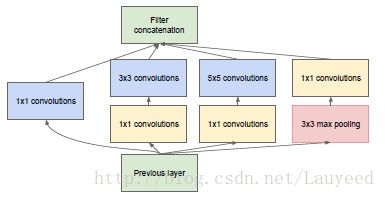

inception模块如下图所示:

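There are four branches in total: the first is a 1×1 convolution, the second performs a 3×3 convolution, the third performs a 5×5 convolution, and the fourth performs max pooling.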

Every branch uses a 1×1 convolution to reduce the time complexity (the amount of computation).

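The parameters of the four branches correspond to the columns of the NN2 table above as follows:

Branch 1: a value of 0 in the #1×1 column means the branch is absent.

Branch 2: #3×3 reduce is the 1×1 convolution in the Inception module; a 2 after the number means a stride of 2.

Branch 3: #5×5 reduce is the 1×1 convolution in the Inception module.

Branch 4: m denotes max pooling and L2 denotes L2 pooling; 2 means a stride of 2; 128P means the channels are reduced to 128, and when no such entry is present the channel count is not reduced.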
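Because the four branches are concatenated along the channel axis, the output depth of each module is simply the sum of the branch widths. The sketch below traces that sum through the network. The per-module numbers are taken from the `inception(...)` calls in NN2.py, with 64 filters assumed for the 5×5 branch of `inception4a`; `inception_out_channels` is a hypothetical helper written only for this check.

```python
def inception_out_channels(in_channels, b1c, b2c2, b3c2, b4c):
    """Channels after concatenating the four branches.

    b4c == 0 means there is no 1x1 projection after pooling, so the
    pooling branch keeps all of its input channels.
    """
    pool_channels = b4c if b4c > 0 else in_channels
    return b1c + b2c2 + b3c2 + pool_channels


# (name, #1x1, #3x3, #5x5, pool proj); the reduce columns do not affect the output depth.
modules = [
    ("inception3a", 64, 128, 32, 32),
    ("inception3b", 64, 128, 64, 64),
    ("inception3c", 0, 256, 64, 0),
    ("inception4a", 256, 192, 64, 128),  # 5x5 branch assumed to have 64 filters
    ("inception4b", 224, 224, 64, 128),
    ("inception4c", 192, 256, 64, 128),
    ("inception4d", 160, 288, 64, 128),
    ("inception4e", 0, 256, 128, 0),
    ("inception5a", 384, 384, 128, 128),
    ("inception5b", 384, 384, 128, 128),
]

channels = 192  # depth after the 3x3 convolution that precedes inception3a
trace = {}
for name, b1c, b2c2, b3c2, b4c in modules:
    channels = inception_out_channels(channels, b1c, b2c2, b3c2, b4c)
    trace[name] = channels
    print(name, channels)
```

The final depth is what the fully connected layer has to accept, so a mismatch here would show up as a reshape error in the network code.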

The following code (NN2.py) builds the network described above:

# Requires TensorFlow 1.x: tf.contrib was removed in TensorFlow 2.0.
import tensorflow as tf
from tensorflow.contrib.layers import conv2d, max_pool2d, avg_pool2d, fully_connected
import time

def NN2(x):
    """Build NN2: a batch of 224x224x3 images in, L2-normalized 128-d embeddings out."""
    # Stem: 7x7 convolution, pooling, then the 1x1 -> 3x3 "inception2" pair.
    net = conv2d(x, 64, 7, stride=2, scope='conv1')
    net = max_pool2d(net, [3, 3], stride=2, padding='SAME', scope='max_pool1')
    net = conv2d(net, 64, 1, stride=1, scope='inception2_11')
    net = conv2d(net, 192, 3, stride=1, scope='inception2_33')
    net = max_pool2d(net, [3, 3], stride=2, padding='SAME', scope='max_pool2')

    # Inception modules. Arguments after net:
    # stride, #1x1, #3x3 reduce, #3x3, #5x5 reduce, #5x5, pool proj (0 = absent).
    net = inception(net, 1, 64, 96, 128, 16, 32, 32, scope='inception3a')
    net = inception(net, 1, 64, 96, 128, 32, 64, 64, scope='inception3b')
    net = inception(net, 2, 0, 128, 256, 32, 64, 0, scope='inception3c')
    # 256 + 192 + 64 + 128 = 640 output channels.
    net = inception(net, 1, 256, 96, 192, 32, 64, 128, scope='inception4a')
    net = inception(net, 1, 224, 112, 224, 32, 64, 128, scope='inception4b')
    net = inception(net, 1, 192, 128, 256, 32, 64, 128, scope='inception4c')
    net = inception(net, 1, 160, 144, 288, 32, 64, 128, scope='inception4d')
    net = inception(net, 2, 0, 160, 256, 64, 128, 0, scope='inception4e')
    net = inception(net, 1, 384, 192, 384, 48, 128, 128, scope='inception5a')
    net = inception(net, 1, 384, 192, 384, 48, 128, 128, scope='inception5b')

    # Average pooling over the remaining 7x7 feature map.
    net = avg_pool2d(net, [7, 7], stride=1, scope='avg_pool')

    # Flatten to 1024 features and project to a 128-d embedding.
    net = tf.reshape(net, [-1, 1024])
    net = fully_connected(net, 128, scope='fc')

    # L2-normalize each embedding.
    net = l2norm(net)
    return net

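def inception(x, st, b1c, b2c1, b2c2, b3c1, b3c2, b4c, scope):
    """Inception module with stride st; b1c == 0 or b4c == 0 skips that 1x1 convolution."""
    with tf.name_scope(scope):
        # Branch 1: 1x1 convolution (absent when b1c == 0).
        if b1c > 0:
            branch1 = conv2d(x, b1c, [1, 1], stride=st)

        # Branch 2: 1x1 reduce, then 3x3 convolution.
        branch2 = conv2d(x, b2c1, 1, stride=1)
        branch2 = conv2d(branch2, b2c2, 3, stride=st)

        # Branch 3: 1x1 reduce, then 5x5 convolution.
        branch3 = conv2d(x, b3c1, 1, stride=1)
        branch3 = conv2d(branch3, b3c2, 5, stride=st)

        # Branch 4: 3x3 max pooling, optionally followed by a 1x1 projection.
        # Max pooling is used for every module here, including the rows
        # that the table marks as L2 pooling.
        branch4 = max_pool2d(x, [3, 3], stride=st, padding='SAME')
        if b4c > 0:
            branch4 = conv2d(branch4, b4c, 1, stride=1)

        # Concatenate the branches along the channel axis (NHWC layout).
        if b1c > 0:
            net = tf.concat([branch1, branch2, branch3, branch4], 3)
        else:
            net = tf.concat([branch2, branch3, branch4], 3)
    return net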

def l2norm(x):
    """Scale each row of x to unit L2 norm."""
    norm2 = tf.norm(x, ord=2, axis=1)
    norm2 = tf.reshape(norm2, [-1, 1])
    return x / norm2

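if __name__ == "__main__":
    time1 = time.time()
    import numpy as np

    # A random batch of 10 RGB images, 224x224 each.
    x = np.float32(np.random.random([10, 224, 224, 3]))
    net = NN2(x)
    time2 = time.time()
    # In TF 1.x graph mode this times graph construction only; no session is run.
    print("Using time: ", time2 - time1)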
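To see why the 7×7 average pooling leaves a 1×1 feature map for a 224×224 input, the spatial size can be traced through the stride-2 layers. This is a sketch under two assumptions that I believe match the `tf.contrib.layers` defaults: convolutions use SAME padding (output size is the ceiling of size / stride), and `avg_pool2d` uses VALID padding. Stride-1 layers are left out because they keep the size unchanged, and `same_out` is a hypothetical helper.

```python
import math


def same_out(size, stride):
    """Output size of a SAME-padded convolution or pooling layer."""
    return math.ceil(size / stride)


size = 224  # input height and width used in the test at the end of NN2.py
stride2_layers = ["conv1", "max_pool1", "max_pool2", "inception3c", "inception4e"]
for name in stride2_layers:
    size = same_out(size, 2)
    print(name, size)

# VALID 7x7 average pooling with stride 1 (assumed default padding).
final = (size - 7) // 1 + 1
print("avg_pool", final)
```

With a different input resolution the last feature map would no longer be 7×7, and both the pooling window and the reshape to `[-1, 1024]` would need to change.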