Autoencoder Networks (Part 2): Extracting Two-Dimensional Features from Images and Reconstructing the Images from Them

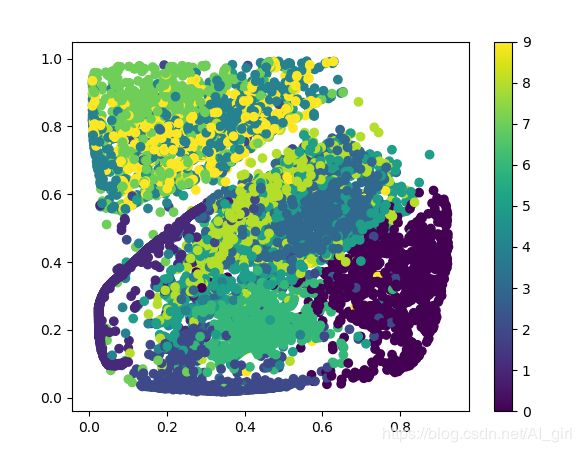

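This example uses an autoencoder with a linear decoder to compress the MNIST features further and map them onto a two-dimensional Cartesian coordinate system.

Four layers compress the 784-dimensional input step by step into feature vectors of 256, 64, 16, and 2 dimensions.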
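To get a feel for the size of this network, the sketch below counts the weights and biases implied by the layer widths 784, 256, 64, 16, and 2. It assumes fully connected layers and a decoder that mirrors the encoder; the helper name `count_params` is made up for this illustration.

```python
# Layer widths taken from the text: 784 -> 256 -> 64 -> 16 -> 2
encoder_sizes = [784, 256, 64, 16, 2]
# Assumption: the decoder mirrors the encoder (2 -> 16 -> 64 -> 256 -> 784)
decoder_sizes = encoder_sizes[::-1]

def count_params(sizes):
    """Weights plus biases for a stack of fully connected layers (hypothetical helper)."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))

encoder_params = count_params(encoder_sizes)
decoder_params = count_params(decoder_sizes)
compression_ratio = encoder_sizes[0] / encoder_sizes[-1]

print("encoder parameters:", encoder_params)
print("decoder parameters:", decoder_params)
print("compression ratio: %.0fx" % compression_ratio)
```

Nearly all of the parameters sit in the first encoder layer and the last decoder layer, the two layers that touch the 784-dimensional image.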

1. Import the libraries and define the learning parameters

# Note: this code uses the TensorFlow 1.x API (tf.placeholder, tf.Session).
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/data/", one_hot=True)

# Learning rate
learning_rate = 0.01

# Hidden layer sizes
n_hidden_1 = 256
n_hidden_2 = 64
n_hidden_3 = 16
n_hidden_4 = 2
n_input = 784

# Input placeholder; the reconstruction target is the input itself
x = tf.placeholder("float", [None, n_input])
y = x

# Weights: four encoder layers followed by four mirrored decoder layers
weights = {
    'encoder_h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
    'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
    'encoder_h3': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_3])),
    'encoder_h4': tf.Variable(tf.random_normal([n_hidden_3, n_hidden_4])),
    'decoder_h1': tf.Variable(tf.random_normal([n_hidden_4, n_hidden_3])),
    'decoder_h2': tf.Variable(tf.random_normal([n_hidden_3, n_hidden_2])),
    'decoder_h3': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_1])),
    'decoder_h4': tf.Variable(tf.random_normal([n_hidden_1, n_input])),
}

# Biases, one vector per layer, initialized to zero
biases = {
    'encoder_b1': tf.Variable(tf.zeros([n_hidden_1])),
    'encoder_b2': tf.Variable(tf.zeros([n_hidden_2])),
    'encoder_b3': tf.Variable(tf.zeros([n_hidden_3])),
    'encoder_b4': tf.Variable(tf.zeros([n_hidden_4])),
    'decoder_b1': tf.Variable(tf.zeros([n_hidden_3])),
    'decoder_b2': tf.Variable(tf.zeros([n_hidden_2])),
    'decoder_b3': tf.Variable(tf.zeros([n_hidden_1])),
    'decoder_b4': tf.Variable(tf.zeros([n_input])),
}

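2. Define the network model

The code below defines the encoder and decoder networks, using a linear decoder. The last encoder layer does not apply a sigmoid. By that point the two-dimensional data carries only the most essential features, so it is passed straight through to the decoder; the fewer transformations it undergoes, the better those features are preserved.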
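The effect of leaving the sigmoid off the last encoder layer can be seen in a small NumPy sketch. It is not the TensorFlow model from this article: the weights are random and untrained, the input is random noise rather than MNIST, and the names `sigmoid` and `encode` are made up for illustration. It only shows that a sigmoid output is confined to the interval from 0 to 1, while a linear bottleneck is free to take any value in the plane.

```python
import numpy as np

rng = np.random.default_rng(0)
sizes = [784, 256, 64, 16, 2]  # layer widths from the article

# Random, untrained parameters (illustration only)
weights = [rng.normal(size=(m, n)) for m, n in zip(sizes, sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]

def sigmoid(z):
    # Numerically stable form of 1 / (1 + exp(-z))
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def encode(x, linear_last=True):
    """Forward pass through the encoder stack (hypothetical helper)."""
    h = x
    last = len(weights) - 1
    for i, (w, b) in enumerate(zip(weights, biases)):
        z = h @ w + b
        # Optionally skip the sigmoid on the final (bottleneck) layer
        h = z if (i == last and linear_last) else sigmoid(z)
    return h

x = rng.random((5, 784))                      # 5 fake "images", pixels in [0, 1)
code_linear = encode(x, linear_last=True)     # unbounded 2-D codes
code_sigmoid = encode(x, linear_last=False)   # codes squashed into [0, 1]

print(code_linear.shape)                      # (5, 2)
print(code_sigmoid.min(), code_sigmoid.max())
```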

# Define the network model
def encoder(x):
    layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']), biases['encoder_b1']))
    layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']), biases['encoder_b2']))
    layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']), biases['encoder_b3']))
    # Last encoder layer is linear: no sigmoid, as described above
    layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']), biases['encoder_b4'])
    return layer_4

def decoder(x):
    layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']), biases['decoder_b1']))
    layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']), biases['decoder_b2']))
    layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']), biases['decoder_b3']))
    layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']), biases['decoder_b4']))
    return layer_4

# Build the model
encoder_op = encoder(x)
y_pred = decoder(encoder_op)

# Mean squared reconstruction error, minimized with Adam
cost = tf.reduce_mean(tf.pow(y - y_pred, 2))
optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)
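The cost is the mean squared error between the input and its reconstruction, averaged over every pixel of every image in the batch. A minimal NumPy sketch of the same arithmetic, using a made-up 2-by-2 batch rather than real MNIST data:

```python
import numpy as np

# Made-up values: 2 "images" with 2 "pixels" each
y = np.array([[0.0, 1.0],
              [1.0, 0.0]])
y_pred = np.array([[0.5, 0.5],
                   [1.0, 0.0]])

# NumPy counterpart of tf.reduce_mean(tf.pow(y - y_pred, 2))
cost = np.mean((y - y_pred) ** 2)
print(cost)  # 0.125
```

The squared errors are 0.25, 0.25, 0, and 0, and their mean over the four entries is 0.125.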

3. Start training

# Training
training_epochs = 20
batch_size = 256
display_step = 1

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    total_batch = int(mnist.train.num_examples / batch_size)

    # Training loop
    for epoch in range(training_epochs):
        # Go through the whole training set in mini-batches
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            _, c = sess.run([optimizer, cost], feed_dict={x: batch_xs})

        # Print progress (c is the cost of the last batch in the epoch)
        if epoch % display_step == 0:
            print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(c))

    print("Finished!!")

The output is as follows:

Epoch: 0001 cost= 0.090200812

Epoch: 0002 cost= 0.075068496

Epoch: 0003 cost= 0.067612931

Epoch: 0004 cost= 0.065960728

Epoch: 0005 cost= 0.063145392

Epoch: 0006 cost= 0.061458681

Epoch: 0007 cost= 0.060466096

Epoch: 0008 cost= 0.056512658

Epoch: 0009 cost= 0.050669905

Epoch: 0010 cost= 0.054181825

Epoch: 0011 cost= 0.052422021

Epoch: 0012 cost= 0.053191587

Epoch: 0013 cost= 0.052487034

Epoch: 0014 cost= 0.051621195

Epoch: 0015 cost= 0.050946057

Epoch: 0016 cost= 0.051289815

Epoch: 0017 cost= 0.052038588

Epoch: 0018 cost= 0.050835565

Epoch: 0019 cost= 0.050824545

Epoch: 0020 cost= 0.049340181

Finished!!
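Note that `c` in the training loop holds the cost of the last mini-batch of each epoch, not an average over the epoch. This is one likely reason the printed values do not fall monotonically (epoch 10, for example, is higher than epoch 9). The sketch below shows the batch arithmetic and the difference between the two ways of reporting. It assumes the default 55,000-image MNIST training split, and the per-batch costs are invented numbers, not measurements.

```python
num_examples = 55000   # assumption: size of mnist.train with the default split
batch_size = 256

total_batch = int(num_examples / batch_size)
leftover = num_examples - total_batch * batch_size
print(total_batch, leftover)  # 214 216

# Invented per-batch costs for one epoch (illustration only)
batch_costs = [0.060, 0.052, 0.055, 0.049, 0.058]

last_batch_cost = batch_costs[-1]                      # what the loop above prints
mean_epoch_cost = sum(batch_costs) / len(batch_costs)  # a smoother alternative
print("last batch: %.4f, epoch mean: %.4f" % (last_batch_cost, mean_epoch_cost))
```

Accumulating the batch costs inside the inner loop and printing their mean would give a less noisy progress report.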

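The autoencoder compresses the 784-dimensional data into two dimensions, so that two values stand in for all 784. This is what makes autoencoders so remarkable.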

4. Compare the input and the output

    # Visualize the results (still inside the `with tf.Session() as sess:` block)
    show_num = 10
    encode_decode = sess.run(y_pred, feed_dict={x: mnist.test.images[:show_num]})

    # Show the original samples (top row) and the autoencoder output (bottom row)
    f, a = plt.subplots(2, 10, figsize=(10, 2))
    for i in range(show_num):
        a[0][i].imshow(np.reshape(mnist.test.images[i], (28, 28)))
        a[1][i].imshow(np.reshape(encode_decode[i], (28, 28)))
    plt.show()

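Running the code above produces a figure with the original test digits in the top row and their reconstructions in the bottom row.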
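Each MNIST image is stored as a flat vector of 784 values, so it has to be reshaped to 28 by 28 before `imshow` can draw it. A small sketch of that mapping, using a synthetic vector in place of a real image:

```python
import numpy as np

flat = np.arange(784, dtype=np.float32)   # synthetic stand-in for one image
img = np.reshape(flat, (28, 28))          # row-major: 28 rows of 28 pixels

# Pixel (row, col) comes from flat index row * 28 + col
row, col = 3, 5
print(img.shape)                              # (28, 28)
print(img[row, col] == flat[row * 28 + col])  # True
```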

5. Display the two-dimensional features

Plot the two-dimensional features obtained by compressing the data:

    # Display the two-dimensional features (still inside the session block)
    aa = [np.argmax(l) for l in mnist.test.labels]  # convert one-hot labels to digits
    encoder_result = sess.run(encoder_op, feed_dict={x: mnist.test.images})
    plt.scatter(encoder_result[:, 0], encoder_result[:, 1], c=aa)
    plt.colorbar()
    plt.show()

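Running the code above produces a scatter plot of the two-dimensional codes of the test images, colored by digit label.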
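The labels were loaded with `one_hot = True`, so each one is a 10-element vector; `np.argmax` turns it back into a digit that `plt.scatter` can use as a color value. A minimal sketch with hand-written labels (not taken from the dataset):

```python
import numpy as np

# Hand-written one-hot labels for the digits 3, 0, and 9
labels = np.array([
    [0, 0, 0, 1, 0, 0, 0, 0, 0, 0],
    [1, 0, 0, 0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 1],
])

# Same conversion as the list comprehension in the article
aa = [np.argmax(l) for l in labels]
# Vectorized equivalent that avoids the Python loop
aa_vec = np.argmax(labels, axis=1)

print(aa_vec)  # [3 0 9]
```

On the full test set the vectorized form gives the same result as the list comprehension in a single call.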