Scraping Zhihu Explore Hot Topics with pyquery

The library used here is pyquery. As usual, we start by analyzing the page structure of the hot topics on Zhihu's Explore page: https://www.zhihu.com/explore

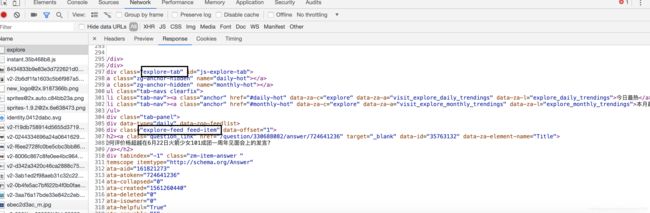

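The hot topics live inside the element with class explore-tab. Each topic starts with an element whose class is explore-feed feed-item, and the question title sits inside an h2 tag.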

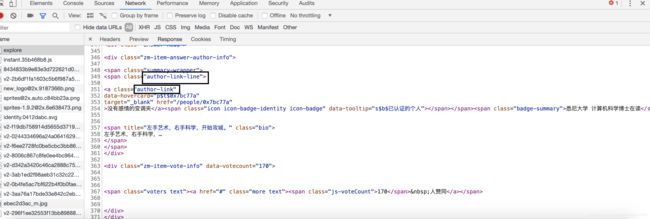

The author element has the class author-link-line.

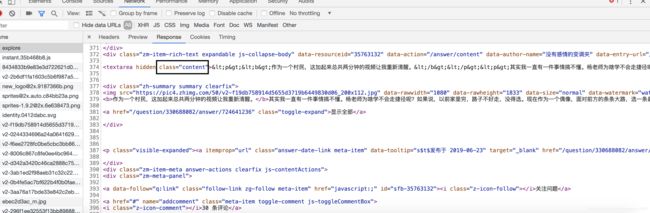

The answer body has the class content.

With the analysis done, let's write the code.

    import requests
    from pyquery import PyQuery as pq

    url = 'https://www.zhihu.com/explore'
    # Browser-like User-Agent header sent with the request
    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36'
    }

    # Fetch the page and parse it with pyquery
    html = requests.get(url, headers=headers).text
    doc = pq(html)

    # Each hot topic is a .feed-item inside the .explore-tab container
    items = doc('.explore-tab .feed-item').items()
    for item in items:
        question = item.find('h2').text()
        author = item.find('.author-link-line').text()
        # Re-parse the inner HTML of .content and keep only its plain text
        answer = pq(item.find('.content').html()).text()
        print('\nQuestion', question)
        print('\nAuthor', author)
        print('\nAnswer', answer)
        # Append the record to explore.txt, followed by a divider line
        with open('explore.txt', 'a', encoding='utf-8') as file:
            file.write('\n'.join([question, author, answer]))
            file.write('\n' + '=' * 50 + '\n')

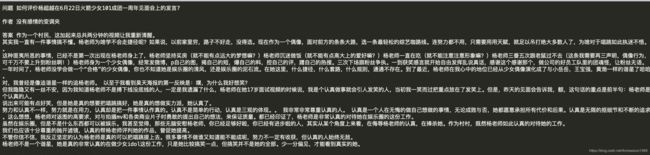

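The script first sends the request, then parses the returned page, and finally saves the extracted question, author, and answer to a local file. The result looks like this: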

The locally saved file, explore.txt
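Because every record is written as question, author, and answer joined by newlines and followed by a line of 50 `=` characters, the saved file can be split back into records later. The sketch below is one possible way to do that, under two assumptions that are not guaranteed by the scraper: the question and the author each fit on a single line, and no answer contains the divider line itself. The names `read_records` and `explore_demo.txt` are made up for this example, and the demo writes its own temporary file instead of relying on real scraped data.

```python
import os
import tempfile

SEPARATOR = '=' * 50  # the same divider line the scraper writes


def read_records(path):
    """Split a saved file back into (question, author, answer) tuples."""
    with open(path, encoding='utf-8') as f:
        text = f.read()
    records = []
    for chunk in text.split('\n' + SEPARATOR + '\n'):
        if not chunk.strip():
            continue  # skip the empty piece after the final divider
        # Assumed layout: line 1 is the question, line 2 the author,
        # and everything after that is the answer.
        parts = chunk.split('\n', 2)
        parts += [''] * (3 - len(parts))  # pad records with missing fields
        records.append(tuple(parts))
    return records


# Demo: write two made-up records in the same format, then read them back.
demo_path = os.path.join(tempfile.mkdtemp(), 'explore_demo.txt')
with open(demo_path, 'a', encoding='utf-8') as f:
    for q, a, ans in [('Q1', 'Alice', 'line one\nline two'),
                      ('Q2', 'Bob', 'short')]:
        f.write('\n'.join([q, a, ans]))
        f.write('\n' + SEPARATOR + '\n')

records = read_records(demo_path)
for question, author, answer in records:
    print(question, '|', author, '|', len(answer), 'characters')
```

If those assumptions do not hold for the data, a structured format such as one JSON object per line would be a more robust choice than plain text with dividers.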