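Sqoop is a tool for transferring data between Hadoop and relational databases. It can import data from a relational database (for example MySQL, Oracle or Postgres) into Hadoop's HDFS, and it can also export data from HDFS into a relational database.

It also provides connectors for some NoSQL databases. Like other ETL tools, Sqoop uses a metadata model to determine data types and to ensure type-safe data handling as data moves from the source into Hadoop. Sqoop is designed for bulk transfer of large data sets: it can split a data set and create Hadoop tasks to process each chunk.

I. Installation and Configuration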

1. Download and extract

Installation is straightforward:

[hadoop@hdp01 u02]$ wget http://mirrors.hust.edu.cn/apache/sqoop/1.99.7/sqoop-1.99.7-bin-hadoop200.tar.gz

[hadoop@hdp01 u01]$ tar -xzf /u02/sqoop-1.99.7-bin-hadoop200.tar.gz

[hadoop@hdp01 u01]$ mv sqoop-1.99.7-bin-hadoop200 sqoop

Then set the environment variables (reload the profile with source ~/.bash_profile or log in again afterwards):

[hadoop@hdp01 ~]$ vi .bash_profile

export SQOOP_HOME=/u01/sqoop

export SQOOP_CONF_DIR=$SQOOP_HOME/conf

export SQOOP_CLASSPATH=$SQOOP_CONF_DIR

export CATALINA_HOME=/u01/sqoop/server

export PATH=$PATH:$SQOOP_HOME/bin

2. Sqoop configuration

Edit the sqoop.properties file and change the following settings:

2.1 Log file and data file paths

Log file paths (replace @LOGDIR@ with an absolute path):

org.apache.sqoop.log4j.appender.file.File=/u01/sqoop/logs/sqoop.log

org.apache.sqoop.log4j.appender.audit.File=/u01/sqoop/logs/audit.log

org.apache.sqoop.repository.sysprop.derby.stream.error.file=/u01/sqoop/logs/derbyrepo.log

JDBC repository path (replace @BASEDIR@ with an absolute path):

# JDBC repository provider configuration

org.apache.sqoop.repository.jdbc.handler=org.apache.sqoop.repository.derby.DerbyRepositoryHandler

org.apache.sqoop.repository.jdbc.transaction.isolation=READ_COMMITTED

org.apache.sqoop.repository.jdbc.maximum.connections=10

org.apache.sqoop.repository.jdbc.url=jdbc:derby:/u01/sqoop/data/repository/db;create=true

org.apache.sqoop.repository.jdbc.driver=org.apache.derby.jdbc.EmbeddedDriver

org.apache.sqoop.repository.jdbc.user=sa

org.apache.sqoop.repository.jdbc.password=
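The two placeholder substitutions above can also be scripted. This is a minimal sketch, assuming the stock sqoop.properties still contains the literal @LOGDIR@ and @BASEDIR@ tokens and that @BASEDIR@ maps to /u01/sqoop/data (inferred from the repository URL above); the function name fill_sqoop_placeholders is hypothetical.

```shell
#!/usr/bin/env bash
# Replace the @LOGDIR@ and @BASEDIR@ tokens in sqoop.properties with absolute
# paths. Defaults follow this article's layout (an assumption for other setups).
# The original file is kept as <file>.bak.
# Note: the paths must not contain '|' or '&' (special in the sed expressions).
fill_sqoop_placeholders() {
  local props="$1"
  local logdir="${2:-/u01/sqoop/logs}"
  local basedir="${3:-/u01/sqoop/data}"

  if [ ! -f "$props" ]; then
    echo "not found: $props" >&2
    return 1
  fi

  cp "$props" "$props.bak" || return 1
  # '|' is the sed delimiter because the replacement paths contain '/'
  sed -e "s|@LOGDIR@|${logdir}|g" -e "s|@BASEDIR@|${basedir}|g" \
    "$props.bak" > "$props" || return 1

  # Fail if any @TOKEN@ placeholder is still present
  if grep -nE '@[A-Z_]+@' "$props" >&2; then
    echo "unreplaced placeholders remain in $props" >&2
    return 1
  fi
}
```

For example, fill_sqoop_placeholders "$SQOOP_CONF_DIR/sqoop.properties" rewrites the file in place using the default paths and leaves sqoop.properties.bak next to it.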

Absolute paths are recommended for all of the paths above.

2.2 Authentication

SIMPLE authentication is used here:

org.apache.sqoop.security.authentication.type=SIMPLE

org.apache.sqoop.security.authentication.handler=org.apache.sqoop.security.authentication.SimpleAuthenticationHandler

org.apache.sqoop.security.authentication.anonymous=true

2.3 Specify the Hadoop configuration directory

# Hadoop configuration directory

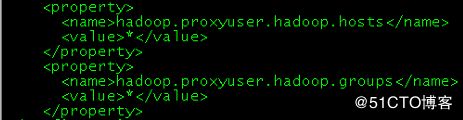

org.apache.sqoop.submission.engine.mapreduce.configuration.directory=/u01/hadoop/etc/hadoop另外,因为sqoop访问Hadoop的MapReduce使用的是代理的方式,必须在Hadoop中配置所接受的proxy用户和组。 如下图所示:
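The screenshot is not reproduced as text. As a sketch, assuming the standard Hadoop impersonation properties hadoop.proxyuser.<user>.hosts and hadoop.proxyuser.<user>.groups, and assuming the Sqoop server runs as the hadoop user (as the prompts throughout this article suggest), the hypothetical helper below prints the entries to add to core-site.xml:

```shell
#!/usr/bin/env bash
# Print the core-site.xml properties that let USER act as a proxy
# (impersonate other users) from HOSTS and for members of GROUPS.
# Paste the output inside <configuration> in core-site.xml.
# Usage: proxyuser_properties USER [HOSTS] [GROUPS]
proxyuser_properties() {
  local user="$1"
  local hosts="${2:-*}"    # '*' = any host; consider narrowing this
  local groups="${3:-*}"   # '*' = any group
  if [ -z "$user" ]; then
    echo "usage: proxyuser_properties USER [HOSTS] [GROUPS]" >&2
    return 1
  fi
  cat <<EOF
<property>
  <name>hadoop.proxyuser.${user}.hosts</name>
  <value>${hosts}</value>
</property>
<property>
  <name>hadoop.proxyuser.${user}.groups</name>
  <value>${groups}</value>
</property>
EOF
}
```

For example, proxyuser_properties hadoop prints both properties with the value *. After editing core-site.xml, hdfs dfsadmin -refreshSuperUserGroupsConfiguration and yarn rmadmin -refreshSuperUserGroupsConfiguration reload the proxy-user settings; restarting the daemons works as well.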

2.4 Configure the Hadoop library path

Edit the catalina.properties file under $CATALINA_HOME and add the following:

common.loader=${catalina.base}/lib,${catalina.base}/lib/*.jar,${catalina.home}/lib,${catalina.home}/lib/*.jar,${catalina.home}/../lib/*.jar,/u01/hadoop/share/hadoop/common/*.jar,/u01/hadoop/share/hadoop/common/lib/*.jar,/u01/hadoop/share/hadoop/hdfs/*.jar,/u01/hadoop/share/hadoop/hdfs/lib/*.jar,/u01/hadoop/share/hadoop/mapreduce/*.jar,/u01/hadoop/share/hadoop/mapreduce/lib/*.jar,/u01/hadoop/share/hadoop/tools/*.jar,/u01/hadoop/share/hadoop/tools/lib/*.jar,/u01/hadoop/share/hadoop/yarn/*.jar,/u01/hadoop/share/hadoop/yarn/lib/*.jar,/u01/hadoop/share/hadoop/httpfs/tomcat/lib/*.jar

In addition, edit the sqoop.sh file and add the following:

export JAVA_HOME=/usr/java/jdk1.8.0_152

export HADOOP_COMMON_HOME=/u01/hadoop/share/hadoop/common

export HADOOP_HDFS_HOME=/u01/hadoop/share/hadoop/hdfs

export HADOOP_MAPRED_HOME=/u01/hadoop/share/hadoop/mapreduce

export HADOOP_YARN_HOME=/u01/hadoop/share/hadoop/yarn

2.5 Initialize the metadata repository

[hadoop@hdp01 ~]$ sqoop2-tool upgrade

[hadoop@hdp01 ~]$ sqoop2-tool verify

Setting conf dir: /u01/sqoop/bin/../conf

Sqoop home directory: /u01/sqoop

Sqoop tool executor:

Version: 1.99.7

Revision: 435d5e61b922a32d7bce567fe5fb1a9c0d9b1bbb

Compiled on Tue Jul 19 16:08:27 PDT 2016 by abefine

Running tool: class org.apache.sqoop.tools.tool.VerifyTool

0 [main] INFO org.apache.sqoop.core.SqoopServer - Initializing Sqoop server.

13 [main] INFO org.apache.sqoop.core.PropertiesConfigurationProvider - Starting config file poller thread

Verification was successful.

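Tool class org.apache.sqoop.tools.tool.VerifyTool has finished correctly.

2.6 Install the JDBC drivers

By default, Sqoop does not ship with JDBC drivers for MySQL, PostgreSQL and other databases. Download the drivers separately and copy them to the $CATALINA_HOME/lib directory.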
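A small sketch of the copy step, assuming the driver jar has already been downloaded; the helper name is hypothetical, and it only checks the file name and existence, not that the jar is really a JDBC driver.

```shell
#!/usr/bin/env bash
# Copy JDBC driver jars into the directory the Sqoop server loads jars from
# (this article uses $CATALINA_HOME/lib).
# Usage: install_jdbc_drivers DEST_DIR JAR...
install_jdbc_drivers() {
  local dest="$1"
  shift
  if [ ! -d "$dest" ]; then
    echo "no such directory: $dest" >&2
    return 1
  fi
  if [ "$#" -eq 0 ]; then
    echo "no driver jar given" >&2
    return 1
  fi
  local jar
  for jar in "$@"; do
    case "$jar" in
      *.jar) ;;
      *) echo "not a jar file: $jar" >&2; return 1 ;;
    esac
    if [ ! -f "$jar" ]; then
      echo "missing: $jar" >&2
      return 1
    fi
    cp "$jar" "$dest/" || return 1
    echo "installed $(basename "$jar") -> $dest"
  done
}
```

Usage: install_jdbc_drivers "$CATALINA_HOME/lib" ~/mysql-connector-java-x.y.z.jar (substitute the jar you actually downloaded), then restart the Sqoop server so the new jar is picked up. The Sqoop 1.99.7 documentation also describes a SQOOP_SERVER_EXTRA_LIB environment variable that points at a directory of extra jars; check which mechanism applies to your version.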

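2.7 Other configuration

2.7.1 Edit yarn-site.xml

Add the following setting to this file:

yarn.log-aggregation-enable=true

If this parameter is not enabled, the error "Aggregation is not enabled" occurs during the data migration and the migration fails, as shown in the figure: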
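yarn-site.xml is an XML file, so the key=value line above corresponds to a property element named yarn.log-aggregation-enable with the value true. The hypothetical check below is a rough sketch that assumes the value element directly follows the name element; it is a text match, not an XML parser.

```shell
#!/usr/bin/env bash
# Check whether yarn-site.xml enables log aggregation, i.e. contains:
#   <property>
#     <name>yarn.log-aggregation-enable</name>
#     <value>true</value>
#   </property>
# Assumes <value> immediately follows <name>; XML comments are not handled.
# Returns 0 if enabled, 1 if not, 2 if the file is missing.
log_aggregation_enabled() {
  local xml="$1"
  if [ ! -f "$xml" ]; then
    echo "not found: $xml" >&2
    return 2
  fi
  # Strip all whitespace so a property spread over several lines still matches
  tr -d ' \t\r\n' < "$xml" \
    | grep -q '<name>yarn\.log-aggregation-enable</name><value>true</value>'
}
```

For example, log_aggregation_enabled /u01/hadoop/etc/hadoop/yarn-site.xml || echo "log aggregation is not enabled". After changing yarn-site.xml, the YARN daemons typically need a restart for the setting to take effect.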

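2.7.2 Start the JobHistoryServer service

If the JobHistoryServer is not running, starting a job fails with the following error:

Call From xxxx to 0.0.0.0:10020 failed on connection exception: java.net.ConnectException: Connection refused

It is recommended to start this service on all NameNode nodes of the Hadoop cluster as well.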
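Port 10020 in the error is the default JobHistoryServer RPC port (mapreduce.jobhistory.address defaults to 0.0.0.0:10020). The start command differs between Hadoop 2.x and 3.x; the hypothetical helpers below print the appropriate one, assuming hadoop version reports a first line of the form "Hadoop 2.7.3".

```shell
#!/usr/bin/env bash
# Extract the Hadoop major version from `hadoop version` output on stdin,
# whose first line looks like "Hadoop 2.7.3".
hadoop_major_from() {
  awk 'NR == 1 && $1 == "Hadoop" { split($2, v, "."); print v[1] }'
}

# Print the command that starts the MapReduce JobHistoryServer
# for the given Hadoop major version.
historyserver_start_cmd() {
  case "$1" in
    2) echo "mr-jobhistory-daemon.sh start historyserver" ;;
    3) echo "mapred --daemon start historyserver" ;;
    *) echo "unsupported Hadoop major version: '$1'" >&2; return 1 ;;
  esac
}
```

For example, historyserver_start_cmd "$(hadoop version | hadoop_major_from)" prints the command to run as the hadoop user; afterwards JobHistoryServer should appear in the jps output, as in the listing in 2.8.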

2.8 Starting and stopping Sqoop

[hadoop@hdp01 ~]$ sqoop2-server start

Setting conf dir: /u01/sqoop/bin/../conf

Sqoop home directory: /u01/sqoop

Starting the Sqoop2 server...

0 [main] INFO org.apache.sqoop.core.SqoopServer - Initializing Sqoop server.

14 [main] INFO org.apache.sqoop.core.PropertiesConfigurationProvider - Starting config file poller thread

Sqoop2 server started.

[hadoop@hdp01 ~]$ jps

18737 HMaster

5075 JobHistoryServer

8003 Jps

3317 Bootstrap

3686 QuorumPeerMain

4566 SecondaryNameNode

29559 RunJar

4747 ResourceManager

10012 RunJar

7852 SqoopJettyServer

4350 NameNode

[hadoop@hdp01 ~]$ sqoop2-server stop

Setting conf dir: /u01/sqoop/bin/../conf

Sqoop home directory: /u01/sqoop

Stopping the Sqoop2 server...

Sqoop2 server stopped.

This completes the installation and configuration of Sqoop.
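To confirm after sqoop2-server start that the Sqoop server (SqoopJettyServer) and the JobHistoryServer are running, you can check the jps listing as shown in 2.8. The hypothetical jps_has helper below automates that check by matching main-class names exactly; it is a sketch and only inspects the text it is given.

```shell
#!/usr/bin/env bash
# Succeed if a jps listing (lines of "<pid> <MainClass>") contains every
# given main-class name; report the first missing one on stderr.
# Usage: jps_has "$(jps)" NAME...
jps_has() {
  local listing="$1"
  shift
  local name
  for name in "$@"; do
    # Exact match on the second column, so "NameNode" does not match
    # "SecondaryNameNode"
    if ! printf '%s\n' "$listing" \
        | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "not running: $name" >&2
      return 1
    fi
  done
}
```

For example, jps_has "$(jps)" SqoopJettyServer JobHistoryServer returns success only when both processes are listed.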

III. Using Sqoop

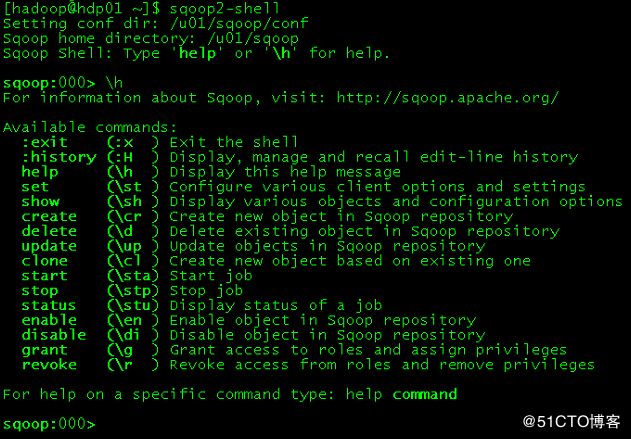

通过sqoop2-shell或者sqoop.sh client命令就可以登录命令符界面:

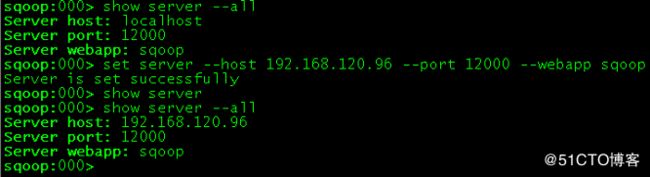

3.1 设置服务器

默认情况下,server的值是localhost(这里的sqoop服务器和客户端在一台机器上),如果通过sqoop客户端访问服务器,则需要设置server参数。

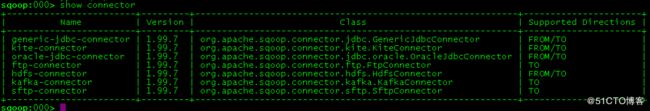
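The same commands can be run non-interactively: the Sqoop2 shell also accepts a script file (for example sqoop2-shell /path/to/script.sqoop) and executes its commands in batch mode. The sketch below writes such a script; the host hdp01 and port 12000 (the Sqoop2 server's default port) are assumptions for this cluster, and the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Write a Sqoop2 shell batch script that points the client at a Sqoop server
# and lists its version and available connectors.
# Usage: write_server_script OUT_FILE HOST [PORT]
write_server_script() {
  local out="$1" host="$2" port="${3:-12000}"   # 12000: default server port
  if [ -z "$out" ] || [ -z "$host" ]; then
    echo "usage: write_server_script OUT_FILE HOST [PORT]" >&2
    return 1
  fi
  cat > "$out" <<EOF
set server --host ${host} --port ${port} --webapp sqoop
show version --all
show connector
EOF
}
```

For example, write_server_script /tmp/check.sqoop hdp01 && sqoop2-shell /tmp/check.sqoop connects to the server and prints the same connector list as section 3.2.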

3.2 Show connectors

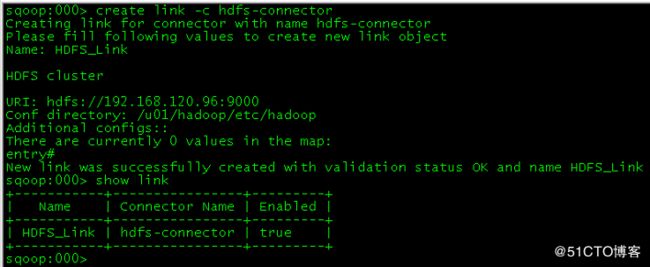

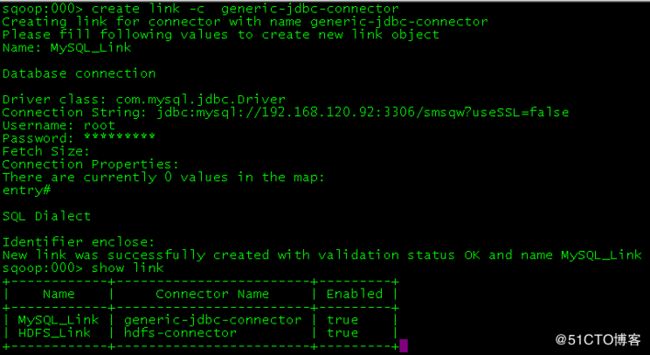

3.3 创建link

这里创建2个link实例:MySQL和HDFS:

注意:

创建MySQL链接中,Identifier enclose: 指定SQL中标识符的定界符,也就是说,有的SQL标示符是一个引号:select * from "table_name",这种定界符在MySQL中是会报错的。这个属性默认值就是双引号,所以不能使用回车,必须将之覆盖,可使用空格覆盖了这个值。

3.4 创建Job

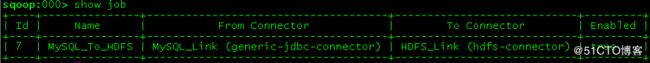

这里创建一个从MySQL复制表到HDFS上的job,如下:

sqoop:000> create job -f MySQL_Link -t HDFS_Link

Creating job for links with from name MySQL_Link and to name HDFS_Link

Please fill following values to create new job object

Name: MySQL_To_HDFS

Database source

Schema name: smsqw

Table name: tbMessage

SQL statement:

Column names:

There are currently 0 values in the list:

element#

Partition column:

Partition column nullable:

Boundary query:

Incremental read

Check column:

Last value:

Target configuration

Override null value:

Null value:

File format:

0 : TEXT_FILE

1 : SEQUENCE_FILE

2 : PARQUET_FILE

Choose: 0

Compression codec:

0 : NONE

1 : DEFAULT

2 : DEFLATE

3 : GZIP

4 : BZIP2

5 : LZO

6 : LZ4

7 : SNAPPY

8 : CUSTOM

Choose: 1

Custom codec:

Output directory: /user/DataSource

Append mode:

Throttling resources

Extractors:

Loaders:

Classpath configuration

Extra mapper jars:

There are currently 0 values in the list:

element#

New job was successfully created with validation status OK and name MySQL_To_HDFS

sqoop:000> start job -n MySQL_To_HDFS -s
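The job can also be started from a batch script. This sketch, with a hypothetical helper name, writes the start command used above together with set option --name verbose --value true, which makes the shell print more detail if the job fails. It assumes the server is on localhost (the default); otherwise a set server line as in 3.1 would be needed first.

```shell
#!/usr/bin/env bash
# Write a Sqoop2 shell batch script that starts a job synchronously (-s)
# with verbose output enabled.
# Usage: write_job_script OUT_FILE JOB_NAME
write_job_script() {
  local out="$1" job="$2"
  if [ -z "$out" ] || [ -z "$job" ]; then
    echo "usage: write_job_script OUT_FILE JOB_NAME" >&2
    return 1
  fi
  cat > "$out" <<EOF
set option --name verbose --value true
start job -n ${job} -s
EOF
}
```

For example, write_job_script /tmp/job.sqoop MySQL_To_HDFS && sqoop2-shell /tmp/job.sqoop; once the job finishes, hdfs dfs -ls /user/DataSource should list the imported files.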