Producer:

The broker can send the producer a confirmation after it has received a message and successfully persisted it. The producer needs to register a confirmation listener to process these confirmations. In confirmation mode, each message published on a channel is assigned an incrementing sequence number (nextPublishSeqNo). A confirmation carries either the largest confirmed sequence number (multiple=true), which confirms every message up to and including it, or a single confirmed sequence number (multiple=false). Each unconfirmed message must be kept somewhere by the producer and removed only after the corresponding confirmation has arrived.
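The bookkeeping described above can be sketched independently of the RabbitMQ client. This is a minimal illustration, not the author's implementation: `UnconfirmedTracker`, `track`, `confirm` and `pendingCount` are hypothetical names, and the sketch assumes sequence numbers are handed out in increasing order, as nextPublishSeqNo does.

```kotlin
import java.util.concurrent.ConcurrentSkipListMap

// Hypothetical helper (not part of the RabbitMQ client): keeps unconfirmed
// payloads sorted by publish sequence number.
class UnconfirmedTracker<T : Any> {
    private val pending = ConcurrentSkipListMap<Long, T>()

    // Call before publishing, with the value read from channel.nextPublishSeqNo.
    fun track(seqNo: Long, data: T) {
        pending[seqNo] = data
    }

    // Removes and returns the payloads released by one confirmation.
    fun confirm(seqNo: Long, multiple: Boolean): List<T> {
        if (!multiple) return listOfNotNull(pending.remove(seqNo))
        val released = mutableListOf<T>()
        // multiple=true covers every sequence number up to and including seqNo
        for (key in pending.headMap(seqNo, true).keys) {
            pending.remove(key)?.let { released += it }
        }
        return released
    }

    fun pendingCount(): Int = pending.size
}
```

A confirm listener could delegate its handleAck call to `confirm` and pass each returned payload to whatever callback marks it as delivered.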

//enable publisher confirmation

channel.confirmSelect()

//register confirmation listener

channel.addConfirmListener(object: ConfirmListener {

override fun handleAck(deliveryTag: Long, multiple: Boolean) {

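if (multiple) {

// multiple=true confirms every sequence number up to and including deliveryTag

val confirmed = unackDataMap.headMap(deliveryTag + 1)

// copy first so that removing entries does not disturb the iteration

confirmed.toMap().forEach { (k, v) ->

unackDataMap.remove(k)

callback(v)

}

}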

else {

val dataToRemove = unackDataMap.remove(deliveryTag)

if (dataToRemove != null) {

callback(dataToRemove)

}

}

}

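override fun handleNack(deliveryTag: Long, multiple: Boolean) {

// the broker could not take responsibility for these messages; the cached data stays in unackDataMap and can be re-published or reported here

}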

})

//getting next sequence number

val deliverTag = channel.nextPublishSeqNo

//cache unacknowledged data

unackDataMap[deliverTag] = data

channel.basicPublish(exchange, routingKey, properties, body)

Consumer:

There are several approaches to ensure reliable delivery:

- Nack + requeue message. The most naive way is to set the consumer's auto acknowledgement to false and send an ack or nack after the message has been processed, depending on whether processing succeeded or failed. A nack with the "requeue" option set to true causes the message to be put back into the queue for reprocessing. However, a serious drawback of this approach is that the message is put back at the head of the queue and reprocessed immediately. This tends to block normal message processing under a QoS setting (a flow-control parameter that limits the number of prefetched/unacked messages per consumer; the broker pushes a message to a consumer only while that consumer's unacked count is below the limit). Often we also want to delay the retry when the error is caused by network instability or an unavailable downstream service. Delayed retry is not supported with nack + requeue.
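To make the head-of-queue drawback concrete, here is a toy model with no broker involved. It is only a sketch under simplifying assumptions: a single consumer, a prefetch of one, and a requeued message that always returns to the front of the queue. The function name `deliveryOrder` and the message names are made up for illustration.

```kotlin
import java.util.ArrayDeque

// Toy model: "bad" fails the given number of times before it succeeds,
// and every failure requeues it at the head of the queue.
fun deliveryOrder(failuresBeforeSuccess: Int): List<String> {
    val queue = ArrayDeque(listOf("bad", "good"))
    val deliveries = mutableListOf<String>()
    var failures = 0
    while (queue.isNotEmpty()) {
        val message = queue.pollFirst()
        deliveries += message
        if (message == "bad" && failures < failuresBeforeSuccess) {
            failures++
            queue.addFirst(message) // nack with requeue=true in this model
        }
    }
    return deliveries
}
```

With `failuresBeforeSuccess = 3`, the model delivers "bad" four times in a row before "good" is delivered once, which is the blocking effect described above.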

// simple retrying with nack + requeue

channel.basicConsume(receiveQueueName, autoAck = false, object: DefaultConsumer(channel) {

try {

channel.basicAck(deliveryTag=tag, multiple=false)

}

catch (e: Exception) {

if (isRetriable(e)) {

channel.basicReject(deliveryTag=tag, requeue=true)

}

else {

//persist error payload

channel.basicReject(deliveryTag=tag, requeue=false)

}

}

})-

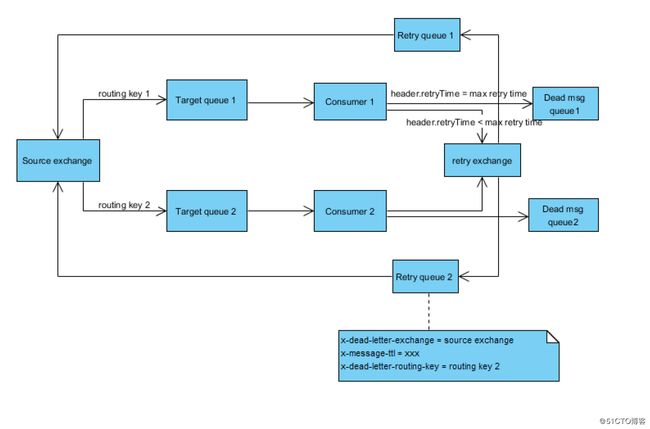

Use a separate deadletter exchange/queue for retrying. If deadletter exchange is specified for a queue, message will be put into it for the following cases:

  - the message is rejected (basicReject or basicNack) with requeue = false

  - the message expires because its TTL has elapsed

  - the queue's size limit in bytes is exceeded

  - the queue's message count limit is exceeded

For the retry case, instead of calling basicReject with "requeue" = true, the consumer uses "requeue" = false so that the message is not put back into the original queue. It then sends the message to a separate retry exchange, which in turn routes it to a retry queue. Three important attributes must be set on the retry queue: x-dead-letter-exchange, x-dead-letter-routing-key and x-message-ttl. The retry queue has no consumer, so a message stays in the queue for up to x-message-ttl milliseconds and then expires. As explained above, the expired message is routed to the dead-letter exchange specified by x-dead-letter-exchange, which is exactly the original exchange the producer sends data to. x-dead-letter-routing-key is set to the original routing key so that the message finally reaches the correct queue. A special message header "retryTimes" controls the number of retries: each time a message is put into the retry queue, its retryTimes is incremented by 1.
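The retryTimes bookkeeping can be isolated as a small pure function. The following is a sketch under assumptions, not the code used in the original project: `nextRetryHeaders` and the constant `RETRY_TIMES` are hypothetical names, and the header value is read as a `Number` on the assumption that it may arrive as either an Int or a Long.

```kotlin
const val RETRY_TIMES = "retryTimes" // header name taken from the text above

// Returns the headers for the next attempt, or null when the retry budget
// is used up and the message should not be sent to the retry exchange again.
fun nextRetryHeaders(headers: Map<String, Any?>?, maxRetry: Int): Map<String, Any?>? {
    // A missing header means the message has never been retried.
    val previous = (headers?.get(RETRY_TIMES) as? Number)?.toInt() ?: 0
    if (previous >= maxRetry) return null
    // Copy the existing headers and bump the counter by one.
    return (headers ?: emptyMap()) + (RETRY_TIMES to (previous + 1))
}
```

A consumer would call this before publishing to the retry exchange and fall back to logging or persisting the payload when the result is null.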

//retry configuration

fun createRetryQueue(

channel: Channel,

schemaName: String,

deadLetterRoutingKey: String,

retryExchangeName: String,

retryQueueName: String,

retryBindingName: String

) {

val queueAttributes = mutableMapOf("x-dead-letter-exchange" to EVENT_GLOBAL_EXCHANGE,

"x-message-ttl" to 20000, // milliseconds: a message waits 20 seconds in the retry queue before it is dead-lettered

"x-dead-letter-routing-key" to deadLetterRoutingKey

)

channel.exchangeDeclare(retryExchangeName, "topic")

channel.queueDeclare(retryQueueName, true, false, false, queueAttributes)

channel.queueBind(retryQueueName, retryExchangeName, retryBindingName)

}

val receiveQueueName = "tx.$schemaName.compliance.submit.queue"

val receiveBindingName = "*.compliance.submit.${schemaName.toUpperCase()}.#"

createRetryQueue(channel, schemaName, "global.compliance.submit.${schemaName.toUpperCase()}.queue", COMPLIANCE_INBOUND_RETRY_EXCHANGE,

"tx.$schemaName.complianceinbound.retry.queue", "*.tx.$schemaName.complianceinbound.retry.#")

// retry code

// headers may be absent on a first delivery, so treat a missing map or missing entry as zero retries

val prevRetryTimes: Int = (headers?.get(RabbitMQSourceContext.ATTRIB_RETRYTIMES) as? Int) ?: 0

if (prevRetryTimes < rabbitMQSourceConfig.maxRetry) {

propMap[RabbitMQSourceContext.ATTRIB_RETRYTIMES] = prevRetryTimes + 1

val rabbitProps = BasicPropertyFactory.create(MessageProperties.PERSISTENT_TEXT_PLAIN, propMap)

channel.basicNack(deliveryTag, false, false)

logger.debug(

"Sending retry message, exchange={}, routingKey={}, properties={}, deliveryTag={}",

retryExchangeName, retryRoutingKey, propMap, deliveryTag

)

channel.basicPublish(retryExchangeName, retryRoutingKey, rabbitProps, payload)

} else {

logger.warn("Max retry time exceeded for queue={}, deliveryTag={}", rabbitMQSourceConfig.sourceQueueName, deliveryTag)

}

The major advantage of this solution is that it supports delayed retry, and messages waiting for retry do not block normal message processing.

The main drawback is that it cannot really handle scenarios such as exponential backoff, because the TTL is a fixed value on the queue.