Crawling Toutiao (今日头条) with Python 3 + Scrapy + PhantomJS + Selenium

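When building crawlers, we inevitably run into sites whose page content is generated by dynamic techniques such as JavaScript and Ajax. Toutiao is a good example.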

This article describes a technique for crawling dynamic pages with Python 3 and a Scrapy + PhantomJS + Selenium stack.

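The two projects implemented for this article have been uploaded to GitHub (stars welcome): 1. Crawling the Toutiao news-list URLs: 2. Crawling the Toutiao news content: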

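For static-page crawling and for setting up the crawler environment on Windows, see the previous posts on this blog; the required software downloads are provided in the previous post.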

This article covers crawling the news content with PhantomJS + Selenium. Crawling the news-list URLs works on the same principle, so it is not repeated here.

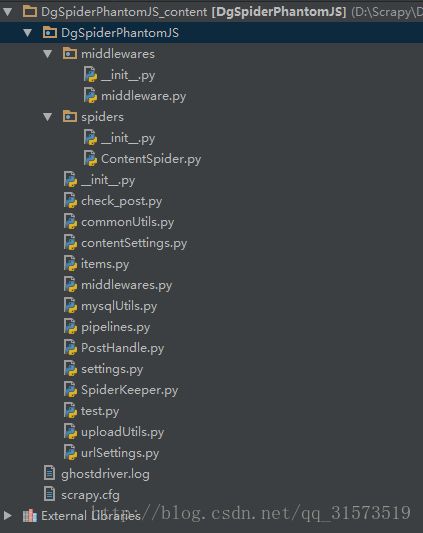

Project structure

How the project works

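The code is written in Python 3 and uses Scrapy as the base crawling framework. Because the target pages are dynamic, the HTML returned by the server is not the finished page; the content is filled in afterwards through Ajax and similar techniques. PhantomJS + Selenium is therefore used to emulate a headless browser that simulates user actions and captures the rendered page source.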
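To make the problem concrete, here is a minimal standard-library sketch of what a crawler that does not execute JavaScript sees on an Ajax-driven page. The HTML string, the `news-list` id, and the `/api/feed` path are invented for illustration and are not Toutiao's actual markup.

```python
from html.parser import HTMLParser

# Hypothetical server response for an Ajax-driven list page: the container
# is empty and a script fills it in after the page has loaded.
STATIC_HTML = """
<html><body>
  <div id="news-list"></div>
  <script>fetch('/api/feed').then(function (r) { /* render links */ });</script>
</body></html>
"""


class LinkCounter(HTMLParser):
    """Counts <a> tags, i.e. the links a non-rendering crawler could follow."""

    def __init__(self):
        super().__init__()
        self.links = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self.links += 1


def count_links(html):
    parser = LinkCounter()
    parser.feed(html)
    return parser.links


# The raw HTML contains no links at all; they only exist after the script runs.
print(count_links(STATIC_HTML))
```

A headless browser closes this gap by running the script first and handing back the resulting DOM as page source.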

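Code files

From top to bottom, the project structure consists of:

middleware.py: the core of the project. It implements the downloader middleware that drives a simulated browser session while Scrapy processes each request.

ContentSpider.py: the spider file, which defines the spider.

commonUtils: utility functions.

items.py: the classes that hold the fields scraped by the spider.

pipelines.py: the classes that process the scraped data.

These five are the key files; the remaining code is business-specific.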
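For the middleware and the pipeline to take effect, they have to be registered in the project's settings.py. That file is not shown in this article, so the following is only a sketch: the dotted paths are inferred from the file names above and from the class names used in the code, the priority values 543 and 300 are the ones Scrapy's project template suggests, and the user-agent strings are placeholders.

```python
# settings.py (sketch; the module paths are assumptions based on the file names)

# Pool that the middleware samples from with random.choice(settings.USER_AGENTS).
# Placeholder values: substitute real browser user-agent strings.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/1.0",
    "Mozilla/5.0 (X11; Linux x86_64) ExampleBrowser/2.0",
]

# Route every request through the PhantomJS-backed downloader middleware.
DOWNLOADER_MIDDLEWARES = {
    "DgSpiderPhantomJS.middleware.JavaScriptMiddleware": 543,
}

# Hand scraped items to the pipeline.
ITEM_PIPELINES = {
    "DgSpiderPhantomJS.pipelines.DgspiderphantomjsPipeline": 300,
}
```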

Key code walkthrough

middleware.py

# douguo request middleware
# for pages that are loaded by js/ajax
# any changes should be recorded here:
#
# @author zhangjianfei
# @date 2017/05/04

import random

from scrapy.http import HtmlResponse
from selenium import webdriver
from selenium.webdriver.common.desired_capabilities import DesiredCapabilities

from DgSpiderPhantomJS import settings


class JavaScriptMiddleware(object):
    print("LOGS Starting Middleware ...")

    def process_request(self, request, spider):
        print("LOGS: process_request is starting ...")

        # Capabilities for the headless browser
        dcap = dict(DesiredCapabilities.PHANTOMJS)
        # Pick a random user agent
        dcap["phantomjs.page.settings.userAgent"] = random.choice(settings.USER_AGENTS)

        # Start PhantomJS
        driver = webdriver.PhantomJS(
            executable_path=r"D:\phantomjs-2.1.1\bin\phantomjs.exe",
            desired_capabilities=dcap)
        try:
            # Abort a page load after 60 seconds
            driver.set_page_load_timeout(60)
            # Abort an asynchronous script after 60 seconds
            driver.set_script_timeout(60)

            # Load the page
            driver.get(request.url)

            # Simulate user behavior by running JavaScript:
            # here the page is scrolled down to offset 10000
            js = "document.body.scrollTop=10000"
            driver.execute_script(js)

            # Intended to wait for the asynchronous requests. Note that
            # implicitly_wait only sets the timeout for element lookups;
            # it does not pause execution at this point.
            driver.implicitly_wait(20)

            # Grab the rendered page source
            body = driver.page_source
            current_url = driver.current_url
        finally:
            # Shut the browser down so PhantomJS processes do not pile up
            driver.quit()

        # Returning a response here makes Scrapy skip its own download
        return HtmlResponse(current_url, body=body, encoding='utf-8', request=request)
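A note on the waiting step: Selenium's `implicitly_wait` configures how long element lookups may block, so on its own it does not give Ajax responses time to arrive. Polling for a condition is more dependable, and Selenium provides `WebDriverWait` for exactly that. The sketch below shows the same idea with the standard library only, so it runs without a browser; `wait_until`, `FakeDriver`, and the `article` marker are hypothetical names used for illustration.

```python
import time


def wait_until(condition, timeout=20.0, interval=0.5):
    """Poll condition() until it returns a truthy value or timeout seconds pass.

    Returns the truthy value; raises TimeoutError if the deadline is reached.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError("condition not met within %.1f s" % timeout)
        time.sleep(interval)


class FakeDriver:
    """Stand-in for a browser whose page source fills in after a few polls."""

    def __init__(self, polls_until_ready):
        self._remaining = polls_until_ready

    @property
    def page_source(self):
        if self._remaining > 0:
            self._remaining -= 1
            return "<html><body></body></html>"
        return "<html><body><div class='article'>text</div></body></html>"


driver = FakeDriver(polls_until_ready=3)


def rendered_source():
    # Only hand the source back once the expected marker is present.
    src = driver.page_source
    return src if "article" in src else None


body = wait_until(rendered_source, timeout=5.0, interval=0.01)
print("article" in body)
```

In the middleware, the condition would check the real driver for an element that only exists once the news content has loaded.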

ContentSpider.py

# -*- coding: utf-8 -*-

import random
import time

import scrapy
from scrapy.selector import Selector

from DgSpiderPhantomJS import contentSettings
from DgSpiderPhantomJS.items import DgspiderPostItem
from DgSpiderPhantomJS.mysqlUtils import dbhandle_geturl
from DgSpiderPhantomJS.mysqlUtils import dbhandle_update_status


class DgContentSpider(scrapy.Spider):
    # Everything in the class body runs once, when the module is imported
    print('LOGS: Spider Content_Spider Starting ...')

    # Sleep for a random 60 to 90 seconds before crawling
    sleep_time = random.randint(60, 90)
    print("LOGS: Sleeping :" + str(sleep_time))
    time.sleep(sleep_time)

    # get url from db
    result = dbhandle_geturl()
    url = result[0]
    # spider_name = result[1]
    site = result[2]
    gid = result[3]
    module = result[4]

    # set spider name
    name = 'Content_Spider'
    # name = 'DgUrlSpiderPhantomJS'

    # set domains
    allowed_domains = [site]

    # set scrapy url
    start_urls = [url]

    # change status: whether or not the crawl succeeds, set status to 1
    # so that the same page is not picked up again in an endless loop
    dbhandle_update_status(url, 1)

    # scrapy crawl
    def parse(self, response):
        # init the item
        item = DgspiderPostItem()

        # get the page source
        sel = Selector(response)
        print(sel)

        # get post title
        title_date = sel.xpath(contentSettings.POST_TITLE_XPATH)
        item['title'] = title_date.xpath('string(.)').extract()

        # get post page source
        item['text'] = sel.xpath(contentSettings.POST_CONTENT_XPATH).extract()

        # get url
        item['url'] = DgContentSpider.url

        yield item
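The `string(.)` XPath call in `parse` returns the concatenated text of a node and all of its descendants, which is why the title comes out as plain text even when it contains nested tags. The sketch below reproduces that behavior with the standard library's `xml.etree.ElementTree`, whose `itertext()` walks the same text nodes; the HTML fragment and the `text_content` helper are made up for illustration.

```python
import xml.etree.ElementTree as ET


def text_content(fragment):
    """Return all text inside a well-formed fragment, like XPath's string(.)."""
    node = ET.fromstring(fragment)
    return "".join(node.itertext())


fragment = '<h1 class="article-title">Crawling <em>dynamic</em> pages</h1>'

# node.text alone stops at the first child element.
first_text = ET.fromstring(fragment).text

# Joining itertext() also picks up the text inside and after <em>.
title = text_content(fragment)
print(repr(first_text))
print(title)
```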

items.py

# -*- coding: utf-8 -*-

# Define here the models for your scraped items
#
# See documentation in:
# http://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class DgspiderUrlItem(scrapy.Item):
    url = scrapy.Field()


class DgspiderPostItem(scrapy.Item):
    url = scrapy.Field()
    title = scrapy.Field()
    text = scrapy.Field()

pipelines.py

# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

import re
import datetime
import urllib.request

from bs4 import BeautifulSoup

from DgSpiderPhantomJS import urlSettings
from DgSpiderPhantomJS import contentSettings
from DgSpiderPhantomJS.mysqlUtils import dbhandle_insert_content
from DgSpiderPhantomJS.mysqlUtils import dbhandle_online
from DgSpiderPhantomJS.mysqlUtils import dbhandle_update_status
from DgSpiderPhantomJS.uploadUtils import uploadImage
from DgSpiderPhantomJS.PostHandle import post_handel
from DgSpiderPhantomJS.commonUtils import get_random_user
from DgSpiderPhantomJS.commonUtils import get_linkmd5id


class DgspiderphantomjsPipeline(object):
    # Replies built for the post
    cs = []

    # Post title
    title = ''

    # Post text
    text = ''

    # URL currently being crawled
    url = ''

    # Random user ID
    user_id = ''

    # Set to 1 once the post is found to contain an image
    has_img = 0

    # Counts processed items, so the title is taken from the first one only
    get_title_flag = 0

    def __init__(self):
        DgspiderphantomjsPipeline.user_id = get_random_user(contentSettings.CREATE_POST_USER)

    # process the data
    def process_item(self, item, spider):
        self.get_title_flag += 1

        # URL of the page currently being crawled
        DgspiderphantomjsPipeline.url = item['url']

        # Post title
        if len(item['title']) == 0:
            title_tmp = ''
        else:
            title_tmp = item['title'][0]

        # Replace characters in the title that could cause SQL syntax errors.
        # For paginated articles, only the title of the first page is used.
        if self.get_title_flag == 1:
            # Parse the title with BeautifulSoup
            soup_title = BeautifulSoup(title_tmp, "lxml")
            title = ''
            # Do not call .prettify() on the parsed tree: it puts every tag
            # on its own line and leaves runs of spaces and line breaks.
            # Use stripped_strings to collect the text instead.
            for string in soup_title.stripped_strings:
                title += string
            title = title.replace("'", "”").replace('"', '“')
            DgspiderphantomjsPipeline.title = title

        # Post body
        if len(item['text']) == 0:
            text_temp = ''
        else:
            text_temp = item['text'][0]
        soup = BeautifulSoup(text_temp, "lxml")
        text_temp = str(soup)

        # Find the images
        # (the image-tag pattern is missing from the source text and is left empty here)
        reg_img = re.compile(r'')
        imgs = reg_img.findall(text_temp)
        for img in imgs:
            DgspiderphantomjsPipeline.has_img = 1

            # matchObj = re.search('.*src="(.*)"{2}.*', img, re.M | re.I)
            match_obj = re.search('.*src="(.*)".*', img, re.M | re.I)
            if match_obj is None:
                # No src attribute found in this match; skip it
                continue
            img_url_tmp = match_obj.group(1)

            # Strip every "http:" prefix
            img_url_tmp = img_url_tmp.replace("http:", "")

            # The greedy match can run past the src value, so keep only
            # the part before the first double quote
            imgUrl_tmp_list = img_url_tmp.split('"')
            img_url_tmp = imgUrl_tmp_list[0]

            # Prepend "http:" again
            imgUrl = 'http:' + img_url_tmp

            list_name = imgUrl.split('/')
            file_name = list_name[-1]

            # if os.path.exists(settings.IMAGES_STORE):
            #     os.makedirs(settings.IMAGES_STORE)

            # Local path where the image is stored
            file_path = contentSettings.IMAGES_STORE + file_name

            # Download the image to local storage, then upload it
            urllib.request.urlretrieve(imgUrl, file_path)
            upload_img_result_json = uploadImage(file_path, 'image/jpeg', DgspiderphantomjsPipeline.user_id)

            # Server-side image path, width and height returned by the upload
            img_u = upload_img_result_json['result']['image_url']
            img_w = upload_img_result_json['result']['w']
            img_h = upload_img_result_json['result']['h']
            img_upload_flag = str(img_u)+';'+str(img_w)+';'+str(img_h)

            # Replace the image with a marker wrapped in [dgimg] tags
            text_temp = text_temp.replace(img, '[dgimg]' + img_upload_flag + '[/dgimg]')

        # Replace tags (the tag strings are missing from the source text)
        text_temp = text_temp.replace('', '').replace('', '')

        # Parse the HTML with BeautifulSoup
        soup = BeautifulSoup(text_temp, "lxml")
        text = ''
        # As above, avoid .prettify() and collect the text via stripped_strings
        for string in soup.stripped_strings:
            text += string + '\n\n'

        # Replace double quotes with Chinese double quotes to avoid MySQL syntax errors
        DgspiderphantomjsPipeline.text = self.text + text.replace('"', '“')

        return item
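The image handling in `process_item` boils down to three steps: find the image tags, pull out each `src` value, and normalize the scheme before deriving a local file name. Here is a standard-library sketch of just those steps. Both regular expressions are assumptions, since the tag pattern used by the project is not visible in this article, and the sample HTML and URLs are invented.

```python
import re

# Assumed patterns (not taken from the project): one that matches <img ...>
# tags and one that captures a double-quoted src attribute value.
IMG_TAG_RE = re.compile(r'<img\b[^>]*>', re.I)
SRC_RE = re.compile(r'\bsrc="([^"]*)"', re.I)


def extract_images(html):
    """Return (normalized_url, file_name) pairs for each <img src="..."> in html."""
    results = []
    for tag in IMG_TAG_RE.findall(html):
        match = SRC_RE.search(tag)
        if match is None:
            continue  # <img> without a double-quoted src
        src = match.group(1)
        # Drop an existing "http:" and prepend it again, so that both
        # "http://host/x.jpg" and "//host/x.jpg" end up as "http://host/x.jpg".
        url = "http:" + src.replace("http:", "")
        file_name = url.split("/")[-1]
        results.append((url, file_name))
    return results


sample = (
    '<p>intro</p>'
    '<img src="//img.example.com/a/cat.jpg" alt="cat">'
    '<img alt="dog" src="http://img.example.com/b/dog.png">'
)
images = extract_images(sample)
for url, name in images:
    print(url, name)
```

Capturing `[^"]*` instead of a greedy `.*` removes the need to split on the double quote afterwards. Like the pipeline code, this sketch only handles `http:` and scheme-relative URLs; an `https:` source would need its own branch.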

    # Called when the spider is opened
    def open_spider(self, spider):
        pass

    # Called when the spider is closed
    def close_spider(self, spider):
        # Write the data to the database: 235
        url = DgspiderphantomjsPipeline.url
        title = DgspiderphantomjsPipeline.title
        content = DgspiderphantomjsPipeline.text
        user_id = DgspiderphantomjsPipeline.user_id
        create_time = datetime.datetime.now().strftime('%Y-%m-%d %H:%M:%S')
        dbhandle_insert_content(url, title, content, user_id, DgspiderphantomjsPipeline.has_img, create_time)

        # Process the text, set the status, and upload to dgCommunity.dg_post.
        # The post is uploaded only if has_img is 1.
        if DgspiderphantomjsPipeline.has_img == 1:
            if title.strip() != '' and content.strip() != '':
                spider.logger.info('status=2 , has_img=1, title and content is not null! Uploading post into db...')
                post_handel(url)
            else:
                spider.logger.info('status=1 , has_img=1, but title or content is null! ready to exit...')
        else:
            spider.logger.info('status=1 , has_img=0, changing status and ready to exit...')
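The pipeline swaps quote characters for Chinese curly quotes so that the generated SQL stays valid. An alternative that leaves the text untouched is to pass the values as query parameters and let the database driver do the quoting. The project's `mysqlUtils` module is not shown, so the sketch below uses the standard library's `sqlite3` as a stand-in; the `post` table, its columns, and `insert_post` are hypothetical. MySQL drivers such as PyMySQL follow the same pattern with `%s` placeholders.

```python
import sqlite3


def insert_post(conn, url, title, content):
    """Insert one post; the ? placeholders keep quotes in the data from breaking the SQL."""
    conn.execute(
        "INSERT INTO post (url, title, content) VALUES (?, ?, ?)",
        (url, title, content),
    )
    conn.commit()


# In-memory database with a hypothetical table, for demonstration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE post (url TEXT, title TEXT, content TEXT)")

# A title containing both kinds of quote, stored without any replacement.
original_title = 'It\'s a "test"'
insert_post(conn, "http://example.com/1", original_title, "body text")

stored_title = conn.execute("SELECT title FROM post").fetchone()[0]
print(stored_title == original_title)
```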

Reposted from:

http://blog.csdn.net/qq_31573519/article/details/74248559
