Generative Adversarial Networks (7): SGAN

I. Introduction to SGAN

SGAN originates from the paper "Semi-Supervised Learning with Generative Adversarial Networks".

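Traditional machine learning is divided into supervised and unsupervised learning. The former uses labeled data, the latter unlabeled data. In many problems, however, labeled data is scarce, because obtaining it requires manual annotation or similar effort, whereas unlabeled data is comparatively easy to collect. Semi-supervised learning combines the two: it trains on a small amount of labeled data together with a large amount of unlabeled data, and then classifies unlabeled samples.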

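In a GAN, the real data can be viewed as the labeled dataset, and the data the generator produces from random noise as the unlabeled dataset. DCGAN already showed that the features learned by the discriminator can be reused for classification. First, the features learned by the discriminator D can improve a classifier C; conversely, a good classifier can also improve the discriminator. In that earlier setup, however, C and D are not trained at the same time, even though the two can reinforce each other. And once the discriminator improves, the generator G improves with it, so the three components move toward an ideal equilibrium through alternating updates.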

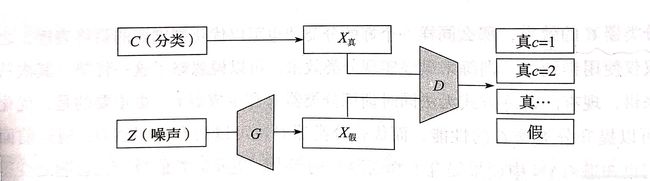

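Hence a semi-supervised GAN, called SGAN, was proposed. The goal is to train the generator and a semi-supervised classifier simultaneously, ending up with a better semi-supervised classifier and a generative model that produces higher-quality images. SGAN extends the usual binary real/fake output to a multi-class output with N+1 classes: the N labels plus one "fake" class. In practice the discriminator and the classifier are a single network, denoted D/C, which plays the adversarial game against the generator G. The objective is the negative log-likelihood (NLL). The pseudocode is as follows: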

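Input: I, the total number of iterations

for n = 1, ..., I do
    Draw m random samples from the generator's prior noise distribution
    Draw m real samples from the real data distribution
    Minimize the NLL and update the parameters of D/C
    Draw m random samples from the generator's prior noise distribution
    Maximize the NLL and update the parameters of G
end for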
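To make the two NLL steps in the loop concrete, here is a minimal NumPy sketch of how the labels for one combined minibatch could be assembled and scored. It is an illustration under assumptions, not the paper's implementation: the helper `nll`, the toy size `m = 4`, the hand-picked real labels, and the uniform `probs` array (standing in for an untrained D/C softmax output) are all hypothetical.

```python
import numpy as np

def nll(probs, labels):
    """Mean negative log-likelihood of integer labels under row-wise class probabilities."""
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(picked)))

N = 10  # number of real classes (e.g. MNIST digits)
m = 4   # samples drawn from each source per step (toy value, assumed)

# Labels for the combined minibatch of size 2m:
# real samples keep their class in 0..N-1, generated samples get the extra class N.
real_labels = np.array([3, 1, 4, 1])   # hypothetical labels of the m real samples
fake_labels = np.full(m, N)
labels = np.concatenate([real_labels, fake_labels])

# Stand-in for the D/C softmax output over N+1 classes (uniform, as if untrained).
probs = np.full((2 * m, N + 1), 1.0 / (N + 1))

d_loss = nll(probs, labels)                # D/C takes a step that minimizes this
g_objective = nll(probs[m:], fake_labels)  # G takes a step that maximizes this on its own samples
```

With uniform predictions both values equal log(N+1), which gives a rough baseline for what the D/C loss looks like before any learning has happened.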

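Compared with cGAN, SGAN's generation is random: the generator is not conditioned on a class label. In addition, the discriminator's output combines a classifier with a discriminator. The structure is shown below:

II. Code Implementation

1. Imports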

from __future__ import print_function, division

# Dataset, layers, and model classes (import paths as in standalone Keras 2.x)
from keras.datasets import mnist
from keras.layers import Input, Dense, Reshape, Flatten, Dropout
from keras.layers import BatchNormalization, Activation, ZeroPadding2D
from keras.layers.advanced_activations import LeakyReLU
from keras.layers.convolutional import UpSampling2D, Conv2D
from keras.models import Sequential, Model
from keras.optimizers import Adam
from keras.utils import to_categorical

import matplotlib.pyplot as plt
import numpy as np

2. Initialization

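class SGAN():
    def __init__(self):
        # MNIST image dimensions
        self.img_rows = 28
        self.img_cols = 28
        self.channels = 1
        self.img_shape = (self.img_rows, self.img_cols, self.channels)
        self.num_classes = 10
        self.latent_dim = 100

        # Adam with learning rate 0.0002 and beta_1 = 0.5
        optimizer = Adam(0.0002, 0.5)

        # Build and compile the discriminator (two outputs: real/fake and class)
        self.discriminator = self.build_discriminator()
        self.discriminator.compile(
            loss=['binary_crossentropy', 'categorical_crossentropy'],
            loss_weights=[0.5, 0.5],
            optimizer=optimizer,
            metrics=['accuracy'])

        # Build the generator
        self.generator = self.build_generator()

        # The generator maps a noise vector to an image
        noise = Input(shape=(self.latent_dim,))
        img = self.generator(noise)

        # Freeze the discriminator inside the combined model
        self.discriminator.trainable = False

        # Real/fake verdict of the discriminator on the generated image
        valid, _ = self.discriminator(img)

        # Combined model (generator stacked with the discriminator), used to train the generator
        self.combined = Model(noise, valid)
        self.combined.compile(loss=['binary_crossentropy'],
                              optimizer=optimizer)

3. Build the generator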

    def build_generator(self):
        model = Sequential()

        # Project the noise vector and reshape it into a 7x7 feature map
        model.add(Dense(128 * 7 * 7, activation='relu', input_dim=self.latent_dim))
        model.add(Reshape((7, 7, 128)))
        model.add(BatchNormalization(momentum=0.8))

        # Upsample 7x7 -> 14x14
        model.add(UpSampling2D())
        model.add(Conv2D(128, kernel_size=3, padding='same'))
        model.add(Activation('relu'))
        model.add(BatchNormalization(momentum=0.8))

        # Upsample 14x14 -> 28x28
        model.add(UpSampling2D())
        model.add(Conv2D(64, kernel_size=3, padding='same'))
        model.add(Activation('relu'))
        model.add(BatchNormalization(momentum=0.8))

        # Single-channel output in [-1, 1]
        model.add(Conv2D(1, kernel_size=3, padding='same'))
        model.add(Activation('tanh'))

        model.summary()

        noise = Input(shape=(self.latent_dim,))
        img = model(noise)

        return Model(noise, img)
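The shapes flowing through `build_generator` can be traced by hand. The sketch below does this in plain Python, assuming the default `UpSampling2D` factor of 2 and that a stride-1 `Conv2D` with `padding='same'` keeps height and width; the function name `generator_shapes` is hypothetical and exists only for this illustration.

```python
def generator_shapes(start=7, channels=128):
    """Trace (height, width, channels) through the generator's layers."""
    shape = (start, start, channels)
    trace = [("Dense + Reshape", shape)]
    for filters in (128, 64):
        # UpSampling2D with its default size (2, 2) doubles height and width
        shape = (shape[0] * 2, shape[1] * 2, shape[2])
        trace.append(("UpSampling2D", shape))
        # Conv2D with padding='same' and stride 1 only changes the channel count
        shape = (shape[0], shape[1], filters)
        trace.append(("Conv2D(%d)" % filters, shape))
    # Final convolution produces a single-channel image
    shape = (shape[0], shape[1], 1)
    trace.append(("Conv2D(1) + tanh", shape))
    return trace

trace = generator_shapes()
for name, shape in trace:
    print(name, shape)
```

The last entry is `(28, 28, 1)`, matching the MNIST image shape the discriminator expects.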

4. Build the discriminator
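As a quick sanity check on the discriminator defined in this section, the spatial size after each layer can be computed by hand. The sketch assumes the usual rule that a convolution with `padding='same'` and stride s outputs ceil(size / s), and that `ZeroPadding2D(((0, 1), (0, 1)))` adds one row and one column; `same_conv` and `discriminator_feature_size` are hypothetical helper names.

```python
import math

def same_conv(size, stride):
    """Output size of a 'same'-padded convolution along one spatial axis."""
    return math.ceil(size / stride)

def discriminator_feature_size(img_size=28):
    size = same_conv(img_size, 2)  # Conv2D(32, strides=2):  28 -> 14
    size = same_conv(size, 2)      # Conv2D(64, strides=2):  14 -> 7
    size = size + 1                # ZeroPadding2D:          7 -> 8
    size = same_conv(size, 2)      # Conv2D(128, strides=2): 8 -> 4
    size = same_conv(size, 1)      # Conv2D(256, strides=1): 4 -> 4
    flat = size * size * 256       # length of the vector produced by Flatten
    return size, flat

final_size, flat_features = discriminator_feature_size()
```

Under these assumptions both output heads (real/fake and class) read from the same 4096-dimensional feature vector.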

    def build_discriminator(self):
        model = Sequential()

        model.add(Conv2D(32, kernel_size=3, strides=2, input_shape=self.img_shape, padding='same'))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))

        model.add(Conv2D(64, kernel_size=3, strides=2, padding='same'))
        model.add(ZeroPadding2D(padding=((0, 1), (0, 1))))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))
        model.add(BatchNormalization(momentum=0.8))

        model.add(Conv2D(128, kernel_size=3, strides=2, padding='same'))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))
        model.add(BatchNormalization(momentum=0.8))

        model.add(Conv2D(256, kernel_size=3, strides=1, padding='same'))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))

        model.add(Flatten())

        model.summary()

        img = Input(shape=self.img_shape)

        # Shared features extracted by the convolutional stack
        features = model(img)
        # Discriminator head: probability that the image is real
        valid = Dense(1, activation='sigmoid')(features)
        # Classifier head: N real classes plus one "fake" class
        label = Dense(self.num_classes + 1, activation='softmax')(features)

        return Model(img, [valid, label])

5. Train the model
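Two details of the training step in this section are easy to check in isolation: the (N+1)-way one-hot targets and the class weights. The sketch below reproduces them with NumPy only. The helper `one_hot` is a hypothetical stand-in for Keras's `to_categorical`, and the three example labels are made up for illustration.

```python
import numpy as np

num_classes = 10
batch_size = 32
half_batch = batch_size // 2

# Class weights for the (N+1)-way output: the N real classes share one weight,
# and the extra "fake" class gets a smaller one.
class_weights = {i: num_classes / half_batch for i in range(num_classes)}
class_weights[num_classes] = 1 / half_batch

def one_hot(labels, depth):
    """One-hot encode integer labels into rows of length `depth`."""
    return np.eye(depth, dtype=np.float32)[np.asarray(labels).reshape(-1)]

# Real images keep their digit label; generated images all get class index N.
real_targets = one_hot([3, 0, 9], num_classes + 1)
fake_targets = one_hot(np.full(3, num_classes), num_classes + 1)
```

For a batch size of 32 this gives a weight of 0.625 for each digit class and 0.0625 for the fake class, so the fake class contributes less to the classification loss per sample.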

    def train(self, epochs, batch_size=128, sample_interval=50):
        # Load the data
        (X_train, y_train), (_, _) = mnist.load_data()

        # Integer half-batch size, used below to scale the class weights
        half_batch = batch_size // 2

        # Normalize pixel values to [-1, 1]
        X_train = (X_train.astype(np.float32) - 127.5) / 127.5
        X_train = np.expand_dims(X_train, axis=3)
        y_train = y_train.reshape(-1, 1)

        # Class weights for the real/fake output (cw1) and the class output (cw2)
        cw1 = {0: 1, 1: 1}
        cw2 = {i: self.num_classes / half_batch for i in range(self.num_classes)}
        cw2[self.num_classes] = 1 / half_batch

        # Ground-truth targets for the real/fake output
        valid = np.ones((batch_size, 1))
        fake = np.zeros((batch_size, 1))

        for epoch in range(epochs):
            # ---- Train the discriminator ----

            # Select a random batch of images
            idx = np.random.randint(0, X_train.shape[0], batch_size)
            imgs = X_train[idx]

            # Sample noise and generate a batch of new images
            noise = np.random.normal(0, 1, (batch_size, self.latent_dim))
            gen_imgs = self.generator.predict(noise)

            # One-hot labels; generated images get the extra class index num_classes
            labels = to_categorical(y_train[idx], num_classes=self.num_classes + 1)
            fake_labels = to_categorical(np.full((batch_size, 1), self.num_classes), num_classes=self.num_classes + 1)

            # Train the discriminator on the real batch and on the generated batch
            d_loss_real = self.discriminator.train_on_batch(imgs, [valid, labels], class_weight=[cw1, cw2])
            d_loss_fake = self.discriminator.train_on_batch(gen_imgs, [fake, fake_labels], class_weight=[cw1, cw2])
            d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

            # ---- Train the generator ----

            # The combined model has a single real/fake output, so no per-output class weights are passed
            g_loss = self.combined.train_on_batch(noise, valid)

            print("%d [D loss: %f, acc: %.2f%%, op_acc: %.2f%%] [G loss: %f]" % (epoch, d_loss[0], 100 * d_loss[3], 100 * d_loss[4], g_loss))

            # Save sample images at the given interval
            if epoch % sample_interval == 0:
                self.sample_images(epoch)

6. Display images
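Because the generator ends in `tanh` and the training images were scaled to [-1, 1], generated samples need to be mapped to [0, 1] before display. A minimal sketch of that mapping follows; `to_unit_range` is a hypothetical helper name and the three input values are chosen only to show the endpoints.

```python
import numpy as np

def to_unit_range(imgs):
    """Map values from [-1, 1] (tanh output) to [0, 1]."""
    return 0.5 * imgs + 0.5

tanh_like = np.array([-1.0, 0.0, 1.0])
rescaled = to_unit_range(tanh_like)  # endpoints map to 0 and 1, the midpoint to 0.5
shifted = 0.5 * tanh_like + 1        # an offset of 1 instead lands in [0.5, 1.5]
```

The offset has to be 0.5 for the result to stay inside [0, 1]; an offset of 1 shifts every pixel half a unit too high.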

    def sample_images(self, epoch):
        # Generate a 5x5 grid of samples
        r, c = 5, 5
        noise = np.random.normal(0, 1, (r * c, self.latent_dim))
        gen_imgs = self.generator.predict(noise)

        # Rescale from [-1, 1] to [0, 1]
        gen_imgs = 0.5 * gen_imgs + 0.5

        fig, axs = plt.subplots(r, c)
        cnt = 0
        for i in range(r):
            for j in range(c):
                axs[i, j].imshow(gen_imgs[cnt, :, :, 0], cmap='gray')
                axs[i, j].axis('off')
                cnt += 1

        # The images/ directory must exist before saving
        fig.savefig("images/mnist_%d.png" % epoch)
        plt.close()

7. Run the code

if __name__ == '__main__':
    sgan = SGAN()
    sgan.train(epochs=20000, batch_size=32, sample_interval=50)

Results: