Texture Blending in a Kinect Sandbox Game

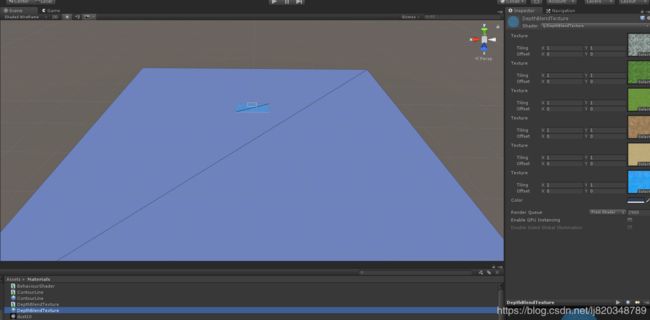

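Today I want to talk about texture blending in a Kinect sandbox game. This was a company project from last year that I built on my own over several months. Here I will only cover the principle behind the depth-based texture blending; I can't say much about the rest. The screenshot above shows the result (I don't know how to post an animation).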

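1. First, get the Kinect depth data. The raw data is not an image but a one-dimensional array stored row by row. We cut out the part that covers the sandbox and upload it to the GPU, where the shader treats it as a two-dimensional grid. Doing this kind of general-purpose computation in shaders is known as GPGPU: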

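```csharp
// Created once (e.g. in Start()): one float per pixel of the cropped depth region
m_ComputeBuffer = new ComputeBuffer(m_DepthMapLimitWidth * m_DepthMapLimitHeight, sizeof(float));

void UpdateDepthMap()
{
    ushort[] ushortRes = m_KinectManager.GetRawDepthMap();   // raw depth values, row-major
    int arrLength = ushortRes.Length;
    int depthImageWidth = m_KinectManager.GetDepthImageWidth();
    int curIndex = 0;

    for (int i = 0; i < arrLength; ++i)
    {
        // Convert the flat index into depth-image pixel coordinates
        int depthCoordX = i % depthImageWidth;
        int depthCoordY = i / depthImageWidth;

        // Keep only the pixels inside the sandbox region
        if (depthCoordX >= m_DepthMapOffsetX && depthCoordX < m_DepthMapLimitWidth + m_DepthMapOffsetX &&
            depthCoordY >= m_DepthMapOffsetY && depthCoordY < m_DepthMapLimitHeight + m_DepthMapOffsetY)
        {
            // 0 means "no reading": treat it as far away (4500). A local copy is used
            // so the array returned by GetRawDepthMap() is not modified.
            float rawDepth = ushortRes[i] == 0 ? 4500f : ushortRes[i];

            // Temporal smoothing: keep 80% of the previous value, take 20% of the new one
            m_DepthMapBuffer[curIndex] = m_DepthMapBuffer[curIndex] * 0.8f + rawDepth * 0.2f;
            ++curIndex;
        }
    }

    MapManager.Single.UpdateDepthData(m_DepthMapBuffer);

    m_ComputeBuffer.SetData(m_DepthMapBuffer);
    m_DepthBlendTextureMat.SetBuffer("_DepthBuffer", m_ComputeBuffer); // this material is explained below
}
```

This code creates a ComputeBuffer to hold the depth data from the Kinect. Each frame it keeps only the pixels inside the sandbox region (the rectangle given by m_DepthMapOffsetX/Y and m_DepthMapLimitWidth/Height), replaces missing readings (0) with 4500 so they count as far away, blends the new frame into the previous one with weights 0.2 and 0.8 to reduce flicker, and finally passes the buffer to a material's shader.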
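The per-frame processing can be tried out without Unity or a sensor. Below is a minimal plain-C# sketch of the same three steps: crop a row-major depth array to a rectangle, substitute 4500 for zero readings, and blend into the previous frame with the 0.8/0.2 weights. `DepthPreprocess` and `CropAndSmooth` are hypothetical names, not part of the project or the Kinect SDK. Unlike the original, it loops over the crop rectangle directly instead of testing every pixel of the full image; the output order is the same.

```csharp
using System;

// Hypothetical helper mirroring UpdateDepthMap() without Unity or the Kinect SDK.
public static class DepthPreprocess
{
    public const ushort NoReading = 0;    // sensor value meaning "no depth here"
    public const float FarDepth = 4500f;  // substitute for missing readings, as in the original

    // raw:      full depth image, row-major (index = y * srcWidth + x)
    // smoothed: cropped buffer of cropWidth * cropHeight values, updated in place
    // The crop rectangle is assumed to lie inside the source image.
    public static void CropAndSmooth(ushort[] raw, int srcWidth,
                                     int offsetX, int offsetY,
                                     int cropWidth, int cropHeight,
                                     float[] smoothed)
    {
        if (smoothed.Length != cropWidth * cropHeight)
            throw new ArgumentException("smoothed must hold cropWidth * cropHeight values");

        int dst = 0;
        for (int y = 0; y < cropHeight; ++y)
        {
            for (int x = 0; x < cropWidth; ++x)
            {
                // Row-major addressing: row * width + column
                ushort r = raw[(y + offsetY) * srcWidth + (x + offsetX)];
                float depth = r == NoReading ? FarDepth : r;

                // Exponential moving average: damps flicker between frames
                smoothed[dst] = smoothed[dst] * 0.8f + depth * 0.2f;
                ++dst;
            }
        }
    }
}
```

Calling it once per frame with the same `smoothed` array reproduces the smoothing: a pixel that suddenly reads 1000 after 200 moves to 360 rather than jumping straight to 1000.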

2. Next, we define which texture each depth value corresponds to. This project uses six textures.

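We will get to this material's shader in a moment. First, six depth values are defined, one for each texture. For every depth value the Kinect reports: if it equals one of these values, the pixel from the corresponding texture is shown; if it falls between the values of two textures, the two textures are blended according to where the depth lies between them. Depths nearer than the first value use the first texture, and depths beyond the last value use the last texture.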
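The rule above amounts to finding the interval a depth falls into and how far along that interval it is. The shader spells this out as a six-branch if/else chain; here is a sketch of the same logic as a loop, which works for any number of layers. `DepthLayers.Classify` is a hypothetical name, and the thresholds are assumed to be sorted in ascending order (nearest layer first), as the shader's chain also requires.

```csharp
using System;

// Hypothetical CPU-side version of the depth-to-texture rule.
public static class DepthLayers
{
    // thresholds: one depth per texture, sorted ascending.
    // Returns the two texture indices to blend and t, the weight of the upper one.
    public static (int lower, int upper, float t) Classify(float depth, float[] thresholds)
    {
        if (thresholds == null || thresholds.Length == 0)
            throw new ArgumentException("need at least one threshold");

        int last = thresholds.Length - 1;
        if (depth < thresholds[0]) return (0, 0, 0f);   // nearer than the first layer

        for (int k = 0; k < last; ++k)
        {
            if (depth < thresholds[k + 1])
            {
                // Here thresholds[k] <= depth < thresholds[k + 1], so the divisor is positive
                float t = (depth - thresholds[k]) / (thresholds[k + 1] - thresholds[k]);
                return (k, k + 1, t);
            }
        }
        return (last, last, 0f);                        // at or beyond the last layer
    }
}
```

A depth exactly equal to a threshold gets t = 0 on the interval starting there, so it shows that texture unblended, matching "equals the value, show that texture".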

3. Now for the key part. With the steps above done, the Kinect depth data and all six textures have been passed into the shader and everything is ready. Let's look at how the shader works:

```hlsl
// Upgrade NOTE: replaced 'mul(UNITY_MATRIX_MVP,*)' with 'UnityObjectToClipPos(*)'
/*
 * @authors: liangjian
 * @desc:
 */
Shader "lj/DepthBlendTexture"
{
    Properties
    {
        _BlendTex0("Texture", 2D) = "white" {}
        _BlendTex1("Texture", 2D) = "white" {}
        _BlendTex2("Texture", 2D) = "white" {}
        _BlendTex3("Texture", 2D) = "white" {}
        _BlendTex4("Texture", 2D) = "white" {}
        _BlendTex5("Texture", 2D) = "white" {}
        _Color ("Color", Color) = (1,1,1,1)
    }
    SubShader
    {
        Tags
        {
            "Queue" = "Transparent-100"
            "RenderType" = "Transparent"
        }
        Pass
        {
            Blend SrcAlpha OneMinusSrcAlpha

            CGPROGRAM
            #pragma exclude_renderers d3d9
            // Reading a StructuredBuffer in a regular shader needs shader model 4.5+
            #pragma target 4.5
            #pragma vertex vert
            #pragma fragment frag
            #include "UnityCG.cginc"

            struct appdata
            {
                float4 vertex : POSITION;
                float2 uv : TEXCOORD0;
            };

            struct v2f
            {
                float2 uv : TEXCOORD0;
                float4 vertex : SV_POSITION;
            };

            v2f vert (appdata v)
            {
                v2f o;
                o.vertex = UnityObjectToClipPos(v.vertex);
                o.uv = v.uv;
                return o;
            }

            sampler2D _BlendTex0;
            sampler2D _BlendTex1;
            sampler2D _BlendTex2;
            sampler2D _BlendTex3;
            sampler2D _BlendTex4;
            sampler2D _BlendTex5;
            fixed4 _Color;

            // One depth value per texture, nearest first (set from script)
            uniform float _DepthValue0;
            uniform float _DepthValue1;
            uniform float _DepthValue2;
            uniform float _DepthValue3;
            uniform float _DepthValue4;
            uniform float _DepthValue5;
            uniform float _UvAnimationOffsetX;
            uniform int _DepthMapWidth;
            uniform int _DepthMapHeight;
            uniform float _CloudDepthFlag_min;
            uniform float _CloudDepthFlag_max;

            // Cropped, smoothed depth map uploaded by UpdateDepthMap(), row-major
            StructuredBuffer<float> _DepthBuffer;

            fixed4 frag (v2f i) : SV_Target
            {
                // UV -> depth-map pixel; clamp so uv == 1.0 stays inside the buffer
                int x = min((int)floor(i.uv.x * _DepthMapWidth), _DepthMapWidth - 1);
                int y = min((int)floor(i.uv.y * _DepthMapHeight), _DepthMapHeight - 1);
                float depthValue = _DepthBuffer[_DepthMapWidth * y + x];

                fixed4 blendCol0 = tex2D(_BlendTex0, i.uv);
                fixed4 blendCol1 = tex2D(_BlendTex1, i.uv);
                fixed4 blendCol2 = tex2D(_BlendTex2, i.uv);
                fixed4 blendCol3 = tex2D(_BlendTex3, i.uv);
                fixed4 blendCol4 = tex2D(_BlendTex4, i.uv);
                // The last layer scrolls horizontally and is drawn 40% opaque
                fixed4 blendCol5 = tex2D(_BlendTex5, float2(i.uv.x + _UvAnimationOffsetX, i.uv.y));
                blendCol5.a = 0.4f;

                float4 blendCol;
                float alpha = 0.05f;   // assigned in the branches below but not read afterwards

                // _DepthValue0 is the snowy mountain: it is closest to the Kinect, so its depth is
                // the smallest. If depthValue is below it, use the snow texture.
                if (depthValue < _DepthValue0)
                {
                    blendCol = blendCol0;
                }
                // _DepthValue1 is the forest depth. If depthValue lies between the snow and forest
                // depths, interpolate between the two textures. The remaining branches follow the
                // same pattern.
                else if (depthValue < _DepthValue1)
                {
                    float offset01 = _DepthValue1 - _DepthValue0;
                    float alpha1 = (depthValue - _DepthValue0) / offset01;
                    blendCol = blendCol0*(1.0f - alpha1) + blendCol1*alpha1;
                    alpha = alpha1;
                }
                else if (depthValue < _DepthValue2)
                {
                    float offset12 = _DepthValue2 - _DepthValue1;
                    float alpha2 = (depthValue - _DepthValue1) / offset12;
                    blendCol = blendCol1*(1.0f - alpha2) + blendCol2*alpha2;
                    alpha = alpha2;
                }
                else if (depthValue < _DepthValue3)
                {
                    float offset23 = _DepthValue3 - _DepthValue2;
                    float alpha3 = (depthValue - _DepthValue2) / offset23;
                    blendCol = blendCol2*(1.0f - alpha3) + blendCol3*alpha3;
                    alpha = alpha3;
                }
                else if (depthValue < _DepthValue4)
                {
                    float offset34 = _DepthValue4 - _DepthValue3;
                    float alpha4 = (depthValue - _DepthValue3) / offset34;
                    blendCol = blendCol3*(1.0f - alpha4) + blendCol4*alpha4;
                    alpha = alpha4;
                }
                else if (depthValue < _DepthValue5)
                {
                    float offset45 = _DepthValue5 - _DepthValue4;
                    float alpha5 = (depthValue - _DepthValue4) / offset45;
                    blendCol = blendCol4*(1.0f - alpha5) + blendCol5*alpha5;
                    alpha = alpha5;
                }
                else
                {
                    blendCol = blendCol5;
                }

                // Paint the pixel black when its depth lies between _CloudDepthFlag_min and
                // _CloudDepthFlag_max (beyond the ocean or nearer than the sky)
                if (depthValue > _CloudDepthFlag_min && depthValue < _CloudDepthFlag_max)
                {
                    blendCol = fixed4(0.0f, 0.0f, 0.0f, 1.0f);
                }

                return blendCol;
            }
            ENDCG
        }
    }
    FallBack "Diffuse"
}
```

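The fragment shader samples all six textures described above. The line `float depthValue = _DepthBuffer[_DepthMapWidth * y + x];` reads the Kinect depth value at this UV coordinate: the UV is scaled to the depth map's pixel grid and turned into a row-major index. The shader then finds which two textures this depth lies between and interpolates between them.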
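The two per-pixel steps just described, turning a UV into a buffer index and mixing two colours, can be checked on the CPU. Below is a small reference sketch; `FragmentReference`, `DepthIndex` and `Lerp` are hypothetical names, and colours are modelled as plain `float[4]` RGBA arrays rather than Unity types.

```csharp
using System;

// Hypothetical CPU reference for the fragment shader's lookup and blend.
public static class FragmentReference
{
    // Map a UV in [0, 1] to a row-major index into a width x height depth buffer.
    // Clamping keeps uv == 1.0 from landing one element past the end of a row.
    public static int DepthIndex(float u, float v, int width, int height)
    {
        int x = Math.Min(Math.Max((int)Math.Floor(u * width), 0), width - 1);
        int y = Math.Min(Math.Max((int)Math.Floor(v * height), 0), height - 1);
        return width * y + x;
    }

    // (1 - t) * a + t * b per channel, the same form as blendCol0*(1-alpha1) + blendCol1*alpha1
    public static float[] Lerp(float[] a, float[] b, float t)
    {
        var result = new float[4];
        for (int c = 0; c < 4; ++c)
            result[c] = a[c] * (1f - t) + b[c] * t;
        return result;
    }
}
```

Without a clamp, a UV of exactly 1.0 maps to x = _DepthMapWidth, which lies outside the row (and, on the last row, outside the buffer). In a 4×3 map, `DepthIndex(1f, 1f, 4, 3)` returns 11, the last valid element.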