Hadoop Setup and Deployment

GitChat author: 鸣宇淳

Original article: 史上最详细的Hadoop环境搭建 (The Most Detailed Hadoop Environment Setup Ever)


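Preface

Hadoop holds a central place in the big data technology stack. It is the foundation that much of the ecosystem builds on, and how solidly you grasp its fundamentals largely determines how far you can go with big data technology.

This is an introductory article. There are many ways to learn Hadoop, and plenty of learning roadmaps online. The approach here is to use the installation and deployment of Apache Hadoop 2.x as the main thread for introducing the Hadoop 2.x architecture, how its modules work together, and the technical details. Installation is not the goal; understanding Hadoop through installing it is.

The article is divided into five parts, thirteen sections, and forty-nine steps.

Part 1: Linux environment installation

Hadoop runs on Linux. It can be made to run on Windows with extra tooling, but Linux is still the recommended platform. Part 1 covers installing and configuring the Linux environment and installing the Java JDK.

Part 2: Hadoop local mode installation

Local mode is only used for local development and debugging, or for quickly trying Hadoop out. This part gives a brief introduction.

Part 3: Hadoop pseudo-distributed mode installation

Hadoop is usually learned in pseudo-distributed mode. In this mode each Hadoop module runs in its own process on a single machine. "Pseudo-distributed" means that although the modules run in separate processes, they all run on one operating system, so the setup is not truly distributed.

Part 4: Fully distributed installation

Fully distributed mode is what production environments use: Hadoop runs on a cluster of servers, and production deployments generally add HA for high availability.

Part 5: Hadoop HA installation

HA stands for high availability. To eliminate Hadoop's single points of failure, production environments are generally deployed with HA. This part shows how to configure high availability in Hadoop 2.x and briefly explains how HA works.

Related background knowledge is introduced briefly along the way. I hope you find it helpful.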

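Part 1: Linux Environment Installation

Step 1. Configure the VMware NAT network

Section 1. Overview of VMware network modes

Reference: http://blog.csdn.net/collection4u/article/details/14127671

Section 2. NAT mode configuration

NAT (network address translation) adds an address translation service between the host and the virtual machines. It relays traffic between the outside network and the virtual machines and translates their IP addresses.

We use NAT mode for the Hadoop cluster: each virtual machine reaches the external network through NAT using the host's IP address.

The requirement is that every virtual machine in the cluster has a fixed IP address and can reach the external network, so configure NAT as follows:

1. After VMware is installed, the default NAT settings are as follows:

2. By default the DHCP service is enabled and NAT assigns IP addresses to virtual machines automatically. Because each machine needs a fixed IP address, disable this default.

3. Set a subnet for the machines. The default is the 192.168.136 subnet; here we change it to 100, so the virtual machines will have addresses of the form 192.168.100.*.

4. Click the NAT Settings button to open the dialog where the gateway address and DNS address can be changed. Here we specify a DNS address for NAT.

5. The gateway address is the .2 address of the current subnet. It appears to be fixed, so we leave it unchanged. Note it down; it is needed later.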

第二步、安装Linux操作系统

三、Vmware上安装Linux系统

1、 文件菜单选择新建虚拟机

2、 选择经典类型安装,下一步。

3、 选择稍后安装操作系统,下一步。

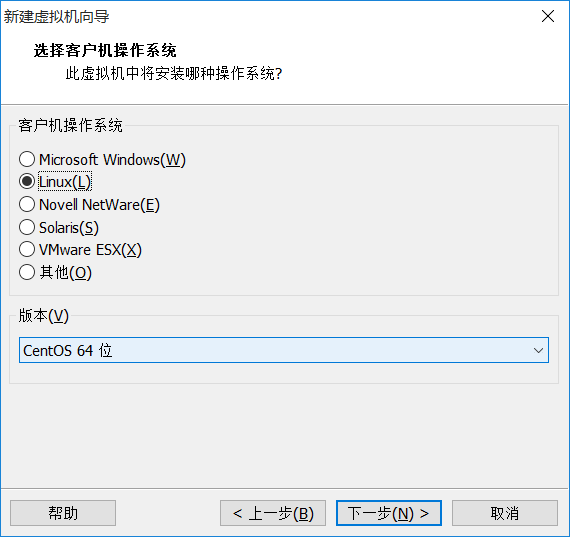

4、 选择Linux系统,版本选择CentOS 64位。

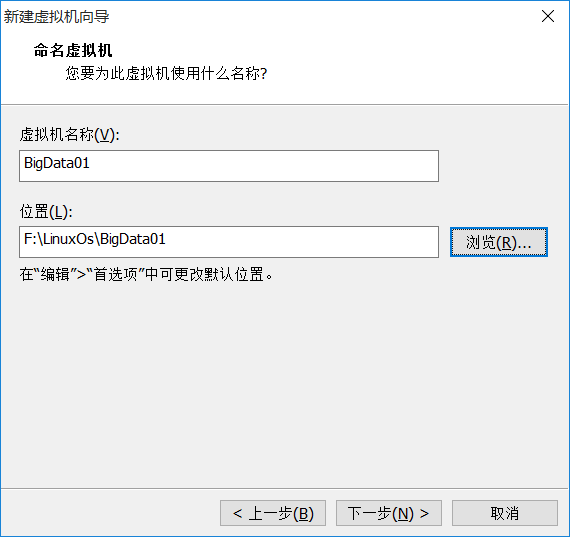

5、 命名虚拟机,给虚拟机起个名字,将来显示在Vmware左侧。并选择Linux系统保存在宿主机的哪个目录下,应该一个虚拟机保存在一个目录下,不能多个虚拟机使用一个目录。

6、 指定磁盘容量,是指定分给Linux虚拟机多大的硬盘,默认20G就可以,下一步。

7、 点击自定义硬件,可以查看、修改虚拟机的硬件配置,这里我们不做修改。

8、 点击完成后,就创建了一个虚拟机,但是此时的虚拟机还是一个空壳,没有操作系统,接下来安装操作系统。

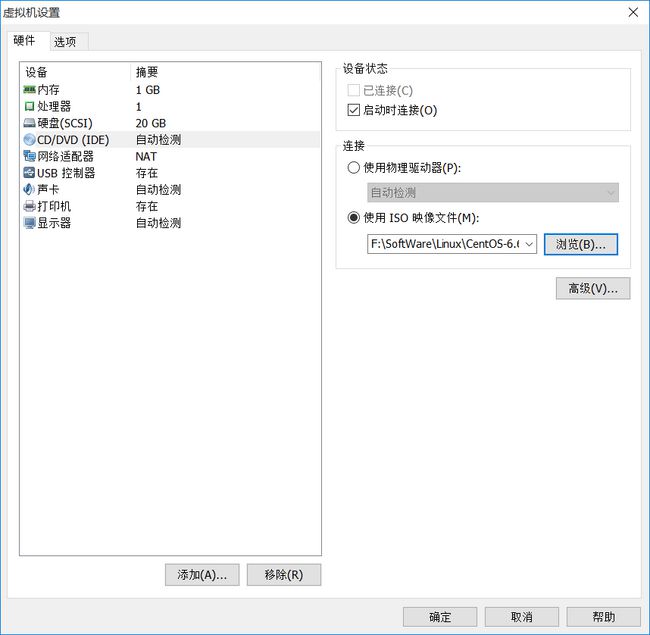

9、 点击编辑虚拟机设置,找到DVD,指定操作系统ISO文件所在位置。

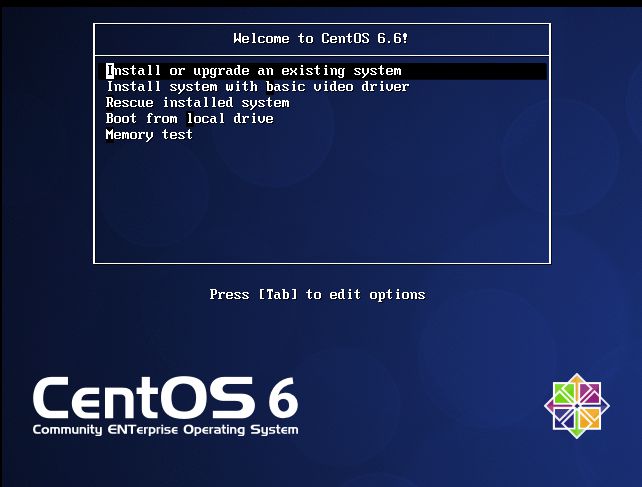

10、 点击开启此虚拟机,选择第一个回车开始安装操作系统。

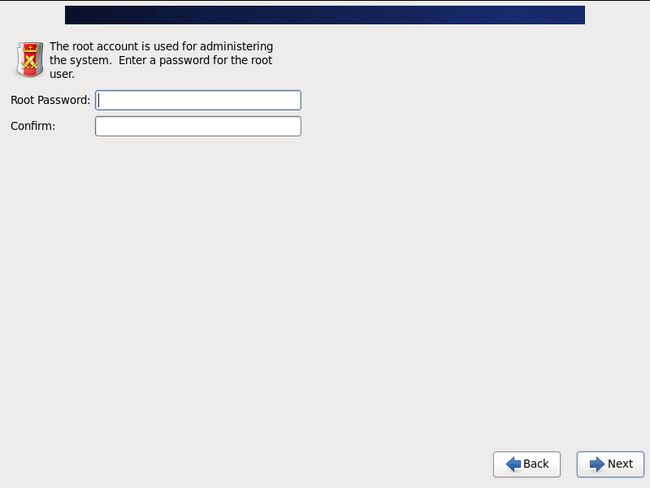

11、 设置root密码。

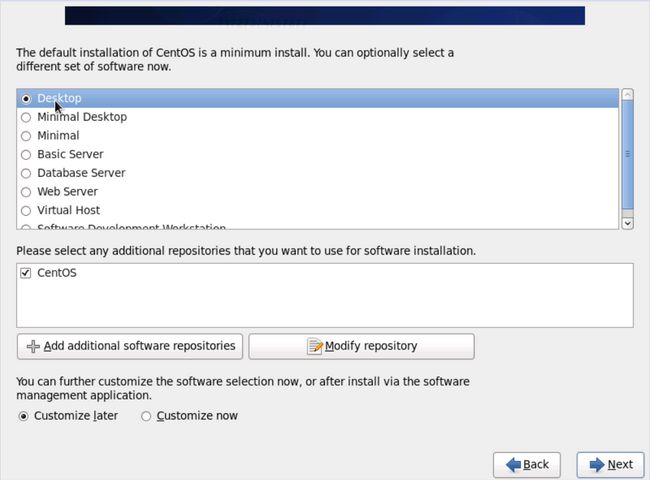

12、 选择Desktop,这样就会装一个Xwindow。

13、 先不添加普通用户,其他用默认的,就把Linux安装完毕了。

四、设置网络

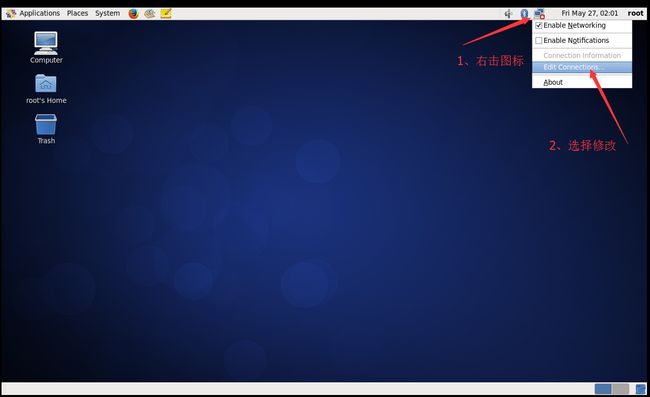

因为Vmware的NAT设置中关闭了DHCP自动分配IP功能,所以Linux还没有IP,需要我们设置网络各个参数。

1、 用root进入Xwindow,右击右上角的网络连接图标,选择修改连接。

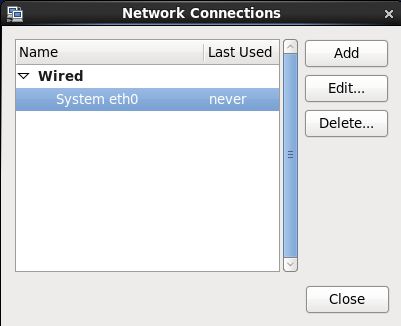

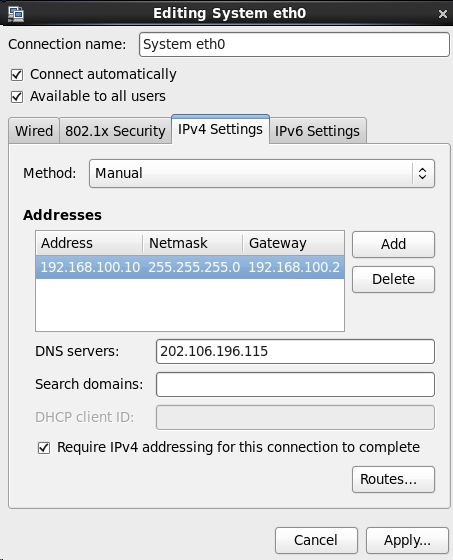

2、 网络连接里列出了当前Linux里所有的网卡,这里只有一个网卡System eth0,点击编辑。

3、 配置IP、子网掩码、网关(和NAT设置的一样)、DNS等参数,因为NAT里设置网段为100.*,所以这台机器可以设置为192.168.100.10网关和NAT一致,为192.168.100.2

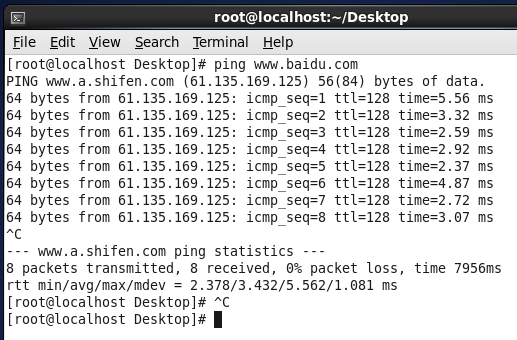

4、 用ping来检查是否可以连接外网,如下图,已经连接成功。
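The same static settings can also be written to a file instead of being entered in the desktop dialog. The sketch below only prints what such an interface file might contain so it can be reviewed first. It assumes a CentOS 6 style layout (where the file would be /etc/sysconfig/network-scripts/ifcfg-eth0), a /24 netmask, and that the NAT gateway also answers DNS queries; none of these details come from the walkthrough above, and `render_ifcfg` is a hypothetical helper name.

```shell
#!/bin/sh
# Print a static-IP interface definition for review.
# Assumptions (not from the article): device eth0, /24 netmask,
# and DNS served by the NAT gateway address.
render_ifcfg() {
  ip="$1"
  gateway="$2"
  cat <<EOF
DEVICE=eth0
ONBOOT=yes
BOOTPROTO=static
IPADDR=$ip
NETMASK=255.255.255.0
GATEWAY=$gateway
DNS1=$gateway
EOF
}

# Values used in this walkthrough: host .10, NAT gateway .2
render_ifcfg 192.168.100.10 192.168.100.2
```

If the DNS address chosen in the NAT dialog differs from the gateway, DNS1 would need to be set to that address instead.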

Section 5. Change the hostname

1. Change the hostname temporarily

[root@localhost Desktop]# hostname bigdata-senior01.chybinmy.com

A hostname set this way is lost when the system reboots.

2. Change the hostname permanently

To make the change permanent, edit the configuration file /etc/sysconfig/network.

Command: [root@bigdata-senior01 ~]# vim /etc/sysconfig/network

After opening the file, set:

NETWORKING=yes #enable networking

HOSTNAME=bigdata-senior01.chybinmy.com #set the hostname

Section 6. Configure hosts

Command: [root@bigdata-senior01 ~]# vim /etc/hosts

Add the entry: 192.168.100.10 bigdata-senior01.chybinmy.com
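When the hosts entry has to be added on several machines, editing by hand invites duplicates. As a rough sketch, the helper below appends the mapping only if the hostname is not already present. `add_host_entry` is a hypothetical name, and the demo works on a scratch file rather than the real /etc/hosts.

```shell
#!/bin/sh
# Append "IP hostname" to a hosts-style file only if the hostname is not
# already mapped. add_host_entry is a hypothetical helper, not a system tool.
add_host_entry() {
  file="$1"; ip="$2"; name="$3"
  # -w matches the hostname as a whole word, -F treats it literally
  if ! grep -qwF "$name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Demo against a scratch copy rather than the real /etc/hosts
hosts_copy=$(mktemp)
printf '127.0.0.1 localhost\n' > "$hosts_copy"
add_host_entry "$hosts_copy" 192.168.100.10 bigdata-senior01.chybinmy.com
add_host_entry "$hosts_copy" 192.168.100.10 bigdata-senior01.chybinmy.com
cat "$hosts_copy"
```

Calling the helper twice leaves a single entry, which is what makes it safe to rerun. Pointing it at /etc/hosts would require root.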

Section 7. Turn off the firewall

In a learning environment the firewall can simply be turned off.

(1) Log in as root and check the firewall status.

[root@bigdata-senior01 hadoop]# service iptables status

(2) Stop the firewall with service iptables stop. This only stops it until the next reboot.

[root@bigdata-senior01 hadoop-2.5.0]# service iptables stop

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

(3) To turn the firewall off permanently, disable the service at boot. This takes effect after a reboot.

[root@bigdata-senior01 hadoop]# chkconfig iptables off

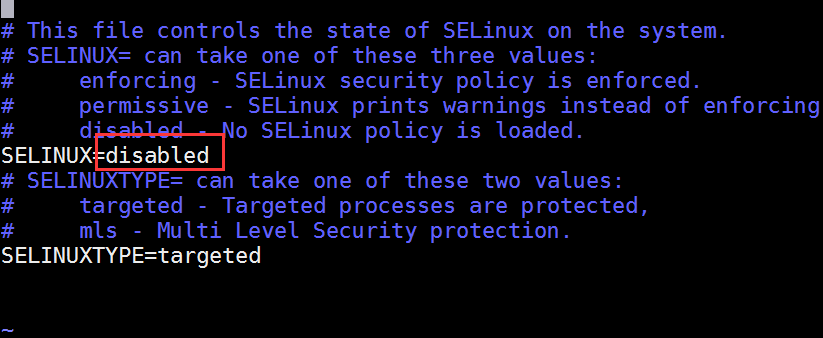

Section 8. Disable SELinux

SELinux is a security subsystem of Linux. In a learning environment it can be disabled. Editing the file requires root.

[root@bigdata-senior01 ~]# vim /etc/sysconfig/selinux

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

#     enforcing - SELinux security policy is enforced.

#     permissive - SELinux prints warnings instead of enforcing.

#     disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of these two values:

#     targeted - Targeted processes are protected,

#     mls - Multi Level Security protection.

SELINUXTYPE=targeted
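The edit to the SELinux file comes down to changing one line, so it can be scripted. The following is a minimal sketch under the assumption that the file contains a single uncommented SELINUX= line; `disable_selinux_in` is a hypothetical helper, and the demo runs on a scratch file instead of /etc/sysconfig/selinux.

```shell
#!/bin/sh
# Rewrite the SELINUX= line of a config file to "disabled".
# disable_selinux_in is a hypothetical helper; it edits whichever file it is given.
disable_selinux_in() {
  file="$1"
  tmp=$(mktemp)
  # Only the uncommented SELINUX= line is changed; SELINUXTYPE= is left alone.
  # Writing back with cat keeps the original file (or symlink target) in place.
  sed 's/^SELINUX=.*/SELINUX=disabled/' "$file" > "$tmp" && cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Demo on a scratch file instead of /etc/sysconfig/selinux
conf=$(mktemp)
printf '# SELINUX= can take one of these three values:\nSELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$conf"
disable_selinux_in "$conf"
cat "$conf"
```

On a real machine the new setting applies after a reboot; `getenforce` reports the mode currently in effect.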

Step 3. Install the JDK

Section 9. Install the Java JDK

1. Check whether a Java JDK is already installed.

[root@bigdata-senior01 Desktop]# java -version

Note: the JDK on Hadoop machines should preferably be Oracle's Java JDK; otherwise you may run into problems, for example the jps command may be missing.

If a different JDK is installed, uninstall it.

2. Install the Java JDK

(1) Download the Oracle Java JDK: jdk-7u67-linux-x64.tar.gz

(2) Extract jdk-7u67-linux-x64.tar.gz into the /opt/modules directory (create the directory first if it does not exist)

[root@bigdata-senior01 /]# tar -zxvf jdk-7u67-linux-x64.tar.gz -C /opt/modules

(3) Add environment variables

Set the JDK environment variable JAVA_HOME by appending the following to the configuration file /etc/profile:

export JAVA_HOME="/opt/modules/jdk1.7.0_67"

export PATH=$JAVA_HOME/bin:$PATH

After saving the change, run source /etc/profile.

(4) Run java -version again to confirm that the installation succeeded.

[root@bigdata-senior01 /]# java -version

java version "1.7.0_67"

Java(TM) SE Runtime Environment (build 1.7.0_67-b01)

Java HotSpot(TM) 64-Bit Server VM (build 24.65-b04, mixed mode)

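Part 2: Hadoop Local Mode Installation

Step 4. Hadoop deployment modes

Hadoop can be deployed in four modes: local mode, pseudo-distributed mode, fully distributed mode, and HA fully distributed mode.

The modes differ in how many JVM processes and how many machines the modules such as NameNode, DataNode, ResourceManager, and NodeManager run on.

| Mode | JVM processes used by the modules | Machines the modules run on |
|---|---|---|
| Local mode | 1 | 1 |
| Pseudo-distributed mode | N | 1 |
| Fully distributed mode | N | N |
| HA fully distributed mode | N | N |

Step 5. Local mode deployment

Section 10. About local mode

Local mode is the simplest mode. All modules run in a single JVM process and use the local file system instead of HDFS. It is mainly used for running and debugging during local development. A freshly downloaded Hadoop package runs in local mode by default, with no configuration required.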

Section 11. Hadoop is usable right after extraction

1. Create a directory for the local-mode Hadoop installation

[hadoop@bigdata-senior01 modules]$ mkdir /opt/modules/hadoopstandalone

2. Extract the Hadoop archive

[hadoop@bigdata-senior01 modules]$ tar -zxf /opt/sofeware/hadoop-2.5.0.tar.gz -C /opt/modules/hadoopstandalone/

3. Make sure the JAVA_HOME environment variable is set

[hadoop@bigdata-senior01 modules]$ echo ${JAVA_HOME}

/opt/modules/jdk1.7.0_67

Section 12. Run a MapReduce program to verify

Here we use the wordcount example that ships with Hadoop to test MapReduce in local mode.

1. Prepare the MapReduce input file wc.input

[hadoop@bigdata-senior01 modules]$ cat /opt/data/wc.input

hadoop mapreduce hive

hbase spark storm

sqoop hadoop hive

spark hadoop

2. Run the MapReduce demo that ships with Hadoop

[hadoop@bigdata-senior01 hadoopstandalone]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0.jar wordcount /opt/data/wc.input output2

![enter image description here](http://images.gitbook.cn/7492ce20-5cba-11e7-86d9-f17e4b747fa0)

The job ID contains the word local, which shows that the job ran in local mode.

3. Check the output files

In local mode, MapReduce writes its output to the local file system.

[hadoop@bigdata-senior01 hadoopstandalone]$ ll output2

total 4

-rw-r--r-- 1 hadoop hadoop 60 Jul 7 12:50 part-r-00000

-rw-r--r-- 1 hadoop hadoop 0 Jul 7 12:50 _SUCCESS

The _SUCCESS file in the output directory indicates that the job succeeded; part-r-00000 is the result file.
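To know what part-r-00000 should contain before opening it, the same count can be reproduced with standard Unix tools. This is only a sketch of the expected result shape (one "word, tab, count" line per word, sorted by word); it is not how Hadoop computes the answer, and `local_wordcount` is a hypothetical helper name.

```shell
#!/bin/sh
# Count whitespace-separated words, printing "word<TAB>count" sorted by word.
# This mimics the shape of the WordCount output; it is not how Hadoop computes it.
local_wordcount() {
  tr -s ' \t' '\n\n' < "$1" | grep -v '^$' | LC_ALL=C sort | uniq -c |
    awk '{ printf "%s\t%s\n", $2, $1 }'
}

# Same four lines as the wc.input file used above
sample=$(mktemp)
cat > "$sample" <<'EOF'
hadoop mapreduce hive
hbase spark storm
sqoop hadoop hive
spark hadoop
EOF
local_wordcount "$sample"
```

For this input the sketch emits seven lines totalling 60 bytes, which is consistent with the 60-byte part-r-00000 in the directory listing.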

Part 3: Hadoop Pseudo-Distributed Mode Installation

Step 6. Pseudo-distributed Hadoop deployment

Section 13. Set up the user for Hadoop

1. Create a regular user named hadoop

[root@bigdata-senior01 ~]# useradd hadoop

[root@bigdata-senior01 ~]# passwd hadoop

2. Give the hadoop user sudo privileges

[root@bigdata-senior01 ~]# vim /etc/sudoers

Set the privileges. In a learning environment the hadoop user can be given broad privileges, but in production the privileges of regular users must be restricted carefully.

root ALL=(ALL) ALL

hadoop ALL=(root) NOPASSWD:ALL

Note: if root cannot modify the sudoers file, first add write permission for root manually.

[root@bigdata-senior01 ~]# chmod u+w /etc/sudoers

3. Switch to the hadoop user

[root@bigdata-senior01 ~]# su - hadoop

[hadoop@bigdata-senior01 ~]$

4. Create the directory that will hold the Hadoop files

[hadoop@bigdata-senior01 ~]$ sudo mkdir /opt/modules

5. Make the hadoop user the owner of the Hadoop directory

If the directory that holds Hadoop is not owned by hadoop, Hadoop may hit permission problems at run time, so change the owner to hadoop.

[hadoop@bigdata-senior01 ~]$ sudo chown -R hadoop:hadoop /opt/modules

Section 14. Extract the Hadoop archive

1. Copy hadoop-2.5.0.tar.gz to the /opt/modules directory.

2. Extract hadoop-2.5.0.tar.gz

[hadoop@bigdata-senior01 ~]$ cd /opt/modules

[hadoop@bigdata-senior01 modules]$ tar -zxvf hadoop-2.5.0.tar.gz

Section 15. Configure Hadoop

1. Configure the Hadoop environment variables

[hadoop@bigdata-senior01 modules]$ sudo vim /etc/profile

Append the following:

export HADOOP_HOME="/opt/modules/hadoop-2.5.0"

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

Run source /etc/profile to apply the configuration.

Verify the HADOOP_HOME variable:

[hadoop@bigdata-senior01 /]$ echo $HADOOP_HOME

/opt/modules/hadoop-2.5.0

2. Set the JAVA_HOME parameter in hadoop-env.sh, mapred-env.sh, and yarn-env.sh

[hadoop@bigdata-senior01 ~]$ sudo vim ${HADOOP_HOME}/etc/hadoop/hadoop-env.sh

Change the JAVA_HOME parameter to the following, and make the same change in mapred-env.sh and yarn-env.sh:

export JAVA_HOME="/opt/modules/jdk1.7.0_67"

3. Configure core-site.xml

[hadoop@bigdata-senior01 ~]$ vim ${HADOOP_HOME}/etc/hadoop/core-site.xml

(1) The fs.defaultFS parameter sets the address of HDFS.

<property>

<name>fs.defaultFS</name>

<value>hdfs://bigdata-senior01.chybinmy.com:8020</value>

</property>

(2) hadoop.tmp.dir sets Hadoop's temporary directory. By default, data such as the HDFS NameNode's is stored under this directory; if you look through the *-default.xml default configuration files, you will see many settings that depend on ${hadoop.tmp.dir}.

The default hadoop.tmp.dir is /tmp/hadoop-${user.name}. The problem is that the NameNode then stores the HDFS metadata under /tmp, and the operating system may clear /tmp when it reboots, which would destroy the NameNode metadata. That is a very serious problem, so this path should be changed.

- Create the temporary directory:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sudo mkdir -p /opt/data/tmp

- Change the owner of the temporary directory to hadoop:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sudo chown -R hadoop:hadoop /opt/data/tmp

- Set hadoop.tmp.dir:

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/data/tmp</value>

</property>
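The two property fragments above belong inside the `<configuration>` root element of core-site.xml. To show how the finished file might look as a whole, the sketch below writes one into a scratch directory. `write_core_site` is a hypothetical helper, and its three arguments (target directory, NameNode host, temporary directory) are illustrative; on a real installation the target would be ${HADOOP_HOME}/etc/hadoop.

```shell
#!/bin/sh
# Write a complete core-site.xml containing the two properties discussed above.
# write_core_site is a hypothetical helper; the target directory is a parameter
# so the sketch can be tried in a scratch location first.
write_core_site() {
  conf_dir="$1"; namenode_host="$2"; tmp_dir="$3"
  cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://${namenode_host}:8020</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${tmp_dir}</value>
  </property>
</configuration>
EOF
}

# Demo: generate the file in a scratch directory and print it
scratch=$(mktemp -d)
write_core_site "$scratch" bigdata-senior01.chybinmy.com /opt/data/tmp
cat "$scratch/core-site.xml"
```

Generating the file from parameters is a convenience for repeating the setup on other hosts; editing the file by hand, as in the steps above, gives the same result.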

十六、配置、格式化、启动HDFS

1、 配置hdfs-site.xml

[hadoop@bigdata-senior01 hadoop-2.5.0]$ vim ${HADOOP_HOME}/etc/hadoop/hdfs-site.xml

- 1

<property>

<name>dfs.replicationname>

<value>1value>

property>

- 1

- 2

- 3

- 4

dfs.replication配置的是HDFS存储时的备份数量,因为这里是伪分布式环境只有一个节点,所以这里设置为1。

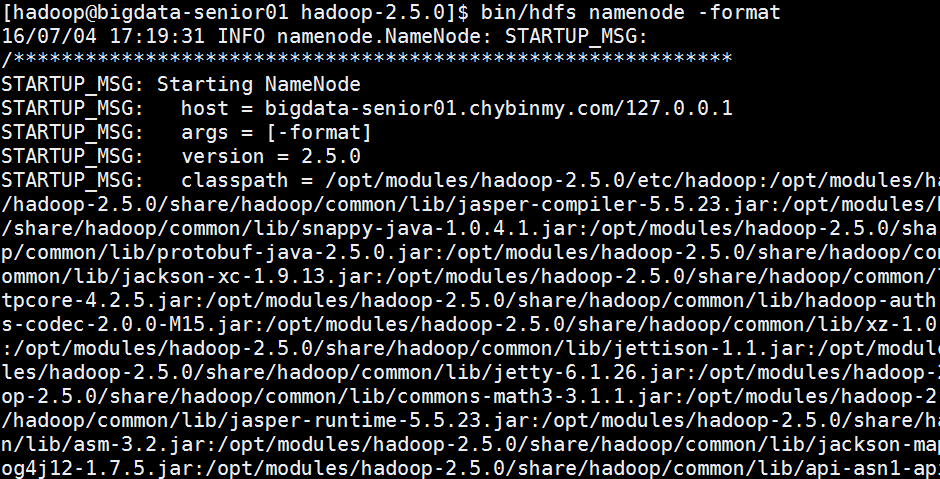

2、 格式化HDFS

[hadoop@bigdata-senior01 ~]$ hdfs namenode –format- "pre-numbering" style="box-sizing: border-box; position: absolute; width: 50px; background-color: rgb(238, 238, 238); top: 0px; left: 0px; margin: 0px; padding: 6px 0px 40px; border-right: 1px solid rgb(221, 221, 221); list-style: none; text-align: right;">

- "box-sizing: border-box; padding: 0px 5px; list-style: none; margin-left: 0px;">1

"margin-top: 0px; margin-bottom: 1.1em; padding-top: 0px; padding-bottom: 0px; box-sizing: border-box; color: rgb(63, 63, 63); font-family: "microsoft yahei"; font-size: 15px;">格式化是对HDFS这个分布式文件系统中的DataNode进行分块,统计所有分块后的初始元数据的存储在NameNode中。

"margin-top: 0px; margin-bottom: 1.1em; padding-top: 0px; padding-bottom: 0px; box-sizing: border-box; color: rgb(63, 63, 63); font-family: "microsoft yahei"; font-size: 15px;">格式化后,查看core-site.xml里hadoop.tmp.dir(本例是/opt/data目录)指定的目录下是否有了dfs目录,如果有,说明格式化成功。

"margin-top: 0px; margin-bottom: 1.1em; padding-top: 0px; padding-bottom: 0px; box-sizing: border-box; color: rgb(63, 63, 63); font-family: "microsoft yahei"; font-size: 15px;">"box-sizing: border-box;">注意:

- "box-sizing: border-box; color: rgb(63, 63, 63); font-family: "microsoft yahei"; font-size: 15px;">

- "box-sizing: border-box;">

"margin-top: 0px; margin-bottom: 1.1em; padding-top: 0px; padding-bottom: 0px; box-sizing: border-box;">格式化时,这里注意hadoop.tmp.dir目录的权限问题,应该hadoop普通用户有读写权限才行,可以将/opt/data的所有者改为hadoop。

"box-sizing: border-box;">[hadoop@bigdata-senior01 hadoop-2.5.0]$ sudo chown -R hadoop:hadoop /opt/data 查看NameNode格式化后的目录。

- 1

namespaceID:NameNode的唯一ID。

clusterID:集群ID,NameNode和DataNode的集群ID应该一致,表明是一个集群。


yarn.nodemanager.aux-services sets the shuffle service used by YARN; here it is set to MapReduce's default shuffle implementation.

yarn.resourcemanager.hostname specifies the node on which the ResourceManager runs.


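The output directory contains two files. _SUCCESS is an empty file whose presence indicates that the job succeeded.

part-r-00000 is the result file. The -r- means the file was produced by the reduce phase. A MapReduce program may have no reduce phase, but it always has a map phase; without a reduce phase, the name contains -m- instead.

Each reducer produces one file whose name starts with part-r-.

View the contents of the output file.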


- In the left pane of VMware, select the machine to clone; here we clone the existing BigData01 machine. From the Virtual Machine menu, choose Clone under the Manage submenu.

- Choose "Create a full clone", name the virtual machine BigData02, choose where to save the virtual machine files, and run the clone.

- Clone once more to create a virtual machine named BigData03.


![enter image description here](http://images.gitbook.cn/e6e83a10-5cbe-11e7-86d9-f17e4b747fa0)


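MasterHADaemon: controls starting and stopping of the RM's Master. It runs in the same process as the RM and can receive external RPC commands.

Shared storage: the Active Master writes its state to shared storage, and the Standby Master reads it to stay in sync with the Active Master.

ZKFailoverController: a failover controller built on ZooKeeper, made up of ActiveStandbyElector and HealthMonitor. ActiveStandbyElector interacts with ZooKeeper and decides whether the Master it manages becomes Active or Standby; HealthMonitor monitors the health of the Master.

ZooKeeper: its core role is to maintain a global lock that ensures only one ResourceManager in the whole cluster is Active.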


[hadoop@bigdata-senior01 ~]$ ll /opt/data/tmp/dfs/name/current

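fsimage is the file in which the NameNode persists a checkpoint of its in-memory metadata, that is, the file-system namespace.

fsimage*.md5 is a checksum file used to verify the integrity of fsimage.

seen_txid records the ID of the last transaction covered by the most recent checkpoint or edit log roll.

The VERSION file contains: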

#Mon Jul 04 17:25:50 CST 2016

namespaceID=2101579007

clusterID=CID-205277e6-493b-4601-8e33-c09d1d23ece4

cTime=0

storageType=NAME_NODE

blockpoolID=BP-1641019026-127.0.0.1-1467624350057

layoutVersion=-57
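Because VERSION is a plain key=value file, the clusterID check between NameNode and DataNode can be scripted. The sketch below uses two scratch files as stand-ins; `version_value` and `same_cluster` are hypothetical helper names. On the machine in this walkthrough the NameNode copy is under /opt/data/tmp/dfs/name/current, and the DataNode copy would be under /opt/data/tmp/dfs/data/current, assuming the default data directory and that the DataNode has been started at least once.

```shell
#!/bin/sh
# Read one key from a Hadoop VERSION file (simple key=value lines).
# version_value is a hypothetical helper name.
version_value() {
  # $1 = path to VERSION, $2 = key such as clusterID
  sed -n "s/^$2=//p" "$1"
}

# Compare clusterID between two VERSION files; succeeds when they match.
same_cluster() {
  [ "$(version_value "$1" clusterID)" = "$(version_value "$2" clusterID)" ]
}

# Demo with scratch files standing in for the NameNode and DataNode copies
nn=$(mktemp); dn=$(mktemp)
printf 'namespaceID=2101579007\nclusterID=CID-205277e6-493b-4601-8e33-c09d1d23ece4\n' > "$nn"
printf 'storageType=DATA_NODE\nclusterID=CID-205277e6-493b-4601-8e33-c09d1d23ece4\n' > "$dn"
if same_cluster "$nn" "$dn"; then
  echo "clusterID matches"
else
  echo "clusterID differs"
fi
```

A mismatch typically appears after the NameNode is reformatted while old DataNode data is left in place, which is one reason to check this before starting the DataNode.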

3. Start the NameNode

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/sbin/hadoop-daemon.sh start namenode

starting namenode, logging to /opt/modules/hadoop-2.5.0/logs/hadoop-hadoop-namenode-bigdata-senior01.chybinmy.com.out

4. Start the DataNode

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/sbin/hadoop-daemon.sh start datanode

starting datanode, logging to /opt/modules/hadoop-2.5.0/logs/hadoop-hadoop-datanode-bigdata-senior01.chybinmy.com.out

5. Start the SecondaryNameNode

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/sbin/hadoop-daemon.sh start secondarynamenode

starting secondarynamenode, logging to /opt/modules/hadoop-2.5.0/logs/hadoop-hadoop-secondarynamenode-bigdata-senior01.chybinmy.com.out

6. Run jps to check that the daemons started. If NameNode, DataNode, and SecondaryNameNode appear in the output, startup succeeded.

[hadoop@bigdata-senior01 hadoop-2.5.0]$ jps

3034 NameNode

3233 Jps

3193 SecondaryNameNode

3110 DataNode
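Reading the jps listing by eye works for three daemons but gets error-prone as more are added. As a rough sketch, the helper below reads jps-style output on standard input and prints the names of any expected daemons that are missing. `missing_daemons` is a hypothetical name, and the demo feeds it the listing shown above instead of calling jps.

```shell
#!/bin/sh
# Report which expected daemons are missing from jps-style output on stdin.
# missing_daemons is a hypothetical helper; pass the expected names as arguments.
missing_daemons() {
  listing=$(cat)
  for daemon in "$@"; do
    # jps prints "<pid> <MainClass>", so compare the second field exactly;
    # this keeps NameNode from matching SecondaryNameNode.
    if ! printf '%s\n' "$listing" |
        awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
      echo "$daemon"
    fi
  done
}

# Demo with the listing shown above; on a live node use: jps | missing_daemons ...
printf '3034 NameNode\n3233 Jps\n3193 SecondaryNameNode\n3110 DataNode\n' |
  missing_daemons NameNode DataNode SecondaryNameNode ResourceManager
```

With the listing above, only ResourceManager is reported, since YARN has not been started at this point in the walkthrough.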

7. Test creating a directory, uploading, and downloading files on HDFS

Create a directory on HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/bin/hdfs dfs -mkdir /demo1

Upload a local file to HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/bin/hdfs dfs -put ${HADOOP_HOME}/etc/hadoop/core-site.xml /demo1

Read the contents of a file on HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/bin/hdfs dfs -cat /demo1/core-site.xml

![enter image description here](http://images.gitbook.cn/fe96fd30-5cba-11e7-8185-21ba04c77532)

Download a file from HDFS to the local file system

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -get /demo1/core-site.xml

17. Configuring and Starting YARN

1. Configure mapred-site.xml

There is no mapred-site.xml file by default, but a template named mapred-site.xml.template is provided. Copy the template to create mapred-site.xml:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ cp etc/hadoop/mapred-site.xml.template etc/hadoop/mapred-site.xml

Add the following property:

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

This tells MapReduce to run on the YARN framework.

2. Configure yarn-site.xml

Add the following properties:

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>bigdata-senior01.chybinmy.com</value>

</property>

3. Start the ResourceManager

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/sbin/yarn-daemon.sh start resourcemanager

4. Start the NodeManager

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ${HADOOP_HOME}/sbin/yarn-daemon.sh start nodemanager

5. Check that both daemons started

[hadoop@bigdata-senior01 hadoop-2.5.0]$ jps

3034 NameNode

4439 NodeManager

4197 ResourceManager

4543 Jps

3193 SecondaryNameNode

3110 DataNode

The output shows that ResourceManager and NodeManager have both started.
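The manual check above can also be scripted. The following is a minimal sketch and not part of the original setup: `missing_daemons` is a hypothetical helper that reads `jps`-style output on standard input and prints every expected daemon name that does not appear in it. The sample text reuses the output shown above, so the snippet runs without a cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `jps` output on stdin and print every expected
# daemon (given as arguments) that is missing from it.
missing_daemons() {
  local output daemon
  output="$(cat)"
  for daemon in "$@"; do
    # The second column of each jps line is the name of the main class.
    if ! printf '%s\n' "$output" |
        awk -v name="$daemon" '$2 == name { found = 1 } END { exit !found }'; then
      printf '%s\n' "$daemon"
    fi
  done
}

# Sample copied from the jps output above.
# On a live node one would run: jps | missing_daemons ResourceManager NodeManager
sample_jps='3034 NameNode
4439 NodeManager
4197 ResourceManager
4543 Jps
3193 SecondaryNameNode
3110 DataNode'

missing="$(printf '%s\n' "$sample_jps" | missing_daemons ResourceManager NodeManager)"
if [ -z "$missing" ]; then
  echo "all expected YARN daemons are running"
else
  echo "missing: $missing"
fi
```

Passing the daemon names as arguments keeps the helper reusable for the HDFS daemons as well.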

6. The YARN web page

The YARN web UI listens on port 8088 and can be opened at http://192.168.100.10:8088/.

18. Running a MapReduce Job

The share directory of the Hadoop distribution ships with several jar files that contain small MapReduce examples. They are located at share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0.jar, and running them is a quick way to try out the newly built Hadoop platform. Here we run the classic WordCount example.

1. Create the test input file

Create the input directory:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -mkdir -p /wordcountdemo/input

Create the source file:

In the local /opt/data directory, create a file named wc.input with the following content.

![](http://images.gitbook.cn/178763e0-5cbe-11e7-86d9-f17e4b747fa0)

Upload wc.input to the /wordcountdemo/input directory on HDFS:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -put /opt/data/wc.input /wordcountdemo/input

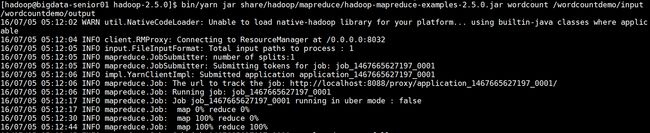

2. Run the WordCount MapReduce job

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/yarn jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0.jar wordcount /wordcountdemo/input /wordcountdemo/output

3. View the output directory

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -ls /wordcountdemo/output

-rw-r--r-- 1 hadoop supergroup 0 2016-07-05 05:12 /wordcountdemo/output/_SUCCESS

-rw-r--r-- 1 hadoop supergroup 60 2016-07-05 05:12 /wordcountdemo/output/part-r-00000

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -cat /wordcountdemo/output/part-r-00000

hadoop 3

hbase 1

hive 2

mapreduce 1

spark 2

sqoop 1

storm 1

The results are sorted by key.
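To see where the sorted output comes from, the three stages of WordCount can be imitated locally with standard Unix tools. This is only an illustrative sketch, not how Hadoop is implemented. The input text is an assumption: the real wc.input is shown only as a screenshot, so the sample below was chosen to reproduce the counts listed above, and `wordcount_local` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Assumed sample input, chosen so the counts match the job output above.
input='hadoop mapreduce hive
hbase spark storm
sqoop hadoop hive
spark hadoop'

# Hypothetical local stand-in for the WordCount job.
wordcount_local() {
  tr -s '[:space:]' '\n' |      # "map": emit one word per line
    grep -v '^$' |              # drop empty records
    LC_ALL=C sort |             # "shuffle/sort": bring identical keys together
    uniq -c |                   # "reduce": count the records of each key
    awk '{ print $2 "\t" $1 }'  # print key<TAB>count, like part-r-00000
}

printf '%s\n' "$input" | wordcount_local
```

The sort step sits between the map and reduce stages, which is why the keys reach the final output already in order.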

19. Stopping Hadoop

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/hadoop-daemon.sh stop namenode

stopping namenode

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/hadoop-daemon.sh stop datanode

stopping datanode

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/yarn-daemon.sh stop resourcemanager

stopping resourcemanager

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/yarn-daemon.sh stop nodemanager

stopping nodemanager

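20. Understanding Hadoop's Functional Modules

1. HDFS module

HDFS stores big data. By splitting large files into blocks and storing the blocks across machines, it removes the capacity limit of a single server's disks and solves the problem of files that are too large for one machine. HDFS is a relatively independent module: it can serve YARN as well as other modules such as HBase.

2. YARN module

YARN is a general-purpose resource management and task scheduling framework. It was created to address problems in Hadoop 1.x MapReduce, chiefly the excessive load on the JobTracker.

Because YARN is a general framework, it can run not only MapReduce but also other computing frameworks such as Spark and Storm.

3. MapReduce module

MapReduce is a computing framework that defines a way of processing data: the data is processed in a distributed fashion through a Map phase followed by a Reduce phase. It is suited only to offline processing of big data and not to applications with strict real-time requirements.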

Step 7: Enabling the History Service

21. About the history service

With the history service enabled, the web UI shows detailed information about jobs executed on YARN. The history server lists completed MapReduce jobs, including how many map tasks and reduce tasks each job used and when it was submitted, started, and finished.

22. Start the history service

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/mr-jobhistory-daemon.sh start historyserver

Once it is running, the history server can be opened in a browser at:

http://bigdata-senior01.chybinmy.com:19888/

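23. Viewing job history on the web

1. Run a MapReduce job

2. The job while it is running

3. View the job history

The history server's web port defaults to 19888, and the job history can be viewed in its web interface.

However, at the bottom of a job's page, following the links on the map and reduce counts to a task's detail page and then opening the detailed log of an individual map or reduce task shows nothing. This is because log aggregation has not been enabled.

24. Enabling log aggregation

4. About log aggregation

MapReduce tasks run on many machines, and the logs they produce stay on those machines. To make the logs of all machines viewable in one place, they are collected and stored on HDFS. This process is called log aggregation.

5. Enable log aggregation

Configure log aggregation:

Hadoop does not enable log aggregation by default. Enable it in yarn-site.xml:

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>106800</value>

</property>

yarn.log-aggregation-enable: whether log aggregation is enabled.

yarn.log-aggregation.retain-seconds: how long aggregated logs are kept, in seconds.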
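Because the retention period is given in seconds, it is easy to misjudge how long the logs actually survive. The sketch below converts a value into days, hours, and minutes; `fmt_seconds` is a hypothetical helper, and the second call uses seven days purely for comparison.

```shell
#!/usr/bin/env bash
# Hypothetical helper: express a period given in seconds as days, hours and
# minutes, to sanity-check yarn.log-aggregation.retain-seconds.
fmt_seconds() {
  local total=$1
  local days=$(( total / 86400 ))
  local hours=$(( (total % 86400) / 3600 ))
  local minutes=$(( (total % 3600) / 60 ))
  echo "${days}d ${hours}h ${minutes}m"
}

fmt_seconds 106800   # the value configured above
fmt_seconds 604800   # seven days, shown only for comparison
```

With the value configured above, aggregated logs are kept for a little under 30 hours.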

Distribute the configuration file to the other nodes:

[hadoop@bigdata-senior01 hadoop]$ scp /opt/modules/hadoop-2.5.0/etc/hadoop/yarn-site.xml bigdata-senior02.chybinmy.com:/opt/modules/hadoop-2.5.0/etc/hadoop/

[hadoop@bigdata-senior01 hadoop]$ scp /opt/modules/hadoop-2.5.0/etc/hadoop/yarn-site.xml bigdata-senior03.chybinmy.com:/opt/modules/hadoop-2.5.0/etc/hadoop/

Restart the YARN processes:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/stop-yarn.sh

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/start-yarn.sh

Restart the HistoryServer process:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/mr-jobhistory-daemon.sh stop historyserver

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sbin/mr-jobhistory-daemon.sh start historyserver

6. Test log aggregation

Run a demo MapReduce job to generate some logs:

bin/yarn jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0.jar wordcount /input /output1

View the logs:

After the job has run, the logs of each map and reduce task can be viewed on the history server web page.

Part 4: Fully Distributed Installation

Step 8: Deploying Hadoop in a Fully Distributed Environment

A fully distributed deployment really does use multiple Linux hosts: the cluster is planned so that the Hadoop modules are spread across different machines.

25. Preparing the environment

1. Clone the virtual machine

2. Configure the network

Rename the network interface:

On BigData02 and BigData03, edit the interface rules with sudo vim /etc/udev/rules.d/70-persistent-net.rules. Because these machines were cloned from BigData01, the file still contains BigData01's eth0 entry, and a new entry, eth1, has been added. The eth0 entry carries the same MAC address as BigData01, and MAC addresses must be unique, so delete the eth0 entry, keep only eth1, and rename eth1 to eth0. Then copy the MAC address of the renamed eth0 and set it as the HWADDR property in the network-scripts file:

sudo vim /etc/sysconfig/network-scripts/ifcfg-eth0

![](http://images.gitbook.cn/692fc750-5cbe-11e7-8ca5-edc6aa6f5290)

Change the network parameters:

Set the IP address of BigData02 to 192.168.100.12.

Set the IP address of BigData03 to 192.168.100.13.

3. Configure the hostname

Set the hostname of BigData02 to bigdata-senior02.chybinmy.com.

Set the hostname of BigData03 to bigdata-senior03.chybinmy.com.

4. Configure hosts

On all three machines (BigData01, BigData02, and BigData03), set the hosts entries as follows:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ sudo vim /etc/hosts

192.168.100.10 bigdata-senior01.chybinmy.com

192.168.100.12 bigdata-senior02.chybinmy.com

192.168.100.13 bigdata-senior03.chybinmy.com

5. Configure the SSH client on Windows

In the SSH client on the local Windows machine, add SSH connections for BigData02 and BigData03.

26. Planning server roles

| bigdata-senior01.chybinmy.com | bigdata-senior02.chybinmy.com | bigdata-senior03.chybinmy.com |
|---|---|---|
| NameNode | ResourceManager | |
| DataNode | DataNode | DataNode |
| NodeManager | NodeManager | NodeManager |
| HistoryServer | | SecondaryNameNode |

27. Installing a new Hadoop on the first machine

To keep this installation separate from the pseudo-distributed Hadoop installed earlier on BigData01, stop all Hadoop services on BigData01 and install another copy of Hadoop in a new directory, /opt/modules/app.

We extract and configure Hadoop on the first machine and then distribute it to the other two machines to build the cluster.

6. Extract Hadoop:

[hadoop@bigdata-senior01 modules]$ tar -zxf /opt/sofeware/hadoop-2.5.0.tar.gz -C /opt/modules/app/

7. Configure the JDK path for Hadoop

Set the JDK path in hadoop-env.sh, mapred-env.sh, and yarn-env.sh:

export JAVA_HOME="/opt/modules/jdk1.7.0_67"

8. Configure core-site.xml

[hadoop@bigdata-senior01 hadoop-2.5.0]$ vim etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://bigdata-senior01.chybinmy.com:8020</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/modules/app/hadoop-2.5.0/data/tmp</value>

</property>

</configuration>

fs.defaultFS is the address of the NameNode.

hadoop.tmp.dir is Hadoop's temporary directory. By default, the NameNode and DataNode data files are stored in subdirectories of this directory. Make sure the directory exists; if it does not, create it first.

9. Configure hdfs-site.xml

[hadoop@bigdata-senior01 hadoop-2.5.0]$ vim etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>bigdata-senior03.chybinmy.com:50090</value>

</property>

</configuration>

dfs.namenode.secondary.http-address sets the HTTP address and port of the SecondaryNameNode. In our plan BigData03 is the SecondaryNameNode server, so the value is bigdata-senior03.chybinmy.com:50090.

10. Configure slaves

[hadoop@bigdata-senior01 hadoop-2.5.0]$ vim etc/hadoop/slaves

bigdata-senior01.chybinmy.com

bigdata-senior02.chybinmy.com

bigdata-senior03.chybinmy.com

The slaves file specifies which nodes run a DataNode in HDFS.
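Since the slaves file is a plain list with one host per line, it is also convenient for your own maintenance scripts. The sketch below is an assumption-laden illustration rather than part of Hadoop: `list_slaves` is a hypothetical helper, the file is a temporary copy of the list above, and the loop only prints the command it would run on each node.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the hosts listed in a slaves-style file,
# skipping blank lines and lines that start with '#'.
list_slaves() {
  grep -v -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$1"
}

# Temporary demo file with the three hosts configured above, so the sketch
# runs without a Hadoop installation.
slaves_file="$(mktemp)"
cat > "$slaves_file" <<'EOF'
bigdata-senior01.chybinmy.com
bigdata-senior02.chybinmy.com
bigdata-senior03.chybinmy.com
EOF

# Dry run: only print what would be executed on each node.
for host in $(list_slaves "$slaves_file"); do
  echo "ssh $host jps"
done

rm -f "$slaves_file"
```

Replacing `echo` with the real command turns the dry run into an actual check, which requires the passwordless SSH login described in this part of the article.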

11. Configure yarn-site.xml

[hadoop@bigdata-senior01 hadoop-2.5.0]$ vim etc/hadoop/yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>bigdata-senior02.chybinmy.com</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>106800</value>

</property>

According to the plan, yarn.resourcemanager.hostname points the ResourceManager to bigdata-senior02.chybinmy.com.

yarn.log-aggregation-enable sets whether log aggregation is enabled.

yarn.log-aggregation.retain-seconds sets the maximum time aggregated logs are kept on HDFS.

12. Configure mapred-site.xml

Copy mapred-site.xml.template to create mapred-site.xml:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ cp etc/hadoop/mapred-site.xml.template etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>bigdata-senior01.chybinmy.com:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>bigdata-senior01.chybinmy.com:19888</value>

</property>

</configuration>

mapreduce.framework.name makes MapReduce jobs run on YARN.

mapreduce.jobhistory.address places the MapReduce history server on the BigData01 machine.

mapreduce.jobhistory.webapp.address sets the address and port of the history server's web page.

28. Setting up passwordless SSH login

The machines in a Hadoop cluster access one another over SSH. Typing a password for every connection is impractical, so configure passwordless SSH login between all machines.

1. Generate a key pair on BigData01

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ssh-keygen -t rsa

Press Enter at each prompt to accept the defaults. The public key file (id_rsa.pub) and the private key file (id_rsa) are then created in the .ssh directory under the current user's home directory.

2. Distribute the public key

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ssh-copy-id bigdata-senior01.chybinmy.com

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ssh-copy-id bigdata-senior02.chybinmy.com

[hadoop@bigdata-senior01 hadoop-2.5.0]$ ssh-copy-id bigdata-senior03.chybinmy.com

3. Set up passwordless login from BigData02 and BigData03 to the other machines

Likewise, generate a key pair on BigData02 and on BigData03, and distribute each public key to all three machines.

29. Distributing the Hadoop files

1. First create the directory that will hold Hadoop on the other two machines

[hadoop@bigdata-senior02 ~]$ mkdir /opt/modules/app

[hadoop@bigdata-senior03 ~]$ mkdir /opt/modules/app

2. Distribute with scp

The share/doc directory under the Hadoop root holds the Hadoop documentation and is quite large. Deleting it before distribution is recommended, because this saves disk space and speeds up the copy.

The doc directory is 1.6 GB:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ du -sh /opt/modules/app/hadoop-2.5.0/share/doc

1.6G /opt/modules/app/hadoop-2.5.0/share/doc

[hadoop@bigdata-senior01 hadoop-2.5.0]$ scp -r /opt/modules/app/hadoop-2.5.0/ bigdata-senior02.chybinmy.com:/opt/modules/app

[hadoop@bigdata-senior01 hadoop-2.5.0]$ scp -r /opt/modules/app/hadoop-2.5.0/ bigdata-senior03.chybinmy.com:/opt/modules/app

30. Formatting the NameNode

Run the format on the NameNode machine:

[hadoop@bigdata-senior01 hadoop-2.5.0]$ /opt/modules/app/hadoop-2.5.0/bin/hdfs namenode -format

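Note:

To reformat the NameNode, first delete everything under the existing NameNode and DataNode directories; otherwise an error will be reported. These directories are set by hadoop.tmp.dir in core-site.xml and by dfs.namenode.name.dir and dfs.datanode.data.dir in hdfs-site.xml.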

<property>
  <name>hadoop.tmp.dir</name>
  <value>/opt/data/tmp</value>
</property>
<property>
  <name>dfs.namenode.name.dir</name>
  <value>file://${hadoop.tmp.dir}/dfs/name</value>
</property>
<property>
  <name>dfs.datanode.data.dir</name>
  <value>file://${hadoop.tmp.dir}/dfs/data</value>
</property>

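The reason is that each format generates a new cluster ID by default. The ID is recorded in the VERSION files of the NameNode and the DataNodes (under dfs/name/current and dfs/data/current respectively). If the old directories are not deleted before reformatting, the NameNode's VERSION file holds the new cluster ID while the DataNode's still holds the old one, and the mismatch causes an error.

Alternatively, pass the old cluster ID when formatting (the -clusterid option of hdfs namenode -format), so that the ID does not change.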
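The mismatch described above can be checked directly by comparing the clusterID lines of the two VERSION files. The following is a minimal sketch, not part of Hadoop: the function names cluster_id_of and same_cluster_id are hypothetical, and the paths in the example call assume hadoop.tmp.dir=/opt/data/tmp with a NameNode and a DataNode on the same machine.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of Hadoop): read the clusterID recorded in
# a VERSION file and compare the NameNode's value with a DataNode's.

# Print the value of the clusterID= line in the given VERSION file.
cluster_id_of() {
  sed -n 's/^clusterID=//p' "$1"
}

# Return 0 when both VERSION files carry the same, non-empty cluster ID.
same_cluster_id() {
  local nn_id dn_id
  nn_id=$(cluster_id_of "$1")
  dn_id=$(cluster_id_of "$2")
  [ -n "$nn_id" ] && [ "$nn_id" = "$dn_id" ]
}

# Example call, assuming hadoop.tmp.dir=/opt/data/tmp (adjust to your setup):
#   same_cluster_id /opt/data/tmp/dfs/name/current/VERSION \
#                   /opt/data/tmp/dfs/data/current/VERSION \
#     && echo "cluster IDs match" || echo "cluster IDs differ"
```

If the IDs differ, the value printed by cluster_id_of for the DataNode's VERSION file is the old cluster ID that still needs to be either removed with the directory or reused when formatting.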

Step 31: Start the cluster

1. Start HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ /opt/modules/app/hadoop-2.5.0/sbin/start-dfs.sh

![enter image description here](http://images.gitbook.cn/7c4619c0-5cbe-11e7-8ca5-edc6aa6f5290)

2. Start YARN

[hadoop@bigdata-senior01 hadoop-2.5.0]$ /opt/modules/app/hadoop-2.5.0/sbin/start-yarn.sh

Start the ResourceManager on BigData02:

[hadoop@bigdata-senior02 hadoop-2.5.0]$ sbin/yarn-daemon.sh start resourcemanager

![enter image description here](http://images.gitbook.cn/98a56fd0-5cbe-11e7-8ca5-edc6aa6f5290)

3. Start the job history (log) server

The plan places the MapReduce job history service on BigData03, so it has to be started there.

[hadoop@bigdata-senior03 ~]$ /opt/modules/app/hadoop-2.5.0/sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /opt/modules/app/hadoop-2.5.0/logs/mapred-hadoop-historyserver-bigdata-senior03.chybinmy.com.out

[hadoop@bigdata-senior03 ~]$ jps

3570 Jps

3537 JobHistoryServer

3310 SecondaryNameNode

3213 DataNode

3392 NodeManager
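Reading the jps listing by eye works for one node; for repeated checks it can be scripted. The sketch below is a hypothetical helper, not a Hadoop tool: missing_daemons reads jps output on standard input and prints each expected daemon name that is absent. The expected names in the example call are the ones shown for bigdata-senior03 above; the lists for the other nodes depend on your own role plan.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Hadoop): read `jps` output on stdin and
# print every expected daemon name that does not appear in it.
missing_daemons() {
  local listing name
  listing=$(cat)
  for name in "$@"; do
    # jps prints "<pid> <MainClass>"; match the class name in column 2.
    if ! printf '%s\n' "$listing" |
        awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      printf '%s\n' "$name"
    fi
  done
}

# Example call on bigdata-senior03, using the daemons listed above:
#   jps | missing_daemons JobHistoryServer SecondaryNameNode DataNode NodeManager
```

An empty result means every expected daemon is running on that node.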

4. View the HDFS web page

http://bigdata-senior01.chybinmy.com:50070/

5. View the YARN web page

http://bigdata-senior02.chybinmy.com:8088/cluster
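The start order used in this step can be summarized as a small script. This is only a sketch under assumptions: start_plan and run_plan are hypothetical helper names, the host names and install path are the ones used in this article, and running the plan for real assumes passwordless SSH for the hadoop user and that JAVA_HOME is set in hadoop-env.sh. With DRY_RUN=1 the script only prints what it would execute.

```shell
#!/usr/bin/env bash
# Sketch of the start order from this step. The install path and host names
# come from the article; start_plan and run_plan are hypothetical helpers.
HADOOP_HOME=/opt/modules/app/hadoop-2.5.0

# Print one "host command" pair per line, in the order they should run.
start_plan() {
  cat <<EOF
bigdata-senior01.chybinmy.com $HADOOP_HOME/sbin/start-dfs.sh
bigdata-senior01.chybinmy.com $HADOOP_HOME/sbin/start-yarn.sh
bigdata-senior02.chybinmy.com $HADOOP_HOME/sbin/yarn-daemon.sh start resourcemanager
bigdata-senior03.chybinmy.com $HADOOP_HOME/sbin/mr-jobhistory-daemon.sh start historyserver
EOF
}

# Run the plan over ssh; with DRY_RUN=1 only print what would be executed.
run_plan() {
  local host cmd
  while read -r host cmd; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh $host $cmd"
    else
      # -n keeps ssh from consuming the rest of the plan on stdin.
      ssh -n "$host" "$cmd"
    fi
  done
}

# Example (prints the four ssh commands without running them):
#   start_plan | DRY_RUN=1 run_plan
```

Keeping the plan as data separate from the runner makes it easy to review the order before anything is started.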

Step 32: Test a job

Here we run the wordcount example that ships with Hadoop to test MapReduce on the cluster.

1. Prepare the MapReduce input file wc.input

[hadoop@bigdata-senior01 modules]$ cat /opt/data/wc.input

hadoop mapreduce hive

hbase spark storm

sqoop hadoop hive

spark hadoop
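As a reference point for the job run in the following steps, the expected word counts can be computed locally with standard tools. This is a minimal sketch: local_wordcount is a hypothetical helper, and it assumes words are separated by whitespace. For the four lines above, hadoop should be counted 3 times, hive and spark 2 times each, and every other word once.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count whitespace-separated words in a local file and
# print "word<TAB>count" lines sorted by word.
local_wordcount() {
  tr -s '[:space:]' '\n' < "$1" |
    sed '/^$/d' |
    LC_ALL=C sort |
    uniq -c |
    awk '{ print $2 "\t" $1 }'
}

# Example call with the input file from this step:
#   local_wordcount /opt/data/wc.input
```

Comparing this listing with the result files that the wordcount job writes is a quick way to confirm the cluster produced the right answer.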

2. Create the input directory /input in HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -mkdir /input

3. Upload wc.input to HDFS

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/hdfs dfs -put /opt/data/wc.input /input/wc.input

4. Run the MapReduce demo that ships with Hadoop

[hadoop@bigdata-senior01 hadoop-2.5.0]$ bin/yarn jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0.jar wordcount /input/wc.input /output

![enter image description here](http://images.gitbook.cn/a5cbfbc0-5cbe-11e7-8185-21ba04c77532)

5. View the output files