How to Use Dropout to Prevent Overfitting

1. Introduction to Dropout

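Dropout means that, while a deep learning network is being trained, units of the network are temporarily removed from it with a given probability. The removal is only temporary: under stochastic gradient descent the units are dropped at random, so every mini-batch effectively trains a different network. Dropout is a very effective tool for reducing overfitting and improving results in CNNs, but there are many competing explanations of why it works.

The idea behind Dropout is to train an ensemble of DNNs and average the results of the whole ensemble, rather than to train a single DNN. Each neuron is dropped with probability p and kept with probability q = 1 - p; the output of a dropped neuron is set to zero.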
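The masking step described above can be sketched in a few lines of plain Python. This is only an illustration, not the implementation any framework uses: the function name `dropout` and its `rng` parameter are made up for this example. It assumes the "inverted dropout" convention, in which kept activations are divided by q so that their expected value stays the same; `tf.nn.dropout` scales kept values by 1/keep_prob in the same way.

```python
import random


def dropout(activations, p, rng=random):
    """Zero each activation with probability p; scale survivors by 1/q, q = 1 - p.

    Hypothetical helper, for illustration only.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    q = 1.0 - p
    out = []
    for a in activations:
        if rng.random() < p:
            out.append(0.0)    # neuron dropped for this pass
        else:
            out.append(a / q)  # neuron kept and rescaled
    return out


rng = random.Random(0)  # fixed seed so the run is repeatable
layer_output = [1.0, 2.0, 3.0, 4.0]
print(dropout(layer_output, p=0.5, rng=rng))
```

Every call draws a fresh random mask, which is why each mini-batch ends up training a different thinned network.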

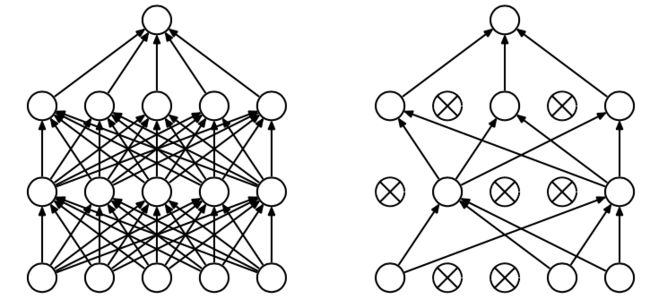

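Dropout addresses the cost of training many separate models: each time dropout is applied, it amounts to picking a thinner network out of the original one, as the figure illustrates.

The figure above visualizes Dropout: on the left is the network before Dropout is applied, and on the right is the same network with Dropout applied. A neural network with N nodes can therefore be viewed, once dropout is used, as an ensemble of 2^N models, while the number of parameters to train stays the same. This avoids the time cost of training each model separately.

For a more detailed introduction, see: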
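To make the count concrete, the sketch below enumerates every keep/drop pattern for a tiny layer. The helper name `all_masks` is invented for this example; a real network never enumerates the masks, it just samples one per training step.

```python
from itertools import product


def all_masks(n):
    """Return every keep/drop pattern for n units (1 = kept, 0 = dropped)."""
    return list(product((0, 1), repeat=n))


masks = all_masks(3)
print(len(masks))  # 2 ** 3 = 8 thinned networks, all sharing one set of weights
for mask in masks:
    print(mask)
```

The number of patterns doubles with every extra unit, but every pattern reuses the same weight matrix, so the parameter count does not grow.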

https://blog.csdn.net/stdcoutzyx/article/details/49022443

https://yq.aliyun.com/articles/68901

2. Using Dropout in a Two-Layer Neural Network

The full code is as follows:

# -*- coding: utf-8 -*-
# Written against the TensorFlow 1.x API. On TensorFlow 2.x, replace the import
# with `import tensorflow.compat.v1 as tf` and call `tf.disable_v2_behavior()`.
import tensorflow as tf
from sklearn.datasets import load_digits
# sklearn.cross_validation was removed in scikit-learn 0.20; use model_selection.
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelBinarizer

# load data
D = load_digits()
# print(D)  # keys include data, target, target_names
X = D.data           # (1797, 64)
Y = D.target         # (1797,)
Z = D.target_names   # [0 1 2 3 4 5 6 7 8 9], shape (10,)
print(X, X.shape)
print(Z, Z.shape)
y = LabelBinarizer().fit_transform(Y)  # one-hot (0/1) vectors, shape (1797, 10)
print(y, y.shape)
train_x, test_x, train_y, test_y = train_test_split(X, y, test_size=.3)

def add_layer(inputs, in_size, out_size, layer_name, activation_function=None):
    Weights = tf.Variable(tf.random_normal([in_size, out_size]), name='W')
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, name='b')
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    # keep each value with probability keep_prob (global placeholder defined below)
    Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)
    if activation_function is None:  # no activation function: the layer is linear
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b)
    tf.summary.histogram(layer_name + '/outputs', outputs)
    return outputs

# define placeholders for inputs to network
keep_prob = tf.placeholder(tf.float32)
xs = tf.placeholder(tf.float32, [None, 64])  # 8*8 images
ys = tf.placeholder(tf.float32, [None, 10])

# add layers (2-layer network: input -> hidden -> output)
hidden_layer = add_layer(xs, 64, 50, 'L1', activation_function=tf.nn.tanh)
Op = add_layer(hidden_layer, 50, 10, 'L2', activation_function=tf.nn.softmax)

# the loss between prediction and real data (cross-entropy)
loss = tf.reduce_mean(-tf.reduce_sum(ys * tf.log(Op),
                                     reduction_indices=[1]))
tf.summary.scalar('loss', loss)
train_step = tf.train.GradientDescentOptimizer(0.6).minimize(loss)

# summary writer goes in here
sess = tf.Session()
merged = tf.summary.merge_all()
train_writer = tf.summary.FileWriter("logsDO/train", sess.graph)
test_writer = tf.summary.FileWriter("logsDO/test", sess.graph)
sess.run(tf.global_variables_initializer())

for i in range(1000):
    # train with dropout: keep_prob 0.3 keeps 30% of the values
    sess.run(train_step, feed_dict={xs: train_x, ys: train_y, keep_prob: 0.3})
    if i % 50 == 0:
        # record loss with nothing dropped (keep_prob = 1)
        train_result = sess.run(merged, feed_dict={xs: train_x, ys: train_y, keep_prob: 1})
        test_result = sess.run(merged, feed_dict={xs: test_x, ys: test_y, keep_prob: 1})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)

In the code above, Dropout shows up in three places:

1) In add_layer(), dropout is applied to W*x+b:

Wx_plus_b = tf.matmul(inputs, Weights) + biases
Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)  # keep each value with probability keep_prob

2) A keep_prob placeholder is defined together with the other inputs:

keep_prob = tf.placeholder(tf.float32)
xs = tf.placeholder(tf.float32, [None, 64])  # 8*8
ys = tf.placeholder(tf.float32, [None, 10])

3) During training, the value fed in for keep_prob is changed:

for i in range(1000):
    # train with dropout: keep_prob 0.3 keeps 30% of the values and drops 70%
    sess.run(train_step, feed_dict={xs: train_x, ys: train_y, keep_prob: 0.3})
    if i % 50 == 0:
        # record loss with nothing dropped (keep_prob = 1)
        train_result = sess.run(merged, feed_dict={xs: train_x, ys: train_y, keep_prob: 1})
        test_result = sess.run(merged, feed_dict={xs: test_x, ys: test_y, keep_prob: 1})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)

Training uses keep_prob:0.3 (other values such as 0.5, 0.6, ... can also be used); in this experiment, 0.3 gave the better result.
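One way to see why it is reasonable to train with keep_prob below 1 and then record the loss with keep_prob:1 is that `tf.nn.dropout` scales the kept values by 1/keep_prob, so the dropped-out values average out, over many random masks, to the values without dropout. The pure-Python simulation below is a rough sketch of that argument. The names `scaled_dropout` and `mean_over_masks` and the numbers in `z` are invented for this example and are not taken from the experiment.

```python
import random


def scaled_dropout(values, keep_prob, rng):
    """Keep each value with probability keep_prob and scale it by 1/keep_prob."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


def mean_over_masks(values, keep_prob, trials, seed=0):
    """Average the dropped-out values over many independent random masks."""
    rng = random.Random(seed)
    totals = [0.0] * len(values)
    for _ in range(trials):
        for i, v in enumerate(scaled_dropout(values, keep_prob, rng)):
            totals[i] += v
    return [t / trials for t in totals]


z = [0.5, -1.0, 2.0]  # made-up pre-activation values
avg = mean_over_masks(z, keep_prob=0.3, trials=20000)
print(avg)  # close to z, up to sampling noise
```

This holds for the values that dropout is applied to; after a nonlinearity such as tanh or softmax the match is only approximate.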

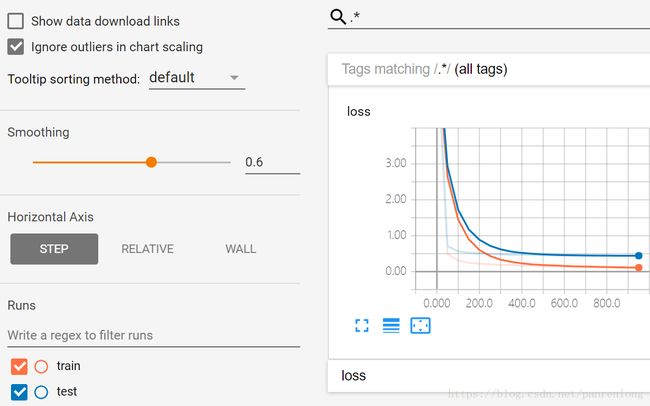

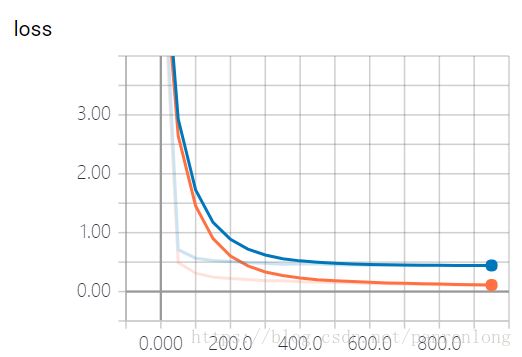

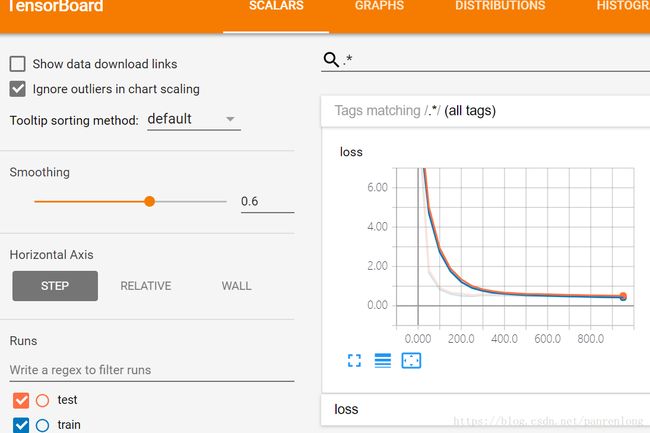

3. Viewing the Loss Curves

The code saves the graph and the summaries to the logsDO/ folder under the current directory:

train_writer = tf.summary.FileWriter("logsDO/train", sess.graph)
test_writer = tf.summary.FileWriter("logsDO/test", sess.graph)

Steps to view them:

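1) Run the code.

2) Open a command prompt ("Run", then cmd).

3) In the command window, type d: and press Enter (this switches to drive D).

4) Enter the command

tensorboard --logdir=ProgramFiles64\Python364\WorkSpaces\logsDO

and press Enter. After a moment it prints:

TensorBoard 0.4.0rc3 at http://DESKTOP-PAF8N1L:6006 (Press CTRL+C to quit)

5) Copy the URL http://DESKTOP-PAF8N1L:6006 into the Google Chrome browser (it would not open in the 360 browser when I tried) to open the graph, as shown in the figures below.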

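Figure 1: Results without Dropout

Figure 2: Results with Dropout

The difference between them shows in how closely the two curves in each figure overlap: with Dropout, the training loss and the test loss almost coincide.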