python环境下运用kafka对数据实时传输

背景:

为了满足各个平台间数据的传输,以及能确保历史性和实时性。先选用kafka作为不同平台数据传输的中转站,来满足我们对跨平台数据发送与接收的需要。

kafka简介:

Kafka is a distributed,partitioned,replicated commit logservice。它提供了类似于JMS的特性,但是在设计实现上完全不同,此外它并不是JMS规范的实现。kafka对消息保存时根据Topic进行归类,发送消息者成为Producer,消息接受者成为Consumer,此外kafka集群有多个kafka实例组成,每个实例(server)成为broker。无论是kafka集群,还是producer和consumer都依赖于zookeeper来保证系统可用性集群保存一些meta信息。

总之:kafka做为中转站有以下功能:1.生产者(产生数据或者说是从外部接收数据)2.消费着(将接收到的数据转花为自己所需用的格式)

环境:

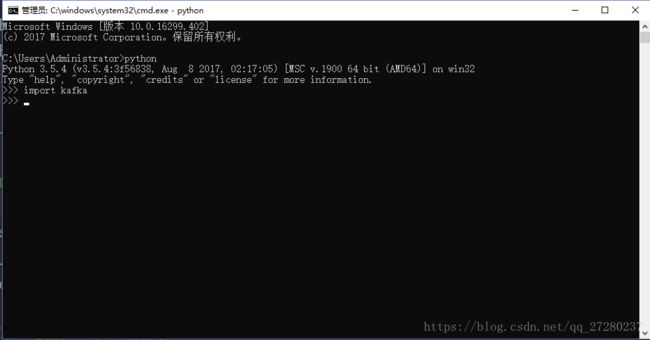

1.python3.5.x

2.kafka1.4.3

3.pandas

准备开始:

1.kafka的安装

pip install kafka-python2.检验kafka是否安装成功

3.pandas的安装

pip install pandas4.kafka数据的传输

直接撸代码:

# -*- coding: utf-8 -*-

'''

@author: 真梦行路

@file: kafka.py

@time: 2018/9/3 10:20

'''

import sys

import json

import pandas as pd

import os

from kafka import KafkaProducer

from kafka import KafkaConsumer

from kafka.errors import KafkaError

KAFAKA_HOST = "xxx.xxx.x.xxx" #服务器端口地址

KAFAKA_PORT = 9092 #端口号

KAFAKA_TOPIC = "topic0" #topic

data=pd.read_csv(os.getcwd()+'\\data\\1.csv')

key_value=data.to_json()

class Kafka_producer():

'''

生产模块:根据不同的key,区分消息

'''

def __init__(self, kafkahost, kafkaport, kafkatopic, key):

self.kafkaHost = kafkahost

self.kafkaPort = kafkaport

self.kafkatopic = kafkatopic

self.key = key

self.producer = KafkaProducer(bootstrap_servers='{kafka_host}:{kafka_port}'.format(

kafka_host=self.kafkaHost,

kafka_port=self.kafkaPort)

)

def sendjsondata(self, params):

try:

parmas_message = params #注意dumps

producer = self.producer

producer.send(self.kafkatopic, key=self.key, value=parmas_message.encode('utf-8'))

producer.flush()

except KafkaError as e:

print(e)

class Kafka_consumer():

def __init__(self, kafkahost, kafkaport, kafkatopic, groupid,key):

self.kafkaHost = kafkahost

self.kafkaPort = kafkaport

self.kafkatopic = kafkatopic

self.groupid = groupid

self.key = key

self.consumer = KafkaConsumer(self.kafkatopic, group_id=self.groupid,

bootstrap_servers='{kafka_host}:{kafka_port}'.format(

kafka_host=self.kafkaHost,

kafka_port=self.kafkaPort)

)

def consume_data(self):

try:

for message in self.consumer:

yield message

except KeyboardInterrupt as e:

print(e)

def sortedDictValues(adict):

items = adict.items()

items=sorted(items,reverse=False)

return [value for key, value in items]

def main(xtype, group, key):

'''

测试consumer和producer

'''

if xtype == "p":

# 生产模块

producer = Kafka_producer(KAFAKA_HOST, KAFAKA_PORT, KAFAKA_TOPIC, key)

print("===========> producer:", producer)

params =key_value

producer.sendjsondata(params)

if xtype == 'c':

# 消费模块

consumer = Kafka_consumer(KAFAKA_HOST, KAFAKA_PORT, KAFAKA_TOPIC, group,key)

print("===========> consumer:", consumer)

message = consumer.consume_data()

for msg in message:

msg=msg.value.decode('utf-8')

python_data=json.loads(msg) ##这是一个字典

key_list=list(python_data)

test_data=pd.DataFrame()

for index in key_list:

print(index)

if index=='Month':

a1=python_data[index]

data1 = sortedDictValues(a1)

test_data[index]=data1

else:

a2 = python_data[index]

data2 = sortedDictValues(a2)

test_data[index] = data2

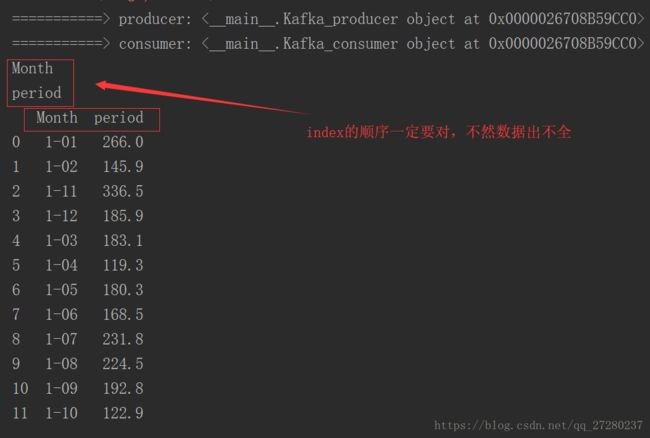

print(test_data)

# print('value---------------->', python_data)

# print('msg---------------->', msg)

# print('key---------------->', msg.kry)

# print('offset---------------->', msg.offset)

if __name__ == '__main__':

main(xtype='p',group='py_test',key=None)

main(xtype='c',group='py_test',key=None)

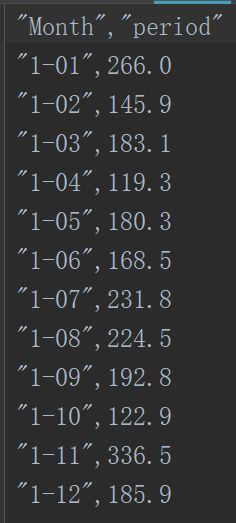

数据1.csv如下所示:

几点注意:

1.一定要有一个服务器的端口地址,不要用本机的ip或者乱写一个ip不然程序会报错。(我开始就是拿本机ip怼了半天,总是报错)

2.注意数据的传输格式以及编码问题(二进制传输),数据先转成json数据格式传输,然后将json格式转为需要格式。(不是json格式的注意dumps)

例中,dataframe->json->dataframe

3.例中dict转dataframe,也可以用简单方法直接转。

eg: type(data) ==>dict,data=pd.Dateframe(data)

参考文献:

1.kafka-python官方文档

2.https://blog.csdn.net/kuluzs/article/details/71171456