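Background

As required by my advisor, I use the Faster R-CNN algorithm to train models on the VOC2007 dataset and the competition dataset, then test on images and validate the results.

Paper walkthrough

Overall architecture

Faster R-CNN principles and explanations of related concepts

Learning references

tf-faster-rcnn configuration and training on your own data

Differences between CPU and GPU, how they work, and how to install tensorflow-gpu

Installing TensorFlow-GPU on Windows 10

Summary of running Faster-RCNN-TF on a GPU (with a self-prepared dataset)

Environment setup

GitHub code

Configuration reference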

Ubuntu 16.04 LTS

anaconda3

tensorflow1.2.1

python3.6.6

PyCharm Community Edition 2016.3

The conda list output for the CPU configuration is as follows

henry@henry-Rev-1-0:~$ source activate tensorflow

(tensorflow) henry@henry-Rev-1-0:~$ conda list

# packages in environment at /home/henry/anaconda3/envs/tensorflow:

#

# Name Version Build Channel

_tflow_180_select 3.0 eigen defaults

absl-py 0.2.2 py36_0 defaults

astor 0.6.2 py36_0 defaults

backports.weakref 1.0rc1

blas 1.0 mkl defaults

bleach 1.5.0 py36_0 defaults

bzip2 1.0.6 h14c3975_5 defaults

ca-certificates 2018.03.07 0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

cairo 1.14.12 h7636065_2 defaults

certifi 2018.4.16 py36_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

cffi 1.11.5 py36h9745a5d_0 defaults

cudatoolkit 9.0 h13b8566_0 defaults

cudnn 7.1.2 cuda9.0_0 defaults

cycler 0.10.0 py36h93f1223_0 defaults

Cython 0.28.4

dbus 1.13.2 h714fa37_1 defaults

easydict 1.6

expat 2.2.5 he0dffb1_0 defaults

ffmpeg 4.0 h04d0a96_0 defaults

fontconfig 2.12.6 h49f89f6_0 defaults

freetype 2.8 hab7d2ae_1 defaults

gast 0.2.0 py36_0 defaults

glib 2.56.1 h000015b_0 defaults

graphite2 1.3.11 h16798f4_2 defaults

grpcio 1.12.1 py36hdbcaa40_0 defaults

gst-plugins-base 1.14.0 hbbd80ab_1 defaults

gstreamer 1.14.0 hb453b48_1 defaults

h5py 2.8.0 py36ha1f6525_0 defaults

harfbuzz 1.7.6 h5f0a787_1 defaults

hdf5 1.10.2 hba1933b_1 defaults

html5lib 0.9999999 py36_0 defaults

icu 58.2 h9c2bf20_1 defaults

intel-openmp 2018.0.3 0 defaults

jasper 1.900.1 hd497a04_4 defaults

jpeg 9b h024ee3a_2 defaults

keras 2.2.0 0 defaults

keras-applications 1.0.2 py36_0 defaults

keras-base 2.2.0 py36_0 defaults

keras-preprocessing 1.0.1 py36_0 defaults

kiwisolver 1.0.1 py36h764f252_0 defaults

libedit 3.1.20170329 h6b74fdf_2 defaults

libffi 3.2.1 hd88cf55_4 defaults

libgcc-ng 7.2.0 hdf63c60_3 defaults

libgfortran-ng 7.2.0 hdf63c60_3 defaults

libopencv 3.4.1 h1a3b859_1 defaults

libopus 1.2.1 hb9ed12e_0 defaults

libpng 1.6.34 hb9fc6fc_0 defaults

libprotobuf 3.5.2 h6f1eeef_0 defaults

libstdcxx-ng 7.2.0 hdf63c60_3 defaults

libtiff 4.0.9 he85c1e1_1 defaults

libvpx 1.7.0 h439df22_0 defaults

libxcb 1.13 h1bed415_1 defaults

libxml2 2.9.8 h26e45fe_1 defaults

libxslt 1.1.32 h1312cb7_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

lxml 4.2.2 py36hf71bdeb_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

markdown 2.6.11 py36_0 defaults

matplotlib 2.2.2 py36h0e671d2_1 defaults

mkl 2018.0.3 1 defaults

mkl_fft 1.0.1 py36h3010b51_0 defaults

mkl_random 1.0.1 py36h629b387_0 defaults

nccl 1.3.5 cuda9.0_0 defaults

ncurses 6.1 hf484d3e_0 defaults

ninja 1.8.2 py36h6bb024c_1 defaults

numpy 1.14.5

numpy 1.14.5 py36hcd700cb_3 defaults

numpy-base 1.14.5 py36hdbf6ddf_3 defaults

opencv 3.4.1 py36h6fd60c2_2 defaults

opencv-python 3.4.1.15

openssl 1.0.2o h20670df_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

pcre 8.42 h439df22_0 defaults

Pillow 5.2.0

pip 10.0.1 py36_0 defaults

pixman 0.34.0 hceecf20_3 defaults

protobuf 3.5.2 py36hf484d3e_0 defaults

py-opencv 3.4.1 py36h0676e08_1 defaults

pycparser 2.18 py36hf9f622e_1 defaults

pyparsing 2.2.0 py36hee85983_1 defaults

pyqt 5.9.2 py36h751905a_0 defaults

python 3.6.6 hc3d631a_0 defaults

python-dateutil 2.7.3 py36_0 defaults

pytorch 0.4.0 py36hdf912b8_0 defaults

pytz 2018.5 py36_0 defaults

pyyaml 3.12 py36hafb9ca4_1 defaults

qt 5.9.5 h7e424d6_0 defaults

readline 7.0 ha6073c6_4 defaults

scipy 1.1.0 py36hfc37229_0 defaults

setuptools 39.2.0 py36_0 defaults

sip 4.19.8 py36hf484d3e_0 defaults

six 1.11.0 py36h372c433_1 defaults

sqlite 3.24.0 h84994c4_0 defaults

tensorboard 1.8.0 py36hf484d3e_0 defaults

tensorflow 1.2.1

tensorflow 1.8.0 h57681fa_0 defaults

tensorflow-base 1.8.0 py36h5f64886_0 defaults

termcolor 1.1.0 py36_1 defaults

tk 8.6.7 hc745277_3 defaults

tornado 5.0.2 py36_0 defaults

werkzeug 0.14.1 py36_0 defaults

wheel 0.31.1 py36_0 defaults

xz 5.2.4 h14c3975_4 defaults

yaml 0.1.7 had09818_2 defaults

zlib 1.2.11 ha838bed_2 defaults

The conda list output for the GPU configuration is as follows

(py36) ouc@ouc-yzb:~/LiuHongzhi/tf-faster-rcnn$ conda list

# packages in environment at /home/ouc/anaconda3/envs/py36:

#

# Name Version Build Channel

_tflow_180_select 3.0 eigen

absl-py 0.2.2 py36_0

astor 0.6.2 py36_1

backports 1.0 py36_1

backports.weakref 1.0rc1 py36_0

binutils_impl_linux-64 2.28.1 had2808c_3

binutils_linux-64 7.2.0 had2808c_27

blas 1.0 mkl

bleach 1.5.0 py36_0

ca-certificates 2018.03.07 0

certifi 2018.4.16 py36_0

cudatoolkit 8.0 3

cudnn 6.0.21 cuda8.0_0

cycler 0.10.0 py36_0

cython 0.28.3 py36h14c3975_0

dbus 1.13.2 h714fa37_1

easydict 1.6

enum34 1.1.6

expat 2.2.5 he0dffb1_0

fontconfig 2.13.0 h9420a91_0

freetype 2.9.1 h8a8886c_0

gast 0.2.0 py36_0

gcc_impl_linux-64 7.2.0 habb00fd_3

gcc_linux-64 7.2.0 h550dcbe_27

glib 2.56.1 h000015b_0

grpcio 1.12.1 py36hdbcaa40_0

gst-plugins-base 1.14.0 hbbd80ab_1

gstreamer 1.14.0 hb453b48_1

gxx_impl_linux-64 7.2.0 hdf63c60_3

gxx_linux-64 7.2.0 h550dcbe_27

h5py 2.8.0 py36h8d01980_0

hdf5 1.10.2 hba1933b_1

html5lib 0.9999999 py36_0

icu 58.2 h9c2bf20_1

intel-openmp 2018.0.3 0

jpeg 9b h024ee3a_2

Keras 2.1.2

keras-applications 1.0.2 py36_0

keras-base 2.2.0 py36_0

keras-preprocessing 1.0.1 py36_0

kiwisolver 1.0.1 py36hf484d3e_0

libedit 3.1.20170329 h6b74fdf_2

libffi 3.2.1 hd88cf55_4

libgcc 7.2.0 h69d50b8_2

libgcc-ng 7.2.0 hdf63c60_3

libgfortran-ng 7.2.0 hdf63c60_3

libgpuarray 0.7.6 h14c3975_0

libpng 1.6.34 hb9fc6fc_0

libprotobuf 3.5.2 h6f1eeef_0

libstdcxx-ng 7.2.0 hdf63c60_3

libtiff 4.0.9 he85c1e1_1

libuuid 1.0.3 h1bed415_2

libxcb 1.13 h1bed415_1

libxml2 2.9.8 h26e45fe_1

mako 1.0.7 py36_0

markdown 2.6.11 py36_0

markupsafe 1.0 py36h14c3975_1

matplotlib 2.2.2 py36hb69df0a_2

mkl 2018.0.3 1

mkl-service 1.1.2 py36h651fb7a_4

mkl_fft 1.0.2 py36h651fb7a_0

mkl_random 1.0.1 py36h4414c95_1

ncurses 6.1 hf484d3e_0

numpy 1.14.5 py36h1b885b7_4

numpy-base 1.14.5 py36hdbf6ddf_4

olefile 0.45.1 py36_0

opencv3 3.1.0 py36_0 menpo

openssl 1.0.2o h20670df_0

pcre 8.42 h439df22_0

pillow 5.1.0 py36heded4f4_0

pip 10.0.1 py36_0

pip 18.0

protobuf 3.5.2 py36hf484d3e_1

pygpu 0.7.6 py36h035aef0_0

pyparsing 2.2.0 py36_1

pyqt 5.9.2 py36h22d08a2_0

python 3.6.6 hc3d631a_0

python-dateutil 2.7.3 py36_0

pytz 2018.5 py36_0

pyyaml 3.12 py36h14c3975_1

qt 5.9.6 h52aff34_0

readline 7.0 ha6073c6_4

scipy 1.1.0 py36hc49cb51_0

setuptools 39.2.0 py36_0

setuptools 39.1.0

sip 4.19.8 py36hf484d3e_0

six 1.11.0 py36_1

sqlite 3.24.0 h84994c4_0

tensorflow-gpu 1.4.0

tensorflow-tensorboard 0.4.0

termcolor 1.1.0 py36_1

theano 1.0.2 py36h6bb024c_0

tk 8.6.7 hc745277_3

tornado 5.0.2 py36h14c3975_0

werkzeug 0.14.1 py36_0

wheel 0.31.1 py36_0

xz 5.2.4 h14c3975_4

yaml 0.1.7 had09818_2

zlib 1.2.11 ha838bed_2

Install CUDA 8.0 in the Anaconda virtual environment

conda install cudatoolkit=8.0 -c https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/linux-64/

Install cuDNN in the Anaconda virtual environment

conda install cudnn=7.0.5 -c https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/linux-64/

Reference: creating a conda virtual environment on Ubuntu and installing CUDA, cuDNN, and PyTorch

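1. Anaconda

Official download page

Environment migration

Getting-started guide to Anaconda

Recommended version: Anaconda 5.2 for Linux installer

Python 3.6 version

- Move the downloaded .sh installer script to the folder of your choice and run it from that folder (use the file name of the version you actually downloaded)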

bash ./Anaconda3-5.0.0-Linux-x86_64.sh

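When asked whether to add Anaconda's bin directory to your environment variables, choose yes. The installation is then complete.

- Run the following command to create the environment; tensorflow is the environment name, which you can choose freely.

conda create -n tensorflow python=3.6

- Activate the conda environment; tensorflow is the environment name

source activate tensorflow

- Commands to check the TensorFlow version inside the tensorflow environment

python

import tensorflow as tf

tf.__version__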

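- List the packages installed in the tensorflow environment

conda list

- Install a package, for example matplotlib, in the tensorflow environment

conda install matplotlib

- Update a package, for example matplotlib, in the tensorflow environment

conda update matplotlib

- Remove a package, for example matplotlib, from the tensorflow environment

conda remove matplotlib

- Install CUDA through conda

conda install cudatoolkit=8.0 -c https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/linux-64/

conda install cudnn=7.0.5 -c https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/linux-64/

Reference: creating a conda virtual environment on Ubuntu and installing CUDA, cuDNN, and PyTorch

2. TensorFlow

- Anaconda mirror usage guide: TUNA also provides a mirror of the Anaconda repositories. Run the following commands: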

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/

conda config --set show_channel_urls yes

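- TensorFlow mirror usage guide

TensorFlow mirror - CUDA 8.0 download page

CUDA8.0

Running the demo

Configuration reference

- Install the specified TensorFlow version; the code supports version 1.2

pip install -I tensorflow==1.2.1

- Download the tf-faster-rcnn code

git clone https://github.com/endernewton/tf-faster-rcnn.git

Setting up Git and GitHub

Using GitHub on Ubuntu

Running the demo on the CPU version

- Edit tf-faster-rcnn/lib/model/nms_wrapper.py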

    from model.config import cfg
    # from nms.gpu_nms import gpu_nms
    from nms.cpu_nms import cpu_nms

    def nms(dets, thresh, force_cpu=False):
        """Dispatch to either CPU or GPU NMS implementations."""
        if dets.shape[0] == 0:
            return []
        # CPU-only build: always use the CPU implementation
        return cpu_nms(dets, thresh)
        # if cfg.USE_GPU_NMS and not force_cpu:
        #     return gpu_nms(dets, thresh, device_id=0)
        # else:
        #     return cpu_nms(dets, thresh)

- Edit tf-faster-rcnn/lib/model/config.py and set

__C.USE_GPU_NMS = False

- Comment out the following CUDA-related code in tf-faster-rcnn/lib/setup.py

CUDA = locate_cuda()

self.src_extensions.append('.cu')

Extension('nms.gpu_nms',

['nms/nms_kernel.cu', 'nms/gpu_nms.pyx'],

library_dirs=[CUDA['lib64']],

libraries=['cudart'],

language='c++',

runtime_library_dirs=[CUDA['lib64']],

# this syntax is specific to this build system

# we're only going to use certain compiler args with nvcc and not with gcc

# the implementation of this trick is in customize_compiler() below

extra_compile_args={'gcc': ["-Wno-unused-function"],

'nvcc': ['-arch=sm_52',

'--ptxas-options=-v',

'-c',

'--compiler-options',

"'-fPIC'"]},

include_dirs = [numpy_include, CUDA['include']]

- Go to tf-faster-rcnn/lib and build the Cython modules; if the demo fails later, rebuild from this step

cd tf-faster-rcnn/lib

make clean

make

cd ..

Adjust the parameter settings in tf-faster-rcnn/lib/setup.py

extra_compile_args={'gcc': ["-Wno-unused-function"],

'nvcc': ['-arch=sm_61', # change this value

'--ptxas-options=-v',

'-c',

'--compiler-options',

"'-fPIC'"]},

include_dirs = [numpy_include, CUDA['include']]

- Install the Python COCO API:

cd data

git clone https://github.com/pdollar/coco.git

cd coco/PythonAPI

make

cd ../../..

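- Download the pretrained model voc_0712_80k-110k.tgz; extracting it yields 4 files

./data/scripts/fetch_faster_rcnn_models.sh

Save them under tf-faster-rcnn/output/vgg16/voc_2007_trainval+voc_2012_trainval/default

- Run the demo to test with the pretrained model

./tools/demo.py

I recommend debugging in PyCharm and fixing missing packages or errors as they appear

After it runs you can see the detections on the test images

Training the model on a GPU server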

-

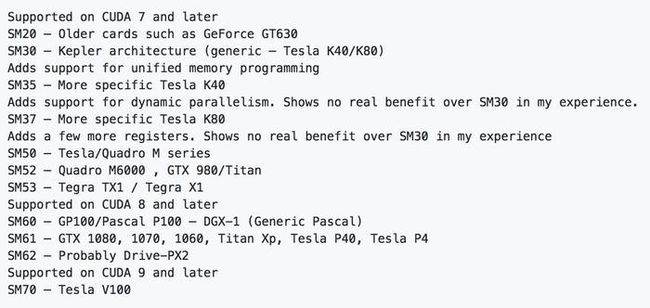

首先根据GPU的型号来修改计算能力(Architecture)

实验室服务器使用GTX1080,修改sm_52为sm_61
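The architecture flag follows directly from the GPU's compute capability. The following is a minimal sketch, assuming a hypothetical helper `arch_flag` and a small lookup table that are not part of tf-faster-rcnn; the GTX 1080 entry matches the value used above, and other cards should be checked against NVIDIA's documentation.

```python
# Hypothetical helper, not part of tf-faster-rcnn: derive the nvcc -arch flag
# from a GPU's compute capability.
COMPUTE_CAPABILITY = {
    "GTX 1080": "6.1",   # Pascal, the lab server's card
    "GTX 980": "5.2",    # Maxwell, matches the default sm_52
    "Tesla K80": "3.7",  # Kepler
}


def arch_flag(compute_capability):
    """Turn a compute capability such as '6.1' into '-arch=sm_61'."""
    major, minor = compute_capability.split(".")
    return "-arch=sm_{}{}".format(major, minor)


if __name__ == "__main__":
    for gpu, cc in COMPUTE_CAPABILITY.items():
        print(gpu, arch_flag(cc))
```

The resulting string is what goes into the nvcc list of extra_compile_args in lib/setup.py.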

- Go to tf-faster-rcnn/lib and build the Cython modules; if the demo fails later, rebuild from this step

cd tf-faster-rcnn/lib

make clean

make

cd ..

- Install the Python COCO API:

cd data

git clone https://github.com/pdollar/coco.git

cd coco/PythonAPI

make

cd ../../..

- Download the pretrained model

VGG16 model

The target path is data/imagenet_weights; run the following commands from the tf-faster-rcnn directory

mkdir -p data/imagenet_weights

cd data/imagenet_weights

wget -v http://download.tensorflow.org/models/vgg_16_2016_08_28.tar.gz

tar -xzvf vgg_16_2016_08_28.tar.gz

mv vgg_16.ckpt vgg16.ckpt

cd ../..

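- Prepare the training data

The dataset must follow the VOC2007 dataset format

JPEGImages: holds the original training images. File names must be six-digit numbers, for example 000034.jpg; images must be in JPEG/JPG format; and the aspect ratio (width/height) must lie between 0.462 and 6.828.

Annotations: holds the coordinates of the objects in each image. Every training image has an XML file of the same name under Annotations.

ImageSets/Main: lists the image IDs used for train, trainval, val, and test. Because the VOC dataset supports many computer vision tasks, such as object detection, semantic segmentation, and edge detection, ImageSets contains several subfolders (Layout, Main, Segmentation). Edit the files under Main (train.txt, trainval.txt, val.txt, test.txt) so that they list the IDs of the images for your task.

Place the dataset under tf-faster-rcnn/data/VOCdevkit2007/VOC2007, replacing the original VOC2007 JPEGImages, ImageSets, and Annotations folders; alternatively, simply rename the folders.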
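The naming and aspect-ratio constraints above can be checked before training. The following is a minimal sketch; the helper names `is_valid_name`, `aspect_ok`, and `image_ids` are hypothetical and not part of tf-faster-rcnn, and the image sizes are assumed to be read elsewhere (for example with PIL).

```python
import re

# Aspect-ratio bounds (width / height) quoted in the dataset requirements.
MIN_RATIO, MAX_RATIO = 0.462, 6.828


def is_valid_name(filename):
    """True for six-digit JPEG names such as 000034.jpg."""
    return re.fullmatch(r"\d{6}\.(jpg|jpeg)", filename, re.IGNORECASE) is not None


def aspect_ok(width, height):
    """True when width / height lies inside the allowed range."""
    return MIN_RATIO <= width / height <= MAX_RATIO


def image_ids(filenames):
    """Return the sorted IDs (names without extension) to list in ImageSets/Main/*.txt."""
    return sorted(name.rsplit(".", 1)[0] for name in filenames if is_valid_name(name))
```

Writing the output of `image_ids` one ID per line gives the content expected in train.txt, trainval.txt, val.txt, and test.txt.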

VOC2007 dataset download links

wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtrainval_06-Nov-2007.tar

wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtest_06-Nov-2007.tar

wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCdevkit_08-Jun-2007.tar

Extract the archives in the current folder; a VOCdevkit folder is created automatically.

tar xvf VOCtrainval_06-Nov-2007.tar

tar xvf VOCtest_06-Nov-2007.tar

tar xvf VOCdevkit_08-Jun-2007.tar

- Train the model

./experiments/scripts/train_faster_rcnn.sh [GPU_ID] [DATASET] [NET]

# GPU_ID is the GPU you want to test on

# NET in {vgg16, res50, res101, res152} is the network arch to use

# DATASET {pascal_voc, pascal_voc_0712, coco} is defined in train_faster_rcnn.sh

# Examples:

./experiments/scripts/train_faster_rcnn.sh 0 pascal_voc vgg16

./experiments/scripts/train_faster_rcnn.sh 1 coco res101

- Check convergence with TensorBoard

tensorboard --logdir=tensorboard/vgg16/voc_2007_trainval/ --port=7001

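- The 4 files of the trained model are saved in tf-faster-rcnn/output/vgg16/voc_2007_trainval+voc_2012_trainval/default

output/[NET]/[DATASET]/default/

Replace the model files with the trained ones and run the demo to see the result

To train on your own dataset, make sure the JPEGImages, Annotations, and ImageSets/Main contents follow the same layout as the VOC07 dataset.

Edit tf-faster-rcnn/lib/datasets/pascal_voc.py so that classes matches your own dataset; the names inside the single quotes ' ' are the objects to detect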

self._classes = ('__background__', # always index 0

'aeroplane', 'bicycle', 'bird', 'boat',

'bottle', 'bus', 'car', 'cat', 'chair',

'cow', 'diningtable', 'dog', 'horse',

'motorbike', 'person', 'pottedplant',

'sheep', 'sofa', 'train', 'tvmonitor')
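For a custom dataset the tuple is replaced by the dataset's own labels, and the class-to-index lookup follows from it. The following is a minimal sketch, assuming a hypothetical four-class underwater dataset; `build_class_index` is an illustrative helper, not a tf-faster-rcnn function.

```python
# Hypothetical class tuple for a four-class dataset; index 0 stays reserved
# for the background, as in the VOC tuple.
CLASSES = ('__background__', 'holothurian', 'echinus', 'scallop', 'starfish')


def build_class_index(classes):
    """Map each class name to its integer label."""
    return dict(zip(classes, range(len(classes))))


class_to_ind = build_class_index(CLASSES)
num_classes = len(CLASSES)  # background included
```

Every name that appears in the annotation XML files has to be a key of this mapping, otherwise loading the annotations raises a KeyError.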

Before each training run, delete the two folders tf-faster-rcnn/data/cache and tf-faster-rcnn/output (where the output models are stored; it does not exist until you have trained).

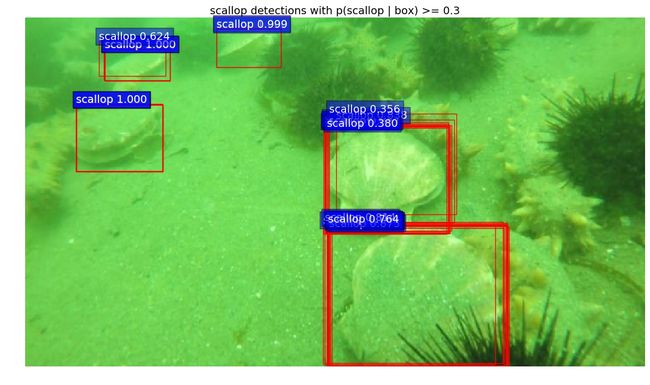

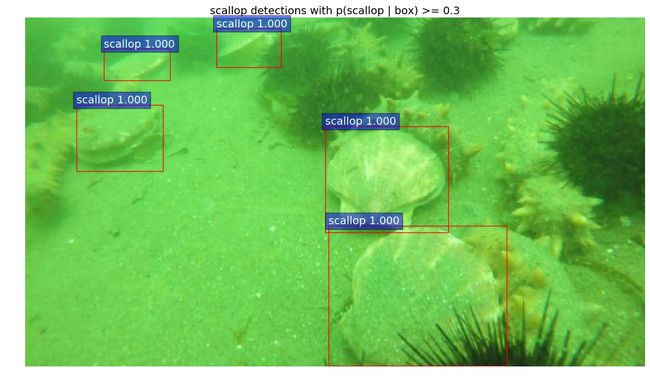

tf-faster-rcnn testing procedure

1. Run demo2.py to iterate over the test images and draw boxes around the detected objects.

Test images are stored at /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/data/demo/*.jpg

The model is stored in /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/output/vgg16/voc_2007_trainval+voc_2012_trainval/default/, which contains 4 files.

Output images are written to /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/testfigs/*.jpg. Note that the testfigs folder must be created before running.

2. Run demo3.py to iterate over the test images and write out a results table.

Test images are stored at /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/data/demo/*.jpg.

The list of images to test is at /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/data/VOCdevkit2007/contest/test.txt.

The model is stored in /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/output/vgg16/voc_2007_trainval+voc_2012_trainval/default/, which contains 4 files.

The results are written to /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/result.txt.

The output format is

1 1 0.377665907145 115.43637085 410.561065674 402.517791748 479.0
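A line in this format can be read back with a few lines of Python. The sketch below rests on an assumption: the source does not name the seven columns, so the layout (image index, class index, score, xmin, ymin, xmax, ymax) is a guess based on the sample values, and `parse_result_line` is a hypothetical helper.

```python
from collections import namedtuple

# Assumed field layout; the columns are not named in the original text.
Detection = namedtuple("Detection", "image_id class_id score xmin ymin xmax ymax")


def parse_result_line(line):
    """Split one whitespace-separated result line into typed fields."""
    parts = line.split()
    if len(parts) != 7:
        raise ValueError("expected 7 fields, got {}".format(len(parts)))
    return Detection(int(parts[0]), int(parts[1]), *map(float, parts[2:]))
```

If the competition specification orders the columns differently, only the field names in the namedtuple need to change.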

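A brief overview of the tf-faster-rcnn project directory

data: holds the datasets and the cache of files that have been read;

experiments: holds the configuration files and the run logs

lib: Python interface

output: where the output models are stored; it does not exist until you have trained

tensorboard: visualization data

tools: Python scripts for training and testing

Reference: saving Faster R-CNN detection results to txt and then converting them to xml

Problems encountered during training

- 1. An error when training on my own dataset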

File "/home/hope/jhson/caffe/py-faster-rcnn2/tools/../lib/datasets/imdb.py", line 67, in roidb

self._roidb = self.roidb_handler()

File "/home/hope/jhson/caffe/py-faster-rcnn2/tools/../lib/datasets/pascal_voc.py", line 103, in gt_roidb

for index in self.image_index]

File "/home/hope/jhson/caffe/py-faster-rcnn2/tools/../lib/datasets/pascal_voc.py", line 208, in _load_pascal_annotation

cls = self._class_to_ind[obj.find('name').text.lower().strip()]

KeyError: 'chair'

First check the contents of self._classes in tf-faster-rcnn/lib/datasets/pascal_voc.py

Then look for code similar to the following

objs = diff_objs (or non_diff_objs)

and add this code below it

cls_objs = [obj for obj in objs if obj.find('name').text in self._classes]

objs = cls_objs

This usually resolves the problem
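The effect of that filter can be tried outside the training code. The following is a minimal sketch using the standard-library XML parser; the class tuple, the sample annotation, and the helper `known_objects` are made up for illustration.

```python
import xml.etree.ElementTree as ET

CLASSES = ('__background__', 'holothurian', 'echinus')  # hypothetical subset

# Made-up annotation with one object ('chair') that is not in CLASSES.
SAMPLE_XML = """<annotation>
  <object><name>holothurian</name></object>
  <object><name>chair</name></object>
  <object><name>echinus</name></object>
</annotation>"""


def known_objects(xml_text, classes):
    """Return only the <object> elements whose <name> is in classes."""
    objs = ET.fromstring(xml_text).findall('object')
    return [obj for obj in objs if obj.find('name').text in classes]


kept = [obj.find('name').text for obj in known_objects(SAMPLE_XML, CLASSES)]
print(kept)
```

Objects with unknown labels are dropped silently, so it is worth counting how many are skipped to catch labelling mistakes.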

- 2. An error when training on my own dataset

File "/py-faster-rcnn/tools/../lib/datasets/imdb.py", line 108, in append_flipped_images

assert (boxes[:, 2] >= boxes[:, 0]).all()

AssertionError

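Checking my data showed that the top-left coordinate (x, y) may be 0, or the annotated region may extend beyond the image. Faster R-CNN subtracts 1 from Xmin, Ymin, Xmax, and Ymax, so if Xmin is 0 it becomes 65535 after the subtraction (the value an unsigned 16-bit integer wraps around to).

a. In lib/datasets/imdb.py, modify the append_flipped_images() function

To sanitize the data, below the line

boxes[:, 2] = widths[i] - oldx1 - 1

add the following code:

    for b in range(len(boxes)):
        if boxes[b][2] < boxes[b][0]:
            boxes[b][0] = 0

b. In lib/datasets/pascal_voc.py, modify the _load_pascal_annotation() function

Remove the - 1 applied to Xmin, Ymin, Xmax, and Ymax in the code below (shown as in the original source):

    for ix, obj in enumerate(objs):
        bbox = obj.find('bndbox')
        # Make pixel indexes 0-based
        x1 = float(bbox.find('xmin').text) - 1
        y1 = float(bbox.find('ymin').text) - 1
        x2 = float(bbox.find('xmax').text) - 1
        y2 = float(bbox.find('ymax').text) - 1
        cls = self._class_to_ind[obj.find('name').text.lower().strip()]

See also: Faster RCNN coordinate problem analysis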
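The wrap-around behind this assertion can be reproduced with plain integers. The sketch below assumes the boxes are stored as unsigned 16-bit integers, which the value 65535 suggests, and models that storage with a modulo; `as_uint16` and `flip_box` are hypothetical helpers that mirror the flip formula quoted above.

```python
UINT16 = 1 << 16  # number of values an unsigned 16-bit integer can hold


def as_uint16(value):
    """Model storing an integer in an unsigned 16-bit slot (wraps around)."""
    return value % UINT16


def flip_box(x1, x2, width):
    """Mirror a box horizontally: new_x1 = width - x2 - 1, new_x2 = width - x1 - 1."""
    return as_uint16(width - x2 - 1), as_uint16(width - x1 - 1)


# Xmin = 0 in the XML wraps around after the "- 1".
wrapped_xmin = as_uint16(0 - 1)

# A box whose Xmax (650) lies beyond a 640-pixel-wide image.
x1, x2 = as_uint16(100 - 1), as_uint16(650 - 1)
new_x1, new_x2 = flip_box(x1, x2, width=640)
assertion_holds = new_x2 >= new_x1  # the check made in append_flipped_images()
```

In this example the flipped left edge wraps to a very large value while the right edge stays small, which is exactly the situation the added loop in step a resets.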

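- 3. TensorBoard visualization

TensorBoard is TensorFlow's visualization tool. It shows the whole network structure and displays the summary data collected during training, including scalars, images, audio, computation graphs, data distributions, histograms, and embeddings.

Run in a terminal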

tensorboard --logdir=tensorboard/vgg16/voc_2007_trainval/ --port=6006

(tensorflow) henry@henry-Rev-1-0:~$ tensorboard --logdir=tensorboard/vgg16/voc_2007_trainval/ --port=6006

Starting TensorBoard b'54' at http://henry-Rev-1-0:6006

(Press CTRL+C to quit)

WARNING:tensorflow:Found more than one graph event per run, or there was a metagraph containing a graph_def, as well as one or more graph events. Overwriting the graph with the newest event.

WARNING:tensorflow:Found more than one metagraph event per run. Overwriting the metagraph with the newest event.

In this project my TensorBoard logs are saved under /home/henry/tensorboard. As long as the directory structure is correct, opening http://henry-Rev-1-0:6006 in a browser displays the results.

4. File structure of the URPC competition dataset

- Annotation
  - train
    - G0024172: 1800 files, 000000.xml-001799.xml
    - G0024173: 1800 files, 000000.xml-001799.xml
    - G0024174: 1800 files, 000000.xml-001799.xml
    - YDXJ0003: 7755 files, 000000.xml-007754.xml
    - YDXJ0013: 4500 files, 000000.xml-004499.xml
  - test
    - YDXJ0012: 1327 files, 000000.xml-001326.xml
- ImageSets
  - Layout
    - test.txt: 1327 entries in ascending order
    - train.txt: 17655 entries in ascending order
    - val.txt: same as test.txt
- JPEGImages
  - *.jpg
    - G0024172: 1800 images, 000000.jpg-001799.jpg
    - G0024173: 1800 images, 000000.jpg-001799.jpg
    - G0024174: 1800 images, 000000.jpg-001799.jpg
    - YDXJ0003: 7755 images, 000000.jpg-007754.jpg
    - YDXJ0013: 4500 images, 000000.jpg-004499.jpg

5. Training on my own dataset

Reference: tf-faster-rcnn configuration and training on your own data

6. Running ./tools/demo.py raises an error

terminate called after throwing an instance of 'std::bad_alloc'

what(): std::bad_alloc

Process finished with exit code 134 (interrupted by signal 6: SIGABRT)

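Cause:

This error occurs when the program handles too much data at run time. The code frequently allocates arrays with new and repeatedly calls malloc(), so available memory keeps shrinking until there is not enough left to satisfy a new allocation, and the program aborts.

How to address it:

free -m # check memory usage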

relaybot@ubuntu:~/swap$ free -m

total used free shared buffers cached

Mem: 7916 7459 456 95 20 1404

-/+ buffers/cache: 6034 1881

Swap: 0 0 0

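When a similar error appears, reboot the machine and run only PyCharm, or run demo.py from a terminal; this resolves the problem.

Reference: fixing program termination caused by insufficient memory

- 7. An error caused by not updating part of demo.py after switching datasets

Error output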

Traceback (most recent call last):

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 1139, in _do_call

return fn(*args)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 1121, in _run_fn

status, run_metadata)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/contextlib.py", line 88, in __exit__

next(self.gen)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/framework/errors_impl.py", line 466, in raise_exception_on_not_ok_status

pywrap_tensorflow.TF_GetCode(status))

tensorflow.python.framework.errors_impl.InvalidArgumentError: Assign requires shapes of both tensors to match. lhs shape= [84] rhs shape= [16]

[[Node: save/Assign = Assign[T=DT_FLOAT, _class=["loc:@vgg_16/bbox_pred/biases"], use_locking=true, validate_shape=true, _device="/job:localhost/replica:0/task:0/cpu:0"](vgg_16/bbox_pred/biases, save/RestoreV2)]]

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/henry/File/tf-faster-rcnn-contest/tools/demo.py", line 153, in

saver.restore(sess, tfmodel)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 1548, in restore

{self.saver_def.filename_tensor_name: save_path})

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 789, in run

run_metadata_ptr)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 997, in _run

feed_dict_string, options, run_metadata)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 1132, in _do_run

target_list, options, run_metadata)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/client/session.py", line 1152, in _do_call

raise type(e)(node_def, op, message)

tensorflow.python.framework.errors_impl.InvalidArgumentError: Assign requires shapes of both tensors to match. lhs shape= [84] rhs shape= [16]

[[Node: save/Assign = Assign[T=DT_FLOAT, _class=["loc:@vgg_16/bbox_pred/biases"], use_locking=true, validate_shape=true, _device="/job:localhost/replica:0/task:0/cpu:0"](vgg_16/bbox_pred/biases, save/RestoreV2)]]

Caused by op 'save/Assign', defined at:

File "/home/henry/File/tf-faster-rcnn-contest/tools/demo.py", line 152, in

saver = tf.train.Saver()

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 1139, in __init__

self.build()

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 1170, in build

restore_sequentially=self._restore_sequentially)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 691, in build

restore_sequentially, reshape)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 419, in _AddRestoreOps

assign_ops.append(saveable.restore(tensors, shapes))

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/training/saver.py", line 155, in restore

self.op.get_shape().is_fully_defined())

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/ops/state_ops.py", line 271, in assign

validate_shape=validate_shape)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/ops/gen_state_ops.py", line 45, in assign

use_locking=use_locking, name=name)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/framework/op_def_library.py", line 767, in apply_op

op_def=op_def)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 2506, in create_op

original_op=self._default_original_op, op_def=op_def)

File "/home/henry/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 1269, in __init__

self._traceback = _extract_stack()

InvalidArgumentError (see above for traceback): Assign requires shapes of both tensors to match. lhs shape= [84] rhs shape= [16]

[[Node: save/Assign = Assign[T=DT_FLOAT, _class=["loc:@vgg_16/bbox_pred/biases"], use_locking=true, validate_shape=true, _device="/job:localhost/replica:0/task:0/cpu:0"](vgg_16/bbox_pred/biases, save/RestoreV2)]]

Process finished with exit code 1

Cause

net.create_architecture("TEST", 21,tag='default', anchor_scales=[8, 16, 32])

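21 is the 20 VOC classes plus background, but my own dataset has only 3 classes. The error comes from a mismatch between the model and the test-time parameters (84 = 4 × 21 and 16 = 4 × 4, four box regression values per class), so change the call as follows: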

net.create_architecture("TEST", 4,tag='default', anchor_scales=[8, 16, 32])

With that the problem is solved and testing runs normally, with the following output:

Loaded network output/vgg16/voc_2007_trainval+voc_2012_trainval/default/vgg16_faster_rcnn_iter_70000.ckpt

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

Demo for data/demo/000337.jpg

Detection took 29.147s for 300 object proposals

Process finished with exit code 0
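The two shapes in the error message can be turned back into class counts, which makes this kind of mismatch quick to diagnose. The following is a small worked sketch; the helper names are hypothetical, and it assumes four box regression values per class in the bbox_pred layer.

```python
VALUES_PER_CLASS = 4  # four box regression values per class


def bbox_pred_size(num_classes):
    """Length of the bbox_pred bias vector for a given number of classes."""
    return VALUES_PER_CLASS * num_classes


def classes_from_bias_shape(size):
    """Recover the class count (background included) from a bias length."""
    if size % VALUES_PER_CLASS:
        raise ValueError("bias length {} is not a multiple of 4".format(size))
    return size // VALUES_PER_CLASS


graph_classes = classes_from_bias_shape(84)       # network built by demo.py
checkpoint_classes = classes_from_bias_shape(16)  # trained checkpoint
```

Here the graph was built for 21 classes while the checkpoint was trained with 4, so the class count passed to create_architecture has to be 4.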

- 8. Add code that opens the camera with OpenCV and runs detection.

    # im_names = ['000456.jpg', '000542.jpg', '001150.jpg',
    #             '001763.jpg', '004545.jpg']  # default
    # im_names = ['000023.jpg']
    # for im_name in im_names:
    #     print('~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~')
    #     print('Demo for data/demo/{}'.format(im_name))
    #     demo(sess, net, im_name)

    videoCapture = cv2.VideoCapture(0)
    print('~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~')
    while True:
        ret, im = videoCapture.read()
        if not ret:  # stop when no frame could be read
            break
        cv2.imshow("capture", im)
        # print('Demo for data/demo/{}'.format(im))
        demo(sess, net, im)
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break
    videoCapture.release()
    cv2.destroyAllWindows()
    plt.show()

- 9. ZeroDivisionError when training my own model.

Fix VGG16 layers..

Fixed.

Traceback (most recent call last):

File "./tools/trainval_net.py", line 139, in

max_iters=args.max_iters)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-release/tools/../lib/model/train_val.py", line 377, in train_net

sw.train_model(sess, max_iters)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-release/tools/../lib/model/train_val.py", line 278, in train_model

blobs = self.data_layer.forward()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-release/tools/../lib/roi_data_layer/layer.py", line 87, in forward

blobs = self._get_next_minibatch()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-release/tools/../lib/roi_data_layer/layer.py", line 83, in _get_next_minibatch

return get_minibatch(minibatch_db, self._num_classes)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-release/tools/../lib/roi_data_layer/minibatch.py", line 27, in get_minibatch

assert(cfg.TRAIN.BATCH_SIZE % num_images == 0), \

ZeroDivisionError: integer division or modulo by zero

Command exited with non-zero status 1

14.62user 2.53system 0:17.01elapsed 100%CPU (0avgtext+0avgdata 2721756maxresident)k

0inputs+9504outputs (0major+1190329minor)pagefaults 0swaps

Solution

First check that train.txt and test.txt under ./data/VOCdevkit2007/VOC2007/ImageSets/Main are not empty.

Then delete the cache files under data/VOCdevkit/cache and data/cache/.

Reference: get zero division errors #160
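The first check can be automated. The following is a minimal sketch that reports split files that are missing or list no image IDs; `empty_split_files` is a hypothetical helper, and the path in the usage example is the one given above.

```python
import os
import tempfile


def empty_split_files(main_dir, splits=("train", "test")):
    """Return the split files under main_dir that are missing or list no image IDs."""
    bad = []
    for split in splits:
        path = os.path.join(main_dir, split + ".txt")
        if not os.path.isfile(path):
            bad.append(path)
            continue
        with open(path) as f:
            if not any(line.strip() for line in f):
                bad.append(path)
    return bad


if __name__ == "__main__":
    # Demonstration in a throwaway folder: train.txt has content, test.txt is empty.
    with tempfile.TemporaryDirectory() as demo_dir:
        with open(os.path.join(demo_dir, "train.txt"), "w") as f:
            f.write("000000\n000001\n")
        open(os.path.join(demo_dir, "test.txt"), "w").close()
        print(empty_split_files(demo_dir))
```

For the real dataset, call `empty_split_files("./data/VOCdevkit2007/VOC2007/ImageSets/Main")` from the project root; an empty list means both files contain IDs.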

- 10. AttributeError when training my own model.

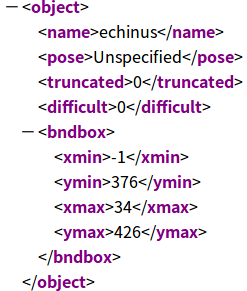

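This is usually caused by the files in /home/ouc/LiuHongzhi/tf-faster-rcnn-contest/data/VOCdevkit2007/VOC2007/Annotations/*.xml not conforming to the VOC2007 format; fix the XML files until they meet the standard. In the traceback below, an object has no difficult tag.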

Appending horizontally-flipped training examples...

Traceback (most recent call last):

File "./tools/trainval_net.py", line 105, in

imdb, roidb = combined_roidb(args.imdb_name)

File "./tools/trainval_net.py", line 76, in combined_roidb

roidbs = [get_roidb(s) for s in imdb_names.split('+')]

File "./tools/trainval_net.py", line 76, in

roidbs = [get_roidb(s) for s in imdb_names.split('+')]

File "./tools/trainval_net.py", line 73, in get_roidb

roidb = get_training_roidb(imdb)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/model/train_val.py", line 328, in get_training_roidb

imdb.append_flipped_images()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/imdb.py", line 113, in append_flipped_images

boxes = self.roidb[i]['boxes'].copy()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/imdb.py", line 74, in roidb

self._roidb = self.roidb_handler()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 111, in gt_roidb

for index in self.image_index]

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 111, in

for index in self.image_index]

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 148, in _load_pascal_annotation

obj for obj in objs if int(obj.find('difficult').text) == 0]

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 148, in

obj for obj in objs if int(obj.find('difficult').text) == 0]

AttributeError: 'NoneType' object has no attribute 'text'

Command exited with non-zero status 1

1.50user 0.14system 0:01.64elapsed 99%CPU (0avgtext+0avgdata 251932maxresident)k

0inputs+24outputs (0major+51834minor)pagefaults 0swaps

Fix: comment out the following code:

non_diff_objs = [

obj for obj in objs if int(obj.find('difficult').text) == 0]
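An alternative to disabling the filter is to tolerate annotations that lack the tag. The sketch below rests on an assumption that is not in the original: objects without a difficult tag are counted as not difficult. The helper `is_difficult` and the sample XML are made up for illustration.

```python
import xml.etree.ElementTree as ET


def is_difficult(obj):
    """Treat a missing or empty <difficult> tag as 0 (not difficult)."""
    node = obj.find('difficult')
    if node is None or node.text is None:
        return False
    return int(node.text) != 0


# Made-up annotation: the second object has no <difficult> tag.
SAMPLE_XML = """<annotation>
  <object><name>scallop</name><difficult>0</difficult></object>
  <object><name>starfish</name></object>
  <object><name>echinus</name><difficult>1</difficult></object>
</annotation>"""

objs = ET.fromstring(SAMPLE_XML).findall('object')
non_diff_objs = [obj for obj in objs if not is_difficult(obj)]
names = [obj.find('name').text for obj in non_diff_objs]
```

This keeps the difficult-object filtering for files that do carry the tag instead of switching it off for the whole dataset.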

- 11. KeyError when training my own model.

'USE_GPU_NMS': True}

Loaded dataset `voc_2007_trainval` for training

Set proposal method: gt

Appending horizontally-flipped training examples...

Traceback (most recent call last):

File "./tools/trainval_net.py", line 105, in

imdb, roidb = combined_roidb(args.imdb_name)

File "./tools/trainval_net.py", line 76, in combined_roidb

roidbs = [get_roidb(s) for s in imdb_names.split('+')]

File "./tools/trainval_net.py", line 76, in

roidbs = [get_roidb(s) for s in imdb_names.split('+')]

File "./tools/trainval_net.py", line 73, in get_roidb

roidb = get_training_roidb(imdb)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/model/train_val.py", line 328, in get_training_roidb

imdb.append_flipped_images()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/imdb.py", line 113, in append_flipped_images

boxes = self.roidb[i]['boxes'].copy()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/imdb.py", line 74, in roidb

self._roidb = self.roidb_handler()

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 111, in gt_roidb

for index in self.image_index]

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 111, in

for index in self.image_index]

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest (copy)/tools/../lib/datasets/pascal_voc.py", line 175, in _load_pascal_annotation

cls = self._class_to_ind[obj.find('name').text.lower().strip()]

KeyError: '"scallop"'

Command exited with non-zero status 1

1.54user 0.22system 0:01.81elapsed 97%CPU (0avgtext+0avgdata 251004maxresident)k

0inputs+0outputs (0major+51792minor)pagefaults 0swaps

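Delete the folder py-faster-rcnn/data/VOCdevkit2007/annotations_cache;

delete the folder py-faster-rcnn/data/cache.

The XML may contain a class, here scallop, that is missing from self._classes. Note that the key in the error message, '"scallop"', includes literal double quotes, so the name stored in the XML may itself be quoted.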
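If the label text in the XML really does carry quote characters, normalizing it before the lookup avoids the KeyError. The following is a minimal sketch; `normalize_class_name` is a hypothetical helper, and stripping quotes is an assumption about how the annotations were written, not something tf-faster-rcnn does.

```python
def normalize_class_name(raw):
    """Lower-case a class name and strip surrounding whitespace and quote characters."""
    return raw.strip().strip('"\'').strip().lower()


if __name__ == "__main__":
    print(normalize_class_name('"scallop"'))
```

Cleaning the XML files themselves is the more durable fix, because other tools that read the annotations will see the same quoted names.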

- 12. AttributeError when training my own model.

Attribute Error: 'NoneType' object has no attribute 'astype'

Check the demo script for misspelled test image names, especially the extension, for example .jepg instead of .jpeg.
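OpenCV's `cv2.imread` returns None when a file cannot be read, which is consistent with the error above. A quick way to find the offending names is to check that every listed file exists before running detection. The following is a minimal sketch; `missing_images` is a hypothetical helper and the file names are made up.

```python
import os
import tempfile


def missing_images(image_dir, names):
    """Return the entries of names that are not files inside image_dir."""
    return [n for n in names if not os.path.isfile(os.path.join(image_dir, n))]


if __name__ == "__main__":
    # Demonstration in a throwaway folder: one real file, one misspelled name.
    with tempfile.TemporaryDirectory() as demo_dir:
        open(os.path.join(demo_dir, "000456.jpeg"), "w").close()
        print(missing_images(demo_dir, ["000456.jpeg", "000457.jepg"]))
```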

- 13. TypeError when testing my own model.

Saving cached annotations to /home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/data/VOCdevkit2007/VOC2007/ImageSets/Main/test.txt_annots.pkl

Traceback (most recent call last):

File "./tools/test_net.py", line 120, in

test_net(sess, net, imdb, filename, max_per_image=args.max_per_image)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/model/test.py", line 196, in test_net

imdb.evaluate_detections(all_boxes, output_dir)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/pascal_voc.py", line 285, in evaluate_detections

self._do_python_eval(output_dir)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/pascal_voc.py", line 248, in _do_python_eval

use_07_metric=use_07_metric, use_diff=self.config['use_diff'])

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/voc_eval.py", line 122, in voc_eval

pickle.dump(recs, f)

TypeError: write() argument must be str, not bytes

Command exited with non-zero status 1

At first I tried changing, in /tf-faster-rcnn-contest -2018/tools/../lib/datasets/voc_eval.py,

with open(cachefile, 'w') as f:

to

with open(cachefile, 'wb') as f:

which produced a new error

Evaluating detections

Writing holothurian VOC results file

Writing echinus VOC results file

Writing scallop VOC results file

Writing starfish VOC results file

VOC07 metric? Yes

Traceback (most recent call last):

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/voc_eval.py", line 128, in voc_eval

recs = pickle.load(f)

EOFError: Ran out of input

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "./tools/test_net.py", line 120, in

test_net(sess, net, imdb, filename, max_per_image=args.max_per_image)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/model/test.py", line 196, in test_net

imdb.evaluate_detections(all_boxes, output_dir)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/pascal_voc.py", line 285, in evaluate_detections

self._do_python_eval(output_dir)

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/pascal_voc.py", line 248, in _do_python_eval

use_07_metric=use_07_metric, use_diff=self.config['use_diff'])

File "/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/tools/../lib/datasets/voc_eval.py", line 130, in voc_eval

recs = pickle.load(f, encoding='bytes')

EOFError: Ran out of input

Command exited with non-zero status 1

Following the issue "EOFError: Ran out of input #171", locate this code in tf-faster-rcnn-contest -2018/tools/../lib/datasets/voc_eval.py:

cachefile = os.path.join(cachedir, '%s_annots.pkl' % imagesetfile)
print('Saving cached annotations to {:s}'.format(cachefile))
with open(cachefile, 'w') as f:
    pickle.dump(recs, f)

and change it to:

cachefile = os.path.join(cachedir, ('%s_annots.pkl' % 'imagesetfile'))
# cachefile = os.path.join(cachedir, '%s_annots.pkl' % imagesetfile.split("/")[-1].split(".")[0])
with open(cachefile, 'wb') as f:
    pickle.dump(recs, f)
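The two errors above can be reproduced in isolation. The sketch below assumes Python 3 and works in a temporary directory; `save_cache`, `load_cache`, and `text_mode_fails` are illustrative names and are not part of voc_eval.py. It shows that pickle needs a file opened in binary mode, and that an empty cache file left behind by a failed text-mode write raises EOFError on the next load, which is one plausible explanation for the second traceback.

```python
import os
import pickle
import tempfile


def save_cache(cache_path, recs):
    """Write the cache; pickle produces bytes, so the file is opened in binary mode."""
    with open(cache_path, 'wb') as f:
        pickle.dump(recs, f)


def load_cache(cache_path):
    """Read the cache back; return None if the file is empty or truncated."""
    try:
        with open(cache_path, 'rb') as f:
            return pickle.load(f)
    except EOFError:
        return None


def text_mode_fails(cache_path, recs):
    """Return True if dumping in text mode raises TypeError, as in the first traceback."""
    try:
        with open(cache_path, 'w') as f:
            pickle.dump(recs, f)
    except TypeError:
        return True
    return False


tmp_dir = tempfile.mkdtemp()
cache_path = os.path.join(tmp_dir, 'imagesetfile_annots.pkl')
recs = {'000001': [{'name': 'scallop', 'bbox': [10, 20, 30, 40]}]}  # made-up record

failed = text_mode_fails(cache_path, recs)  # the failed write leaves an empty file
stale = load_cache(cache_path)              # empty file: EOFError is caught, None returned
save_cache(cache_path, recs)
restored = load_cache(cache_path)
```

If this reading is right, deleting the stale cache file would be an alternative to renaming it.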

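- 14. Testing the dataset: the demo.py below runs detection on the images in demo according to the input test_list and writes the results to a txt file in the format the competition requires.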

#!/usr/bin/env python

# --------------------------------------------------------

# Tensorflow Faster R-CNN

# Licensed under The MIT License [see LICENSE for details]

# Written by Xinlei Chen, based on code from Ross Girshick

# --------------------------------------------------------

"""

Demo script showing detections in sample images.

See README.md for installation instructions before running.

"""

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import _init_paths

from model.config import cfg

from model.test import im_detect

from model.nms_wrapper import nms

from utils.timer import Timer

import tensorflow as tf

import matplotlib.pyplot as plt

import numpy as np

import os, cv2

import os.path

import argparse

from nets.vgg16 import vgg16

from nets.resnet_v1 import resnetv1

import scipy.io as sio

import sys

import numpy

from PIL import Image  # import the Image module

from pylab import *  # import savetxt (pulled in by the wildcard import)

CLASSES = ('__background__',

'holothurian', 'echinus', 'scallop', 'starfish')

NETS = {'vgg16': ('vgg16_faster_rcnn_iter_70000.ckpt',),'res101': ('res101_faster_rcnn_iter_110000.ckpt',)}

DATASETS= {'pascal_voc': ('voc_2007_trainval',),'pascal_voc_0712': ('voc_2007_trainval+voc_2012_trainval',)}

def vis_detections(im, class_name, dets, thresh=0.5):
    """Draw detected bounding boxes."""
    inds = np.where(dets[:, -1] >= thresh)[0]
    if len(inds) == 0:
        return
    #im = im[:, :, (2, 1, 0)]
    #fig, ax = plt.subplots(figsize=(12, 12))
    #ax.imshow(im, aspect='equal')

# !/usr/bin/env python

# -*- coding: UTF-8 -*-

# --------------------------------------------------------

# Faster R-CNN

# Copyright (c) 2015 Microsoft

# Licensed under The MIT License [see LICENSE for details]

# Written by Ross Girshick

# --------------------------------------------------------

    for i in inds:
        bbox = dets[i, :4]
        score = dets[i, -1]
        if class_name not in CLASSES:
            continue
        # Background keeps its name; every other class is written as its index in CLASSES (1 to 4).
        label = class_name if class_name == '__background__' else str(CLASSES.index(class_name))
        coords = ' '.join(str(int(v)) for v in bbox)
        # The final txt is saved at this path; change it here if needed.
        # im_name is the module-level list set by the loop under __main__.
        with open('result.txt', 'a') as fw:
            fw.write(str(im_name[1]) + ' ' + label + ' ' + str(score) + ' ' + coords + '\n')

def demo(sess, net, image_name):
    """Detect object classes in an image using pre-computed object proposals."""

    # Load the demo image
    all_name = image_name + '.jpg'
    im_file = os.path.join(cfg.DATA_DIR, 'demo', all_name)
    im = cv2.imread(im_file)

    # Detect all object classes and regress object bounds
    timer = Timer()
    timer.tic()
    scores, boxes = im_detect(sess, net, im)
    timer.toc()
    print('Detection took {:.3f}s for {:d} object proposals'.format(timer.total_time, boxes.shape[0]))
    #save_jpg = os.path.join('/data/test',im_name)

    # Visualize detections for each class
    CONF_THRESH = 0.8
    NMS_THRESH = 0.3
    #im = im[:, :, (2, 1, 0)]
    #fig,ax = plt.subplots(figsize=(12, 12))
    #ax.imshow(im, aspect='equal')
    for cls_ind, cls in enumerate(CLASSES[1:]):
        cls_ind += 1  # because we skipped background
        cls_boxes = boxes[:, 4*cls_ind:4*(cls_ind + 1)]
        cls_scores = scores[:, cls_ind]
        dets = np.hstack((cls_boxes,
                          cls_scores[:, np.newaxis])).astype(np.float32)
        keep = nms(dets, NMS_THRESH)
        dets = dets[keep, :]
        vis_detections(im, cls, dets, thresh=CONF_THRESH)

def parse_args():
    """Parse input arguments."""
    parser = argparse.ArgumentParser(description='Tensorflow Faster R-CNN demo')
    #parser.add_argument('--net', dest='demo_net', help='Network to use [vgg16 res101]',
    #                    choices=NETS.keys(), default='res101') #default
    parser.add_argument('--net', dest='demo_net', help='Network to use [vgg16 res101]',
                        choices=NETS.keys(), default='vgg16')
    parser.add_argument('--dataset', dest='dataset', help='Trained dataset [pascal_voc pascal_voc_0712]',
                        choices=DATASETS.keys(), default='pascal_voc_0712')
    args = parser.parse_args()
    return args

if __name__ == '__main__':
    cfg.TEST.HAS_RPN = True  # Use RPN for proposals
    args = parse_args()
    cfg.USE_GPU_NMS = False

    # model path
    demonet = args.demo_net
    dataset = args.dataset
    tfmodel = os.path.join('output', demonet, DATASETS[dataset][0], 'default',
                           NETS[demonet][0])

    if not os.path.isfile(tfmodel + '.meta'):
        raise IOError(('{:s} not found.\nDid you download the proper networks from '
                       'our server and place them properly?').format(tfmodel + '.meta'))

    # set config
    tfconfig = tf.ConfigProto(allow_soft_placement=True)
    tfconfig.gpu_options.allow_growth = True

    # init session
    sess = tf.Session(config=tfconfig)

    # load network
    if demonet == 'vgg16':
        net = vgg16()
    elif demonet == 'res101':
        net = resnetv1(num_layers=101)
    else:
        raise NotImplementedError
    net.create_architecture("TEST", 5,
                            tag='default', anchor_scales=[8, 16, 32])
    saver = tf.train.Saver()
    saver.restore(sess, tfmodel)

    print('Loaded network {:s}'.format(tfmodel))

    #im_names = ['000456.jpg', '000542.jpg', '001150.jpg',
    #            '001763.jpg', '004545.jpg'] #default
    #im_names = ['000456.jpg', '000542.jpg', '001150.jpg',
    #            '001763.jpg', '004545.jpg']

    # Run two forward passes on a dummy grey image before the real images
    im = 128 * np.ones((300, 500, 3), dtype=np.uint8)
    for i in range(2):
        _, _ = im_detect(sess, net, im)

    #im_names = get_imlist(r"/home/henry/Files/tf-faster-rcnn-contest/data/demo")
    fr = open('/home/ouc/LiuHongzhi/tf-faster-rcnn-contest -2018/data/VOCdevkit2007/test_list.txt', 'r')
    for im_name in fr:
        #path = "/home/henry/Files/URPC2018/VOC/VOC2007/JPEGImages/G0024172/*.jpg"
        #filelist = os.listdir(path)
        #for im_name in path:
        im_name = im_name.strip()
        im_name = im_name.split(' ')
        print('~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~')
        print('mainDemo for data/demo/{}{}'.format(im_name[0], '.jpg'))
        print('mainDemo for data/demo/{}{}'.format(im_name[1], '.jpg'))
        demo(sess, net, im_name[0])
        #plt.show()
    fr.close()
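To make the input and output of the script concrete, here is a minimal sketch of the two text formats it handles. It assumes, as the code above implies, that each test_list line holds an image file stem and an image id separated by one space, and that each result line is "id class-index score xmin ymin xmax ymax". The helper names and the sample values are hypothetical.

```python
CLASSES = ('__background__', 'holothurian', 'echinus', 'scallop', 'starfish')


def parse_list_line(line):
    """Split one test_list line into (image file stem, image id)."""
    fields = line.strip().split(' ')
    return fields[0], fields[1]


def format_result_line(image_id, class_name, score, bbox):
    """Build one result line: image id, class index, score, then integer box corners."""
    class_index = CLASSES.index(class_name)
    corners = ' '.join(str(int(v)) for v in bbox)
    return '{} {} {} {}\n'.format(image_id, class_index, score, corners)


# Hypothetical list entry and detection, for illustration only.
stem, image_id = parse_list_line('000123 7\n')
line = format_result_line(image_id, 'echinus', 0.93, [12.7, 40.2, 88.9, 130.0])
```

Note that `int()` truncates the box corners toward zero, as the script does.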

- 15. After building a mirrored VOC training set and training the model, a RuntimeWarning is raised.

/home/ouc/LiuHongzhi/tf-faster-rcnn-contest-2018/tools/../lib/model/bbox_transform.py:27: RuntimeWarning: invalid value encountered in log

targets_dw = np.log(gt_widths / ex_widths)

iter: 100 / 70000, total loss: nan

>>> rpn_loss_cls: 0.668627

>>> rpn_loss_box: nan

>>> loss_cls: 0.009253

>>> loss_box: 0.000000

>>> lr: 0.001000

speed: 0.342s / iter

iter: 120 / 70000, total loss: nan

>>> rpn_loss_cls: 0.657523

>>> rpn_loss_box: nan

>>> loss_cls: 0.001831

>>> loss_box: 0.000000

>>> lr: 0.001000

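Cause: the bounding-box coordinates in the XML files under Annotations fall outside the image, as shown in the figure below:

After correcting xmin, training runs normally.

Related reference: "Fixing loss=nan during Faster R-CNN training".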
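Rather than finding such boxes by hand, the annotations can be scanned before training. The sketch below is one way to do it, assuming VOC-style XML with 1-based pixel coordinates and a `size` element; `find_bad_boxes` and the sample annotation are illustrative and not part of the repository.

```python
import xml.etree.ElementTree as ET


def find_bad_boxes(xml_text):
    """Return (name, xmin, ymin, xmax, ymax) for boxes outside the image or with no area."""
    root = ET.fromstring(xml_text.strip())
    size = root.find('size')
    width = int(size.find('width').text)
    height = int(size.find('height').text)
    bad = []
    for obj in root.findall('object'):
        box = obj.find('bndbox')
        xmin, ymin, xmax, ymax = (int(float(box.find(tag).text))
                                  for tag in ('xmin', 'ymin', 'xmax', 'ymax'))
        # A valid 1-based box satisfies 1 <= min < max <= image extent on both axes.
        inside = 1 <= xmin < xmax <= width and 1 <= ymin < ymax <= height
        if not inside:
            bad.append((obj.find('name').text, xmin, ymin, xmax, ymax))
    return bad


# Made-up annotation: the second box has xmin = 0, which is out of range.
SAMPLE = """
<annotation>
  <size><width>720</width><height>405</height><depth>3</depth></size>
  <object><name>echinus</name>
    <bndbox><xmin>164</xmin><ymin>103</ymin><xmax>280</xmax><ymax>237</ymax></bndbox>
  </object>
  <object><name>scallop</name>
    <bndbox><xmin>0</xmin><ymin>232</ymin><xmax>687</xmax><ymax>385</ymax></bndbox>
  </object>
</annotation>
"""
bad_boxes = find_bad_boxes(SAMPLE)
```

To check a whole dataset, read each file under Annotations and pass its text to the function.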

- 16. A KeyError is raised at test time after training finishes

File "/home/hyzhan/py-faster-rcnn/tools/../lib/datasets/voc_eval.py", line 126, in voc_eval

R = [obj for obj in recs[imagename] if obj['name'] == classname]

KeyError: '000002'

Fix: delete the annotations_cache folder under data/VOCdevkit2007.

Reference link: "Training your own dataset with Faster R-CNN".
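The cache can also be cleared from Python before each evaluation run, so that annotations cached for an older image list are never reused. This is a small sketch; `clear_annotation_cache` is a hypothetical helper, and a temporary directory stands in for data/VOCdevkit2007.

```python
import os
import shutil
import tempfile


def clear_annotation_cache(devkit_dir):
    """Delete <devkit_dir>/annotations_cache if present; return True when it was removed."""
    cache_dir = os.path.join(devkit_dir, 'annotations_cache')
    if os.path.isdir(cache_dir):
        shutil.rmtree(cache_dir)
        return True
    return False


# Demonstration on a throwaway directory standing in for data/VOCdevkit2007.
devkit_dir = tempfile.mkdtemp()
os.makedirs(os.path.join(devkit_dir, 'annotations_cache'))
first = clear_annotation_cache(devkit_dir)   # cache existed and was removed
second = clear_annotation_cache(devkit_dir)  # nothing left to remove
```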

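- 17. At run time, duplicate boxes were not being removed correctly; the problem should be traced to NMS. Choose the compute capability (Architecture) that matches the GPU model in setup.py, then recompile in tf-faster-rcnn/lib after the change.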
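If the compiled NMS is suspected, its output can be compared against a slow reference on a few detections. The sketch below is a plain-Python greedy NMS written for that purpose. It is not the repository's implementation, and it assumes boxes given as inclusive pixel corners (hence the +1 in widths and heights); `iou` and `nms_reference` are illustrative names.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2), inclusive corners."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    iw, ih = max(0.0, ix2 - ix1 + 1), max(0.0, iy2 - iy1 + 1)
    inter = iw * ih
    area_a = (a[2] - a[0] + 1) * (a[3] - a[1] + 1)
    area_b = (b[2] - b[0] + 1) * (b[3] - b[1] + 1)
    return inter / (area_a + area_b - inter)


def nms_reference(dets, thresh):
    """Greedy NMS over rows of (x1, y1, x2, y2, score); returns kept indices, best score first."""
    order = sorted(range(len(dets)), key=lambda i: dets[i][4], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the kept box by more than thresh.
        order = [i for i in order if iou(dets[best][:4], dets[i][:4]) <= thresh]
    return keep


dets = [
    (10, 10, 110, 110, 0.90),
    (12, 12, 112, 112, 0.80),    # near-duplicate of the first box
    (200, 200, 260, 260, 0.70),  # separate object
]
kept = nms_reference(dets, 0.3)
```

A compiled NMS that returns a different keep list for the same input and threshold is a sign that the build does not match the GPU.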

- 18. When minibatch_db is empty

the following error is raised:

assert(cfg.TRAIN.BATCH_SIZE % num_images ==0)

ZeroDivisionError: integer division or modulo by zero

minibatch_db: [{'boxes': array([[164, 103, 280, 237],

[524, 232, 687, 385]], dtype=uint16),

'gt_classes': array([2, 2], dtype=int32),

'gt_overlaps': <2x5 sparse matrix of type ''with 2 stored elements in Compressed Sparse Row format>,

'flipped': False, 'seg_areas': array([15795., 25256.], dtype=float32),

'image': '/home/henry/Files/URPC2019/faster-rcnn-contest/data/VOCdevkit2007/VOC2007/JPEGImages/YN030001_3285.jpg',

'width': 720,

'height': 405,

'max_classes': array([2, 2]),

'max_overlaps': array([1., 1.], dtype=float32)}]

Fix: in /lib/roi_data_layer/layer.py, add the lines marked "# add" below:

def _get_next_minibatch_inds(self):
    """Return the roidb indices for the next minibatch."""
    if self._cur + cfg.TRAIN.IMS_PER_BATCH >= len(self._roidb):
        self._shuffle_roidb_inds()
    db_inds = self._perm[self._cur:self._cur + cfg.TRAIN.IMS_PER_BATCH]
    self._cur += cfg.TRAIN.IMS_PER_BATCH
    if self._cur == self._perm.size:  # add
        self._cur = 0  # add
    #print("\n db_inds: ", db_inds)  # db_inds: [5138]
    #print("\n self._perm: ", self._perm.shape)  # self._perm: (7388,)
    #print("\n self._cur: ", self._cur)  # self._cur: 27
    return db_inds
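The index bookkeeping can be exercised without TensorFlow. The class below is a stand-alone imitation of the patched logic, not the real RoIDataLayer: `ToyIndexSampler` and its arguments are hypothetical, and a Python list replaces the NumPy permutation. It does not reproduce the original failure; it only checks that, with the added guard, no call returns an empty batch.

```python
import random


class ToyIndexSampler:
    """Stand-alone imitation of the minibatch index bookkeeping."""

    def __init__(self, num_images, ims_per_batch=1, seed=0):
        self._num_images = num_images
        self._ims_per_batch = ims_per_batch
        self._rng = random.Random(seed)
        self._shuffle_inds()

    def _shuffle_inds(self):
        """Draw a fresh permutation and restart from its beginning."""
        self._perm = list(range(self._num_images))
        self._rng.shuffle(self._perm)
        self._cur = 0

    def next_inds(self):
        """Return the indices for the next minibatch, wrapping around as in the patch."""
        if self._cur + self._ims_per_batch >= self._num_images:
            self._shuffle_inds()
        inds = self._perm[self._cur:self._cur + self._ims_per_batch]
        self._cur += self._ims_per_batch
        if self._cur == len(self._perm):  # the added guard
            self._cur = 0
        return inds


sampler = ToyIndexSampler(num_images=5)
batches = [sampler.next_inds() for _ in range(50)]
```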