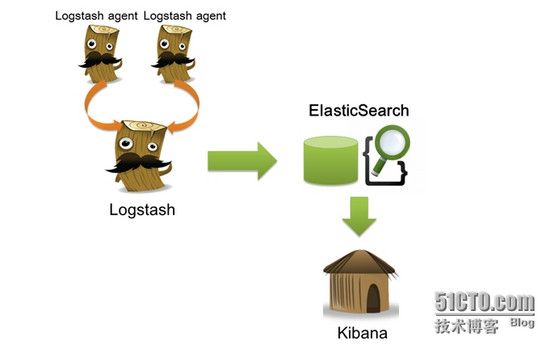

ELK, an open-source real-time log analysis platform, addresses the problems described above. It consists of three open-source tools: Elasticsearch, Logstash, and Kibana. Official site: https://www.elastic.co/products

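- Elasticsearch is an open-source distributed search engine. Its features include distributed operation, zero configuration, automatic discovery, automatic index sharding, index replicas, a RESTful interface, multiple data sources, and automatic search load balancing.

- Logstash is a fully open-source tool that collects and parses your logs and stores them for later use (for example, for searching).

- Kibana is also a free, open-source tool. It provides a friendly web interface for analyzing the logs held by Logstash and Elasticsearch, and helps you aggregate, analyze, and search important log data.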

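The figure below shows how the components work together:

Deployment steps for the ELK open-source real-time log analysis platform:

(1) Install the JDK, which Logstash depends on

Logstash runs on the Java runtime. Logstash 1.5 and later require Java 7 or newer, and the latest Java release is recommended. Because we only run Java programs here and do not develop them, the JRE is sufficient. First, download a recent JRE from the Oracle site: http://www.oracle.com/technetwork/java/javase/downloads/jre8-downloads-2133155.html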

# wget http://download.oracle.com/otn-pub/java/jdk/8u45-b14/jdk-8u45-linux-x64.tar.gz

# mkdir /usr/local/java

# tar -zxf jdk-8u45-linux-x64.tar.gz -C /usr/local/java/

# tail -3 ~/.bash_profile

export JAVA_HOME=/usr/local/java/jdk1.8.0_45

export PATH=$PATH:$JAVA_HOME/bin

export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar:$CLASSPATH

# java -version

java version "1.8.0_45"

Java(TM) SE Runtime Environment (build 1.8.0_45-b14)

Java HotSpot(TM) 64-Bit Server VM (build 25.45-b02, mixed mode)
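The Java 7 minimum mentioned above can also be checked programmatically. The following is a minimal Python sketch and not part of the original setup; the helper name `java_major_version` is hypothetical, and it assumes the first line of the `java -version` output has the form shown above.

```python
import re


def java_major_version(version_output: str) -> int:
    """Extract the major Java version from `java -version` output.

    Handles the legacy "1.x" scheme (1.8.0_45 -> 8) and the newer
    scheme (11.0.2 -> 11).
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        raise ValueError("no version string found")
    major = int(match.group(1))
    minor = int(match.group(2) or 0)
    # Legacy releases report themselves as 1.<major>, e.g. 1.8 means Java 8.
    return minor if major == 1 else major


sample = 'java version "1.8.0_45"'
print(java_major_version(sample) >= 7)  # True: meets the Java 7 minimum
```

Note that `java -version` writes to standard error, so a script that captures the real output needs to read stderr rather than stdout.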

(2) Install Logstash

Download and install Logstash. Installation only requires extracting the archive into the target directory, for example /usr/local:

# wget https://download.elastic.co/logstash/logstash/logstash-1.5.2.tar.gz

# tar zxf logstash-1.5.2.tar.gz -C /usr/local/

# /usr/local/logstash-1.5.2/bin/logstash -e 'input { stdin { } } output { stdout {} }'

Logstash startup completed

Hello World!

2015-07-15T03:28:56.938Z noc.vfast.com Hello World!

(3) Install Elasticsearch

After downloading Elasticsearch, extract it into the target directory to complete the installation.

# tar -zxf elasticsearch-1.6.0.tar.gz -C /usr/local/

Start Elasticsearch:

# /usr/local/elasticsearch-1.6.0/bin/elasticsearch

If you are connected to the Linux host remotely and want Elasticsearch to run in the background, run the following command instead:

# nohup /usr/local/elasticsearch-1.6.0/bin/elasticsearch >nohup &

Confirm that Elasticsearch is listening on port 9200, which indicates that it started successfully:

# netstat -anp |grep :9200

tcp 0 0 :::9200 :::* LISTEN 3362/java
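The same port check can be scripted, which is handy when waiting for Elasticsearch to finish starting. This is a minimal Python sketch under the assumption that a successful TCP connection is a good enough signal; the helper name `is_port_open` is hypothetical.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host unreachable.
        return False


if __name__ == "__main__":
    # 9200 is the Elasticsearch HTTP port used in this walkthrough.
    print("elasticsearch listening:", is_port_open("localhost", 9200))
```

A successful connection only shows that something is listening on the port; it does not confirm that the listener is Elasticsearch.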

# cat logstash-es-simple.conf

input { stdin { } }

output {

elasticsearch { host => "localhost" }

stdout { codec => rubydebug }

}

Run the following command:

# /usr/local/logstash-1.5.2/bin/logstash agent -f logstash-es-simple.conf

… …

Logstash startup completed

hello logstash

{ "message" => "hello logstash", "@version" => "1", "@timestamp" => "2015-07-15T18:12:00.450Z", "host" => "noc.vfast.com"}
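The event printed by the rubydebug codec is a flat map with four fields. As a rough illustration of that structure, the Python sketch below assembles an equivalent dictionary from one input line. The helper `make_event` is hypothetical and does not reproduce how Logstash builds events internally; it only mirrors the field names and timestamp format visible in the output above.

```python
import socket
from datetime import datetime, timezone
from typing import Optional


def make_event(message: str,
               host: Optional[str] = None,
               now: Optional[datetime] = None) -> dict:
    """Build a dict shaped like the event shown by the rubydebug codec."""
    ts = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    # ISO 8601 in UTC with millisecond precision, e.g. 2015-07-15T18:12:00.450Z
    stamp = ts.strftime("%Y-%m-%dT%H:%M:%S.") + f"{ts.microsecond // 1000:03d}Z"
    return {
        "message": message,
        "@version": "1",
        "@timestamp": stamp,
        "host": host or socket.gethostname(),
    }


fixed = datetime(2015, 7, 15, 18, 12, 0, 450000, tzinfo=timezone.utc)
print(make_event("hello logstash", host="noc.vfast.com", now=fixed))
```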

# curl 'http://localhost:9200/_search?pretty'

Response:

{ "took": 58, "timed_out" : false, "_shards" : { "total" : 5, "successful" : 5, "failed" : 0

}, "hits": { "total" : 1, "max_score" : 1.0, "hits" : [ { "_index" : "logstash-2015.07.15", "_type" : "logs", "_id" : "AU6TWiixxDXYhySMyTkP", "_score" : 1.0, "_source":{"message":"hellologstash","@version":"1","@timestamp":"2015-07-15T20:13:55.199Z","host":"noc.vfast.com"}

} ]

}

}
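To pull the stored events out of a search response like the one above, the JSON can be parsed and the `hits.hits` array walked. The following Python sketch uses a copy of that response as its input; the helper name `summarize_hits` is hypothetical, and the sketch assumes the Elasticsearch 1.x response layout shown here.

```python
import json

# Copy of the response above, reformatted for readability.
response_text = """
{
  "took": 58,
  "timed_out": false,
  "_shards": {"total": 5, "successful": 5, "failed": 0},
  "hits": {
    "total": 1,
    "max_score": 1.0,
    "hits": [
      {
        "_index": "logstash-2015.07.15",
        "_type": "logs",
        "_id": "AU6TWiixxDXYhySMyTkP",
        "_score": 1.0,
        "_source": {
          "message": "hellologstash",
          "@version": "1",
          "@timestamp": "2015-07-15T20:13:55.199Z",
          "host": "noc.vfast.com"
        }
      }
    ]
  }
}
"""


def summarize_hits(text):
    """Return (index, timestamp, message) for every hit in a search response."""
    body = json.loads(text)
    return [
        (hit["_index"], hit["_source"]["@timestamp"], hit["_source"]["message"])
        for hit in body["hits"]["hits"]
    ]


for index, timestamp, message in summarize_hits(response_text):
    print(index, timestamp, message)
```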

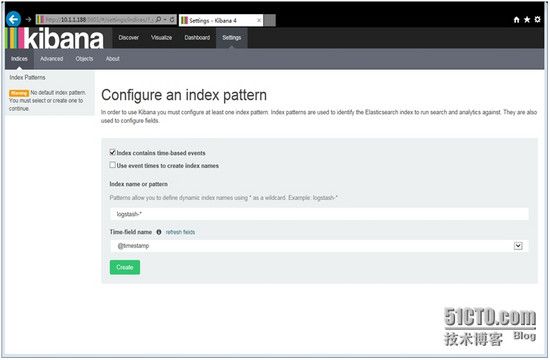

(5) Install Kibana

After downloading Kibana, extract it into the target directory to complete the installation.

# tar -zxf kibana-4.1.1-linux-x64.tar.gz -C /usr/local/

Start Kibana:

# /usr/local/kibana-4.1.1-linux-x64/bin/kibana

Open http://kibanaServerIP:5601 to access Kibana. After logging in, first configure an index pattern. By default, Kibana points at Elasticsearch and uses the time-based index name logstash-*; click "Create" to accept it.
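The default pattern logstash-* is a wildcard over index names, so it covers every daily index that Logstash creates. The Python sketch below approximates that matching with shell-style globbing; this is an assumption made for illustration, not Kibana's actual implementation. The index list is illustrative, and `matching_indices` is a hypothetical helper.

```python
from fnmatch import fnmatchcase


def matching_indices(pattern, index_names):
    """Return the index names that match a wildcard pattern."""
    return [name for name in index_names if fnmatchcase(name, pattern)]


# Illustrative index names: two daily Logstash indices plus the index
# Kibana 4 uses for its own settings.
indices = [
    "logstash-2015.07.15",
    "logstash-nginx-access-2015.07.15",
    ".kibana",
]

print(matching_indices("logstash-*", indices))
# ['logstash-2015.07.15', 'logstash-nginx-access-2015.07.15']
```

Because the pattern is a prefix match, an index named with a longer prefix such as logstash-nginx-access-* is also picked up by logstash-*.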

At this point, the ELK environment is deployed.

The following configuration analyzes nginx logs.

Define the nginx log format:

[root@vm10-100-0-5 logstash-1.5.2]# cat /etc/nginx/nginx.conf

user nginx;

worker_processes 1;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format logstashlog '$http_host ' '$remote_addr - $remote_user [$time_local] ' '"$request" $status $body_bytes_sent "$request_body" ' '"$http_referer" "$http_user_agent" "$http_x_forwarded_for" ' '$request_time ';

access_log /var/log/nginx/access.log logstashlog;

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include conf.d/*.conf;

}

[root@vm10-100-0-5 logstash-1.5.2]# cat logstash-nginx_log.conf

input {

file {

path => [ "/var/log/nginx/access.log" ]

start_position => "beginning"

}

}

filter {

grok {

patterns_dir => ['/opt/logstash/patterns/']

match => { "message" => "%{NGINXACCESS}" }

}

geoip {

source => "http_x_forwarded_for"

target => "geoip"

database => "/etc/logstash/GeoLiteCity.dat"

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

}

mutate {

convert => [ "[geoip][coordinates]", "float" ]

# Field names must match those captured by the NGINXACCESS pattern

convert => [ "status","integer" ]

convert => [ "body_bytes_sent","integer" ]

replace => { "type" => "nginx_access" }

remove_field => "message"

}

date {

# time_local is the field name captured by the NGINXACCESS pattern

match => [ "time_local","dd/MMM/yyyy:HH:mm:ss Z"]

}

mutate {

remove_field => "time_local"

}

}

output {

elasticsearch {

host => "localhost"

index => "logstash-nginx-access-%{+YYYY.MM.dd}"

}

stdout {codec => rubydebug}

}
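Two details of this configuration are easy to misread: the date filter's format string and the date suffix in the index name. The Python sketch below mirrors both, assuming that the `dd/MMM/yyyy:HH:mm:ss Z` format corresponds to the strptime format `%d/%b/%Y:%H:%M:%S %z` and that the `%{+YYYY.MM.dd}` suffix is rendered from the event timestamp in UTC. The helper names and the sample time are hypothetical.

```python
from datetime import datetime, timezone


def parse_nginx_time(value):
    """Parse an nginx $time_local value such as 15/Jul/2015:23:30:00 -0800."""
    return datetime.strptime(value, "%d/%b/%Y:%H:%M:%S %z")


def index_for(ts):
    """Name of the daily index an event with this timestamp would land in."""
    # The date suffix is computed in UTC, not in the server's local zone.
    return ts.astimezone(timezone.utc).strftime("logstash-nginx-access-%Y.%m.%d")


ts = parse_nginx_time("15/Jul/2015:23:30:00 -0800")
print(index_for(ts))  # logstash-nginx-access-2015.07.16
```

The example shows why a request logged late in the local day can appear in the next day's index: 23:30 at UTC-8 is already 07:30 on the following day in UTC.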

[root@vm10-100-0-5 logstash-1.5.2]# cat /opt/logstash/patterns/nginx

URIPARAM1 \?[A-Za-z0-9$.+!*'|(){},~@#%&/=:;_?\-\[\]<>]*

URIPARAM (?:%{URIPARAM1})?

NGINXACCESS %{IPORHOST:http_host} %{IPORHOST:remote_addr} - %{USERNAME:remote_user} \[%{HTTPDATE:time_local}\] "%{WORD:method} %{URIPATH:request}%{URIPARAM:requestparam} HTTP/%{NUMBER:http_version}" %{INT:status} %{INT:body_bytes_sent} %{QS:request_body} %{QS:http_referer} %{QS:http_user_agent} %{QS:http_x_forwarded_for} %{NUMBER:request_time:float}
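To see what the NGINXACCESS pattern extracts, it helps to test a comparable expression against one log line outside Logstash. The Python sketch below is a simplified regular expression that approximates the pattern; it is not a translation of grok's built-in definitions. The sample line is made up to follow the logstashlog format, and `parse_line` is a hypothetical helper. One deliberate difference is that grok's QS captures keep the surrounding double quotes, while this sketch strips them.

```python
import re

# Simplified stand-in for the NGINXACCESS grok pattern.
NGINX_ACCESS = re.compile(
    r'(?P<http_host>\S+) (?P<remote_addr>\S+) - (?P<remote_user>\S+) '
    r'\[(?P<time_local>[^\]]+)\] '
    r'"(?P<method>\w+) (?P<request>[^?\s"]+)(?P<requestparam>\?[^\s"]*)? '
    r'HTTP/(?P<http_version>[\d.]+)" '
    r'(?P<status>\d+) (?P<body_bytes_sent>\d+) '
    r'"(?P<request_body>[^"]*)" "(?P<http_referer>[^"]*)" '
    r'"(?P<http_user_agent>[^"]*)" "(?P<http_x_forwarded_for>[^"]*)" '
    r'(?P<request_time>[\d.]+)'
)


def parse_line(line):
    """Parse one access-log line into a dict, or return None if it does not match."""
    match = NGINX_ACCESS.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # Numeric conversions comparable to the mutate/convert step.
    fields["status"] = int(fields["status"])
    fields["body_bytes_sent"] = int(fields["body_bytes_sent"])
    fields["request_time"] = float(fields["request_time"])
    return fields


# Made-up sample line in the logstashlog format.
sample = ('example.com 10.0.0.1 - - [15/Jul/2015:18:12:00 +0800] '
          '"GET /index.html?id=1 HTTP/1.1" 200 612 "-" "-" '
          '"curl/7.29.0" "-" 0.005 ')

parsed = parse_line(sample)
print(parsed["method"], parsed["request"], parsed["status"], parsed["request_time"])
```

Lines that do not match return None, which is comparable to grok tagging an event with `_grokparsefailure`.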

# bin/logstash -f logstash-nginx_log.conf

# bin/kibana

The result is shown in the figure: