1.选一个自己感兴趣的主题。

网址: http://news.gzcc.cn/html/xiaoyuanxinwen/

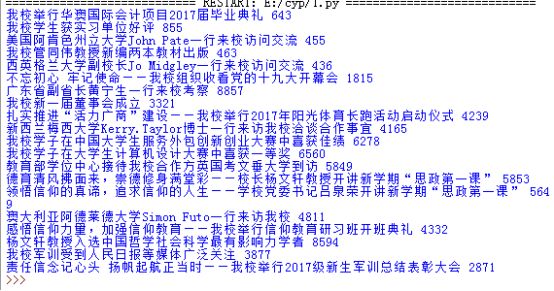

2.网络上爬取相关的数据

import requests

import re

from bs4 import BeautifulSoup

url='http://news.gzcc.cn/html/xiaoyuanxinwen/'

res=requests.get(url)

res.encoding='utf-8'

soup=BeautifulSoup(res.text,'html.parser')

def getclick(newurl):

id=re.search('_(.*).html',newurl).group(1).split('/')[1]

clickurl='http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(id)

click=int(requests.get(clickurl).text.split(".")[-1].lstrip("html('").rstrip("');"))

return click

def getonpages(listurl):

res=requests.get(listurl)

res.encoding='utf-8'

soup=BeautifulSoup(res.text,'html.parser')

for news in soup.select('li'):

if len(news.select('.news-list-title'))>0:

title=news.select('.news-list-title')[0].text

time=news.select('.news-list-info')[0].contents[0].text

url1=news.select('a')[0]['href']

bumen=news.select('.news-list-info')[0].contents[1].text

description=news.select('.news-list-description')[0].text

resd=requests.get(url1)

resd.encoding='utf-8'

soupd=BeautifulSoup(resd.text,'html.parser')

detail=soupd.select('.show-content')[0].text

click=getclick(url1)

print(title,click)

count=int(soup.select('.a1')[0].text.rstrip("条"))

pages=count//10+1

for i in range(2,4):

pagesurl="http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html".format(i)

getonpages(pagesurl)

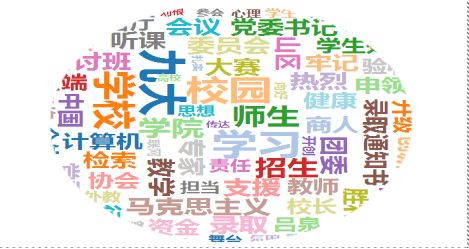

3.进行文本分析,生成词云

import jieba

fr=open("58.txt",'r',encoding='utf-8')

s=list(jieba.cut(fr.read()))

exp={'pve','\n','.','_','”','“',':','http',' ','?','(',')','*',':','4917',';','ershouche','/','com'}

key=set(s)-exp

dic={}for i in key:

dic[i]=s.count(i)

wc=list(dic.items())

wc.sort(key=lambda x:x[1],reverse=True)for i in range(20):

print(wc[i])

fr.close()

import jiebafrom wordcloud import WordCloudimport matplotlib.pyplot as pltfrom wordcloud import WordCloud,STOPWORDS,ImageColorGenerator

text =open("58.txt",'r',encoding='utf-8').read()print(text)

wordlist = jieba.cut(text,cut_all=True)

wl_split = "/".join(wordlist)

backgroud_Image = plt.imread('heart.jpg')

mywc = WordCloud(

background_color = 'white',

mask = backgroud_Image,

max_words = 2000,

stopwords = STOPWORDS,

font_path = 'C:/Users/Windows/fonts/msyh.ttf',

max_font_size = 200,

random_state = 30,

).generate(text)

plt.imshow(mywc)

plt.axis("off")

plt.show()

4.词云结果