Besides being operated from the Linux shell, Hadoop HDFS can also be driven from Java. Let's implement that next.

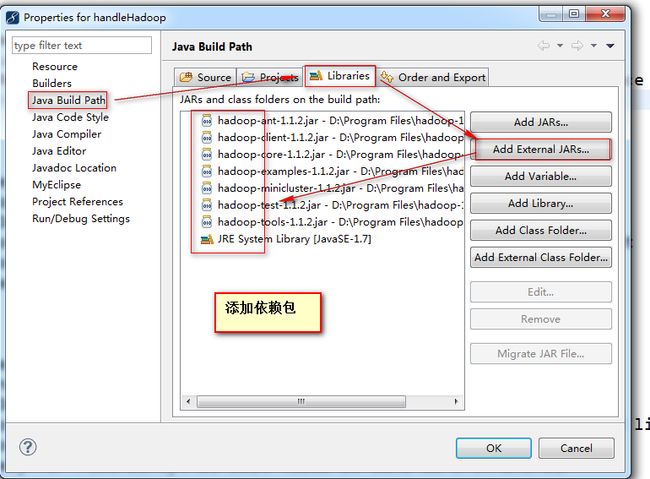

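[1] Create a new Java project and add the Hadoop source package to it

Alternatively, create a lib folder and copy the jars under hadoop/ into it.

[2] Working with HDFS in code

Approach 1: FileSystem and IOUtils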

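import java.io.FileInputStream;
import java.net.URI;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.permission.FsPermission;
import org.apache.hadoop.io.IOUtils;

/**
 * @author mis
 * Uses FileSystem
 */
public class app2 {
    // The hello file under the HDFS root directory
    public static final String HDFS_PATH = "hdfs://hadoop:9000/hello";
    public static final String DIR_PATH = "/DIR001";
    public static final String FILE_PATH = "/DIR001/F1";
    // Use the default permission so that mkdirs(path, permission) never receives null
    private static final FsPermission permission = FsPermission.getDefault();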

    public static void main(String[] args) throws Exception {
        final FileSystem file = FileSystem.get(new URI(HDFS_PATH), new Configuration());

        // Create directories
        // file.mkdirs(f)
        file.mkdirs(new Path(DIR_PATH));
        // file.mkdirs(f, permission)
        file.mkdirs(new Path("/DIR002"), permission);
        // file.mkdirs(fs, dir, permission)

        // Upload a file: copy a local file into a newly created HDFS file
        final FSDataOutputStream out = file.create(new Path(FILE_PATH));
        final FileInputStream localIn = new FileInputStream("E:/Demo.py");
        // The final argument (true) closes both streams once the copy finishes
        IOUtils.copyBytes(localIn, out, 1024, true);

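        // Download a file: read it from HDFS and print it to the console
        final FSDataInputStream hdfsIn = file.open(new Path(FILE_PATH));
        // Pass false so that System.out stays open, then close the HDFS stream explicitly
        IOUtils.copyBytes(hdfsIn, System.out, 1024, false);
        IOUtils.closeStream(hdfsIn);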

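        // Delete a file or a directory
        // The boolean says whether to delete recursively: a non-empty directory
        // requires true; for a single file either true or false works
        file.delete(new Path(DIR_PATH), true);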

        // Check whether a path exists
        // boolean flag = file.exists(new Path("/DIR003/file/Demo.py"));
        // System.out.println(flag);

        // Rename
        // file.rename(new Path("/DIR003/Demo1.py"), new Path("/DIR003/Demo.py"));

        // Check whether a path is a file
        // boolean flag1 = file.isFile(new Path("/DIR003/Demo.py"));
        // System.out.println(flag1);

        // Move a local file to HDFS (the local copy is removed)
        // file.moveFromLocalFile(new Path("E:/Demo.py"), new Path("/Demo.py"));

        // Copy a local file to HDFS
        // file.copyFromLocalFile(new Path("E:/Demo.py"), new Path("/Demo.py"));

        // ... ...
    }
}
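
Both the upload and the download above go through IOUtils.copyBytes(in, out, bufferSize, close). As a rough illustration of what a call of that shape does, here is a JDK-only sketch. The class CopyBytesSketch and its copyBytes method are hypothetical stand-ins written for this post, not Hadoop's actual implementation, and the in-memory streams merely take the place of the local file and the HDFS stream. Unlike the Hadoop method, the sketch returns the number of bytes copied so the result is easy to check.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

// Hypothetical JDK-only stand-in; this is NOT Hadoop's IOUtils implementation.
class CopyBytesSketch {

    // Copies everything from in to out through a buffer of bufSize bytes.
    // When close is true, both streams are closed after the copy loop ends.
    static long copyBytes(InputStream in, OutputStream out, int bufSize, boolean close)
            throws IOException {
        long total = 0;
        try {
            byte[] buf = new byte[bufSize];
            int n;
            while ((n = in.read(buf)) != -1) {
                out.write(buf, 0, n);
                total += n;
            }
            out.flush();
        } finally {
            if (close) {
                in.close();
                out.close();
            }
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = "hello hadoop!".getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        // A deliberately tiny buffer (4 bytes) forces several loop iterations
        long copied = copyBytes(new ByteArrayInputStream(data), sink, 4, true);
        System.out.println(copied + " bytes: " + sink.toString("UTF-8"));
    }
}
```

The close flag is the part worth noticing: true is convenient for an upload, where both streams are finished afterwards, but when the destination is System.out it would close the console stream as well, so false plus an explicit close of the input is the safer choice there.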

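Approach 2: FileSystem

import java.io.BufferedInputStream;
import java.io.File;
import java.io.FileInputStream;
import java.io.InputStream;
import java.net.URI;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.BlockLocation;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileStatus;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.junit.Test;

// JUnit 4 test class; each @Test method exercises one HDFS operation
public class app3 {
    public static String hdfsUrl = "hdfs://hadoop:9000";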

    // Create an HDFS directory
    @Test
    public void testHDFSMkdir() throws Exception {
        // Out of the box, java.net.URL only understands protocols such as http.
        // Uncommenting the next line teaches URL the hdfs protocol as well,
        // so that an hdfs:// address can be parsed.
        // URL.setURLStreamHandlerFactory(new FsUrlStreamHandlerFactory());

        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            Path path = new Path("/test5");
            fs.mkdirs(path);
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(new URI(hdfsUrl), new Configuration());
            fs.mkdirs(new Path("/dir01"));
        }
    }

    // Create a file
    @Test
    public void testCreateFile() throws Exception {
        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            Path path = new Path("/test/a.txt");
            FSDataOutputStream out = fs.create(path);
            out.write("hello hadoop!".getBytes());
            out.close(); // close the stream so the data is flushed to HDFS
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
            FSDataOutputStream out = fs.create(new Path("/dir01/deom2.txt"));
            out.write("hadoop 小试牛刀!".getBytes("utf-8"));
            out.close();
        }
    }

    // Rename a file
    @Test
    public void testRenameFile() throws Exception {
        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            Path path = new Path("/test/a.txt");
            Path newPath = new Path("/test/bb.txt");
            // rename returns true on success
            System.out.println(fs.rename(path, newPath));
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
            System.out.println(fs.rename(new Path("/dir01/deom2.txt"), new Path("/dir01/demo03.txt")));
        }
    }

    // Upload a local file with copyFromLocalFile
    @Test
    public void testUploadLocalFile1() throws Exception {
        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            Path src = new Path("E:/Demo.py");
            Path dst = new Path("/test");
            fs.copyFromLocalFile(src, dst);
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
            fs.copyFromLocalFile(new Path("E:/Demo.py"), new Path("/dir01"));
        }
    }

    // Upload a local file by copying streams
    @Test
    public void testUploadLocalFile2() throws Exception {
        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            InputStream in = new BufferedInputStream(new FileInputStream(new File(
                    "/home/hadoop/hadoop-1.2.1/bin/rcc")));
            FSDataOutputStream out = fs.create(new Path("/test/rcc1"));
            // The final argument (true) closes both streams once the copy finishes
            IOUtils.copyBytes(in, out, 4096, true);
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
            final FSDataOutputStream out = fs.create(new Path("/dir01/files"));
            final FileInputStream in = new FileInputStream("E:/Demo.py");
            IOUtils.copyBytes(in, out, 1024, true);
        }
    }

    // List the files under a directory
    @Test
    public void testListFiles() throws Exception {
        // Standard form
        {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
            Path dst = new Path("/test");
            FileStatus[] files = fs.listStatus(dst);
            for (FileStatus file : files) {
                System.out.println(file.getPath().toString());
            }
        }

        // Condensed form (separate scope, so the variable names can be reused)
        {
            FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
            FileStatus[] files = fs.listStatus(new Path("/dir01"));
            for (FileStatus file : files) {
                System.out.println(file.getPath().toString());
            }
        }
    }

    // Find the data blocks that hold a file
    @Test
    public void testGetBlockInfo() throws Exception {
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(URI.create(hdfsUrl), conf);
        Path dst = new Path("/dir01/Demo.py");
        FileStatus fileStatus = fs.getFileStatus(dst);
        // Ask for the locations of every block covering the whole file
        BlockLocation[] blkloc = fs.getFileBlockLocations(fileStatus, 0,
                fileStatus.getLen());
        for (BlockLocation loc : blkloc) {
            // Print each host that stores a replica of this block
            for (int i = 0; i < loc.getHosts().length; i++) {
                System.out.println(loc.getHosts()[i]);
            }
        }
    }

    // Delete a file or a directory
    @Test
    public void testRemoveFile() throws Exception {
        FileSystem fs = FileSystem.get(URI.create(hdfsUrl), new Configuration());
        fs.delete(new Path("/test5"), true); // true = delete recursively
    }
}
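
The commented-out URL.setURLStreamHandlerFactory line in testHDFSMkdir is there because java.net.URL only accepts protocols it has a handler for, whereas java.net.URI, which is what FileSystem.get takes, parses any well-formed scheme. The JDK-only sketch below illustrates the difference. HdfsUriSketch and urlUnderstands are hypothetical names made up for this post, and the sketch assumes that no Hadoop handler factory has been installed in the JVM.

```java
import java.net.MalformedURLException;
import java.net.URI;

// Hypothetical helper class for illustration; it uses no Hadoop classes.
class HdfsUriSketch {

    // Returns true if java.net.URL has a protocol handler for the given address
    // with the handlers currently installed in this JVM.
    static boolean urlUnderstands(String spec) {
        try {
            URI.create(spec).toURL();
            return true;
        } catch (MalformedURLException e) {
            // Thrown when no handler is registered for the protocol
            return false;
        }
    }

    public static void main(String[] args) {
        // URI splits the address into its parts without needing any handler
        URI uri = URI.create("hdfs://hadoop:9000/hello");
        System.out.println("scheme=" + uri.getScheme()
                + " host=" + uri.getHost()
                + " port=" + uri.getPort()
                + " path=" + uri.getPath());

        System.out.println("URL understands http: " + urlUnderstands("http://hadoop:9000/hello"));
        System.out.println("URL understands hdfs: " + urlUnderstands("hdfs://hadoop:9000/hello"));
    }
}
```

This is why the tests above get by with URI.create(hdfsUrl) alone: the handler factory only matters when an hdfs:// address has to go through java.net.URL.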