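Flume Quick Start Series (3) | How to Read Local Files and Directories into HDFS in Real Time

The previous post gave a brief introduction to Flume. This post continues with how to read a local file, or the files in a directory, into HDFS in real time.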

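Contents

- I. Reading a Local File into HDFS in Real Time

- 1.1 Requirement

- 1.2 Requirement Analysis

- 1.3 Implementation Steps

- 1. To write data to HDFS, Flume needs the relevant Hadoop jars

- 2. Create the flume-file-hdfs.conf file

- 3. Run the monitoring configuration

- 4. Start Hadoop and Hive, then operate Hive to generate logs

- 5. View the files on HDFS

- II. Reading the Files in a Directory into HDFS in Real Time

- 2.1 Requirement

- 2.2 Requirement Analysis

- 2.3 Implementation Steps

- 1. Create the configuration file flume-dir-hdfs.conf

- 2. Start the directory-monitoring command

- 3. Add files to the upload directory

- 4. View the data on HDFS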

The files needed for this part have been packaged and uploaded to Baidu Cloud. Download them if you need them:

Link: https://pan.baidu.com/s/11KET693o47XR2WRXhbzFAA

Extraction code: n4fl

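I. Reading a Local File into HDFS in Real Time

1.1 Requirement

Monitor the Hive log in real time and upload it to HDFS.

1.2 Requirement Analysis

1.3 Implementation Steps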

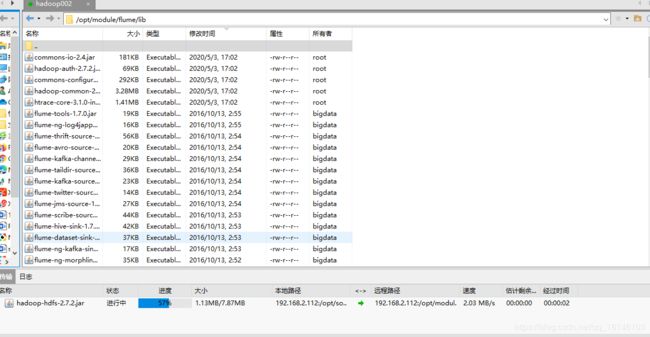

1. To write data to HDFS, Flume needs the relevant Hadoop jars

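Make the following jars available on Flume's classpath (typically by copying them into the lib directory of the Flume installation):

- commons-configuration-1.6.jar

- hadoop-auth-2.7.2.jar

- hadoop-common-2.7.2.jar

- hadoop-hdfs-2.7.2.jar

- commons-io-2.4.jar

- htrace-core-3.1.0-incubating.jar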
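The copy step for the jars listed above can be sketched as a small shell function. This is only a sketch: copy_hadoop_jars is a hypothetical helper, and both directory arguments are assumptions that depend on where Hadoop and Flume are installed. It searches the Hadoop tree by file name, so it does not rely on any particular subdirectory layout.

```shell
# Sketch: copy the six Hadoop jars into a Flume lib directory.
# copy_hadoop_jars is a hypothetical helper, not part of Flume or Hadoop.
# $1 = root of the Hadoop installation, $2 = Flume lib directory (both assumed).
copy_hadoop_jars() {
  local hadoop_home="$1" flume_lib="$2" jar found
  for jar in \
    commons-configuration-1.6.jar \
    hadoop-auth-2.7.2.jar \
    hadoop-common-2.7.2.jar \
    hadoop-hdfs-2.7.2.jar \
    commons-io-2.4.jar \
    htrace-core-3.1.0-incubating.jar
  do
    # Search the whole tree so the exact subdirectory does not matter.
    found=$(find "$hadoop_home" -type f -name "$jar" | head -n 1)
    if [ -n "$found" ]; then
      cp "$found" "$flume_lib/"
    else
      echo "missing: $jar" >&2
    fi
  done
}
```

A call might look like copy_hadoop_jars "$HADOOP_HOME" /opt/module/flume/lib, assuming HADOOP_HOME is set and Flume lives under /opt/module/flume as in the rest of this post.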

2. Create the flume-file-hdfs.conf file

- 1. Create the file

[bigdata@hadoop002 job]$ vim flume-file-hdfs.conf

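Note: To read a file on a Linux system, Flume has to run a Linux command. Because the Hive log lives on the Linux file system, the source type is exec (short for execute), which means the source runs a Linux command to read the file.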

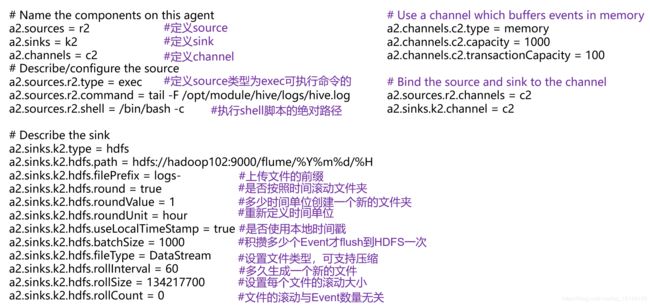

- 2. Add the following content to the file

# Name the components on this agent

a2.sources = r2

a2.sinks = k2

a2.channels = c2

# Describe/configure the source

a2.sources.r2.type = exec

a2.sources.r2.command = tail -F /opt/module/datas/flume_tmp.log

a2.sources.r2.shell = /bin/bash -c

# Describe the sink

a2.sinks.k2.type = hdfs

a2.sinks.k2.hdfs.path = hdfs://hadoop002:9000/flume/%Y%m%d/%H

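# Prefix for the uploaded files

a2.sinks.k2.hdfs.filePrefix = logs-

# Whether to round the timestamp down, so that directories roll over by time

a2.sinks.k2.hdfs.round = true

# How many time units pass before a new directory is created

a2.sinks.k2.hdfs.roundValue = 1

# Time unit used for the rounding

a2.sinks.k2.hdfs.roundUnit = hour

# Whether to use the local timestamp

a2.sinks.k2.hdfs.useLocalTimeStamp = true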

# Number of events to accumulate before flushing to HDFS (kept no larger than the channel's transactionCapacity of 100)

a2.sinks.k2.hdfs.batchSize = 100

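# File type; compressed types are also supported

a2.sinks.k2.hdfs.fileType = DataStream

# How often, in seconds, to roll to a new file

a2.sinks.k2.hdfs.rollInterval = 60

# File size, in bytes, that triggers a roll (roughly 128 MB)

a2.sinks.k2.hdfs.rollSize = 134217700

# 0 means file rolling does not depend on the number of events

a2.sinks.k2.hdfs.rollCount = 0

# Minimum number of block replicas

a2.sinks.k2.hdfs.minBlockReplicas = 1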

# Use a channel which buffers events in memory

a2.channels.c2.type = memory

a2.channels.c2.capacity = 1000

a2.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r2.channels = c2

a2.sinks.k2.channel = c2
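The three roll settings in the configuration above work together: a file is rolled when any enabled trigger fires, and a value of 0 disables that trigger. The following is a simplified model of that decision, not Flume's actual code; should_roll and the three variables are hypothetical names that mirror the values used in this post.

```shell
# Simplified model of the HDFS sink roll decision (a sketch, not Flume source).
# The values mirror hdfs.rollInterval, hdfs.rollSize and hdfs.rollCount above.
ROLL_INTERVAL=60         # seconds; 0 would disable time-based rolling
ROLL_SIZE=134217700      # bytes; 0 would disable size-based rolling
ROLL_COUNT=0             # events; 0 disables count-based rolling

# should_roll AGE_SECONDS BYTES_WRITTEN EVENTS_WRITTEN
# Returns 0 (true) when any enabled trigger has been reached.
should_roll() {
  local age="$1" bytes="$2" events="$3"
  if [ "$ROLL_INTERVAL" -gt 0 ] && [ "$age" -ge "$ROLL_INTERVAL" ]; then return 0; fi
  if [ "$ROLL_SIZE" -gt 0 ] && [ "$bytes" -ge "$ROLL_SIZE" ]; then return 0; fi
  if [ "$ROLL_COUNT" -gt 0 ] && [ "$events" -ge "$ROLL_COUNT" ]; then return 0; fi
  return 1
}

# The configured size sits just under one 128 MiB HDFS block.
echo "128 MiB = $((128 * 1024 * 1024)) bytes"   # prints: 128 MiB = 134217728 bytes
```

With these values a quiet log still produces a new file every 60 seconds, while a busy one rolls as soon as a file approaches 128 MB, whichever comes first.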

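Note:

For every time-related escape sequence, the event header must contain a key named "timestamp", unless hdfs.useLocalTimeStamp is set to true, in which case the sink uses the agent's local time instead. Another way to add the header automatically is the TimestampInterceptor.

a2.sinks.k2.hdfs.useLocalTimeStamp = true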
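To make the escape sequences concrete, here is a sketch of how a timestamp could map to a directory under /flume with round = true, roundValue = 1 and roundUnit = hour. It is an illustration under assumptions: hdfs_bucket_path is a hypothetical helper, and it formats in UTC for reproducibility, whereas the sink uses the agent's local time zone unless configured otherwise.

```shell
# Sketch: resolve /flume/%Y%m%d/%H for a given epoch timestamp (in seconds).
# hdfs_bucket_path is a hypothetical helper, not part of Flume.
hdfs_bucket_path() {
  local epoch_seconds="$1" rounded
  # Round down to the start of the hour (roundValue = 1, roundUnit = hour).
  rounded=$(( epoch_seconds - epoch_seconds % 3600 ))
  # GNU date takes -d @SECONDS; BSD date takes -r SECONDS.
  date -u -d "@$rounded" +"/flume/%Y%m%d/%H" 2>/dev/null ||
    date -u -r "$rounded" +"/flume/%Y%m%d/%H"
}

hdfs_bucket_path 0      # prints /flume/19700101/00
hdfs_bucket_path 3599   # same hour, same directory: /flume/19700101/00
hdfs_bucket_path 3600   # next hour: /flume/19700101/01
```

Because the finest unit in this path is the hour, rounding down to one hour does not change the directory name. Rounding would become visible if the path also contained a finer escape such as %M.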

- 3. Detailed explanation of the configuration file

3. Run the monitoring configuration

[bigdata@hadoop002 flume]$ bin/flume-ng agent --conf conf/ --name a2 --conf-file job/flume-file-hdfs.conf

4. Start Hadoop and Hive, then operate Hive to generate logs

[bigdata@hadoop002 flume]$ start-dfs.sh

[bigdata@hadoop002 flume]$ start-yarn.sh    # optional; skipping YARN saves memory

[bigdata@hadoop002 hive]$ bin/hive

hive (default)>

[bigdata@hadoop002 hive]$ echo 123 > /opt/module/datas/flume_tmp.log    # write one log entry first
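The echo above writes a single line, and > truncates the file each time. For a slightly longer test of the tail -F source, a few lines can be appended with >> instead. This is a sketch only; emit_test_lines is a hypothetical helper and the message format is made up for illustration.

```shell
# Sketch: append COUNT timestamped test lines to a log file.
# emit_test_lines is a hypothetical helper; the line format is arbitrary.
emit_test_lines() {
  local log_file="$1" count="$2" i=1
  while [ "$i" -le "$count" ]; do
    # >> appends, so lines already picked up by tail -F stay in place.
    printf '%s test event %d\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$i" >> "$log_file"
    i=$((i + 1))
  done
}
```

For example, emit_test_lines /opt/module/datas/flume_tmp.log 10 would append ten lines to the file that the exec source is tailing.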

5. View the files on HDFS

- 1. View the content
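With the sink path /flume/%Y%m%d/%H and local timestamps, the output for the current hour should land in a directory named after the current date and hour. The sketch below builds that path and lists it with the standard hdfs dfs commands. It assumes the hdfs client is on the PATH and that this shell and the agent share a time zone; flume_hour_dir and show_flume_output are hypothetical helper names.

```shell
# Sketch: locate and inspect the current hour's output directory on HDFS.
# flume_hour_dir and show_flume_output are hypothetical helpers.

# Print BASE/YYYYMMDD/HH for the current local time.
flume_hour_dir() {
  local base="${1%/}"
  printf '%s/%s\n' "$base" "$(date +%Y%m%d/%H)"
}

# List the directory, then print the first lines of the files in it.
# The glob is quoted so that HDFS, not the local shell, expands it.
show_flume_output() {
  local dir
  dir=$(flume_hour_dir "${1:-/flume}")
  hdfs dfs -ls "$dir" && hdfs dfs -cat "$dir/*" | head
}
```

Running show_flume_output /flume on a cluster node should list the files that start with the logs- prefix. A file that is still being written normally carries a temporary suffix until it is rolled.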

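II. Reading the Files in a Directory into HDFS in Real Time

2.1 Requirement

Use Flume to monitor all the files in a directory.

2.2 Requirement Analysis

2.3 Implementation Steps

1. Create the configuration file flume-dir-hdfs.conf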

- 1. Create the file

[bigdata@hadoop002 job]$ vim flume-dir-hdfs.conf

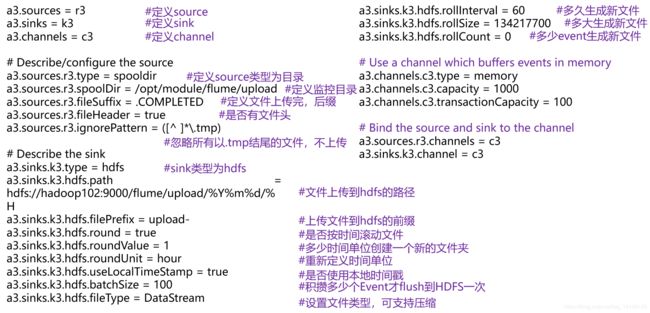

- 2. Add the following content

a3.sources = r3

a3.sinks = k3

a3.channels = c3

# Describe/configure the source

a3.sources.r3.type = spooldir

a3.sources.r3.spoolDir = /opt/module/flume/upload

a3.sources.r3.fileSuffix = .COMPLETED

a3.sources.r3.fileHeader = true

# Ignore every file whose name ends in .tmp; these files are not uploaded

a3.sources.r3.ignorePattern = ([^ ]*\.tmp)

# Describe the sink

a3.sinks.k3.type = hdfs

a3.sinks.k3.hdfs.path = hdfs://hadoop002:9000/flume/upload/%Y%m%d/%H

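# Prefix for the uploaded files

a3.sinks.k3.hdfs.filePrefix = upload-

# Whether to round the timestamp down, so that directories roll over by time

a3.sinks.k3.hdfs.round = true

# How many time units pass before a new directory is created

a3.sinks.k3.hdfs.roundValue = 1

# Time unit used for the rounding

a3.sinks.k3.hdfs.roundUnit = hour

# Whether to use the local timestamp

a3.sinks.k3.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a3.sinks.k3.hdfs.batchSize = 100

# File type; compressed types are also supported

a3.sinks.k3.hdfs.fileType = DataStream

# How often, in seconds, to roll to a new file

a3.sinks.k3.hdfs.rollInterval = 60

# File size, in bytes, that triggers a roll (roughly 128 MB)

a3.sinks.k3.hdfs.rollSize = 134217700

# 0 means file rolling does not depend on the number of events

a3.sinks.k3.hdfs.rollCount = 0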

# Use a channel which buffers events in memory

a3.channels.c3.type = memory

a3.channels.c3.capacity = 1000

a3.channels.c3.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r3.channels = c3

a3.sinks.k3.channel = c3
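The ignorePattern in the configuration above decides which files the source skips. The sketch below approximates that check with grep so the pattern can be tried out before the agent is started. It rests on assumptions: Flume evaluates the pattern as a Java regular expression against the file name, and as far as I understand the whole name has to match, which is why grep is called with -x here. is_ignored is a hypothetical helper.

```shell
# Sketch: approximate the spooldir ignorePattern check with grep.
# is_ignored is a hypothetical helper; grep -E stands in for Java regex,
# and -x requires the whole file name to match the pattern.
is_ignored() {
  printf '%s\n' "$1" | grep -Eqx '([^ ]*\.tmp)'
}

for name in buwenbuhuo.txt buwenbuhuo.tmp buwenbuhuo.log; do
  if is_ignored "$name"; then
    echo "$name: ignored"
  else
    echo "$name: picked up"
  fi
done
```

Under this approximation only buwenbuhuo.tmp is ignored, while the .txt and .log files are picked up. For simple patterns like this one the two regex dialects agree, but more advanced Java regex syntax may behave differently under grep.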

2. Start the directory-monitoring command

[bigdata@hadoop002 flume]$ bin/flume-ng agent --conf conf/ --name a3 --conf-file job/flume-dir-hdfs.conf

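Notes on using the Spooling Directory Source:

1. Do not create a file in the monitored directory and then keep modifying it.

2. Files that have been fully uploaded are renamed with the .COMPLETED suffix.

3. The monitored directory is scanned for file changes every 500 milliseconds.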
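One way to respect the first note is to finish writing a file somewhere else and only then move it into the monitored directory. The sketch below stages the file in a sibling directory, so that the final mv is a rename on the same file system rather than a slow copy. spool_file is a hypothetical helper, and the sibling staging location is an assumption that only holds when the parent directory is writable and sits on the same file system as the monitored directory.

```shell
# Sketch: hand a finished file to the spooling directory in one rename.
# spool_file is a hypothetical helper, not part of Flume.
# $1 = file to upload, $2 = monitored directory (e.g. /opt/module/flume/upload)
spool_file() {
  local src="$1" spool_dir="${2%/}" staging status
  # Stage next to the monitored directory, never inside it.
  staging=$(mktemp -d "${spool_dir}.staging.XXXXXX") || return 1
  # The slow part (copying) happens in staging; the final mv is a rename.
  if cp "$src" "$staging/" && mv "$staging/$(basename "$src")" "$spool_dir/"; then
    status=0
  else
    status=1
  fi
  rm -rf "$staging"
  return "$status"
}
```

The source then only ever sees complete files, so it has no chance to pick up a file that is still growing.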

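3. Add files to the upload directory

- 1. Create the upload directory under /opt/module/flume (create it before starting the agent in step 2, since the Spooling Directory Source expects the directory to exist)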

[bigdata@hadoop002 flume]$ mkdir upload

- 2. Add files to the upload directory

[bigdata@hadoop002 flume]$ cd upload/

[bigdata@hadoop002 upload]$ touch buwenbuhuo.txt

[bigdata@hadoop002 upload]$ touch buwenbuhuo.tmp

[bigdata@hadoop002 upload]$ touch buwenbuhuo.log

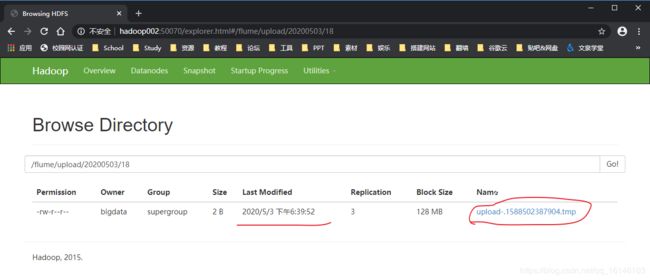

4. View the data on HDFS

That wraps up this post.
