Basic Kafka commands on CDH: using topics, and testing a producer that produces data and a consumer that consumes it

This post summarizes some basic commands I used to test the Kafka I installed on CDH.

1. Background

Every host in a Kafka cluster runs a server called a broker, which stores the messages sent to topics and serves consumer requests.

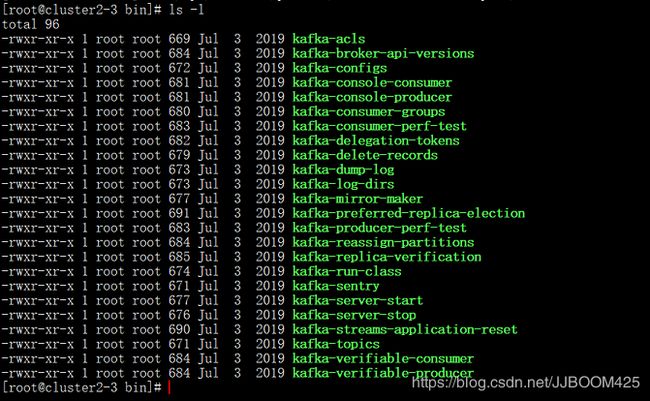

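First, look at the Kafka instances installed on the servers:

Note:

A normal Kafka command looks like: ./bin/kafka-topics.sh --zookeeper cluster2-4:2181 .......

With Kafka installed through CDH, however, you do not write out the ./bin/kafka-topics.sh part; typing kafka-topics is enough. This is an important difference to keep in mind when working with CDH's Kafka.

The available commands are in this directory: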

/opt/cloudera/parcels/KAFKA-4.1.0-1.4.1.0.p0.4/bin
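If the same scripts have to run both on CDH hosts and on hosts with a plain Apache Kafka installation, a small helper can pick the right command name. This is a minimal bash sketch under stated assumptions: the function name resolve_kafka_cmd and the KAFKA_HOME variable are hypothetical, and it only assumes that the CDH wrapper (for example kafka-topics) is on PATH, while the upstream script lives at bin/kafka-topics.sh.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command to use for a Kafka tool.
# On CDH the parcel wrapper (e.g. kafka-topics) is on PATH; otherwise fall
# back to the upstream layout $KAFKA_HOME/bin/<tool>.sh (an assumption).
resolve_kafka_cmd() {
  local tool="$1"   # e.g. kafka-topics, kafka-console-producer
  if command -v "$tool" >/dev/null 2>&1; then
    printf '%s\n' "$tool"
  else
    # KAFKA_HOME defaults to the current directory, matching ./bin/kafka-topics.sh
    printf '%s/bin/%s.sh\n' "${KAFKA_HOME:-.}" "$tool"
  fi
}
```

For example, "$(resolve_kafka_cmd kafka-topics)" --zookeeper cluster2-4:2181/kafka --list runs the CDH wrapper on a CDH host and ./bin/kafka-topics.sh elsewhere.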

2. Using topics

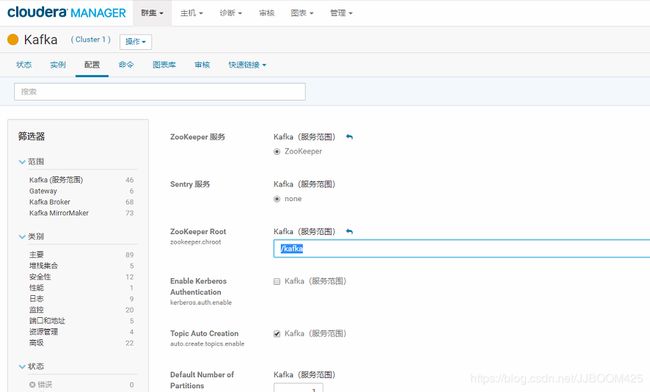

Next, let's test the topic commands. First check the ZooKeeper Root configured for the Kafka service in CDH; here it is "/kafka".

So every topic command should follow this pattern:

kafka-topics --zookeeper cluster2-4:2181/kafka ......
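The only part that differs between the "/kafka" and "/" layouts is the chroot suffix on the ZooKeeper connect string. A minimal bash sketch, assuming you pass in the host list and the ZooKeeper Root shown in Cloudera Manager; the function names zk_connect and print_create_cmd are hypothetical, and print_create_cmd only prints the command so you can check it before running it.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append the Kafka chroot ("ZooKeeper Root" in Cloudera
# Manager) to a ZooKeeper host list. "/" means no chroot.
zk_connect() {
  local hosts="$1"       # e.g. cluster2-4:2181 or cluster2-3:2181,cluster2-4:2181
  local root="${2:-/}"   # e.g. /kafka
  case "$root" in
    /*) ;;               # already absolute
    *) root="/$root" ;;  # accept "kafka" as well as "/kafka"
  esac
  root="${root%/}"       # "/" -> "", "/kafka/" -> "/kafka"
  # The chroot is written once, after the last host:port pair.
  printf '%s%s\n' "$hosts" "$root"
}

# Hypothetical helper: print (without running) the create command from this section.
print_create_cmd() {
  local zk="$1" topic="$2" partitions="${3:-3}" replication="${4:-1}"
  printf 'kafka-topics --zookeeper %s --create --replication-factor %s --partitions %s --topic %s\n' \
    "$zk" "$replication" "$partitions" "$topic"
}
```

For the "/" layout, print_create_cmd "$(zk_connect cluster2-4:2181 /)" test prints the same command as the second create example in this section.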

A. Create a topic named test:

kafka-topics --zookeeper cluster2-4:2181/kafka --create --replication-factor 1 --partitions 3 --topic test

If the ZooKeeper Root of the Kafka service were "/" instead, the create command would become:

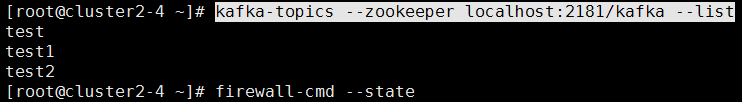

kafka-topics --zookeeper cluster2-4:2181 --create --replication-factor 1 --partitions 3 --topic test

B. List the existing topics:

kafka-topics --zookeeper localhost:2181/kafka --list

C. Delete a topic:

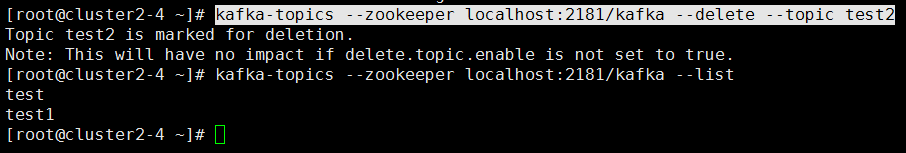

kafka-topics --zookeeper localhost:2181/kafka --delete --topic test2

Further notes:

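Deleting a topic this way prints Topic *** is marked for deletion, as in the screenshot above. If a topic has piled up too many messages, or the disk holding Kafka is full, you may need to clean the topic out completely.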

Method 1: edit the Kafka configuration file server.properties, add delete.topic.enable=true, and restart Kafka. After that, the kafka-topics command can delete topics directly.
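Before relying on method 1, you can check whether a properties file really sets the flag. A minimal bash sketch: the function name is hypothetical, the path you pass is up to you, and it only reports whether the file explicitly sets delete.topic.enable=true with an "=" separator; it knows nothing about the broker's built-in default.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the given properties file explicitly sets
# delete.topic.enable=true. Commented-out lines are ignored, and if the key
# appears more than once the last occurrence wins, as with Java properties files.
sets_delete_topic_enable() {
  local file="$1" value
  value=$(grep -E '^[[:space:]]*delete\.topic\.enable[[:space:]]*=' "$file" \
    | tail -n 1 | cut -d= -f2- | tr -d '[:space:]')
  [ "$value" = "true" ]
}
```

Usage: sets_delete_topic_enable /path/to/server.properties && echo "topic deletion enabled".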

Method 2: delete the topic from the command line: ./bin/kafka-topics.sh --delete --zookeeper {zookeeper server} --topic {topic name}

If server.properties does not set delete.topic.enable=true, this is not a real deletion: the topic is only flagged as "marked for deletion". You can list all topics with ./bin/kafka-topics.sh --zookeeper {zookeeper server} --list.
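To see which state a topic is in after --delete, you can search the --list output. A minimal bash sketch; the function name topic_status is hypothetical, and it assumes the ZooKeeper-based kafka-topics prints a pending topic as "<name> - marked for deletion" in --list, which is the wording Kafka 2.x uses; check it on your version.

```bash
#!/usr/bin/env bash
# Hypothetical helper: classify one topic in the text printed by
# `kafka-topics --zookeeper ... --list` as marked, present, or absent.
topic_status() {
  local topic="$1" listing="$2"
  # -F: fixed string, -x: whole line, -q: quiet; "--" guards names starting with "-"
  if printf '%s\n' "$listing" | grep -Fxq -- "$topic - marked for deletion"; then
    echo marked
  elif printf '%s\n' "$listing" | grep -Fxq -- "$topic"; then
    echo present
  else
    echo absent
  fi
}
```

Usage: topic_status test2 "$(kafka-topics --zookeeper cluster2-4:2181/kafka --list)".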

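Method 3: to really delete the topic, log in to the ZooKeeper client:

zookeeper-client

Find the directory that holds the topics:

ls /kafka/brokers/topics

Then run the following command to remove the topic from ZooKeeper (this does not touch the log files in the brokers' data directories):

rmr /kafka/brokers/topics/{topic name}

D. Change the number of partitions of a topic: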

kafka-topics --zookeeper localhost:2181/kafka --alter --topic test --partitions 5

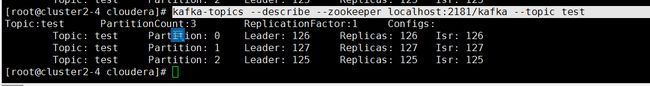
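Kafka only allows a topic's partition count to be increased, never decreased, so it is worth reading the current count first. A minimal bash sketch; the function names are hypothetical, and it assumes the header line printed by kafka-topics --describe contains PartitionCount followed by the number (depending on the Kafka version this reads PartitionCount:3 or PartitionCount: 3; the regex accepts both).

```bash
#!/usr/bin/env bash
# Hypothetical helper: extract PartitionCount from `kafka-topics --describe` output.
partition_count() {
  printf '%s\n' "$1" | sed -n 's/.*PartitionCount: *\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Hypothetical helper: succeed only if the requested count is larger than the current one.
can_alter_partitions() {
  local current="$1" requested="$2"
  [ -n "$current" ] && [ "$requested" -gt "$current" ]
}

# Example (not run here):
#   desc=$(kafka-topics --describe --zookeeper localhost:2181/kafka --topic test)
#   can_alter_partitions "$(partition_count "$desc")" 5 &&
#     kafka-topics --zookeeper localhost:2181/kafka --alter --topic test --partitions 5
```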

E. Show a topic's details:

kafka-topics --describe --zookeeper localhost:2181/kafka --topic test

F. We can also use this to check that the distributed setup is connected correctly:

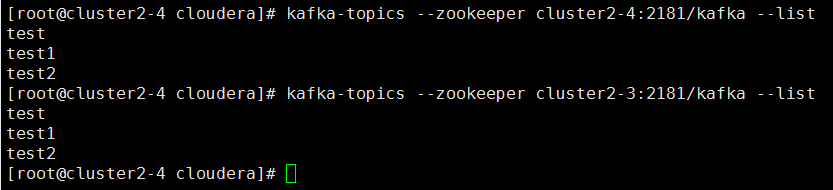

kafka-topics --zookeeper cluster2-4:2181/kafka --list

kafka-topics --zookeeper cluster2-3:2181/kafka --list

On server 2-4, passing either cluster2-4:2181/kafka or cluster2-3:2181/kafka returns the same information.
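The same check can be scripted across all ZooKeeper hosts. A minimal bash sketch; the function name and the KAFKA_TOPICS override (handy for a dry run with a stub) are hypothetical. It compares the sorted --list output, so it only tells you that every ZooKeeper server returns the same topic names.

```bash
#!/usr/bin/env bash
# Hypothetical helper: run `--list` against each ZooKeeper host (with the same
# chroot) and fail if any host returns a different sorted topic list.
same_topics_everywhere() {
  local root="$1"; shift          # chroot, e.g. /kafka ("" for none)
  local reference="" current host seen=0
  for host in "$@"; do            # e.g. cluster2-3:2181 cluster2-4:2181
    current=$("${KAFKA_TOPICS:-kafka-topics}" --zookeeper "${host}${root}" --list | sort)
    if [ "$seen" -eq 0 ]; then
      reference="$current"
      seen=1
    elif [ "$current" != "$reference" ]; then
      printf 'topic list from %s differs\n' "$host" >&2
      return 1
    fi
  done
  return 0
}
```

Usage: same_topics_everywhere /kafka cluster2-3:2181 cluster2-4:2181 && echo "all ZooKeeper hosts agree".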

kafka-topics options:

| Option | Description |
| ------ | ----------- |
| --alter | Alter the number of partitions, replica assignment, and/or configuration for the topic. |
| --bootstrap-server | REQUIRED: The Kafka server to connect to. In case of providing this, a direct Zookeeper connection won't be required. |
| --command-config | Property file containing configs to be passed to Admin Client. This is used only with --bootstrap-server option for describing and altering broker configs. |
| --config | A topic configuration override for the topic being created or altered. The following is a list of valid configurations: cleanup.policy, compression.type, delete.retention.ms, file.delete.delay.ms, flush.messages, flush.ms, follower.replication.throttled.replicas, index.interval.bytes, leader.replication.throttled.replicas, max.message.bytes, message.downconversion.enable, message.format.version, message.timestamp.difference.max.ms, message.timestamp.type, min.cleanable.dirty.ratio, min.compaction.lag.ms, min.insync.replicas, preallocate, retention.bytes, retention.ms, segment.bytes, segment.index.bytes, segment.jitter.ms, segment.ms, unclean.leader.election.enable. See the Kafka documentation for full details on the topic configs. It is supported only in combination with --create if --bootstrap-server option is used. |
| --create | Create a new topic. |
| --delete | Delete a topic. |
| --delete-config | A topic configuration override to be removed for an existing topic (see the list of configurations under the --config option). Not supported with the --bootstrap-server option. |
| --describe | List details for the given topics. |
| --disable-rack-aware | Disable rack aware replica assignment. |
| --exclude-internal | Exclude internal topics when running list or describe command. The internal topics will be listed by default. |
| --force | Suppress console prompts. |
| --help | Print usage information. |
| --if-exists | If set when altering or deleting or describing topics, the action will only execute if the topic exists. Not supported with the --bootstrap-server option. |
| --if-not-exists | If set when creating topics, the action will only execute if the topic does not already exist. Not supported with the --bootstrap-server option. |
| --list | List all available topics. |
| --partitions | The number of partitions for the topic being created or altered (WARNING: If partitions are increased for a topic that has a key, the partition logic or ordering of the messages will be affected). |
| --replica-assignment | A list of manual partition-to-broker assignments for the topic being created or altered. |
| --replication-factor | The replication factor for each partition in the topic being created. |
| --topic | The topic to create, alter, describe or delete. It also accepts a regular expression, except for --create option. Put topic name in double quotes and use the `\` prefix to escape regular expression symbols; e.g. `"test\.topic"`. |
| --topics-with-overrides | If set when describing topics, only show topics that have overridden configs. |
| --unavailable-partitions | If set when describing topics, only show partitions whose leader is not available. |
| --under-replicated-partitions | If set when describing topics, only show under replicated partitions. |
| --zookeeper | DEPRECATED, The connection string for the zookeeper connection in the form host:port. Multiple hosts can be given to allow fail-over. |

3. Testing a producer that produces data and a consumer that consumes it

With the topic created, let's now test producing data with Kafka's kafka-console-producer on one side and consuming it with kafka-console-consumer on the other.

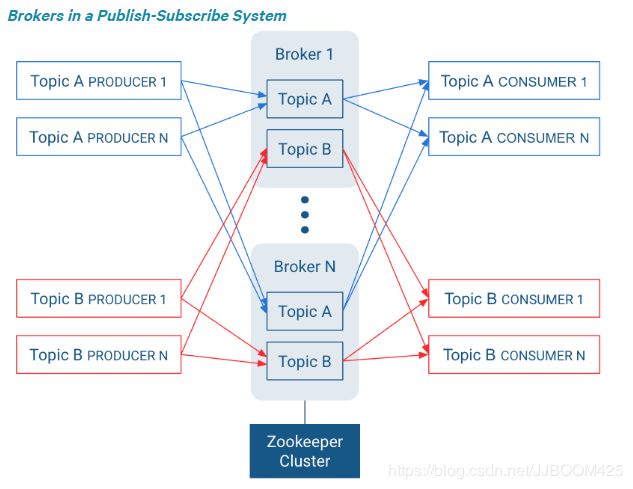

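It also helps to first understand the broker architecture of this publish-subscribe system:

The producer writes data to a topic, and the consumer reads data from the topic it wants to consume.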

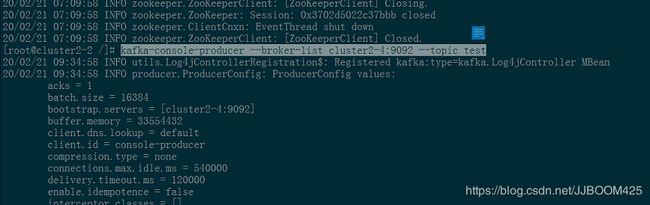

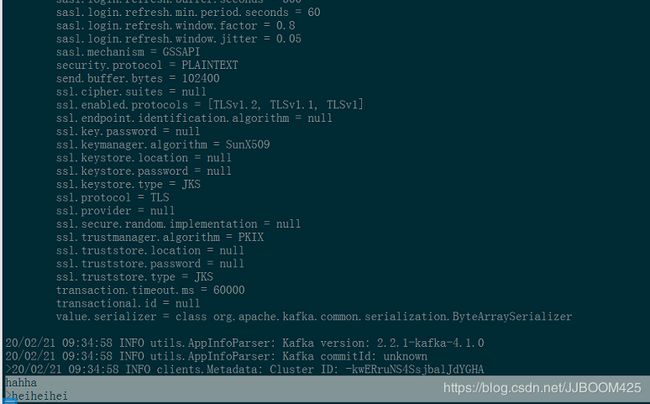

- First, start the producer:

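kafka-console-producer --broker-list cluster2-4:9092 --topic test

- Type data here. Each line is sent as a message to the test topic; the messages themselves are stored on the brokers, while ZooKeeper only keeps the topic's metadata (here under /kafka/brokers/topics/test).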
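Typing into the console producer is fine for a quick check; for repeatable tests you can pipe lines into it instead, since kafka-console-producer reads standard input and by default sends each line as a separate message (see the --line-reader option below). A minimal bash sketch; send_messages, BROKER_LIST and the PRODUCER override (for swapping in a stub) are hypothetical names.

```bash
#!/usr/bin/env bash
# Hypothetical helper: send each remaining argument as one message to a topic.
send_messages() {
  local topic="$1"; shift
  [ "$#" -gt 0 ] || return 0      # nothing to send, so don't start a producer
  # One argument per line; the console producer turns each line into a message.
  printf '%s\n' "$@" | "${PRODUCER:-kafka-console-producer}" \
    --broker-list "${BROKER_LIST:-cluster2-4:9092}" --topic "$topic"
}
```

Usage: send_messages test "hello" "world" sends two messages to the test topic through cluster2-4:9092.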

kafka-console-producer options:

Option Description

------ -----------

--batch-size Number of messages to send in a single

batch if they are not being sent

synchronously. (default: 200)

--broker-list REQUIRED: The broker list string in

the form HOST1:PORT1,HOST2:PORT2.

--compression-codec [String: The compression codec: either 'none',

compression-codec] 'gzip', 'snappy', 'lz4', or 'zstd'.

If specified without value, then it

defaults to 'gzip'

--help Print usage information.

--line-reader The class name of the class to use for

reading lines from standard in. By

default each line is read as a

separate message. (default: kafka.

tools.

ConsoleProducer$LineMessageReader)

--max-block-ms block for during a send request

(default: 60000)

--max-memory-bytes to buffer records waiting to be sent

to the server. (default: 33554432)

--max-partition-memory-bytes partition. When records are received

which are smaller than this size the

producer will attempt to

optimistically group them together

until this size is reached.

(default: 16384)

--message-send-max-retries Brokers can fail receiving the message

for multiple reasons, and being

unavailable transiently is just one

of them. This property specifies the

number of retires before the

producer give up and drop this

message. (default: 3)

--metadata-expiry-ms after which we force a refresh of

metadata even if we haven't seen any

leadership changes. (default: 300000)

--producer-property properties in the form key=value to

the producer.

--producer.config Producer config properties file. Note

that [producer-property] takes

precedence over this config.

--property A mechanism to pass user-defined

properties in the form key=value to

the message reader. This allows

custom configuration for a user-

defined message reader.

--request-required-acks requests (default: 1)

--request-timeout-ms requests. Value must be non-negative

and non-zero (default: 1500)

--retry-backoff-ms Before each retry, the producer

refreshes the metadata of relevant

topics. Since leader election takes

a bit of time, this property

specifies the amount of time that

the producer waits before refreshing

the metadata. (default: 100)

--socket-buffer-size The size of the tcp RECV size.

(default: 102400)

--sync If set message send requests to the

brokers are synchronously, one at a

time as they arrive.

--timeout If set and the producer is running in

asynchronous mode, this gives the

maximum amount of time a message

will queue awaiting sufficient batch

size. The value is given in ms.

(default: 1000)

--topic REQUIRED: The topic id to produce

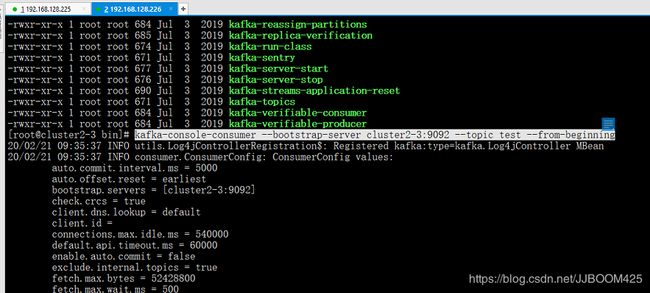

messages to. - 接着启动消费者:

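kafka-console-consumer --bootstrap-server cluster2-3:9092 --topic test --from-beginning

The trailing --from-beginning makes the consumer read the messages of all partitions starting from the earliest valid offset of the topic (strictly speaking, only when the consumer has no established offset yet; see the option description below).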

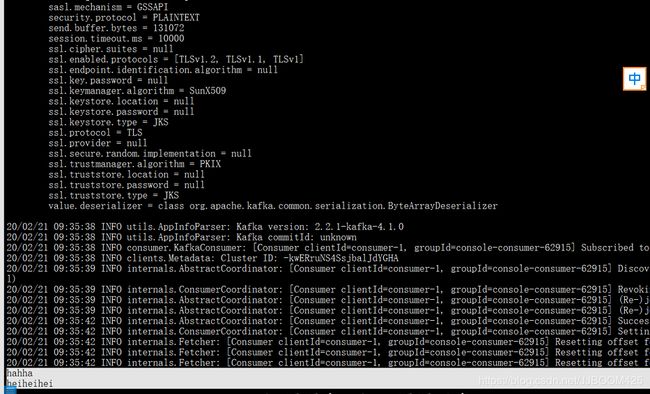

- The consumer receives the topic's data:

kafka-console-consumer options:

| Option | Description |
| ------ | ----------- |
| --bootstrap-server | REQUIRED: The server(s) to connect to. |
| --consumer-property | A mechanism to pass user-defined properties in the form key=value to the consumer. |
| --consumer.config | Consumer config properties file. Note that [consumer-property] takes precedence over this config. |
| --enable-systest-events | Log lifecycle events of the consumer in addition to logging consumed messages. (This is specific for system tests.) |
| --formatter | The name of a class to use for formatting kafka messages for display. (default: kafka.tools.DefaultMessageFormatter) |
| --from-beginning | If the consumer does not already have an established offset to consume from, start with the earliest message present in the log rather than the latest message. |
| --group | The consumer group id of the consumer. |
| --help | Print usage information. |
| --isolation-level | Set to read_committed in order to filter out transactional messages which are not committed. Set to read_uncommitted to read all messages. (default: read_uncommitted) |
| --key-deserializer | |
| --max-messages | The maximum number of messages to consume before exiting. If not set, consumption is continual. |
| --offset | The offset id to consume from (a non-negative number), or 'earliest' which means from beginning, or 'latest' which means from end. (default: latest) |
| --partition | The partition to consume from. Consumption starts from the end of the partition unless '--offset' is specified. |
| --property | The properties to initialize the message formatter. Default properties include: print.timestamp=true\|false, print.key=true\|false, print.value=true\|false, key.separator=, line.separator=, key.deserializer=, value.deserializer=. Users can also pass in customized properties for their formatter; more specifically, users can pass in properties keyed with 'key.deserializer.' and 'value.deserializer.' prefixes to configure their deserializers. |
| --skip-message-on-error | If there is an error when processing a message, skip it instead of halt. |
| --timeout-ms | If specified, exit if no message is available for consumption for the specified interval. |
| --topic | The topic id to consume on. |
| --value-deserializer | |
| --whitelist | Regular expression specifying whitelist of topics to include for consumption. |
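For scripted tests, the console consumer can be made to stop on its own with --max-messages and --timeout-ms from the table above, instead of consuming forever. A minimal bash sketch; consume_some, BOOTSTRAP_SERVER and the CONSUMER override (for swapping in a stub) are hypothetical names, and the defaults of 10 messages and 10000 ms are arbitrary.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read at most N messages from the beginning of a topic,
# giving up after timeout_ms without new messages.
consume_some() {
  local topic="$1" max="${2:-10}" timeout_ms="${3:-10000}"
  "${CONSUMER:-kafka-console-consumer}" \
    --bootstrap-server "${BOOTSTRAP_SERVER:-cluster2-3:9092}" \
    --topic "$topic" --from-beginning \
    --max-messages "$max" --timeout-ms "$timeout_ms"
}
```

Usage: consume_some test 5 prints up to five messages from the test topic and then exits.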