说明:

1.教程中出现字体加粗和加红的说明需要大家仔细阅读,按照步骤进行安装,都是比较重要的细节,如果有同学忘记或者跳过说明的步骤,环境大家的过程中问题会非常的多.

2.本教程主要引导同学进行hadoop 2.x版本的安装,之所以还要进行hadoop2.x版本的安装,是我们现在市场中大部分很早的企业部署的是hadoop2.x,上课主要讲解hadoop2.x,经历的hadoop3.x版本的安装之后,需要同学们进行独立的按照步骤将hadoop2.x版本安装完成.

3.本教程与之前【三节点大数据环境安装教程】相同的是虚拟机,ip配置,免密登录配置,时间同步都是一样的,不同的是安装和配置发生了小小的变化,从本教程【4.安装hadoop2】节开始不一样,大体步骤和之前的一样,只有几个小步骤不一样,大家需要留意细节。

1.主机名和IP配置

我们按照【三节点大数据环境安装教程1】已经完成虚拟机的克隆,但是我们克隆出来的三台虚拟机的配置是一样的需要做简单的修改.

1.1 启动三台虚拟机

1.启动第一台虚拟机

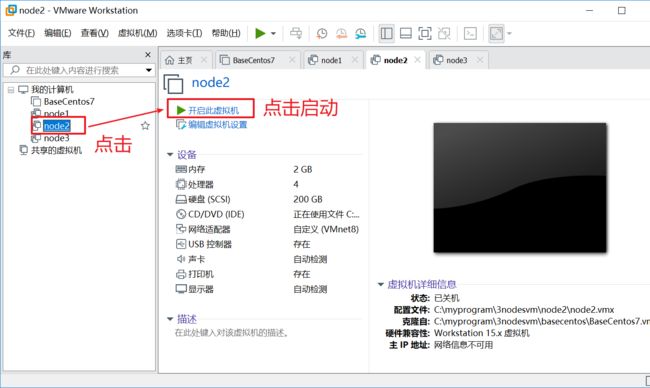

2.启动第二台虚拟机

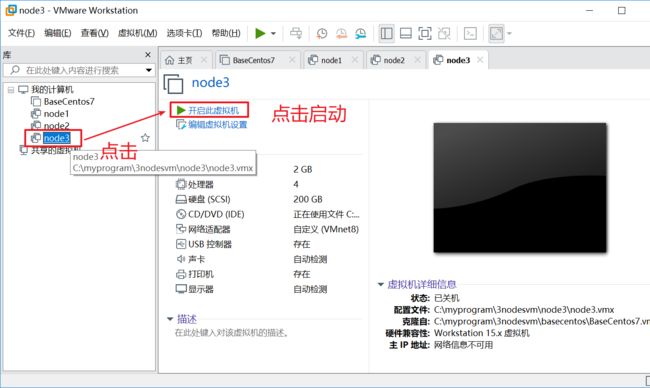

3.启动第三台虚拟机

1.2 配置三台虚拟机主机名

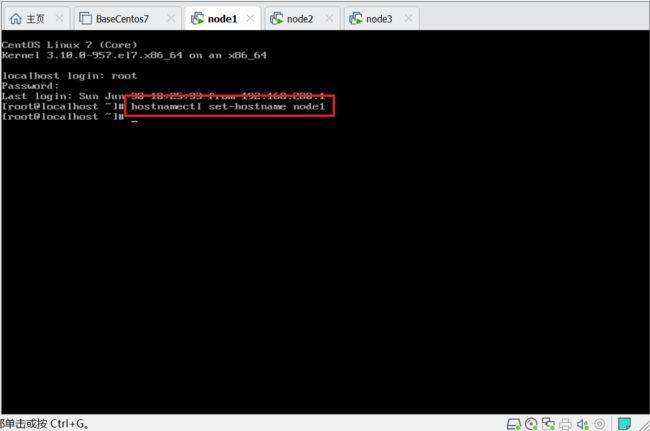

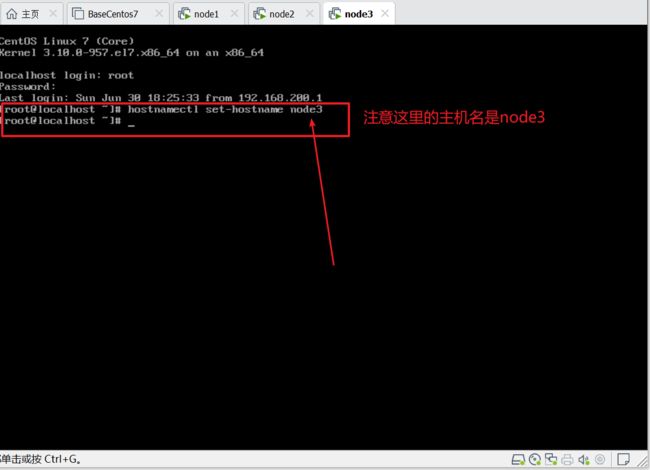

1. 首先使用root用户名和root密码分别登录三台虚拟机

2. 分别在三台虚拟机上执行命令:hostnamectl set-hostname nodeXXX(虚拟机名)

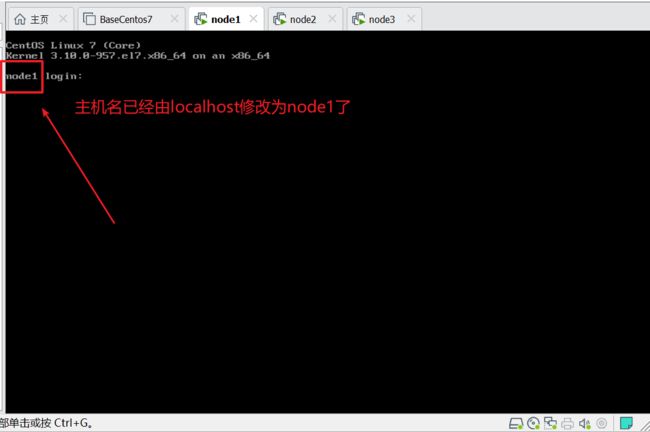

第一台机器上设置主机名node1

第二台机器上设置主机名node2

第三台机器上设置主机名node3

然后在三台机器上分别执行命令:logout

发现主机名已经修改成node1了,相同的操作大家在其他两台机器上执行下看看效果.

1.3 ip配置

三节点ip规划如下:

| 节点名称 | ip |

|---|---|

| node1 | 192.168.200.11 |

| node2 | 192.168.200.12 |

| node3 | 192.168.200.13 |

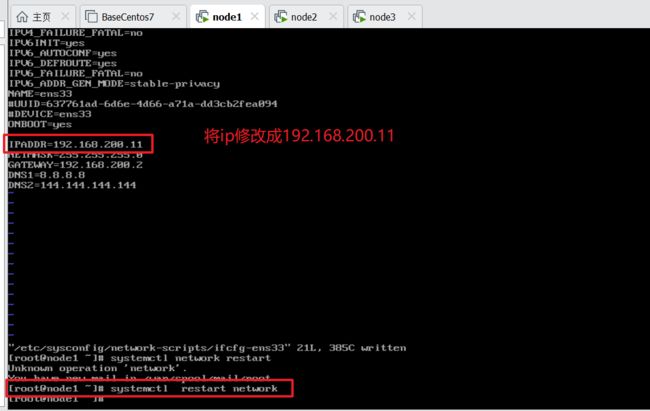

如下图,将node1上的ip修改为192.168.200.11,修改完后使用命令:systemctl restart network重启网卡

按照上面步骤一次修改node2的ip为:192.168.200.12,修改完后使用systemctl restart nework命令重启网卡,node3的ip修改方法一样,修改为192.168.200.13,修改完后重启网卡.

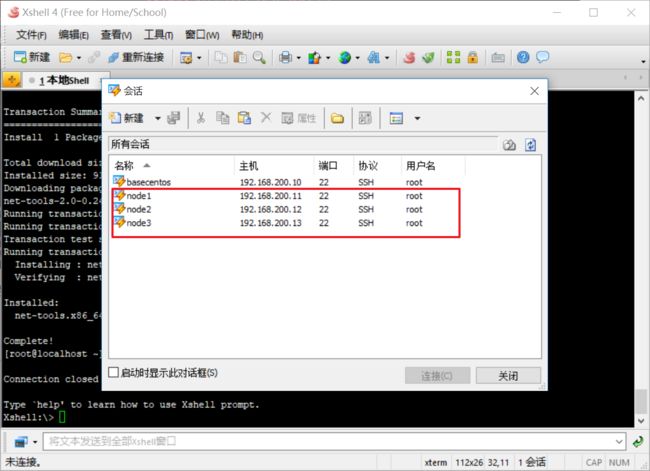

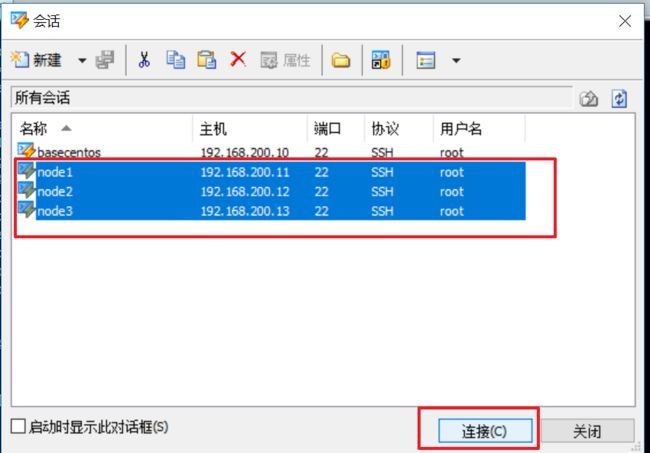

2.在xshell中创建三台虚拟机连接会话

3.root用户的免密登录配置

3.1 连接三台虚拟机

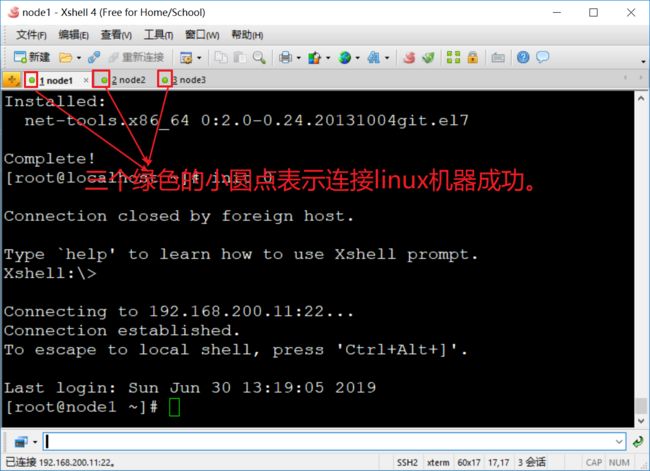

按住Ctrl一次选择三台虚拟机的会话连接,点击连接,这时会一次性打开三台虚拟机的连接会话

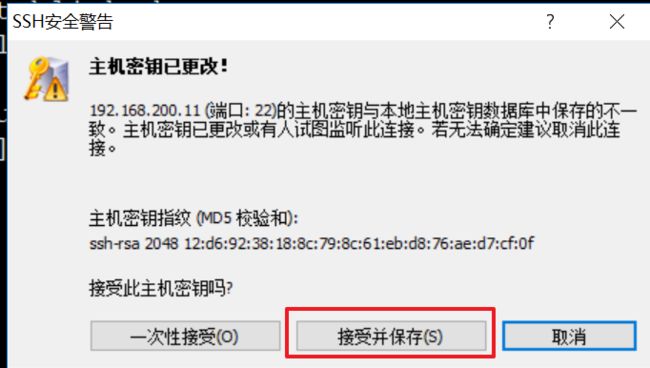

会出现三次安全警告,连续点击三次接受并保存即可.

3.2 生成公钥和私钥

使用此命令:ssh-keygen -t rsa 分别在三台机器中都执行一遍,这里只在node1上做演示,其他两台机器也需要执行此命令。

[root@node1 ~]# ssh-keygen -t rsa #<--回车

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa): #<--回车

#会在root用户的家目录下生成.ssh目录,此目录中会保存生成的公钥和私钥

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase): #<--回车

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:gpDw08iG9Tq+sGZ48TXirWTY17ajXhIea3drjy+pU3g root@node1

The key's randomart image is:

+---[RSA 2048]----+

|. . |

| * = |

|. O o |

| . + . |

| o . + S. |

| ..+..o*. E |

|o o+++*.=o.. |

|.=.+oo.=oo+o |

|+.. .oo.o=o+o |

+----[SHA256]-----+

You have new mail in /var/spool/mail/root

[root@node1 ~]#

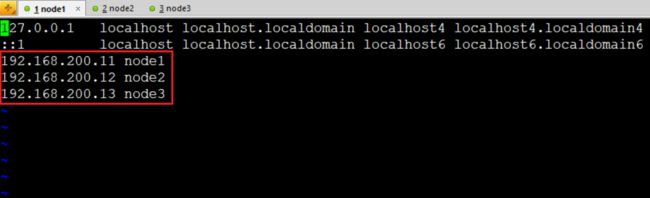

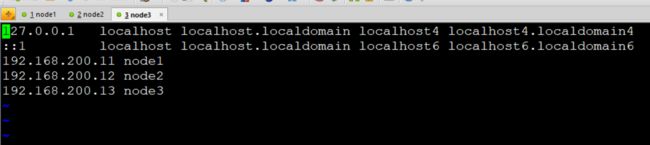

3.3 配置hosts文件

#hosts文件中配置三台机器ip和主机名的映射关系,其他两台机器按照相同的方式操作.

[root@node1 ~]# vi /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.200.11 node1

192.168.200.12 node2

192.168.200.13 node3

node1的配置:

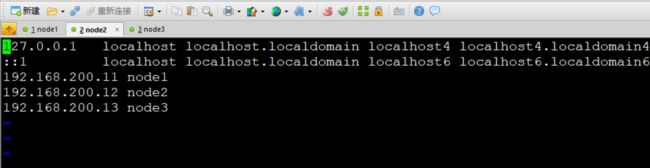

node2的配置:

node3的配置:

3.4 拷贝公钥文件

1. 将node1的公钥拷贝到node2,node3上

2. 将node2的公钥拷贝到node1,node3上

3. 将node3的公钥拷贝到node1,node2上

以下以node1为例执行秘钥复制命令:ssh-copy-id -i 主机名

#复制到node2上

[root@node1 ~]# ssh-copy-id -i node2

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host 'node2 (192.168.200.12)' can't be established.

ECDSA key fingerprint is SHA256:rJzUyoggUP/Zn9v5rvqKpWppnG9xZ4gBZuXqHWxPy5k.

ECDSA key fingerprint is MD5:f3:37:16:c4:bb:00:3e:59:ec:b3:37:23:1b:24:88:e6.

Are you sure you want to continue connecting (yes/no)? yes #询问是否要连接输入yes回车

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@node2's password: #输入root用户的密码root后回车

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node2'"

and check to make sure that only the key(s) you wanted were added.

#复制到node3上

[root@node1 ~]# ssh-copy-id -i node3

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host 'node3 (192.168.200.13)' can't be established.

ECDSA key fingerprint is SHA256:rJzUyoggUP/Zn9v5rvqKpWppnG9xZ4gBZuXqHWxPy5k.

ECDSA key fingerprint is MD5:f3:37:16:c4:bb:00:3e:59:ec:b3:37:23:1b:24:88:e6.

Are you sure you want to continue connecting (yes/no)? yes #询问是否要连接输入yes回车

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@node3's password: #输入root用户的密码root后回车

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node3'"

and check to make sure that only the key(s) you wanted were added.

[root@node1 ~]#

3.4 验证免密登录配置

此操作只在node1上操作,其他机器上大家在验证。

#使用ssh 命令登录node2

[root@node1 ~]# ssh node2

Last login: Sun Jun 30 13:56:53 2019 from node1

#登录成功后这里的命令提示符已经变为[root@node2 ~]#说明登录成功

[root@node2 ~]# logout #退出node2继续 验证登录node3

Connection to node2 closed.

#登录node3

[root@node1 ~]# ssh node3

Last login: Sun Jun 30 13:56:55 2019 from node1

#登录成功

[root@node3 ~]# logout

Connection to node3 closed.

You have new mail in /var/spool/mail/root

[root@node1 ~]#

3.5 添加本地认证公钥到认证文件中

#进入到root用户的家目录下

[root@node1 ~]# cd ~

[root@node1 ~]# cd .ssh/

#讲生成的公钥添加到认证文件中

[root@node1 .ssh]# cat id_rsa.pub >> authorized_keys

[root@node1 .ssh]#

4.安装hadoop2

4.1 创建hadoop用户组和hadoop用户

创建hadoop用户组和hadoop用户需要在三台机器上分别操作,这里以node1节点配置过程为例

#1.创建用户组hadoop

[root@node1 ~]# groupadd hadoop

#2.创建用户hadoop并添加到hadoop用户组中

[root@node1 ~]# useradd -g hadoop hadoop

#3.使用id命令查看hadoop用户组和hadoop用户创建是否成功

[root@node1 ~]# id hadoop

#用户uid 用户组id gid 用户组名

uid=1000(hadoop) gid=1000(hadoop) groups=1000(hadoop)

#设置hadoop用户密码为hadoop

[root@node1 ~]# passwd hadoop

Changing password for user hadoop.

New password: #输入hadoop后回车

BAD PASSWORD: The password is shorter than 8 characters

Retype new password: #再次输入hadoop后回车

passwd: all authentication tokens updated successfully.

[root@node1 ~]# chown -R hadoop:hadoop /home/hadoop/

[root@node1 ~]# chmod -R 755 /home/hadoop/

#把root用户的环境变量文件复制并覆盖hadoop用户下的.bash_profile

[root@node1 ~]# cp .bash_profile /home/hadoop/

重要的话必须说三次,三次,三次,再三次,看看下面的三行红色的字,不做,后面集群启动不了,让你后悔一万年,不懂照着做,啥都不要想,一个字就是干,一路操作猛如虎!

请参考 3.2生成公钥和私钥,3.4 验证免密码登录配置,3.5 添加本地认证公钥到认证文件中,在hadoop用户下,对hadoop用户做免密码登录配置

请参考 3.2生成公钥和私钥,3.4 验证免密码登录配置,3.5 添加本地认证公钥到认证文件中,在hadoop用户下,对hadoop用户做免密码登录配置

请参考 3.2生成公钥和私钥,3.4 验证免密码登录配置,3.5 添加本地认证公钥到认证文件中,在hadoop用户下,对hadoop用户做免密码登录配置

[hadoop@node1 ~] su - hadoop

[hadoop@node1 ~] source.bash_profile

#使用su - hadoop切换到hadoop用户下执行如下操作

[hadoop@node1 ~]# ssh-keygen -t rsa #<--回车

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa): #<--回车

#会在root用户的家目录下生成.ssh目录,此目录中会保存生成的公钥和私钥

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase): #<--回车

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:gpDw08iG9Tq+sGZ48TXirWTY17ajXhIea3drjy+pU3g root@node1

The key's randomart image is:

+---[RSA 2048]----+

|. . |

| * = |

|. O o |

| . + . |

| o . + S. |

| ..+..o*. E |

|o o+++*.=o.. |

|.=.+oo.=oo+o |

|+.. .oo.o=o+o |

+----[SHA256]-----+

You have new mail in /var/spool/mail/root

[hadoop@node1 ~]#

#修改.ssh目录权限

[hadoop@node1 ~]$ chmod -R 755 .ssh/

[hadoop@node1 ~]$ cd .ssh/

[hadoop@node1 .ssh]$ chmod 644 *

[hadoop@node1 .ssh]$ chmod 600 id_rsa

[hadoop@node1 .ssh]$ chmod 600 id_rsa.pub

[hadoop@node1 .ssh]$

4.2 配置hadoop

在一台机器上配置好后复制到其他机器上即可,这样保证三台机器的hadoop配置是一致的.

1.上传hadoop安装包,进行解压

#1.创建hadoop安装目录

[root@node1 ~]# mkdir -p /opt/bigdata

#2.解压hadoop-2.7.3.tar.gz

[root@node1 ~]# tar -xzvf hadoop-2.7.3.tar.gz -C /opt/bigdata/

2.配置hadoop环境变量

1.配置环境变量

[root@node1 ~]# vi .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

JAVA_HOME=/usr/java/jdk1.8.0_211-amd64

HADOOP_HOME=/opt/bigdata/hadoop-2.7.3

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export JAVA_HOME

export HADOOP_HOME

export PATH

~

:wq!

2.验证环境变量

#1.使环境变量生效

[root@node1 ~]# source .bash_profile

#2.显示hadoop的版本信息

[root@node1 ~]# hadoop version

#3.显示出hadoop版本信息表示安装和环境变量成功.

Hadoop 2.7.3

Source code repository https://github.com/apache/hadoop.git -r 1019dde65bcf12e05ef48ac71e84550d589e5d9a

Compiled by sunilg on 2019-01-29T01:39Z

Compiled with protoc 2.5.0

From source with checksum 64b8bdd4ca6e77cce75a93eb09ab2a9

This command was run using /opt/bigdata/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar

[root@node1 ~]#

hadoop用户下也需要按照root用户配置环境变量的方式操作一下

3.配置hadoop-env.sh,yarn-env.sh

修改hadoop-env.sh,yarn-env.sh,这个文件只需要配置JAVA_HOME的值即可,在文件中找到export JAVA_HOME字眼的位置,删除最前面的#

export JAVA_HOME=/usr/java/jdk1.8.0_211-amd64

hadoop-env.sh文件配置

[root@node1 ~]# cd /opt/bigdata/hadoop-2.7.3/etc/hadoop/

You have new mail in /var/spool/mail/root

[root@node1 hadoop]# pwd

/opt/bigdata/hadoop-2.7.3/etc/hadoop

[root@node1 hadoop]# vi hadoop-env.sh

yarn-env.sh文件配置

[root@node1 ~]# cd /opt/bigdata/hadoop-2.7.3/etc/hadoop/

You have new mail in /var/spool/mail/root

[root@node1 hadoop]# pwd

/opt/bigdata/hadoop-2.7.3/etc/hadoop

[root@node1 hadoop]# vi yarn-env.sh

4.配置core-site.xml

切换到cd /opt/bigdata/hadoop-2.7.3/etc/hadoop/目录下

[root@node1 ~]# cd /opt/bigdata/hadoop-2.7.3/etc/hadoop/

fs.defaultFS

hdfs://node1:9000

hadoop.tmp.dir

/opt/bigdata/hadoop-2.7.3/tmpdata

5.配置hdfs-site.xml

配置/opt/bigdata/hadoop-2.7.3/etc/hadoop/目录下的hdfs-site.xml

dfs.namenode.name.dir

/opt/bigdata/hadoop-2.7.3/hadoop/dfs/name/

dfs.datanode.data.dir

/opt/bigdata/hadoop-2.7.3/hadoop/hdfs/data/

dfs.replication

3

6.配置mapred-site.xml

在/opt/bigdata/hadoop-2.7.3/etc/hadoop路径下mapred-site.xml本身不存在的,只有一个mapred-site.xml.template模板文件,这时需要把mapred-site.xml.template模板文件复制一份配置文件出来作为配置文件,不建议直接在模板文件上修改,如果修改失败就会破坏模板文件,复制一份新的文件出来修改即可,失败了可以再次从模板文件复制一份.(注意这里和hadoop3不同的是mapred-site.xml文件本身已经存在)

#从模板文件复制一份配置文件处理

[root@node1 hadoop]# cp mapred-site.xml.template mapred-site.xml

[root@node1 hadoop]#

配置/opt/bigdata/hadoop-2.7.3/etc/hadoop/目录下的mapred-site.xml

mapreduce.framework.name

yarn

7.配置yarn-site.xml

配置/opt/bigdata/hadoop-2.7.3/etc/hadoop/目录下的yarn-site.xml

yarn.nodemanager.aux-services

mapreduce_shuffle

yarn.resourcemanager.address

node1:18040

yarn.resourcemanager.scheduler.address

node1:18030

yarn.resourcemanager.resource-tracker.address

node1:18025

yarn.resourcemanager.admin.address

node1:18141

yarn.resourcemanager.webapp.address

node1:18088

8.编辑slaves

此文件用于配置集群有多少个数据节点,我们把node2,node3作为数据节点,node1作为集群管理节点.

配置/opt/bigdata/hadoop-2.7.3/etc/hadoop/目录下的slaves

[root@node1 hadoop]# vi slaves

#将localhost这一行删除掉

node2

node3

~

4.3 远程复制hadoop到集群机器

#1.进入到root用户家目录下

[root@node1 hadoop]# cd ~

#2.使用scp远程拷贝命令将root用户的环境变量配置文件复制到node2

[root@node1 ~]# scp .bash_profile root@node2:~

.bash_profile 100% 338 566.5KB/s 00:00

#3.使用scp远程拷贝命令将root用户的环境变量配置文件复制到node3

[root@node1 ~]# scp .bash_profile root@node3:~

.bash_profile 100% 338 212.6KB/s 00:00

[root@node1 ~]#

#4.进入到hadoop的share目录下

[root@node1 ~]# cd /opt/bigdata/hadoop-2.7.3/share/

You have new mail in /var/spool/mail/root

[root@node1 share]# ll

total 0

drwxr-xr-x 3 1001 1002 20 Jan 29 12:05 doc

drwxr-xr-x 8 1001 1002 88 Jan 29 11:36 hadoop

#5.删除doc目录,这个目录存放的是用户手册,比较大,等会儿下面进行远程复制的时候时间比较长,删除后节约复制时间

[root@node1 share]# rm -rf doc/

[root@node1 share]# cd ~

You have new mail in /var/spool/mail/root

[root@node1 ~]# scp -r /opt root@node2:/

[root@node1 ~]# scp -r /opt root@node3:/

4.4 使集群所有机器环境变量生效

在node2,node3的root用户家目录下使环境变量生效

node2节点如下操作:

[root@node2 hadoop-2.7.3]# cd ~

[root@node2 ~]# source .bash_profile

[root@node2 ~]# hadoop version

Hadoop 2.7.3

Source code repository https://github.com/apache/hadoop.git -r 1019dde65bcf12e05ef48ac71e84550d589e5d9a

Compiled by sunilg on 2019-01-29T01:39Z

Compiled with protoc 2.5.0

From source with checksum 64b8bdd4ca6e77cce75a93eb09ab2a9

This command was run using /opt/bigdata/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar

[root@node2 ~]#

node3节点如下操作:

[root@node3 bin]# cd ~

[root@node3 ~]# source .bash_profile

[root@node3 ~]# hadoop version

Hadoop 2.7.3

Source code repository https://github.com/apache/hadoop.git -r 1019dde65bcf12e05ef48ac71e84550d589e5d9a

Compiled by sunilg on 2019-01-29T01:39Z

Compiled with protoc 2.5.0

From source with checksum 64b8bdd4ca6e77cce75a93eb09ab2a9

This command was run using /opt/bigdata/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar

[root@node3 ~]#

5.修改hadoop安装目录的权限

node2,node3也需要进行如下操作

#1.修改目录所属用户和组为hadoop:hadoop

[root@node1 ~]# chown -R hadoop:hadoop /opt/

You have new mail in /var/spool/mail/root

You have new mail in /var/spool/mail/root

#2.修改目录所属用户和组的权限值为755

[root@node1 ~]# chmod -R 755 /opt/

[root@node1 ~]# chmod -R g+w /opt/

[root@node1 ~]# chmod -R o+w /opt/

[root@node1 ~]#

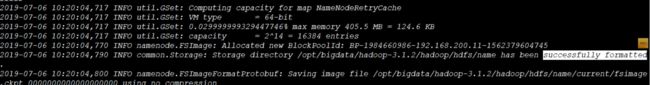

6.格式化hadoop

#切换

[root@node1 ~]# su - hadoop

[hadoop@node1 hadoop]$ hdfs namenode -format

2019-06-30 16:11:35,914 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = node1/192.168.200.11

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.3

#此处省略部分日志

2019-06-30 16:11:36,636 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at node1/192.168.200.11

************************************************************/

[hadoop@node1 hadoop]$

格式化成功提示信息,如下图:

7.启动集群

[hadoop@node1 ~]$ start-all.sh

WARNING: Attempting to start all Apache Hadoop daemons as hadoop in 10 seconds.

WARNING: This is not a recommended production deployment configuration.

WARNING: Use CTRL-C to abort.

Starting namenodes on [node1]

Starting datanodes

Starting secondary namenodes [node1]

Starting resourcemanager

Starting nodemanagers

#使用jps显示java进程

[hadoop@node1 ~]$ jps

40852 ResourceManager

40294 NameNode

40615 SecondaryNameNode

41164 Jps

[hadoop@node1 ~]$

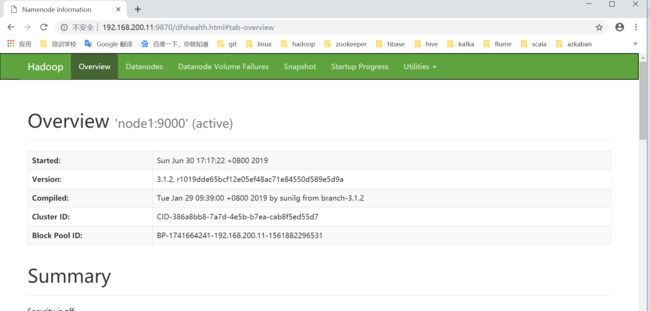

在浏览器地址栏中输入:http://192.168.200.11:9870查看namenode的web界面.

8.运行mapreduce程序

mapreduce程序(行话程为词频统计程序(中文名),英文名:wordcount),就是统计一个文件中每一个单词出现的次数,也是我们学习大数据技术最基础,最简单的程序,入门必须要会要懂的第一个程序,其地位和java,php,c#,javascript等编程语言的第一个入门程序HelloWorld(在控制台打印“hello world!”等字样)程序一样,尤为重要,不同的是它们是单机应用程序,我们接下来要运行的程序(wordcount)是一个分布式运行的程序,是在一个大数据集群中运行的程序。wordcount程序能够正常的运行成功,输入结果,意味着我们的大数据环境正确的安装和配置成功。好,简单的先介绍到这里,接下来让我们爽一把吧。

#1.使用hdfs dfs -ls / 命令浏览hdfs文件系统,集群刚开始搭建好,由于没有任何目录所以什么都不显示.

[hadoop@node1 ~]$ hdfs dfs -ls /

#2.创建测试目录

[hadoop@node1 ~]$ hdfs dfs -mkdir /test

#3.在此使用hdfs dfs -ls 发现我们刚才创建的test目录

[hadoop@node1 ~]$ hdfs dfs -ls /

Found 1 items

drwxr-xr-x - hadoop supergroup 0 2019-06-30 17:23 /test

#4.使用touch命令在linux本地目录创建一个words文件

[hadoop@node1 ~]$ touch words

#5.文件中输入如下内容

[hadoop@node1 ~]$ vi words

i love you

are you ok

#6.将创建的本地words文件上传到hdfs的test目录下

[hadoop@node1 ~]$ hdfs dfs -put words /test

#7.查看上传的文件是否成功

[hadoop@node1 ~]$ hdfs dfs -ls -r /test

Found 1 items

-rw-r--r-- 3 hadoop supergroup 23 2019-06-30 17:28 /test/words

#/test/words 是hdfs上的文件存储路径 /test/output是mapreduce程序的输出路径,这个输出路径是不能已经存在的路径,mapreduce程序运行的过程中会自动创建输出路径,数据路径存在的话会报错,这里需要同学注意下.

[hadoop@node1 ~]$ hadoop jar /opt/bigdata/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar wordcount /test/words /test/output

19/07/05 21:03:21 INFO client.RMProxy: Connecting to ResourceManager at node1/192.168.200.11:18040

19/07/05 21:03:25 INFO input.FileInputFormat: Total input paths to process : 1

19/07/05 21:03:25 INFO mapreduce.JobSubmitter: number of splits:1

19/07/05 21:03:26 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1562330745510_0001

19/07/05 21:03:28 INFO impl.YarnClientImpl: Submitted application application_1562330745510_0001

19/07/05 21:03:29 INFO mapreduce.Job: The url to track the job: http://node1:18088/proxy/application_1562330745510_0001/

19/07/05 21:03:29 INFO mapreduce.Job: Running job: job_1562330745510_0001

19/07/05 21:03:57 INFO mapreduce.Job: Job job_1562330745510_0001 running in uber mode : false

19/07/05 21:03:57 INFO mapreduce.Job: map 0% reduce 0%

19/07/05 21:04:15 INFO mapreduce.Job: map 100% reduce 0%

19/07/05 21:04:29 INFO mapreduce.Job: map 100% reduce 100%

19/07/05 21:04:30 INFO mapreduce.Job: Job job_1562330745510_0001 completed successfully

19/07/05 21:04:31 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=54

FILE: Number of bytes written=237145

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=116

HDFS: Number of bytes written=28

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=15210

Total time spent by all reduces in occupied slots (ms)=11065

Total time spent by all map tasks (ms)=15210

Total time spent by all reduce tasks (ms)=11065

Total vcore-milliseconds taken by all map tasks=15210

Total vcore-milliseconds taken by all reduce tasks=11065

Total megabyte-milliseconds taken by all map tasks=15575040

Total megabyte-milliseconds taken by all reduce tasks=11330560

Map-Reduce Framework

Map input records=2

Map output records=6

Map output bytes=46

Map output materialized bytes=54

Input split bytes=93

Combine input records=6

Combine output records=5

Reduce input groups=5

Reduce shuffle bytes=54

Reduce input records=5

Reduce output records=5

Spilled Records=10

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=1501

CPU time spent (ms)=9710

Physical memory (bytes) snapshot=443453440

Virtual memory (bytes) snapshot=4226617344

Total committed heap usage (bytes)=282591232

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=23

File Output Format Counters

Bytes Written=28

[hadoop@node1 ~]$ hdfs dfs -ls /test/output

Found 2 items

-rw-r--r-- 3 hadoop supergroup 0 2019-07-05 21:04 /test/output/_SUCCESS

-rw-r--r-- 3 hadoop supergroup 28 2019-07-05 21:04 /test/output/part-r-00000

[hadoop@node1 ~]$ hdfs dfs -text /test/output/part-r-00000

are 1

i 1

love 1

ok 1

you 2

[hadoop@node1 ~]$

9.停止集群

[hadoop@node1 ~]$ stop-all.sh

至此三节点的hadoop集群环境搭建完成,谢谢大家能够有耐心的看完!