Ceph集群报错解决方案笔记

当前Ceph版本和CentOS版本:

[root@ceph1 ceph]# ceph -v

ceph version 13.2.2 (02899bfda814146b021136e9d8e80eba494e1126) mimic (stable)

[root@ceph1 ceph]# cat /etc/redhat-release

CentOS Linux release 7.5.1804 (Core)

1.节点间配置文件内容不一致错误

输入ceph-deploy mon create-initial命令获取密钥key,会在当前目录(如我的是~/etc/ceph/)下生成几个key,但报错如下。意思是:就是配置失败的两个结点的配置文件的内容于当前节点不一致,提示使用--overwrite-conf参数去覆盖不一致的配置文件。

[root@ceph1 ceph]# ceph-deploy mon create-initial

...

[ceph2][DEBUG ] remote hostname: ceph2

[ceph2][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.mon][ERROR ] RuntimeError: config file /etc/ceph/ceph.conf exists with different content; use --overwrite-conf to overwrite

[ceph_deploy][ERROR ] GenericError: Failed to create 2 monitors

...

输入命令如下(此处我共配置了三个结点ceph1~3):

[root@ceph1 ceph]# ceph-deploy --overwrite-conf mon create ceph{3,1,2}

...

[ceph2][DEBUG ] remote hostname: ceph2

[ceph2][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph2][DEBUG ] create the mon path if it does not exist

[ceph2][DEBUG ] checking for done path: /var/lib/ceph/mon/ceph-ceph2/done

...

之后配置成功,可继续进行初始化磁盘操作。

2.too few PGs per OSD (21 < min 30)警告

[root@ceph1 ceph]# ceph -s

cluster:

id: 8e2248e4-3bb0-4b62-ba93-f597b1a3bd40

health: HEALTH_WARN

too few PGs per OSD (21 < min 30)

services:

mon: 3 daemons, quorum ceph2,ceph1,ceph3

mgr: ceph2(active), standbys: ceph1, ceph3

osd: 3 osds: 3 up, 3 in

rgw: 1 daemon active

data:

pools: 4 pools, 32 pgs

objects: 219 objects, 1.1 KiB

usage: 3.0 GiB used, 245 GiB / 248 GiB avail

pgs: 32 active+clean

- 从上面集群状态信息可查,每个osd上的pg数量=21<最小的数目30个。pgs为32,因为我之前设置的是2副本的配置,所以当有3个osd的时候,每个osd上均分了

32÷3*2=21个pgs,也就是出现了如上的错误 小于最小配置30个。 - 集群这种状态如果进行数据的存储和操作,会发现集群卡死,无法响应io,同时会导致大面积的osd down。

解决办法:增加pg数

因为我的一个pool有8个pgs,所以我需要增加两个pool才能满足osd上的pg数量=48÷3*2=32>最小的数目30。

[root@ceph1 ceph]# ceph osd pool create mytest 8

pool 'mytest' created

[root@ceph1 ceph]# ceph osd pool create mytest1 8

pool 'mytest1' created

[root@ceph1 ceph]# ceph -s

cluster:

id: 8e2248e4-3bb0-4b62-ba93-f597b1a3bd40

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph2,ceph1,ceph3

mgr: ceph2(active), standbys: ceph1, ceph3

osd: 3 osds: 3 up, 3 in

rgw: 1 daemon active

data:

pools: 6 pools, 48 pgs

objects: 219 objects, 1.1 KiB

usage: 3.0 GiB used, 245 GiB / 248 GiB avail

pgs: 48 active+clean

集群健康状态显示正常。

3.集群状态是HEALTH_WARN application not enabled on 1 pool(s)

如果此时,查看集群状态是HEALTH_WARN application not enabled on 1 pool(s):

[root@ceph1 ceph]# ceph -s

cluster:

id: 13430f9a-ce0d-4d17-a215-272890f47f28

health: HEALTH_WARN

application not enabled on 1 pool(s)

[root@ceph1 ceph]# ceph health detail

HEALTH_WARN application not enabled on 1 pool(s)

POOL_APP_NOT_ENABLED application not enabled on 1 pool(s)

application not enabled on pool 'mytest'

use 'ceph osd pool application enable ' , where <app-name> is 'cephfs', 'rbd', 'rgw', or freeform for custom applications.

运行ceph health detail命令发现是新加入的存储池mytest没有被应用程序标记,因为之前添加的是RGW实例,所以此处依提示将mytest被rgw标记即可:

[root@ceph1 ceph]# ceph osd pool application enable mytest rgw

enabled application 'rgw' on pool 'mytest'

再次查看集群状态发现恢复正常

[root@ceph1 ceph]# ceph health

HEALTH_OK

4.删除存储池报错

以下以删除mytest存储池为例,运行ceph osd pool rm mytest命令报错,显示需要在原命令的pool名字后再写一遍该pool名字并最后加上--yes-i-really-really-mean-it参数

[root@ceph1 ceph]# ceph osd pool rm mytest

Error EPERM: WARNING: this will *PERMANENTLY DESTROY* all data stored in pool mytest. If you are *ABSOLUTELY CERTAIN* that is what you want, pass the pool name *twice*, followed by --yes-i-really-really-mean-it.

按照提示要求复写pool名字后加上提示参数如下,继续报错:

[root@ceph1 ceph]# ceph osd pool rm mytest mytest --yes-i-really-really-mean-it

Error EPERM: pool deletion is disabled; you must first set the

mon_allow_pool_delete config option to true before you can destroy a pool

错误信息显示,删除存储池操作被禁止,应该在删除前现在ceph.conf配置文件中增加mon_allow_pool_delete选项并设置为true。所以分别登录到每一个节点并修改每一个节点的配置文件。操作如下:

[root@ceph1 ceph]# vi ceph.conf

[root@ceph1 ceph]# systemctl restart ceph-mon.target

在ceph.conf配置文件底部加入如下参数并设置为true,保存退出后使用systemctl restart ceph-mon.target命令重启服务。

[mon]

mon allow pool delete = true

其余节点操作同理。

[root@ceph2 ceph]# vi ceph.conf

[root@ceph2 ceph]# systemctl restart ceph-mon.target

[root@ceph3 ceph]# vi ceph.conf

[root@ceph3 ceph]# systemctl restart ceph-mon.target

再次删除,即成功删除mytest存储池。

[root@ceph1 ceph]# ceph osd pool rm mytest mytest --yes-i-really-really-mean-it

pool 'mytest' removed

5.集群节点宕机后恢复节点排错

笔者将ceph集群中的三个节点分别关机并重启后,查看ceph集群状态如下:

[root@ceph1 ~]# ceph -s

cluster:

id: 13430f9a-ce0d-4d17-a215-272890f47f28

health: HEALTH_WARN

1 MDSs report slow metadata IOs

324/702 objects misplaced (46.154%)

Reduced data availability: 126 pgs inactive

Degraded data redundancy: 144/702 objects degraded (20.513%), 3 pgs degraded, 126 pgs undersized

services:

mon: 3 daemons, quorum ceph2,ceph1,ceph3

mgr: ceph1(active), standbys: ceph2, ceph3

mds: cephfs-1/1/1 up {0=ceph1=up:creating}

osd: 3 osds: 3 up, 3 in; 162 remapped pgs

data:

pools: 8 pools, 288 pgs

objects: 234 objects, 2.8 KiB

usage: 3.0 GiB used, 245 GiB / 248 GiB avail

pgs: 43.750% pgs not active

144/702 objects degraded (20.513%)

324/702 objects misplaced (46.154%)

162 active+clean+remapped

123 undersized+peered

3 undersized+degraded+peered

查看

[root@ceph1 ~]# ceph health detail

HEALTH_WARN 1 MDSs report slow metadata IOs; 324/702 objects misplaced (46.154%); Reduced data availability: 126 pgs inactive; Degraded data redundancy: 144/702 objects degraded (20.513%), 3 pgs degraded, 126 pgs undersized

MDS_SLOW_METADATA_IO 1 MDSs report slow metadata IOs

mdsceph1(mds.0): 9 slow metadata IOs are blocked > 30 secs, oldest blocked for 42075 secs

OBJECT_MISPLACED 324/702 objects misplaced (46.154%)

PG_AVAILABILITY Reduced data availability: 126 pgs inactive

pg 8.28 is stuck inactive for 42240.369934, current state undersized+peered, last acting [0]

pg 8.2a is stuck inactive for 45566.934835, current state undersized+peered, last acting [0]

pg 8.2d is stuck inactive for 42240.371314, current state undersized+peered, last acting [0]

pg 8.2f is stuck inactive for 45566.913284, current state undersized+peered, last acting [0]

pg 8.32 is stuck inactive for 42240.354304, current state undersized+peered, last acting [0]

....

pg 8.28 is stuck undersized for 42065.616897, current state undersized+peered, last acting [0]

pg 8.2a is stuck undersized for 42065.613246, current state undersized+peered, last acting [0]

pg 8.2d is stuck undersized for 42065.951760, current state undersized+peered, last acting [0]

pg 8.2f is stuck undersized for 42065.610464, current state undersized+peered, last acting [0]

pg 8.32 is stuck undersized for 42065.959081, current state undersized+peered, last acting [0]

....

可见在数据修复中, 出现了inactive和undersized的值, 则是不正常的现象

解决方法:

①处理inactive的pg:

重启一下osd服务即可

[root@ceph1 ~]# systemctl restart ceph-osd.target

继续查看集群状态发现,inactive值的pg已经恢复正常,此时还剩undersized的pg。

[root@ceph1 ~]# ceph -s

cluster:

id: 13430f9a-ce0d-4d17-a215-272890f47f28

health: HEALTH_WARN

1 filesystem is degraded

241/723 objects misplaced (33.333%)

Degraded data redundancy: 59 pgs undersized

services:

mon: 3 daemons, quorum ceph2,ceph1,ceph3

mgr: ceph1(active), standbys: ceph2, ceph3

mds: cephfs-1/1/1 up {0=ceph1=up:rejoin}

osd: 3 osds: 3 up, 3 in; 229 remapped pgs

rgw: 1 daemon active

data:

pools: 8 pools, 288 pgs

objects: 241 objects, 3.4 KiB

usage: 3.0 GiB used, 245 GiB / 248 GiB avail

pgs: 241/723 objects misplaced (33.333%)

224 active+clean+remapped

59 active+undersized

5 active+clean

io:

client: 1.2 KiB/s rd, 1 op/s rd, 0 op/s wr

②处理undersized的pg:

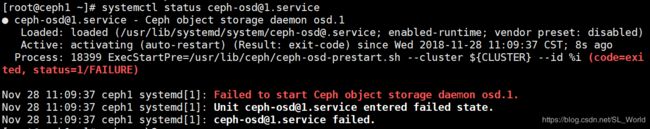

![]()

学会出问题先查看健康状态细节,仔细分析发现虽然设定的备份数量是3,但是PG 12.x却只有两个拷贝,分别存放在OSD 0~2的某两个上。

[root@ceph1 ~]# ceph health detail

HEALTH_WARN 241/723 objects misplaced (33.333%); Degraded data redundancy: 59 pgs undersized

OBJECT_MISPLACED 241/723 objects misplaced (33.333%)

PG_DEGRADED Degraded data redundancy: 59 pgs undersized

pg 12.8 is stuck undersized for 1910.001993, current state active+undersized, last acting [2,0]

pg 12.9 is stuck undersized for 1909.989334, current state active+undersized, last acting [2,0]

pg 12.a is stuck undersized for 1909.995807, current state active+undersized, last acting [0,2]

pg 12.b is stuck undersized for 1910.009596, current state active+undersized, last acting [1,0]

pg 12.c is stuck undersized for 1910.010185, current state active+undersized, last acting [0,2]

pg 12.d is stuck undersized for 1910.001526, current state active+undersized, last acting [1,0]

pg 12.e is stuck undersized for 1909.984982, current state active+undersized, last acting [2,0]

pg 12.f is stuck undersized for 1910.010640, current state active+undersized, last acting [2,0]

进一步查看集群osd状态树,发现ceph2和cepn3宕机再恢复后,osd.1 和osd.2进程已不在ceph2和cepn3上。

[root@ceph1 ~]# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.24239 root default

-9 0.16159 host centos7evcloud

1 hdd 0.08080 osd.1 up 1.00000 1.00000

2 hdd 0.08080 osd.2 up 1.00000 1.00000

-3 0.08080 host ceph1

0 hdd 0.08080 osd.0 up 1.00000 1.00000

-5 0 host ceph2

-7 0 host ceph3

解决方法:

分别进入到ceph2和ceph3节点中重启osd.1 和osd.2服务,将这两个服务重新映射到ceph2和ceph3节点中。

[root@ceph1 ~]# ssh ceph2

[root@ceph2 ~]# systemctl restart ceph-osd@1.service

[root@ceph2 ~]# ssh ceph3

[root@ceph3 ~]# systemctl restart ceph-osd@2.service

最后查看集群osd状态树发现这两个服务重新映射到ceph2和ceph3节点中。

[root@ceph3 ~]# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.24239 root default

-9 0 host centos7evcloud

-3 0.08080 host ceph1

0 hdd 0.08080 osd.0 up 1.00000 1.00000

-5 0.08080 host ceph2

1 hdd 0.08080 osd.1 up 1.00000 1.00000

-7 0.08080 host ceph3

2 hdd 0.08080 osd.2 up 1.00000 1.00000

集群状态也显示了久违的HEALTH_OK。

[root@ceph3 ~]# ceph -s

cluster:

id: 13430f9a-ce0d-4d17-a215-272890f47f28

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph2,ceph1,ceph3

mgr: ceph1(active), standbys: ceph2, ceph3

mds: cephfs-1/1/1 up {0=ceph1=up:active}

osd: 3 osds: 3 up, 3 in

rgw: 1 daemon active

data:

pools: 8 pools, 288 pgs

objects: 241 objects, 3.6 KiB

usage: 3.1 GiB used, 245 GiB / 248 GiB avail

pgs: 288 active+clean

6.卸载CephFS后再挂载时报错

挂载命令如下:

mount -t ceph 10.0.86.246:6789,10.0.86.221:6789,10.0.86.253:6789:/ /mnt/mycephfs/ -o name=admin,secret=AQBAI/JbROMoMRAAbgRshBRLLq953AVowLgJPw==

卸载CephFS后再挂载时报错:mount error(2): No such file or directory

说明:首先检查/mnt/mycephfs/目录是否存在并可访问,我的是存在的但依然报错No such file or directory。但是我重启了一下osd服务意外好了,可以正常挂载CephFS。

[root@ceph1 ~]# systemctl restart ceph-osd.target

[root@ceph1 ~]# mount -t ceph 10.0.86.246:6789,10.0.86.221:6789,10.0.86.253:6789:/ /mnt/mycephfs/ -o name=admin,secret=AQBAI/JbROMoMRAAbgRshBRLLq953AVowLgJPw==

可见挂载成功~!

[root@ceph1 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/vda2 48G 7.5G 41G 16% /

devtmpfs 1.9G 0 1.9G 0% /dev

tmpfs 2.0G 8.0K 2.0G 1% /dev/shm

tmpfs 2.0G 17M 2.0G 1% /run

tmpfs 2.0G 0 2.0G 0% /sys/fs/cgroup

tmpfs 2.0G 24K 2.0G 1% /var/lib/ceph/osd/ceph-0

tmpfs 396M 0 396M 0% /run/user/0

10.0.86.246:6789,10.0.86.221:6789,10.0.86.253:6789:/ 249G 3.1G 246G 2% /mnt/mycephfs

积累中。。。

=========================================================================

总结:

查看集群状态发现报错或警告后,往往通过ceph health detail命令可以查看到系统给出的处理建议。通过这些建议一般可以处理大多数集群出现的问题。