Improving Handwritten Digit Recognition: A Fully Connected Network

1. Forward propagation: mnist_forward.py

import tensorflow as tf

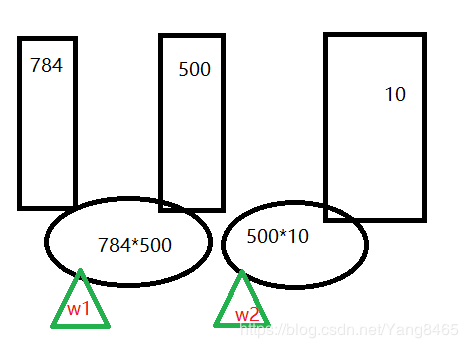

INPUT_NODE = 784    # 28 * 28 pixels per image
OUTPUT_NODE = 10    # one output per digit, 0-9
LAYER1_NODE = 500   # number of hidden-layer nodes

# Create a weight variable
def get_weight(shape, regularizer):
    w = tf.Variable(tf.truncated_normal(shape, stddev=0.1))
    # Add an L2 regularization term for this weight to the 'losses' collection
    if regularizer is not None:
        tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w

# Create a bias variable, initialized to zero
def get_bias(shape):
    b = tf.Variable(tf.zeros(shape))
    return b

# Forward pass: takes the input x and the regularization coefficient
def forward(x, regularizer):
    w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)
    b1 = get_bias([LAYER1_NODE])
    y1 = tf.nn.relu(tf.matmul(x, w1) + b1)

    w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)
    b2 = get_bias([OUTPUT_NODE])
    y = tf.matmul(y1, w2) + b2  # raw logits; softmax is applied inside the loss
    return y

The input x has N rows and 784 columns (one flattened 28 * 28 image per row).
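To make the shapes concrete, here is a minimal sketch of the same two-layer forward pass in plain Python, without TensorFlow. The tiny layer sizes, the helper names (`dense`, `relu`, `random_matrix`) and the use of `random.gauss` in place of `tf.truncated_normal` are assumptions made for illustration only.

```python
import random

def dense(rows, w, b):
    """Compute rows @ w + b, where rows is a list of input vectors."""
    return [
        [sum(x_k * w[k][j] for k, x_k in enumerate(row)) + b[j]
         for j in range(len(b))]
        for row in rows
    ]

def relu(rows):
    """Element-wise max(0, v)."""
    return [[max(0.0, v) for v in row] for row in rows]

def forward(x, w1, b1, w2, b2):
    """Hidden layer with ReLU, then a linear output layer (raw logits)."""
    y1 = relu(dense(x, w1, b1))
    return dense(y1, w2, b2)

def random_matrix(n_rows, n_cols, rng):
    """Stand-in initializer: Gaussian with stddev 0.1 (not truncated)."""
    return [[rng.gauss(0.0, 0.1) for _ in range(n_cols)] for _ in range(n_rows)]

# Illustrative sizes only; the listing uses 784, 500 and 10.
rng = random.Random(0)
N, INPUT, HIDDEN, OUTPUT = 3, 8, 5, 2
x = random_matrix(N, INPUT, rng)
logits = forward(
    x,
    random_matrix(INPUT, HIDDEN, rng), [0.0] * HIDDEN,
    random_matrix(HIDDEN, OUTPUT, rng), [0.0] * OUTPUT,
)
print(len(logits), len(logits[0]))  # prints: 3 2
```

An input of N rows therefore yields N rows of logits, one column per output class.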

2. Backpropagation: mnist_backward.py

import os

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

BATCH_SIZE = 200
LEARNING_RATE_BASE = 0.1
LEARNING_RATE_DECAY = 0.99
REGULARIZER = 0.0001
STEPS = 50000
MOVING_AVERAGE_DECAY = 0.99
MODEL_SAVE_PATH = './model/'
MODEL_NAME = 'mnist_model'

def backward(mnist):
    x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
    y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
    y = mnist_forward.forward(x, REGULARIZER)
    global_step = tf.Variable(0, trainable=False)

    # Loss: mean softmax cross-entropy plus the L2 terms collected in 'losses'
    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    loss = cem + tf.add_n(tf.get_collection('losses'))

    # Learning rate is multiplied by LEARNING_RATE_DECAY once per epoch
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        mnist.train.num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True
    )
    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step)

    # Maintain moving averages of all trainable variables
    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    ema_op = ema.apply(tf.trainable_variables())
    with tf.control_dependencies([train_step, ema_op]):
        train_op = tf.no_op(name='train')

    saver = tf.train.Saver()

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())

        # Uncomment to resume training from the latest checkpoint
        # ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
        # if ckpt and ckpt.model_checkpoint_path:
        #     saver.restore(sess, ckpt.model_checkpoint_path)

        for i in range(STEPS):
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
            if i % 1000 == 0:
                print('After %d training steps, loss on training batch is %g.' % (step, loss_value))
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)
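The staircase schedule produced by `tf.train.exponential_decay` can be reproduced in a few lines of plain Python. This is only a sketch of the documented formula, `base_rate * decay_rate ** floor(global_step / decay_steps)`. The value 55000 for `mnist.train.num_examples` is an assumption based on the default train/validation split of `read_data_sets`.

```python
import math

def exponential_decay(base_rate, global_step, decay_steps, decay_rate, staircase=True):
    """Decayed learning rate, following the formula used by tf.train.exponential_decay."""
    exponent = global_step / decay_steps
    if staircase:
        # Drop the fractional part so the rate changes in discrete steps
        exponent = math.floor(exponent)
    return base_rate * decay_rate ** exponent

NUM_TRAIN_EXAMPLES = 55000            # assumed size of mnist.train
BATCH_SIZE = 200
DECAY_STEPS = NUM_TRAIN_EXAMPLES / BATCH_SIZE   # 275 batches per epoch

for step in (0, 274, 275, 550, 50000):
    print(step, exponential_decay(0.1, step, DECAY_STEPS, 0.99))
```

With these settings the rate stays at 0.1 for the first 275 steps and is then multiplied by 0.99 after every further 275 steps.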

def main():
    mnist = input_data.read_data_sets('./datas/MNIST_data', one_hot=True)
    backward(mnist)

if __name__ == '__main__':
    main()
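The moving average kept by `tf.train.ExponentialMovingAverage` can be sketched without TensorFlow. When a step counter is passed as `num_updates`, as the training script does with `global_step`, the effective decay is `min(decay, (1 + num_updates) / (10 + num_updates))`, so early updates track the variable closely. The function names below are hypothetical helpers, and the constant variable value is made up for the demonstration.

```python
def effective_decay(base_decay, num_updates):
    """Decay actually used when num_updates is supplied."""
    return min(base_decay, (1 + num_updates) / (10 + num_updates))

def ema_update(shadow, value, decay):
    """One moving-average update of a shadow value."""
    return decay * shadow + (1 - decay) * value

# Shadow values start at the variable's initial value (0.0 here);
# the variable is then held at 1.0 for the whole run.
shadow = 0.0
for step in range(2000):
    shadow = ema_update(shadow, 1.0, effective_decay(0.99, step))

print(effective_decay(0.99, 0), effective_decay(0.99, 10000), shadow)
```

The testing and application scripts restore these shadow values in place of the raw weights through `variables_to_restore()`.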

3. Testing: test.py

import time

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward
import mnist_backward

TEST_INTERVAL_SECS = 5

def test(mnist):
    with tf.Graph().as_default() as g:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
        y = mnist_forward.forward(x, None)  # no regularization at test time

        # Restore the moving-average (shadow) values into the model variables
        ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)

        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        # Re-evaluate the latest checkpoint every TEST_INTERVAL_SECS seconds
        while True:
            with tf.Session() as sess:
                ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
                if ckpt and ckpt.model_checkpoint_path:
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    # The step count is the suffix of the checkpoint file name
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
                    print('After %s training steps, test accuracy = %g' % (global_step, accuracy_score))
                else:
                    print('No checkpoint file found')
                    return
            time.sleep(TEST_INTERVAL_SECS)

def main():
    mnist = input_data.read_data_sets('./datas/MNIST_data', one_hot=True)
    test(mnist)

if __name__ == '__main__':
    main()
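The test script recovers the training step from the checkpoint file name, because `saver.save(..., global_step=global_step)` appends `-<step>` to the model name. A small sketch of that parsing follows; the path is a hypothetical example, and `os.path.basename` is used here instead of `split('/')` as one possible way to avoid depending on the path separator.

```python
import os

def step_from_checkpoint_path(path):
    """Return the step suffix of a checkpoint path such as 'dir/name-123'."""
    file_name = os.path.basename(path)
    return file_name.split('-')[-1]

# Hypothetical path of the kind stored in ckpt.model_checkpoint_path
example_path = './model/mnist_model-49001'
print(step_from_checkpoint_path(example_path))  # prints: 49001
```

The result is a string, which is why the test script formats it with `%s` rather than `%d`.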

4. API: mnist_app.py

import numpy as np
import tensorflow as tf
from PIL import Image

import mnist_backward
import mnist_forward

def restore_model(testPictArr):
    with tf.Graph().as_default() as g:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y = mnist_forward.forward(x, None)
        preValue = tf.argmax(y, 1)  # index of the largest logit = predicted digit

        # Restore the moving-average (shadow) values into the model variables
        variable_averages = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        variables_to_restore = variable_averages.variables_to_restore()
        saver = tf.train.Saver(variables_to_restore)

        with tf.Session() as sess:
            ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
            if ckpt and ckpt.model_checkpoint_path:
                saver.restore(sess, ckpt.model_checkpoint_path)
                preValue = sess.run(preValue, feed_dict={x: testPictArr})
                return preValue
            else:
                print('No checkpoint file found')
                return -1

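def pre_pic(picName):
    img = Image.open(picName)
    reIm = img.resize((28, 28), Image.ANTIALIAS)  # resize with antialiasing
    im_arr = np.array(reIm.convert('L'))  # convert to grayscale
    threshold = 50
    for i in range(28):
        for j in range(28):
            # Invert the image: MNIST digits are white on a black background
            im_arr[i][j] = 255 - im_arr[i][j]
            if(im_arr[i][j] ...

After 5000 training steps, the accuracy is 0.972.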
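As a rough illustration of the preprocessing idea in `pre_pic` (invert the grayscale image, then compare each pixel against `threshold`), here is a minimal sketch in plain Python on a tiny 2 x 2 grid. The binarization rule (inverted pixels below the threshold become 0, all others 255) and the final flatten-and-scale step are assumptions rather than code taken from the listing, and both function names are hypothetical.

```python
def invert_and_binarize(pixels, threshold=50):
    """Invert 8-bit grayscale pixels, then map each one to 0 or 255.

    Assumed rule: inverted values below `threshold` become 0 (background),
    everything else becomes 255 (stroke).
    """
    result = []
    for row in pixels:
        new_row = []
        for p in row:
            inverted = 255 - p
            new_row.append(0 if inverted < threshold else 255)
        result.append(new_row)
    return result

def flatten_and_scale(pixels):
    """Flatten to a single row and scale to [0, 1], giving shape 1 x (rows * cols)."""
    return [[p / 255.0 for row in pixels for p in row]]

# Dark ink (low values) on white paper (high values)
sample = [[255, 0],
          [230, 100]]
binary = invert_and_binarize(sample)
print(binary)                      # prints: [[0, 255], [0, 255]]
print(flatten_and_scale(binary))   # prints: [[0.0, 1.0, 0.0, 1.0]]
```

For a real 28 x 28 image the flattened row would have 784 entries, matching the `[None, INPUT_NODE]` placeholder that `restore_model` feeds.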