YOLOv4 Tricks Explained, Part 1: Multi-Image Fusion Data Augmentation (MixUp/CutMix/Mosaic)

Contents

- MixUp

- CutMix

- Mosaic

YOLOv4 = CSPDarknet53 + SPP + PAN + YOLOv3

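The tricks used in YOLOv4 fall into the following categories:

- Bag of Freebies (BoF) for the backbone: CutMix and Mosaic data augmentation, DropBlock regularization, label smoothing

- Bag of Specials (BoS) for the backbone: Mish, cross-stage partial connections (CSP), multi-input weighted residual connections (MiWRC)

- Bag of Specials (BoS) for the detector: Mish, SPP block, SAM block, PAN path-aggregation block, DIoU-NMS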

This post summarizes some of the data augmentation tricks that YOLOv4 discusses and adopts.

MixUp

Paper: https://arxiv.org/pdf/1710.09412.pdf

Code (official): https://github.com/hongyi-zhang/mixup

Reimplementation: https://github.com/tengshaofeng/ResidualAttentionNetwork-pytorch

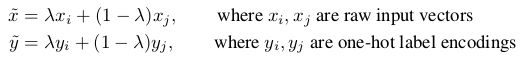

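mixup is mainly used for image classification. Two samples are drawn at random from the training set and combined by a random weighted sum, and their labels are combined with the same weights. The loss is computed between the prediction and the mixed label, and the parameters are then updated by backpropagation. The formula is:

$$\tilde{x} = \lambda x_i + (1 - \lambda) x_j, \qquad \tilde{y} = \lambda y_i + (1 - \lambda) y_j, \qquad \lambda \sim \mathrm{Beta}(\alpha, \alpha)$$

where $(x_i, y_i)$ and $(x_j, y_j)$ are two training samples with one-hot labels.

As the formula shows, the weighted blend is applied to both the image and the label.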
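As a tiny worked example of the weighted sums described above, the following NumPy sketch blends two toy 2x2 "images" and their one-hot labels with a fixed weight of 0.7. The arrays, the helper name mixup_pair and the fixed weight are illustrative assumptions; in training the weight would be drawn from a beta distribution.

```python
import numpy as np

def mixup_pair(x_i, x_j, y_i, y_j, lam):
    """Blend two samples and their one-hot labels with the same weight lam."""
    x_mix = lam * x_i + (1.0 - lam) * x_j
    y_mix = lam * y_i + (1.0 - lam) * y_j
    return x_mix, y_mix

# Toy data (hypothetical): two 2x2 single-channel images, 3 classes.
x_i = np.full((2, 2), 10.0)
x_j = np.full((2, 2), 20.0)
y_i = np.array([1.0, 0.0, 0.0])  # class 0
y_j = np.array([0.0, 0.0, 1.0])  # class 2

x_mix, y_mix = mixup_pair(x_i, x_j, y_i, y_j, lam=0.7)
print(x_mix)  # every pixel is 0.7 * 10 + 0.3 * 20 = 13
print(y_mix)  # soft label with weight 0.7 on class 0 and 0.3 on class 2
```

The mixed label still sums to 1, so it can be read as a probability distribution over the two source classes.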

The PyTorch code is as follows:

    import numpy as np
    import torch

    def mixup_data(x, y, alpha=1.0, use_cuda=True):
        '''Compute the mixup data. Return mixed inputs, pairs of targets, and lambda'''
        if alpha > 0.:
            lam = np.random.beta(alpha, alpha)
        else:
            lam = 1.
        batch_size = x.size()[0]
        if use_cuda:
            index = torch.randperm(batch_size).cuda()
        else:
            index = torch.randperm(batch_size)
        # pair every sample with a randomly chosen sample from the same batch
        mixed_x = lam * x + (1 - lam) * x[index,:]
        y_a, y_b = y, y[index]
        return mixed_x, y_a, y_b, lam

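As the code shows, mixup_data does not fetch two batches. It takes a single batch, shuffles the sample order within it, and then computes the weighted sum between the original batch and the shuffled one.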
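The single-batch pairing can be seen in isolation with plain NumPy. This is a sketch with made-up data and a fixed seed, and it uses rng.permutation as a stand-in for torch.randperm:

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed, only for reproducibility

batch = np.arange(4 * 3, dtype=float).reshape(4, 3)  # 4 samples, 3 features each
labels = np.array([0, 1, 2, 3])

lam = 0.6
index = rng.permutation(len(batch))  # shuffled sample order within the same batch

# Sample i is blended with sample index[i] from the same batch.
mixed = lam * batch + (1.0 - lam) * batch[index]
labels_a, labels_b = labels, labels[index]

for i in range(len(batch)):
    print(f"sample {i} mixed with sample {index[i]}: labels ({labels_a[i]}, {labels_b[i]})")
```

Because the partner comes from a permutation of the same batch, a sample can occasionally be paired with itself, in which case it passes through unchanged.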

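The overall MixUp procedure is as follows:

- Given a batch of training images, images, blend it with randomly chosen images using the ratio lam to obtain the mixed tensor inputs. lam is a random real number in [0, 1] drawn from a beta distribution, and the two images are added pixel by pixel: inputs = lam*images + (1-lam)*images_random;

- Pass the mixed tensor inputs to the model to obtain the output tensor outputs;

- Compute the loss against each of the two label sets separately, then take the lam-weighted sum of the two losses: loss = lam * criterion(outputs, targets_a) + (1 - lam) * criterion(outputs, targets_b);

- Backpropagate and update the parameters.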
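For cross-entropy, the weighted sum of the two losses is the same as a single loss against the mixed soft label, because cross-entropy is linear in the target. A minimal NumPy check; the logits and labels are made-up values, and cross_entropy is a hand-written helper for this sketch, not a library call:

```python
import numpy as np

def cross_entropy(logits, target_probs):
    """Cross-entropy between softmax(logits) and a (possibly soft) target distribution."""
    shifted = logits - logits.max()                      # for numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum())  # log-softmax
    return -(target_probs * log_probs).sum()

logits = np.array([2.0, 0.5, -1.0])  # hypothetical model output for one sample
y_a = np.array([1.0, 0.0, 0.0])      # one-hot label of the first image
y_b = np.array([0.0, 0.0, 1.0])      # one-hot label of the second image
lam = 0.3

# Weighted sum of two hard-label losses, as in the training step.
loss_weighted = lam * cross_entropy(logits, y_a) + (1 - lam) * cross_entropy(logits, y_b)

# Single loss against the mixed (soft) label.
loss_soft = cross_entropy(logits, lam * y_a + (1 - lam) * y_b)

print(loss_weighted, loss_soft)  # the two values agree up to floating-point error
```

Computing two hard-label losses is convenient when the criterion only accepts class indices rather than soft targets.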

The PyTorch code is as follows:

    criterion = nn.CrossEntropyLoss()
    optimizer = optim.SGD(net.parameters(), lr=base_learning_rate, momentum=0.9, weight_decay=args.decay)

    # Training
    def train(epoch):
        print('\nEpoch: %d' % epoch)
        net.train()
        train_loss = 0
        correct = 0
        total = 0
        for batch_idx, (inputs, targets) in enumerate(trainloader):
            if use_cuda:
                inputs, targets = inputs.cuda(), targets.cuda()
            # generate mixed inputs, two label tensors and the mixing coefficient
            inputs, targets_a, targets_b, lam = mixup_data(inputs, targets, args.alpha, use_cuda)
            # (the original wrapped these tensors in Variable, a no-op since PyTorch 0.4)
            outputs = net(inputs)
            # compute the loss
            loss_func = mixup_criterion(targets_a, targets_b, lam)
            loss = loss_func(criterion, outputs)
            # update the parameters
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

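mixup_criterion is defined as follows; the loss is computed between the prediction and each of the two label sets.

    def mixup_criterion(y_a, y_b, lam):
        # returns a closure that applies the lam-weighted sum of the two losses
        return lambda criterion, pred: lam * criterion(pred, y_a) + (1 - lam) * criterion(pred, y_b)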

For an object-detection implementation, see:

mixup for detection

CutMix

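Paper: https://arxiv.org/abs/1905.04899v2

Code: https://github.com/clovaai/CutMix-PyTorch

CutMix is also fairly simple and likewise operates on a pair of images. In short, a crop box is generated at random, the corresponding region of image A is cut out, and the same region (ROI) of image B is pasted into the hole to form a new sample. The loss is again computed as a weighted sum.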

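The two images are combined as follows:

$$\tilde{x} = \mathbf{M} \odot x_A + (\mathbf{1} - \mathbf{M}) \odot x_B, \qquad \tilde{y} = \lambda y_A + (1 - \lambda) y_B$$

Here M is a binary (0/1) matrix that marks where pixels are removed from one image and filled in from the other: the crop region is 0 and everywhere else is 1. $\mathbf{1}$ is a matrix of all ones with the same shape as M, and $\odot$ is element-wise multiplication. Images A and B are combined into a new sample, and the two labels are combined by the corresponding weighted sum. As in mixup, the weight λ is drawn from a beta distribution, Beta(α, α); the paper sets α = 1, which makes λ uniform on (0, 1). The main difference from Mixup is that CutMix replaces an image region with a patch from another training image, which produces images that look more natural locally.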
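The mask-based combination can be tried on toy data. This sketch uses 4x4 arrays and a hand-picked 2x2 box, both assumptions for illustration. It derives the label weight from the mask itself, which corresponds to the area-adjusted weight rather than a freshly sampled one:

```python
import numpy as np

H, W = 4, 4
x_a = np.zeros((H, W))  # toy image A: all zeros
x_b = np.ones((H, W))   # toy image B: all ones

# Binary mask M: 0 inside the crop box, 1 elsewhere.
M = np.ones((H, W))
y1, y2, x1, x2 = 1, 3, 1, 3  # hypothetical 2x2 box
M[y1:y2, x1:x2] = 0

x_new = M * x_a + (1 - M) * x_b  # element-wise combination

lam = M.mean()  # fraction of pixels kept from A
y_a = np.array([1.0, 0.0])
y_b = np.array([0.0, 1.0])
y_new = lam * y_a + (1 - lam) * y_b

print(x_new)       # ones inside the box (from B), zeros elsewhere (from A)
print(lam, y_new)  # lam = 12/16 = 0.75, label weights 0.75 and 0.25
```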

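To sample the binary mask M, first sample the bounding box of the crop region, B = (r_x, r_y, r_w, r_h), which indicates the region to crop in samples x_A and x_B.

The bounding box is sampled as follows:

$$r_x \sim \mathrm{Unif}(0, W), \quad r_w = W\sqrt{1 - \lambda}, \qquad r_y \sim \mathrm{Unif}(0, H), \quad r_h = H\sqrt{1 - \lambda}$$

W and H are the width and height of the binary mask M, and the crop-area ratio satisfies:

$$\frac{r_w r_h}{W H} = 1 - \lambda$$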
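A quick numeric check of the box-size rule: scaling both sides by the square root of (1 - lam) gives a box whose area is exactly the fraction (1 - lam) of the image. The image size and lam below are arbitrary example values, and box_size is a hypothetical helper name:

```python
import math

def box_size(W, H, lam):
    """Width and height of the crop box so that its area ratio is 1 - lam."""
    cut_ratio = math.sqrt(1.0 - lam)
    return W * cut_ratio, H * cut_ratio

W, H, lam = 224, 224, 0.75
r_w, r_h = box_size(W, H, lam)
print(r_w, r_h)             # 112.0 112.0
print(r_w * r_h / (W * H))  # 0.25, i.e. 1 - lam
```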

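Once the crop region B is fixed, the entries of M inside B are set to 0 and all others to 1, which completes the sampling of M. Multiplying M element-wise with A removes region B from sample A; multiplying (1-M) element-wise with B cuts region B out of sample B, which is then pasted into sample A to form a new sample.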

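- Generating the crop region

      # Inputs: the size of the sample batch and the randomly drawn lambda
      def rand_bbox(size, lam):
          # For an NCHW tensor, size[2] is the height and size[3] the width.
          # The names are swapped here, but the training loop slices dimension 2
          # with bbx1:bbx2 and dimension 3 with bby1:bby2, so the result is consistent.
          W = size[2]
          H = size[3]
          # Equation 2 of the paper: r_w and r_h of box B
          cut_rat = np.sqrt(1. - lam)
          cut_w = int(W * cut_rat)  # np.int was removed in NumPy 1.24
          cut_h = int(H * cut_rat)
          # Equation 2 of the paper: r_x and r_y of box B (the box centre), sampled uniformly
          cx = np.random.randint(W)
          cy = np.random.randint(H)
          # np.clip keeps the box inside the sample
          bbx1 = np.clip(cx - cut_w // 2, 0, W)
          bby1 = np.clip(cy - cut_h // 2, 0, H)
          bbx2 = np.clip(cx + cut_w // 2, 0, W)
          bby2 = np.clip(cy + cut_h // 2, 0, H)
          return bbx1, bby1, bbx2, bby2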

- Rough outline of applying CutMix

      for i, (input, target) in enumerate(train_loader):
          # measure data loading time
          data_time.update(time.time() - end)
          input = input.cuda()
          target = target.cuda()
          r = np.random.rand(1)
          if args.beta > 0 and r < args.cutmix_prob:
              # generate mixed sample: draw lambda from a beta distribution
              lam = np.random.beta(args.beta, args.beta)
              rand_index = torch.randperm(input.size()[0]).cuda()
              # pair each sample with a random sample from the same batch
              target_a = target
              target_b = target[rand_index]
              # coordinates of the crop box
              bbx1, bby1, bbx2, bby2 = rand_bbox(input.size(), lam)
              # replace region B of sample A with region B of sample B
              input[:, :, bbx1:bbx2, bby1:bby2] = input[rand_index, :, bbx1:bbx2, bby1:bby2]
              # adjust lambda to exactly match pixel ratio (the box may have been clipped)
              lam = 1 - ((bbx2 - bbx1) * (bby2 - bby1) / (input.size()[-1] * input.size()[-2]))
              # compute output
              output = model(input)
              # compute the loss
              loss = criterion(output, target_a) * lam + criterion(output, target_b) * (1. - lam)
          else:
              # compute output
              output = model(input)
              loss = criterion(output, target)
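The lam adjustment in the loop matters when the box is clipped at the image border, because the pasted area is then smaller than the nominal (1 - lam). The sketch below makes this visible with a deterministic box centre; clipped_box is a hypothetical helper that mirrors the clipping logic of rand_bbox but takes the centre as an argument:

```python
import numpy as np

def clipped_box(W, H, lam, cx, cy):
    """Box of nominal area ratio (1 - lam) centred at (cx, cy), clipped to the image."""
    cut_rat = np.sqrt(1.0 - lam)
    cut_w, cut_h = int(W * cut_rat), int(H * cut_rat)
    x1 = int(np.clip(cx - cut_w // 2, 0, W))
    y1 = int(np.clip(cy - cut_h // 2, 0, H))
    x2 = int(np.clip(cx + cut_w // 2, 0, W))
    y2 = int(np.clip(cy + cut_h // 2, 0, H))
    return x1, y1, x2, y2

W = H = 100
lam = 0.75  # nominal: the box covers 25% of the image

# Centre in the middle of the image: nothing is clipped.
x1, y1, x2, y2 = clipped_box(W, H, lam, cx=50, cy=50)
lam_center = 1 - (x2 - x1) * (y2 - y1) / (W * H)

# Centre at the top-left corner: three quarters of the box fall outside.
x1, y1, x2, y2 = clipped_box(W, H, lam, cx=0, cy=0)
lam_corner = 1 - (x2 - x1) * (y2 - y1) / (W * H)

print(lam_center)  # 0.75
print(lam_corner)  # 0.9375
```

Without the adjustment, the corner case would weight the second label by 0.25 even though only 6.25% of the pixels come from the second image.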

Mosaic

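Mosaic is arguably one of the highlights of YOLOv4, but it was not first introduced there (I am not trying to nitpick): the older ultralytics version of yolo3 (since updated) already contained a mosaic implementation.

Mosaic mixes 4 training images and therefore 4 different contexts, whereas CutMix mixes only 2 input images; this is why Mosaic is considered the stronger of the two.

Put differently, mixing more images creates more possible combinations, so the network sees a wider variety of scenes.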
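To make the idea of mixing four images concrete, here is a deliberately simplified NumPy sketch. It assumes four images of identical size, pastes them around a chosen centre point on a canvas twice as large, and skips labels, resizing and any affine transform. simple_mosaic is a hypothetical helper written for this post, not code from any YOLO repository.

```python
import numpy as np

def simple_mosaic(images, xc, yc, fill=114):
    """Paste four equally sized HxWxC images around (xc, yc) on a 2H x 2W canvas.

    Simplification (assumption): each tile is cropped so that one of its corners
    touches the centre point; no resizing or random affine step is applied.
    """
    h, w, c = images[0].shape
    canvas = np.full((2 * h, 2 * w, c), fill, dtype=np.uint8)

    # Top-left tile: its bottom-right corner sits at (xc, yc).
    x1, y1 = max(xc - w, 0), max(yc - h, 0)
    canvas[y1:yc, x1:xc] = images[0][h - (yc - y1):, w - (xc - x1):]
    # Top-right tile: its bottom-left corner sits at (xc, yc).
    x2 = min(xc + w, 2 * w)
    canvas[y1:yc, xc:x2] = images[1][h - (yc - y1):, :x2 - xc]
    # Bottom-left tile: its top-right corner sits at (xc, yc).
    y2 = min(yc + h, 2 * h)
    canvas[yc:y2, x1:xc] = images[2][:y2 - yc, w - (xc - x1):]
    # Bottom-right tile: its top-left corner sits at (xc, yc).
    canvas[yc:y2, xc:x2] = images[3][:y2 - yc, :x2 - xc]
    return canvas

# Four constant-valued 4x4 toy images with values 10, 20, 30, 40.
tiles = [np.full((4, 4, 3), v, dtype=np.uint8) for v in (10, 20, 30, 40)]
mosaic = simple_mosaic(tiles, xc=5, yc=3)
print(mosaic[:, :, 0])  # 114 marks canvas cells not covered by any tile
```

Moving the centre point changes how much of each image survives, which is where the variety of the combined contexts comes from.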

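Incidentally, Stitcher, a small-object detection method published after YOLOv4, is somewhat similar to Mosaic: it also stitches four images together in order to improve small-object detection. See my post: Stitcher study notes: improving small-object detection, simple and effective

Here is the Mosaic code, taken from https://github.com/ultralytics/yolov3/blob/master/utils/datasets.py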

    def load_mosaic(self, index):
        # loads images in a mosaic
        labels4 = []
        s = self.img_size
        xc, yc = [int(random.uniform(s * 0.5, s * 1.5)) for _ in range(2)]  # mosaic center x, y
        indices = [index] + [random.randint(0, len(self.labels) - 1) for _ in range(3)]  # 3 additional image indices
        for i, index in enumerate(indices):
            # Load image
            img, _, (h, w) = load_image(self, index)

            # place img in img4
            if i == 0:  # top left
                img4 = np.full((s * 2, s * 2, img.shape[2]), 114, dtype=np.uint8)  # base image with 4 tiles
                x1a, y1a, x2a, y2a = max(xc - w, 0), max(yc - h, 0), xc, yc  # xmin, ymin, xmax, ymax (large image)
                x1b, y1b, x2b, y2b = w - (x2a - x1a), h - (y2a - y1a), w, h  # xmin, ymin, xmax, ymax (small image)
            elif i == 1:  # top right
                x1a, y1a, x2a, y2a = xc, max(yc - h, 0), min(xc + w, s * 2), yc
                x1b, y1b, x2b, y2b = 0, h - (y2a - y1a), min(w, x2a - x1a), h
            elif i == 2:  # bottom left
                x1a, y1a, x2a, y2a = max(xc - w, 0), yc, xc, min(s * 2, yc + h)
                # x2b = max(xc, w) is >= w; the NumPy slice below clamps it to the image width
                x1b, y1b, x2b, y2b = w - (x2a - x1a), 0, max(xc, w), min(y2a - y1a, h)
            elif i == 3:  # bottom right
                x1a, y1a, x2a, y2a = xc, yc, min(xc + w, s * 2), min(s * 2, yc + h)
                x1b, y1b, x2b, y2b = 0, 0, min(w, x2a - x1a), min(y2a - y1a, h)

            img4[y1a:y2a, x1a:x2a] = img[y1b:y2b, x1b:x2b]  # img4[ymin:ymax, xmin:xmax]
            padw = x1a - x1b
            padh = y1a - y1b

            # Labels
            x = self.labels[index]
            labels = x.copy()
            if x.size > 0:  # Normalized xywh to pixel xyxy format
                labels[:, 1] = w * (x[:, 1] - x[:, 3] / 2) + padw
                labels[:, 2] = h * (x[:, 2] - x[:, 4] / 2) + padh
                labels[:, 3] = w * (x[:, 1] + x[:, 3] / 2) + padw
                labels[:, 4] = h * (x[:, 2] + x[:, 4] / 2) + padh
            labels4.append(labels)

        # Concat/clip labels
        if len(labels4):
            labels4 = np.concatenate(labels4, 0)
            # np.clip(labels4[:, 1:] - s / 2, 0, s, out=labels4[:, 1:])  # use with center crop
            np.clip(labels4[:, 1:], 0, 2 * s, out=labels4[:, 1:])  # use with random_affine

        # Augment
        # img4 = img4[s // 2: int(s * 1.5), s // 2:int(s * 1.5)]  # center crop (WARNING, requires box pruning)
        img4, labels4 = random_affine(img4, labels4,
                                      degrees=self.hyp['degrees'],
                                      translate=self.hyp['translate'],
                                      scale=self.hyp['scale'],
                                      shear=self.hyp['shear'],
                                      border=-s // 2)  # border to remove

        return img4, labels4
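The label step inside the loop converts normalized centre/size boxes into pixel corner coordinates and shifts them by the tile offset (padw, padh). The same arithmetic as a standalone sketch; xywhn_to_xyxy is a hypothetical helper name, and the box and offsets are made-up values:

```python
import numpy as np

def xywhn_to_xyxy(labels, w, h, padw=0, padh=0):
    """Convert rows of [class, x_center, y_center, width, height] (normalized to 0..1)
    into [class, x_min, y_min, x_max, y_max] in pixels, shifted by (padw, padh)."""
    out = labels.copy()
    out[:, 1] = w * (labels[:, 1] - labels[:, 3] / 2) + padw
    out[:, 2] = h * (labels[:, 2] - labels[:, 4] / 2) + padh
    out[:, 3] = w * (labels[:, 1] + labels[:, 3] / 2) + padw
    out[:, 4] = h * (labels[:, 2] + labels[:, 4] / 2) + padh
    return out

# One box of class 0, centred in an image 200 px wide and 100 px high,
# covering half of the width and half of the height.
labels = np.array([[0, 0.5, 0.5, 0.5, 0.5]])
boxes = xywhn_to_xyxy(labels, w=200, h=100, padw=30, padh=10)
print(boxes)  # class 0, corners (80, 35) and (180, 85)
```

The offsets place each image's boxes in the coordinate system of the large canvas, so boxes from all four tiles can be concatenated and clipped together.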
